目标

- 知道GoogLeNet网络结构的特点

- 能够利用GoogLeNet完成图像分类

一、开发背景

GoogLeNet在2014年由Google团队提出, 斩获当年ImageNet(ILSVRC14)竞赛中Classification Task (分类任务) 第一名,VGG获得了第二名,为了向“LeNet”致敬,因此取名为“GoogLeNet”。

GoogLeNet做了更加大胆的网络结构尝试,虽然深度只有22层,但大小却比AlexNet和VGG小很多。GoogleNet参数为500万个,AlexNet参数个数是GoogleNet的12倍,VGGNet参数又是AlexNet的3倍,因此在内存或计算资源有限时,GoogleNet是比较好的选择,从模型结果来看,GoogLeNet的性能也更加优越。

GoogLeNet的名字不是GoogleNet,而是GoogLeNet,这是为了致敬LeNet。GoogLeNet和AlexNet/VGGNet这类依靠加深网络结构的深度的思想不完全一样。GoogLeNet在加深度的同时做了结构上的创新,引入了一个叫做Inception的结构来代替之前的卷积加激活的经典组件。GoogLeNet在ImageNet分类比赛上的Top-5错误率降低到了6.7%。

1.Inception 块

GoogLeNet中的基础卷积块叫作Inception块,得名于同名电影《盗梦空间》(Inception)。Inception块在结构比较复杂,如下图所示:

Inception块里有4条并行的线路。前3条线路使用窗口大小分别是1×11×1、3×33×3和5×55×5的卷积层来抽取不同空间尺寸下的信息,其中中间2个线路会对输入先做1×11×1卷积来减少输入通道数,以降低模型复杂度。第4条线路则使用3×33×3最大池化层,后接1×11×1卷积层来改变通道数。4条线路都使用了合适的填充来使输入与输出的高和宽一致。最后我们将每条线路的输出在通道维上连结,并向后进行传输。

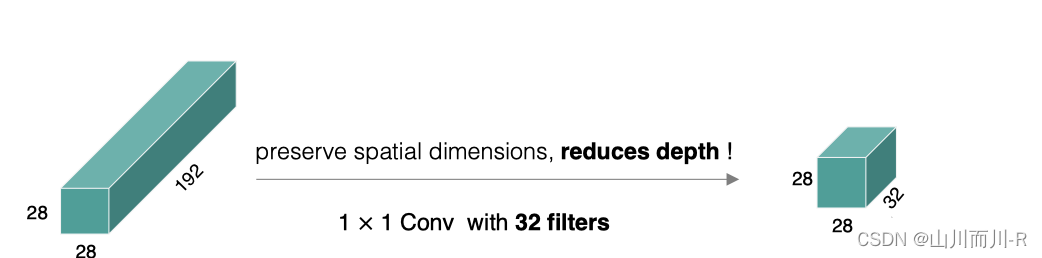

1×11×1卷积:

它的计算方法和其他卷积核一样,唯一不同的是它的大小是1×11×1,没有考虑在特征图局部信息之间的关系。

它的作用主要是:

-

实现跨通道的交互和信息整合

-

卷积核通道数的降维和升维,减少网络参数

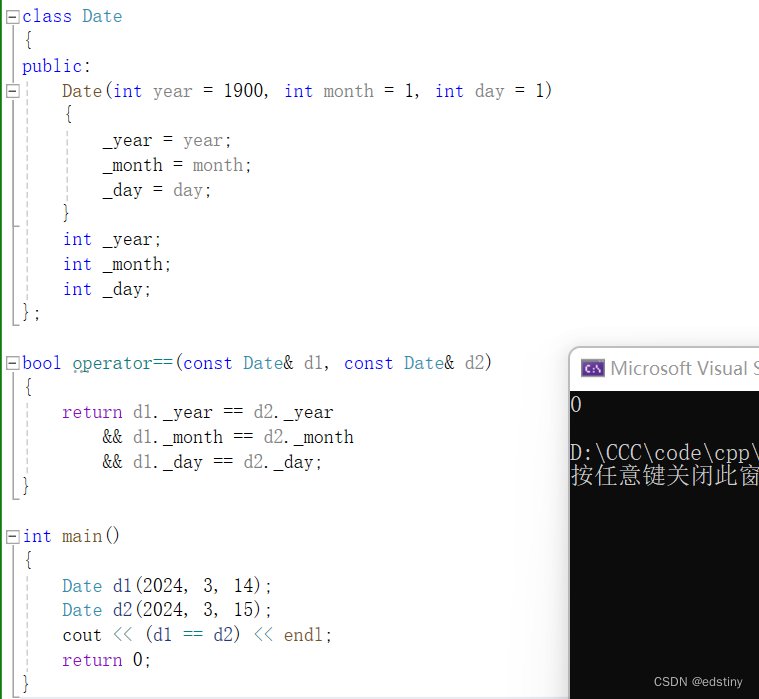

在tf.keras中实现Inception模块,各个卷积层卷积核的个数通过输入参数来控制,如下所示

# 定义Inception模块

class Inception(tf.keras.layers.Layer):

# 输入参数为各个卷积的卷积核个数

def __init__(self, c1, c2, c3, c4):

super().__init__()

# 线路1:1 x 1卷积层,激活函数是RELU,padding是same

self.p1_1 = tf.keras.layers.Conv2D(

c1, kernel_size=1, activation='relu', padding='same')

# 线路2,1 x 1卷积层后接3 x 3卷积层,激活函数是RELU,padding是same

self.p2_1 = tf.keras.layers.Conv2D(

c2[0], kernel_size=1, padding='same', activation='relu')

self.p2_2 = tf.keras.layers.Conv2D(c2[1], kernel_size=3, padding='same',

activation='relu')

# 线路3,1 x 1卷积层后接5 x 5卷积层,激活函数是RELU,padding是same

self.p3_1 = tf.keras.layers.Conv2D(

c3[0], kernel_size=1, padding='same', activation='relu')

self.p3_2 = tf.keras.layers.Conv2D(c3[1], kernel_size=5, padding='same',

activation='relu')

# 线路4,3 x 3最大池化层后接1 x 1卷积层,激活函数是RELU,padding是same

self.p4_1 = tf.keras.layers.MaxPool2D(

pool_size=3, padding='same', strides=1)

self.p4_2 = tf.keras.layers.Conv2D(

c4, kernel_size=1, padding='same', activation='relu')

# 完成前向传播过程

def call(self, x):

# 线路1

p1 = self.p1_1(x)

# 线路2

p2 = self.p2_2(self.p2_1(x))

# 线路3

p3 = self.p3_2(self.p3_1(x))

# 线路4

p4 = self.p4_2(self.p4_1(x))

# 在通道维上concat输出

outputs = tf.concat([p1, p2, p3, p4], axis=-1)

return outputs 指定通道数,对Inception模块进行实例化:

Inception(64, (96, 128), (16, 32), 32)2.GoogLeNet模型

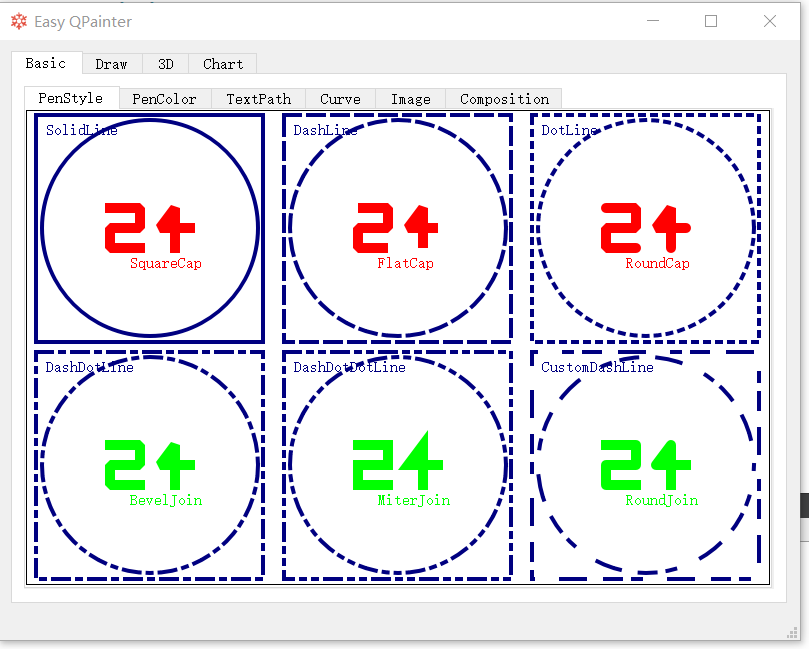

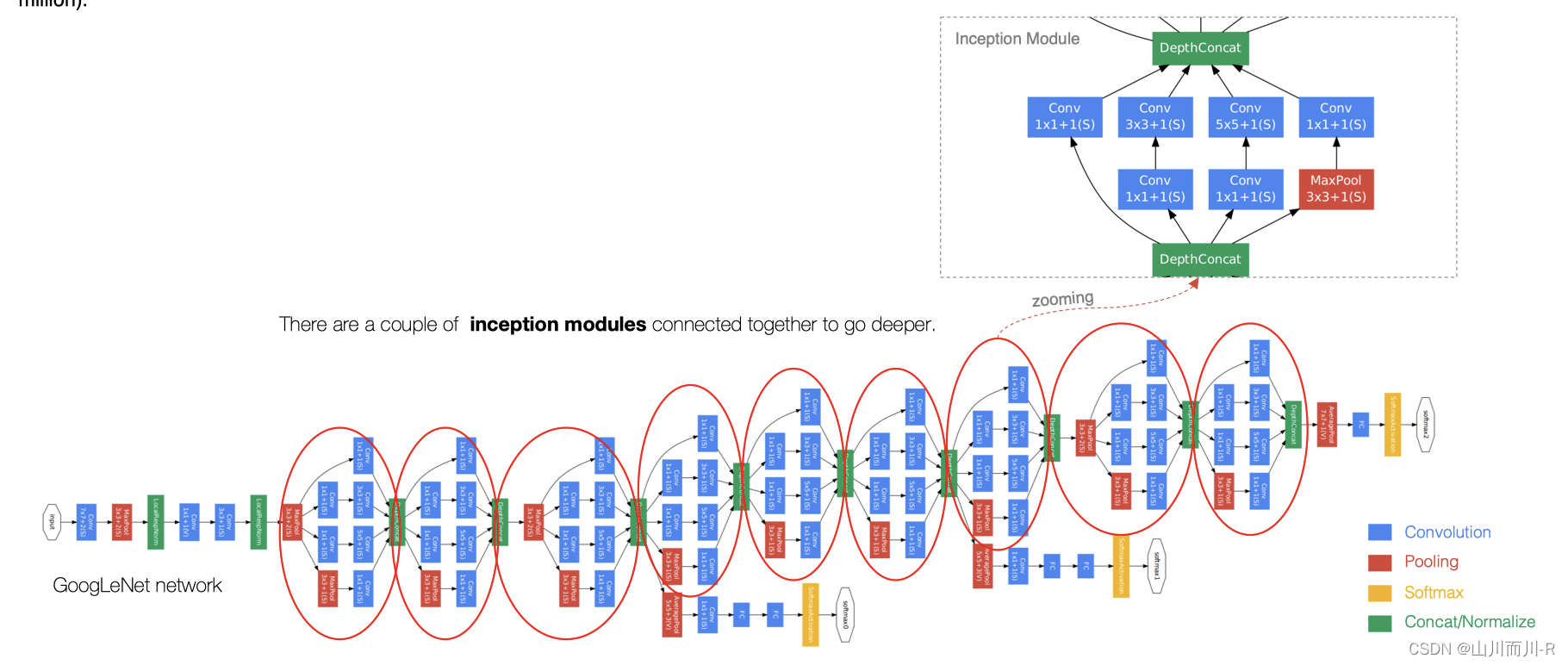

GoogLeNet主要由Inception模块构成,如下图所示:

整个网络架构我们分为五个模块,每个模块之间使用步幅为2的3×33×3最大池化层来减小输出高宽。

2.1 B1模块

第一模块使用一个64通道的7×77×7卷积层。

# 定义模型的输入

inputs = tf.keras.Input(shape=(224,224,3),name = "input")

# b1 模块

# 卷积层7*7的卷积核,步长为2,pad是same,激活函数RELU

x = tf.keras.layers.Conv2D(64, kernel_size=7, strides=2, padding='same', activation='relu')(inputs)

# 最大池化:窗口大小为3*3,步长为2,pad是same

x = tf.keras.layers.MaxPool2D(pool_size=3, strides=2, padding='same')(x)

# b2 模块2.2 B2模块

第二模块使用2个卷积层:首先是64通道的1×11×1卷积层,然后是将通道增大3倍的3×33×3卷积层。

# b2 模块

# 卷积层1*1的卷积核,步长为2,pad是same,激活函数RELU

x = tf.keras.layers.Conv2D(64, kernel_size=1, padding='same', activation='relu')(x)

# 卷积层3*3的卷积核,步长为2,pad是same,激活函数RELU

x = tf.keras.layers.Conv2D(192, kernel_size=3, padding='same', activation='relu')(x)

# 最大池化:窗口大小为3*3,步长为2,pad是same

x = tf.keras.layers.MaxPool2D(pool_size=3, strides=2, padding='same')(x)2.3 B3模块

第三模块串联2个完整的Inception块。第一个Inception块的输出通道数为64+128+32+32=25664+128+32+32=256。第二个Inception块输出通道数增至128+192+96+64=480

# b3 模块

# Inception

x = Inception(64, (96, 128), (16, 32), 32)(x)

# Inception

x = Inception(128, (128, 192), (32, 96), 64)(x)

# 最大池化:窗口大小为3*3,步长为2,pad是same

x = tf.keras.layers.MaxPool2D(pool_size=3, strides=2, padding='same')(x)2.4 B4模块

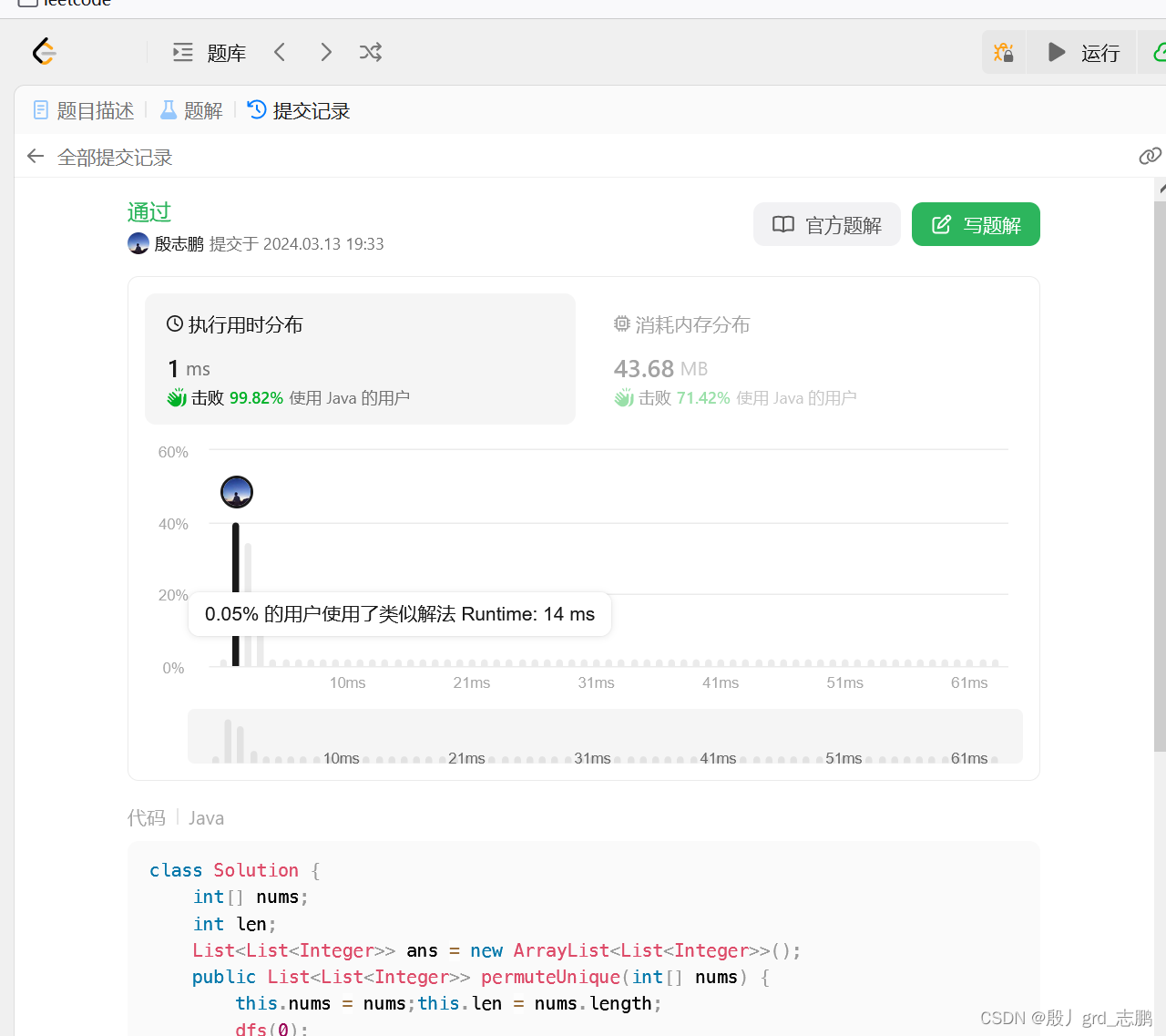

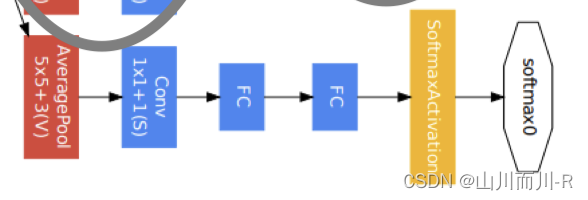

第四模块更加复杂。它串联了5个Inception块,其输出通道数分别是192+208+48+64=512192+208+48+64=512、160+224+64+64=512160+224+64+64=512、128+256+64+64=512128+256+64+64=512、112+288+64+64=528112+288+64+64=528和256+320+128+128=832256+320+128+128=832。并且增加了辅助分类器,根据实验发现网络的中间层具有很强的识别能力,为了利用中间层抽象的特征,在某些中间层中添加含有多层的分类器,如下图所示:

实现如下所示:

def aux_classifier(x, filter_size):

#x:输入数据,filter_size:卷积层卷积核个数,全连接层神经元个数

# 池化层

x = tf.keras.layers.AveragePooling2D(

pool_size=5, strides=3, padding='same')(x)

# 1x1 卷积层

x = tf.keras.layers.Conv2D(filters=filter_size[0], kernel_size=1, strides=1,

padding='valid', activation='relu')(x)

# 展平

x = tf.keras.layers.Flatten()(x)

# 全连接层1

x = tf.keras.layers.Dense(units=filter_size[1], activation='relu')(x)

# softmax输出层

x = tf.keras.layers.Dense(units=10, activation='softmax')(x)

return xb4模块的实现:

# b4 模块

# Inception

x = Inception(192, (96, 208), (16, 48), 64)(x)

# 辅助输出1

aux_output_1 = aux_classifier(x, [128, 1024])

# Inception

x = Inception(160, (112, 224), (24, 64), 64)(x)

# Inception

x = Inception(128, (128, 256), (24, 64), 64)(x)

# Inception

x = Inception(112, (144, 288), (32, 64), 64)(x)

# 辅助输出2

aux_output_2 = aux_classifier(x, [128, 1024])

# Inception

x = Inception(256, (160, 320), (32, 128), 128)(x)

# 最大池化

x = tf.keras.layers.MaxPool2D(pool_size=3, strides=2, padding='same')(x)2.5 B5模块

第五模块有输出通道数为256+320+128+128=832256+320+128+128=832和384+384+128+128=1024384+384+128+128=1024的两个Inception块。后面紧跟输出层,该模块使用全局平均池化层(GAP)来将每个通道的高和宽变成1。最后输出变成二维数组后接输出个数为标签类别数的全连接层。

全局平均池化层(GAP)

用来替代全连接层,将特征图每一通道中所有像素值相加后求平均,得到就是GAP的结果,在将其送入后续网络中进行计算

实现过程是:

# b5 模块

# Inception

x = Inception(256, (160, 320), (32, 128), 128)(x)

# Inception

x = Inception(384, (192, 384), (48, 128), 128)(x)

# GAP

x = tf.keras.layers.GlobalAvgPool2D()(x)

# 输出层

main_outputs = tf.keras.layers.Dense(10,activation='softmax')(x)

# 使用Model来创建模型,指明输入和输出构建GoogLeNet模型并通过summary来看下模型的结构:

# 使用Model来创建模型,指明输入和输出

model = tf.keras.Model(inputs=inputs, outputs=[main_outputs,aux_output_1,aux_output_2])

model.summary()Model: "functional_3" _________________________________________________________________ Layer (type) Output Shape Param # ================================================================= input (InputLayer) [(None, 224, 224, 3)] 0 _________________________________________________________________ conv2d_122 (Conv2D) (None, 112, 112, 64) 9472 _________________________________________________________________ max_pooling2d_27 (MaxPooling (None, 56, 56, 64) 0 _________________________________________________________________ conv2d_123 (Conv2D) (None, 56, 56, 64) 4160 _________________________________________________________________ conv2d_124 (Conv2D) (None, 56, 56, 192) 110784 _________________________________________________________________ max_pooling2d_28 (MaxPooling (None, 28, 28, 192) 0 _________________________________________________________________ inception_19 (Inception) (None, 28, 28, 256) 163696 _________________________________________________________________ inception_20 (Inception) (None, 28, 28, 480) 388736 _________________________________________________________________ max_pooling2d_31 (MaxPooling (None, 14, 14, 480) 0 _________________________________________________________________ inception_21 (Inception) (None, 14, 14, 512) 376176 _________________________________________________________________ inception_22 (Inception) (None, 14, 14, 512) 449160 _________________________________________________________________ inception_23 (Inception) (None, 14, 14, 512) 510104 _________________________________________________________________ inception_24 (Inception) (None, 14, 14, 528) 605376 _________________________________________________________________ inception_25 (Inception) (None, 14, 14, 832) 868352 _________________________________________________________________ max_pooling2d_37 (MaxPooling (None, 7, 7, 832) 0 _________________________________________________________________ inception_26 (Inception) (None, 7, 7, 832) 1043456 _________________________________________________________________ inception_27 (Inception) (None, 7, 7, 1024) 1444080 _________________________________________________________________ global_average_pooling2d_2 ( (None, 1024) 0 _________________________________________________________________ dense_10 (Dense) (None, 10) 10250 ================================================================= Total params: 5,983,802 Trainable params: 5,983,802 Non-trainable params: 0 ___________________________________________________________

3.手写数字识别

因为ImageNet数据集较大训练时间较长,我们仍用前面的MNIST数据集来演示GoogLeNet。读取数据的时将图像高和宽扩大到图像高和宽224。这个通过tf.image.resize_with_pad来实现。

2.1 数据读取

首先获取数据,并进行维度调整:

import numpy as np

# 获取手写数字数据集

(train_images, train_labels), (test_images, test_labels) = mnist.load_data()

# 训练集数据维度的调整:N H W C

train_images = np.reshape(train_images,(train_images.shape[0],train_images.shape[1],train_images.shape[2],1))

# 测试集数据维度的调整:N H W C

test_images = np.reshape(test_images,(test_images.shape[0],test_images.shape[1],test_images.shape[2],1))由于使用全部数据训练时间较长,我们定义两个方法获取部分数据,并将图像调整为224*224大小,进行模型训练:(与VGG中是一样的)

# 定义两个方法随机抽取部分样本演示

# 获取训练集数据

def get_train(size):

# 随机生成要抽样的样本的索引

index = np.random.randint(0, np.shape(train_images)[0], size)

# 将这些数据resize成22*227大小

resized_images = tf.image.resize_with_pad(train_images[index],224,224,)

# 返回抽取的

return resized_images.numpy(), train_labels[index]

# 获取测试集数据

def get_test(size):

# 随机生成要抽样的样本的索引

index = np.random.randint(0, np.shape(test_images)[0], size)

# 将这些数据resize成224*224大小

resized_images = tf.image.resize_with_pad(test_images[index],224,224,)

# 返回抽样的测试样本

return resized_images.numpy(), test_labels[index]调用上述两个方法,获取参与模型训练和测试的数据集:

# 获取训练样本和测试样本

train_images,train_labels = get_train(256)

test_images,test_labels = get_test(128)3.2 模型编译

# 指定优化器,损失函数和评价指标

optimizer = tf.keras.optimizers.SGD(learning_rate=0.01, momentum=0.0)

# 模型有3个输出,所以指定损失函数对应的权重系数

net.compile(optimizer=optimizer,

loss='sparse_categorical_crossentropy',

metrics=['accuracy'],loss_weights=[1,0.3,0.3])3.3 模型训练

# 模型训练:指定训练数据,batchsize,epoch,验证集

net.fit(train_images,train_labels,batch_size=128,epochs=3,verbose=1,validation_split=0.1)训练过程:

Epoch 1/3 2/2 [==============================] - 8s 4s/step - loss: 2.9527 - accuracy: 0.1174 - val_loss: 3.3254 - val_accuracy: 0.1154 Epoch 2/3 2/2 [==============================] - 7s 4s/step - loss: 2.8111 - accuracy: 0.0957 - val_loss: 2.2718 - val_accuracy: 0.2308 Epoch 3/3 2/2 [==============================] - 7s 4s/step - loss: 2.3055 - accuracy: 0.0957 - val_loss: 2.2669 - val_accuracy: 0.2308

2.4 模型评估

# 指定测试数据

net.evaluate(test_images,test_labels,verbose=1)输出为:

4/4 [==============================] - 1s 338ms/step - loss: 2.3110 - accuracy: 0.0781 [2.310971260070801, 0.078125]

4.延伸版本

GoogLeNet是以InceptionV1为基础进行构建的,所以GoogLeNet也叫做InceptionNet,在随后的⼏年⾥,研究⼈员对GoogLeNet进⾏了数次改进, 就又产生了InceptionV2,V3,V4等版本。

4.1 InceptionV2

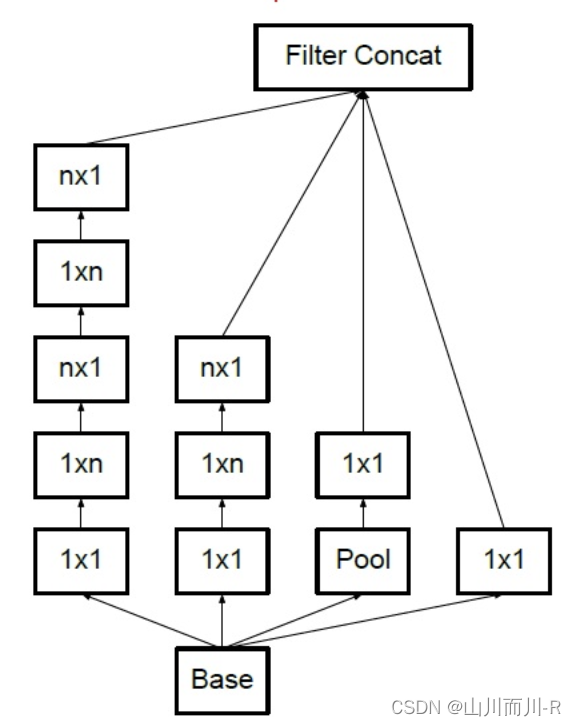

在InceptionV2中将大卷积核拆分为小卷积核,将V1中的5×55×5的卷积用两个3×33×3的卷积替代,从而增加网络的深度,减少了参数。

4.2 InceptionV3

将n×n卷积分割为1×n和n×1两个卷积,例如,一个的3×33×3卷积首先执行一个1×31×3的卷积,然后执行一个3×13×1的卷积,这种方法的参数量和计算量都比原来降低。

总结

- 知道GoogLeNet的网络架构:有基础模块Inception构成

- 能够利用GoogleNet完成图像分类

++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

下面是另一个代码实现GooLeNet网络模型构建和之前代码不冲突

GooLeNet代码实现

展示模型搭建代码

import torch

import torch.nn as nn

import torch.nn.functional as F

#conv+ReLU

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, **kwargs)

self.relu = nn.ReLU()

def forward(self, x):

x = self.conv(x)

x = self.relu(x)

return x

#前部

class Front(nn.Module):

def __init__(self):

super(Front, self).__init__()

self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)

self.maxpool1 = nn.MaxPool2d(3, stride=2,ceil_mode=True)

self.conv2 = BasicConv2d(64, 64, kernel_size=1)

self.conv3 = BasicConv2d(64, 192, kernel_size=3, padding=1)

self.maxpool2 = nn.MaxPool2d(3, stride=2,ceil_mode=True)

def forward(self,input):

#输入:(N,3,224,224)

x = self.conv1(input)#(N,64,112,112)

x = self.maxpool1(x)#(N,64,56,56)

x = self.conv2(x)#(N,64,56,56)

x = self.conv3(x)#(N,192,56,56)

x = self.maxpool2(x)#(N,192,28,28)

return x

class Inception(nn.Module):

def __init__(self, in_channels, ch1x1, ch3x3_1_1, ch3x3_1, ch3x3_2_1, ch3x3_2, pool_ch):

super(Inception, self).__init__()

self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)

self.branch2 = nn.Sequential(

BasicConv2d(in_channels, ch3x3_1_1, kernel_size=1),

BasicConv2d(ch3x3_1_1, ch3x3_1, kernel_size=3, padding=1)

)

self.branch3 = nn.Sequential(

BasicConv2d(in_channels, ch3x3_2_1, kernel_size=1),

BasicConv2d(ch3x3_2_1, ch3x3_2, kernel_size=3, padding=1)

)

self.branch4 = nn.Sequential(

nn.MaxPool2d(kernel_size=3, stride=1, padding=1),

BasicConv2d(in_channels, pool_ch, kernel_size=1)

)

def forward(self, x):

#输入(N,Cin,Hin,Win)

branch1 = self.branch1(x)#(N,C1,Hin,Win)

branch2 = self.branch2(x)#(N,C2,Hin,Win)

branch3 = self.branch3(x)#(N,C3,Hin,Win)

branch4 = self.branch4(x)#(N,C4,Hin,Win)

outputs = [branch1, branch2, branch3, branch4]

return torch.cat(outputs, 1)#(N,C1+C2+C3+C4,Hin,Win)

#辅助分类器

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.averagePool = nn.AvgPool2d(kernel_size=5, stride=3)

self.conv = BasicConv2d(in_channels, 128, kernel_size=1)

self.fc1 = nn.Linear(2048, 1024)

self.fc2 = nn.Linear(1024, num_classes)

def forward(self, x):

# 输入:aux1:(N,512,14,14), aux2: (N,528,14,14)

x = self.averagePool(x)# aux1:(N,512,4,4), aux2: (N,528,4,4)

x = self.conv(x)# (N,128,4,4)

x = torch.flatten(x, 1)# (N,2048)

x = F.dropout(x, 0.5, training=self.training)

x = F.relu(self.fc1(x))# (N,1024)

x = F.dropout(x, 0.5, training=self.training)

x = self.fc2(x)# (N,num_classes)

return x

# GooLeNet网络主体

class GoogLeNet(nn.Module):

def __init__(self, num_classes=1000, aux_logits=True):

super(GoogLeNet, self).__init__()

self.aux_logits = aux_logits

self.front = Front()

self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)

self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)

self.maxpool3 = nn.MaxPool2d(3, stride=2,ceil_mode=True)

self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)

self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)

self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)

self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)

self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)

self.maxpool4 = nn.MaxPool2d(3, stride=2,ceil_mode=True)

self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)

self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

if self.aux_logits:

self.aux1 = InceptionAux(512, num_classes)

self.aux2 = InceptionAux(528, num_classes)

self.avgpool = nn.AdaptiveAvgPool2d((1, 1))

self.dropout = nn.Dropout(0.4)

self.fc = nn.Linear(1024, num_classes)

def forward(self, x):

#输入:(N,3,224,224)

x = self.front(x)#(N,192,28,28)

x = self.inception3a(x)#(N,256,28,28)

x = self.inception3b(x)#(N,480,28,28)

x = self.maxpool3(x)#(N,480,14,14)

x = self.inception4a(x)#(N,512,14,14)

if self.training and self.aux_logits:

aux1 = self.aux1(x)

x = self.inception4b(x)#(N,512,14,14)

x = self.inception4c(x)#(N,512,14,14)

x = self.inception4d(x)#(N,528,14,14)

if self.training and self.aux_logits:

aux2 = self.aux2(x)

x = self.inception4e(x)#(N,832,14,14)

x = self.maxpool4(x)#(N,832,7,7)

x = self.inception5a(x)#(N,832,7,7)

x = self.inception5b(x)#(N,1024,7,7)

x = self.avgpool(x)#(N,1024,1,1)

x = torch.flatten(x, 1)#(N,1024)

x = self.dropout(x)

x = self.fc(x)#(N,num_classes)

if self.training and self.aux_logits:

return x, aux2, aux1

return x使用 Pytorch 搭建 GoogleNet 网络

本代码使用的数据集来自 “花分类” 数据集,→ 传送门 ←(具体内容看 data_set文件夹下的 README.md)

- model.py ( 搭建 GoogleNet 网络模型 )

import torch.nn as nn

import torch

import torch.nn.functional as F

class GoogleNet(nn.Module):

# aux_logits: 是否使用辅助分类器(训练的时候为True, 验证的时候为False)

def __init__(self, num_classes=1000, aux_logits=True, init_weight=False):

super(GoogleNet, self).__init__()

self.aux_logits = aux_logits

self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)

self.maxpool1 = nn.MaxPool2d(3, stride=2, ceil_mode=True) # 当结构为小数时,ceil_mode=True向上取整,=False向下取整

# nn.LocalResponseNorm (此处省略)

self.conv2 = nn.Sequential(

BasicConv2d(64, 64, kernel_size=1),

BasicConv2d(64, 192, kernel_size=3, padding=1)

)

self.maxpool2 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)

self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)

self.maxpool3 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)

self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)

self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)

self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)

self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)

self.maxpool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)

self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

if aux_logits: # 使用辅助分类器

self.aux1 = InceptionAux(512, num_classes)

self.aux2 = InceptionAux(528, num_classes)

self.avgpool = nn.AdaptiveAvgPool1d((1, 1))

self.dropout = nn.Dropout(0.4)

self.fc = nn.Linear(1024, num_classes)

if init_weight:

self._initialize_weight()

def forward(self, x):

x = self.conv1(x)

x = self.maxpool1(x)

x = self.conv2(x)

x = self.maxpool2(x)

x = self.inception3a(x)

x = self.inception3b(x)

x =self.maxpool3(x)

x =self.inception4a(x)

if self.training and self.aux_logits:

aux1 = self.aux1(x)

x = self.inception4b(x)

x = self.inception4c(x)

x = self.inception4d(x)

if self.training and self.aux_logits:

aux2 = self.aux2(x)

x = self.inception4e(x)

x =self.maxpool4(x)

x = self.inception5a(x)

x = self.inception5b(x)

x = self.avgpool(x)

x = torch.flatten(x, 1)

x = self.dropout(x)

x = self.fc(x)

if self.training and self.aux_logits:

return x, aux1, aux2

return x

def _initialize_weight(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='')

if m.bias is not None:

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01)

nn.init.constant_(m.bias, 0)

# 创建 Inception 结构函数(模板)

class Inception(nn.Module):

# 参数为 Inception 结构的那几个卷积核的数量(详细见表)

def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):

super(Inception, self).__init__()

# 四个并联结构

self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)

self.branch2 = nn.Sequential(

BasicConv2d(in_channels, ch3x3red, kernel_size=1),

BasicConv2d(ch3x3red, ch3x3, kernel_size=3, padding=1)

)

self.branch3 = nn.Sequential(

BasicConv2d(in_channels, ch5x5red, kernel_size=1),

BasicConv2d(ch5x5red, ch5x5, kernel_size=5, padding=2)

)

self.branch4 = nn.Sequential(

nn.MaxPool2d(kernel_size=3, stride=1, padding=1),

BasicConv2d(in_channels, pool_proj, kernel_size=1)

)

def forward(self, x):

branch1 = self.branch1(x)

branch2 = self.branch2(x)

branch3 = self.branch3(x)

branch4 = self.branch4(x)

outputs = [branch1, branch2, branch3, branch4]

return torch.cat(outputs, 1)

# 创建辅助分类器结构函数(模板)

class InceptionAux(nn.Module):

def __init__(self, in_channels, num_classes):

super(InceptionAux, self).__init__()

self.avgPool = nn.AvgPool2d(kernel_size=5, stride=3)

self.conv = BasicConv2d(in_channels, 128, kernel_size=1)

self.fc1 = nn.Linear(2048, 1024)

self.fc2 = nn.Linear(1024, num_classes)

def forward(self, x):

# aux1: N x 512 x 14 x 14 aux2: N x 528 x 14 x 14(输入)

x = self.avgPool(x)

# aux1: N x 512 x 4 x 4 aux2: N x 528 x 4 x 4(输出) 4 = (14 - 5)/3 + 1

x = self.conv(x)

x = torch.flatten(x, 1) # 展平

x = F.dropout(x, 0.5, training=self.training)

x = F.relu(self.fc1(x), inplace=True)

x = F.dropout(x, 0.5, training=self.training)

x = self.fc2(x)

return x

# 创建卷积层函数(模板)

class BasicConv2d(nn.Module):

def __init__(self, in_channels, out_channels, **kwargs):

super(BasicConv2d, self).__init__()

self.conv = nn.Conv2d(in_channels, out_channels, **kwargs)

self.relu = nn.ReLU(True)

def forward(self, x):

x = self.conv(x)

x = self.relu(x)

return x

- train.py ( 训练网络 )

import os

import json

import torch

import torch.nn as nn

from torchvision import transforms, datasets

import torch.optim as optim

from tqdm import tqdm

from model import GoogleNet

def main():

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print("using {} device.".format(device))

data_transform = {

"train": transforms.Compose([transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),

"val": transforms.Compose([transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

data_root = os.path.abspath(os.path.join(os.getcwd(), "../..")) # get data root path

image_path = os.path.join(data_root, "data_set", "flower_data") # flower data set path

assert os.path.exists(image_path), "{} path does not exist.".format(image_path)

train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"),

transform=data_transform["train"])

train_num = len(train_dataset)

# {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}

flower_list = train_dataset.class_to_idx

cla_dict = dict((val, key) for key, val in flower_list.items())

# write dict into json file

json_str = json.dumps(cla_dict, indent=4)

with open('class_indices.json', 'w') as json_file:

json_file.write(json_str)

batch_size = 32

nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8]) # number of workers

print('Using {} dataloader workers every process'.format(nw))

train_loader = torch.utils.data.DataLoader(train_dataset,

batch_size=batch_size, shuffle=True,

num_workers=nw)

validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"),

transform=data_transform["val"])

val_num = len(validate_dataset)

validate_loader = torch.utils.data.DataLoader(validate_dataset,

batch_size=batch_size, shuffle=False,

num_workers=nw)

print("using {} images for training, {} images for validation.".format(train_num,

val_num))

net = GoogleNet(num_classes=5, aux_logits=True, init_weights=True)

net.to(device)

loss_function = nn.CrossEntropyLoss()

optimizer = optim.Adam(net.parameters(), lr=0.0003)

epochs = 30

best_acc = 0.0

save_path = './googleNet.pth'

train_steps = len(train_loader)

for epoch in range(epochs):

# train

net.train()

running_loss = 0.0

train_bar = tqdm(train_loader)

for step, data in enumerate(train_bar):

images, labels = data

optimizer.zero_grad()

logits, aux_logits2, aux_logits1 = net(images.to(device)) # 由于训练的时候会使用辅助分类器,所有相当于有三个返回结果

loss0 = loss_function(logits, labels.to(device))

loss1 = loss_function(aux_logits1, labels.to(device))

loss2 = loss_function(aux_logits2, labels.to(device))

loss = loss0 + loss1 * 0.3 + loss2 * 0.3

loss.backward()

optimizer.step()

# print statistics

running_loss += loss.item()

train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch + 1,

epochs,

loss)

# validate

net.eval()

acc = 0.0 # accumulate accurate number / epoch

with torch.no_grad():

val_bar = tqdm(validate_loader)

for val_data in val_bar:

val_images, val_labels = val_data

outputs = net(val_images.to(device)) # eval model only have last output layer

predict_y = torch.max(outputs, dim=1)[1]

acc += torch.eq(predict_y, val_labels.to(device)).sum().item()

val_accurate = acc / val_num

print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %

(epoch + 1, running_loss / train_steps, val_accurate))

if val_accurate > best_acc:

best_acc = val_accurate

torch.save(net.state_dict(), save_path)

print('Finished Training')

if __name__ == '__main__':

main()

- predict.py ( 使用训练好的模型网络对图像分类 )

import os

import json

import torch

from PIL import Image

from torchvision import transforms

import matplotlib.pyplot as plt

from model import GoogleNet

def main():

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

data_transform = transforms.Compose(

[transforms.Resize((224, 224)),

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

# load image

img_path = "../tulip.jpg"

assert os.path.exists(img_path), "file: '{}' dose not exist.".format(img_path)

img = Image.open(img_path)

plt.imshow(img)

# [N, C, H, W]

img = data_transform(img)

# expand batch dimension

img = torch.unsqueeze(img, dim=0)

# read class_indict

json_path = './class_indices.json'

assert os.path.exists(json_path), "file: '{}' dose not exist.".format(json_path)

json_file = open(json_path, "r")

class_indict = json.load(json_file)

# create model

model = GoogleNet(num_classes=5, aux_logits=False).to(device)

# load model weights

weights_path = "./googleNet.pth"

assert os.path.exists(weights_path), "file: '{}' dose not exist.".format(weights_path)

missing_keys, unexpected_keys = model.load_state_dict(torch.load(weights_path, map_location=device),

strict=False)

model.eval()

with torch.no_grad():

# predict class

output = torch.squeeze(model(img.to(device))).cpu()

predict = torch.softmax(output, dim=0)

predict_cla = torch.argmax(predict).numpy()

print_res = "class: {} prob: {:.3}".format(class_indict[str(predict_cla)],

predict[predict_cla].numpy())

plt.title(print_res)

print(print_res)

plt.show()

if __name__ == '__main__':

main()

参考文章:【学习笔记】GoogleNet 网络结构_googlenet特点-CSDN博客

参考文章:GoogLeNet详解-CSDN博客

参考文章:CNN经典网络模型(四):GoogLeNet简介及代码实现(PyTorch超详细注释版)_googlenet代码-CSDN博客