Flink学习笔记

前言:今天是学习 flink 的第 14 天啦!学习了 flink 高级特性和新特性之数据类型及 avro 序列化,主要是解决大数据领域数据规范化写入和规范化读取的问题,avro 数据结构可以节约存储空间,本文中结合企业真实应用场景,即 kafka 的读取和写入采用自定义序列化,结合自己实验猜想和代码实践,总结了很多自己的理解和想法,希望和大家多多交流!

Tips:"分享是快乐的源泉💧,在我的博客里,不仅有知识的海洋🌊,还有满满的正能量加持💪,快来和我一起分享这份快乐吧😊!

喜欢我的博客的话,记得点个红心❤️和小关小注哦!您的支持是我创作的动力!"

文章目录

- Flink学习笔记

- 四、Flink 高级特性和新特性

- 4. 数据类型及序列化

- 4.1 数据类型

- 4.2 POJO 类型细节

- 4.3 Avro优点介绍

- 4.4 定义Avro Json格式

- 4.5 使用 Java 自定义序列化到 Kfaka

- 4.5.1 准备数据

- 4.5.2 自定义Avro 序列化和反序列化

- 4.5.3 创建生产者工具类

- 4.5.4 创建消费者工具类

- 4.5.5 运行程序

- 4.6 使用 Flink 自定义序列化到 Kafka

- 4.6.1 准备数据

- 4.6.2 自定义Avro 序列化和反序列化

- 4.6.3 创建 Flink-source 类

- 4.6.4 创建 Flink-sink 类

- 4.6.5 运行程序

四、Flink 高级特性和新特性

4. 数据类型及序列化

4.1 数据类型

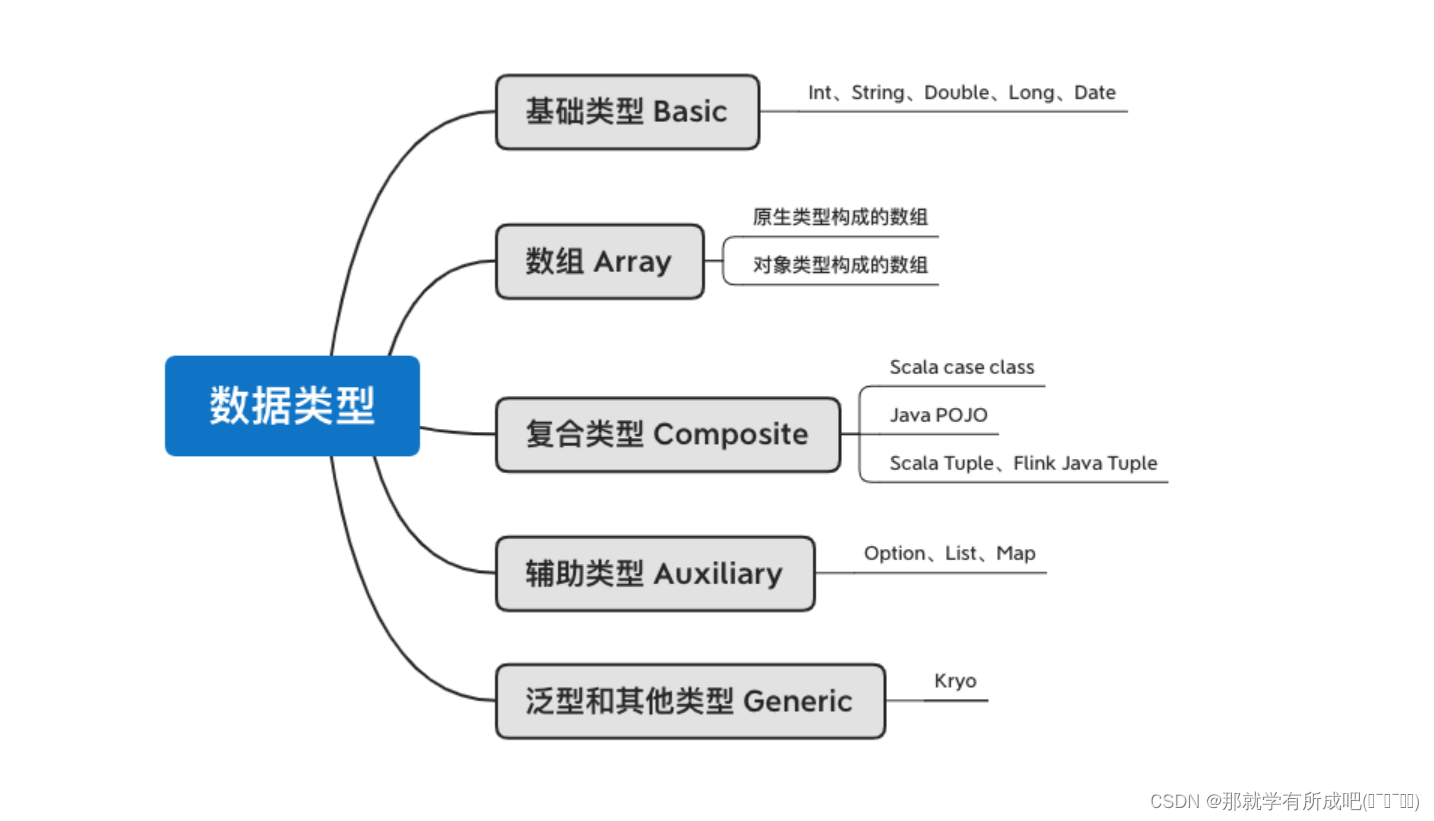

flink 支持的数据类型:七种

4.2 POJO 类型细节

注意事项:

- 该类需要有 public 修饰

- 该类需要有 public 修饰的无参构造函数

- 该类的所有(no-static)、(no-transient)字段必须是 public,如果不是 public 则必须是有标准的 getter 和 setter

- 该类的所有字段都必须是 flink 支持的数据类型

4.3 Avro优点介绍

-

Avro 是数据序列化系统,支持大批量数据交换的应用。

-

支持二进制序列化方式,性能好 / 效率高,使用 JSON 描述。

-

动态语言友好,RPC 远程调用,支持同步和异步通信。

4.4 定义Avro Json格式

- namespace:要生成的目录

- type:类型 avro 需要指定 record

- name:会自动生成的对象

- fields:要指定的字段

注意: 创建的文件后缀名一定要叫 avsc,而不是 avro 后缀,使用 idea 生成 Order 对象

{

"namespace": "cn.itcast.beans",

"type": "record",

"name": "OrderModel",

"fields": [

{"name": "userId", "type": "string"},

{"name": "timestamp", "type": "long"},

{"name": "money", "type": "double"},

{"name": "category", "type": "string"}

]

}

注意:由于在导入 pom 依赖的时候,需要注意插件冲突,注释掉以下依赖,不然会一直爆错!

<!-- 这个会和 avro 冲突,所以先注释一下-->

<!-- <dependency>-->

<!-- <groupId>org.apache.hive</groupId>-->

<!-- <artifactId>hive-exec</artifactId>-->

<!-- <version>2.1.0</version>-->

<!-- </dependency>-->

- 快看一!导入需要的依赖到 pom 文件中:

<dependency>

<groupId>org.apache.avro</groupId>

<artifactId>avro</artifactId>

<version>1.8.2</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-avro -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-avro</artifactId>

<version>${flink.version}</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.kafka/kafka-clients -->

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>2.5.1</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>2.5.1</version>

</dependency>

- 快看二!导入需要的插件到 pom 文件中:

<!-- avro编译插件 -->

<plugin>

<groupId>org.apache.avro</groupId>

<artifactId>avro-maven-plugin</artifactId>

<version>1.8.2</version>

<executions>

<execution>

<phase>generate-sources</phase>

<goals>

<goal>schema</goal>

</goals>

<configuration>

<sourceDirectory>${project.basedir}/src/main/avro/</sourceDirectory>

<outputDirectory>${project.basedir}/src/main/java/</outputDirectory>

</configuration>

</execution>

</executions>

</plugin>

4.5 使用 Java 自定义序列化到 Kfaka

4.5.1 准备数据

order.csv

user_001,1621718199,10.1,电脑

user_001,1621718201,14.1,手机

user_002,1621718202,82.5,手机

user_001,1621718205,15.6,电脑

user_004,1621718207,10.2,家电

user_001,1621718208,15.8,电脑

user_005,1621718212,56.1,电脑

user_002,1621718260,40.3,家电

user_001,1621718580,11.5,家居

user_001,1621718860,61.6,家居

4.5.2 自定义Avro 序列化和反序列化

首先需要实现2个接口分别为 Serializer 和 Deserializer 分别是序列化和反序列化

package cn.itcast.day14.serialization_java;

/**

* @author lql

* @time 2024-03-10 16:29:49

* @description TODO

*/

import cn.itcast.day14.beans.OrderModel;

import org.apache.avro.io.BinaryDecoder;

import org.apache.avro.io.BinaryEncoder;

import org.apache.avro.io.DecoderFactory;

import org.apache.avro.io.EncoderFactory;

import org.apache.avro.specific.SpecificDatumReader;

import org.apache.avro.specific.SpecificDatumWriter;

import java.io.ByteArrayInputStream;

import java.io.ByteArrayOutputStream;

import java.io.IOException;

import java.util.Map;

import org.apache.kafka.common.serialization.*;

/**

* 自定义序列化和反序列化

*/

public class SimpleAvroSchemaJava implements Serializer<OrderModel>, Deserializer<OrderModel> {

@Override

public void configure(Map<String, ?> configs, boolean isKey) {

}

@Override

public void close() {

}

@Override

public byte[] serialize(String s, OrderModel order) {

// 创建序列化执行器

SpecificDatumWriter<OrderModel> writer = new SpecificDatumWriter<OrderModel>(order.getSchema());

// 创建一个流 用存储序列化后的二进制文件

ByteArrayOutputStream out = new ByteArrayOutputStream();

// 创建二进制编码器

BinaryEncoder encoder = EncoderFactory.get().directBinaryEncoder(out, null);

try {

// 数据入都流中

writer.write(order, encoder);

} catch (IOException e) {

e.printStackTrace();

}

return out.toByteArray();

}

@Override

public OrderModel deserialize(String s, byte[] bytes) {

// 用来保存结果数据

OrderModel order = new OrderModel();

// 创建输入流用来读取二进制文件

ByteArrayInputStream arrayInputStream = new ByteArrayInputStream(bytes);

// 创建输入序列化执行器

SpecificDatumReader<OrderModel> stockSpecificDatumReader = new SpecificDatumReader<OrderModel>(order.getSchema());

//创建二进制解码器

BinaryDecoder binaryDecoder = DecoderFactory.get().directBinaryDecoder(arrayInputStream, null);

try {

// 数据读取

order= stockSpecificDatumReader.read(null, binaryDecoder);

} catch (IOException e) {

e.printStackTrace();

}

// 结果返回

return order;

}

}

4.5.3 创建生产者工具类

package cn.itcast.day14.serialization_java;

import cn.itcast.day14.beans.OrderModel;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerRecord;

import java.io.BufferedReader;

import java.io.File;

import java.io.FileReader;

import java.util.ArrayList;

import java.util.List;

import java.util.Properties;

/**

* @author lql

* @time 2024-03-10 16:33:31

* @description TODO

*/

public class OrderProducerJava {

public static void main(String[] args) {

// 获取数据

List<OrderModel> data = getData();

System.out.println(data);

try {

// 创建配置文件

Properties props = new Properties();

props.setProperty("bootstrap.servers", "node1:9092");

// 这里的健:还是 string 序列化

props.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");

// 这里的值:需要指向自定义的序列化

props.setProperty("value.serializer", "cn.itcast.day14.serialization_java.SimpleAvroSchemaJava");

// 创建kafka的生产者

KafkaProducer<String, OrderModel> userBehaviorProducer = new KafkaProducer<String, OrderModel>(props);

// 循环遍历数据

for (OrderModel orderModel : data) {

ProducerRecord<String, OrderModel> producerRecord

= new ProducerRecord<String, OrderModel>("order", orderModel);

userBehaviorProducer.send(producerRecord);

System.out.println("数据写入成功"+data);

Thread.sleep(1000);

}

} catch (InterruptedException e) {

e.printStackTrace();

}

}

public static List<OrderModel> getData() {

ArrayList<OrderModel> orderModels = new ArrayList<OrderModel>();

try {

BufferedReader br = new BufferedReader(

new FileReader(

new File("D:\\IDEA_Project\\BigData_Java\\flinkbase_pro\\data\\input\\order.csv")

)

);

String line = "";

while ((line = br.readLine()) != null) {

String[] fields = line.split(",");

orderModels.add(new OrderModel(fields[0],

Long.parseLong(fields[1]),

Double.parseDouble(fields[2]),

fields[3]));

}

} catch (Exception e) {

e.printStackTrace();

}

return orderModels;

}

}

4.5.4 创建消费者工具类

package cn.itcast.day14.serialization_java;

import cn.itcast.day14.beans.OrderModel;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.clients.consumer.KafkaConsumer;

import java.util.Arrays;

import java.util.Properties;

/**

* @author lql

* @time 2024-03-10 16:38:29

* @description TODO

*/

public class OrderConsumerJava {

public static void main(String[] args) {

Properties prop = new Properties();

prop.put("bootstrap.servers", "node1:9092");

prop.put("group.id", "order");

prop.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

// 设置反序列化类为自定义的avro反序列化类

prop.put("value.deserializer", "cn.itcast.day14.serialization_java.SimpleAvroSchemaJava");

KafkaConsumer<String, OrderModel> consumer = new KafkaConsumer<String, OrderModel>(prop);

consumer.subscribe(Arrays.asList("order"));

while (true) {

// poll 方法用于从 kafka 中拉取数据

ConsumerRecords<String, OrderModel> poll = consumer.poll(1000);

for (ConsumerRecord<String, OrderModel> stringStockConsumerRecord : poll) {

System.out.println(stringStockConsumerRecord.value());

}

}

}

}

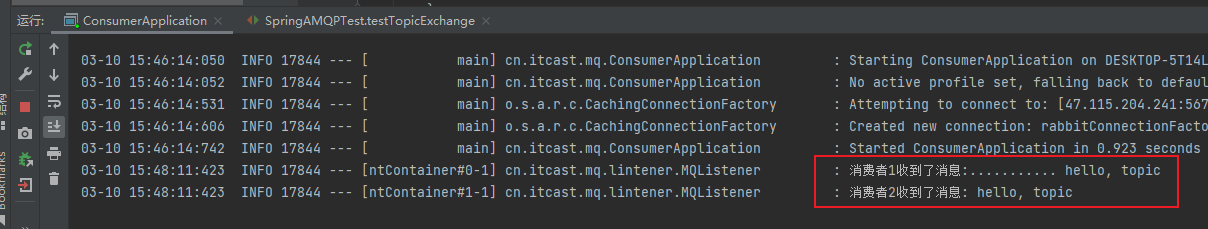

4.5.5 运行程序

# 首先启动zookeeper

# 启动 kafka,记得后台启动

后台:

cd /export/servers/kafka_2.11-0.10.0.0

nohup bin/kafka-server-start.sh config/server.properties 2>&1 &

停止:

cd /export/servers/kafka_2.11-0.10.0.0

bin/kafka-server-stop.sh

# 创建topic

bin/kafka-topics.sh --create --zookeeper node1:2181 --replication-factor 1 --partitions 1 --topic order

# 模拟消费者

bin/kafka-console-consumer.sh --zookeeper node1:2181 --from-beginning --topic order

结果:

- 生产者打印:

[{"userId": "user_001", "timestamp": 1621718199, "money": 10.1, "category": "电脑"}, {"userId": "user_001", "timestamp": 1621718201, "money": 14.1, "category": "手机"}, {"userId": "user_002", "timestamp": 1621718202, "money": 82.5, "category": "手机"}, {"userId": "user_001", "timestamp": 1621718205, "money": 15.6, "category": "电脑"}, {"userId": "user_004", "timestamp": 1621718207, "money": 10.2, "category": "家电"}, {"userId": "user_001", "timestamp": 1621718208, "money": 15.8, "category": "电脑"}, {"userId": "user_005", "timestamp": 1621718212, "money": 56.1, "category": "电脑"}, {"userId": "user_002", "timestamp": 1621718260, "money": 40.3, "category": "家电"}, {"userId": "user_001", "timestamp": 1621718580, "money": 11.5, "category": "家居"}, {"userId": "user_001", "timestamp": 1621718860, "money": 61.6, "category": "家居"}]

数据写入成功

- 消费者打印:

{"userId": "user_001", "timestamp": 1621718199, "money": 10.1, "category": "电脑"}

{"userId": "user_001", "timestamp": 1621718201, "money": 14.1, "category": "手机"}

{"userId": "user_002", "timestamp": 1621718202, "money": 82.5, "category": "手机"}

{"userId": "user_001", "timestamp": 1621718205, "money": 15.6, "category": "电脑"}

{"userId": "user_004", "timestamp": 1621718207, "money": 10.2, "category": "家电"}

{"userId": "user_001", "timestamp": 1621718208, "money": 15.8, "category": "电脑"}

{"userId": "user_005", "timestamp": 1621718212, "money": 56.1, "category": "电脑"}

{"userId": "user_002", "timestamp": 1621718260, "money": 40.3, "category": "家电"}

{"userId": "user_001", "timestamp": 1621718580, "money": 11.5, "category": "家居"}

{"userId": "user_001", "timestamp": 1621718860, "money": 61.6, "category": "家居"}

总结:值的序列化需要指定自己定义的序列化。

4.6 使用 Flink 自定义序列化到 Kafka

4.6.1 准备数据

order.csv

user_001,1621718199,10.1,电脑

user_001,1621718201,14.1,手机

user_002,1621718202,82.5,手机

user_001,1621718205,15.6,电脑

user_004,1621718207,10.2,家电

user_001,1621718208,15.8,电脑

user_005,1621718212,56.1,电脑

user_002,1621718260,40.3,家电

user_001,1621718580,11.5,家居

user_001,1621718860,61.6,家居

4.6.2 自定义Avro 序列化和反序列化

首先需要实现2个接口分别为 SerializationSchema 和 DeserializationSchema 分别是序列化和反序列化

package cn.itcast.day14.serialization_flink;

/**

* @author lql

* @time 2024-03-10 17:35:09

* @description TODO

*/

import cn.itcast.day14.beans.OrderModel;

import org.apache.avro.io.BinaryDecoder;

import org.apache.avro.io.BinaryEncoder;

import org.apache.avro.io.DecoderFactory;

import org.apache.avro.io.EncoderFactory;

import org.apache.avro.specific.SpecificDatumReader;

import org.apache.avro.specific.SpecificDatumWriter;

import org.apache.flink.api.common.serialization.DeserializationSchema;

import org.apache.flink.api.common.serialization.SerializationSchema;

import org.apache.flink.api.common.typeinfo.TypeInformation;

import java.io.ByteArrayInputStream;

import java.io.ByteArrayOutputStream;

import java.io.IOException;

/**

* 自定义序列化和反序列化

*/

public class SimpleAvroSchemaFlink implements DeserializationSchema<OrderModel>, SerializationSchema<OrderModel> {

@Override

public byte[] serialize(OrderModel order) {

// 创建序列化执行器

SpecificDatumWriter<OrderModel> writer = new SpecificDatumWriter<OrderModel>(order.getSchema());

// 创建一个流 用存储序列化后的二进制文件

ByteArrayOutputStream out = new ByteArrayOutputStream();

// 创建二进制编码器

BinaryEncoder encoder = EncoderFactory.get().directBinaryEncoder(out, null);

try {

// 数据入都流中

writer.write(order, encoder);

} catch (IOException e) {

e.printStackTrace();

}

return out.toByteArray();

}

@Override

public TypeInformation<OrderModel> getProducedType() {

return TypeInformation.of(OrderModel.class);

}

@Override

public OrderModel deserialize(byte[] bytes) throws IOException {

// 用来保存结果数据

OrderModel userBehavior = new OrderModel();

// 创建输入流用来读取二进制文件

ByteArrayInputStream arrayInputStream = new ByteArrayInputStream(bytes);

// 创建输入序列化执行器

SpecificDatumReader<OrderModel> stockSpecificDatumReader = new SpecificDatumReader<OrderModel>(userBehavior.getSchema());

//创建二进制解码器

BinaryDecoder binaryDecoder = DecoderFactory.get().directBinaryDecoder(arrayInputStream, null);

try {

// 数据读取

userBehavior=stockSpecificDatumReader.read(null, binaryDecoder);

} catch (IOException e) {

e.printStackTrace();

}

// 结果返回

return userBehavior;

}

@Override

public boolean isEndOfStream(OrderModel userBehavior) {

return false;

}

}

4.6.3 创建 Flink-source 类

package cn.itcast.day14.serialization_flink;

/**

* @author lql

* @time 2024-03-10 17:37:15

* @description TODO

*/

import cn.itcast.day14.beans.OrderModel;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.DataStreamSource;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer;

import java.util.Properties;

public class OrderProducerFlink {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

DataStreamSource<String> value = env.readTextFile("D:\\IDEA_Project\\BigData_Java\\flinkbase_pro\\data\\input\\order.csv");

DataStream<OrderModel> orderModelDataStream = value.map(row -> {

String[] fields = row.split(",");

return new OrderModel(fields[0], Long.parseLong(fields[1]), Double.parseDouble(fields[2]), fields[3]);

});

Properties prop = new Properties();

prop.setProperty("bootstrap.servers", "node1:9092");

//4.连接Kafka

FlinkKafkaProducer<OrderModel> producer = new FlinkKafkaProducer<>(

"order",

new SimpleAvroSchemaFlink(),

prop

);

//5.将数据打入kafka

orderModelDataStream.addSink(producer);

//6.执行任务

env.execute();

}

}

4.6.4 创建 Flink-sink 类

package cn.itcast.day14.serialization_flink;

/**

* @author lql

* @time 2024-03-10 17:40:04

* @description TODO

*/

import cn.itcast.day14.beans.OrderModel;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

import java.util.Properties;

public class OrderConsumerFlink {

public static void main(String[] args) throws Exception {

//1.构建流处理运行环境

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setParallelism(1); // 设置并行度1 方便后面测试

// 2.设置kafka 配置信息

Properties prop = new Properties();

prop.put("bootstrap.servers", "node1:9092");

prop.put("group.id", "UserBehavior");

prop.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

// 下面有提及:SimpleAvroSchemaFlink,上面就不需要指定了!

// 3.构建Kafka 连接器

FlinkKafkaConsumer kafka = new FlinkKafkaConsumer<OrderModel>("order", new SimpleAvroSchemaFlink(), prop);

//4.设置Flink层最新的数据开始消费

kafka.setStartFromLatest();

//5.基于kafka构建数据源

DataStream<OrderModel> data = env.addSource(kafka);

//6.结果打印

data.print();

env.execute();

}

}

4.6.5 运行程序

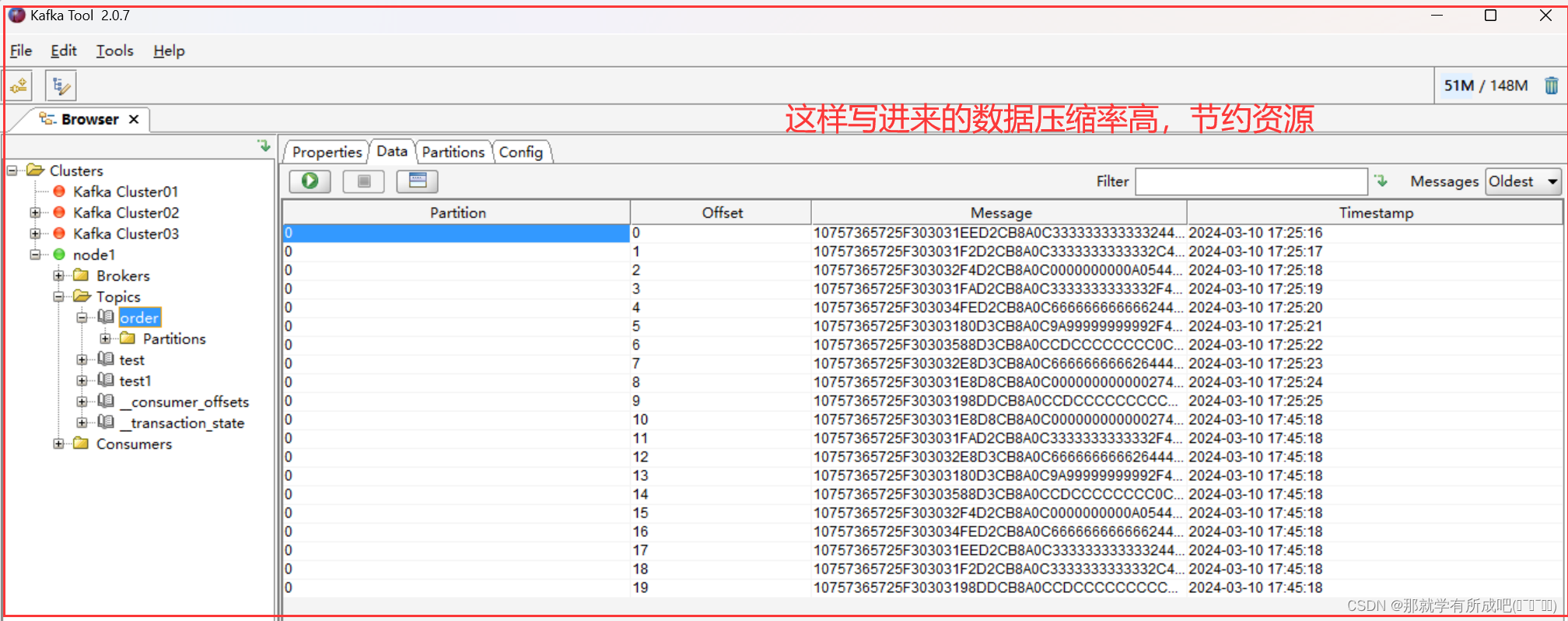

这里运用 Kafka-Tool 2.0.7 可视化工具,工具包放在资源处啦,大家感兴趣可以观看我上传的资源哟!

总结:📚 刚开始学习这个知识点时,我真是感觉有些吃力,觉得它太抽象、太难以理解了。但想到这是企业工作环境中必须掌握的技术,能够为企业节省资源、提高数据存储效率,我就鼓起勇气,决定迎难而上。💪

于是,我根据例子,一个字母一个字母地敲下代码,反复调试、尝试。终于,当程序运行成功的那一刻,我感到了前所未有的成就感!

🎉原来,成功真的就是坚持的结果。只有当你坚持不懈地努力,才能有机会看到胜利的曙光。

🌈明天,我也要继续努力学习,不断挑战自己,迎接更多的成功!🚀 加油!💪