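In deep learning, a trained model often has to be used from another program. This requires saving the model in one program and loading it in the other.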

Code:

import math

import torch
from torch import nn
from d2l import torch as d2l
import matplotlib.pyplot as plt

# Generate a random dataset
max_degree = 20  # maximum degree of the polynomial
n_train, n_test = 100, 100  # sizes of the training and test sets
true_w = torch.zeros(max_degree)
true_w[0:4] = torch.tensor([5, 1.2, -3.4, 5.6])

# Generate the features
features = torch.randn((n_train + n_test, 1))
permutation_indices = torch.randperm(features.size(0))
# Shuffle features with the random permutation (indexing returns a new tensor; it is not an in-place change)
features = features[permutation_indices]
poly_features = torch.pow(features, torch.arange(max_degree).reshape(1, -1))
for i in range(max_degree):
    poly_features[:, i] /= math.gamma(i + 1)  # gamma(i + 1) = i!

# Generate the labels
labels = torch.matmul(poly_features, true_w)
labels += torch.normal(0, 0.1, size=labels.shape)

# Evaluate the average loss of the model on a dataset
def evaluate_loss(net, data_iter, loss):
    metric = d2l.Accumulator(2)  # sum of the losses, number of samples
    for X, y in data_iter:
        out = net(X)
        y = y.reshape(out.shape)
        l = loss(out, y)
        metric.add(l.sum(), l.numel())
    return metric[0] / metric[1]

# L2 penalty on the weights of the first layer of the model
def l2_penalty(net):
    w = net[0].weight
    return torch.sum(w.pow(2)) / 2

def train(train_features, test_features, train_labels, test_labels, lambd,
          num_epochs=100):
    loss = d2l.squared_loss
    input_shape = train_features.shape[-1]
    net = nn.Sequential(nn.Linear(input_shape, 1, bias=False))  # the model
    batch_size = min(10, train_labels.shape[0])
    train_iter = d2l.load_array((train_features, train_labels.reshape(-1, 1)),
                                batch_size)
    test_iter = d2l.load_array((test_features, test_labels.reshape(-1, 1)),
                               batch_size, is_train=False)

    # Lists that store the training and test loss of each epoch
    train_losses = []
    test_losses = []
    total_loss = 0
    total_samples = 0

    for epoch in range(num_epochs):
        for X, y in train_iter:
            out = net(X)
            y = y.reshape(-1, 1)  # make sure y is two-dimensional
            l = loss(out, y) + lambd * l2_penalty(net)
            # Backpropagation and parameter update
            l.sum().backward()
            d2l.sgd(net.parameters(), lr=0.01, batch_size=batch_size)
            total_loss += l.sum().item()  # accumulate the loss over all elements
            total_samples += y.numel()  # count the samples
        a = total_loss / total_samples  # average training loss of this epoch
        train_losses.append(a)
        test_loss = evaluate_loss(net, test_iter, loss)
        test_losses.append(test_loss)
        total_loss = 0
        total_samples = 0
        print(f"Epoch {epoch + 1}/{num_epochs}:")
        print(f"Training loss: {a:.4f} Test loss: {test_loss:.4f} ")

    print(net[0].weight)
    torch.save(net.state_dict(), "NetSave")  # save the model weights
    net_try = nn.Sequential(nn.Linear(input_shape, 1, bias=False))
    print("net_try")
    print(net_try[0].weight)
    net_try.load_state_dict(torch.load("NetSave"))
    net_try.eval()  # evaluation mode
    print("net_try_load")
    print(net_try[0].weight)

    # Plot the loss curves
    plt.figure(figsize=(10, 6))
    plt.plot(train_losses, label='train', color='blue', linestyle='-', marker='.')
    plt.plot(test_losses, label='test', color='purple', linestyle='--', marker='.')
    plt.xlabel('epoch')
    plt.ylabel('loss')
    plt.title('Loss over Epochs')
    plt.legend()
    plt.grid(True)
    plt.ylim(0, 1)  # limit the y-axis to the range 0 to 1
    plt.show()

# Use the first 4 dimensions of the polynomial features
train(poly_features[:n_train, :4], poly_features[n_train:, :4],
      labels[:n_train], labels[n_train:], 0)

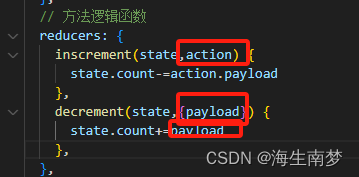

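## `net.parameters()`

`net.parameters()` is a method of a PyTorch model that returns an iterator over all of the model's parameters. The iterator yields every learnable parameter in the model (for example, weights and biases).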
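To make the behaviour of `net.parameters()` concrete, here is a minimal sketch. The model in it is a hypothetical stand-in (4 inputs, 1 output, with a bias term so that two tensors show up); it is not the model trained above.

```python
import torch
from torch import nn

# Hypothetical small model, used only for illustration
net = nn.Sequential(nn.Linear(4, 1))

# parameters() yields the learnable tensors themselves
for p in net.parameters():
    print(tuple(p.shape), p.requires_grad)

# named_parameters() yields (name, tensor) pairs; the names match the keys of state_dict()
names = [name for name, _ in net.named_parameters()]
num_params = sum(p.numel() for p in net.parameters())
print(names)       # ['0.weight', '0.bias']
print(num_params)  # 4 weights + 1 bias = 5
```

Because `parameters()` returns an iterator, it is exhausted after one pass; call it again whenever a fresh pass over the parameters is needed.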

The model in the code above is a simple linear model:

net = nn.Sequential(nn.Linear(input_shape, 1, bias=False))  # the model

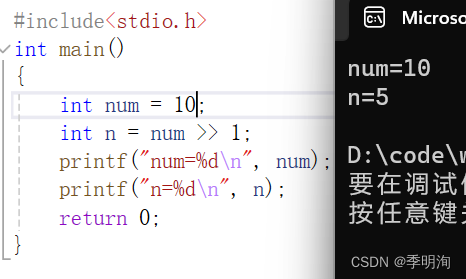
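As a rough illustration of what this model contains, the sketch below checks that a bias-free `nn.Linear` is just a matrix product with its weight, and that its `state_dict()` holds a single entry. The value `input_shape = 4` is an assumption that matches the four polynomial features passed to `train` above.

```python
import torch
from torch import nn

input_shape = 4  # assumed: the first four polynomial features
net = nn.Sequential(nn.Linear(input_shape, 1, bias=False))

X = torch.randn(3, input_shape)   # a batch of 3 random samples
out = net(X)                      # forward pass through the model
manual = X @ net[0].weight.T      # the same computation written by hand

# Without a bias, the only thing that gets saved is one weight matrix
state = net.state_dict()
keys = list(state.keys())
weight_shape = tuple(state["0.weight"].shape)
print(keys, weight_shape)
```

The key `0.weight` is the name that `load_state_dict` later looks for, which is why the receiving model has to be built with the same layers.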

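The model is saved and loaded as follows:

torch.save(net.state_dict(), "NetSave")  # save the model weights
net_try = nn.Sequential(nn.Linear(input_shape, 1, bias=False))  # rebuild the same architecture
print("net_try")
print(net_try[0].weight)  # randomly initialized weights
net_try.load_state_dict(torch.load("NetSave"))  # load the saved weights
net_try.eval()  # evaluation mode
print("net_try_load")
print(net_try[0].weight)  # weights after loading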

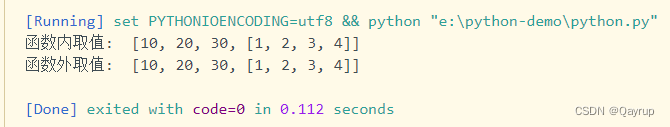

The result is shown above.
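Instead of comparing the printed tensors by eye, the round trip can also be checked programmatically. The following is a sketch under a few assumptions: `build_net` is a hypothetical helper, the weights are untrained, and the file is written to a temporary directory rather than to `NetSave` in the working directory.

```python
import os
import tempfile

import torch
from torch import nn

def build_net(input_shape):
    # Hypothetical helper that builds the same architecture as above
    return nn.Sequential(nn.Linear(input_shape, 1, bias=False))

net = build_net(4)      # stands in for the trained model
net_try = build_net(4)  # fresh model with its own random weights

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "NetSave")
    torch.save(net.state_dict(), path)
    net_try.load_state_dict(torch.load(path))

net_try.eval()  # switches the module to evaluation mode

# After loading, both models hold exactly the same weight values
same_after = torch.equal(net[0].weight, net_try[0].weight)
print(same_after, net_try.training)
```

For a model that consists of a single linear layer, `eval()` does not change the output; it matters for layers such as dropout or batch normalization.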

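So, to use a trained model in another program, first save the trained model. The other program must define the same architecture as this model; the trained weights can then be loaded into that architecture.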
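The sketch below plays both roles in one script to illustrate this workflow, including what happens when the architectures do not match. It makes several assumptions: `build_net` is a hypothetical shared definition, the saved weights are untrained, a temporary directory replaces the real file location, and `map_location="cpu"` is one possible choice for loading on a machine without a GPU.

```python
import os
import tempfile

import torch
from torch import nn

def build_net(input_shape):
    # Hypothetical architecture definition that both programs would share
    return nn.Sequential(nn.Linear(input_shape, 1, bias=False))

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "NetSave")

    # "Program A": save only the weights (untrained here, for brevity)
    torch.save(build_net(4).state_dict(), path)

    # "Program B": rebuild the same architecture, then load the weights
    state = torch.load(path, map_location="cpu")
    model = build_net(4)
    model.load_state_dict(state)
    model.eval()
    with torch.no_grad():  # no gradients are needed for inference
        pred = model(torch.randn(2, 4))

    # A different architecture (3 inputs instead of 4) is rejected
    wrong = build_net(3)
    try:
        wrong.load_state_dict(state)
        mismatch_detected = False
    except RuntimeError as err:
        mismatch_detected = True
        print("load failed:", type(err).__name__)

print(tuple(pred.shape), mismatch_detected)
```

Keeping the architecture in one importable function or module is one way to make sure both programs stay in sync; the saved file itself carries only tensor names and values, not the layer definitions.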