Lifelong learning together with you: this is 程序员Android (Programmer Android).

A recommended classic article. After reading it, you will have learned the following:

1. Selecting the feature and configuring the feature table

2. Hooking up the algorithm

3. Custom metadata

4. Calling the algorithm from the APP

5. Conclusion

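1. Selecting the feature and configuring the feature table

1.1 Selecting the feature

Multi-frame noise reduction (MFNR) is a very common multi-frame algorithm, and MTK's predefined features already include MTK_FEATURE_MFNR and TP_FEATURE_MFNR. We can therefore reuse a matching feature instead of adding a new one. Since ours is a third-party algorithm, we choose TP_FEATURE_MFNR.

1.2 Configuring the feature table

With TP_FEATURE_MFNR chosen as the feature, we still need to add it to the feature table: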

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/mtk_scenario_mgr.cpp b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/mtk_scenario_mgr.cpp

index f14ff8a6e2..38365e0602 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/mtk_scenario_mgr.cpp

+++ b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/mtk_scenario_mgr.cpp

@@ -106,6 +106,7 @@ using namespace NSCam::v3::pipeline::policy::scenariomgr;

#define MTK_FEATURE_COMBINATION_TP_VSDOF_MFNR (MTK_FEATURE_MFNR | MTK_FEATURE_NR| MTK_FEATURE_ABF| MTK_FEATURE_CZ| MTK_FEATURE_DRE| MTK_FEATURE_HFG| MTK_FEATURE_DCE | MTK_FEATURE_FB| TP_FEATURE_VSDOF| TP_FEATURE_WATERMARK)

#define MTK_FEATURE_COMBINATION_TP_FUSION (NO_FEATURE_NORMAL | MTK_FEATURE_NR| MTK_FEATURE_ABF| MTK_FEATURE_CZ| MTK_FEATURE_DRE| MTK_FEATURE_HFG| MTK_FEATURE_DCE | MTK_FEATURE_FB| TP_FEATURE_FUSION| TP_FEATURE_WATERMARK)

#define MTK_FEATURE_COMBINATION_TP_PUREBOKEH (NO_FEATURE_NORMAL | MTK_FEATURE_NR| MTK_FEATURE_ABF| MTK_FEATURE_CZ| MTK_FEATURE_DRE| MTK_FEATURE_HFG| MTK_FEATURE_DCE | MTK_FEATURE_FB| TP_FEATURE_PUREBOKEH| TP_FEATURE_WATERMARK)

+#define MTK_FEATURE_COMBINATION_TP_MFNR (TP_FEATURE_MFNR | MTK_FEATURE_NR| MTK_FEATURE_ABF| MTK_FEATURE_CZ| MTK_FEATURE_DRE| MTK_FEATURE_HFG| MTK_FEATURE_DCE | MTK_FEATURE_FB| MTK_FEATURE_MFNR)

// streaming feature combination (TODO: it should be refined by streaming scenario feature)

#define MTK_FEATURE_COMBINATION_VIDEO_NORMAL (MTK_FEATURE_FB|TP_FEATURE_FB|TP_FEATURE_WATERMARK)

@@ -136,6 +137,7 @@ const std::vector<std::unordered_map<int32_t, ScenarioFeatures>> gMtkScenarioFe

ADD_CAMERA_FEATURE_SET(TP_FEATURE_HDR, MTK_FEATURE_COMBINATION_HDR)

ADD_CAMERA_FEATURE_SET(MTK_FEATURE_AINR, MTK_FEATURE_COMBINATION_AINR)

ADD_CAMERA_FEATURE_SET(MTK_FEATURE_MFNR, MTK_FEATURE_COMBINATION_MFNR)

+ ADD_CAMERA_FEATURE_SET(TP_FEATURE_MFNR, MTK_FEATURE_COMBINATION_TP_MFNR)

ADD_CAMERA_FEATURE_SET(MTK_FEATURE_REMOSAIC, MTK_FEATURE_COMBINATION_REMOSAIC)

ADD_CAMERA_FEATURE_SET(NO_FEATURE_NORMAL, MTK_FEATURE_COMBINATION_SINGLE)

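CAMERA_SCENARIO_END

Note:

On Android Q (10.0) and later, MTK streamlined how the scenario configuration table is customized. On those versions the feature must be configured in:

vendor/mediatek/proprietary/custom/[platform]/hal/camera/camera_custom_feature_table.cpp, where [platform] is a platform name such as mt6580 or mt6763.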
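The combination macros in the diff above are bitmasks: each feature is one flag, and a combination is the bitwise OR of the features allowed to run together in a scenario. A minimal sketch of that idea follows. The namespace `demo` and every bit value in it are hypothetical and do not match MTK's real constants; the 64-bit width is likewise an assumption.

```cpp
#include <cstdint>

namespace demo {
// Hypothetical bit values for illustration only: the real constants live in
// MTK's feature headers and will almost certainly differ.
constexpr std::uint64_t MTK_FEATURE_NR   = 1ULL << 0;
constexpr std::uint64_t MTK_FEATURE_ABF  = 1ULL << 1;
constexpr std::uint64_t MTK_FEATURE_CZ   = 1ULL << 2;
constexpr std::uint64_t MTK_FEATURE_DRE  = 1ULL << 3;
constexpr std::uint64_t MTK_FEATURE_HFG  = 1ULL << 4;
constexpr std::uint64_t MTK_FEATURE_DCE  = 1ULL << 5;
constexpr std::uint64_t MTK_FEATURE_FB   = 1ULL << 6;
constexpr std::uint64_t MTK_FEATURE_MFNR = 1ULL << 7;
constexpr std::uint64_t TP_FEATURE_MFNR  = 1ULL << 8;
constexpr std::uint64_t TP_FEATURE_VSDOF = 1ULL << 9;

// Same shape as MTK_FEATURE_COMBINATION_TP_MFNR in the diff: the OR of every
// feature that may be active together in this capture scenario.
constexpr std::uint64_t COMBINATION_TP_MFNR =
    TP_FEATURE_MFNR | MTK_FEATURE_NR | MTK_FEATURE_ABF | MTK_FEATURE_CZ |
    MTK_FEATURE_DRE | MTK_FEATURE_HFG | MTK_FEATURE_DCE | MTK_FEATURE_FB |
    MTK_FEATURE_MFNR;

// True if every bit of `feature` is set in `combination`.
constexpr bool contains(std::uint64_t combination, std::uint64_t feature) {
    return (combination & feature) == feature;
}
}  // namespace demo

static_assert(demo::contains(demo::COMBINATION_TP_MFNR, demo::TP_FEATURE_MFNR),
              "TP_MFNR is part of its own combination");
static_assert(!demo::contains(demo::COMBINATION_TP_MFNR, demo::TP_FEATURE_VSDOF),
              "VSDOF is not part of the TP_MFNR combination");
```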

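2. Hooking up the algorithm

2.1 Choosing a plugin for the algorithm

In vendor/mediatek/proprietary/hardware/mtkcam3/include/mtkcam3/3rdparty/plugin/PipelinePluginType.h, MTK HAL3 roughly divides the hook points for third-party algorithms into the following categories:

BokehPlugin: hook point for Bokeh algorithms, i.e. the blurring part of a dual-camera depth-of-field algorithm.

DepthPlugin: hook point for Depth algorithms, i.e. the depth-computation part of a dual-camera depth-of-field algorithm.

FusionPlugin: hook point for a combined dual-camera depth-of-field algorithm that does both Depth and Bokeh in one algorithm.

JoinPlugin: hook point for streaming-related algorithms; all preview algorithms are hooked here.

MultiFramePlugin: hook point for multi-frame algorithms, both YUV and RAW, e.g. MFNR/HDR.

RawPlugin: hook point for RAW algorithms, e.g. remosaic.

YuvPlugin: hook point for single-frame YUV algorithms, e.g. beautification and wide-angle lens distortion correction.

Pick the plugin that matches the algorithm being integrated. Ours is a multi-frame algorithm, so MultiFramePlugin is the only choice. Moreover, multi-frame algorithms are generally used only for still capture, not for preview.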
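The selection rules above can be restated as a small decision function. This is only an illustration: `Plugin`, `AlgoTraits` and `choosePlugin` are hypothetical names, not part of MTK's PipelinePluginType.h, and real algorithms may not fall into such clean categories.

```cpp
// Hypothetical enum mirroring the hook points listed above (not MTK's types).
enum class Plugin { Bokeh, Depth, Fusion, Join, MultiFrame, Raw, Yuv };

// Hypothetical description of an algorithm to be integrated.
struct AlgoTraits {
    bool dualCamera;     // dual-camera depth-of-field algorithm
    bool computesDepth;  // produces the depth map
    bool rendersBokeh;   // applies the blur
    bool forPreview;     // runs on the streaming (preview) path
    bool multiFrame;     // consumes several frames per output image
    bool rawDomain;      // operates on RAW instead of YUV
};

constexpr Plugin choosePlugin(const AlgoTraits& t) {
    if (t.dualCamera) {
        if (t.computesDepth && t.rendersBokeh) return Plugin::Fusion;
        return t.computesDepth ? Plugin::Depth : Plugin::Bokeh;
    }
    if (t.forPreview) return Plugin::Join;
    if (t.multiFrame) return Plugin::MultiFrame;  // YUV or RAW, e.g. MFNR/HDR
    if (t.rawDomain) return Plugin::Raw;          // e.g. remosaic
    return Plugin::Yuv;                           // e.g. beauty, distortion correction
}

// The case in this article: single camera, capture only, multi-frame YUV.
static_assert(choosePlugin(AlgoTraits{false, false, false, false, true, false}) ==
                  Plugin::MultiFrame,
              "MFNR is hooked into MultiFramePlugin");
```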

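2.2 Adding a global build macro

To control whether a given project integrates this algorithm, we add a macro to device/mediateksample/[platform]/ProjectConfig.mk that gates compilation of the newly integrated algorithm:

QXT_MFNR_SUPPORT = yes

When a project does not need this algorithm, simply set QXT_MFNR_SUPPORT to no in device/mediateksample/[platform]/ProjectConfig.mk.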

2.3 Writing the algorithm integration files

We implement MFNR capture using vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/mfnr/MFNRImpl.cpp as a reference. The directory structure is as follows:

vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/customer/cp_tp_mfnr/

├── Android.mk

├── include

│ └── mf_processor.h

├── lib

│ ├── arm64-v8a

│ │ └── libmultiframe.so

│ └── armeabi-v7a

│ └── libmultiframe.so

└── MFNRImpl.cpp

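File descriptions:

Android.mk: configures the algorithm library, the header file and the integration source MFNRImpl.cpp, and builds them into the library libmtkcam.plugin.tp_mfnr, which libmtkcam_3rdparty.customer depends on.

libmultiframe.so: downscales 4 consecutive frames and tiles them into a single image. It stands in for the real third-party multi-frame algorithm library to be integrated. mf_processor.h is its header file.

MFNRImpl.cpp: the C++ source file that performs the integration.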
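The tiling that libmultiframe.so performs comes down to offset arithmetic on an I420 buffer. The helper below is a hypothetical sketch (`TileOffsets` and `tileOffsets` are illustrative names, not part of the library) that assumes tightly packed I420 with no row padding; it computes where each of the 4 half-size frames lands, using the same layout as addFrame in mf_processor_impl.cpp.

```cpp
#include <cassert>
#include <cstddef>

// Offsets (from the start of the whole buffer) of the top-left corner of one
// tile in each plane.
struct TileOffsets {
    std::size_t y;
    std::size_t u;
    std::size_t v;
};

// Tile order follows addFrame(): 0 = top-left, 1 = top-right,
// 2 = bottom-left, 3 = bottom-right. Each tile is a frame downscaled to
// (width / 2) x (height / 2) inside a width x height I420 canvas.
inline TileOffsets tileOffsets(int index, int width, int height) {
    assert(index >= 0 && index < 4);
    assert(width > 0 && height > 0 && width % 4 == 0 && height % 4 == 0);
    const std::size_t w = static_cast<std::size_t>(width);
    const std::size_t h = static_cast<std::size_t>(height);
    const std::size_t ySize = w * h;         // full-size Y plane
    const std::size_t uvSize = ySize / 4;    // each of the U and V planes
    const std::size_t tileW = w / 2;         // luma tile width
    const std::size_t tileH = h / 2;         // luma tile height
    const std::size_t chromaStride = w / 2;  // row length of the U/V planes
    const std::size_t col = static_cast<std::size_t>(index % 2);
    const std::size_t row = static_cast<std::size_t>(index / 2);
    // Chroma tiles are half the luma tile size in each dimension.
    const std::size_t chroma = row * (tileH / 2) * chromaStride + col * (tileW / 2);
    return TileOffsets{row * tileH * w + col * tileW,
                       ySize + chroma,
                       ySize + uvSize + chroma};
}
```

Tile 0 is top-left and tile 3 is bottom-right, so the 4 frames appear in reading order in the composite image.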

2.3.1 mtkcam3/3rdparty/customer/cp_tp_mfnr/Android.mk

ifeq ($(QXT_MFNR_SUPPORT),yes)

LOCAL_PATH := $(call my-dir)

include $(CLEAR_VARS)

LOCAL_MODULE := libmultiframe

LOCAL_SRC_FILES_32 := lib/armeabi-v7a/libmultiframe.so

LOCAL_SRC_FILES_64 := lib/arm64-v8a/libmultiframe.so

LOCAL_MODULE_TAGS := optional

LOCAL_MODULE_CLASS := SHARED_LIBRARIES

LOCAL_MODULE_SUFFIX := .so

LOCAL_PROPRIETARY_MODULE := true

LOCAL_MULTILIB := both

include $(BUILD_PREBUILT)

################################################################################

#

################################################################################

include $(CLEAR_VARS)

#-----------------------------------------------------------

-include $(TOP)/$(MTK_PATH_SOURCE)/hardware/mtkcam/mtkcam.mk

#-----------------------------------------------------------

LOCAL_SRC_FILES += MFNRImpl.cpp

#-----------------------------------------------------------

LOCAL_C_INCLUDES += $(MTKCAM_C_INCLUDES)

LOCAL_C_INCLUDES += $(TOP)/$(MTK_PATH_SOURCE)/hardware/mtkcam3/include $(MTK_PATH_SOURCE)/hardware/mtkcam/include

LOCAL_C_INCLUDES += $(TOP)/$(MTK_PATH_COMMON)/hal/inc

LOCAL_C_INCLUDES += $(TOP)/$(MTK_PATH_CUSTOM_PLATFORM)/hal/inc

LOCAL_C_INCLUDES += $(TOP)/external/libyuv/files/include/

LOCAL_C_INCLUDES += $(TOP)/$(MTK_PATH_SOURCE)/hardware/mtkcam3/3rdparty/customer/cp_tp_mfnr/include

#

LOCAL_C_INCLUDES += system/media/camera/include

#-----------------------------------------------------------

LOCAL_CFLAGS += $(MTKCAM_CFLAGS)

#

#-----------------------------------------------------------

LOCAL_STATIC_LIBRARIES +=

#

LOCAL_WHOLE_STATIC_LIBRARIES +=

#-----------------------------------------------------------

LOCAL_SHARED_LIBRARIES += liblog

LOCAL_SHARED_LIBRARIES += libutils

LOCAL_SHARED_LIBRARIES += libcutils

LOCAL_SHARED_LIBRARIES += libmtkcam_modulehelper

LOCAL_SHARED_LIBRARIES += libmtkcam_stdutils

LOCAL_SHARED_LIBRARIES += libmtkcam_pipeline

LOCAL_SHARED_LIBRARIES += libmtkcam_metadata

LOCAL_SHARED_LIBRARIES += libmtkcam_metastore

LOCAL_SHARED_LIBRARIES += libmtkcam_streamutils

LOCAL_SHARED_LIBRARIES += libmtkcam_imgbuf

LOCAL_SHARED_LIBRARIES += libmtkcam_exif

#LOCAL_SHARED_LIBRARIES += libmtkcam_3rdparty

#-----------------------------------------------------------

LOCAL_HEADER_LIBRARIES := libutils_headers liblog_headers libhardware_headers

#-----------------------------------------------------------

LOCAL_MODULE := libmtkcam.plugin.tp_mfnr

LOCAL_PROPRIETARY_MODULE := true

LOCAL_MODULE_OWNER := mtk

LOCAL_MODULE_TAGS := optional

include $(MTK_STATIC_LIBRARY)

################################################################################

#

################################################################################

include $(call all-makefiles-under,$(LOCAL_PATH))

endif

2.3.2 mtkcam3/3rdparty/customer/cp_tp_mfnr/include/mf_processor.h

#ifndef QXT_MULTI_FRAME_H

#define QXT_MULTI_FRAME_H

class MFProcessor {

public:

virtual ~MFProcessor() {}

virtual void setFrameCount(int num) = 0;

virtual void setParams() = 0;

virtual void addFrame(unsigned char *src, int srcWidth, int srcHeight) = 0;

virtual void addFrame(unsigned char *srcY, unsigned char *srcU, unsigned char *srcV,

int srcWidth, int srcHeight) = 0;

virtual void scale(unsigned char *src, int srcWidth, int srcHeight,

unsigned char *dst, int dstWidth, int dstHeight) = 0;

virtual void process(unsigned char *output, int outputWidth, int outputHeight) = 0;

virtual void process(unsigned char *outputY, unsigned char *outputU, unsigned char *outputV,

int outputWidth, int outputHeight) = 0;

static MFProcessor* createInstance(int width, int height);

};

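#endif //QXT_MULTI_FRAME_H

The interface functions declared in the header:

setFrameCount: has no real effect; it simulates setting the frame count of a third-party multi-frame algorithm, since some such algorithms need a different number of frames in different scenes.

setParams: also a no-op; it simulates passing in the parameters a third-party multi-frame algorithm needs.

addFrame: adds one frame of image data, simulating how a third-party multi-frame algorithm is fed its input frames.

process: writes out the full-size image in which the 4 previously added frames have been downscaled and tiled.

createInstance: creates an instance of the interface class.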
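Both process overloads and the dstBuf allocation rely on the I420 plane sizes: a full-resolution Y plane followed by U and V planes of a quarter of its size each, width * height * 3 / 2 bytes in total. A small hypothetical helper (`I420Layout` and `i420Layout` are illustrative names) makes that arithmetic explicit. It assumes tightly packed planes with no row padding, whereas image buffers coming from the HAL may carry stride padding in each plane.

```cpp
#include <cassert>
#include <cstddef>

struct I420Layout {
    std::size_t ySize;  // width * height
    std::size_t uSize;  // (width / 2) * (height / 2)
    std::size_t vSize;  // same as uSize
    // Equals width * height * 3 / 2 for even dimensions.
    std::size_t total() const { return ySize + uSize + vSize; }
};

inline I420Layout i420Layout(int width, int height) {
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    const std::size_t y =
        static_cast<std::size_t>(width) * static_cast<std::size_t>(height);
    return I420Layout{y, y / 4, y / 4};
}
```

With these sizes, the three-pointer process copies ySize bytes from dstBuf, then uSize bytes from dstBuf + ySize, then vSize bytes from dstBuf + ySize + uSize.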

For interested readers, the implementation mf_processor_impl.cpp is included as well:

#include <libyuv/scale.h>

#include <cstring>

#include "mf_processor.h"

using namespace std;

using namespace libyuv;

class MFProcessorImpl : public MFProcessor {

private:

int frameCount = 4;

int currentIndex = 0;

unsigned char *dstBuf = nullptr;

unsigned char *tmpBuf = nullptr;

public:

MFProcessorImpl();

MFProcessorImpl(int width, int height);

~MFProcessorImpl() override;

void setFrameCount(int num) override;

void setParams() override;

void addFrame(unsigned char *src, int srcWidth, int srcHeight) override;

void addFrame(unsigned char *srcY, unsigned char *srcU, unsigned char *srcV,

int srcWidth, int srcHeight) override;

void scale(unsigned char *src, int srcWidth, int srcHeight,

unsigned char *dst, int dstWidth, int dstHeight) override;

void process(unsigned char *output, int outputWidth, int outputHeight) override;

void process(unsigned char *outputY, unsigned char *outputU, unsigned char *outputV,

int outputWidth, int outputHeight) override;

static MFProcessor *createInstance(int width, int height);

};

MFProcessorImpl::MFProcessorImpl() = default;

MFProcessorImpl::MFProcessorImpl(int width, int height) {

if (dstBuf == nullptr) {

dstBuf = new unsigned char[width * height * 3 / 2];

}

if (tmpBuf == nullptr) {

tmpBuf = new unsigned char[width / 2 * height / 2 * 3 / 2];

}

}

MFProcessorImpl::~MFProcessorImpl() {

if (dstBuf != nullptr) {

delete[] dstBuf;

}

if (tmpBuf != nullptr) {

delete[] tmpBuf;

}

}

void MFProcessorImpl::setFrameCount(int num) {

frameCount = num;

}

void MFProcessorImpl::setParams() {

}

void MFProcessorImpl::addFrame(unsigned char *src, int srcWidth, int srcHeight) {

int srcYCount = srcWidth * srcHeight;

int srcUVCount = srcWidth * srcHeight / 4;

int tmpWidth = srcWidth >> 1;

int tmpHeight = srcHeight >> 1;

int tmpYCount = tmpWidth * tmpHeight;

int tmpUVCount = tmpWidth * tmpHeight / 4;

//scale

I420Scale(src, srcWidth,

src + srcYCount, srcWidth >> 1,

src + srcYCount + srcUVCount, srcWidth >> 1,

srcWidth, srcHeight,

tmpBuf, tmpWidth,

tmpBuf + tmpYCount, tmpWidth >> 1,

tmpBuf + tmpYCount + tmpUVCount, tmpWidth >> 1,

tmpWidth, tmpHeight,

kFilterNone);

//merge

unsigned char *pDstY;

unsigned char *pTmpY;

for (int i = 0; i < tmpHeight; i++) {

pTmpY = tmpBuf + i * tmpWidth;

if (currentIndex == 0) {

pDstY = dstBuf + i * srcWidth;

} else if (currentIndex == 1) {

pDstY = dstBuf + i * srcWidth + tmpWidth;

} else if (currentIndex == 2) {

pDstY = dstBuf + (i + tmpHeight) * srcWidth;

} else {

pDstY = dstBuf + (i + tmpHeight) * srcWidth + tmpWidth;

}

memcpy(pDstY, pTmpY, tmpWidth);

}

int uvHeight = tmpHeight / 2;

int uvWidth = tmpWidth / 2;

unsigned char *pDstU;

unsigned char *pDstV;

unsigned char *pTmpU;

unsigned char *pTmpV;

for (int i = 0; i < uvHeight; i++) {

pTmpU = tmpBuf + tmpYCount + uvWidth * i;

pTmpV = tmpBuf + tmpYCount + tmpUVCount + uvWidth * i;

if (currentIndex == 0) {

pDstU = dstBuf + srcYCount + i * tmpWidth;

pDstV = dstBuf + srcYCount + srcUVCount + i * tmpWidth;

} else if (currentIndex == 1) {

pDstU = dstBuf + srcYCount + i * tmpWidth + uvWidth;

pDstV = dstBuf + srcYCount + srcUVCount + i * tmpWidth + uvWidth;

} else if (currentIndex == 2) {

pDstU = dstBuf + srcYCount + (i + uvHeight) * tmpWidth;

pDstV = dstBuf + srcYCount + srcUVCount + (i + uvHeight) * tmpWidth;

} else {

pDstU = dstBuf + srcYCount + (i + uvHeight) * tmpWidth + uvWidth;

pDstV = dstBuf + srcYCount + srcUVCount + (i + uvHeight) * tmpWidth + uvWidth;

}

memcpy(pDstU, pTmpU, uvWidth);

memcpy(pDstV, pTmpV, uvWidth);

}

if (currentIndex < frameCount) currentIndex++;

}

void MFProcessorImpl::addFrame(unsigned char *srcY, unsigned char *srcU, unsigned char *srcV,

int srcWidth, int srcHeight) {

int srcYCount = srcWidth * srcHeight;

int srcUVCount = srcWidth * srcHeight / 4;

int tmpWidth = srcWidth >> 1;

int tmpHeight = srcHeight >> 1;

int tmpYCount = tmpWidth * tmpHeight;

int tmpUVCount = tmpWidth * tmpHeight / 4;

//scale

I420Scale(srcY, srcWidth,

srcU, srcWidth >> 1,

srcV, srcWidth >> 1,

srcWidth, srcHeight,

tmpBuf, tmpWidth,

tmpBuf + tmpYCount, tmpWidth >> 1,

tmpBuf + tmpYCount + tmpUVCount, tmpWidth >> 1,

tmpWidth, tmpHeight,

kFilterNone);

//merge

unsigned char *pDstY;

unsigned char *pTmpY;

for (int i = 0; i < tmpHeight; i++) {

pTmpY = tmpBuf + i * tmpWidth;

if (currentIndex == 0) {

pDstY = dstBuf + i * srcWidth;

} else if (currentIndex == 1) {

pDstY = dstBuf + i * srcWidth + tmpWidth;

} else if (currentIndex == 2) {

pDstY = dstBuf + (i + tmpHeight) * srcWidth;

} else {

pDstY = dstBuf + (i + tmpHeight) * srcWidth + tmpWidth;

}

memcpy(pDstY, pTmpY, tmpWidth);

}

int uvHeight = tmpHeight / 2;

int uvWidth = tmpWidth / 2;

unsigned char *pDstU;

unsigned char *pDstV;

unsigned char *pTmpU;

unsigned char *pTmpV;

for (int i = 0; i < uvHeight; i++) {

pTmpU = tmpBuf + tmpYCount + uvWidth * i;

pTmpV = tmpBuf + tmpYCount + tmpUVCount + uvWidth * i;

if (currentIndex == 0) {

pDstU = dstBuf + srcYCount + i * tmpWidth;

pDstV = dstBuf + srcYCount + srcUVCount + i * tmpWidth;

} else if (currentIndex == 1) {

pDstU = dstBuf + srcYCount + i * tmpWidth + uvWidth;

pDstV = dstBuf + srcYCount + srcUVCount + i * tmpWidth + uvWidth;

} else if (currentIndex == 2) {

pDstU = dstBuf + srcYCount + (i + uvHeight) * tmpWidth;

pDstV = dstBuf + srcYCount + srcUVCount + (i + uvHeight) * tmpWidth;

} else {

pDstU = dstBuf + srcYCount + (i + uvHeight) * tmpWidth + uvWidth;

pDstV = dstBuf + srcYCount + srcUVCount + (i + uvHeight) * tmpWidth + uvWidth;

}

memcpy(pDstU, pTmpU, uvWidth);

memcpy(pDstV, pTmpV, uvWidth);

}

if (currentIndex < frameCount) currentIndex++;

}

void MFProcessorImpl::scale(unsigned char *src, int srcWidth, int srcHeight,

unsigned char *dst, int dstWidth, int dstHeight) {

I420Scale(src, srcWidth,//Y

src + srcWidth * srcHeight, srcWidth >> 1,//U

src + srcWidth * srcHeight * 5 / 4, srcWidth >> 1,//V

srcWidth, srcHeight,

dst, dstWidth,//Y

dst + dstWidth * dstHeight, dstWidth >> 1,//U

dst + dstWidth * dstHeight * 5 / 4, dstWidth >> 1,//V

dstWidth, dstHeight,

kFilterNone);

}

void MFProcessorImpl::process(unsigned char *output, int outputWidth, int outputHeight) {

memcpy(output, dstBuf, outputWidth * outputHeight * 3 / 2);

currentIndex = 0;

}

void MFProcessorImpl::process(unsigned char *outputY, unsigned char *outputU, unsigned char *outputV,

int outputWidth, int outputHeight) {

int yCount = outputWidth * outputHeight;

int uvCount = yCount / 4;

memcpy(outputY, dstBuf, yCount);

memcpy(outputU, dstBuf + yCount, uvCount);

memcpy(outputV, dstBuf + yCount + uvCount, uvCount);

currentIndex = 0;

}

MFProcessor* MFProcessor::createInstance(int width, int height) {

return new MFProcessorImpl(width, height);

}

2.3.3 mtkcam3/3rdparty/customer/cp_tp_mfnr/MFNRImpl.cpp

#ifdef LOG_TAG

#undef LOG_TAG

#endif // LOG_TAG

#define LOG_TAG "MFNRProvider"

static const char *__CALLERNAME__ = LOG_TAG;

//

#include <mtkcam/utils/std/Log.h>

//

#include <stdlib.h>

#include <cutils/properties.h> // property_get_bool

#include <utils/Errors.h>

#include <utils/List.h>

#include <utils/RefBase.h>

#include <sstream>

#include <unordered_map> // std::unordered_map

//

#include <mtkcam/utils/metadata/client/mtk_metadata_tag.h>

#include <mtkcam/utils/metadata/hal/mtk_platform_metadata_tag.h>

//zHDR

#include <mtkcam/utils/hw/HwInfoHelper.h> // NSCamHw::HwInfoHelper

#include <mtkcam3/feature/utils/FeatureProfileHelper.h> //ProfileParam

#include <mtkcam/drv/IHalSensor.h>

//

#include <mtkcam/utils/imgbuf/IIonImageBufferHeap.h>

//

#include <mtkcam/utils/std/Format.h>

#include <mtkcam/utils/std/Time.h>

//

#include <mtkcam3/pipeline/hwnode/NodeId.h>

//

#include <mtkcam/utils/metastore/IMetadataProvider.h>

#include <mtkcam/utils/metastore/ITemplateRequest.h>


#include <mtkcam3/3rdparty/plugin/PipelinePlugin.h>

#include <mtkcam3/3rdparty/plugin/PipelinePluginType.h>

//

#include <isp_tuning/isp_tuning.h> //EIspProfile_T, EOperMode_*

//

#include <custom_metadata/custom_metadata_tag.h>

//

#include <libyuv.h>

#include <mf_processor.h>

using namespace NSCam;

using namespace android;

using namespace std;

using namespace NSCam::NSPipelinePlugin;

using namespace NSIspTuning;

/******************************************************************************

*

******************************************************************************/

#define MY_LOGV(fmt, arg...) CAM_LOGV("(%d)[%s] " fmt, ::gettid(), __FUNCTION__, ##arg)

#define MY_LOGD(fmt, arg...) CAM_LOGD("(%d)[%s] " fmt, ::gettid(), __FUNCTION__, ##arg)

#define MY_LOGI(fmt, arg...) CAM_LOGI("(%d)[%s] " fmt, ::gettid(), __FUNCTION__, ##arg)

#define MY_LOGW(fmt, arg...) CAM_LOGW("(%d)[%s] " fmt, ::gettid(), __FUNCTION__, ##arg)

#define MY_LOGE(fmt, arg...) CAM_LOGE("(%d)[%s] " fmt, ::gettid(), __FUNCTION__, ##arg)

//

#define MY_LOGV_IF(cond, ...) do { if ( (cond) ) { MY_LOGV(__VA_ARGS__); } }while(0)

#define MY_LOGD_IF(cond, ...) do { if ( (cond) ) { MY_LOGD(__VA_ARGS__); } }while(0)

#define MY_LOGI_IF(cond, ...) do { if ( (cond) ) { MY_LOGI(__VA_ARGS__); } }while(0)

#define MY_LOGW_IF(cond, ...) do { if ( (cond) ) { MY_LOGW(__VA_ARGS__); } }while(0)

#define MY_LOGE_IF(cond, ...) do { if ( (cond) ) { MY_LOGE(__VA_ARGS__); } }while(0)

//

#define ASSERT(cond, msg) do { if (!(cond)) { printf("Failed: %s\n", msg); return; } }while(0)

#define __DEBUG // enable debug

#ifdef __DEBUG

#include <memory>

#define FUNCTION_SCOPE \

auto __scope_logger__ = [](char const* f)->std::shared_ptr<const char>{ \

CAM_LOGD("(%d)[%s] + ", ::gettid(), f); \

return std::shared_ptr<const char>(f, [](char const* p){CAM_LOGD("(%d)[%s] -", ::gettid(), p);}); \

}(__FUNCTION__)

#else

#define FUNCTION_SCOPE

#endif

template <typename T>

inline MBOOL

tryGetMetadata(

IMetadata* pMetadata,

MUINT32 const tag,

T & rVal

)

{

if (pMetadata == NULL) {

MY_LOGW("pMetadata == NULL");

return MFALSE;

}

IMetadata::IEntry entry = pMetadata->entryFor(tag);

if (!entry.isEmpty()) {

rVal = entry.itemAt(0, Type2Type<T>());

return MTRUE;

}

return MFALSE;

}

#define MFNR_FRAME_COUNT 4

/******************************************************************************

*

******************************************************************************/

class MFNRProviderImpl : public MultiFramePlugin::IProvider {

typedef MultiFramePlugin::Property Property;

typedef MultiFramePlugin::Selection Selection;

typedef MultiFramePlugin::Request::Ptr RequestPtr;

typedef MultiFramePlugin::RequestCallback::Ptr RequestCallbackPtr;

public:

virtual void set(MINT32 iOpenId, MINT32 iOpenId2) {

MY_LOGD("set openId:%d openId2:%d", iOpenId, iOpenId2);

mOpenId = iOpenId;

}

virtual const Property& property() {

FUNCTION_SCOPE;

static Property prop;

static bool inited;

if (!inited) {

prop.mName = "TP_MFNR";

prop.mFeatures = TP_FEATURE_MFNR;

prop.mThumbnailTiming = eTiming_P2;

prop.mPriority = ePriority_Highest;

prop.mZsdBufferMaxNum = 8; // maximum frames requirement

prop.mNeedRrzoBuffer = MTRUE; // rrzo requirement for BSS

inited = MTRUE;

}

return prop;

};

virtual MERROR negotiate(Selection& sel) {

FUNCTION_SCOPE;

IMetadata* appInMeta = sel.mIMetadataApp.getControl().get();

mEnable = 0; // default to off so a request without the tag does not inherit the previous value

tryGetMetadata<MINT32>(appInMeta, QXT_FEATURE_MFNR, mEnable);

MY_LOGD("mEnable: %d", mEnable);

if (!mEnable) {

MY_LOGD("Force off TP_MFNR shot");

return BAD_VALUE;

}

sel.mRequestCount = MFNR_FRAME_COUNT;

MY_LOGD("mRequestCount=%d", sel.mRequestCount);

sel.mIBufferFull

.setRequired(MTRUE)

.addAcceptedFormat(eImgFmt_I420) // I420 first

.addAcceptedFormat(eImgFmt_YV12)

.addAcceptedFormat(eImgFmt_NV21)

.addAcceptedFormat(eImgFmt_NV12)

.addAcceptedSize(eImgSize_Full);

//sel.mIBufferSpecified.setRequired(MTRUE).setAlignment(16, 16);

sel.mIMetadataDynamic.setRequired(MTRUE);

sel.mIMetadataApp.setRequired(MTRUE);

sel.mIMetadataHal.setRequired(MTRUE);

if (sel.mRequestIndex == 0) {

sel.mOBufferFull

.setRequired(MTRUE)

.addAcceptedFormat(eImgFmt_I420) // I420 first

.addAcceptedFormat(eImgFmt_YV12)

.addAcceptedFormat(eImgFmt_NV21)

.addAcceptedFormat(eImgFmt_NV12)

.addAcceptedSize(eImgSize_Full);

sel.mOMetadataApp.setRequired(MTRUE);

sel.mOMetadataHal.setRequired(MTRUE);

} else {

sel.mOBufferFull.setRequired(MFALSE);

sel.mOMetadataApp.setRequired(MFALSE);

sel.mOMetadataHal.setRequired(MFALSE);

}

return OK;

};

virtual void init() {

FUNCTION_SCOPE;

mDump = property_get_bool("vendor.debug.camera.mfnr.dump", 0);

//nothing to do for MFNR

};

virtual MERROR process(RequestPtr pRequest, RequestCallbackPtr pCallback) {

FUNCTION_SCOPE;

MERROR ret = 0;

// restore callback function for abort API

if (pCallback != nullptr) {

m_callbackprt = pCallback;

}

//maybe need to keep a copy in member<sp>

IMetadata* pAppMeta = pRequest->mIMetadataApp->acquire();

IMetadata* pHalMeta = pRequest->mIMetadataHal->acquire();

IMetadata* pHalMetaDynamic = pRequest->mIMetadataDynamic->acquire();

MINT32 processUniqueKey = 0;

IImageBuffer* pInImgBuffer = NULL;

uint32_t width = 0;

uint32_t height = 0;

if (!IMetadata::getEntry<MINT32>(pHalMeta, MTK_PIPELINE_UNIQUE_KEY, processUniqueKey)) {

MY_LOGE("cannot get unique about MFNR capture");

return BAD_VALUE;

}

if (pRequest->mIBufferFull != nullptr) {

pInImgBuffer = pRequest->mIBufferFull->acquire();

width = pInImgBuffer->getImgSize().w;

height = pInImgBuffer->getImgSize().h;

MY_LOGD("[IN] Full image VA: 0x%p, Size(%dx%d), Format: %s",

pInImgBuffer->getBufVA(0), width, height, format2String(pInImgBuffer->getImgFormat()));

if (mDump) {

char path[256];

snprintf(path, sizeof(path), "/data/vendor/camera_dump/mfnr_capture_in_%d_%dx%d.%s",

pRequest->mRequestIndex, width, height, format2String(pInImgBuffer->getImgFormat()));

pInImgBuffer->saveToFile(path);

}

}

if (pRequest->mIBufferSpecified != nullptr) {

IImageBuffer* pImgBuffer = pRequest->mIBufferSpecified->acquire();

MY_LOGD("[IN] Specified image VA: 0x%p, Size(%dx%d)", pImgBuffer->getBufVA(0), pImgBuffer->getImgSize().w, pImgBuffer->getImgSize().h);

}

if (pRequest->mOBufferFull != nullptr) {

mOutImgBuffer = pRequest->mOBufferFull->acquire();

MY_LOGD("[OUT] Full image VA: 0x%p, Size(%dx%d)", mOutImgBuffer->getBufVA(0), mOutImgBuffer->getImgSize().w, mOutImgBuffer->getImgSize().h);

}

if (pRequest->mIMetadataDynamic != nullptr) {

IMetadata *meta = pRequest->mIMetadataDynamic->acquire();

if (meta != NULL)

MY_LOGD("[IN] Dynamic metadata count: %d", meta->count());

else

MY_LOGD("[IN] Dynamic metadata Empty");

}

MY_LOGD("frame:%d/%d, width:%d, height:%d", pRequest->mRequestIndex, pRequest->mRequestCount, width, height);

if (pInImgBuffer != NULL && mOutImgBuffer != NULL) {

uint32_t yLength = pInImgBuffer->getBufSizeInBytes(0);

uint32_t uLength = pInImgBuffer->getBufSizeInBytes(1);

uint32_t vLength = pInImgBuffer->getBufSizeInBytes(2);

uint32_t yuvLength = yLength + uLength + vLength;

if (pRequest->mRequestIndex == 0) {//First frame

//When width or height changed, recreate multiFrame

if (mLatestWidth != width || mLatestHeight != height) {

if (mMFProcessor != NULL) {

delete mMFProcessor;

mMFProcessor = NULL;

}

mLatestWidth = width;

mLatestHeight = height;

}

if (mMFProcessor == NULL) {

MY_LOGD("create mMFProcessor %dx%d", mLatestWidth, mLatestHeight);

mMFProcessor = MFProcessor::createInstance(mLatestWidth, mLatestHeight);

mMFProcessor->setFrameCount(pRequest->mRequestCount);

}

}

mMFProcessor->addFrame((uint8_t *)pInImgBuffer->getBufVA(0),

(uint8_t *)pInImgBuffer->getBufVA(1),

(uint8_t *)pInImgBuffer->getBufVA(2),

mLatestWidth, mLatestHeight);

if (pRequest->mRequestIndex == pRequest->mRequestCount - 1) {//Last frame

if (mMFProcessor != NULL) {

mMFProcessor->process((uint8_t *)mOutImgBuffer->getBufVA(0),

(uint8_t *)mOutImgBuffer->getBufVA(1),

(uint8_t *)mOutImgBuffer->getBufVA(2),

mLatestWidth, mLatestHeight);

if (mDump) {

char path[256];

snprintf(path, sizeof(path), "/data/vendor/camera_dump/mfnr_capture_out_%d_%dx%d.%s",

pRequest->mRequestIndex, mOutImgBuffer->getImgSize().w, mOutImgBuffer->getImgSize().h,

format2String(mOutImgBuffer->getImgFormat()));

mOutImgBuffer->saveToFile(path);

}

} else {

memcpy((uint8_t *)mOutImgBuffer->getBufVA(0),

(uint8_t *)pInImgBuffer->getBufVA(0),

pInImgBuffer->getBufSizeInBytes(0));

memcpy((uint8_t *)mOutImgBuffer->getBufVA(1),

(uint8_t *)pInImgBuffer->getBufVA(1),

pInImgBuffer->getBufSizeInBytes(1));

memcpy((uint8_t *)mOutImgBuffer->getBufVA(2),

(uint8_t *)pInImgBuffer->getBufVA(2),

pInImgBuffer->getBufSizeInBytes(2));

}

mOutImgBuffer = NULL;

}

}

if (pRequest->mIBufferFull != nullptr) {

pRequest->mIBufferFull->release();

}

if (pRequest->mIBufferSpecified != nullptr) {

pRequest->mIBufferSpecified->release();

}

if (pRequest->mOBufferFull != nullptr) {

pRequest->mOBufferFull->release();

}

if (pRequest->mIMetadataDynamic != nullptr) {

pRequest->mIMetadataDynamic->release();

}

mvRequests.push_back(pRequest);

MY_LOGD("collected request(%d/%d)", pRequest->mRequestIndex, pRequest->mRequestCount);

if (pRequest->mRequestIndex == pRequest->mRequestCount - 1) {

for (auto req : mvRequests) {

MY_LOGD("callback request(%d/%d) %p", req->mRequestIndex, req->mRequestCount, pCallback.get());

if (pCallback != nullptr) {

pCallback->onCompleted(req, 0);

}

}

mvRequests.clear();

}

return ret;

};

virtual void abort(vector<RequestPtr>& pRequests) {

FUNCTION_SCOPE;

bool bAbort = false;

IMetadata *pHalMeta;

MINT32 processUniqueKey = 0;

for (auto req:pRequests) {

bAbort = false;

pHalMeta = req->mIMetadataHal->acquire();

if (!IMetadata::getEntry<MINT32>(pHalMeta, MTK_PIPELINE_UNIQUE_KEY, processUniqueKey)) {

MY_LOGW("cannot get unique about MFNR capture");

}

if (m_callbackprt != nullptr) {

MY_LOGD("m_callbackprt is %p", m_callbackprt.get());

/*MFNR plugin callback request to MultiFrameNode */

for (Vector<RequestPtr>::iterator it = mvRequests.begin() ; it != mvRequests.end(); it++) {

if ((*it) == req) {

mvRequests.erase(it);

m_callbackprt->onAborted(req);

bAbort = true;

break;

}

}

} else {

MY_LOGW("callbackptr is null");

}

if (!bAbort) {

MY_LOGW("Desire abort request[%d] is not found", req->mRequestIndex);

}

}

};

virtual void uninit() {

FUNCTION_SCOPE;

if (mMFProcessor != NULL) {

delete mMFProcessor;

mMFProcessor = NULL;

}

mLatestWidth = 0;

mLatestHeight = 0;

};

virtual ~MFNRProviderImpl() {

FUNCTION_SCOPE;

};

const char * format2String(MINT format) {

switch(format) {

case NSCam::eImgFmt_RGBA8888: return "rgba";

case NSCam::eImgFmt_RGB888: return "rgb";

case NSCam::eImgFmt_RGB565: return "rgb565";

case NSCam::eImgFmt_STA_BYTE: return "byte";

case NSCam::eImgFmt_YVYU: return "yvyu";

case NSCam::eImgFmt_UYVY: return "uyvy";

case NSCam::eImgFmt_VYUY: return "vyuy";

case NSCam::eImgFmt_YUY2: return "yuy2";

case NSCam::eImgFmt_YV12: return "yv12";

case NSCam::eImgFmt_YV16: return "yv16";

case NSCam::eImgFmt_NV16: return "nv16";

case NSCam::eImgFmt_NV61: return "nv61";

case NSCam::eImgFmt_NV12: return "nv12";

case NSCam::eImgFmt_NV21: return "nv21";

case NSCam::eImgFmt_I420: return "i420";

case NSCam::eImgFmt_I422: return "i422";

case NSCam::eImgFmt_Y800: return "y800";

case NSCam::eImgFmt_BAYER8: return "bayer8";

case NSCam::eImgFmt_BAYER10: return "bayer10";

case NSCam::eImgFmt_BAYER12: return "bayer12";

case NSCam::eImgFmt_BAYER14: return "bayer14";

case NSCam::eImgFmt_FG_BAYER8: return "fg_bayer8";

case NSCam::eImgFmt_FG_BAYER10: return "fg_bayer10";

case NSCam::eImgFmt_FG_BAYER12: return "fg_bayer12";

case NSCam::eImgFmt_FG_BAYER14: return "fg_bayer14";

default: return "unknown";

};

};

private:

MINT32 mUniqueKey;

MINT32 mOpenId;

MINT32 mRealIso;

MINT32 mShutterTime;

MBOOL mZSDMode;

MBOOL mFlashOn;

Vector<RequestPtr> mvRequests;

RequestCallbackPtr m_callbackprt;

MFProcessor* mMFProcessor = NULL;

IImageBuffer* mOutImgBuffer = NULL;

uint32_t mLatestWidth = 0;

uint32_t mLatestHeight = 0;

MINT32 mEnable = 0;

MINT32 mDump = 0;

// add end

};

REGISTER_PLUGIN_PROVIDER(MultiFrame, MFNRProviderImpl);

Key functions:

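property sets the feature type to TP_FEATURE_MFNR and fills in attributes such as the name, the priority and the maximum number of frames. Pay special attention to mNeedRrzoBuffer: multi-frame algorithms generally must set it to MTRUE (the code comment notes that the RRZO buffer is required for BSS).

negotiate configures the formats and sizes of the input and output images the algorithm needs. Note that a multi-frame algorithm takes several input frames but produces only one output frame, so mOBufferFull (together with the output metadata) is required only when mRequestIndex == 0. In other words, only the first frame has both input and output; the other frames have input only.

negotiate also reads the metadata parameter passed down from the upper layer (QXT_FEATURE_MFNR) and uses it to decide whether the algorithm runs; when it is not set, negotiate returns BAD_VALUE and the TP_MFNR shot is turned off. The algorithm itself is hooked in through process: on the first frame it creates the algorithm object (recreating it if the image size changed), every frame is then passed in through addFrame, and on the last frame the algorithm's process function is called to do the processing and produce the output.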
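The per-request flow described above can be sketched without any MTK types as a small state machine. This is a hypothetical illustration: `FakeAlgo` stands in for MFProcessor and only counts frames, `MultiFrameDriver` mirrors the branching in MFNRProviderImpl::process(), and std::unique_ptr replaces the raw new/delete of the original.

```cpp
#include <cassert>
#include <memory>

// Hypothetical stand-in for the third-party algorithm: it only counts frames.
struct FakeAlgo {
    int added = 0;
    void addFrame() { ++added; }
    // "Output" is the number of frames merged; the counter resets like
    // MFProcessorImpl::process() resets currentIndex.
    int process() {
        const int merged = added;
        added = 0;
        return merged;
    }
};

class MultiFrameDriver {
public:
    // Returns the merged-frame count on the last request, -1 otherwise.
    int onRequest(int index, int count, int width, int height) {
        assert(count > 0 && index >= 0 && index < count);
        if (index == 0) {                    // first frame
            if (width != mWidth || height != mHeight) {
                mAlgo.reset();               // size changed: drop the old instance
                mWidth = width;
                mHeight = height;
            }
            if (!mAlgo) mAlgo = std::make_unique<FakeAlgo>();
        }
        assert(mAlgo);                       // later frames assume frame 0 ran
        mAlgo->addFrame();                   // every frame
        return index == count - 1 ? mAlgo->process() : -1;  // last frame
    }

private:
    std::unique_ptr<FakeAlgo> mAlgo;
    int mWidth = 0;
    int mHeight = 0;
};
```

For a 4-frame request, onRequest returns -1 for indices 0 to 2 and 4 for index 3.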

2.3.4 mtkcam3/3rdparty/customer/Android.mk

The shared library that actually ends up in vendor.img is libmtkcam_3rdparty.customer.so, so we also need to modify this Android.mk so that the module libmtkcam_3rdparty.customer depends on libmtkcam.plugin.tp_mfnr.

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/customer/Android.mk b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/customer/Android.mk

index ff5763d3c2..5e5dd6524f 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/customer/Android.mk

+++ b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/customer/Android.mk

@@ -77,6 +77,12 @@ LOCAL_SHARED_LIBRARIES += libyuv.vendor

LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.plugin.tp_watermark

endif

+ifeq ($(QXT_MFNR_SUPPORT), yes)

+LOCAL_SHARED_LIBRARIES += libmultiframe

+LOCAL_SHARED_LIBRARIES += libyuv.vendor

+LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.plugin.tp_mfnr

+endif

+

# for app super night ev decision (experimental for customer only)

LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.control.customersupernightevdecision

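################################################################################

2.3.5 Removing MTK's sample MFNR algorithm

In general, only one MFNR algorithm may run at a time, so MTK's sample MFNR algorithm has to be removed. This could be done with a build macro; here we take the blunt approach and simply comment it out.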

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/Android.mk b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/Android.mk

index 4e2bc68dff..da98ebd0ad 100644

--- a/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/Android.mk

+++ b/vendor/mediatek/proprietary/hardware/mtkcam3/3rdparty/mtk/Android.mk

@@ -118,7 +118,7 @@ LOCAL_SHARED_LIBRARIES += libfeature.stereo.provider

#-----------------------------------------------------------

ifneq ($(strip $(MTKCAM_HAVE_MFB_SUPPORT)),0)

-LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.plugin.mfnr

+#LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.plugin.mfnr

endif

#4 Cell

LOCAL_WHOLE_STATIC_LIBRARIES += libmtkcam.plugin.remosaic

3. Custom metadata

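The metadata is added so that the APP layer can pass parameters to the HAL layer and thereby control, at runtime, whether the algorithm is enabled. The APP sets parameters through CaptureRequest.Builder.set(@NonNull Key<T> key, T value). Since MTK's stock camera APP has no multi-frame noise reduction mode, we define custom metadata to verify the integration.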
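On the HAL side the value arrives in the app metadata of each capture request, and negotiate reads it with tryGetMetadata. The sketch below shows the same lookup with a std::unordered_map standing in for IMetadata; `FakeMetadata`, `findInt32` and `mfnrRequested` are hypothetical names. It treats a missing tag as "off", so a request that does not set the key never inherits an earlier request's value.

```cpp
#include <cstdint>
#include <optional>
#include <unordered_map>

// Hypothetical stand-in for IMetadata: maps a tag id to its first int32 item.
using FakeMetadata = std::unordered_map<std::uint32_t, std::int32_t>;

// Same role as tryGetMetadata() in MFNRImpl.cpp: the value if the tag is present.
inline std::optional<std::int32_t> findInt32(const FakeMetadata* meta, std::uint32_t tag) {
    if (meta == nullptr) return std::nullopt;
    const auto it = meta->find(tag);
    if (it == meta->end()) return std::nullopt;
    return it->second;
}

// Run the algorithm only if the app set the tag to a non-zero value;
// an absent tag (or absent metadata) means "off".
inline bool mfnrRequested(const FakeMetadata* appMeta, std::uint32_t mfnrTag) {
    return findInt32(appMeta, mfnrTag).value_or(0) != 0;
}
```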

vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag.h:

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag.h b/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag.h

index b020352092..714d05f350 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag.h

+++ b/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag.h

@@ -602,6 +602,7 @@ typedef enum mtk_camera_metadata_tag {

MTK_FLASH_FEATURE_END,

QXT_FEATURE_WATERMARK = QXT_FEATURE_START,

+ QXT_FEATURE_MFNR,

QXT_FEATURE_END,

} mtk_camera_metadata_tag_t;

vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag_info.inl:

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag_info.inl b/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag_info.inl

index 1b4fc75a0e..cba4511511 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag_info.inl

+++ b/vendor/mediatek/proprietary/hardware/mtkcam/include/mtkcam/utils/metadata/client/mtk_metadata_tag_info.inl

@@ -95,6 +95,8 @@ _IMP_SECTION_INFO_(QXT_FEATURE, "com.qxt.camera")

_IMP_TAG_INFO_( QXT_FEATURE_WATERMARK,

MINT32, "watermark")

+_IMP_TAG_INFO_( QXT_FEATURE_MFNR,

+ MINT32, "mfnr")

/******************************************************************************

*

vendor/mediatek/proprietary/hardware/mtkcam/utils/metadata/vendortag/VendorTagTable.h:

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam/utils/metadata/vendortag/VendorTagTable.h b/vendor/mediatek/proprietary/hardware/mtkcam/utils/metadata/vendortag/VendorTagTable.h

index 33e581adfd..4f4772424d 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam/utils/metadata/vendortag/VendorTagTable.h

+++ b/vendor/mediatek/proprietary/hardware/mtkcam/utils/metadata/vendortag/VendorTagTable.h

@@ -383,6 +383,8 @@ static auto& _QxtFeature_()

sInst = {

_TAG_(QXT_FEATURE_WATERMARK,

"watermark", TYPE_INT32),

+ _TAG_(QXT_FEATURE_MFNR,

+ "mfnr", TYPE_INT32),

};

//

return sInst;

vendor/mediatek/proprietary/hardware/mtkcam/utils/metastore/metadataprovider/constructStaticMetadata.cpp:

diff --git a/vendor/mediatek/proprietary/hardware/mtkcam/utils/metastore/metadataprovider/constructStaticMetadata.cpp b/vendor/mediatek/proprietary/hardware/mtkcam/utils/metastore/metadataprovider/constructStaticMetadata.cpp

index 591b25b162..9c3db8b1d1 100755

--- a/vendor/mediatek/proprietary/hardware/mtkcam/utils/metastore/metadataprovider/constructStaticMetadata.cpp

+++ b/vendor/mediatek/proprietary/hardware/mtkcam/utils/metastore/metadataprovider/constructStaticMetadata.cpp

@@ -583,10 +583,12 @@ updateData(IMetadata &rMetadata)

{

IMetadata::IEntry qxtAvailRequestEntry = rMetadata.entryFor(MTK_REQUEST_AVAILABLE_REQUEST_KEYS);

qxtAvailRequestEntry.push_back(QXT_FEATURE_WATERMARK , Type2Type< MINT32 >());

+ qxtAvailRequestEntry.push_back(QXT_FEATURE_MFNR , Type2Type< MINT32 >());

rMetadata.update(qxtAvailRequestEntry.tag(), qxtAvailRequestEntry);

IMetadata::IEntry qxtAvailSessionEntry = rMetadata.entryFor(MTK_REQUEST_AVAILABLE_SESSION_KEYS);

qxtAvailSessionEntry.push_back(QXT_FEATURE_WATERMARK , Type2Type< MINT32 >());

+ qxtAvailSessionEntry.push_back(QXT_FEATURE_MFNR , Type2Type< MINT32 >());

rMetadata.update(qxtAvailSessionEntry.tag(), qxtAvailSessionEntry);

}

#endif

@@ -605,7 +607,7 @@ updateData(IMetadata &rMetadata)

// to store manual update metadata for sensor driver.

IMetadata::IEntry availCharactsEntry = rMetadata.entryFor(MTK_REQUEST_AVAILABLE_CHARACTERISTICS_KEYS);

availCharactsEntry.push_back(MTK_MULTI_CAM_FEATURE_SENSOR_MANUAL_UPDATED , Type2Type< MINT32 >());

- rMetadata.update(availCharactsEntry.tag(), availCharactsEntry);

+ rMetadata.update(availCharactsEntry.tag(), availCharactsEntry);

}

if(physicIdsList.size() > 1)

{

Once the steps above are done, the integration is essentially complete. Rebuild the system source; to save time, you can also build only vendor.img.

4. Calling the algorithm from the APP

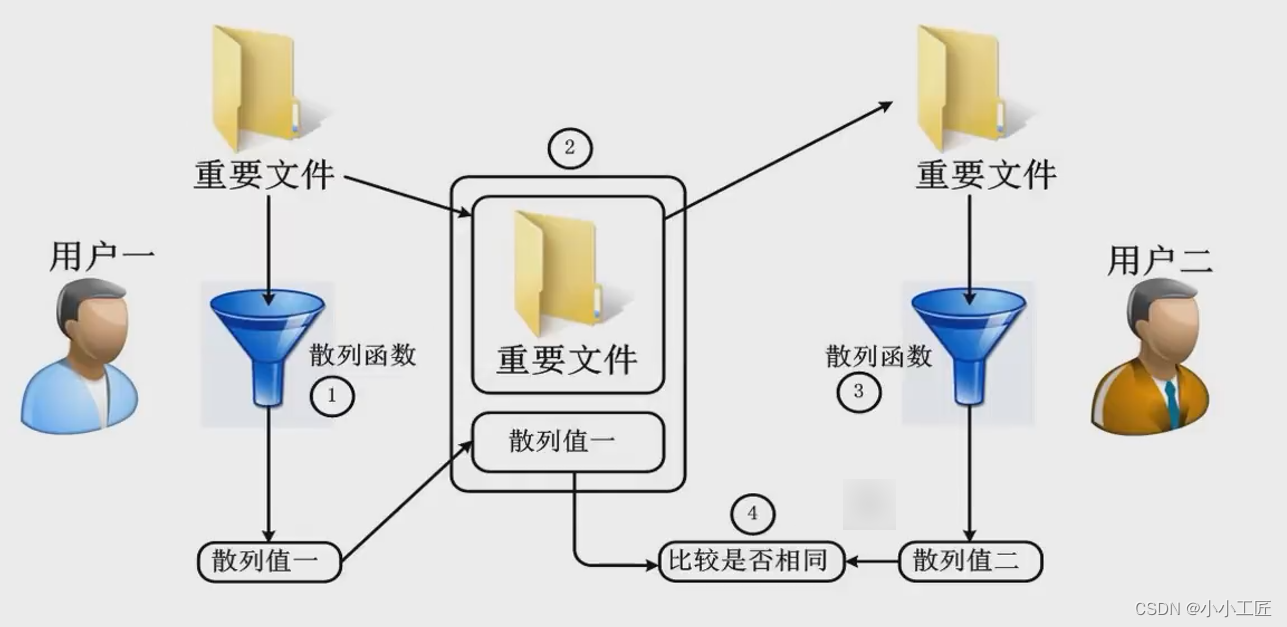

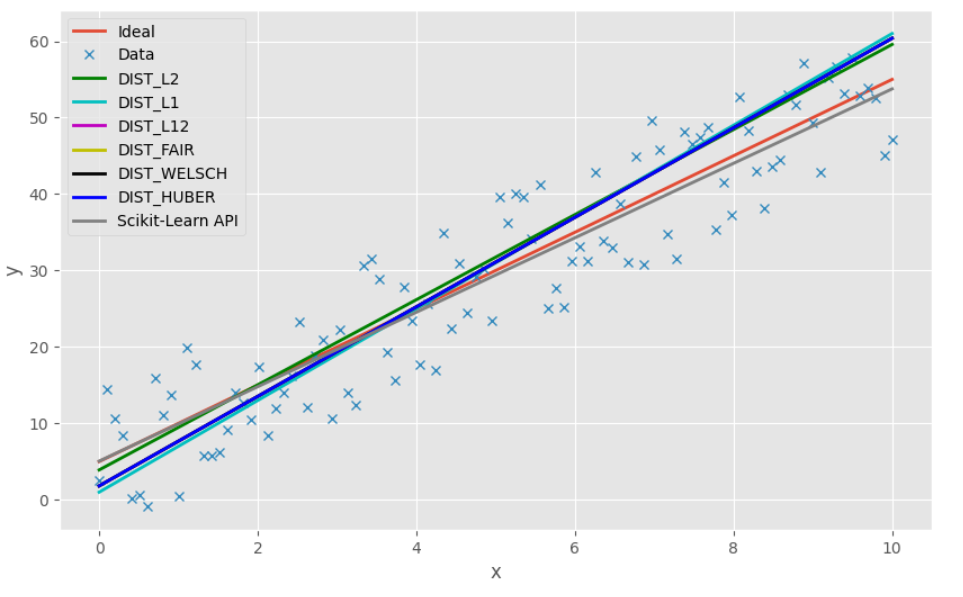

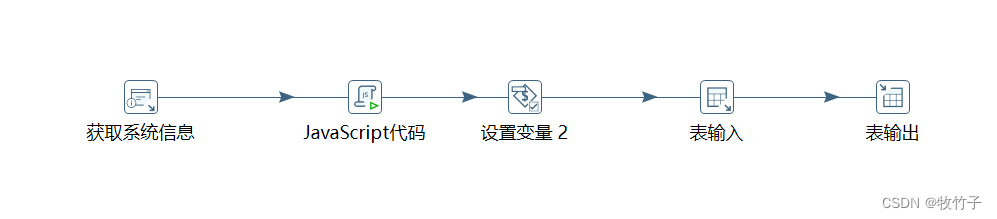

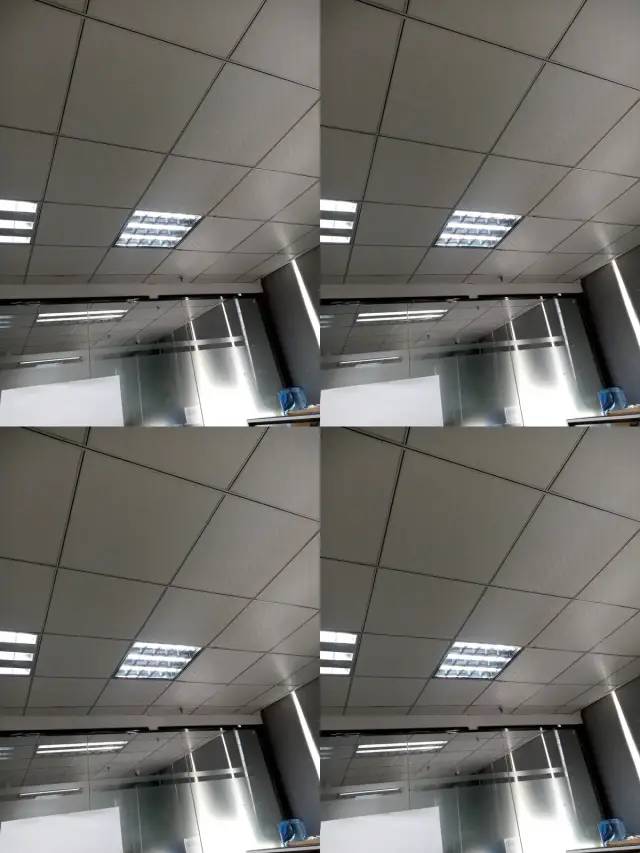

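We don't need to write a new APP to verify the algorithm: we keep using the APP code from the earlier article 《MTK HAL算法集成之单帧算法》 (MTK HAL algorithm integration: single-frame algorithms) and only change the value of KEY_WATERMARK to "com.qxt.camera.mfnr". Flash the full system image or vendor.img onto the test device, boot it, and install the demo to verify. Let's take a photo and look at the result: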
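The key string has to match what the HAL registered: the section name "com.qxt.camera" from _IMP_SECTION_INFO_ in mtk_metadata_tag_info.inl plus the tag name "mfnr". The hypothetical helper below (`splitVendorKey` is an illustrative name, not a framework API) shows that correspondence by splitting the key at its last '.'. Our understanding is that the camera framework resolves vendor keys into section and tag name in a similar way, but treat that as an assumption.

```cpp
#include <string>
#include <utility>

// Splits a vendor tag key such as "com.qxt.camera.mfnr" into its section
// ("com.qxt.camera") and tag name ("mfnr") at the last '.'.
// Returns two empty strings if the key contains no '.'.
inline std::pair<std::string, std::string> splitVendorKey(const std::string& key) {
    const auto dot = key.rfind('.');
    if (dot == std::string::npos) return {"", ""};
    return {key.substr(0, dot), key.substr(dot + 1)};
}
```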

[Image: photo captured with the integrated algorithm]

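As you can see, after integration the simulated MFNR multi-frame algorithm has downscaled 4 consecutive frames and tiled them into a single image.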

5. Conclusion

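A real multi-frame algorithm is more complex. For example, an MFNR algorithm may decide whether to run based on the exposure, staying off in good light and switching on in low light; an HDR algorithm may require several consecutive frames at different exposures; there may also be smart scene detection and the like. Whatever the variations, the overall steps for integrating a multi-frame algorithm are broadly the same; for different requirements, adapt the code accordingly.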
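As an example of the exposure-based switching mentioned above, a policy might skip MFNR in good light and use more frames in darker scenes. The sketch is entirely hypothetical: `SceneInfo`, `mfnrFrameCount` and the ISO thresholds are placeholders rather than tuned values, and the demo libmultiframe.so in this article only tiles 4 frames, so any other count would need a real algorithm behind it.

```cpp
#include <cassert>

// Hypothetical scene description; a real plugin would take ISO from the
// HAL/3A metadata of the request.
struct SceneInfo {
    int iso;
};

// Hypothetical policy with placeholder thresholds: 0 means "do not run MFNR".
inline int mfnrFrameCount(const SceneInfo& scene) {
    assert(scene.iso > 0);
    if (scene.iso < 400) return 0;   // good light: MFNR off
    if (scene.iso < 1600) return 4;  // dim light: a few frames
    return 6;                        // very dark: more frames to merge
}
```

In MFNRImpl.cpp, such a count could drive sel.mRequestCount in negotiate (returning BAD_VALUE when it is 0) and the value passed to setFrameCount.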

Original article: https://www.jianshu.com/p/f0


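This concludes the article. It is reposted from the web because the editor found it excellent; please support the original author. If it infringes any rights, please contact the editor and it will be removed. Suggestions and corrections are welcome. Thank you for reading!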
