SK注意力机制

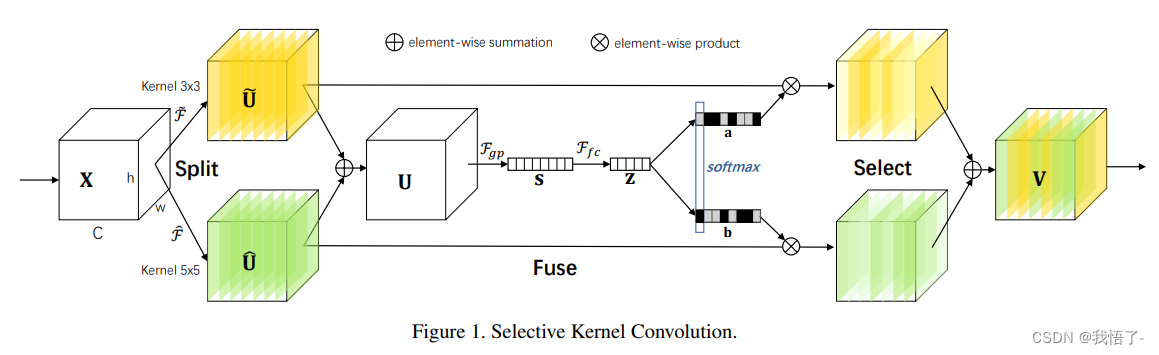

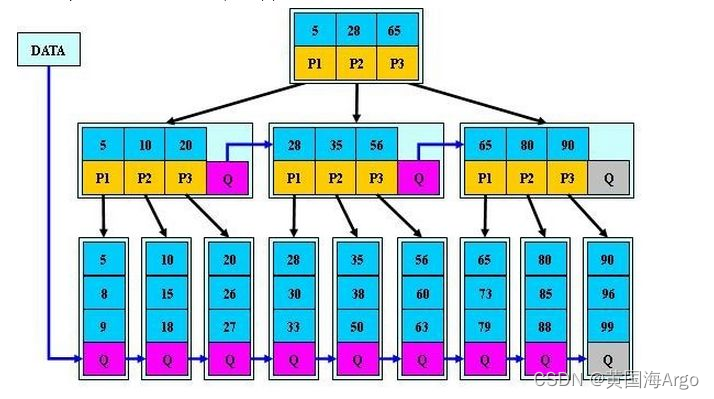

简介:在标准卷积神经网络 (CNN) 中,每一层人工神经元的感受野被设计为共享相同的大小。在神经科学界众所周知,视觉皮层神经元的感受野大小受到刺激的调节,这在构建 CNN 时很少被考虑。我们在CNN中提出了一种动态选择机制,该机制允许每个神经元根据输入信息的多个尺度自适应地调整其感受野大小。设计了一个称为选择性内核 (SK) 单元的构建块,其中使用由这些分支中的信息引导的 softmax 注意力融合具有不同内核大小的多个分支。对这些分支的不同关注会产生不同大小的融合层神经元的有效感受野。多个 SK 单元堆叠到称为选择性内核网络 (SKNets) 的深度网络中。在ImageNet和CIFAR基准测试中,我们通过经验表明,SKNet以较低的模型复杂性优于现有的最先进的架构。详细分析表明,SKNet中的神经元可以捕获不同尺度的目标对象,这验证了神经元根据输入自适应调整其感受野大小的能力。

代码实现

import numpy as np

import torch

from torch import nn

from torch.nn import init

from collections import OrderedDict

class SKAttention(nn.Module):

def __init__(self, channel=512, kernels=[1, 3, 5, 7], reduction=16, group=1, L=32):

super().__init__()

self.d = max(L, channel // reduction)

self.convs = nn.ModuleList([])

for k in kernels:

self.convs.append(

nn.Sequential(OrderedDict([

('conv', nn.Conv2d(channel, channel, kernel_size=k, padding=k // 2, groups=group)),

('bn', nn.BatchNorm2d(channel)),

('relu', nn.ReLU())

]))

)

self.fc = nn.Linear(channel, self.d)

self.fcs = nn.ModuleList([])

for i in range(len(kernels)):

self.fcs.append(nn.Linear(self.d, channel))

self.softmax = nn.Softmax(dim=0)

def forward(self, x):

bs, c, _, _ = x.size()

conv_outs = []

### split

for conv in self.convs:

conv_outs.append(conv(x))

feats = torch.stack(conv_outs, 0) # k,bs,channel,h,w

### fuse

U = sum(conv_outs) # bs,c,h,w

### reduction channel

S = U.mean(-1).mean(-1) # bs,c

Z = self.fc(S) # bs,d

### calculate attention weight

weights = []

for fc in self.fcs:

weight = fc(Z)

weights.append(weight.view(bs, c, 1, 1)) # bs,channel

attention_weughts = torch.stack(weights, 0) # k,bs,channel,1,1

attention_weughts = self.softmax(attention_weughts) # k,bs,channel,1,1

### fuse

V = (attention_weughts * feats).sum(0)

return V

if __name__ == '__main__':

input = torch.randn(50, 512, 7, 7)

se = SKAttention(channel=512, reduction=8)

output = se(input)

print(output.shape)

![[git] windows系统安装git教程和配置](https://img-blog.csdnimg.cn/direct/16773d109c5640d5b5d879542efdfbdd.png)