1. Insert 导出

1)将查询的结果导出到本地

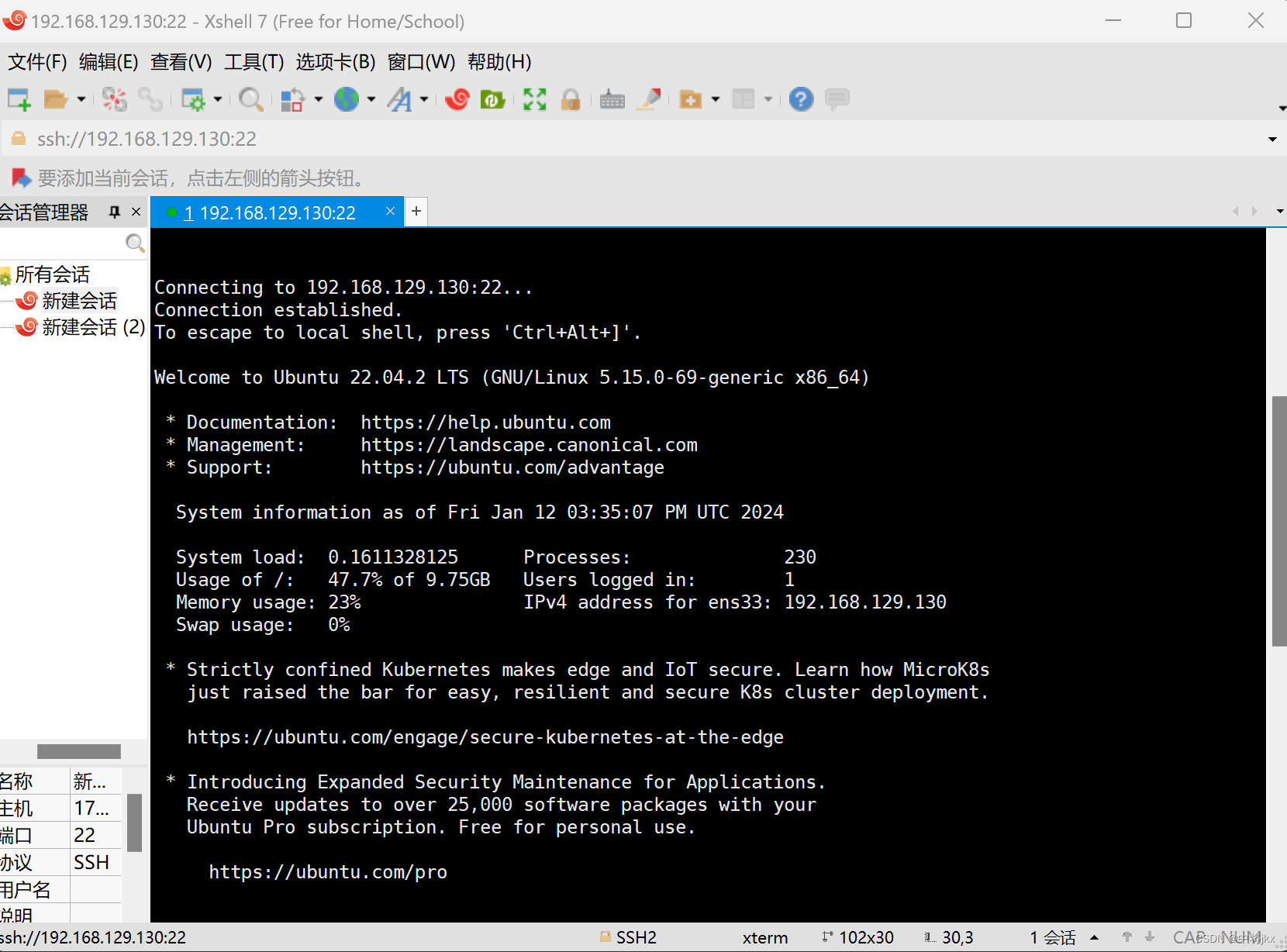

hive (default)> insert overwrite local directory '/opt/module/hive/data/export/student' select * from student5;

Automatically selecting local only mode for query

Query ID = atguigu_20211217153118_31119102-f06a-4313-a1c7-c99c89d5f549

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Job running in-process (local Hadoop)

2021-12-17 15:31:21,767 Stage-1 map = 100%, reduce = 0%

Ended Job = job_local2085445374_0004

Moving data to local directory /opt/module/hive/data/export/student

MapReduce Jobs Launched:

Stage-Stage-1: HDFS Read: 767 HDFS Write: 41412249 SUCCESS

Total MapReduce CPU Time Spent: 0 msec

OK

student5.id student5.name

Time taken: 2.922 seconds

2)将查询的结果格式化导出到本地(加上一个以“,”隔开数据的格式)

hive(default)>insert overwrite local directory '/opt/module/hive/data/export/student1' ROW FORMAT DELIMITED FIELDS TERMINATED BY ','

select * from student;3)将查询的结果导出到 HDFS 上(没有 local)

hive (default)> insert overwrite directory '/user/zzdq/student2'

ROW FORMAT DELIMITED FIELDS TERMINATED BY ''

select * from student;2. Hadoop 命令导出到本地

hive (default)> dfs -get /user/hive/warehouse/student/student.txt /opt/module/data/export/student3.txt;3. Hive Shell 命令导出

基本语法:(hive -f/-e 执行语句或者脚本> file)

[zzdq@hadoop102 hive]$ bin/hive -e 'select * from default.student;' opt/module/hive/data/export/student4.txt;4. Export 导出到 HDFS 上

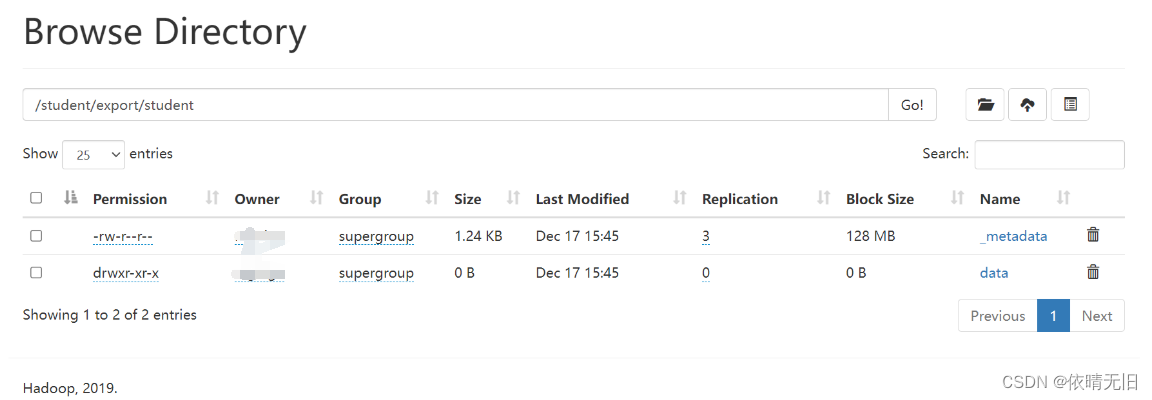

hive (default)> export table default.student5 to '/student/export/student';

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.ExportTask. Cannot copy hdfs://hadoop100:8020/student/export to its subdirectory hdfs://hadoop100:8020/student/export/student/data/export导出的数据中有两个数据源,其中除了主信息之外,还包括记录主数据信息的元数据

export 和 import 主要用于两个 Hadoop 平台集群之间 Hive 表迁移。

我们尝试使用import导入上面所产生的信息,但是导入已存在的表发现报错了

hive (default)> import table student5 from '/student/export/student';

FAILED: SemanticException [Error 10119]: Table exists and contains data files我们创建一个新表来进行导入

hive (default)> import table student6 from '/student/export/student';

Copying data from hdfs://hadoop100:8020/student/export/student/data

Copying file: hdfs://hadoop100:8020/student/export/student/data/student.txt

Loading data to table default.student6

OK

Time taken: 0.984 seconds我们查询数据:

hive (default)> select * from student6;

OK

student6.id student6.name

1001 zzz

1002 ddd

1111 ccc

Time taken: 0.351 seconds, Fetched: 3 row(s)结论:导入的表需要是一张没收数据的表,也就是说该表要么不存在,要么就是一张空表。

5. Sqoop 导出

后续课程专门讲。主要作用就是将数据导入mysql

6. 清除表中数据(Truncate)

注意:Truncate 只能删除管理表,不能删除外部表中数据

hive (default)> truncate table student6; OK Time taken: 0.943 seconds

![[开发语言][c++][python]:C++与Python中的赋值、浅拷贝与深拷贝](https://img-blog.csdnimg.cn/img_convert/b738c28f6b2531e40f980cef0742b6ee.png)