背景

本地搭建canal实现mysql数据到es的简单的数据同步,仅供学习参考

建议首先熟悉一下canal同步方式:https://github.com/alibaba/canal/wiki

前提条件

- 本地搭建MySQL数据库

- 本地搭建ElasticSearch

- 本地搭建canal-server

- 本地搭建canal-adapter

操作步骤

本地环境为window11,大部分组件采用docker进行部署,MySQL采用8.0.27,

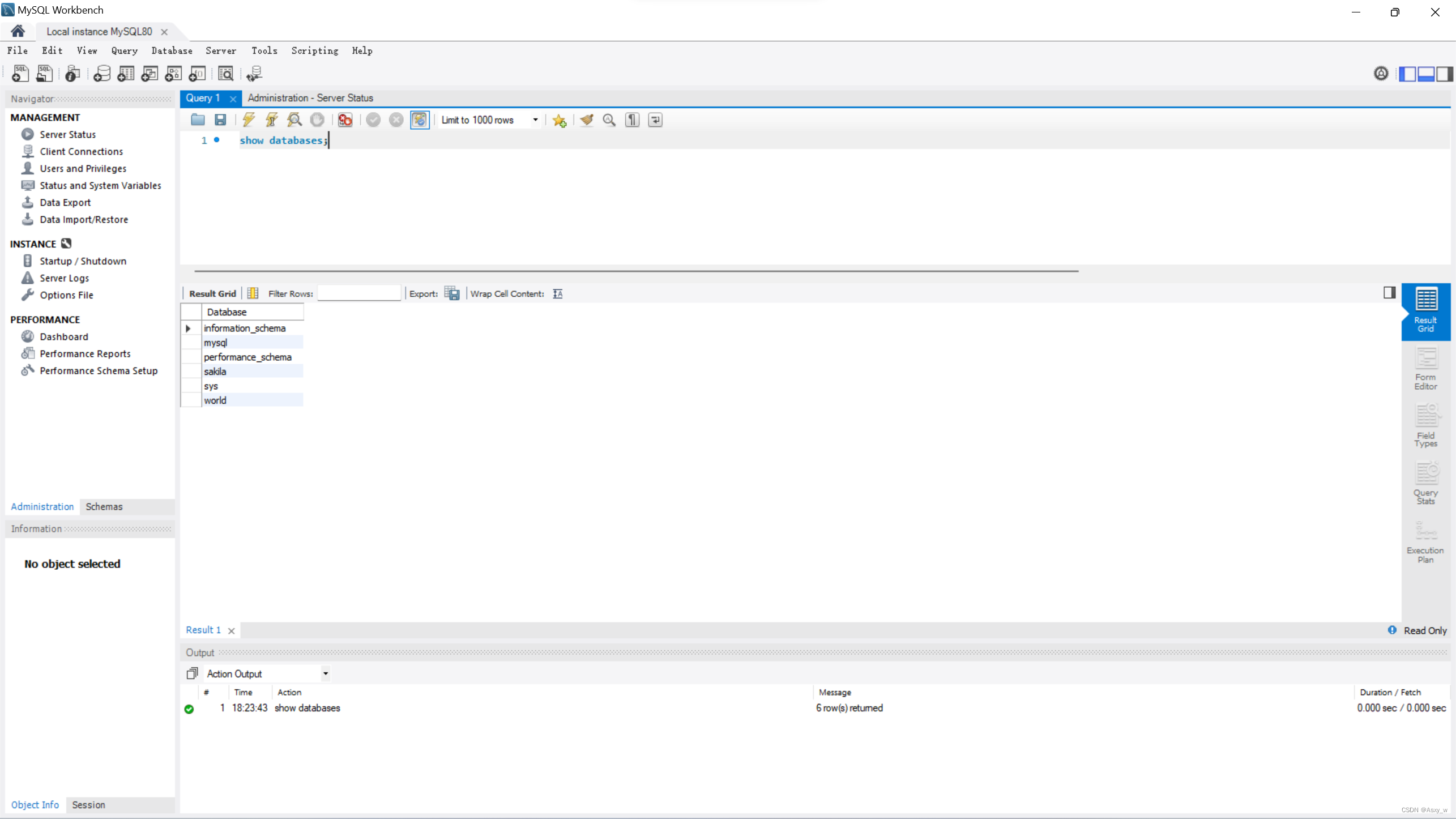

搭建MySQL数据库

推荐使用docker-desktop,可视化操作,首先找到需要使用的版本直接pull到本地,通过界面进行容器启动,指定环境变量并定义挂载卷

也可本地使用docker命令部署MySQL,需要指定环境变量,root账户密码和挂载本地配置文件

#命令根据自己本地路径和配置进行更改

docker run

--env=MYSQL_ROOT_PASSWORD=123456

--volume=D:\docker\mysql\data:/var/lib/mysql/

--volume=D:\docker\mysql\conf:/etc/mysql/conf.d

--volume=/var/lib/mysql

-p 3306:3306

-p 33060:33060 --runtime=runc -d

--privileged=true

mysql:latest

命令说明:

-p 3306:3306

将宿主机的 3306 端口映射到 docker 容器的 3306 端口,格式为:主机(宿主)端口:容器端口--name mysql

运行服务的名字-v

挂载数据卷,格式为:宿主机目录或文件:容器内目录或文件-e MYSQL_ROOT_PASSWORD=123456

初始化root用户的密码为123456-d

后台程序运行容器--privileged=true开启特殊权限

Docker 挂载主机目录时(添加容器数据卷),如果 Docker 访问出现cannot open directory:Permission denied,在挂载目录的命令后多加一个 --privileged=true 参数即可。

因为出于安全原因,容器不允许访问任何设备,privileged让 docker 应用容器获取宿主机root权限(特殊权限),允许我们的 Docker 容器访问连接到主机的所有设备。容器获得所有能力,可以访问主机的所有设备,例如,CD-ROM、闪存驱动器、连接到主机的硬盘驱动器等。

下一步在MySQL配置文件中my.cnf设置开启binlog,更改配置文件之后重启MySQL

# Copyright (c) 2017, Oracle and/or its affiliates. All rights reserved.

#

# This program is free software; you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation; version 2 of the License.

#

# This program is distributed in the hope that it will be useful,

# but WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

# GNU General Public License for more details.

#

# You should have received a copy of the GNU General Public License

# along with this program; if not, write to the Free Software

# Foundation, Inc., 51 Franklin St, Fifth Floor, Boston, MA 02110-1301 USA

#

# The MySQL Server configuration file.

#

# For explanations see

# http://dev.mysql.com/doc/mysql/en/server-system-variables.html

[mysqld]

pid-file = /var/run/mysqld/mysqld.pid

socket = /var/run/mysqld/mysqld.sock

datadir = /var/lib/mysql

secure-file-priv= NULL

--skip-host-cache

--skip-name-resolve

[mysqld]

log-bin=mysql-bin #添加这一行就ok

binlog-format=ROW #选择row模式

server_id=1 #配置mysql replaction需要定义,不能和canal的slaveId重复

# Custom config should go here

!includedir /etc/mysql/conf.d/

更改完配置文件之后验证binlog是否打开,并创建canal用户,设置权限

-- 查询binlog配置

show variables like 'binlog_format';

show variables like 'log_bin';

-- 创建canal账户

CREATE USER canal IDENTIFIED BY 'canal';

GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%';

FLUSH PRIVILEGES;

show grants for 'canal';

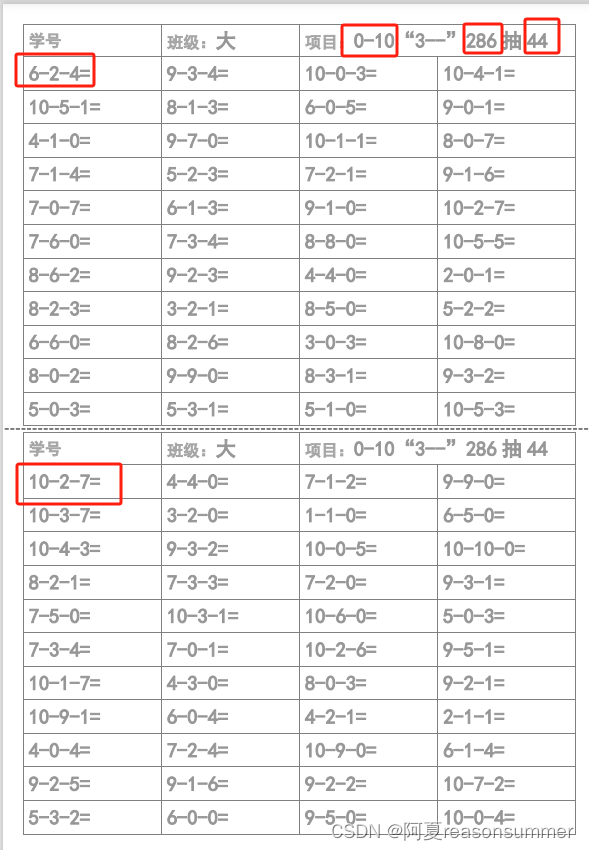

准备工作

- 新建数据库

CREATE SCHEMA `canal_manager` DEFAULT CHARACTER SET utf8mb4 ;

- 新增测试表

create table test_canal

(

id int auto_increment

primary key,

name text null

);

搭建ELK

本地搭建elk推荐使用docker-compose进行搭建,单独搭建的话需要进行各种配置比较麻烦,我本地搭建的版本为7.16.1,如果需要更改版本或者更改其他配置可以直接更改下面docker-compose.yaml文件

docker-compose.yaml

services:

elasticsearch:

image: elasticsearch:7.16.1

container_name: es

environment:

discovery.type: single-node

ES_JAVA_OPTS: "-Xms512m -Xmx512m"

ports:

- "9200:9200"

- "9300:9300"

healthcheck:

test: ["CMD-SHELL", "curl --silent --fail localhost:9200/_cluster/health || exit 1"]

interval: 10s

timeout: 10s

retries: 3

networks:

- elastic

logstash:

image: logstash:7.16.1

container_name: log

environment:

discovery.seed_hosts: logstash

LS_JAVA_OPTS: "-Xms512m -Xmx512m"

volumes:

- ./logstash/pipeline/logstash-nginx.config:/usr/share/logstash/pipeline/logstash-nginx.config

- ./logstash/nginx.log:/home/nginx.log

ports:

- "5000:5000/tcp"

- "5000:5000/udp"

- "5044:5044"

- "9600:9600"

depends_on:

- elasticsearch

networks:

- elastic

command: logstash -f /usr/share/logstash/pipeline/logstash-nginx.config

kibana:

image: kibana:7.16.1

container_name: kib

ports:

- "5601:5601"

depends_on:

- elasticsearch

networks:

- elastic

networks:

elastic:

driver: bridge

Deploy with docker compose

此处不再详细讲解docker-compse如何部署了

$ docker compose up -d

Creating network "elasticsearch-logstash-kibana_elastic" with driver "bridge"

Creating es ... done

Creating log ... done

Creating kib ... done

Expected result

Listing containers must show three containers running and the port mapping as below:

$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

173f0634ed33 logstash:7.8.0 "/usr/local/bin/dock…" 43 seconds ago Up 41 seconds 0.0.0.0:5000->5000/tcp, 0.0.0.0:5044->5044/tcp, 0.0.0.0:9600->9600/tcp, 0.0.0.0:5000->5000/udp log

b448fd3e9b30 kibana:7.8.0 "/usr/local/bin/dumb…" 43 seconds ago Up 42 seconds 0.0.0.0:5601->5601/tcp kib

366d358fb03d elasticsearch:7.8.0 "/tini -- /usr/local…" 43 seconds ago Up 42 seconds (healthy) 0.0.0.0:9200->9200/tcp, 0.0.0.0:9300->9300/tcp

After the application starts, navigate to below links in your web browser:

- Elasticsearch:

http://localhost:9200 - Logstash:

http://localhost:9600 - Kibana:

http://localhost:5601/api/status

准备工作

- 进入kibana,新增测试索引

PUT /test_canal

{

"mappings":{

"properties":{

"id": {

"type": "long"

},

"name": {

"type": "text"

}

}

}

}

搭建canal-server

推荐使用官网下载release版本进行解压部署,官网地址:https://github.com/alibaba/canal/wiki/QuickStart,也可以使用docker进行部署,重点是部署完成后需要修改配置文件。

- 更改配置

vi conf/example/instance.properties

## mysql serverId

canal.instance.mysql.slaveId = 1234

#position info,需要改成自己的数据库信息

canal.instance.master.address = 127.0.0.1:3306

canal.instance.master.journal.name =

canal.instance.master.position =

canal.instance.master.timestamp =

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#username/password,需要改成自己的数据库信息

canal.instance.dbUsername = canal

canal.instance.dbPassword = canal

canal.instance.defaultDatabaseName =

canal.instance.connectionCharset = UTF-8

#table regex

canal.instance.filter.regex = .\*\\\\..\*

具体配置文件介绍可以查看:https://github.com/alibaba/canal/wiki/AdminGuide

注意: 使用docker部署时,如果没有设置为同一个网络,数据库地址无法使用127.0.0.1

- 启动

sh bin/startup.sh

搭建canal-adapter

基本功能

canal 1.1.1版本之后, 增加客户端数据落地的适配及启动功能, 目前支持功能:

- 客户端启动器

- 同步管理REST接口

- 日志适配器, 作为DEMO

- 关系型数据库的数据同步(表对表同步), ETL功能

- HBase的数据同步(表对表同步), ETL功能

- (后续支持) ElasticSearch多表数据同步,ETL功能

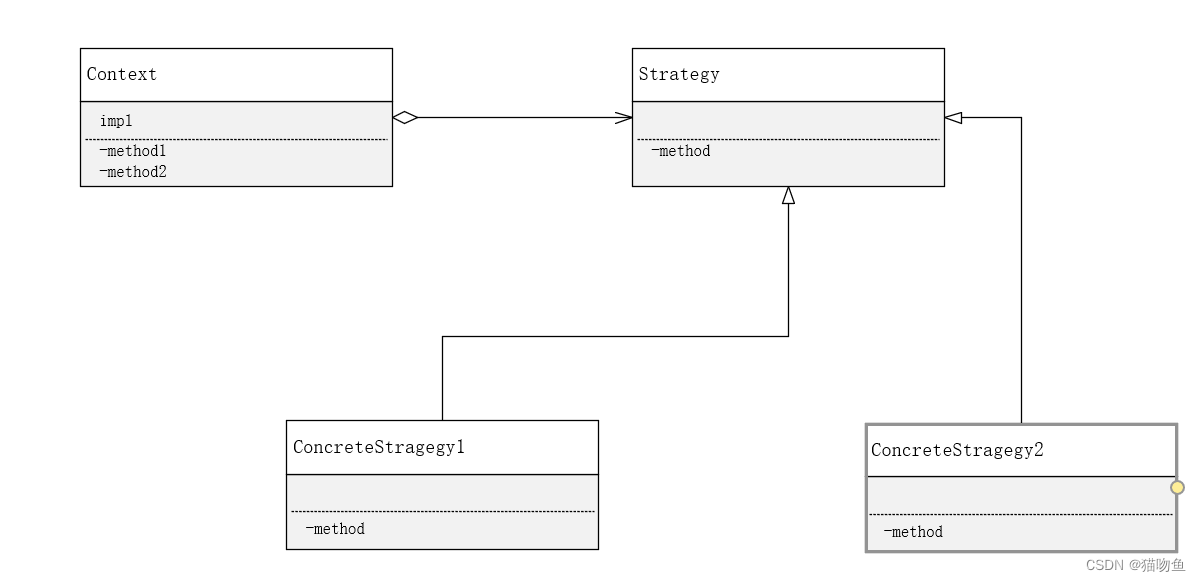

适配器整体结构

client-adapter分为适配器和启动器两部分, 适配器为多个fat jar, 每个适配器会将自己所需的依赖打成一个包, 以SPI的方式让启动器动态加载, 目前所有支持的适配器都放置在plugin目录下

启动器为 SpringBoot 项目, 支持canal-client启动的同时提供相关REST管理接口, 运行目录结构为:

- bin

restart.sh

startup.bat

startup.sh

stop.sh

- lib

...

- plugin

client-adapter.logger-1.1.1-jar-with-dependencies.jar

client-adapter.hbase-1.1.1-jar-with-dependencies.jar

...

- conf

application.yml

- hbase

mytest_person2.yml

- logs

以上目录结构最终会打包成 canal-adapter-*.tar.gz 压缩包

适配器配置介绍

总配置文件 application.yml

adapter定义配置部分

canal.conf:

canalServerHost: 127.0.0.1:11111 # 对应单机模式下的canal server的ip:port

zookeeperHosts: slave1:2181 # 对应集群模式下的zk地址, 如果配置了canalServerHost, 则以canalServerHost为准

mqServers: slave1:6667 #or rocketmq # kafka或rocketMQ地址, 与canalServerHost不能并存

flatMessage: true # 扁平message开关, 是否以json字符串形式投递数据, 仅在kafka/rocketMQ模式下有效

batchSize: 50 # 每次获取数据的批大小, 单位为K

syncBatchSize: 1000 # 每次同步的批数量

retries: 0 # 重试次数, -1为无限重试

timeout: # 同步超时时间, 单位毫秒

mode: tcp # kafka rocketMQ # canal client的模式: tcp kafka rocketMQ

srcDataSources: # 源数据库

defaultDS: # 自定义名称

url: jdbc:mysql://127.0.0.1:3306/mytest?useUnicode=true # jdbc url

username: root # jdbc 账号

password: 121212 # jdbc 密码

canalAdapters: # 适配器列表

- instance: example # canal 实例名或者 MQ topic 名

groups: # 分组列表

- groupId: g1 # 分组id, 如果是MQ模式将用到该值

outerAdapters: # 分组内适配器列表

- name: logger # 日志打印适配器

......

说明:

- 一份数据可以被多个group同时消费, 多个group之间会是一个并行执行, 一个group内部是一个串行执行多个outerAdapters, 比如例子中logger和hbase

- 目前client adapter数据订阅的方式支持两种,直连canal server 或者 订阅kafka/RocketMQ的消息

操作流程

从官网下载relaes版本之后进行解压,解压之后修改cnf目录下得application.yml文件,根据自己得需求选择下面不同的adapters进行配置,我本地安装的es是es7.16,选择es7的配置,具体配置的含义请参考上方适配器配置介绍

- 配置application.yaml

server:

port: 8081

spring:

jackson:

date-format: yyyy-MM-dd HH:mm:ss

time-zone: GMT+8

default-property-inclusion: non_null

canal.conf:

mode: tcp #tcp kafka rocketMQ rabbitMQ

flatMessage: true

zookeeperHosts:

syncBatchSize: 1000

retries: -1

timeout:

accessKey:

secretKey:

consumerProperties:

# canal tcp consumer

canal.tcp.server.host: 127.0.0.1:11111

canal.tcp.zookeeper.hosts:

canal.tcp.batch.size: 500

canal.tcp.username:

canal.tcp.password:

# kafka consumer

kafka.bootstrap.servers: 127.0.0.1:9092

kafka.enable.auto.commit: false

kafka.auto.commit.interval.ms: 1000

kafka.auto.offset.reset: latest

kafka.request.timeout.ms: 40000

kafka.session.timeout.ms: 30000

kafka.isolation.level: read_committed

kafka.max.poll.records: 1000

# rocketMQ consumer

rocketmq.namespace:

rocketmq.namesrv.addr: 127.0.0.1:9876

rocketmq.batch.size: 1000

rocketmq.enable.message.trace: false

rocketmq.customized.trace.topic:

rocketmq.access.channel:

rocketmq.subscribe.filter:

# rabbitMQ consumer

rabbitmq.host:

rabbitmq.virtual.host:

rabbitmq.username:

rabbitmq.password:

rabbitmq.resource.ownerId:

srcDataSources:

defaultDS:

url: jdbc:mysql://127.0.0.1:3306/test?useUnicode=true # 配置数据库地址

username: root

password: 123456

canalAdapters:

- instance: example # canal instance Name or mq topic name

groups:

- groupId: g1

outerAdapters:

- name: logger

# - name: rdb

# key: mysql1

# properties:

# jdbc.driverClassName: com.mysql.jdbc.Driver

# jdbc.url: jdbc:mysql://127.0.0.1:3306/mytest2?useUnicode=true

# jdbc.username: root

# jdbc.password: 121212

# druid.stat.enable: false

# druid.stat.slowSqlMillis: 1000

# - name: rdb

# key: oracle1

# properties:

# jdbc.driverClassName: oracle.jdbc.OracleDriver

# jdbc.url: jdbc:oracle:thin:@localhost:49161:XE

# jdbc.username: mytest

# jdbc.password: m121212

# - name: rdb

# key: postgres1

# properties:

# jdbc.driverClassName: org.postgresql.Driver

# jdbc.url: jdbc:postgresql://localhost:5432/postgres

# jdbc.username: postgres

# jdbc.password: 121212

# threads: 1

# commitSize: 3000

# - name: hbase

# properties:

# hbase.zookeeper.quorum: 127.0.0.1

# hbase.zookeeper.property.clientPort: 2181

# zookeeper.znode.parent: /hbase

- name: es7

hosts: 172.22.80.1:9300 # 127.0.0.1:9200 for rest mode

properties:

mode: transport # or rest

# security.auth: test:123456 # only used for rest mode

cluster.name: docker-cluster

# - name: kudu

# key: kudu

# properties:

# kudu.master.address: 127.0.0.1 # ',' split multi address

# - name: phoenix

# key: phoenix

# properties:

# jdbc.driverClassName: org.apache.phoenix.jdbc.PhoenixDriver

# jdbc.url: jdbc:phoenix:127.0.0.1:2181:/hbase/db

# jdbc.username:

# jdbc.password:

-

新增测试yml文件,再es7目录下新建testcanal.yml配置,更加复杂的映射处理可以参考canal官网示例:

https://github.com/alibaba/canal/wiki/Sync-ES

- 启动canal-adapter启动器

bin/startup.sh

windows启动.bat文件

- 验证

新增mysql mytest.test_canal表的数据, 将会自动同步到es的test_canal索引下面, 并会打出DML的log

番外:adapter管理REST接口

注意:部分操作需要指定cnf下的yml配置

查询所有订阅同步的canal instance或MQ topic

curl http://127.0.0.1:8081/destinations

数据同步开关

curl http://127.0.0.1:8081/syncSwitch/example/off -X PUT

针对 example 这个canal instance/MQ topic 进行开关操作. off代表关闭, instance/topic下的同步将阻塞或者断开连接不再接收数据, on代表开启

注: 如果在配置文件中配置了 zookeeperHosts 项, 则会使用分布式锁来控制HA中的数据同步开关, 如果是单机模式则使用本地锁来控制开关

数据同步开关状态

curl http://127.0.0.1:8081/syncSwitch/example

查看指定 canal instance/MQ topic 的数据同步开关状态

手动ETL

- 不带参数

curl http://127.0.0.1:8081/etl/es7/testcanal.yml -X POST

- 带参数

curl http://127.0.0.1:8081/etl/hbase/mytest_person2.yml -X POST -d "params=2018-10-21 00:00:00"

导入数据到指定类型的库, 如果params参数为空则全表导入, 参数对应的查询条件在配置中的etlCondition指定

查看相关库总数据

curl http://127.0.0.1:8081/count/hbase/mytest_person2.yml

常见问题

not part of the cluster Cluster [elasticsearch], ignoring…

查看canal-adapter配置中的application.yml配置是否和es中的cluster_name配置相同

连接问题

如果本地使用docker进行部署,未指定网桥,切记不能使用127.0.0.1进行es连接或者mysql数据库连接