I. Code

FFmpeg version: 5.1.2. The DLLs come from the ffmpeg-5.1.2-full_build-shared package (x64).

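To the caller, a file and a stream URL are handled the same way: both are passed to avformat_open_input.

Stream URLs (RTMP, HTTP-FLV, RTSP, etc.): audio and video are transmitted only after signaling completes. Signaling covers the negotiation of audio/video formats, parameters, and so on.

Practical uses of pulling a stream: 1. display (playback); 2. feeding an algorithm, which usually needs frames in RGB format.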

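// DecodeH264.h

// Guard name without a leading underscore: identifiers such as _DECODE_H264_H_ are reserved

#ifndef DECODE_H264_H_

#define DECODE_H264_H_

#include <cstdio> // FILE

#include <string>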

extern "C"

{

#include "libavformat/avformat.h"

#include "libavcodec/avcodec.h"

#include "libswscale/swscale.h"

#include "libavutil/avutil.h"

#include "libavutil/mathematics.h"

#include "libavutil/time.h"

#include "libavutil/pixdesc.h"

#include "libavutil/display.h"

};

#pragma comment(lib, "avformat.lib")

#pragma comment(lib, "avutil.lib")

#pragma comment(lib, "avcodec.lib")

#pragma comment(lib, "swscale.lib")

class CDecodeH264

{

public:

CDecodeH264();

~CDecodeH264();

public:

int DecodeH264();

int Start();

int Close();

int DecodeH264File_Init();

int ReleaseDecode();

void H264Decode_Thread_Fun();

std::string dup_wchar_to_utf8(const wchar_t* wstr);

double get_rotation(AVStream *st);

public:

AVFormatContext* m_pInputFormatCtx = nullptr;

AVCodecContext* m_pVideoDecodeCodecCtx = nullptr;

const AVCodec* m_pCodec = nullptr;

SwsContext* m_pSwsContext = nullptr;

AVFrame* m_pFrameScale = nullptr;

AVFrame* m_pFrameYUV = nullptr;

AVPacket* m_pAVPacket = nullptr;

enum AVMediaType m_CodecType = AVMEDIA_TYPE_UNKNOWN;

int m_output_pix_fmt = AV_PIX_FMT_NONE;

int m_nVideoStream = -1;

int m_nFrameHeight = 0;

int m_nFrameWidth = 0;

int m_nFPS = 0;

int m_nVideoSeconds = 0;

FILE* m_pfOutYUV = nullptr;

FILE* m_pfOutYUV2 = nullptr;

};

#endif

// DecodeH264.cpp

#include "DecodeH264.h"

#include <cmath> // floor, fabs, round

#include <cstdio> // printf, snprintf

#include <thread>

#include <functional>

#include <codecvt>

#include <locale>

char av_error2[AV_ERROR_MAX_STRING_SIZE] = { 0 };

#define av_err2str2(errnum) av_make_error_string(av_error2, AV_ERROR_MAX_STRING_SIZE, errnum)

CDecodeH264::CDecodeH264()

{

}

CDecodeH264::~CDecodeH264()

{

ReleaseDecode();

}

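// Converts a wide string to UTF-8, which is what FFmpeg expects for file names.

// Note: std::wstring_convert and std::codecvt_utf8 are deprecated since C++17.

std::string CDecodeH264::dup_wchar_to_utf8(const wchar_t* wstr)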

{

std::wstring_convert<std::codecvt_utf8<wchar_t>> converter;

return converter.to_bytes(wstr);

}

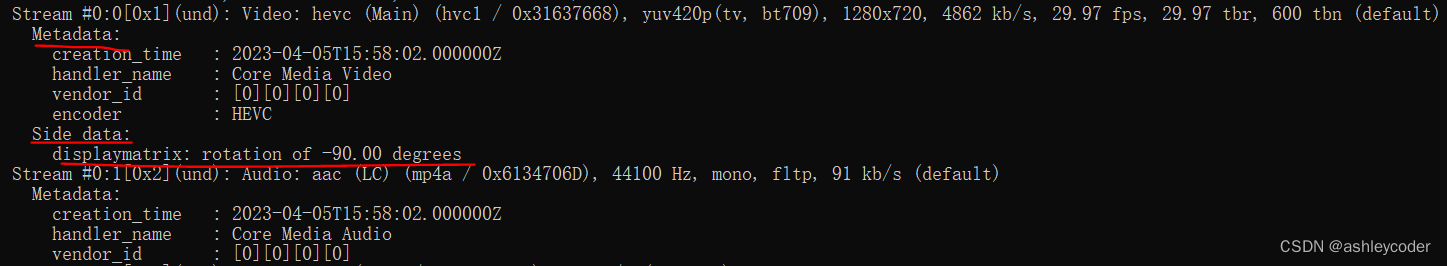

//Side data :

//displaymatrix: rotation of - 90.00 degrees

double CDecodeH264::get_rotation(AVStream *st)

{

uint8_t* displaymatrix = av_stream_get_side_data(st,

AV_PKT_DATA_DISPLAYMATRIX, NULL);

double theta = 0;

if (displaymatrix)

theta = -av_display_rotation_get((int32_t*)displaymatrix);

theta -= 360 * floor(theta / 360 + 0.9 / 360);

if (fabs(theta - 90 * round(theta / 90)) > 2)

av_log(NULL, AV_LOG_WARNING, "Odd rotation angle.\n"

"If you want to help, upload a sample "

"of this file to https://streams.videolan.org/upload/ "

"and contact the ffmpeg-devel mailing list. (ffmpeg-devel@ffmpeg.org)");

return theta;

}

int CDecodeH264::DecodeH264File_Init()

{

avformat_network_init(); // needed for stream URLs

m_pInputFormatCtx = avformat_alloc_context();

std::string strFilename = dup_wchar_to_utf8(L"测试.h264");

//std::string strFilename = dup_wchar_to_utf8(L"rtmp://127.0.0.1/live/now");

int ret = avformat_open_input(&m_pInputFormatCtx, strFilename.c_str(), nullptr, nullptr);

if (ret != 0) {

char* err_str = av_err2str2(ret);

printf("fail to open filename: %s, return value: %d, %s\n", strFilename.c_str(), ret, err_str);

return -1;

}

ret = avformat_find_stream_info(m_pInputFormatCtx, nullptr);

if (ret < 0) {

char* err_str = av_err2str2(ret);

printf("fail to get stream information: %d, %s\n", ret, err_str);

return -1;

}

for (unsigned int i = 0; i < m_pInputFormatCtx->nb_streams; ++i) {

const AVStream* stream = m_pInputFormatCtx->streams[i];

if (stream->codecpar->codec_type == AVMEDIA_TYPE_VIDEO) {

m_nVideoStream = static_cast<int>(i);

printf("type of the encoded data: %d, dimensions of the video frame in pixels: width: %d, height: %d, pixel format: %d\n",

stream->codecpar->codec_id, stream->codecpar->width, stream->codecpar->height, stream->codecpar->format);

break; // use the first video stream

}

}

if (m_nVideoStream == -1) {

printf("no video stream\n");

return -1;

}

printf("m_nVideoStream=%d\n", m_nVideoStream);

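// Get the rotation angle (only printed here; a player would rotate the picture accordingly)

double theta = get_rotation(m_pInputFormatCtx->streams[m_nVideoStream]);

printf("rotation=%.2f\n", theta);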

m_pVideoDecodeCodecCtx = avcodec_alloc_context3(nullptr);

if (m_pVideoDecodeCodecCtx == nullptr) {

return -1;

}

if (avcodec_parameters_to_context(m_pVideoDecodeCodecCtx,

m_pInputFormatCtx->streams[m_nVideoStream]->codecpar) < 0) {

return -1;

}

m_pCodec = avcodec_find_decoder(m_pVideoDecodeCodecCtx->codec_id);

if (m_pCodec == nullptr)

{

return -1;

}

m_nFrameHeight = m_pVideoDecodeCodecCtx->height;

m_nFrameWidth = m_pVideoDecodeCodecCtx->width;

printf("w=%d h=%d\n", m_pVideoDecodeCodecCtx->width, m_pVideoDecodeCodecCtx->height);

if (avcodec_open2(m_pVideoDecodeCodecCtx, m_pCodec, nullptr) < 0)

{

return -1;

}

// The video width and height are known once the input has been probed

m_output_pix_fmt = AV_PIX_FMT_YUV420P; //AV_PIX_FMT_NV12;

m_pSwsContext = sws_getContext(m_pVideoDecodeCodecCtx->width, m_pVideoDecodeCodecCtx->height,

m_pVideoDecodeCodecCtx->pix_fmt, m_pVideoDecodeCodecCtx->width, m_pVideoDecodeCodecCtx->height,

(AVPixelFormat)m_output_pix_fmt, SWS_FAST_BILINEAR, nullptr, nullptr, nullptr);

if (m_pSwsContext == nullptr) {

printf("fail to create sws context\n");

return -1;

}

// m_pFrameScale receives the decoder output; avcodec_receive_frame fills in its format, width and height

m_pFrameScale = av_frame_alloc();

// m_pFrameYUV holds the converted picture; format, width and height must be set before av_frame_get_buffer

m_pFrameYUV = av_frame_alloc();

if (m_pFrameScale == nullptr || m_pFrameYUV == nullptr) {

return -1;

}

m_pFrameYUV->format = m_output_pix_fmt;

m_pFrameYUV->width = m_pVideoDecodeCodecCtx->width;

m_pFrameYUV->height = m_pVideoDecodeCodecCtx->height;

printf("decoder pix_fmt=%d\n", m_pVideoDecodeCodecCtx->pix_fmt);

if (av_frame_get_buffer(m_pFrameYUV, 64) < 0) {

return -1;

}

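// Name the dump files after the pixel format that is actually written (the sws_scale output)

const char* fmt_name = av_get_pix_fmt_name((AVPixelFormat)m_output_pix_fmt);

if (fmt_name == nullptr) fmt_name = "unknown";

char cYUVName[256];

snprintf(cYUVName, sizeof(cYUVName), "%d_%d_%s.yuv", m_nFrameWidth, m_nFrameHeight, fmt_name);

fopen_s(&m_pfOutYUV, cYUVName, "wb");

char cYUVName2[256];

snprintf(cYUVName2, sizeof(cYUVName2), "%d_%d_%s_2.yuv", m_nFrameWidth, m_nFrameHeight, fmt_name);

fopen_s(&m_pfOutYUV2, cYUVName2, "wb");

if (m_pfOutYUV == nullptr || m_pfOutYUV2 == nullptr) {

printf("fail to open output file\n");

return -1;

}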

printf("leave init\n");

return 0;

}

void CDecodeH264::H264Decode_Thread_Fun()

{

int nFrames = 0;

// Drain every frame the decoder has ready: one packet may yield zero, one, or several frames

auto drain_frames = [&]() {

while (avcodec_receive_frame(m_pVideoDecodeCodecCtx, m_pFrameScale) == 0) {

++nFrames;

// sws_scale returns the height of the output slice, so a value > 0 means success

int iReturn = sws_scale(m_pSwsContext, m_pFrameScale->data,

m_pFrameScale->linesize, 0, m_nFrameHeight,

m_pFrameYUV->data, m_pFrameYUV->linesize);

printf("frame %d: w=%d, h=%d, linesize[0]=%d, linesize[1]=%d\n", nFrames, m_pFrameYUV->width, m_pFrameYUV->height, m_pFrameYUV->linesize[0], m_pFrameYUV->linesize[1]);

// Writing width * height bytes straight from data[0] only works when linesize[0] == width.

// Writing row by row with linesize as the stride also handles widths that get row padding.

if (iReturn > 0) {

for (int y = 0; y < m_nFrameHeight; ++y) {

fwrite(m_pFrameYUV->data[0] + y * m_pFrameYUV->linesize[0], 1, m_nFrameWidth, m_pfOutYUV2);

}

for (int y = 0; y < m_nFrameHeight / 2; ++y) {

fwrite(m_pFrameYUV->data[1] + y * m_pFrameYUV->linesize[1], 1, m_nFrameWidth / 2, m_pfOutYUV2);

}

for (int y = 0; y < m_nFrameHeight / 2; ++y) {

fwrite(m_pFrameYUV->data[2] + y * m_pFrameYUV->linesize[2], 1, m_nFrameWidth / 2, m_pfOutYUV2);

}

}

av_frame_unref(m_pFrameScale);

}

};

m_pAVPacket = av_packet_alloc();

if (m_pAVPacket == nullptr) {

return;

}

// Any negative return value (AVERROR_EOF or a real error) ends the read loop

while (av_read_frame(m_pInputFormatCtx, m_pAVPacket) >= 0) {

if (m_pAVPacket->stream_index == m_nVideoStream) {

int send_packet_ret = avcodec_send_packet(m_pVideoDecodeCodecCtx, m_pAVPacket);

if (send_packet_ret < 0) {

printf("decode video send_packet_ret %d, %s\n", send_packet_ret, av_err2str2(send_packet_ret));

}

drain_frames();

}

av_packet_unref(m_pAVPacket);

}

printf("read_frame finished\n");

// Flush: a null packet tells the decoder no more input is coming, so it outputs the frames it still holds

avcodec_send_packet(m_pVideoDecodeCodecCtx, nullptr);

drain_frames();

av_packet_free(&m_pAVPacket);

printf("decoded %d frames\n", nFrames);

}

int CDecodeH264::DecodeH264()

{

if (DecodeH264File_Init() != 0)

{

return -1;

}

auto video_func = std::bind(&CDecodeH264::H264Decode_Thread_Fun, this);

std::thread video_thread(video_func);

video_thread.join();

return 0;

}

int CDecodeH264::Start()

{

// Propagate the result instead of always reporting success

return DecodeH264();

}

int CDecodeH264::Close()

{

return 0;

}

int CDecodeH264::ReleaseDecode()

{

if (m_pSwsContext)

{

sws_freeContext(m_pSwsContext);

m_pSwsContext = nullptr;

}

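if (m_pFrameScale)

{

av_frame_free(&m_pFrameScale); // pairs with av_frame_alloc()

}

if (m_pFrameYUV)

{

av_frame_free(&m_pFrameYUV);

}

av_packet_free(&m_pAVPacket); // safe when the packet is already null

// avcodec_free_context pairs with avcodec_alloc_context3; avcodec_close alone would leak the context

avcodec_free_context(&m_pVideoDecodeCodecCtx);

avformat_close_input(&m_pInputFormatCtx);

// Close the dump files so that buffered data reaches the disk

if (m_pfOutYUV) { fclose(m_pfOutYUV); m_pfOutYUV = nullptr; }

if (m_pfOutYUV2) { fclose(m_pfOutYUV2); m_pfOutYUV2 = nullptr; }

return 0;

}

// Entry point (separate source file)

#include <iostream>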

#include <Windows.h>

#include "1__DecodeH264/DecodeH264.h"

int main()

{

CDecodeH264* m_pDecodeVideo = new CDecodeH264();

m_pDecodeVideo->Start();

return 0;

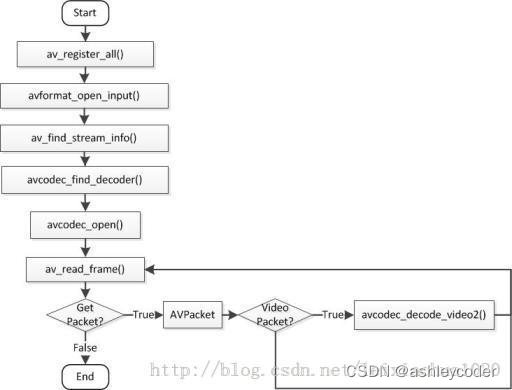

}图是雷神博客的:注册函数废弃了,解码函数变了。

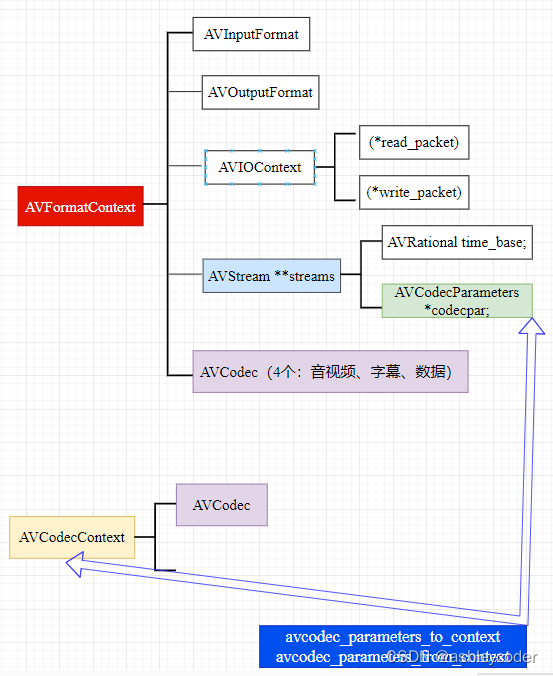

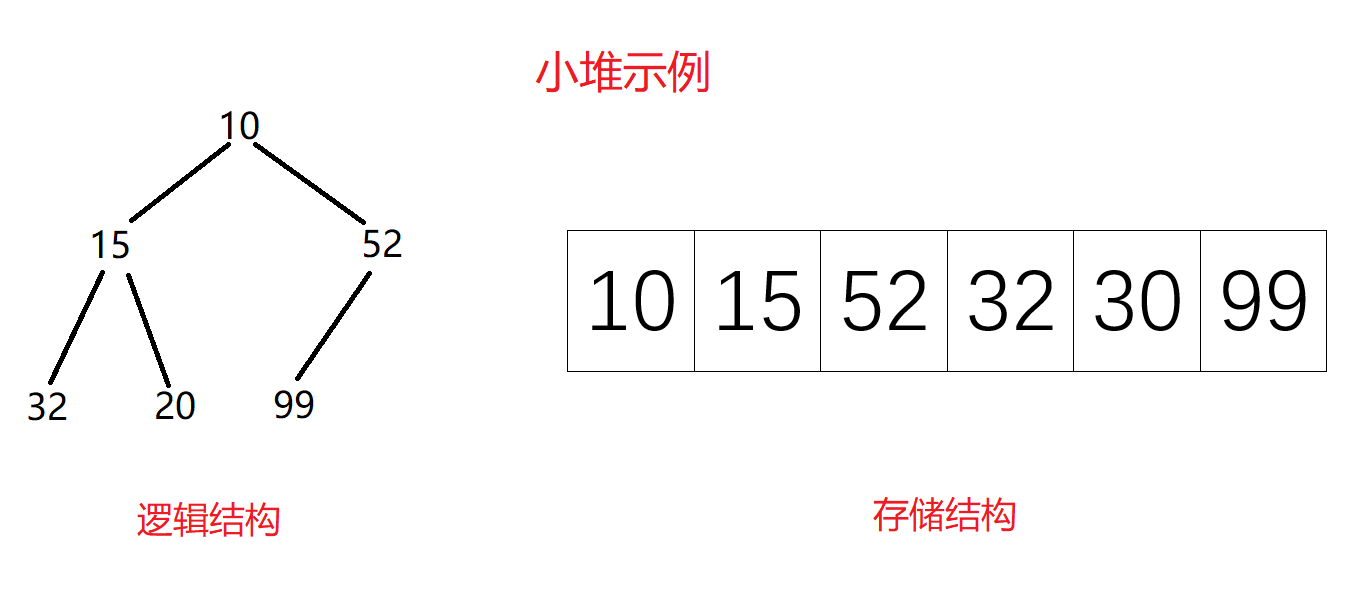

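II. Related structs, for easy recall

1 AVFrame holds uncompressed data, i.e., data after decoding.

AVPacket holds compressed data, i.e., data before decoding.

With that in mind, the function pairs are easy to remember: encoding uses send_frame and receive_packet; decoding uses send_packet and receive_frame.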
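A decoder may hold several packets before it emits the first frame, so the send/receive pair is usually driven as "send one packet, receive until the decoder asks for more, and flush at the end". The sketch below illustrates that control flow with a hypothetical `MockDecoder` (not the FFmpeg API; the names `Status`, `MockDecoder` and `decode_all` are made up for illustration) that delays output by a fixed number of packets.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Hypothetical stand-in for a decoder with reordering delay.
// It only mimics the send/receive contract; packets and frames are plain ints.
enum Status { kOk = 0, kAgain = 1, kEof = 2 };

class MockDecoder {
public:
    explicit MockDecoder(std::size_t delay) : delay_(delay) {}

    // Like avcodec_send_packet: passing nullptr starts flushing.
    Status send_packet(const int* packet) {
        if (packet == nullptr) { flushing_ = true; return kOk; }
        assert(!flushing_ && "no packets may be sent after the flush");
        queue_.push_back(*packet);
        return kOk;
    }

    // Like avcodec_receive_frame: kAgain means "send more input first".
    Status receive_frame(int* frame) {
        const bool ready = flushing_ ? !queue_.empty() : queue_.size() > delay_;
        if (!ready) return flushing_ ? kEof : kAgain;
        *frame = queue_.front();
        queue_.pop_front();
        return kOk;
    }

private:
    std::size_t delay_;
    bool flushing_ = false;
    std::deque<int> queue_;
};

// Send every packet, drain after each send, then flush and drain until EOF.
std::vector<int> decode_all(MockDecoder& dec, const std::vector<int>& packets) {
    std::vector<int> frames;
    int frame = 0;
    for (const int& pkt : packets) {
        dec.send_packet(&pkt);
        while (dec.receive_frame(&frame) == kOk) frames.push_back(frame);
    }
    dec.send_packet(nullptr);  // flush: no more input is coming
    while (dec.receive_frame(&frame) == kOk) frames.push_back(frame);
    return frames;
}
```

With a delay of 2 and five packets, the frames held back inside the mock only come out after the flush, which is why skipping the flush step loses the tail of a stream.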

2 Two contexts: Format (the container file or stream URL) and Codec (a single coding format such as H264 or AAC, i.e., the encoder/decoder implementation)

AVFormatContext* m_pInputFormatCtx;

AVCodecContext* m_pVideoDecodeCodecCtx;

Functions used with m_pInputFormatCtx: avformat_open_input, avformat_find_stream_info, av_read_frame, avformat_close_input.

Functions used with m_pVideoDecodeCodecCtx: avcodec_find_decoder, avcodec_open2, avcodec_send_packet, avcodec_receive_frame.

3 The AVCodec struct

const AVCodec ff_h264_decoder = {

.name = "h264",

.long_name = NULL_IF_CONFIG_SMALL("H.264 / AVC / MPEG-4 AVC / MPEG-4 part 10"),

.type = AVMEDIA_TYPE_VIDEO,

.id = AV_CODEC_ID_H264,

.priv_data_size = sizeof(H264Context),

.init = h264_decode_init,

.close = h264_decode_end,

.decode = h264_decode_frame,

……

}

static const AVCodec * const codec_list[] = {

...

&ff_h264_decoder,

...

};

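III. Compatibility issues

1 File names containing Chinese characters must be converted to UTF-8 before being passed to FFmpeg.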

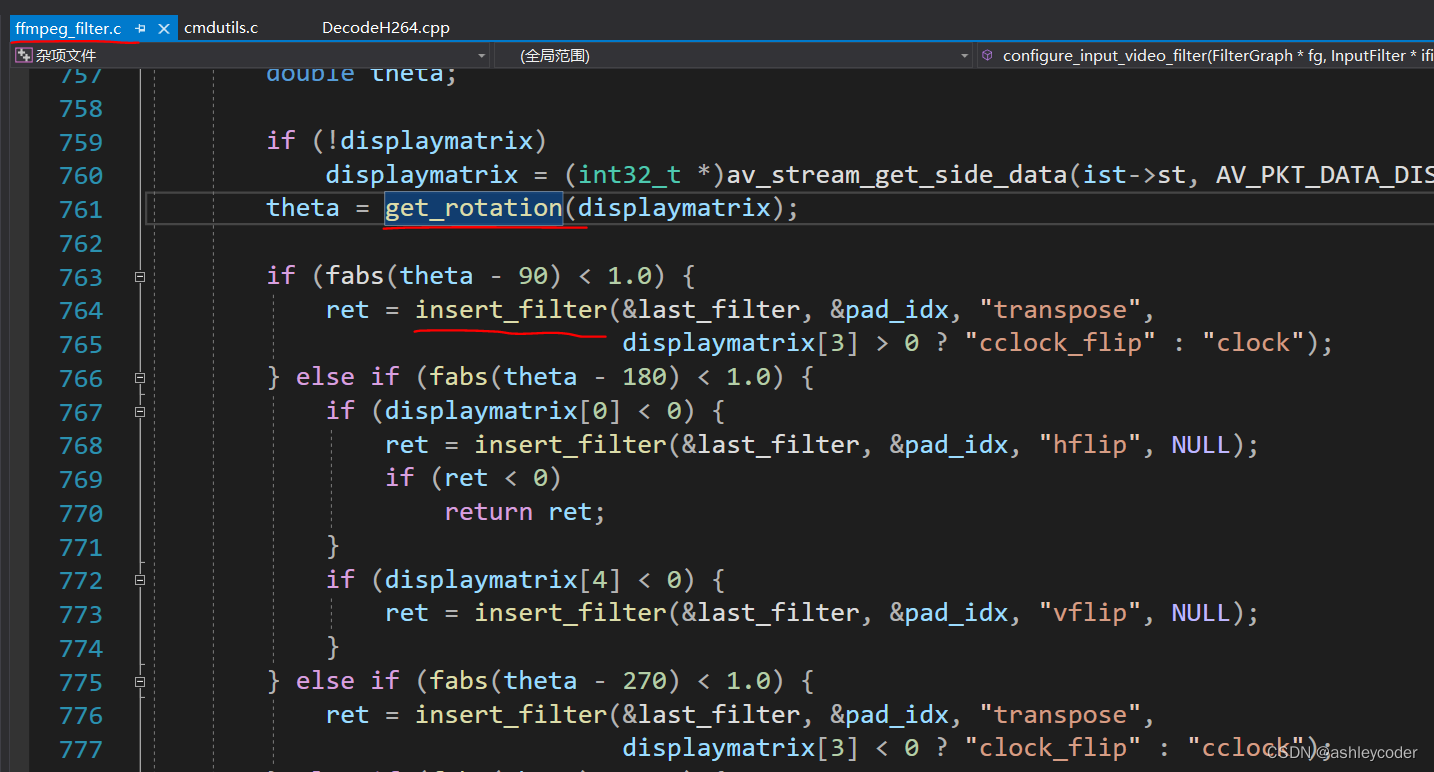

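2 Playing portrait video (the kind recorded on a phone): read the rotation angle.

The screenshot shows how ffmpeg handles it: after obtaining the angle, it uses a filter to rotate the picture.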
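As a small illustration of the angle handling, the sketch below repeats the normalization used in get_rotation and then maps the result to a correction. The helper names `normalize_rotation` and `pick_rotation_filter` are hypothetical, and the mapping to the transpose, hflip/vflip and rotate filters is an assumption based on how the ffmpeg command-line tool is commonly described, not a copy of its source.

```cpp
#include <cassert>
#include <cmath>
#include <string>

// Fold an angle in degrees into roughly [-0.9, 359.1): the 0.9-degree tolerance
// keeps values just below a full turn (e.g. 359.5) close to 0 instead of 360.
double normalize_rotation(double theta) {
    return theta - 360.0 * std::floor(theta / 360.0 + 0.9 / 360.0);
}

// Hypothetical helper: name the filter chain a player could apply.
// An empty string means the picture is already upright.
std::string pick_rotation_filter(double theta) {
    assert(std::isfinite(theta));
    if (std::fabs(theta - 90.0) < 1.0)  return "transpose=clock";
    if (std::fabs(theta - 180.0) < 1.0) return "hflip,vflip";
    if (std::fabs(theta - 270.0) < 1.0) return "transpose=cclock";
    if (std::fabs(theta) > 1.0)         return "rotate";  // arbitrary angle
    return "";
}
```

For the side data shown earlier ("rotation of -90.00 degrees"), get_rotation negates the value, so the normalized angle is 90 and the sketch picks a clockwise transpose.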

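3 Unusual widths, i.e., widths that are not a multiple of the buffer alignment (16, 32 or 64). For example, with 544x960 the width 544 is a multiple of 32 but not of 64, so with 64-byte alignment each row can be padded beyond 544 bytes. Use linesize[i] as the row stride instead of assuming it equals the width.

linesize depends on the alignment the buffer was allocated with, which is chosen to suit the CPU's SIMD instructions; it is typically a multiple of 16, 32 or 64 bytes.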
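The stride rule can be shown without FFmpeg at all. In this minimal sketch, `stride` plays the role of AVFrame::linesize[i]; the function names `pack_plane` and `chroma_dim` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Copy one image plane row by row, dropping the padding at the end of each row.
// `width` and `height` are the visible size of the plane in bytes and rows.
std::vector<std::uint8_t> pack_plane(const std::uint8_t* src, int stride, int width, int height) {
    assert(src != nullptr && width > 0 && height > 0 && stride >= width);
    std::vector<std::uint8_t> out;
    out.reserve(static_cast<std::size_t>(width) * height);
    for (int y = 0; y < height; ++y) {
        const std::uint8_t* row = src + static_cast<std::size_t>(y) * stride;
        out.insert(out.end(), row, row + width);  // copy only the visible bytes
    }
    return out;
}

// Chroma planes of a 4:2:0 picture are half size, rounded up for odd dimensions.
inline int chroma_dim(int luma_dim) { return (luma_dim + 1) / 2; }
```

Copying `width * height` bytes in one go is only equivalent when `stride == width`; with padding, the single copy would interleave padding bytes into the picture.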

More to come.

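IV. Why is sws_scale needed? To convert to a uniform format, I420

sws_scale does two things: 1. scales the resolution; 2. converts between different YUV and RGB formats.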

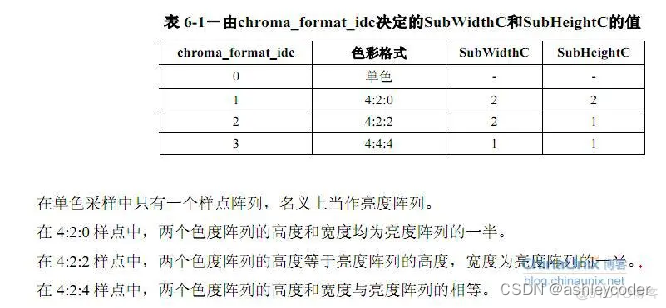

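H.264 records the YUV sampling format used before encoding in chroma_format_idc, in the SPS. If the field is absent, it takes its default value of 1, i.e., YUV 4:2:0.

If the decoded frame is already in the desired format (here 8-bit planar YUV 4:2:0, I420) and no scaling is required, sws_scale is unnecessary.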
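The decision can be written down as a small check. This is a simplified sketch: `PixFmt`, `VideoShape`, `from_chroma_format_idc` and `needs_sws_scale` are hypothetical names, only 8-bit planar layouts are modeled, and real code would compare AVFrame::format, width and height instead.

```cpp
#include <cassert>

// Hypothetical, simplified stand-in for the pixel formats of interest.
enum class PixFmt { YUV420P, YUV422P, YUV444P, GRAY8 };

// Map the H.264 SPS field chroma_format_idc to an 8-bit planar layout.
PixFmt from_chroma_format_idc(int idc) {
    assert(idc >= 0 && idc <= 3);
    switch (idc) {
        case 0:  return PixFmt::GRAY8;    // monochrome
        case 2:  return PixFmt::YUV422P;  // 4:2:2
        case 3:  return PixFmt::YUV444P;  // 4:4:4
        default: return PixFmt::YUV420P;  // 1, also the value inferred when absent
    }
}

struct VideoShape {
    PixFmt fmt;
    int width;
    int height;
};

// Conversion is needed only when the format or the resolution differs.
bool needs_sws_scale(const VideoShape& src, const VideoShape& dst) {
    return src.fmt != dst.fmt || src.width != dst.width || src.height != dst.height;
}
```

When the check returns false, the decoded frame can be written or displayed directly, which saves one full copy per frame.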

avcodec_receive_frame(AVCodecContext *avctx, AVFrame *frame);

frame->format records the YUV type.

By default FFmpeg decodes to the YUV format used before encoding, i.e., m_pVideoDecodeCodecCtx->pix_fmt.

int ff_decode_frame_props(AVCodecContext *avctx, AVFrame *frame)

{

...

frame->format = avctx->pix_fmt;

...

}

V. time_base in different formats

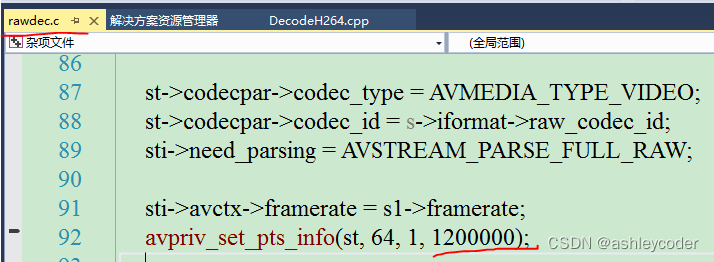

Raw H264: the time_base is 1/1200000.

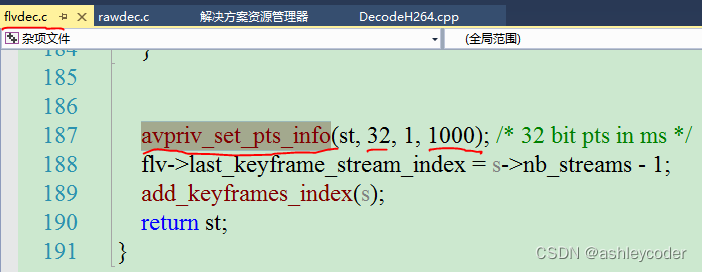

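flv: 1/1000 for both audio and video.

mp4: video is 1/12800; audio is 1/48000 (1 / sample rate). As for where 12800 comes from at 25 fps: assuming the file was written by FFmpeg's MP4 muxer with default settings, the muxer starts from the frame rate and doubles the timescale until it is at least 10000. 25 × 2^9 = 12800, so one frame lasts 512 ticks.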
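The arithmetic behind these numbers is easy to check. The sketch below assumes the doubling rule described for FFmpeg's MP4 muxer (stream time_base of 1/fps, no explicit video timescale set); the function names `mp4_video_timescale` and `pts_to_ms` are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Double the frame-rate-derived timescale until it reaches at least 10000.
// Assumption: this mirrors the muxer's default when the input time_base is 1/fps.
int mp4_video_timescale(int fps) {
    assert(fps > 0);
    int timescale = fps;
    while (timescale < 10000) timescale *= 2;
    return timescale;
}

// Convert a timestamp counted in time_base units (num/den seconds per tick)
// to milliseconds, using integer arithmetic that truncates toward zero.
std::int64_t pts_to_ms(std::int64_t pts, int num, int den) {
    assert(den > 0);
    return pts * 1000 * num / den;
}
```

With these helpers, the second frame of a 25 fps MP4 (pts 512 in 1/12800 units) and the matching FLV timestamp (pts 40 in 1/1000 units) both land on 40 ms, which is the point of carrying a time_base alongside every timestamp.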