Contents

- Ramblings

- Some basics

- CustomPostProcessing.cs

- CustomPostProcessingFeature.cs

- CustomPostProcessingPass.cs

- Example: BSC

- Post-processing shader (BSC)

- Post-processing C# script (BSC)

- Example: ColorBlit

- PostProcessing.hlsl

- ColorBlit2.shader

- ColorBlit.cs

- Other references

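Ramblings

Lately I have been reading up on URP, and it is hard to get started with: versions iterate so quickly that code is often deprecated right after you first meet it, which is awkward, and tutorials for the new APIs are scarce (smile.jpg).

The custom post-processing framework here was published by an expert on Zhihu. The code I post is simply my personal study notes, and there are still parts I do not understand. The original article is: Unity URP14.0 自定义后处理系统.

- This tutorial should suit beginners who nonetheless already know a little about URP and Render Features.

- Most of the code given here is commented.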

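Some basics

The framework has five parts overall. Strictly speaking it should be four, since a Render Feature and its Render Pass usually live in one file, but the author split them apart, which makes five.

They are:

- the post-processing base class, CustomPostProcessing.cs;

- our custom Render Feature, CustomPostProcessingFeature.cs;

- the Render Pass, CustomPostProcessingPass.cs;

- our post-processing effect shader;

- finally, our own concrete post-processing class that inherits from CustomPostProcessing. This is where we have free rein, and it usually has to be tied to our shader.

If you have written a simple Render Feature before, you will know that a Render Feature created from the Unity menu automatically contains a CustomRenderPass as a nested class. The author of this framework separated the two.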

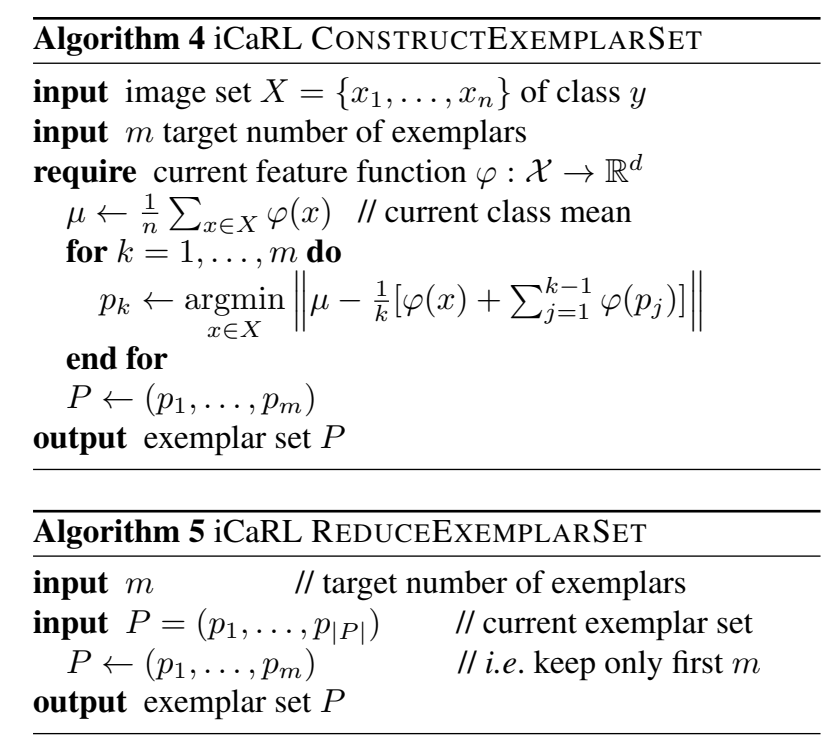

Let's first look at the basic skeleton of a Render Feature (some of the APIs in it are deprecated in URP 14):

using UnityEngine;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

public class MyFirstRenderFeatureTest : ScriptableRendererFeature

{

// static Material blitMaterial = new Material(blitShader);

/// <summary>

/// The main building block of a Render Feature: a render pass.

/// </summary>

class CustomRenderPass : ScriptableRenderPass

{

// Name of the render texture property used by the shader, and its property ID

static string rt_name = "_ExampleRT";

static int rt_ID = Shader.PropertyToID(rt_name);

static string blitShader_Name = "URP/BlitShader";

static Shader blitShader = Shader.Find(blitShader_Name);

static Material blitMat = new Material(blitShader);

/// <summary>

/// Prepares the RenderTexture and other variables that Execute() needs.

/// </summary>

/// <param name="cmd"></param>

/// <param name="renderingData"></param>

public override void OnCameraSetup(CommandBuffer cmd, ref RenderingData renderingData)

{

// Descriptor holding the format settings of the render texture

RenderTextureDescriptor descriptor = new RenderTextureDescriptor(1920, 1080,RenderTextureFormat.Default, 0);

// Then allocate a temporary render texture for it

cmd.GetTemporaryRT(rt_ID, descriptor);

// Needed if you want to draw other things into the RT

ConfigureTarget(rt_ID);

ConfigureClear(ClearFlag.Color, Color.black);

}

/// <summary>

/// Implements what this render pass actually does.

/// </summary>

/// <param name="context"></param>

/// <param name="renderingData"></param>

public override void Execute(ScriptableRenderContext context, ref RenderingData renderingData)

{

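// Get a command buffer from the pool

CommandBuffer cmd = CommandBufferPool.Get("tmpCmd");

cmd.Blit(renderingData.cameraData.renderer.cameraColorTarget, rt_ID, blitMat); // Queue a command: copy pixels from A to B through blitMat

context.ExecuteCommandBuffer(cmd); // We created this cmd ourselves, so we must submit it to the context manually

cmd.Clear();

CommandBufferPool.Release(cmd); // A buffer taken from the pool goes back to the pool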

}

/// <summary>

/// Releases what was allocated in OnCameraSetup(), in particular the temporary render texture.

/// </summary>

/// <param name="cmd"></param>

public override void OnCameraCleanup(CommandBuffer cmd)

{

cmd.ReleaseTemporaryRT(rt_ID);

}

}

/// <summary>

/// The render pass instance owned by this feature.

/// </summary>

CustomRenderPass m_ScriptablePass;

/// <summary>

/// Called by the render feature to create the render pass declared above and to decide when that pass runs (not necessarily called every frame).

/// </summary>

public override void Create()

{

m_ScriptablePass = new CustomRenderPass();

// renderPassEvent decides when m_ScriptablePass is executed

m_ScriptablePass.renderPassEvent = RenderPassEvent.AfterRenderingPostProcessing;

}

/// <summary>

/// Adds the render pass created in Create() to the render pipeline (called every frame).

/// </summary>

/// <param name="renderer"></param>

/// <param name="renderingData"></param>

public override void AddRenderPasses(ScriptableRenderer renderer, ref RenderingData renderingData)

{

renderer.EnqueuePass(m_ScriptablePass);

}

}

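As you can see, the outermost type in this script is the MyFirstRenderFeatureTest class, which inherits from ScriptableRendererFeature.

- MyFirstRenderFeatureTest contains a CustomRenderPass class and a field of that pass type.

- CustomRenderPass inherits from ScriptableRenderPass and overrides OnCameraSetup, Execute, and OnCameraCleanup. Only Execute is abstract and must be implemented; the other two are optional virtual methods. See the code comments for what each one does.

- It has a Create method, which the render feature uses to instantiate the declared render pass and to decide when that pass runs (it is not necessarily called every frame).

- It has an AddRenderPasses method, which adds the render pass created in Create to the render pipeline (called every frame).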

CustomPostProcessing.cs

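This is the base class of all our custom post-processing effects. In other words, any post-processing C# script you create has to inherit from it.

Unity's built-in URP post-processing cannot be extended by default, so CustomPostProcessing.cs does a little work to make extension possible: it inherits from the VolumeComponent class and implements the IPostProcessComponent interface. These two are the basis for adding our own effects to Unity's global Volume post-processing. Of course, this is only the first step; we are not there yet.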

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using System;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

// Injection points for post-processing effects; four for now

public enum CustomPostProcessingInjectionPoint{

AfterOpaque,

AfterSkybox,

BeforePostProcess,

AfterPostProcess

}

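// This is really the base class for custom post-processing; a name like MyVolumePostProcessing might suit it better

// Base class for custom post-processing (rendering may create temporary RTs, so it also implements IDisposable)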

public abstract class CustomPostProcessing : VolumeComponent, IPostProcessComponent, IDisposable

{

// Injection point

public virtual CustomPostProcessingInjectionPoint InjectionPoint => CustomPostProcessingInjectionPoint.AfterPostProcess;

// Order within the injection point

public virtual int OrderInInjectionPoint => 0;

#region IPostProcessComponent

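// Returns whether this post-processing effect is currently active

public abstract bool IsActive();

// Not sure what this is for, but Bloom.cs returns false here, so just copy that

public virtual bool IsTileCompatible() => false;

// Configures this post-processing effect

public abstract void Setup();

// Runs when the camera is set up (the name is our own choice; it is not the OnCameraSetup override of a render pass)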

public virtual void OnCameraSetup(CommandBuffer cmd, ref RenderingData renderingData){

}

#endregion

// Performs the rendering

public abstract void Render(CommandBuffer cmd, ref RenderingData renderingData, RTHandle source, RTHandle destination);

#region IDisposable

public void Dispose(){

Dispose(true);

GC.SuppressFinalize(this);

}

public virtual void Dispose(bool disposing){

}

#endregion

}

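In this class we define some properties and methods in preparation for the effects we write later.

The file also defines an enum, CustomPostProcessingInjectionPoint. It simply lists the stages of the render pipeline at which we may want to insert our post-processing; for now there are just four.

I have not fully understood the order within an injection point yet. Judging from CustomPostProcessingFeature.cs, OrderInInjectionPoint is the sort key for effects that share the same injection point, with lower values running first.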
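To make the role of the two properties concrete, here is a minimal sketch in plain C# with no Unity dependency. It assumes that effects are first filtered by injection point and then sorted by their order value, which is what the feature's Create method does with Where and OrderBy. The types `Effect` and `InjectionPoint` and the effect names are hypothetical stand-ins, not part of the framework.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for CustomPostProcessingInjectionPoint.
public enum InjectionPoint { AfterOpaque, AfterSkybox, BeforePostProcess, AfterPostProcess }

// Hypothetical stand-in for a CustomPostProcessing subclass (no Unity types involved).
public sealed class Effect
{
    public string Name { get; }
    public InjectionPoint Point { get; }
    public int Order { get; }

    public Effect(string name, InjectionPoint point, int order)
    {
        Name = name;
        Point = point;
        Order = order;
    }
}

public static class InjectionOrderDemo
{
    // Returns the names of the effects at one injection point, lowest order first.
    public static List<string> EffectsAt(IEnumerable<Effect> effects, InjectionPoint point) =>
        effects.Where(e => e.Point == point)
               .OrderBy(e => e.Order)
               .Select(e => e.Name)
               .ToList();

    public static void Main()
    {
        var effects = new List<Effect>
        {
            new Effect("Vignette",  InjectionPoint.AfterPostProcess,  1),
            new Effect("ColorBlit", InjectionPoint.BeforePostProcess, 0),
            new Effect("Outline",   InjectionPoint.AfterPostProcess,  0),
        };

        // Prints "Outline, Vignette": same injection point, sorted by Order.
        Console.WriteLine(string.Join(", ", EffectsAt(effects, InjectionPoint.AfterPostProcess)));
    }
}
```

So two effects that both return AfterPostProcess only need different order values to get a fixed relative order; effects at different injection points never compete with each other.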

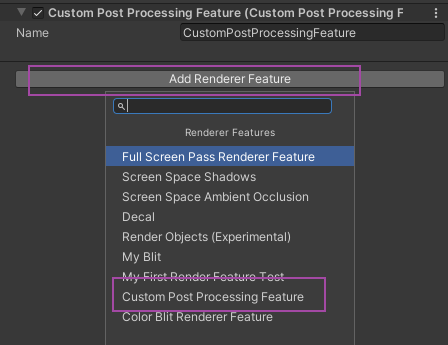

CustomPostProcessingFeature.cs

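This script is the Render Feature we have been waiting for. Its main job is to create and initialize the render passes.

Here we define a list, mCustomPostProcessings, which stores the instances of all our post-processing classes. From it we later pick out the instances that belong to a given injection point, for example those at AfterOpaque out of the four injection points AfterOpaque, AfterSkybox, BeforePostProcess, and AfterPostProcess, and then create a pass for them.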

using System.Collections.Generic;

using System.Linq;

using UnityEngine;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

// Custom Render Feature; once implemented it shows up in the Add Renderer Feature menu automatically

public class CustomPostProcessingFeature : ScriptableRendererFeature

{

private CustomPostProcessingPass mAfterOpaquePass;

private CustomPostProcessingPass mAfterSkyboxPass;

private CustomPostProcessingPass mBeforePostProcessPass;

private CustomPostProcessingPass mAfterPostProcessPass;

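// List of all post-processing base class instances

private List<CustomPostProcessing> mCustomPostProcessings;

// The most important method; it creates the render passes

// Gets all CustomPostProcessing instances, sorts them per injection point, puts them in the matching render pass, and sets each pass event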

public override void Create()

{

// Get the VolumeStack

var stack = VolumeManager.instance.stack;

// Get all CustomPostProcessing instances

mCustomPostProcessings = VolumeManager.instance.baseComponentTypeArray

.Where(t => t.IsSubclassOf(typeof(CustomPostProcessing))) // Keep every VolumeComponent-derived type that is a CustomPostProcessing, whether or not it is in a Volume or active

.Select(t => stack.GetComponent(t) as CustomPostProcessing) // Turn each type into its instance

.ToList(); // Convert to a List

#region Initialize the render passes for the different injection points

#region Initialize the pass that runs after opaque rendering

// Find the CustomPostProcessing effects that render after opaques

var afterOpaqueCPPs = mCustomPostProcessings

.Where(c => c.InjectionPoint == CustomPostProcessingInjectionPoint.AfterOpaque) // Keep the instances whose injection point is after opaque rendering

.OrderBy(c => c.OrderInInjectionPoint) // Sort by order

.ToList(); // Convert to a List

// Create the CustomPostProcessingPass

mAfterOpaquePass = new CustomPostProcessingPass("Custom Post-Process after Opaque", afterOpaqueCPPs);

// Set when the pass runs

mAfterOpaquePass.renderPassEvent = RenderPassEvent.AfterRenderingOpaques;

#endregion

#region Initialize the pass that runs after skybox rendering

var afterTransAndSkyboxCPPs = mCustomPostProcessings

.Where(c => c.InjectionPoint == CustomPostProcessingInjectionPoint.AfterSkybox)

.OrderBy(c => c.OrderInInjectionPoint)

.ToList();

mAfterSkyboxPass = new CustomPostProcessingPass("Custom Post-Process after transparent and skybox", afterTransAndSkyboxCPPs);

mAfterSkyboxPass.renderPassEvent = RenderPassEvent.AfterRenderingSkybox;

#endregion

#region Initialize the pass that runs before built-in post-processing

var beforePostProcessCPPs = mCustomPostProcessings

.Where(c => c.InjectionPoint == CustomPostProcessingInjectionPoint.BeforePostProcess)

.OrderBy(c => c.OrderInInjectionPoint)

.ToList();

mBeforePostProcessPass = new CustomPostProcessingPass("Custom Post-Process before PostProcess", beforePostProcessCPPs);

mBeforePostProcessPass.renderPassEvent = RenderPassEvent.BeforeRenderingPostProcessing;

#endregion

#region Initialize the pass that runs after built-in post-processing

var afterPostProcessCPPs = mCustomPostProcessings

.Where(c => c.InjectionPoint == CustomPostProcessingInjectionPoint.AfterPostProcess)

.OrderBy(c => c.OrderInInjectionPoint)

.ToList();

mAfterPostProcessPass = new CustomPostProcessingPass("Custom Post-Process after PostProcess", afterPostProcessCPPs);

mAfterPostProcessPass.renderPassEvent = RenderPassEvent.AfterRenderingPostProcessing;

#endregion

#endregion

}

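// Called when a renderer is set up for each camera

// Injects the render passes of the different injection points into the renderer (adds them to the render queue)

// Some online material configures the pass's source and destination RT in this method, with something like RenderPass.Setup(renderer.cameraColorTargetHandle, renderer.cameraColorTargetHandle).

// In URP 14.0 this raises an error saying renderer.cameraColorTargetHandle can only be accessed inside a ScriptableRenderPass subclass. See the reference links at the end for details.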

public override void AddRenderPasses(ScriptableRenderer renderer, ref RenderingData renderingData)

{

// Only when the camera being rendered has post-processing enabled

if(renderingData.cameraData.postProcessEnabled){

// Set up each render pass

// and enqueue the passes that have active effects

if(mAfterOpaquePass.SetupCustomPostProcessing()){

mAfterOpaquePass.ConfigureInput(ScriptableRenderPassInput.Color);

renderer.EnqueuePass(mAfterOpaquePass);

}

if(mAfterSkyboxPass.SetupCustomPostProcessing()){

mAfterSkyboxPass.ConfigureInput(ScriptableRenderPassInput.Color);

renderer.EnqueuePass(mAfterSkyboxPass);

}

if(mBeforePostProcessPass.SetupCustomPostProcessing()){

mBeforePostProcessPass.ConfigureInput(ScriptableRenderPassInput.Color);

renderer.EnqueuePass(mBeforePostProcessPass);

}

if(mAfterPostProcessPass.SetupCustomPostProcessing()){

mAfterPostProcessPass.ConfigureInput(ScriptableRenderPassInput.Color);

renderer.EnqueuePass(mAfterPostProcessPass);

}

}

}

protected override void Dispose(bool disposing)

{

base.Dispose(disposing);

// Release the RTHandles owned by each pass

mAfterOpaquePass?.Dispose();

mAfterSkyboxPass?.Dispose();

mBeforePostProcessPass?.Dispose();

mAfterPostProcessPass?.Dispose();

if(disposing && mCustomPostProcessings != null){

foreach(var item in mCustomPostProcessings){

item.Dispose();

}

}

}

}

CustomPostProcessingPass.cs

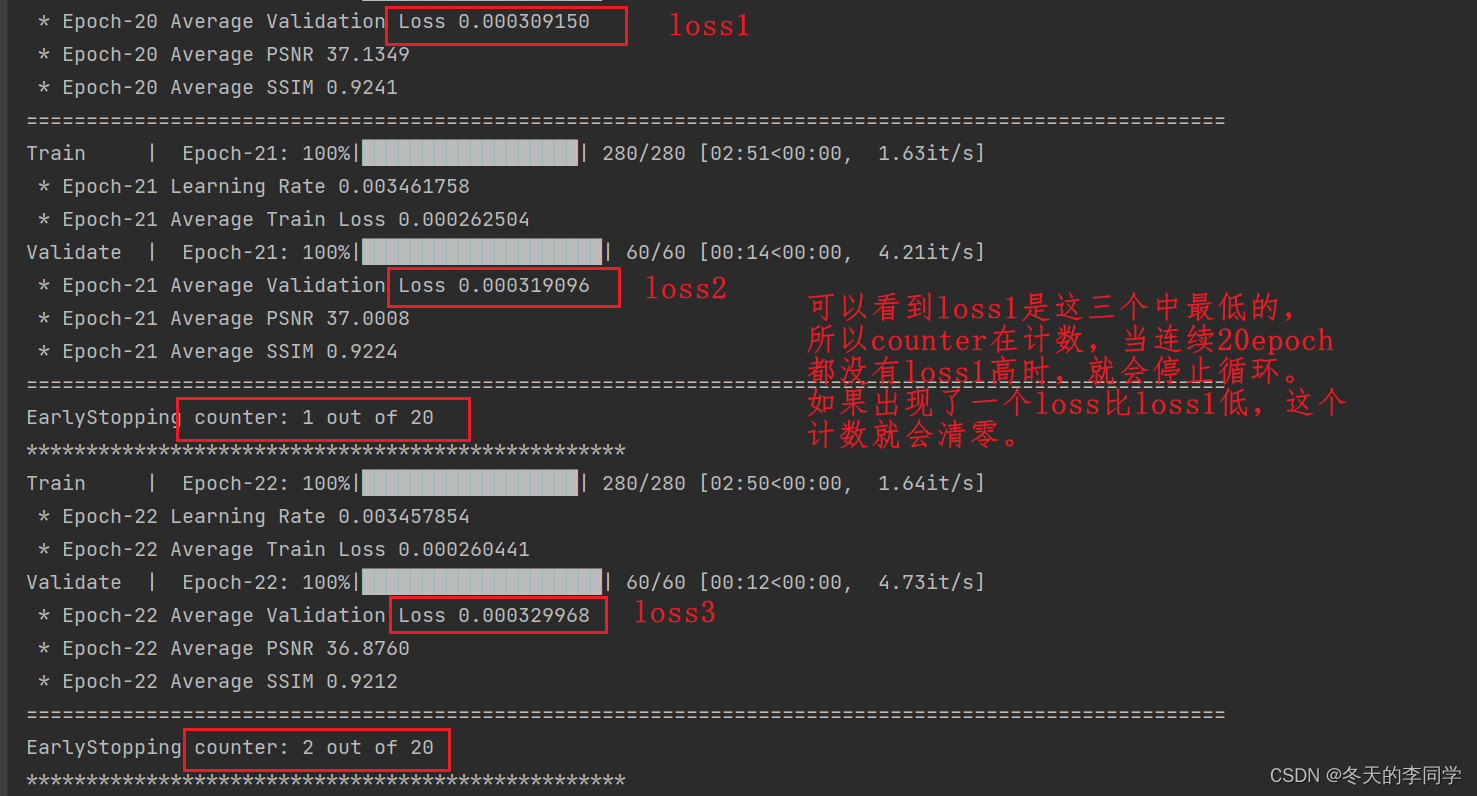

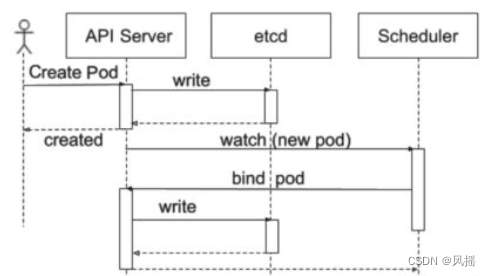

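Finally, our render pass class. It also needs a list of custom post-processing effects so that we can later pick out the ones that are currently active. The number of currently active effects determines how many stages the pass adds.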
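The pass stores indices of the active effects rather than the effects themselves, which lets it look up the matching profiling sampler by the same index. The sketch below shows that idea in plain C# under stated assumptions: the bool array stands in for calling IsActive() on each effect, and `samplerNames` is a hypothetical stand-in for the list of ProfilingSampler objects.

```csharp
using System;
using System.Collections.Generic;

public static class ActiveIndexDemo
{
    // Collects the indices of the active effects into activeIndices and
    // reports whether there is at least one, i.e. whether the pass is worth enqueuing.
    public static bool Setup(IReadOnlyList<bool> isActive, List<int> activeIndices)
    {
        activeIndices.Clear();
        for (int i = 0; i < isActive.Count; i++)
        {
            if (isActive[i]) activeIndices.Add(i);
        }
        return activeIndices.Count != 0;
    }

    public static void Main()
    {
        // Parallel arrays: entry i of each array describes the same effect.
        string[] samplerNames = { "BSC_Blit", "ColorBlit", "Outline" };
        bool[] isActive = { true, false, true };
        var active = new List<int>(isActive.Length);

        if (Setup(isActive, active))
        {
            // Prints "BSC_Blit" and then "Outline".
            foreach (int i in active) Console.WriteLine(samplerNames[i]);
        }
    }
}
```

When Setup returns false, the feature simply skips EnqueuePass for that injection point, so an injection point with no active effect costs nothing at render time.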

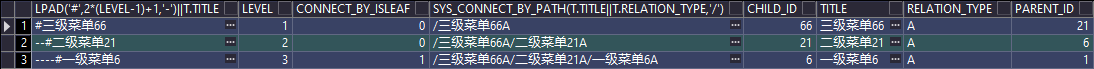

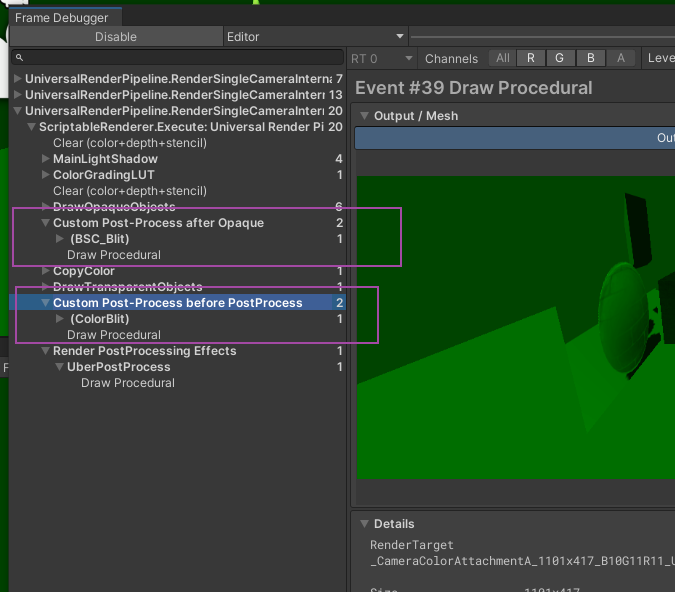

For example, the two boxed stages in the screenshot are the ones added by our own post-processing.

using System;

using System.Collections.Generic;

using System.Linq;

using UnityEngine;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

public class CustomPostProcessingPass : ScriptableRenderPass

{

// List of all custom post-processing effects for this pass

private List<CustomPostProcessing> mCustomPostProcessings;

// Indices of the currently active effects

private List<int> mActiveCustomPostProcessingIndex;

// One ProfilingSampler per effect; these are the names shown in the Frame Debugger

private string mProfilerTag;

private List<ProfilingSampler> mProfilingSamplers;

// RT handles

private RTHandle mSourceRT;

private RTHandle mDesRT;

private RTHandle mTempRT0;

private RTHandle mTempRT1;

private string mTempRT0Name => "_TemporaryRenderTexture0";

private string mTempRT1Name => "_TemporaryRenderTexture1";

// Pass constructor; all arguments come from the Feature

public CustomPostProcessingPass(string profilerTag, List<CustomPostProcessing> customPostProcessings){

mProfilerTag = profilerTag; // The name our extra render pass gets in the Frame Debugger

mCustomPostProcessings = customPostProcessings;

mActiveCustomPostProcessingIndex = new List<int>(customPostProcessings.Count);

// Build one profiling sampler per custom post-processing effect

mProfilingSamplers = customPostProcessings.Select(c => new ProfilingSampler(c.ToString())).ToList();

// URP 14.0 (or earlier) dropped the old RenderTargetHandle in favor of RTHandle everywhere; the old Init became RTHandles.Alloc

//mTempRT0 = RTHandles.Alloc(mTempRT0Name, name:mTempRT0Name);

//mTempRT1 = RTHandles.Alloc(mTempRT1Name, name:mTempRT1Name);

}

// Runs when the camera is set up

public override void OnCameraSetup(CommandBuffer cmd, ref RenderingData renderingData){

var descriptor = renderingData.cameraData.cameraTargetDescriptor;

descriptor.msaaSamples = 1;

descriptor.depthBufferBits = 0;

// Allocate the temporary textures. TODO: still unsure about _CameraColorAttachmentA and _CameraColorAttachmentB

RenderingUtils.ReAllocateIfNeeded(ref mTempRT0, descriptor, name:mTempRT0Name);

RenderingUtils.ReAllocateIfNeeded(ref mTempRT1, descriptor, name:mTempRT1Name);

foreach(var i in mActiveCustomPostProcessingIndex){

mCustomPostProcessings[i].OnCameraSetup(cmd, ref renderingData);

}

}

// Collects the indices of the active effects and returns whether there is at least one

public bool SetupCustomPostProcessing(){

mActiveCustomPostProcessingIndex.Clear(); // The list's capacity equals mCustomPostProcessings.Count; its Count is the number of active effects

for(int i = 0; i < mCustomPostProcessings.Count; i++){

mCustomPostProcessings[i].Setup();

if(mCustomPostProcessings[i].IsActive()){

mActiveCustomPostProcessingIndex.Add(i);

}

}

return (mActiveCustomPostProcessingIndex.Count != 0);

}

// Implements the rendering logic

public override void Execute(ScriptableRenderContext context, ref RenderingData renderingData)

{

// Initialize the CommandBuffer

CommandBuffer cmd = CommandBufferPool.Get(mProfilerTag);

context.ExecuteCommandBuffer(cmd);

cmd.Clear();

// Get the camera descriptor

var descriptor = renderingData.cameraData.cameraTargetDescriptor;

descriptor.msaaSamples = 1;

descriptor.depthBufferBits = 0;

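// Initialize the temporary RTs

bool rt1Used = false;

// 1. Allocate the temp0 temporary texture

//cmd.GetTemporaryRT(Shader.PropertyToID(mTempRT0.name), descriptor);

// mTempRT0 = RTHandles.Alloc(mTempRT0.name);

RenderingUtils.ReAllocateIfNeeded(ref mTempRT0, descriptor, name:mTempRT0Name);

// 2. Set the source and destination RT to the RT of this render; done in Execute, with special handling for the after-post-process injection point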

mDesRT = renderingData.cameraData.renderer.cameraColorTargetHandle;

mSourceRT = renderingData.cameraData.renderer.cameraColorTargetHandle;

// Run each effect's Render method

// 3. With only one effect, render it directly from mSourceRT into mTempRT0 (the temporary texture)

if(mActiveCustomPostProcessingIndex.Count == 1){

int index = mActiveCustomPostProcessingIndex[0];

using(new ProfilingScope(cmd, mProfilingSamplers[index])){

mCustomPostProcessings[index].Render(cmd, ref renderingData,mSourceRT, mTempRT0);

}

}

else{

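// With several effects, blit back and forth between the two RTs. They are swapped at the end of every iteration, so the final result always ends up in mTempRT0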

RenderingUtils.ReAllocateIfNeeded(ref mTempRT1, descriptor, name:mTempRT1Name);

//Blitter.BlitCameraTexture(cmd, mSourceRT, mTempRT0);

rt1Used = true;

Blit(cmd, mSourceRT, mTempRT0);

for(int i = 0; i < mActiveCustomPostProcessingIndex.Count; i++){

int index = mActiveCustomPostProcessingIndex[i];

var customProcessing = mCustomPostProcessings[index];

using(new ProfilingScope(cmd, mProfilingSamplers[index])){

customProcessing.Render(cmd, ref renderingData, mTempRT0, mTempRT1);

}

CoreUtils.Swap(ref mTempRT0, ref mTempRT1);

}

}

Blitter.BlitCameraTexture(cmd,mTempRT0, mDesRT);

// Nothing to release per frame: mTempRT0 and mTempRT1 are RTHandles allocated by RenderingUtils.ReAllocateIfNeeded, not temporary RTs from cmd.GetTemporaryRT, and they are released in Dispose()

context.ExecuteCommandBuffer(cmd);

CommandBufferPool.Release(cmd);

}

// Runs when the camera is cleaned up

public override void OnCameraCleanup(CommandBuffer cmd)

{

mDesRT = null;

mSourceRT = null;

}

public void Dispose(){

mTempRT0?.Release();

mTempRT1?.Release();

}

}

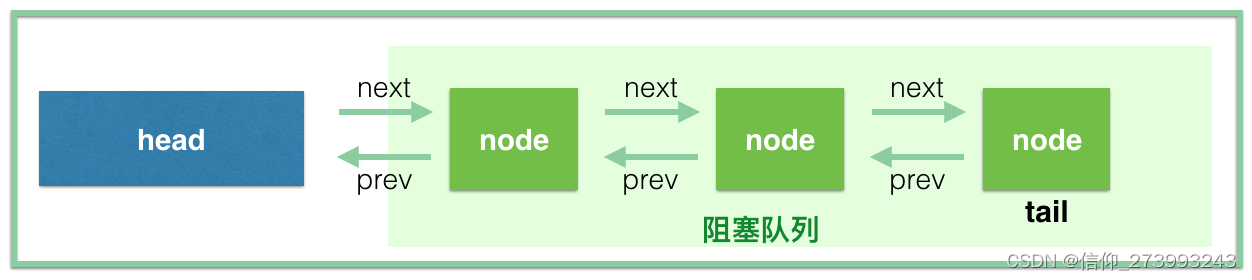

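The render pass also breaks down into a few parts:

- The constructor, which builds the pass; all its arguments come from the render feature above.

- OnCameraSetup, which runs when the camera is set up; we initialize things here. The TODO comment about _CameraColorAttachmentA and _CameraColorAttachmentB comes from the original author, who notes that when a custom effect's injection point is set to after post-processing, an extra Final Blit stage appears whose input RT is _CameraColorAttachmentB rather than _CameraColorAttachmentA. I have not quite understood how that is handled.

- Execute, which implements the rendering logic, that is, what this render pass actually does. Quoting the original article's explanation:

    - Allocate the temporary texture.

    - Set both the source texture mSourceRT and the destination texture mDesRT to the camera color target handle from the rendering data (distinguishing whether there is a Final Blit).

    - With only one effect, render it directly from mSourceRT into mTempRT0.

    - With several effects, render them one by one back and forth between mTempRT0 and mTempRT1. The two are swapped at the end of every iteration, so the final result always ends up in mTempRT0.

    - Use Blitter.BlitCameraTexture to copy the result in mTempRT0 to the destination texture mDesRT.

- OnCameraCleanup, which runs when the camera is cleaned up.

- Dispose, which releases resources.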
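The back-and-forth rendering in Execute is easier to follow without any GPU types. The sketch below is a plain C# simulation, not the pass itself: `FakeTarget` is a hypothetical stand-in for an RTHandle, and "rendering" just appends the effect name to a string. It uses the same loop for any number of effects, whereas the real pass takes a shortcut when there is exactly one.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for a render target; Content records what was drawn into it.
public sealed class FakeTarget
{
    public string Name;
    public string Content = "";

    public FakeTarget(string name) { Name = name; }
}

public static class PingPongDemo
{
    // Mirrors the control flow of Execute: copy the source into temp0, then for each
    // effect render temp0 -> temp1 and swap, so the newest result is always in temp0.
    public static string Run(string cameraColor, IReadOnlyList<string> activeEffects)
    {
        var temp0 = new FakeTarget("temp0");
        var temp1 = new FakeTarget("temp1");

        temp0.Content = cameraColor;                        // Blit(source, temp0)
        foreach (string effect in activeEffects)
        {
            temp1.Content = temp0.Content + " > " + effect; // Render(temp0, temp1)
            (temp0, temp1) = (temp1, temp0);                // same effect as CoreUtils.Swap
        }
        return temp0.Content;                               // what is blitted back to the camera target
    }

    public static void Main()
    {
        // Prints "camera > BSC > ColorBlit"
        Console.WriteLine(Run("camera", new[] { "BSC", "ColorBlit" }));
    }
}
```

Swapping the references instead of copying pixels is why two temporary targets are enough for any number of effects: each effect reads the previous result and writes into the target that is free.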

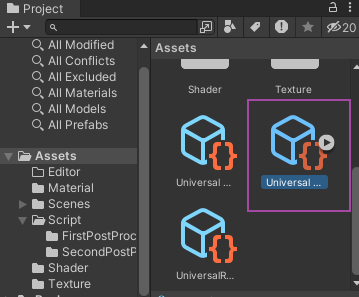

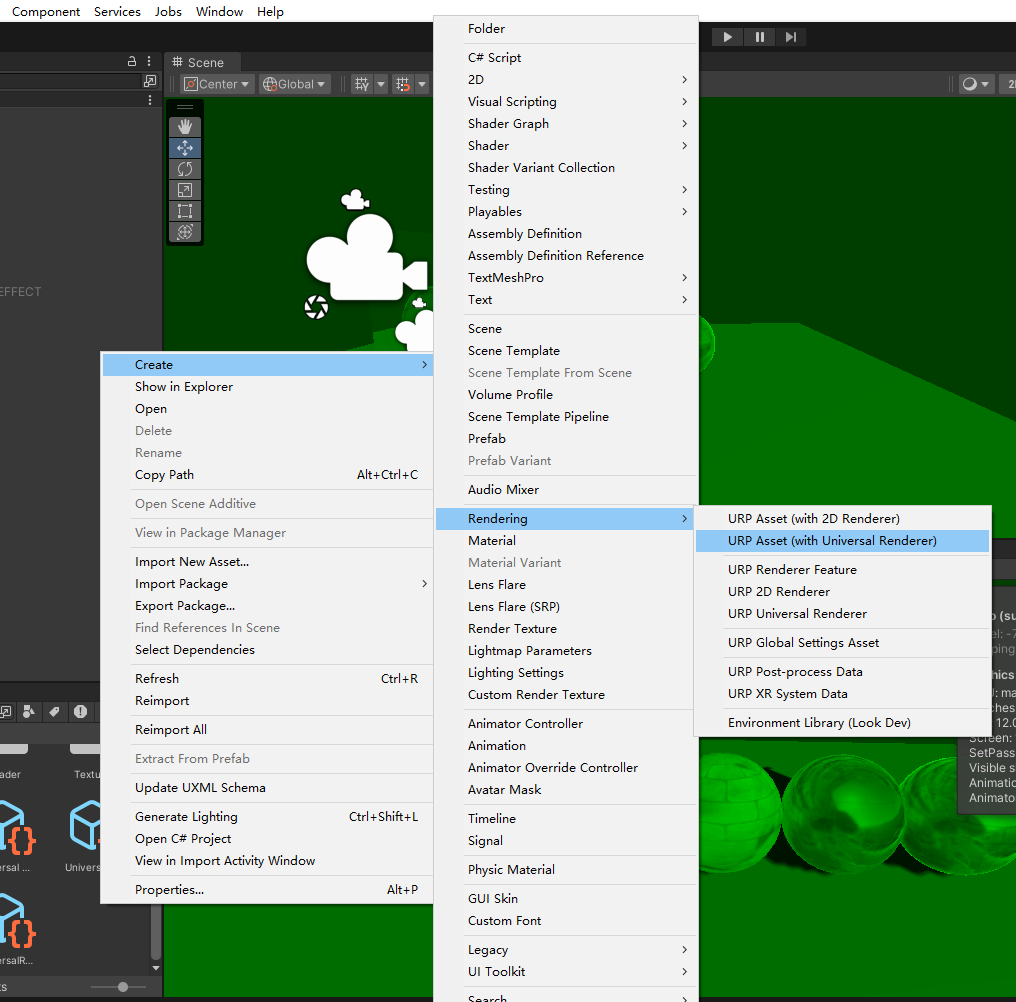

Once the render feature and render pass are written, the feature can be added in the Unity editor. If you do not have a Universal Render Pipeline Asset yet, create one first.

Example: BSC

Post-processing shader (BSC)

Shader "URP/12_BrightnessSaturationAndContrast"

{

Properties

{

_MainTex ("Texture", 2D) = "white" {}

_Brightness("Brightness",Float)=1.5

_Saturation("Saturation", Float) = 1.5

_Contrast("Contrast", Float) = 1.5

}

SubShader

{

Tags {

"RenderPipeline" = "UniversalPipeline"

"RenderType"="Opaque"

}

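// Standard state for post-processing shaders; it keeps transparent objects in the scene from rendering incorrectly

// Note that these states do not decide when the effect runs; that is controlled by the RenderPassEvent of the pass that uses the shader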

ZTest Always

Cull Off

ZWrite Off

HLSLINCLUDE

#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Core.hlsl"

#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Lighting.hlsl"

CBUFFER_START(UnityPerMaterial)

float4 _MainTex_ST;

half _Brightness;

half _Saturation;

half _Contrast;

CBUFFER_END

// The next two lines are the equivalent of sampler2D _MainTex;

TEXTURE2D(_MainTex);

SAMPLER(sampler_MainTex);

struct a2v{

float4 positionOS:POSITION;

float2 texcoord:TEXCOORD;

};

struct v2f{

float4 positionCS:SV_POSITION;

float2 texcoord:TEXCOORD;

};

ENDHLSL

Pass

{

Name "BSC_Pass"

Tags{

"LightMode" = "UniversalForward"

}

HLSLPROGRAM

#pragma vertex vert

#pragma fragment frag

v2f vert (a2v v)

{

v2f o;

//o.vertex = UnityObjectToClipPos(v.vertex);

o.positionCS = TransformObjectToHClip(v.positionOS.xyz); // URP equivalent of the line above

o.texcoord = TRANSFORM_TEX(v.texcoord, _MainTex);

return o;

}

half4 frag (v2f i) : SV_Target

{

// sample the texture

//fixed4 col = tex2D(_MainTex, i.uv);

half4 tex = SAMPLE_TEXTURE2D(_MainTex, sampler_MainTex, i.texcoord); // SAMPLE_TEXTURE2D is the equivalent of the tex2D sampling above

// Brightness: simply multiply the original color by the brightness factor _Brightness

half3 finalColor = tex.rgb * _Brightness;

// Saturation: weight each color channel and sum them to get a color with zero saturation

half luminance = 0.2125 * tex.r + 0.7154 * tex.g + 0.0721 * tex.b;

half3 luminanceColor = half3(luminance,luminance,luminance);

// Interpolate between that color and the current color using _Saturation

finalColor = lerp(luminanceColor, finalColor, _Saturation);

// Contrast: build a color with zero contrast (every channel 0.5)

half3 avgColor = half3(0.5, 0.5, 0.5);

// Interpolate between that color and the current color using _Contrast

finalColor = lerp(avgColor, finalColor, _Contrast);

return half4(finalColor, 1.0);

}

ENDHLSL

}

}

FallBack "Packages/com.unity.render-pipelines.universal/FallbackError"

}
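To check the fragment shader's arithmetic on the CPU, the same three steps can be written in plain C#. This is only a sketch of the math: it uses float instead of half, and the class and method names (`BscDemo`, `Apply`) are hypothetical.

```csharp
using System;

public static class BscDemo
{
    // Same formula as HLSL lerp(a, b, t); t is not clamped, so values above 1 extrapolate.
    static float Lerp(float a, float b, float t) => a + (b - a) * t;

    // Applies brightness, then saturation, then contrast to one RGB color,
    // following the order of operations in the fragment shader.
    public static (float r, float g, float b) Apply(
        float r, float g, float b,
        float brightness, float saturation, float contrast)
    {
        // Brightness: scale every channel.
        float fr = r * brightness, fg = g * brightness, fb = b * brightness;

        // Saturation: blend from the grey of equal luminance (computed from the
        // original color, as in the shader) towards the brightened color.
        float lum = 0.2125f * r + 0.7154f * g + 0.0721f * b;
        fr = Lerp(lum, fr, saturation);
        fg = Lerp(lum, fg, saturation);
        fb = Lerp(lum, fb, saturation);

        // Contrast: blend from mid-grey (0.5) towards the current color.
        fr = Lerp(0.5f, fr, contrast);
        fg = Lerp(0.5f, fg, contrast);
        fb = Lerp(0.5f, fb, contrast);

        return (fr, fg, fb);
    }

    public static void Main()
    {
        // Saturation 0 collapses pure red to a grey of about 0.2125 per channel.
        Console.WriteLine(Apply(1f, 0f, 0f, 1f, 0f, 1f));
        // Contrast 0 maps any input to mid-grey.
        Console.WriteLine(Apply(0.3f, 0.9f, 0.1f, 2f, 1.5f, 0f));
    }
}
```

A factor of 1 leaves the corresponding property unchanged, values below 1 pull the color towards the reference (black, grey of equal luminance, or mid-grey), and values above 1, such as the shader's default of 1.5, push it away from that reference.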

Post-processing C# script (BSC)

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

[VolumeComponentMenu("My Post-Processing/BSC_Blit")]

public class BSC_Blit : CustomPostProcessing

{

public ClampedFloatParameter brightness = new ClampedFloatParameter(1.5f, 0.0f, 10.0f);

public ClampedFloatParameter saturation = new ClampedFloatParameter(1.5f, 0.0f, 10.0f);

public ClampedFloatParameter contrast = new ClampedFloatParameter(1.5f, 0.0f, 10.0f);

private Material material;

private const string mShaderName = "URP/12_BrightnessSaturationAndContrast";

public override CustomPostProcessingInjectionPoint InjectionPoint => CustomPostProcessingInjectionPoint.AfterOpaque;

public override int OrderInInjectionPoint => 0;

public override bool IsActive()

{

return (material != null);

}

// Configures this effect: creates the material

public override void Setup()

{

if(material == null){

material = CoreUtils.CreateEngineMaterial(mShaderName);

}

}

// Renders: sets the material parameters and blits

public override void Render(CommandBuffer cmd, ref RenderingData renderingData, RTHandle source, RTHandle destination)

{

if(material == null)

{

Debug.LogWarning("Material is missing");

return;

}

material.SetFloat("_Brightness", brightness.value);

material.SetFloat("_Saturation", saturation.value);

material.SetFloat("_Contrast", contrast.value);

cmd.Blit(source, destination,material, 0);

}

public override void Dispose(bool disposing)

{

base.Dispose(disposing);

CoreUtils.Destroy(material);

}

}

Example: ColorBlit

This is the original author's example, which also adds an hlsl file.

PostProcessing.hlsl

#ifndef POSTPROCESSING_INCLUDED

#define POSTPROCESSING_INCLUDED

#include "Packages/com.unity.render-pipelines.universal/ShaderLibrary/Core.hlsl"

TEXTURE2D(_MainTex);

SAMPLER(sampler_MainTex);

TEXTURE2D(_CameraDepthTexture);

SAMPLER(sampler_CameraDepthTexture);

struct Attributes {

float4 positionOS : POSITION;

float2 uv : TEXCOORD0;

};

struct Varyings {

float2 uv : TEXCOORD0;

float4 vertex : SV_POSITION;

UNITY_VERTEX_OUTPUT_STEREO

};

half4 SampleSourceTexture(float2 uv) {

return SAMPLE_TEXTURE2D(_MainTex, sampler_MainTex, uv);

}

half4 SampleSourceTexture(Varyings input) {

return SampleSourceTexture(input.uv);

}

Varyings Vert(Attributes input) {

Varyings output = (Varyings)0;

// Initialize the stereo eye index on the output (for XR single-pass rendering)

UNITY_INITIALIZE_VERTEX_OUTPUT_STEREO(output);

VertexPositionInputs vertexInput = GetVertexPositionInputs(input.positionOS.xyz);

output.vertex = vertexInput.positionCS;

output.uv = input.uv;

return output;

}

#endif

ColorBlit2.shader

Shader "URP/ColorBlit2"

{

Properties {

// Declare _MainTex explicitly

[HideInInspector]_MainTex ("Base (RGB)", 2D) = "white" {}

}

SubShader {

Tags {

"RenderType"="Opaque"

"RenderPipeline" = "UniversalPipeline"

}

LOD 200

Pass {

Name "ColorBlitPass"

HLSLPROGRAM

#include "PostProcessing.hlsl"

#pragma vertex Vert

#pragma fragment frag

float _Intensity;

half4 frag(Varyings input) : SV_Target {

float4 color = SAMPLE_TEXTURE2D(_MainTex, sampler_MainTex, input.uv);

return color * float4(0, _Intensity, 0, 1);

}

ENDHLSL

}

}

}

ColorBlit.cs

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.Rendering;

using UnityEngine.Rendering.Universal;

[VolumeComponentMenu("My Post-processing/Color Blit2")]

public class ColorBlit : CustomPostProcessing

{

public ClampedFloatParameter intensity = new ClampedFloatParameter(0.0f, 0.0f, 2.0f);

private Material material;

private const string mShaderName = "URP/ColorBlit2";

public override bool IsActive()

{

return (material != null && intensity.value > 0);

}

public override CustomPostProcessingInjectionPoint InjectionPoint => CustomPostProcessingInjectionPoint.BeforePostProcess;

public override int OrderInInjectionPoint => 0;

public override void Setup()

{

if(material == null){

material = CoreUtils.CreateEngineMaterial(mShaderName);

}

}

public override void Render(CommandBuffer cmd, ref RenderingData renderingData, RTHandle source, RTHandle destination)

{

if(material == null){

Debug.LogWarning("Material is missing, please check");

return;

}

material.SetFloat("_Intensity", intensity.value);

cmd.Blit(source, destination, material, 0);

}

public override void Dispose(bool disposing)

{

base.Dispose(disposing);

CoreUtils.Destroy(material);

}

}

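I do not really understand the point of this hlsl file, since the BSC shader I wrote myself works without it. If anyone knows, please explain in the comments.

Other references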

- URP RenderFeature 基础入门教学

- 猫都能看懂的URP RenderFeature使用及自定义方法

- 《Unity Shader 入门精要》从Bulit-in 到URP (HLSL)之后处理(Post-processing : RenderFeature + VolumeComponent)

- Unity URP管线如何截屏,及热扰动(热扭曲)效果的实现