1. Helper functions for downloading the data

import hashlib
import os
import tarfile
import zipfile
import requests

#@save
DATA_HUB = dict()
DATA_URL = 'http://d2l-data.s3-accelerate.amazonaws.com/'

def download(name, cache_dir=os.path.join('..', 'data')):  #@save
    """Download a file registered in DATA_HUB and return the local filename."""
    # Fail early if name has not been registered in DATA_HUB
    assert name in DATA_HUB, f"{name} does not exist in {DATA_HUB}"
    url, sha1_hash = DATA_HUB[name]
    # Create cache_dir if it does not exist; do nothing if it already does
    os.makedirs(cache_dir, exist_ok=True)
    # Local path: cache_dir joined with the last component of the URL
    fname = os.path.join(cache_dir, url.split('/')[-1])
    if os.path.exists(fname):  # A local copy exists, so verify its SHA-1
        sha1 = hashlib.sha1()  # Create a hash object
        with open(fname, 'rb') as f:
            while True:
                data = f.read(1048576)  # Read in 1 MiB chunks
                if not data:
                    break
                sha1.update(data)
        if sha1.hexdigest() == sha1_hash:
            return fname  # Cache hit
    print(f'Downloading {fname} from {url}...')
    r = requests.get(url, stream=True, verify=True)
    with open(fname, 'wb') as f:
        f.write(r.content)
    return fname
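The cache check inside download can be tried out in isolation. The following is a minimal sketch, assuming a hypothetical helper name file_sha1 and a throwaway temporary file (neither is part of the original code). It hashes a file in fixed-size chunks and compares the digest with one computed in a single pass over the same bytes.

```python
import hashlib
import os
import tempfile


def file_sha1(path, chunk_size=1048576):
    """Return the SHA-1 hex digest of a file, read in chunks (hypothetical helper)."""
    sha1 = hashlib.sha1()
    with open(path, 'rb') as f:
        while True:
            data = f.read(chunk_size)
            if not data:
                break
            sha1.update(data)
    return sha1.hexdigest()


# Write a small temporary file so the example needs no network access
payload = b'house prices' * 1000
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(payload)
    tmp_path = tmp.name

chunked_digest = file_sha1(tmp_path, chunk_size=64)  # many small chunks
whole_digest = hashlib.sha1(payload).hexdigest()     # one pass over the bytes
os.remove(tmp_path)

print(chunked_digest == whole_digest)
```

Reading in chunks keeps memory use bounded for large archives, and the digest does not depend on the chunk size.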

def download_extract(name, folder=None):  #@save
    """Download and extract a zip/tar file."""
    fname = download(name)
    base_dir = os.path.dirname(fname)
    data_dir, ext = os.path.splitext(fname)
    if ext == '.zip':
        fp = zipfile.ZipFile(fname, 'r')
    elif ext in ('.tar', '.gz'):
        fp = tarfile.open(fname, 'r')
    else:
        assert False, 'Only zip/tar files can be extracted'
    fp.extractall(base_dir)
    return os.path.join(base_dir, folder) if folder else data_dir
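download_extract derives both the archive type and the default return path from os.path.splitext. A small sketch of what that call returns for the three supported extensions may help; the file names below are made up for illustration.

```python
import os

# Illustrative file names, not files from DATA_HUB
for fname in ['../data/demo.zip', '../data/demo.tar', '../data/demo.tar.gz']:
    data_dir, ext = os.path.splitext(fname)
    print(fname, '->', (data_dir, ext))

# splitext only strips the last extension
tar_gz_dir, tar_gz_ext = os.path.splitext('../data/demo.tar.gz')
```

For a .tar.gz archive the default data_dir still ends in .tar, so in that case passing folder explicitly is probably the safer way to get the extracted directory.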

def download_all():  #@save
    """Download every file registered in DATA_HUB."""
    for name in DATA_HUB:
        download(name)

2. Reading and preprocessing the data with pandas

%matplotlib inline
import numpy as np
import pandas as pd
import torch
from torch import nn
from d2l import torch as d2l

DATA_HUB['kaggle_house_train'] = (  # The dataset name is the key in DATA_HUB
    DATA_URL + 'kaggle_house_pred_train.csv',  # Download URL of the dataset
    '585e9cc93e70b39160e7921475f9bcd7d31219ce')  # SHA-1 hash used to verify file integrity

DATA_HUB['kaggle_house_test'] = (
    DATA_URL + 'kaggle_house_pred_test.csv',
    'fa19780a7b011d9b009e8bff8e99922a8ee2eb90')

# Download the CSV file registered as 'kaggle_house_train' and read it
# into a DataFrame with pd.read_csv(); do the same for the test set
train_data = pd.read_csv(download('kaggle_house_train'))
test_data = pd.read_csv(download('kaggle_house_test'))

print(train_data.shape)
print(test_data.shape)

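Inspecting the features

Print the first four rows, showing the first four columns and the last three columns.

[The iloc indexer slices the train_data DataFrame by position, selecting the given rows and columns.]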

print(train_data.iloc[0:4, [0, 1, 2, 3, -3, -2, -1]])

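The first feature of every sample, Id, must not take part in training, so it is dropped.

SalePrice is the label, so it is also removed from the training features.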

# Drop the Id column and the SalePrice label from train_data, drop Id from
# test_data, then concatenate the two
all_features = pd.concat((train_data.iloc[:, 1:-1], test_data.iloc[:, 1:]))

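Standardize the data by rescaling each numeric feature to zero mean and unit variance, then replace every missing value with the mean of its feature. After standardization that mean is 0, which is why the missing values are filled with 0.

[fillna() is a DataFrame method for filling missing values; .fillna(0) replaces the missing (NaN) entries of the selected numeric features with 0.]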

# Columns whose dtype is not 'object' are the numeric features
numeric_features = all_features.dtypes[all_features.dtypes != 'object'].index
# Rescale each numeric feature to zero mean and unit variance:
# (feature - mean) / standard deviation
all_features[numeric_features] = all_features[numeric_features].apply(
    lambda x: (x - x.mean()) / (x.std()))
# Fill the missing (NaN) values of the numeric features with 0
all_features[numeric_features] = all_features[numeric_features].fillna(0)
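The order of the two steps matters: standardize first, then fill with 0. A minimal sketch on a toy column (the values and the column name LotArea are illustrative, not taken from the dataset) shows that the filled entry ends up at the feature mean.

```python
import numpy as np
import pandas as pd

# Toy column with one missing value (illustrative data)
toy = pd.DataFrame({'LotArea': [1.0, 2.0, 3.0, np.nan]})

# mean() and std() skip NaN by default, so the statistics use 1, 2, 3 only
standardized = toy.apply(lambda x: (x - x.mean()) / x.std())

# After standardization the column mean is 0, so filling with 0
# is the same as filling with the mean
filled = standardized.fillna(0)

print(filled['LotArea'].tolist())
```

Note that pandas computes the sample standard deviation (ddof=1) by default, which is what the lambda above uses.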

Handle the discrete values by replacing them with a one-hot encoding.

all_features = pd.get_dummies(all_features, dummy_na=True)

all_features.shape
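To see what dummy_na=True does, here is a small sketch on a single categorical column. The column name MSZoning and its values are used purely for illustration.

```python
import pandas as pd

# One categorical column with a missing entry (illustrative data)
toy = pd.DataFrame({'MSZoning': ['RL', 'RM', None]})

# dummy_na=True adds an extra indicator column for missing values,
# so "missing" is treated as its own category
encoded = pd.get_dummies(toy, dummy_na=True).astype(float)

print(encoded)
```

Each row has exactly one 1 across the indicator columns, and the row with the missing value is marked in the extra column instead of being all zeros.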

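Extract the NumPy arrays from the pandas objects and convert them to tensors.

[.values converts the column to a NumPy array.

.reshape(-1, 1) reshapes the array into a column vector (a single column).]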

n_train = train_data.shape[0]
# Cast to float explicitly; otherwise the conversion to a tensor raises an error
all_features = all_features.astype(float)
# train_data and test_data were concatenated earlier; split them back by row index
train_features = torch.tensor(all_features[:n_train].values,
                              dtype=torch.float32)
test_features = torch.tensor(all_features[n_train:].values,
                             dtype=torch.float32)
# Extract the SalePrice column and reshape it into a column vector
train_labels = torch.tensor(train_data.SalePrice.values.reshape(-1, 1),
                            dtype=torch.float32)

Training

loss = nn.MSELoss()
in_features = train_features.shape[1]

def get_net():
    # A single linear layer (linear regression): in_features inputs, 1 output
    net = nn.Sequential(nn.Linear(in_features, 1))
    return net

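To keep the error from being dominated by the scale of the price, use the relative error, (actual price - predicted price) / actual price. One way to do this is to measure the difference between the logarithms of the predicted and actual prices.

[torch.clamp() replaces values below the lower bound with the lower bound and values above the upper bound with the upper bound, so it can restrict outputs to a given range.]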

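def log_rmse(net, features, labels):  # Taking logs turns the ratio into a difference
    # Clamp the outputs to [1, inf): values below 1 are set to 1 before the log is taken
    clipped_preds = torch.clamp(net(features), 1, float('inf'))
    # Take the log of predictions and labels, apply the MSE loss, then take the square root
    rmse = torch.sqrt(loss(torch.log(clipped_preds), torch.log(labels)))
    return rmse.item()  # Extract the value of the rmse tensor as a Python scalar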
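The effect of the logarithm can be checked without a network. The sketch below reimplements the same computation in plain Python under the assumed name log_rmse_plain (a hypothetical helper, not part of the original code) and applies it to two made-up predictions that are both 10% too high.

```python
import math


def log_rmse_plain(preds, labels):
    """Log RMSE on plain Python lists (hypothetical helper for illustration)."""
    clipped = [max(p, 1.0) for p in preds]  # same role as torch.clamp(..., 1, inf)
    squared = [(math.log(p) - math.log(y)) ** 2 for p, y in zip(clipped, labels)]
    return math.sqrt(sum(squared) / len(squared))


# Two made-up houses, each overestimated by 10%
cheap = log_rmse_plain([110_000.0], [100_000.0])
expensive = log_rmse_plain([1_100_000.0], [1_000_000.0])

print(cheap, expensive, math.log(1.1))
```

Both errors equal log(1.1) up to floating-point rounding, although the absolute errors differ by a factor of ten. This is the sense in which the log RMSE measures relative rather than absolute error.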

The training function uses the Adam optimizer.

def train(net, train_features, train_labels, test_features, test_labels,
          num_epochs, learning_rate, weight_decay, batch_size):
    train_ls, test_ls = [], []
    train_iter = d2l.load_array((train_features, train_labels), batch_size)
    # Adam is relatively insensitive to the learning rate; weight_decay
    # (lambda) controls the regularization of the model parameters
    optimizer = torch.optim.Adam(net.parameters(), lr=learning_rate,
                                 weight_decay=weight_decay)
    # Training loop
    for epoch in range(num_epochs):
        for X, y in train_iter:
            optimizer.zero_grad()  # Reset the gradients
            l = loss(net(X), y)  # Compute the loss
            l.backward()  # Backpropagate to compute the gradients
            optimizer.step()  # Update the model parameters
        # Record the log RMSE at the end of each epoch
        train_ls.append(log_rmse(net, train_features, train_labels))
        if test_labels is not None:
            test_ls.append(log_rmse(net, test_features, test_labels))
    return train_ls, test_ls

K-fold cross-validation

def get_k_fold_data(k, i, X, y):
    assert k > 1
    fold_size = X.shape[0] // k  # Size of each fold: number of samples // k
    X_train, y_train = None, None
    for j in range(k):
        # Start and end positions of fold j
        idx = slice(j * fold_size, (j + 1) * fold_size)
        X_part, y_part = X[idx, :], y[idx]  # Select the rows of fold j
        if j == i:  # Fold i is the validation set
            X_valid, y_valid = X_part, y_part
        elif X_train is None:  # First training fold
            X_train, y_train = X_part, y_part
        else:  # Append the fold to the training set row-wise with torch.cat()
            X_train = torch.cat([X_train, X_part], 0)
            y_train = torch.cat([y_train, y_part], 0)
    # Return the training set and the validation set
    return X_train, y_train, X_valid, y_valid
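The slicing in get_k_fold_data uses integer division, which has a side effect worth seeing on small numbers. The sketch below isolates the index arithmetic in a hypothetical helper named fold_slices; the sizes 23 and 5 are chosen only for illustration.

```python
def fold_slices(n_samples, k):
    """Return the k index slices used for the folds (hypothetical helper)."""
    assert k > 1
    fold_size = n_samples // k
    return [slice(j * fold_size, (j + 1) * fold_size) for j in range(k)]


n_samples, k = 23, 5  # illustrative sizes
slices = fold_slices(n_samples, k)
covered = sum(s.stop - s.start for s in slices)

print(slices)
print('samples left out of every fold:', n_samples - covered)
```

When the number of samples is not divisible by k, the trailing remainder belongs to no fold, so those samples are used neither for training nor for validation.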

k_fold trains k times and returns the average training and validation errors.

def k_fold(k, X_train, y_train, num_epochs, learning_rate, weight_decay,
           batch_size):
    train_l_sum, valid_l_sum = 0, 0
    for i in range(k):  # Run k rounds of cross-validation
        data = get_k_fold_data(k, i, X_train, y_train)
        net = get_net()
        train_ls, valid_ls = train(net, *data, num_epochs, learning_rate,
                                   weight_decay, batch_size)
        train_l_sum += train_ls[-1]
        valid_l_sum += valid_ls[-1]
        if i == 0:
            d2l.plot(list(range(1, num_epochs + 1)), [train_ls, valid_ls],
                     xlabel='epoch', ylabel='rmse', xlim=[1, num_epochs],
                     legend=['train', 'valid'], yscale='log')
        print(f'fold {i + 1}, train log rmse {float(train_ls[-1]):f}, '
              f'valid log rmse {float(valid_ls[-1]):f}')
    # Return the average training and validation losses
    return train_l_sum / k, valid_l_sum / k

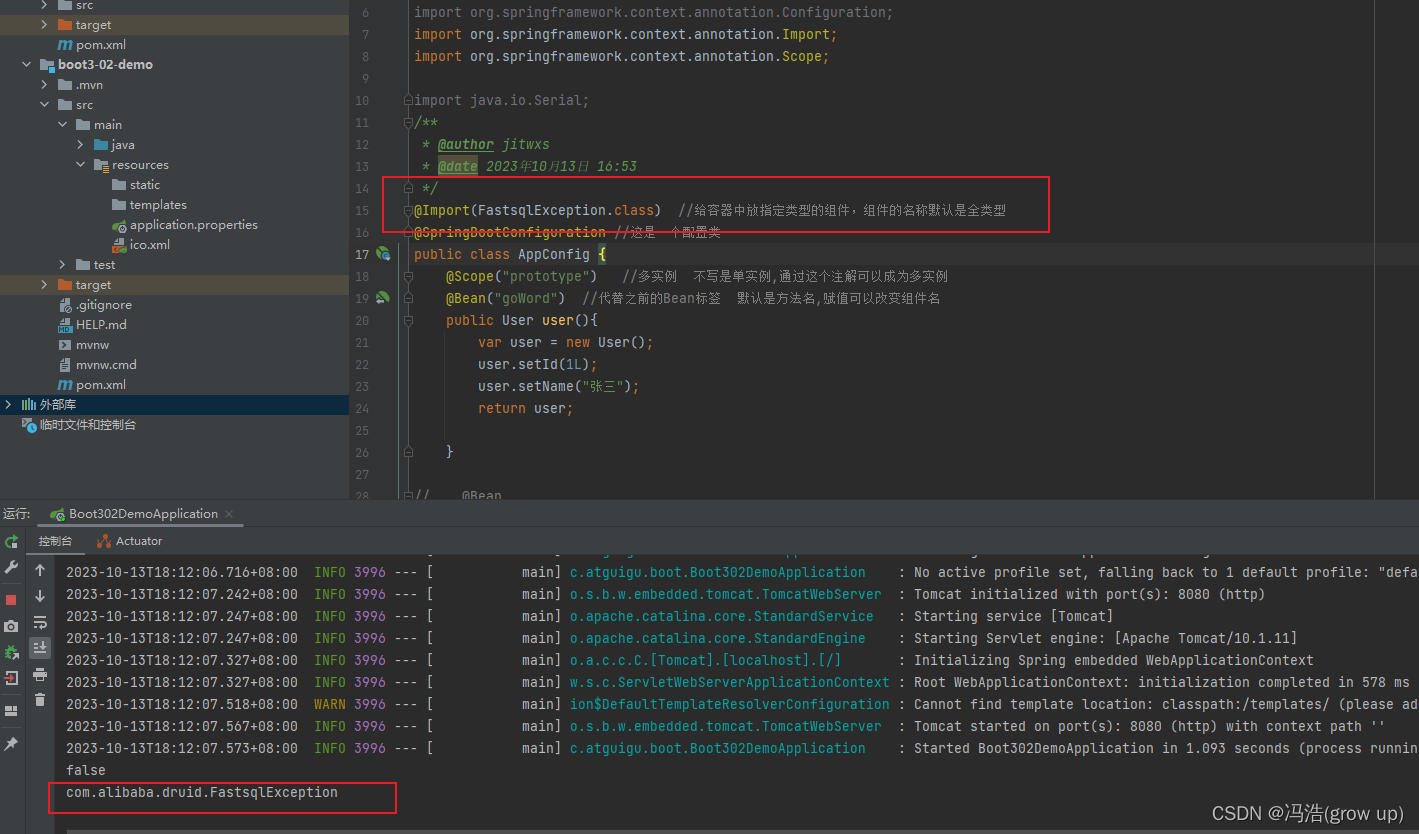

Model selection

k, num_epochs, lr, weight_decay, batch_size = 5, 100, 5, 0, 64
train_l, valid_l = k_fold(k, train_features, train_labels, num_epochs, lr,
                          weight_decay, batch_size)
print(f'{k}-fold validation: avg train log rmse: {float(train_l):f}, '
      f'avg valid log rmse: {float(valid_l):f}')

Watch the loss on the validation set, and keep tuning the hyperparameters to make it as small as possible.

Submitting predictions to Kaggle

def train_and_pred(train_features, test_features, train_labels, test_data,
                   num_epochs, lr, weight_decay, batch_size):
    net = get_net()
    # train() returns the training and validation error lists; no validation
    # data is passed here, so the second list is discarded with _
    train_ls, _ = train(net, train_features, train_labels, None, None,
                        num_epochs, lr, weight_decay, batch_size)
    # Plot the training error over the epochs
    d2l.plot(np.arange(1, num_epochs + 1), [train_ls], xlabel='epoch',
             ylabel='log rmse', xlim=[1, num_epochs], yscale='log')
    print(f'train log rmse {float(train_ls[-1]):f}')
    # Predict on the test features with the trained network and convert to a NumPy array
    preds = net(test_features).detach().numpy()
    # Flatten the predictions and store them in the 'SalePrice' column of test_data
    test_data['SalePrice'] = pd.Series(preds.reshape(1, -1)[0])
    # Combine the 'Id' column and the predictions into the submission DataFrame
    submission = pd.concat([test_data['Id'], test_data['SalePrice']], axis=1)
    # Save the submission as submission.csv
    submission.to_csv('submission.csv', index=False)

# Call train_and_pred() with the chosen hyperparameters to run the whole
# training and prediction pipeline
train_and_pred(train_features, test_features, train_labels, test_data,
               num_epochs, lr, weight_decay, batch_size)