    import torch
    from torch import nn
    from torch.nn import functional as F
    from d2l import torch as d2l

7.6.1 Function Classes

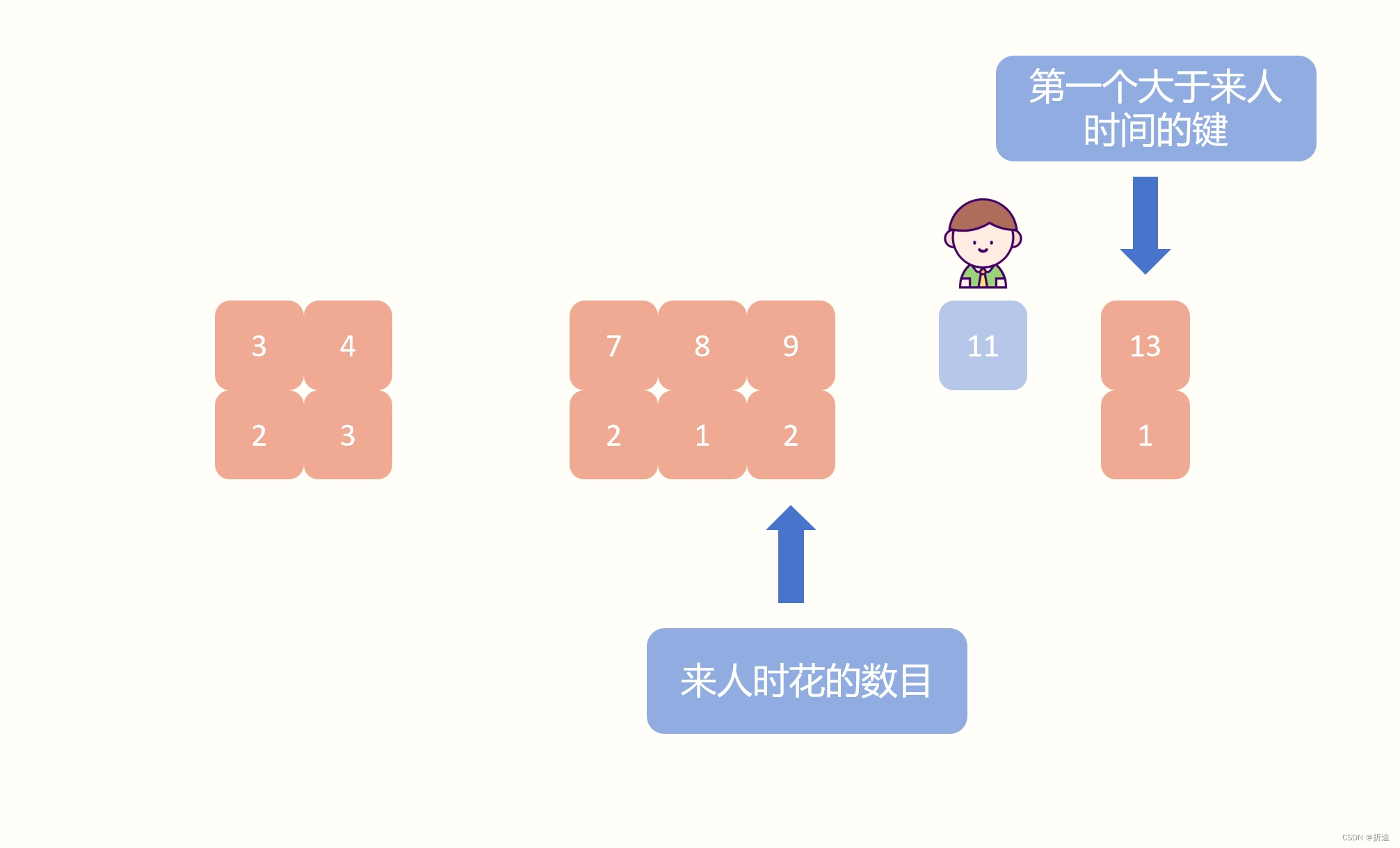

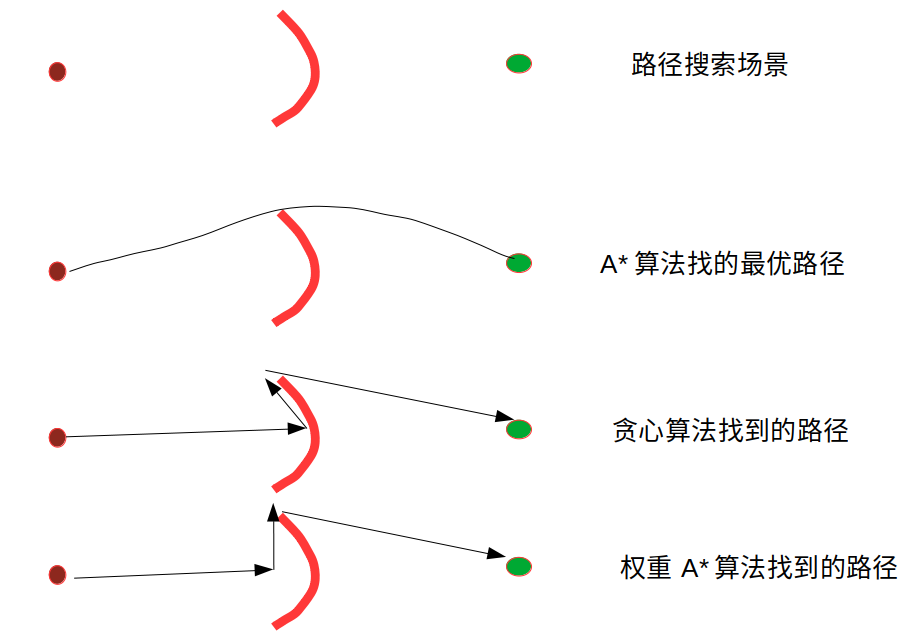

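If we view a model as a function, then a more powerful model corresponds to a larger class of functions. For the best function in the class to move steadily closer to the optimum as the model grows, the function classes should be nested as far as possible, which avoids needless drift away from the target.

As the figure illustrates, a more complex function class that is not nested is not guaranteed to get any closer to the true function. Only when the larger function class contains the smaller one can we be sure that enlarging it does not move us further away and can only increase the expressive power of the model.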
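A small numerical sketch of the nesting argument, using made-up toy data: the class of constant functions is contained in the class of lines $ax+b$, so the best line can never fit the training data worse than the best constant. The helper names (`fit_constant`, `fit_line`, `mse`) and the data are illustrative assumptions, not part of the original notes.

```python
# Toy illustration of nested function classes (data and names are made up).
# F1 = {constants} is a subset of F2 = {a*x + b}, so the minimum training
# loss over F2 is at most the minimum training loss over F1.

def mse(preds, ys):
    """Mean squared error between predictions and targets."""
    return sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(ys)

def fit_constant(xs, ys):
    """Least-squares fit within F1: the best constant is the mean of y."""
    c = sum(ys) / len(ys)
    return lambda x: c

def fit_line(xs, ys):
    """Least-squares fit within F2 via the closed-form slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = my - a * mx
    return lambda x: a * x + b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.1, 0.9, 4.2, 8.8, 16.1]  # roughly x**2, so neither class contains the truth

f1 = fit_constant(xs, ys)
f2 = fit_line(xs, ys)
err_const = mse([f1(x) for x in xs], ys)
err_line = mse([f2(x) for x in xs], ys)
print(f"constant: {err_const:.3f}, line: {err_line:.3f}")
```

The guarantee concerns only the best achievable fit within each class; it says nothing about generalization, which is the subject of the last exercise.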

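7.6.2 Residual Blocks

To address this problem, Kaiming He et al. proposed the residual network (ResNet). It won the ImageNet image recognition challenge in 2015 and profoundly influenced the design of later deep neural networks. The core idea of ResNet is that every additional layer should more easily contain the identity function as one of its elements.

Suppose the original input is $x$ and the ideal mapping we want to learn is $f(x)$. The residual block then only has to fit the residual mapping $f(x)-x$. In practice the residual mapping is easier to optimize, and it also captures small fluctuations around the identity function more easily. Adding $x$ back afterwards restores the overall mapping $f(x)$. This addition particularly benefits the training of layers close to the data: in the chain rule, fluctuations in the multiplied gradient factors can strongly distort the result, whereas the additive path of a residual block lets the input propagate forward faster.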
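To make the gradient argument concrete, here is a short derivation under a simplifying assumption: a block computes $y = x + g(x)$, where $g$ denotes the residual branch and $\ell$ the loss. Both symbols are introduced here for illustration, and the final ReLU is ignored.

$$
\frac{\partial y}{\partial x} = I + \frac{\partial g(x)}{\partial x},
\qquad
\frac{\partial \ell}{\partial x} = \frac{\partial \ell}{\partial y}\left(I + \frac{\partial g(x)}{\partial x}\right).
$$

Even when $\partial g/\partial x$ is close to zero, the identity term passes the upstream gradient through unchanged. In a plain stack $y = g(x)$ the upstream gradient is multiplied by $\partial g/\partial x$ alone, so small factors compound across layers.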

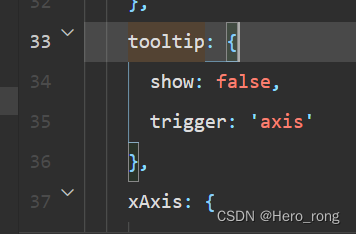

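The figure above shows the difference between a regular block and a residual block.

ResNet follows VGG's full $3\times 3$ convolutional layer design. A residual block starts with two $3\times 3$ convolutional layers with the same number of output channels. Each convolutional layer is followed by a batch normalization layer and a ReLU activation. A skip connection then bypasses these two convolutions and adds the input directly before the final ReLU activation. This design requires the output of the two convolutional layers to have the same shape as the input so that the two can be added.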

    class Residual(nn.Module):  #@save
        def __init__(self, input_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            self.conv1 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv2 = nn.Conv2d(num_channels, num_channels,
                                   kernel_size=3, padding=1)
            if use_1x1conv:
                self.conv3 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv3 = None
            self.bn1 = nn.BatchNorm2d(num_channels)
            self.bn2 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            Y = F.relu(self.bn1(self.conv1(X)))
            Y = self.bn2(self.conv2(Y))
            if self.conv3:
                X = self.conv3(X)
            Y += X
            return F.relu(Y)

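To change the number of channels, a residual block needs an extra $1\times 1$ convolutional layer that transforms the input into the required shape before the addition. With use_1x1conv=False, the class above adds the input to the output just before the final ReLU nonlinearity; with use_1x1conv=True, the input first passes through a $1\times 1$ convolution that adjusts its channels and resolution.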
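The shapes only line up because the main path and the shortcut shrink the feature map by the same amount. The sketch below checks this with the standard convolution output-size formula for a 6×6 input. The helper `conv_out_size` is a hypothetical name introduced here, and dilation is assumed to be 1.

```python
def conv_out_size(n, kernel_size, padding=0, stride=1):
    """Output height/width of a convolution on an n x n input (dilation 1)."""
    return (n + 2 * padding - kernel_size) // stride + 1

n = 6  # example spatial size

# Main path: 3x3 conv (padding 1, stride 2) followed by 3x3 conv (padding 1, stride 1)
main_path = conv_out_size(conv_out_size(n, 3, padding=1, stride=2), 3, padding=1)

# Shortcut: 1x1 conv with stride 2 and no padding
shortcut = conv_out_size(n, 1, stride=2)

print(main_path, shortcut)
```

Both paths produce the same spatial size, so the element-wise addition in the forward pass is well defined. With a stride of 1 the 3×3 convolutions with padding 1 keep the size unchanged, which is why no shortcut convolution is needed in that case.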

    blk = Residual(3, 3)
    X = torch.rand(4, 3, 6, 6)
    Y = blk(X)
    Y.shape  # input and output shapes match

    torch.Size([4, 3, 6, 6])

    # double the number of output channels while halving the height and width
    blk = Residual(3, 6, use_1x1conv=True, strides=2)
    blk(X).shape

    torch.Size([4, 6, 3, 3])
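The claim that a residual block can easily represent the identity function can be checked directly. The following is a minimal sketch, not the author's code: `TinyResidual` is a stripped-down copy of the Residual class above (no $1\times 1$ shortcut), repeated so that the snippet runs by itself. Setting the scale of the last batch normalization layer to zero is an assumption made purely to force the residual branch to output zero.

```python
import torch
from torch import nn
from torch.nn import functional as F

class TinyResidual(nn.Module):
    """Stripped-down residual block without the 1x1 shortcut (illustration only)."""
    def __init__(self, channels):
        super().__init__()
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.bn1 = nn.BatchNorm2d(channels)
        self.bn2 = nn.BatchNorm2d(channels)

    def forward(self, X):
        Y = F.relu(self.bn1(self.conv1(X)))
        Y = self.bn2(self.conv2(Y))
        return F.relu(Y + X)

blk = TinyResidual(3)
# Zero the scale of the last batch norm so the residual branch outputs 0.
# Its shift (bias) is already initialized to 0 by PyTorch.
nn.init.zeros_(blk.bn2.weight)

X = torch.rand(4, 3, 6, 6)  # values in [0, 1), so the final ReLU leaves X unchanged
Y = blk(X)
print(torch.allclose(Y, X))
```

With the residual branch switched off, the block returns its non-negative input unchanged. A plain block without the skip connection would instead have to learn convolution weights that reproduce the input.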

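7.6.3 The ResNet Model

Each module contains 4 convolutional layers (not counting the $1\times 1$ convolutional layers on the shortcut path). With 4 modules that makes 16 layers; together with the first $7\times 7$ convolutional layer and the final fully connected layer, there are 18 layers in total. This model is therefore commonly known as ResNet-18. Although the overall architecture of ResNet resembles that of GoogLeNet, ResNet's structure is simpler and easier to modify.

The first two layers of ResNet are the same as in GoogLeNet: a $7\times 7$ convolutional layer with 64 output channels and a stride of 2, followed by a $3\times 3$ max-pooling layer with a stride of 2. The difference is that ResNet adds a batch normalization layer after each convolutional layer.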
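The layer count can be written out as a small calculation. This is a sketch with a hypothetical helper `resnet_depth`; it assumes two $3\times 3$ convolutions per residual block and ignores the $1\times 1$ shortcut convolutions, as in the text. The second call uses the block counts 3, 4, 6, 3 that appear in the exercises for ResNet-34.

```python
def resnet_depth(blocks_per_module, convs_per_block=2):
    """Count weighted layers: stem conv + convs inside residual blocks + final FC.

    The 1x1 shortcut convolutions are not counted.
    """
    return 1 + convs_per_block * sum(blocks_per_module) + 1

print(resnet_depth([2, 2, 2, 2]))  # 18
print(resnet_depth([3, 4, 6, 3]))  # 34
```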

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

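ResNet then uses 4 modules made up of residual blocks, each module containing several residual blocks with the same number of output channels. The number of channels in the first module equals the number of input channels. Because a max-pooling layer with a stride of 2 has already been applied, there is no need to reduce the height and width there. Each subsequent module doubles the number of channels of the previous module in its first residual block and halves the height and width.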

    def resnet_block(input_channels, num_channels, num_residuals,
                     first_block=False):
        blk = []
        for i in range(num_residuals):
            # The first block of every module except the first one changes
            # the channel count and halves the height and width.
            if i == 0 and not first_block:
                blk.append(Residual(input_channels, num_channels,
                                    use_1x1conv=True, strides=2))
            else:
                blk.append(Residual(num_channels, num_channels))
        return blk

Each module uses 2 residual blocks. A global average pooling layer followed by a fully connected output layer completes the network.

    b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))
    b3 = nn.Sequential(*resnet_block(64, 128, 2))
    b4 = nn.Sequential(*resnet_block(128, 256, 2))
    b5 = nn.Sequential(*resnet_block(256, 512, 2))

    net = nn.Sequential(b1, b2, b3, b4, b5,
                        nn.AdaptiveAvgPool2d((1, 1)),
                        nn.Flatten(), nn.Linear(512, 10))

    X = torch.rand(size=(1, 1, 224, 224))
    for layer in net:
        X = layer(X)
        print(layer.__class__.__name__, 'output shape:\t', X.shape)

    Sequential output shape: torch.Size([1, 64, 56, 56])
    Sequential output shape: torch.Size([1, 64, 56, 56])
    Sequential output shape: torch.Size([1, 128, 28, 28])
    Sequential output shape: torch.Size([1, 256, 14, 14])
    Sequential output shape: torch.Size([1, 512, 7, 7])
    AdaptiveAvgPool2d output shape: torch.Size([1, 512, 1, 1])
    Flatten output shape: torch.Size([1, 512])
    Linear output shape: torch.Size([1, 10])

7.6.4 Training the Model

    lr, num_epochs, batch_size = 0.05, 10, 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)
    # Takes about fifteen minutes; think twice before running.
    d2l.train_ch6(net, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

    loss 0.010, train acc 0.998, test acc 0.913
    731.5 examples/sec on cuda:0
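As a rough sanity check on the running time, the reported throughput can be turned into a wall-clock estimate. This is a back-of-the-envelope sketch: `training_minutes` is a hypothetical helper, the training-set size of 60,000 is that of Fashion-MNIST, and the estimate ignores evaluation and other per-epoch overhead.

```python
def training_minutes(examples_per_sec, num_epochs=10, train_size=60_000):
    """Rough wall-clock estimate from training throughput.

    Ignores evaluation, plotting and data-loading overhead.
    """
    return train_size * num_epochs / examples_per_sec / 60

print(f"{training_minutes(731.5):.1f} min")
```

The result is a little under 14 minutes, consistent with the "about fifteen minutes" noted in the training comment once per-epoch evaluation is added on top.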

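Exercises

(1) What are the main differences between the Inception block in Figure 7-5 and the residual block? After removing some paths from the Inception block, how are the two related?

A residual block does not use as many parallel paths as an Inception block: it has a single main path plus a shortcut, and it adds the two rather than concatenating them along the channel dimension. What the two have in common is a parallel branch built from a $1\times 1$ convolution.

(2) Refer to Table 1 in the ResNet paper to implement different variants.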

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))
    b2 = nn.Sequential(*resnet_block(64, 64, 3, first_block=True))
    b3 = nn.Sequential(*resnet_block(64, 128, 4))
    b4 = nn.Sequential(*resnet_block(128, 256, 6))
    b5 = nn.Sequential(*resnet_block(256, 512, 3))

    net34 = nn.Sequential(b1, b2, b3, b4, b5,
                          nn.AdaptiveAvgPool2d((1, 1)),
                          nn.Flatten(), nn.Linear(512, 10))

    lr, num_epochs, batch_size = 0.05, 10, 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)
    # Takes about twenty-five minutes; think twice before running.
    d2l.train_ch6(net34, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

    loss 0.048, train acc 0.983, test acc 0.885
    429.5 examples/sec on cuda:0

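The ResNet-34 loss curve still falls in steps, only with shallower steps. It starts out worse than ResNet-18, and its final test accuracy (0.885) is also lower than that of ResNet-18 (0.913).

(3) For deeper networks, ResNet introduces a "bottleneck" architecture to reduce model complexity. Try it.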

    class Residual_bottleneck(nn.Module):
        def __init__(self, input_channels, mid_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            # Bottleneck layout: 1x1 (reduce) -> 3x3 -> 1x1 (restore)
            self.conv1 = nn.Conv2d(input_channels, mid_channels,
                                   kernel_size=1)
            self.conv2 = nn.Conv2d(mid_channels, mid_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv3 = nn.Conv2d(mid_channels, num_channels,
                                   kernel_size=1)
            if use_1x1conv:
                self.conv4 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv4 = None
            self.bn1 = nn.BatchNorm2d(mid_channels)
            self.bn2 = nn.BatchNorm2d(mid_channels)
            self.bn3 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            Y = F.relu(self.bn1(self.conv1(X)))
            Y = F.relu(self.bn2(self.conv2(Y)))
            Y = self.bn3(self.conv3(Y))
            if self.conv4:
                X = self.conv4(X)
            Y += X
            return F.relu(Y)

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    def resnet_block_bottleneck(input_channels, mid_channels, num_channels,
                                num_residuals, first_block=False):
        blk = []
        for i in range(num_residuals):
            # The first block of every module except the first one changes
            # the channel count and halves the height and width.
            if i == 0 and not first_block:
                blk.append(Residual_bottleneck(input_channels, mid_channels,
                                               num_channels,
                                               use_1x1conv=True, strides=2))
            else:
                blk.append(Residual_bottleneck(num_channels, mid_channels,
                                               num_channels))
        return blk

    b2 = nn.Sequential(*resnet_block_bottleneck(64, 16, 64, 3, first_block=True))
    b3 = nn.Sequential(*resnet_block_bottleneck(64, 32, 128, 4))
    b4 = nn.Sequential(*resnet_block_bottleneck(128, 64, 256, 6))
    b5 = nn.Sequential(*resnet_block_bottleneck(256, 128, 512, 3))

    net1 = nn.Sequential(b1, b2, b3, b4, b5,
                         nn.AdaptiveAvgPool2d((1, 1)),
                         nn.Flatten(), nn.Linear(512, 10))

    lr, num_epochs, batch_size = 0.05, 10, 64
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)
    # Takes about fifteen minutes; think twice before running.
    d2l.train_ch6(net1, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

    loss 0.115, train acc 0.957, test acc 0.915
    887.9 examples/sec on cuda:0

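A real ResNet-50 was out of reach on my machine: not even a single batch finished in ten minutes. So I retrofitted the ResNet-34 layout with bottleneck blocks instead.

The speed-up is substantial: throughput rises from 429.5 to 887.9 examples/sec, and test accuracy actually goes up, from 0.885 to 0.915. Note that this run also uses a batch size of 64 rather than 256, so the comparison is not strictly like for like.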
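One way to see where the savings come from is to count convolution parameters. The sketch below compares a basic block with a bottleneck block at 512 channels, using the middle width of 128 from the last module above. The helper names are hypothetical, and batch normalization parameters and shortcut convolutions are left out for simplicity.

```python
def conv_params(c_in, c_out, k, bias=True):
    """Number of parameters in a k x k convolution layer."""
    return k * k * c_in * c_out + (c_out if bias else 0)

def basic_block_params(c):
    """Two 3x3 convolutions: c -> c -> c."""
    return conv_params(c, c, 3) + conv_params(c, c, 3)

def bottleneck_block_params(c, mid):
    """1x1 (c -> mid), 3x3 (mid -> mid), 1x1 (mid -> c)."""
    return (conv_params(c, mid, 1)
            + conv_params(mid, mid, 3)
            + conv_params(mid, c, 1))

basic = basic_block_params(512)
bottleneck = bottleneck_block_params(512, 128)
print(basic, bottleneck, round(basic / bottleneck, 1))
```

Under these assumptions the bottleneck block has roughly 17 times fewer convolution parameters, because the expensive $3\times 3$ convolution runs on 128 channels instead of 512.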

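(4) In later versions of ResNet, the authors changed the "convolution, batch normalization, activation" ordering to "batch normalization, activation, convolution". Try this improvement. See Figure 1 in reference [57] for details.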

    class Residual_change(nn.Module):
        def __init__(self, input_channels, num_channels,
                     use_1x1conv=False, strides=1):
            super().__init__()
            self.conv1 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=3, padding=1, stride=strides)
            self.conv2 = nn.Conv2d(num_channels, num_channels,
                                   kernel_size=3, padding=1)
            if use_1x1conv:
                self.conv3 = nn.Conv2d(input_channels, num_channels,
                                       kernel_size=1, stride=strides)
            else:
                self.conv3 = None
            # bn1 now acts on the block input, so it uses input_channels
            self.bn1 = nn.BatchNorm2d(input_channels)
            self.bn2 = nn.BatchNorm2d(num_channels)

        def forward(self, X):
            # New order: batch norm -> ReLU -> convolution
            Y = self.conv1(F.relu(self.bn1(X)))
            Y = self.conv2(F.relu(self.bn2(Y)))
            if self.conv3:
                X = self.conv3(X)
            Y += X
            return Y

    b1 = nn.Sequential(nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),
                       nn.BatchNorm2d(64), nn.ReLU(),
                       nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

    def resnet_block_change(input_channels, num_channels, num_residuals,
                            first_block=False):
        blk = []
        for i in range(num_residuals):
            if i == 0 and not first_block:
                blk.append(Residual_change(input_channels, num_channels,
                                           use_1x1conv=True, strides=2))
            else:
                blk.append(Residual_change(num_channels, num_channels))
        return blk

    b2 = nn.Sequential(*resnet_block_change(64, 64, 2, first_block=True))
    b3 = nn.Sequential(*resnet_block_change(64, 128, 2))
    b4 = nn.Sequential(*resnet_block_change(128, 256, 2))
    b5 = nn.Sequential(*resnet_block_change(256, 512, 2))

    # Without the extra BatchNorm2d after the last module, training
    # does not converge at all.
    net2 = nn.Sequential(b1, b2, b3, b4, b5, nn.BatchNorm2d(512), nn.ReLU(),
                         nn.AdaptiveAvgPool2d((1, 1)),
                         nn.Flatten(), nn.Linear(512, 10))

    lr, num_epochs, batch_size = 0.05, 10, 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size, resize=96)
    # Takes about twenty-five minutes; think twice before running.
    d2l.train_ch6(net2, train_iter, test_iter, num_epochs, lr, d2l.try_gpu())

    loss 0.039, train acc 0.988, test acc 0.905
    724.1 examples/sec on cuda:0

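Test accuracy drops slightly, from 0.913 for the original ResNet-18 to 0.905.

(5) Why do we still need to limit the increase in function complexity even when the function classes are nested?

Limiting complexity never stops mattering: a more complex model overfits more easily, and its interpretability drops sharply.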