Contents

MapReduce Programming Conventions

Mapper Phase

Reducer Phase

Driver Phase

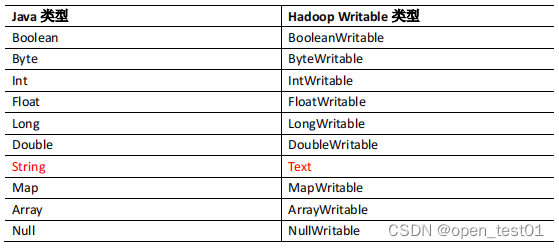

Common Data Serialization Types

Case Implementation

WordCountMapper Class

WordCountReducer Class

WordCountDriver Class

HDFS Test

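MapReduce Programming Conventions

Mapper Phase

Reducer Phase

Driver Phase

Common Data Serialization Types

Case Implementation

Create a Maven project and add the dependencies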

<dependencies>

<!-- Hadoop client -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.3.4</version>

</dependency>

<!-- Unit testing framework -->

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.13.2</version>

</dependency>

</dependencies>

Create a log4j.properties file in the resources directory:

log4j.rootLogger=INFO, stdout, logfile

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/wordcount.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

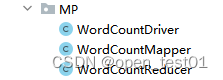

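Next, create three Java classes in the package.

WordCountMapper Class

WordCountMapper extends the Mapper class.

Type parameters:

KEYIN: the key type of the map-phase input: LongWritable (the byte offset of the line in the input file)

VALUEIN: the value type of the map-phase input: Text (the content of the line)

KEYOUT: the key type of the map-phase output: Text

VALUEOUT: the value type of the map-phase output: IntWritable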

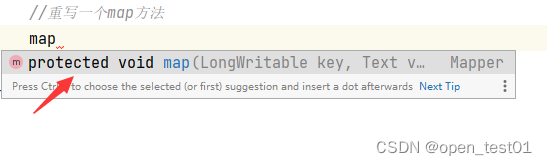
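The map step described by these type parameters can be tried out without a cluster. The following is a minimal plain-Java sketch, not Hadoop code: `MapSketch` and `mapLine` are hypothetical names, and `Map.Entry<String, Integer>` stands in for the `(Text, IntWritable)` output pair. It splits on runs of whitespace (`\\s+`), which is an assumption made here so that repeated spaces do not produce empty words.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical stand-in for the map phase; no Hadoop classes are used.
final class MapSketch {

    // Turns one input line into a list of (word, 1) pairs.
    static List<Map.Entry<String, Integer>> mapLine(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        // Assumption: split on any run of whitespace, not on a single space.
        for (String word : line.trim().split("\\s+")) {
            if (!word.isEmpty()) {
                pairs.add(new SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        for (Map.Entry<String, Integer> pair : mapLine("hello hadoop  hello")) {
            System.out.println(pair.getKey() + "\t" + pair.getValue());
        }
    }
}
```

Every word is emitted with a count of 1, including repeated words. Adding the counts up is left to the reduce phase.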

Override the map method:

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> { // extends the generic Mapper class

private Text outKey = new Text(); // reusable output key

private IntWritable outValue = new IntWritable(1); // every word is emitted with a count of 1; no aggregation happens in the map phase

// Override the map method

@Override

protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, IntWritable>.Context context) throws IOException, InterruptedException {

// Read one line

String line = value.toString();

// Split the line into words on single spaces

String[] words = line.split(" ");

// Emit each word

for (String word : words) {

// Set the output key

outKey.set(word);

// Write the (word, 1) pair; the arguments are the output key and value

context.write(outKey, outValue);

}

}

}

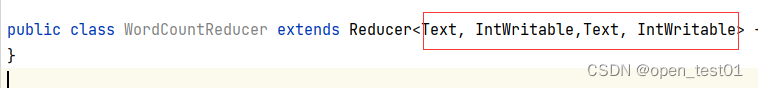

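WordCountReducer Class

WordCountReducer extends the Reducer class.

Type parameters:

KEYIN: the key type of the reduce-phase input: Text (must match the map output key type)

VALUEIN: the value type of the reduce-phase input: IntWritable (must match the map output value type)

KEYOUT: the key type of the reduce-phase output: Text

VALUEOUT: the value type of the reduce-phase output: IntWritable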
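The reduce contract implied by these type parameters is that one call receives one key together with all the values grouped under it. A minimal plain-Java sketch of that idea follows. It is not Hadoop code: `ReduceSketch` and its `reduce` method are hypothetical names, with `String` and `Integer` standing in for `Text` and `IntWritable`.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-in for the reduce phase; no Hadoop classes are used.
final class ReduceSketch {

    // Sums all counts that were grouped under one key.
    // The key is not needed for the sum; it is passed only to mirror the reduce signature.
    static int reduce(String key, Iterable<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    public static void main(String[] args) {
        List<Integer> grouped = Arrays.asList(1, 1, 1);
        System.out.println("hello\t" + reduce("hello", grouped));
    }
}
```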

Override the reduce method:

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> { // extends the generic Reducer class

// Reusable output value

private IntWritable outValue = new IntWritable();

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException {

// Accumulator for the count

int sum = 0;

// For one word, values looks like (1, 1)

// Add up the values

for (IntWritable value : values) {

sum += value.get(); // unwrap the int

}

// Wrap the sum in an IntWritable

outValue.set(sum);

// Write the (word, count) pair

context.write(key, outValue);

}

}

WordCountDriver Class

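Basic flow:

- Get the job and set the jar path

- Associate the Mapper and Reducer

- Set the key/value types of the map output

- Set the key/value types of the final output

- Set the input and output paths

- Submit the job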

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCountDriver {

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException { // exceptions are propagated to the caller

// Get the job

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

// Set the jar path

job.setJarByClass(WordCountDriver.class);

// Associate the Mapper and Reducer

job.setMapperClass(WordCountMapper.class);

job.setReducerClass(WordCountReducer.class);

// Set the key/value types of the map output

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

// Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// Set the input and output paths

FileInputFormat.setInputPaths(job, new Path(args[0])); // input path, taken from the first argument

FileOutputFormat.setOutputPath(job, new Path(args[1])); // output path, taken from the second argument

// Submit the job and wait for it to finish

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : 1); // exit code 0 on success, 1 on failure

}

}

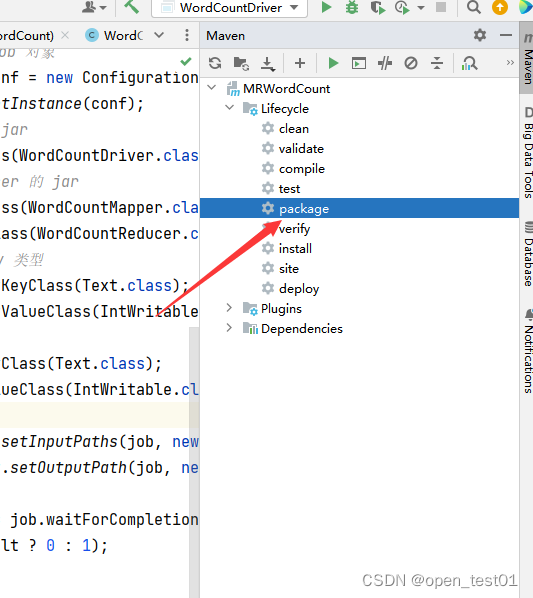

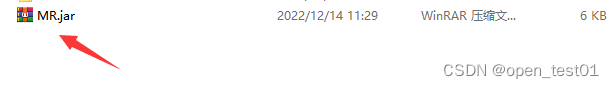
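To see how the map, shuffle, and reduce steps fit together without a cluster, the whole job can be simulated in plain Java. This is a minimal sketch, not Hadoop code: `LocalWordCount` and `run` are hypothetical names, and a `TreeMap` stands in for the shuffle, which groups values by key and hands keys to the reducer in sorted order.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local simulation of the word count job; no Hadoop classes are used.
final class LocalWordCount {

    static Map<String, Integer> run(List<String> lines) {
        // Map + shuffle: emit (word, 1) and group the 1s by word.
        // TreeMap keeps the keys sorted, similar to reducer input.
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.split(" ")) {
                grouped.computeIfAbsent(word, k -> new ArrayList<>()).add(1);
            }
        }
        // Reduce: sum the grouped values for each word.
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int one : entry.getValue()) {
                sum += one;
            }
            counts.put(entry.getKey(), sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("hello hadoop", "hello world");
        for (Map.Entry<String, Integer> entry : run(lines).entrySet()) {
            System.out.println(entry.getKey() + "\t" + entry.getValue());
        }
    }
}
```

For the two sample lines, the sketch prints `hadoop 1`, `hello 2`, and `world 1`, each as a tab-separated key and count.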

HDFS Test

Package the project, rename the jar, and copy it to the server virtual machine for testing.

Start the Hadoop cluster.

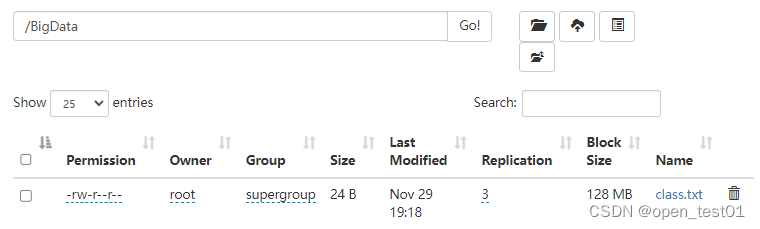

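Upload the jar to the Hadoop directory.

Prepare the files in the input directory for the word count.

Copy the fully qualified name of the WordCountDriver class.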

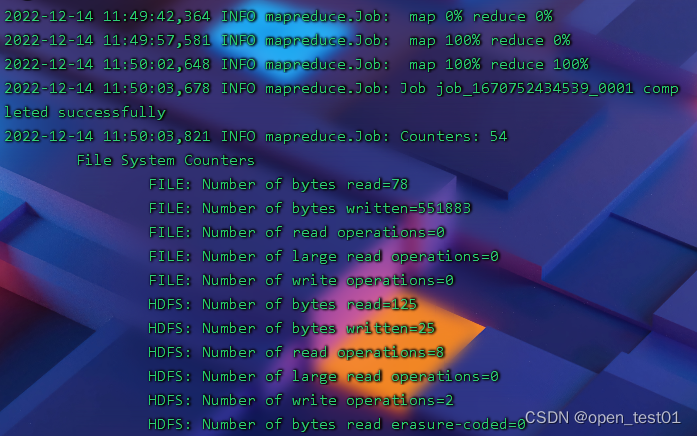

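Run the following command on the Hadoop node:

hadoop jar MR.jar MP.WordCountDriver /BigData /output

The general form is: hadoop jar jar-file fully-qualified-class-name /input-path /output-directory. The output directory must not already exist, otherwise the job fails when it is submitted.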
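The two paths in the command reach the driver as `args[0]` and `args[1]`. If either is missing, the driver shown earlier fails with an `ArrayIndexOutOfBoundsException`. A minimal sketch of checking the arguments before the job is built follows; `DriverArgs` and `parse` are hypothetical names used for illustration and are not part of Hadoop.

```java
// Hypothetical helper for validating driver arguments; not part of Hadoop.
final class DriverArgs {
    final String inputPath;
    final String outputPath;

    private DriverArgs(String inputPath, String outputPath) {
        this.inputPath = inputPath;
        this.outputPath = outputPath;
    }

    // Expects exactly two arguments: the input path and the output path.
    static DriverArgs parse(String[] args) {
        if (args.length != 2) {
            throw new IllegalArgumentException(
                    "Usage: hadoop jar <jar> <driver class> <input path> <output path>");
        }
        return new DriverArgs(args[0], args[1]);
    }

    public static void main(String[] args) {
        DriverArgs parsed = parse(new String[] {"/BigData", "/output"});
        System.out.println("input=" + parsed.inputPath + ", output=" + parsed.outputPath);
    }
}
```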

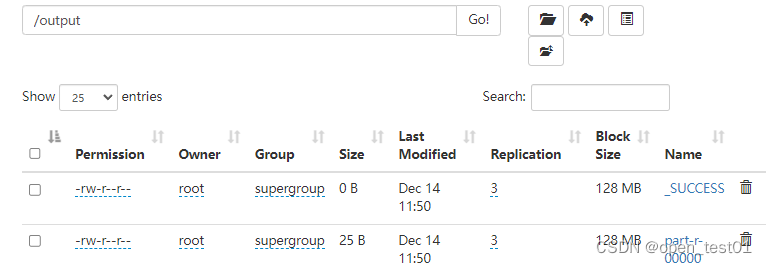

After the job finishes, check HDFS to confirm that the output was written.

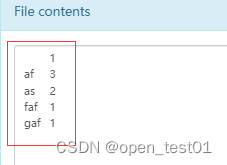

View the word count results.
