Preface

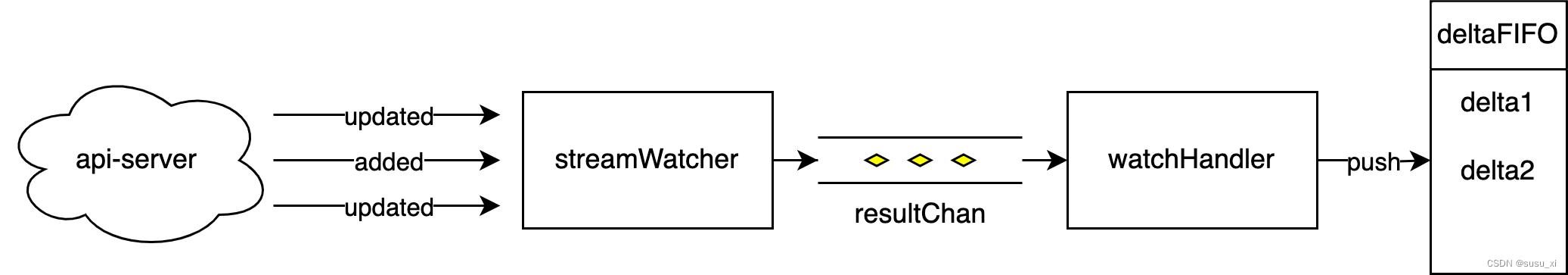

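ViewFs builds on Federation: it lets clients access multiple namespaces through a single HDFS path. It is paired with a client-side mount table, which holds the actual mapping rules and can be placed either in core-site.xml or in a separate mountTable.xml.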

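In short, ViewFs is a middle layer that connects to the different NameNodes and hands the results back to the client program. ViewFs therefore has to implement every HDFS interface so that it can forward and manage requests. This brings some problems it cannot solve, for example those caused by NameNodes running different versions.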
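As a minimal sketch of the client-side mount table described above, the fragment below could go into core-site.xml on the client. The mount table name `ClusterX` and the mount points `/data` and `/tmp` are hypothetical and chosen only for illustration; the hosts `hadoop` and `hadoop1` are the two NameNodes used later in this post.

```xml
<configuration>
  <!-- Assumption: "ClusterX" is a made-up mount table name -->
  <property>
    <name>fs.defaultFS</name>
    <value>viewfs://ClusterX</value>
  </property>
  <!-- Assumption: /data is served by the first NameNode -->
  <property>
    <name>fs.viewfs.mounttable.ClusterX.link./data</name>
    <value>hdfs://hadoop:9000/data</value>
  </property>
  <!-- Assumption: /tmp is served by the second NameNode -->
  <property>
    <name>fs.viewfs.mounttable.ClusterX.link./tmp</name>
    <value>hdfs://hadoop1:9000/tmp</value>
  </property>
</configuration>
```

With a table like this, a client path such as `/data/file` would resolve to the first NameNode and `/tmp/file` to the second. If the rules are kept in a separate mountTable.xml instead, core-site.xml can pull that file in with an XInclude element (`<xi:include href="mountTable.xml"/>`).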

Federation Of HDFS

Simply setting up a federation is actually fairly easy.

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/soft/hadoop/data_hadoop</value>

</property>

<!-- Change the host here to the address of the corresponding NameNode -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop:9000/</value>

</property>

</configuration>

hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/soft/hadoop/data_hadoop/namenode</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/soft/hadoop/data_hadoop/datanode</value>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

<property>

<name>dfs.nameservices</name>

<value>ns1,ns2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.ns1</name>

<value>hadoop:9000</value>

</property>

<property>

<name>dfs.namenode.http-address.ns1</name>

<value>hadoop:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address.ns1</name>

<value>hadoop:50090</value>

</property>

<property>

<name>dfs.namenode.rpc-address.ns2</name>

<value>hadoop1:9000</value>

</property>

<property>

<name>dfs.namenode.http-address.ns2</name>

<value>hadoop1:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address.ns2</name>

<value>hadoop1:50090</value>

</property>

</configuration>

Startup

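The first step is to format the NameNode, which is routine: hdfs namenode -format

A federated cluster, however, needs a cluster ID, so it has to be specified when formatting the NameNode. Every NameNode in the federation must be formatted with the same cluster ID: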

hdfs namenode -format -clusterId cui

Verification

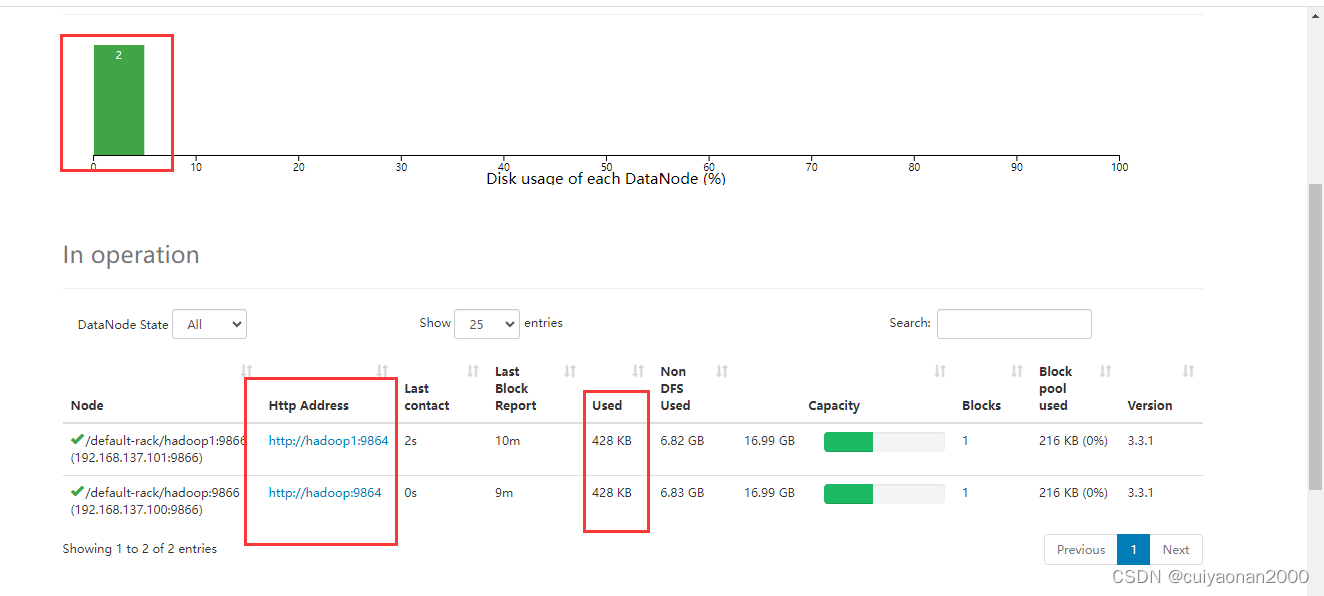

The key point is that the NameNodes share the DataNodes, i.e. both NameNodes report the same DataNode information.