The server configuration is as follows:

CUDA version: 11.1

cuDNN version: 8.2.0

GPU: RTX 3090

Use the conversion script to convert the .pth model to ONNX format:

python mmdeploy/tools/deploy.py \
    mmdeploy/configs/mmdet/detection/detection_onnxruntime_dynamic.py \
    mmdetection/configs/yolox/yolox_x_8xb8-300e_coco.py \
    mmdetection/checkpoint/yolox_x_8x8_300e_coco_20211126_140254-1ef88d67.pth \
    mmdetection/demo/demo.jpg \
    --work-dir mmdeploy_models/mmdet/yolox \
    --device cpu \
    --show \
    --dump-info

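The generated folder contains:

end2end.onnx: the inference engine file, which can be run with ONNX Runtime.

*.json: the meta information that the MMDeploy SDK needs for inference.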
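Before moving on, it can help to confirm that the conversion actually produced these files. The following is a minimal sketch, not part of MMDeploy: `check_work_dir` is a hypothetical helper name, and the directory it checks is the `--work-dir` value from the command above.

```shell
#!/usr/bin/env bash
# check_work_dir DIR: report whether DIR holds end2end.onnx and at least
# one *.json meta file. (Hypothetical helper, for illustration only.)
check_work_dir() {
    local dir="$1" status=0
    if [ -f "$dir/end2end.onnx" ]; then
        echo "found end2end.onnx"
    else
        echo "missing end2end.onnx"
        status=1
    fi
    # compgen -G succeeds only when the glob matches at least one path.
    if compgen -G "$dir/*.json" >/dev/null; then
        echo "found SDK meta file(s)"
    else
        echo "missing *.json meta files"
        status=1
    fi
    return "$status"
}

# Same directory as --work-dir in the conversion command.
check_work_dir mmdeploy_models/mmdet/yolox || echo "conversion output looks incomplete"
```

The function returns a non-zero status when either kind of file is missing, so it can also be used as a guard in a larger script.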

2--Installing MMDeploy

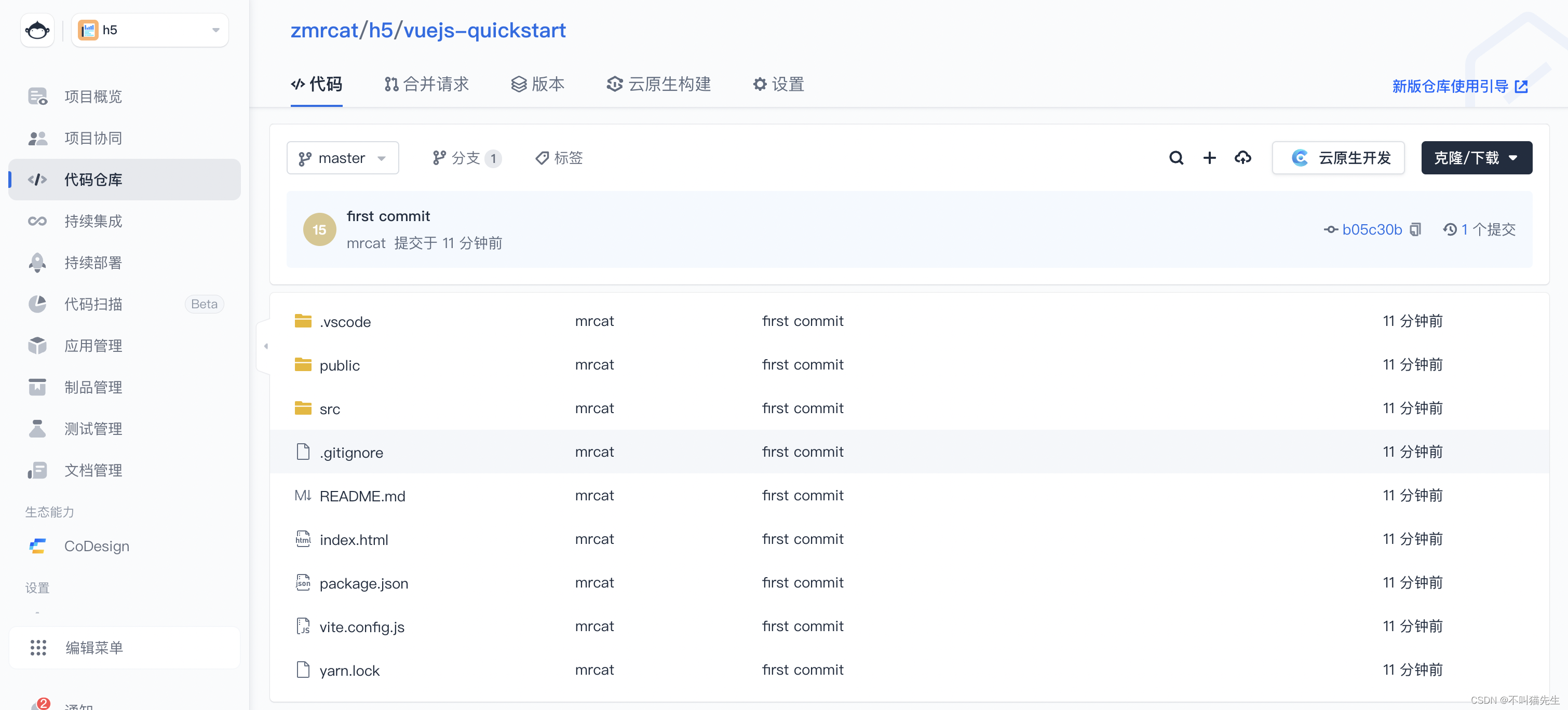

2-1--Download the code repository

cd xxxx # xxxx is the directory where the repository will be stored

git clone -b master https://github.com/open-mmlab/mmdeploy.git MMDeploy

cd MMDeploy

git submodule update --init --recursive

2-2--Install the build and compilation toolchain

Install cmake (version ≥ 3.14.0)

Install gcc (version 7+)
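To see whether the tools already installed meet these minimums, a small version comparison can be used. This is a sketch under assumptions: `version_ge` and `check_tool` are hypothetical helper names, and the comparison relies on `sort -V` from GNU coreutils, which is available on the Ubuntu-style system this guide targets.

```shell
#!/usr/bin/env bash
# version_ge A B: succeeds when version A >= version B (needs GNU sort -V).
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# check_tool NAME MIN_VERSION CURRENT_VERSION: print a one-line verdict.
check_tool() {
    if version_ge "$3" "$2"; then
        echo "$1 $3 OK (>= $2)"
    else
        echo "$1 $3 too old (need >= $2)"
    fi
}

# Only query tools that are actually installed.
if command -v cmake >/dev/null 2>&1; then
    check_tool cmake 3.14.0 "$(cmake --version | head -n 1 | awk '{print $3}')"
fi
if command -v gcc >/dev/null 2>&1; then
    check_tool gcc 7 "$(gcc -dumpversion)"
fi
```

A plain string comparison would rank 3.9 above 3.14, which is why the sketch sorts the two versions with `sort -V` instead.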

2-3--Create a Conda virtual environment

① Create the mmdeploy environment:

conda create -n mmdeploy python=3.8 -y

conda activate mmdeploy

② Install PyTorch (version ≥ 1.8.0):

conda install pytorch==1.8.0 torchvision==0.9.0 cudatoolkit=11.1 -c pytorch -c conda-forge

③ Install mmcv-full:

export cu_version=cu111 # CUDA 11.1

export torch_version=torch1.8

pip install mmcv-full==1.4.0 -f https://download.openmmlab.com/mmcv/dist/${cu_version}/${torch_version}/index.html

2-4--Install the MMDeploy SDK dependencies

① Install spdlog:

sudo apt-get install libspdlog-dev

② Install OpenCV (version ≥ 3.0):

sudo apt-get install libopencv-dev

③ Install pplcv (important: this step differs from the official documentation)

cd XXXX # XXXX is the directory where ppl.cv will be stored

git clone https://github.com/openppl-public/ppl.cv.git

cd ppl.cv

export PPLCV_DIR=$(pwd)

git checkout tags/v0.6.2 -b v0.6.2

First, go to the ppl.cv directory and modify cuda.cmake by adding the following:

if (CUDA_VERSION_MAJOR VERSION_GREATER_EQUAL "11")
  set(_NVCC_FLAGS "${_NVCC_FLAGS} -gencode arch=compute_80,code=sm_80")
  if (CUDA_VERSION_MINOR VERSION_GREATER_EQUAL "1")
    # cuda doesn't support `sm_86` until version 11.1
    set(_NVCC_FLAGS "${_NVCC_FLAGS} -gencode arch=compute_86,code=sm_86")
  endif ()
endif ()

This version of ppl.cv does not support CUDA 11.1 or the RTX 3090's compute capability of 8.6, so cuda.cmake has to be modified before ppl.cv is built and installed.
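To make the effect of that cmake block concrete, the sketch below reproduces its two conditions in shell and prints the flags that would be appended for a given CUDA version. `gencode_flags` is a hypothetical name used only for illustration; it mirrors the snippet's conditions literally and does not query nvcc.

```shell
#!/usr/bin/env bash
# gencode_flags VERSION: print the extra -gencode flags that the cmake
# snippet would append for a CUDA version such as "11.1".
# (Hypothetical helper; it mirrors the snippet's two conditions literally.)
gencode_flags() {
    local major minor flags=""
    major="${1%%.*}"
    minor="${1#*.}"
    if [ "$minor" = "$1" ]; then
        minor=0                # input had no dot, e.g. "11"
    fi
    minor="${minor%%.*}"       # drop a patch component, e.g. "1.105" -> "1"
    if [ "$major" -ge 11 ]; then
        flags="-gencode arch=compute_80,code=sm_80"
        if [ "$minor" -ge 1 ]; then
            flags="$flags -gencode arch=compute_86,code=sm_86"
        fi
    fi
    echo "$flags"
}

gencode_flags 11.1   # both sm_80 and sm_86
gencode_flags 10.2   # prints an empty line: nothing is added
```

With CUDA 11.1, as on the server described here, both flags are added, and the `sm_86` flag is the one that matches the RTX 3090.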

Then build and install ppl.cv:

./build.sh cuda

2-5--Install the inference engines

① Install ONNX Runtime

Install the ONNX Runtime Python package:

pip install onnxruntime==1.8.1

Install the prebuilt ONNX Runtime package:

cd xxxx # xxxx is the directory where the ONNX Runtime package will be stored

wget https://github.com/microsoft/onnxruntime/releases/download/v1.8.1/onnxruntime-linux-x64-1.8.1.tgz

tar -zxvf onnxruntime-linux-x64-1.8.1.tgz

cd onnxruntime-linux-x64-1.8.1

export ONNXRUNTIME_DIR=$(pwd)

export LD_LIBRARY_PATH=$ONNXRUNTIME_DIR/lib:$LD_LIBRARY_PATH

② Install TensorRT

Download the TensorRT package from the official site:

https://developer.nvidia.com/nvidia-tensorrt-download

cd /the/path/of/tensorrt/tar/gz/file

tar -zxvf TensorRT-8.2.3.0.Linux.x86_64-gnu.cuda-11.4.cudnn8.2.tar.gz

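pip install TensorRT-8.2.3.0/python/tensorrt-8.2.3.0-cp38-none-linux_x86_64.whl # choose the wheel that matches your Python version (cp38 for the Python 3.8 environment created above)

export TENSORRT_DIR=$(pwd)/TensorRT-8.2.3.0

export LD_LIBRARY_PATH=$TENSORRT_DIR/lib:$LD_LIBRARY_PATH

Install the uff and graphsurgeon packages:

cd XXXX # XXXX is the directory of the extracted TensorRT package

cd uff

pip install uff-0.6.9-py2.py3-none-any.whl # the exact file name depends on your package

cd ../graphsurgeon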

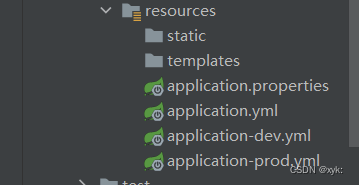

pip install graphsurgeon-0.4.5-py2.py3-none-any.whl # the exact file name depends on your package

2-6--Set PATH

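Open ~/.bashrc in a text editor, for example:

vim ~/.bashrc

Add the following lines, adjusting the paths to your own setup: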

export PATH="/root/anaconda3/bin:$PATH"

export PATH=/usr/local/cuda-11.1/bin${PATH:+:${PATH}}

export LD_LIBRARY_PATH=/usr/local/cuda-11.1/lib64${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}

export MMDEPLOY_DIR=/root/MMDeploy

export LD_LIBRARY_PATH=$MMDEPLOY_DIR/build/lib:$LD_LIBRARY_PATH

export PPLCV_DIR=/root/ppl.cv

export TENSORRT_DIR=/root/Downloads/TensorRT-8.2.3.0

export LD_LIBRARY_PATH=$TENSORRT_DIR/lib:$LD_LIBRARY_PATH

export CUDNN_DIR=/root/Downloads/cuda

export LD_LIBRARY_PATH=$CUDNN_DIR/lib64:$LD_LIBRARY_PATH

export ONNXRUNTIME_DIR=/root/onnxruntime-linux-x64-1.8.1

export LD_LIBRARY_PATH=$ONNXRUNTIME_DIR/lib:$LD_LIBRARY_PATH

Save the file, then reload it:

source ~/.bashrc
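Because every path above depends on where the packages were unpacked, a quick check that each variable points at an existing directory can catch typos before the build. This is a minimal sketch: `check_env_dirs` is a hypothetical helper, and it relies on bash indirect expansion (`${!name}`), so it needs bash rather than plain sh.

```shell
#!/usr/bin/env bash
# check_env_dirs NAME...: for each variable name, report whether it is set
# and points to an existing directory. (Hypothetical helper.)
check_env_dirs() {
    local status=0 name dir
    for name in "$@"; do
        dir="${!name:-}"       # indirect expansion: value of the variable called $name
        if [ -z "$dir" ]; then
            echo "$name is not set"
            status=1
        elif [ ! -d "$dir" ]; then
            echo "$name points to a missing directory: $dir"
            status=1
        else
            echo "$name OK: $dir"
        fi
    done
    return "$status"
}

# Variable names taken from the ~/.bashrc lines above.
check_env_dirs MMDEPLOY_DIR PPLCV_DIR TENSORRT_DIR CUDNN_DIR ONNXRUNTIME_DIR \
    || echo "fix the paths in ~/.bashrc before building"
```

Run it in a new terminal, or after `source ~/.bashrc`, so that the exported values are the ones being checked.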

2-7--Build and install the dependencies

cd ${MMDEPLOY_DIR}

pip install -e .

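3--Build the MMDeploy SDK and test the Python API

① Activate the mmdeploy environment

② Set PATH and the library directories (not needed each time if they are already set in ~/.bashrc)

# Adjust to your actual installation paths

# Set PATH and the library directories (setting them in ~/.bashrc avoids exporting them every time)

export ONNXRUNTIME_DIR=/root/onnxruntime-linux-x64-1.8.1

export LD_LIBRARY_PATH=$ONNXRUNTIME_DIR/lib:$LD_LIBRARY_PATH

export TENSORRT_DIR=/root/Downloads/TensorRT-8.2.3.0

export LD_LIBRARY_PATH=$TENSORRT_DIR/lib:$LD_LIBRARY_PATH

③ Build the custom operators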

# Go to the MMDeploy root directory

cd ${MMDEPLOY_DIR}

# Delete the build folder (only needed because I had already built once before)

rm -r build

# Create the build folder and enter it

mkdir -p build && cd build

# Build the custom operators

cmake -DMMDEPLOY_TARGET_BACKENDS="ort;trt" -DTENSORRT_DIR=${TENSORRT_DIR} -DCUDNN_DIR=${CUDNN_DIR} -DONNXRUNTIME_DIR=${ONNXRUNTIME_DIR} ..

make -j$(nproc)

④ Build the MMDeploy SDK

# Build the MMDeploy SDK (the cmake step sometimes fails; if it does, run cmake again and then run make)

cmake .. -DMMDEPLOY_BUILD_SDK=ON \
    -DCMAKE_CXX_COMPILER=g++-7 \
    -DTENSORRT_DIR=${TENSORRT_DIR} \
    -DMMDEPLOY_TARGET_BACKENDS="ort;trt" \
    -DMMDEPLOY_CODEBASES=mmdet \
    -DCUDNN_DIR=${CUDNN_DIR} \
    -DMMDEPLOY_TARGET_DEVICES="cuda;cpu" \
    -Dpplcv_DIR=/root/ppl.cv/cuda-build/install/lib/cmake/ppl

make -j$(nproc)

make install

⑤ Python API test (this requires the MMDetection source code; depending on your setup, create a checkpoints folder holding the pretrained .pth weights, and set the model output directory --work-dir, the test image demo.jpg, and so on)

Two examples follow; change the paths to match your own setup:

Faster R-CNN:

https://github.com/open-mmlab/mmdetection/tree/master/configs/faster_rcnn

# Go to the MMDeploy root directory

cd ${MMDEPLOY_DIR}

## Faster R-CNN example

# Call the Python API to convert the model: PyTorch → ONNX → engine

python tools/deploy.py configs/mmdet/detection/detection_tensorrt_dynamic-320x320-1344x1344.py /root/mmdetection/configs/faster_rcnn/faster_rcnn_r50_fpn_1x_coco.py /root/mmdetection/checkpoints/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth /root/mmdetection/demo/demo.jpg --work-dir work_dirs/faster_rcnn/ --device cuda --show --dump-info

Mask R-CNN:

https://github.com/open-mmlab/mmdetection/tree/master/configs/mask_rcnn

## Mask R-CNN example

# Call the Python API to convert the model: PyTorch → ONNX → engine

python tools/deploy.py \
    configs/mmdet/instance-seg/instance-seg_tensorrt_dynamic-320x320-1344x1344.py \
    /root/mmdetection/configs/mask_rcnn/mask_rcnn_x101_64x4d_fpn_mstrain-poly_3x_coco.py \
    /root/MMDeploy/cheackpoints/mask_rcnn_x101_64x4d_fpn_mstrain-poly_3x_coco_20210526_120447-c376f129.pth \
    /root/mmdetection/demo/demo.jpg --work-dir work_dirs/mask_rcnn/ \
    --device cuda --show --dump-info

4--C++ inference test

# Go to the MMDeploy root directory

cd ${MMDEPLOY_DIR}

## On later runs you can start from this step

# Enter the example folder

cd build/install/example

Build object_detection.cpp

# Build object_detection.cpp

mkdir -p build && cd build

cmake -DMMDeploy_DIR=${MMDEPLOY_DIR}/build/install/lib/cmake/MMDeploy ..

make object_detection

# Set the log level

export SPDLOG_LEVEL=warn

Run the instance segmentation program

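# Run the instance segmentation program (argument 1: device, here cuda for GPU acceleration; argument 2: path to the converted model directory; argument 3: path to the test image)

./object_detection cuda /root/MMDeploy/work_dirs/mask_rcnn/ /root/mmdetection/demo/demo.jpg # test image

./object_detection cuda /root/MMDeploy/work_dirs/mask_rcnn/ /root/MMDeploy/tests/data/tiger.jpeg # test image

# View the output image

ls

xdg-open output_detection.png

5--Modify object_detection.cpp to display the inference time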

#include <iostream> // newly added

#include <ctime> // newly added

Add timing code immediately before and after the inference call "status = mmdeploy_detector_apply(detector, &mat, 1, &bboxes, &res_count);":

clock_t startTime, endTime; // newly added
startTime = clock(); // start timing the inference (newly added)
status = mmdeploy_detector_apply(detector, &mat, 1, &bboxes, &res_count); // inference call
endTime = clock(); // stop timing the inference (newly added)
std::cout << "The inference time is: " << (double)(endTime - startTime) / CLOCKS_PER_SEC << "s" << "!!!" << std::endl; // print the elapsed time (newly added)

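7--Additional issues

① When converting a model through the Python API, this error appears: Could not load the Qt platform plugin "xcb"

Probable cause: I later installed different versions of OpenCV and PyQt5 in the same Conda environment, which made the two packages incompatible.

Solution: downgrade opencv-python and PyQt5.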

pip install opencv-python==4.3.0.36 PyQt5==5.15.2