3. Neural Networks

Introduction to Deep Learning

Files and code for this article: GitHub link

1. Activation functions

- sigmoid

$$h(x) = \frac{1}{1 + e^{-x}}$$

    import numpy as np

    # The name is spelled "sigmod" because predict() further down calls it by that name
    def sigmod(x):
        return 1 / (1 + np.exp(-x))

- ReLU

$$h(x) = \begin{cases} x & (x > 0) \\ 0 & (x \le 0) \end{cases}$$
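The article gives code for sigmoid but not for ReLU. A minimal sketch of the piecewise definition above could look like this; the function name `relu` is my own choice and is not taken from the article's repository.

```python
import numpy as np

def relu(x):
    # Element-wise max(0, x): positive inputs pass through, the rest become 0
    return np.maximum(0, x)

out = relu(np.array([-2.0, 0.0, 3.5]))
print(out)
```

`np.maximum` compares element by element, so the same function accepts a scalar, a single sample, or a whole batch.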

- softmax function (commonly used for classification)

$$y_k = \frac{e^{a_k}}{\sum_{i=1}^n e^{a_i}}$$

Note: softmax computes exponentials, so it overflows easily.

Solution:

$$y_k = \frac{e^{a_k}}{\sum_{i=1}^n e^{a_i}} = \frac{C e^{a_k}}{C \sum_{i=1}^n e^{a_i}} = \frac{\exp(a_k + \log C)}{\sum_{i=1}^n \exp(a_i + \log C)} = \frac{\exp(a_k + C')}{\sum_{i=1}^n \exp(a_i + C')}$$

Here $C' = \log C$ is an arbitrary constant. In other words, adding the same constant to (or subtracting it from) every input before the exponentiation leaves the softmax result unchanged, so we can subtract the maximum of the input signals.

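softmax implementation:

    def softmax(a):
        # Subtract the maximum along the last axis so that np.exp cannot overflow;
        # axis=-1 keeps each row separate when a is a batch (2-D array)
        c = np.max(a, axis=-1, keepdims=True)
        exp_a = np.exp(a - c)  # broadcasting subtracts c from every element
        return exp_a / np.sum(exp_a, axis=-1, keepdims=True)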
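To see why the subtraction matters, here is a small sketch that compares a naive softmax with a shifted one on large inputs. The helper names `softmax_naive` and `softmax_stable` and the input values are made up for this illustration.

```python
import numpy as np

def softmax_naive(a):
    # Direct translation of the formula, no protection against overflow
    exp_a = np.exp(a)
    return exp_a / np.sum(exp_a)

def softmax_stable(a):
    # Same formula after subtracting the largest input
    exp_a = np.exp(a - np.max(a))
    return exp_a / np.sum(exp_a)

a = np.array([1010.0, 1000.0, 990.0])

# Silence the overflow warnings so the effect is visible in the values only
with np.errstate(over="ignore", invalid="ignore"):
    naive = softmax_naive(a)  # exp(1010) overflows to inf, and inf / inf is nan

stable = softmax_stable(a)

print(naive)
print(stable)
print(np.sum(stable))
```

The naive version returns only `nan`, while the shifted version returns a valid distribution that sums to 1. For small inputs, where nothing overflows, both versions agree.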

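2. Inference on the MNIST dataset

Importing the dataset

    import sys, os
    import numpy as np

    # Add the parent directory to sys.path (Python's module search path)
    # so that files in it can be imported
    sys.path.append(os.pardir)

    # dataset.mnist is a Python file in the dataset folder that preprocesses the dataset
    from dataset.mnist import load_mnist

    # Load the dataset (it is downloaded on the first call)
    (x_train, t_train), (x_test, t_test) = load_mnist(normalize=True, flatten=True, one_hot_label=True)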

Displaying an MNIST image

    img = x_train[0]
    label = t_train[0]

    # Reshape the flattened 784-element image to (28, 28)
    img = img.reshape(28, 28)

    # View it with matplotlib.pyplot
    import matplotlib.pyplot as plt
    plt.imshow(img)
    plt.show()

Result:

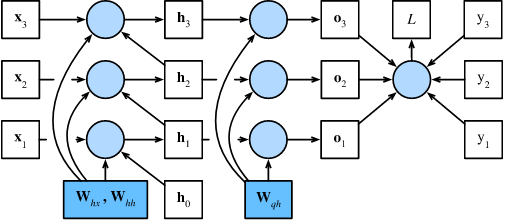

Forward inference

Function definitions:

    import pickle

    # We are only testing, so only the test set is needed
    def get_data():
        (x_train, t_train), (x_test, t_test) = load_mnist(normalize=True, flatten=True, one_hot_label=True)
        return x_test, t_test

    # Initialize the network by reading previously saved weights from a file
    # (training has not been covered yet, so inference uses the given parameters)
    def init_network(file_path):
        with open(file_path, 'rb') as f:
            network = pickle.load(f)
        return network

    # Run the forward pass and return the predicted class probabilities
    def predict(network, x):
        W1, W2, W3 = network['W1'], network['W2'], network['W3']
        b1, b2, b3 = network['b1'], network['b2'], network['b3']

        a1 = np.dot(x, W1) + b1
        z1 = sigmod(a1)
        a2 = np.dot(z1, W2) + b2
        z2 = sigmod(a2)
        a3 = np.dot(z2, W3) + b3
        z3 = softmax(a3)

        return z3

Running inference:

    # Run inference
    x, t = get_data()
    network = init_network("sample_weight.pkl")

    # cnt counts the correct predictions
    cnt = 0

    # Iterate over every sample
    for i in range(x.shape[0]):
        y = predict(network, x[i])
        h = np.argmax(y)  # index of the largest value in y
        if h == np.argmax(t[i]):
            cnt += 1

    # cnt ends up as 9352

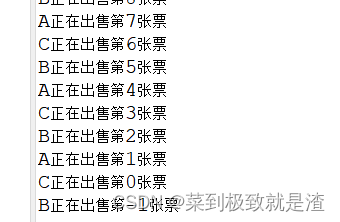
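The comparison `h == np.argmax(t[i])` works because the labels were loaded with `one_hot_label=True`, so `np.argmax` turns a one-hot row back into a class index. The sketch below replays the counting loop on three made-up samples; the values of `y` and `t` are invented for illustration and are not outputs of the real network.

```python
import numpy as np

# Made-up one-hot labels for three samples and ten classes
t = np.zeros((3, 10))
t[0, 7] = 1
t[1, 2] = 1
t[2, 1] = 1

# Made-up network outputs: every class gets 0.05 except one favoured class
y = np.full((3, 10), 0.05)
y[0, 7] = 0.55  # matches the label 7
y[1, 3] = 0.55  # wrong, the label is 2
y[2, 1] = 0.55  # matches the label 1

cnt = 0
for i in range(t.shape[0]):
    if np.argmax(y[i]) == np.argmax(t[i]):
        cnt += 1

accuracy = cnt / t.shape[0]
print(cnt, accuracy)
```

Applied to the run above, 9352 correct predictions out of the 10,000 test images correspond to an accuracy of 93.52%.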

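3. Batch processing

The prediction loop above handles one sample at a time. Now consider handling several samples at once, that is, batch processing.

Several images are packed into a single input (one image is one sample, so several images are several samples). Such a packed input is called a batch.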
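A quick way to see what batching does is to trace array shapes through the matrix products. The sketch below uses random weights and hypothetical layer sizes (784, 50, 10); the real sizes come from `sample_weight.pkl` and are not stated in this article.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical layer sizes, chosen only to trace shapes
W1, b1 = rng.normal(size=(784, 50)), np.zeros(50)
W2, b2 = rng.normal(size=(50, 10)), np.zeros(10)

def forward(x):
    # Affine layers only; activations are omitted because they do not change shapes
    a1 = np.dot(x, W1) + b1
    return np.dot(a1, W2) + b2

single = rng.random(784)        # one flattened image
batch = rng.random((100, 784))  # 100 flattened images stacked as rows

print(forward(single).shape)  # (10,)
print(forward(batch).shape)   # (100, 10)
```

Each row of the batched output is the result for the corresponding input row, so one matrix product replaces 100 separate calls.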

    # Run inference
    x, t = get_data()
    network = init_network("sample_weight.pkl")

    # batch_size is the number of samples processed per batch
    batch_size = 100

    # cnt counts the correct predictions
    cnt = 0

    # Iterate over the samples one batch at a time
    for i in range(0, x.shape[0], batch_size):
        y = predict(network, x[i:i+batch_size])
        # axis=1: search along the columns of each row, giving one index per sample
        h = np.argmax(y, axis=1)
        cnt += np.sum(h == np.argmax(t[i:i+batch_size], axis=1))

    # cnt is still 9352
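The `axis` argument is the easiest part of the batched loop to get wrong, so here is a small sketch with made-up scores for four samples and three classes. The arrays are invented for illustration.

```python
import numpy as np

# Made-up scores: 4 samples (rows) x 3 classes (columns)
y = np.array([[0.1, 0.8, 0.1],
              [0.3, 0.1, 0.6],
              [0.2, 0.5, 0.3],
              [0.8, 0.1, 0.1]])

# Made-up one-hot labels for the classes 1, 2, 0, 0
t = np.eye(3)[[1, 2, 0, 0]]

h = np.argmax(y, axis=1)         # best class per sample: [1 2 1 0]
per_column = np.argmax(y, axis=0)  # best sample per class: [3 0 1], not what we want

correct = np.sum(h == np.argmax(t, axis=1))
print(h, per_column, correct)
```

With `axis=1` the result has one entry per sample, which is why it can be compared element by element with the labels. Here three of the four predictions match.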

4. Supplementary notes

Contents of mnist.py in the dataset directory:

    # coding: utf-8
    try:
        import urllib.request
    except ImportError:
        raise ImportError('You should use Python 3.x')
    import os.path
    import gzip
    import pickle
    import os
    import numpy as np

    url_base = 'http://yann.lecun.com/exdb/mnist/'
    key_file = {
        'train_img':'train-images-idx3-ubyte.gz',
        'train_label':'train-labels-idx1-ubyte.gz',
        'test_img':'t10k-images-idx3-ubyte.gz',
        'test_label':'t10k-labels-idx1-ubyte.gz'
    }

    dataset_dir = os.path.dirname(os.path.abspath(__file__))
    save_file = dataset_dir + "/mnist.pkl"

    train_num = 60000
    test_num = 10000
    img_dim = (1, 28, 28)
    img_size = 784

    def _download(file_name):
        file_path = dataset_dir + "/" + file_name

        if os.path.exists(file_path):
            return

        print("Downloading " + file_name + " ... ")
        urllib.request.urlretrieve(url_base + file_name, file_path)
        print("Done")

    def download_mnist():
        for v in key_file.values():
            # v is a value of key_file (a file name), not a key
            _download(v)  # saved as dataset_dir/train-images-idx3-ubyte.gz and so on

    def _load_label(file_name):
        file_path = dataset_dir + "/" + file_name

        print("Converting " + file_name + " to NumPy Array ...")
        with gzip.open(file_path, 'rb') as f:
            labels = np.frombuffer(f.read(), np.uint8, offset=8)
        print("Done")

        return labels

    def _load_img(file_name):
        # file_path is dataset_dir/train-images-idx3-ubyte.gz and so on
        file_path = dataset_dir + "/" + file_name

        print("Converting " + file_name + " to NumPy Array ...")
        with gzip.open(file_path, 'rb') as f:
            # np.frombuffer interprets a buffer as a 1-D array,
            # here the decompressed bytes of the .gz file
            data = np.frombuffer(f.read(), np.uint8, offset=16)
        data = data.reshape(-1, img_size)
        print("Done")

        return data

    # Convert the downloaded files to NumPy arrays
    def _convert_numpy():
        dataset = {}
        dataset['train_img'] = _load_img(key_file['train_img'])
        dataset['train_label'] = _load_label(key_file['train_label'])
        dataset['test_img'] = _load_img(key_file['test_img'])
        dataset['test_label'] = _load_label(key_file['test_label'])

        return dataset

    def init_mnist():
        download_mnist()
        dataset = _convert_numpy()
        print("Creating pickle file ...")
        with open(save_file, 'wb') as f:
            # Serialize the dataset object into f, i.e. dataset_dir + "/mnist.pkl"
            pickle.dump(dataset, f, -1)
        print("Done!")

    def _change_one_hot_label(X):
        T = np.zeros((X.size, 10))
        for idx, row in enumerate(T):
            row[X[idx]] = 1

        return T

    def load_mnist(normalize=True, flatten=True, one_hot_label=False):
        """Load the MNIST dataset

        Parameters
        ----------
        normalize : normalize the pixel values of the images to 0.0~1.0
        one_hot_label :
            if one_hot_label is True, the labels are returned as one-hot arrays
            a one-hot array is an array such as [0,0,1,0,0,0,0,0,0,0]
        flatten : whether to flatten each image into a 1-D array

        Returns
        -------
        (training images, training labels), (test images, test labels)
        """
        if not os.path.exists(save_file):
            init_mnist()

        with open(save_file, 'rb') as f:
            dataset = pickle.load(f)

        if normalize:
            for key in ('train_img', 'test_img'):
                dataset[key] = dataset[key].astype(np.float32)
                dataset[key] /= 255.0

        if one_hot_label:
            dataset['train_label'] = _change_one_hot_label(dataset['train_label'])
            dataset['test_label'] = _change_one_hot_label(dataset['test_label'])

        if not flatten:
            for key in ('train_img', 'test_img'):
                dataset[key] = dataset[key].reshape(-1, 1, 28, 28)

        return (dataset['train_img'], dataset['train_label']), (dataset['test_img'], dataset['test_label'])

    if __name__ == '__main__':
        init_mnist()
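The `offset=16` and `offset=8` arguments in `_load_img` and `_load_label` skip the headers of the IDX files: an image file starts with four big-endian 32-bit integers (magic number 2051, image count, rows, columns), and a label file starts with two (magic number 2049, label count). The sketch below builds tiny synthetic files in memory instead of downloading anything; the pixel and label values are made up.

```python
import gzip
import struct
import numpy as np

# Synthetic image file: 2 images of 28 x 28 pixels with made-up pixel values
num, rows, cols = 2, 28, 28
pixels = (np.arange(num * rows * cols) % 256).astype(np.uint8)
img_header = struct.pack(">IIII", 2051, num, rows, cols)  # 16 bytes
img_gz = gzip.compress(img_header + pixels.tobytes())

# Synthetic label file: 3 made-up labels
labels_in = np.array([5, 0, 4], dtype=np.uint8)
lbl_header = struct.pack(">II", 2049, labels_in.size)     # 8 bytes
lbl_gz = gzip.compress(lbl_header + labels_in.tobytes())

# Read them back the same way mnist.py does
raw_img = gzip.decompress(img_gz)
magic, n, r, c = struct.unpack(">IIII", raw_img[:16])
data = np.frombuffer(raw_img, np.uint8, offset=16).reshape(-1, r * c)

raw_lbl = gzip.decompress(lbl_gz)
labels = np.frombuffer(raw_lbl, np.uint8, offset=8)

print(magic, n, r, c, data.shape)
print(labels)
```

Reading the header explicitly like this is optional. mnist.py simply skips it because the image size is already hard-coded as `img_size = 784`.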
