Extract the archive

Rename the directory

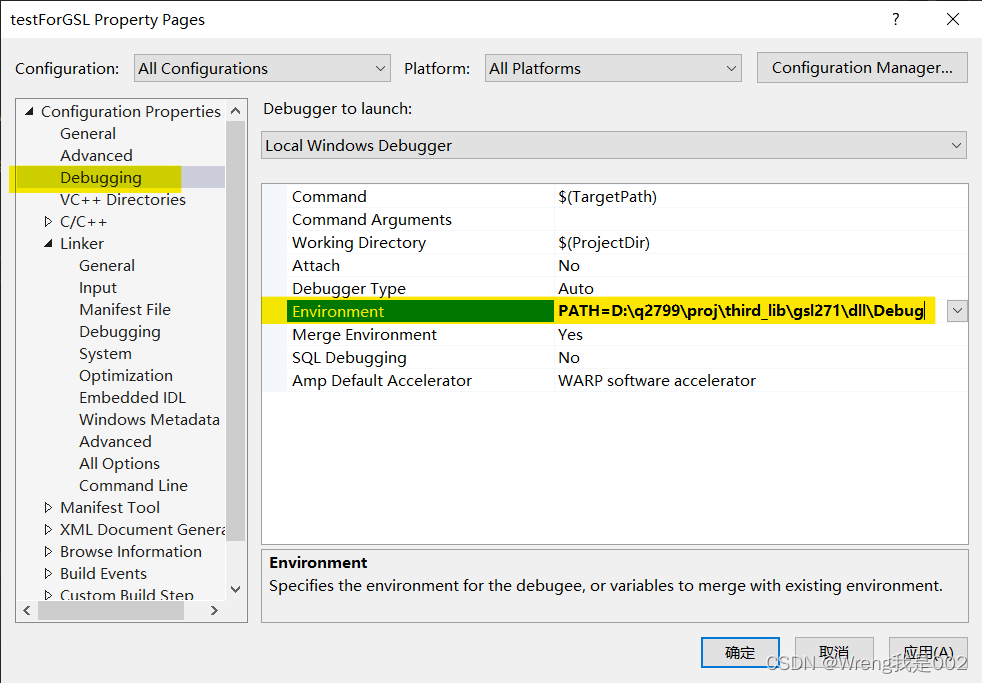

Configure environment variables

Run flume-ng version

Create flume-env.sh from flume-env.sh.template and edit its configuration

Start Flume to transfer Hadoop logs

Start Flume

Extract the archive

tar -zxvf apache-flume-1.9.0-bin.tar.gz -C /opt

Rename the directory

mv /opt/apache-flume-1.9.0-bin /opt/flume

Configure environment variables

vim /etc/profile

Make sure the Hadoop and Hive environment variables are present and correct.

export HADOOP_HOME=/opt/module/hadoop-3.3.1

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export HIVE_HOME=/opt/module/hive-3.1.2

export PATH=$PATH:$HIVE_HOME/bin

export FLUME_HOME=/opt/flume

export PATH=$FLUME_HOME/bin:$PATH

source /etc/profile

Run flume-ng version
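Before going further, it can help to confirm that the profile changes took effect. The following is a minimal sketch and not part of the original steps: the helper name `check_home` is hypothetical, and it only checks that each variable is set and points at an existing directory, then calls `flume-ng version` if the command resolves on PATH.

```shell
# check_home is a hypothetical helper: it reports whether the variable
# named by $1 is set and points at an existing directory.
check_home() {
  name="$1"
  eval "value=\${$name:-}"
  if [ -z "$value" ]; then
    echo "$name is not set"
    return 1
  fi
  if [ ! -d "$value" ]; then
    echo "$name=$value is not a directory"
    return 1
  fi
  echo "$name=$value OK"
}

# The three variables exported in /etc/profile above.
for var in HADOOP_HOME HIVE_HOME FLUME_HOME; do
  check_home "$var" || true
done

# flume-ng should resolve through PATH once $FLUME_HOME/bin is exported.
if command -v flume-ng >/dev/null 2>&1; then
  flume-ng version
else
  echo "flume-ng not found on PATH"
fi
```

If a variable is reported as missing in a new terminal, the profile has probably not been reloaded in that session.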

Delete guava-11.0.2.jar from the lib folder for compatibility with Hadoop 3.1.3.

rm /opt/flume/lib/guava-11.0.2.jar
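The jar is removed because the Guava version bundled with Flume 1.9.0 is much older than the one Hadoop 3 ships, and having both on the classpath typically causes class conflicts in the HDFS sink. A small sketch for checking which Guava jars are present on each side follows; `list_guava` is a hypothetical helper, and the fallback paths are assumptions taken from the paths used elsewhere in these notes.

```shell
# list_guava is a hypothetical helper: print the guava jars that sit
# directly under the directory $1 (prints nothing if $1 does not exist).
list_guava() {
  [ -d "$1" ] || return 0
  find "$1" -maxdepth 1 -name 'guava-*.jar' | sort
}

# Fallback paths are assumptions; adjust them to the actual install locations.
echo "Flume lib:"
list_guava "${FLUME_HOME:-/opt/flume}/lib"
echo "Hadoop common lib:"
list_guava "${HADOOP_HOME:-/opt/hadoop-3.1.3}/share/hadoop/common/lib"
```

After the `rm` step, the Flume side should list nothing.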

Create flume-env.sh from flume-env.sh.template and edit its configuration

In the /opt/flume/conf directory:

cp flume-env.sh.template flume-env.sh

vi flume-env.sh

Set JAVA_HOME in the file:

export JAVA_HOME=/opt/jdk1.8

Start Flume to transfer Hadoop logs

Flume needs the Hadoop jars on its classpath before it can write data to HDFS. Copy the following jars into flume/lib:

cp $HADOOP_HOME/share/hadoop/common/hadoop-common-3.1.3.jar /opt/flume/lib

cp $HADOOP_HOME/share/hadoop/common/lib/hadoop-auth-3.1.3.jar /opt/flume/lib

cp $HADOOP_HOME/share/hadoop/common/lib/commons-configuration2-2.1.1.jar /opt/flume/lib

Copy Hadoop's hdfs-site.xml and core-site.xml into flume/conf:

cp $HADOOP_HOME/etc/hadoop/core-site.xml /opt/flume/conf

cp $HADOOP_HOME/etc/hadoop/hdfs-site.xml /opt/flume/conf
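Once the jars and site files are copied, a quick check can confirm nothing was missed. This is a sketch under assumptions: `require_file` is a hypothetical helper, the jar names are matched by glob so the check does not depend on the exact Hadoop version, and `/opt/flume` is used when `FLUME_HOME` is unset.

```shell
# require_file is a hypothetical helper: succeed if at least one file in
# directory $1 matches the glob pattern $2, otherwise report it as missing.
require_file() {
  for candidate in "$1"/$2; do
    if [ -e "$candidate" ]; then
      echo "found $candidate"
      return 0
    fi
  done
  echo "missing $2 in $1"
  return 1
}

flume_home="${FLUME_HOME:-/opt/flume}"

# Jars copied from the Hadoop distribution.
for pattern in 'hadoop-common-*.jar' 'hadoop-auth-*.jar' 'commons-configuration2-*.jar'; do
  require_file "$flume_home/lib" "$pattern" || true
done

# Hadoop site files that tell the HDFS sink how to reach the cluster.
for name in core-site.xml hdfs-site.xml; do
  require_file "$flume_home/conf" "$name" || true
done
```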


Configure Flume to monitor the NameNode log file

In the flume/conf directory:

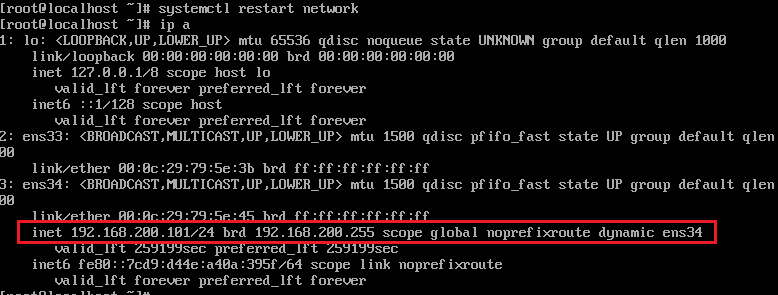

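cp flume-conf.properties.template flume-conf.properties

With Hadoop's default configuration, the NameNode log file is located at $HADOOP_HOME/logs/hadoop-hdfs-namenode-[hostname].log when the NameNode runs as the hdfs user. The user part of the file name is the account that starts the daemon, which is why the configuration below tails hadoop-root-namenode-master.log.

Here, $HADOOP_HOME is the Hadoop installation directory and [hostname] is the name of the host running the NameNode. If safe mode is enabled, there may also be a dedicated safe mode log file at $HADOOP_HOME/logs/hadoop-hdfs-namenode-[hostname]-safemode.log.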
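The naming rule can be sketched as a small function. This assumes the default log layout, that is, `HADOOP_LOG_DIR` and `HADOOP_IDENT_STRING` have not been overridden in hadoop-env.sh; `namenode_log_path` is a hypothetical helper name.

```shell
# namenode_log_path is a hypothetical helper. Arguments:
#   $1: Hadoop installation directory
#   $2: user that starts the NameNode
#   $3: host name of the NameNode
namenode_log_path() {
  printf '%s/logs/hadoop-%s-namenode-%s.log\n' "$1" "$2" "$3"
}

# Values taken from the tail command in the Flume configuration.
namenode_log_path /opt/hadoop-3.1.3 root master
# prints /opt/hadoop-3.1.3/logs/hadoop-root-namenode-master.log

# On a live node, the current user and host name can be substituted instead.
namenode_log_path "${HADOOP_HOME:-/opt/hadoop-3.1.3}" "$(id -un)" "$(uname -n)"
```

If the printed path does not exist, listing `$HADOOP_HOME/logs` shows the actual file name to use in the `tail -F` command.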

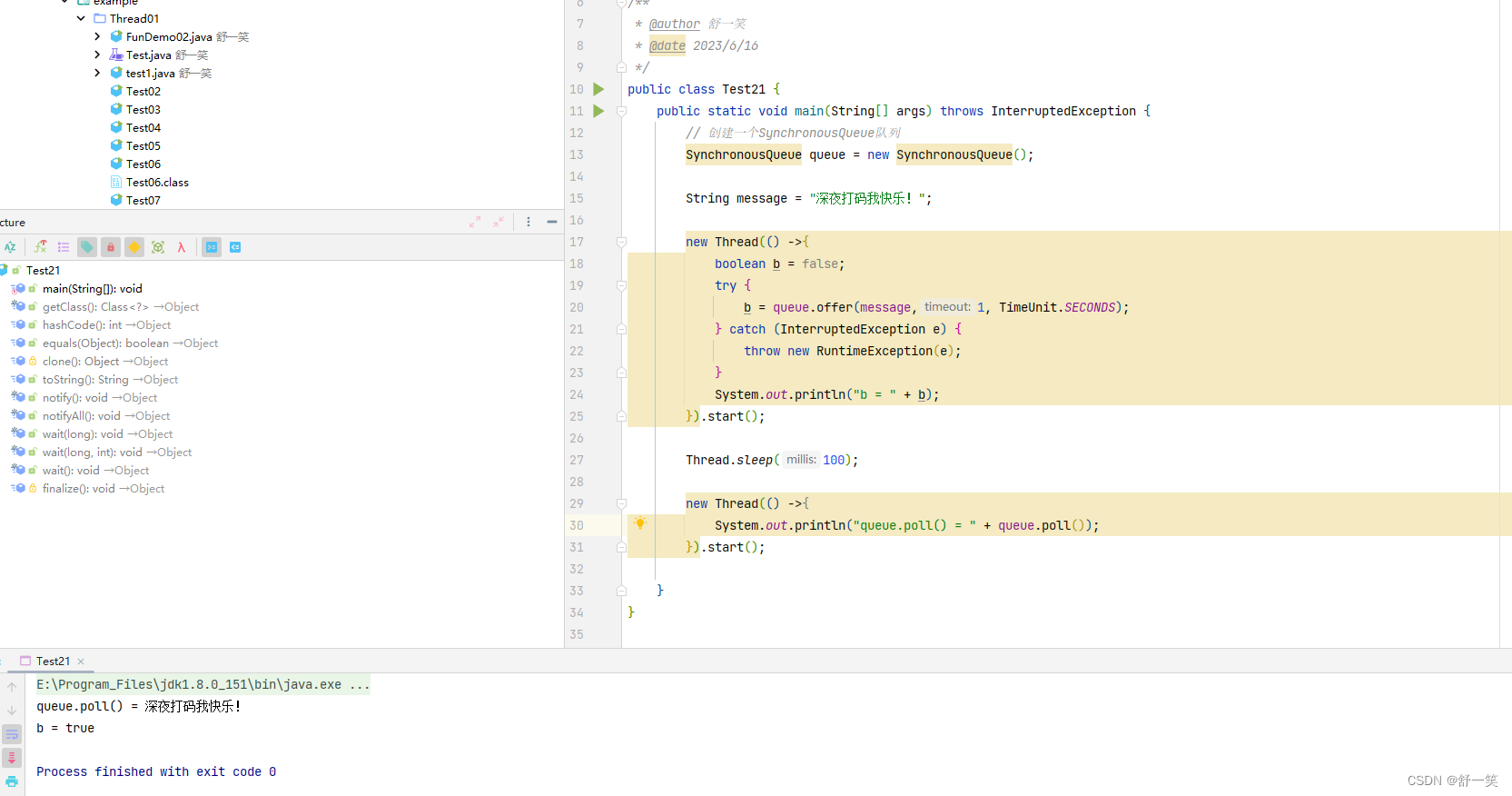

vim flume-conf.properties

a1.sources = r1

a1.sinks = k1

a1.channels = c1

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /opt/hadoop-3.1.3/logs/hadoop-root-namenode-master.log

a1.sources.r1.basenameHeader=true

a1.sources.r1.basenameHeaderKey=fileName

a1.channels.c1.type = memory

a1.sinks.k1.type = hdfs

a1.sinks.k1.hdfs.path = hdfs://master:8020/tmp/flume/%Y%m%d/%H

a1.sinks.k1.hdfs.filePrefix = %{fileName}

a1.sinks.k1.hdfs.fileType=DataStream

a1.sinks.k1.hdfs.useLocalTimeStamp = true

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

Start Flume
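Before starting the agent, it may be worth checking that the configuration is wired consistently: each source lists its channels under `channels` (plural), each sink names exactly one under `channel` (singular), and both must refer to a channel declared in `a1.channels`. The following is a minimal sketch of such a check, not a Flume feature; `check_wiring` is a hypothetical helper that reads a properties file and an agent name.

```shell
# check_wiring is a hypothetical helper. Arguments:
#   $1: Flume properties file   $2: agent name (for example a1)
# It prints one line per reference to an undeclared channel and returns
# a non-zero status if any were found.
check_wiring() {
  awk -v agent="$2" '
    function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
    # Skip comments, blank lines and lines without a key=value pair.
    /^[ \t]*#/ || /^[ \t]*$/ || !/=/ { next }
    {
      key = trim(substr($0, 1, index($0, "=") - 1))
      val = trim(substr($0, index($0, "=") + 1))
      if (key == agent ".channels") {
        # Channels declared for this agent.
        n = split(val, names, /[ \t]+/)
        for (i = 1; i <= n; i++) declared[names[i]] = 1
      } else if (key ~ ("^" agent "\\.sources\\.[^.]+\\.channels$") ||
                 key ~ ("^" agent "\\.sinks\\.[^.]+\\.channel$")) {
        # Channel references made by sources and sinks.
        used[key] = val
      }
    }
    END {
      bad = 0
      for (k in used) {
        n = split(used[k], names, /[ \t]+/)
        for (i = 1; i <= n; i++) {
          if (!(names[i] in declared)) {
            printf "%s references undeclared channel %s\n", k, names[i]
            bad = 1
          }
        }
      }
      exit bad
    }
  ' "$1"
}

# The path below is the one used in these notes; the call is skipped if absent.
conf_file=/opt/flume/conf/flume-conf.properties
if [ -f "$conf_file" ]; then
  check_wiring "$conf_file" a1 && echo "channel wiring looks consistent"
fi
```

The check only covers channel names; it does not validate source or sink properties.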

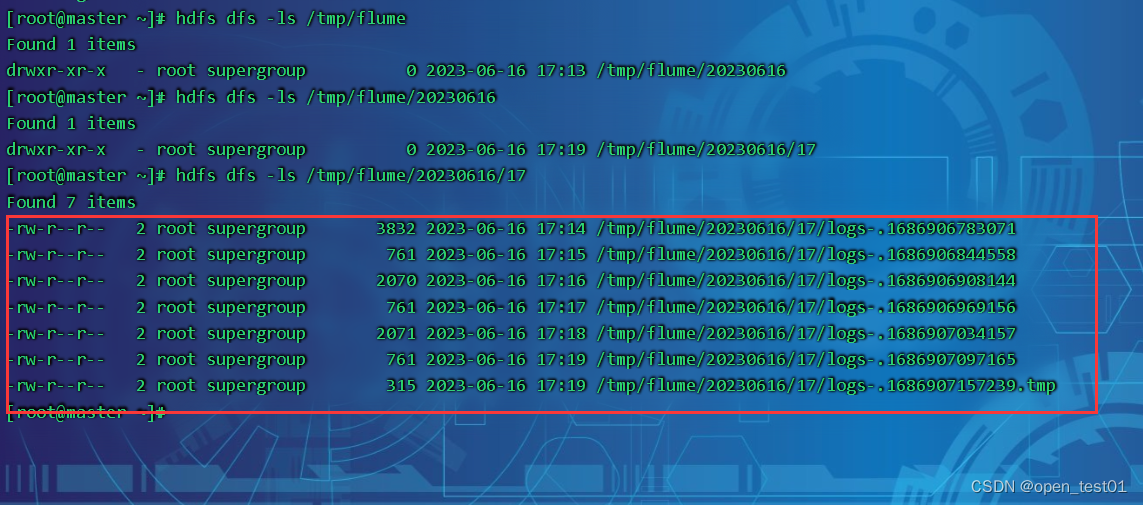

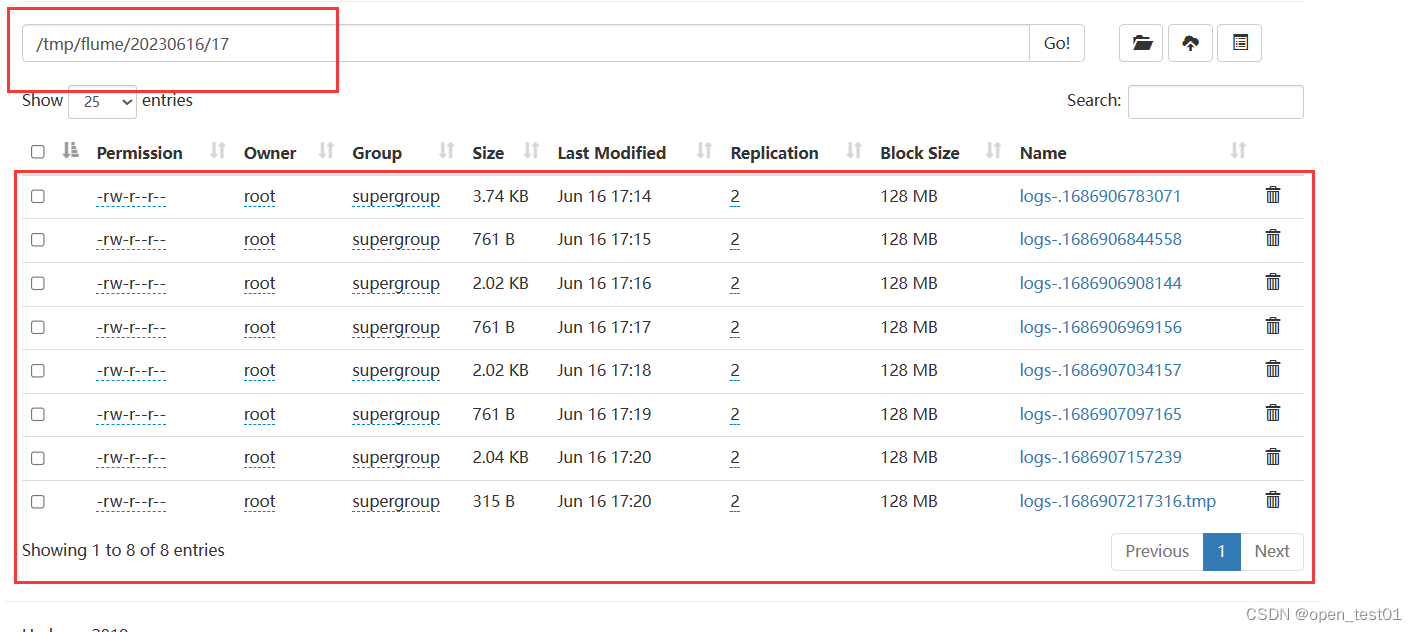

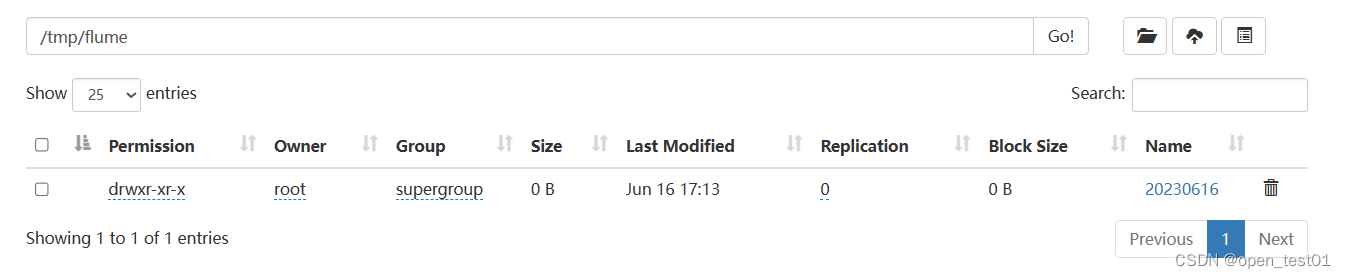

flume-ng agent --conf conf/ --conf-file /opt/flume/conf/flume-conf.properties --name a1 -Dflume.root.logger=DEBUG,console

View the output on HDFS

hdfs dfs -ls /tmp/flume
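Because the sink path is `/tmp/flume/%Y%m%d/%H` and `hdfs.useLocalTimeStamp` is true, files land in a directory named after the agent's local date and hour. A sketch for jumping straight to the current hour's directory follows; `hour_dir` is a hypothetical helper, and it assumes the machine running the check uses the same time zone as the Flume agent.

```shell
# hour_dir is a hypothetical helper: expand the %Y%m%d/%H part of
# hdfs.path for the current local time under the base directory $1.
hour_dir() {
  date "+$1/%Y%m%d/%H"
}

target=$(hour_dir /tmp/flume)
echo "expecting files under $target"

# Only query HDFS when the client is installed on this machine.
if command -v hdfs >/dev/null 2>&1; then
  hdfs dfs -ls "$target" || echo "nothing written for this hour yet"
fi
```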