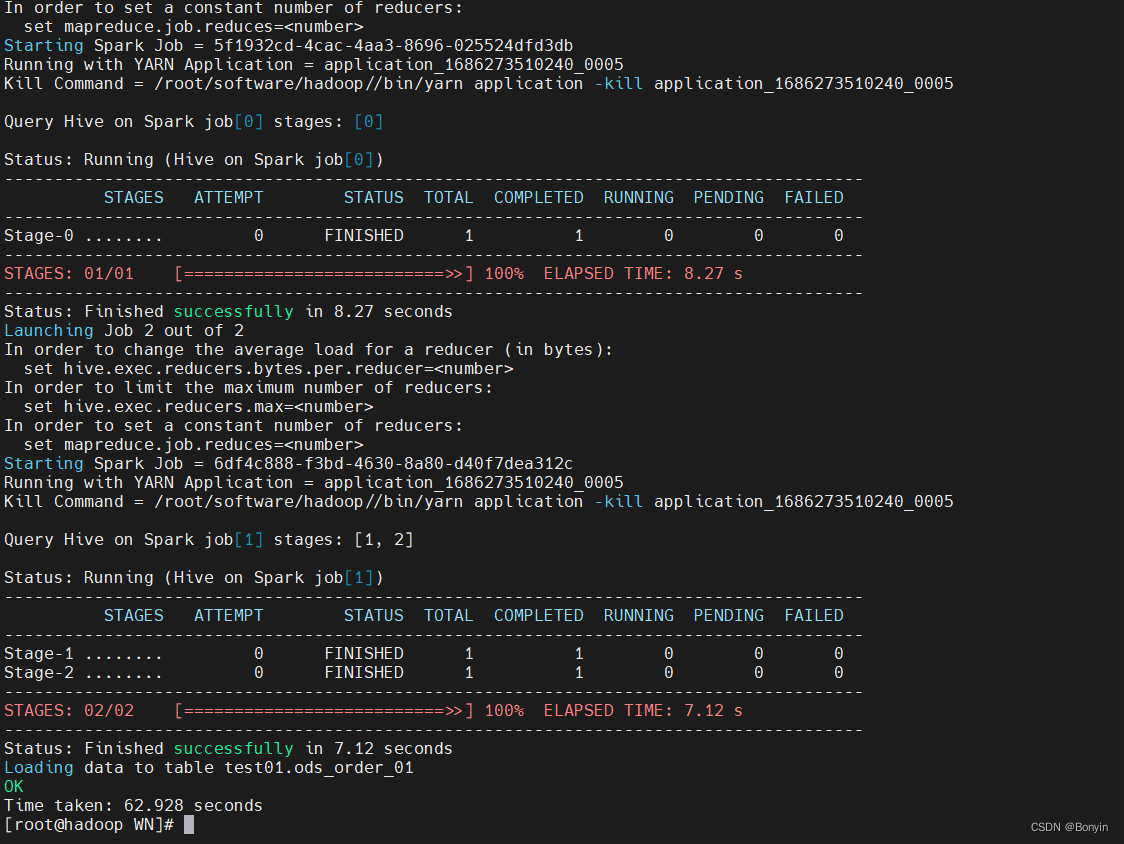

Compiling Spark for Hive on Spark

Software

Hive 2.3.6

Spark 2.0.0

Hadoop 2.7.6

Procedure:

hadoop-2.7.6

1. Install Hadoop. This is straightforward and is not covered here.

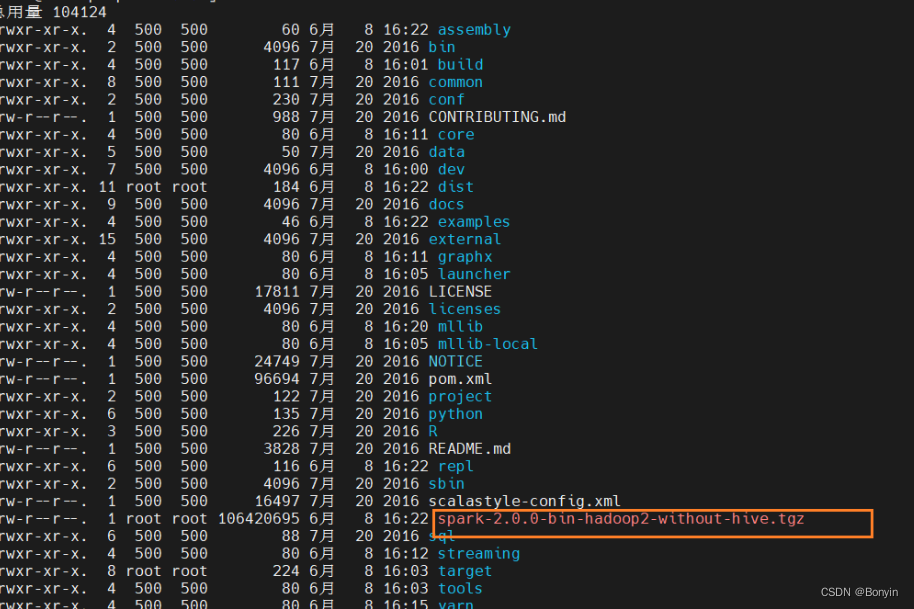

spark-2.0.0

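2. Download the Spark 2.0.0 source from https://archive.apache.org/dist/spark/spark-2.0.0/ (the parent directory, https://archive.apache.org/dist/spark/, lists every Spark release).

3. Compile the Spark source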

[root@master local]# tar -zxvf spark-2.0.0.tgz

[root@master local]# vim ./spark-2.0.0/dev/make-distribution.sh

# Find the following lines in that file and delete them

VERSION=$("$MVN" help:evaluate -Dexpression=project.version $@ 2>/dev/null | grep -v "INFO" | tail -n 1)

SCALA_VERSION=$("$MVN" help:evaluate -Dexpression=scala.binary.version $@ 2>/dev/null\

| grep -v "INFO"\

| tail -n 1)

SPARK_HADOOP_VERSION=$("$MVN" help:evaluate -Dexpression=hadoop.version $@ 2>/dev/null\

| grep -v "INFO"\

| tail -n 1)

SPARK_HIVE=$("$MVN" help:evaluate -Dexpression=project.activeProfiles -pl sql/hive $@ 2>/dev/null\

| grep -v "INFO"\

| fgrep --count "<id>hive</id>";\

# Reset exit status to 0, otherwise the script stops here if the last grep finds nothing\

# because we use "set -o pipefail"

echo -n)

# After deleting them, put these hard-coded values in their place

VERSION=2.0.0

SCALA_VERSION=2.11

SPARK_HADOOP_VERSION=2.7.6
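The manual edit above can also be scripted. The following is a minimal sketch, assuming the block to delete starts at the line beginning with `VERSION=$(` and ends at the `echo -n)` line, as in the excerpt shown. `patch_versions` is a hypothetical helper name, not part of Spark.

```shell
# patch_versions FILE SPARK_VERSION SCALA_VERSION HADOOP_VERSION
# Hypothetical helper: replaces the Maven-evaluated version block in
# make-distribution.sh with hard-coded values.
patch_versions() {
  awk -v spark="$2" -v scala="$3" -v hadoop="$4" '
    # First line of the block: emit the fixed values and start skipping.
    /^VERSION=\$\(/ {
      skipping = 1
      print "VERSION=" spark
      print "SCALA_VERSION=" scala
      print "SPARK_HADOOP_VERSION=" hadoop
    }
    !skipping { print }
    # Last line of the block: resume copying from the next line on.
    skipping && /echo -n\)/ { skipping = 0 }
  ' "$1" > "$1.tmp" || return 1
  # Overwrite in place so the file keeps its executable bit.
  cat "$1.tmp" > "$1" && rm -f "$1.tmp"
}
```

Call it with the path to `make-distribution.sh` followed by the Spark, Scala, and Hadoop versions. Keeping a backup copy of the script first is a sensible precaution.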

Run the build from the root of the Spark source tree:

./dev/make-distribution.sh --name "hadoop2-without-hive" --tgz "-Pyarn,hadoop-provided,hadoop-2.7,parquet-provided"

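The build leaves a .tgz package in the current directory. Copy it to the installation directory on the target machine, then extract and configure it (the steps below assume it is extracted to /usr/local/spark).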
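The extraction step can be sketched as follows. This is an illustration only: `install_spark_tgz` is a hypothetical helper, and the tarball name in the example assumes the `spark-<version>-bin-<name>.tgz` naming that `make-distribution.sh` uses for its `--name` argument.

```shell
# install_spark_tgz TGZ DEST
# Hypothetical helper: unpacks a Spark distribution tarball into DEST,
# dropping the tarball's single top-level directory.
install_spark_tgz() {
  mkdir -p "$2" &&
    tar -xzf "$1" -C "$2" --strip-components=1
}

# Example (paths are assumptions based on the settings used in this guide):
# install_spark_tgz spark-2.0.0-bin-hadoop2-without-hive.tgz /usr/local/spark
```

Extracting straight into the final directory avoids a separate rename and matches the `SPARK_HOME` value configured next.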

[root@master local]# cd ./spark/conf/

[root@master conf]# cp spark-env.sh.template spark-env.sh

[root@master conf]# vim spark-env.sh

# Add the following settings to spark-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_144

export SCALA_HOME=/usr/local/scala

export HADOOP_HOME=/usr/local/hadoop

export HADOOP_CONF_DIR=/usr/local/hadoop/etc/hadoop

export HADOOP_YARN_CONF_DIR=/usr/local/hadoop/etc/hadoop

export SPARK_HOME=/usr/local/spark

export SPARK_WORKER_MEMORY=512m

export SPARK_EXECUTOR_MEMORY=512m

export SPARK_DRIVER_MEMORY=512m

export SPARK_DIST_CLASSPATH=$(/usr/local/hadoop/bin/hadoop classpath)
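Most startup failures at this point come from a path in spark-env.sh that does not exist on the machine. A minimal sketch of a sanity check, assuming every setting is written as a single `export NAME=value` line as above. `check_env_paths` is a hypothetical helper; values that are not absolute paths (memory sizes, command substitutions) are skipped.

```shell
# check_env_paths FILE
# Hypothetical helper: for every "export NAME=/absolute/path" line in FILE,
# report the paths that do not exist. Returns 1 if any path is missing.
check_env_paths() {
  missing=0
  while IFS='=' read -r name value || [ -n "$name" ]; do
    case "$name" in
      "export "*) name=${name#export } ;;
      *) continue ;;                      # not an export line
    esac
    case "$value" in
      /*) [ -e "$value" ] || { echo "missing: $name=$value"; missing=1; } ;;
    esac
  done < "$1"
  return "$missing"
}

# Example:
# check_env_paths /usr/local/spark/conf/spark-env.sh
```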

Install Hive

[root@master local]# tar -zxvf apache-hive-2.3.7-bin.tar.gz

[root@master local]# mv apache-hive-2.3.7-bin hive

[root@master local]# vim /usr/local/hive/conf/hive-site.xml

# Add the following configuration to the file

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<!-- Print column names in query results -->

<name>hive.cli.print.header</name>

<value>true</value>

</property>

<property>

<!-- Show the current database in the CLI prompt -->

<name>hive.cli.print.current.db</name>

<value>true</value>

</property>

<property>

<!-- Default warehouse location; this is a path on HDFS -->

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<!-- JDBC URL of the metastore database (MySQL 5.x) -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive_metastore?createDatabaseIfNotExist=true</value>

</property>

<!-- JDBC driver class for MySQL 5.x -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<!-- MySQL user name -->

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<!-- MySQL password -->

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<property>

<!-- Use Spark as the execution engine -->

<name>hive.execution.engine</name>

<value>spark</value>

</property>

<property>

<name>hive.enable.spark.execution.engine</name>

<value>true</value>

</property>

<property>

<name>spark.home</name>

<value>/usr/local/spark</value>

</property>

<property>

<name>spark.master</name>

<value>yarn</value>

</property>

<property>

<name>spark.eventLog.enabled</name>

<value>true</value>

</property>

<property>

<!-- Directory on HDFS where the Spark event logs of Hive jobs are stored -->

<name>spark.eventLog.dir</name>

<value>hdfs://master:9000/spark-hive-jobhistory</value>

</property>

<property>

<name>spark.executor.memory</name>

<value>512m</value>

</property>

<property>

<name>spark.driver.memory</name>

<value>512m</value>

</property>

<property>

<name>spark.serializer</name>

<value>org.apache.spark.serializer.KryoSerializer</value>

</property>

<property>

<!-- HDFS path where the jars are stored -->

<name>spark.yarn.jars</name>

<value>hdfs://master:9000/spark-jars/*</value>

</property>

<property>

<name>hive.spark.client.server.connect.timeout</name>

<value>300000</value>

</property>

</configuration>
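With this many properties it helps to confirm what Hive will actually read. The following is a rough sketch, not a real XML parser: `hive_prop` is a hypothetical helper that assumes each `<name>` and `<value>` element sits on a single line, as in the file above, and it does not decode XML entities.

```shell
# hive_prop FILE NAME
# Hypothetical helper: prints the <value> that follows <name>NAME</name>.
hive_prop() {
  awk -v key="$2" '
    /<name>/ {
      n = $0
      gsub(/.*<name>|<\/name>.*/, "", n)   # keep only the element text
      found = (n == key)
    }
    found && /<value>/ {
      v = $0
      gsub(/.*<value>|<\/value>.*/, "", v)
      print v
      exit
    }
  ' "$1"
}

# Example:
# hive_prop /usr/local/hive/conf/hive-site.xml hive.execution.engine
```

If the example prints nothing, the property is absent or misspelled in hive-site.xml.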

Details:

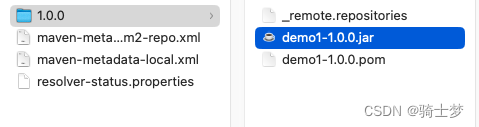

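Copy every jar from the jars directory of the compiled Spark into hive/lib, then upload all jars in hive/lib to the HDFS directory hdfs://master:9000/spark-jars/ (the location that spark.yarn.jars points to).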
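The copy-and-upload step might look like the sketch below. `publish_spark_jars` is a hypothetical wrapper; it assumes the `hdfs` command is on the PATH and that HDFS is already running. The `-f` flag overwrites jars that already exist in the target directory, so the step can be repeated after a rebuild.

```shell
# publish_spark_jars SPARK_HOME HIVE_HOME HDFS_DIR
# Sketch of the copy-and-upload step; requires the hdfs command on PATH.
publish_spark_jars() {
  # Copy the jars of the compiled Spark into Hive's lib directory.
  cp "$1"/jars/*.jar "$2/lib/" || return 1
  # Create the target directory on HDFS and upload everything in hive/lib.
  hdfs dfs -mkdir -p "$3" || return 1
  hdfs dfs -put -f "$2"/lib/*.jar "$3/"
}

# Example with the paths used in this guide:
# publish_spark_jars /usr/local/spark /usr/local/hive hdfs://master:9000/spark-jars
```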

Startup sequence

1. Start Hadoop.

2. Start Spark.

3. Start the Hive metastore: hive --service metastore &

4. Run Hive queries.
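The first three startup steps can be collected in one function. This is a sketch under stated assumptions: `HADOOP_HOME`, `SPARK_HOME`, and `HIVE_HOME` are set, "start Hadoop" means running the `start-dfs.sh` and `start-yarn.sh` scripts, and "start Spark" means running Spark's `sbin/start-all.sh`. `start_hive_on_spark` is a hypothetical name.

```shell
# start_hive_on_spark METASTORE_LOG
# Sketch of the startup order. Assumes HADOOP_HOME, SPARK_HOME and HIVE_HOME
# are set and that Hadoop and Spark ship their usual sbin scripts.
start_hive_on_spark() {
  "$HADOOP_HOME/sbin/start-dfs.sh" || return 1    # 1. HDFS
  "$HADOOP_HOME/sbin/start-yarn.sh" || return 1   #    and YARN
  "$SPARK_HOME/sbin/start-all.sh" || return 1     # 2. Spark standalone daemons
  # 3. Metastore in the background, with its output kept in a log file.
  "$HIVE_HOME/bin/hive" --service metastore > "$1" 2>&1 &
  echo "metastore started with pid $!"
}

# Example:
# start_hive_on_spark /tmp/hive-metastore.log
```

Each step stops the sequence if it fails, so the metastore is never started on top of a cluster that did not come up. Step 4 is then an ordinary `hive` session.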