End-to-end deployment of a detection model on the RV1126 platform

- Model training

- ONNX export

- ONNX model simplification

- Python deployment

- C++ deployment

Project repository: https://github.com/liuyuan000/Rv1126_YOLOv5-Lite

Model training

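This time I use YOLOv5 Lite, a lighter and faster YOLOv5 variant that is easy to deploy. Its main change is removing the Focus layer and its four slice operations, which keeps the accuracy loss from quantization within an acceptable range.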

Official repository: https://github.com/ppogg/YOLOv5-Lite

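Training works with any dataset in YOLO format. The YOLOv5-Lite-s model already gives good detection results, so I use s as the example here:

Change the dataset paths in coco.yaml to your own, then run the following command to train.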

python train.py --data coco.yaml --cfg v5lite-s.yaml --weights v5lite-s.pt --batch-size 128

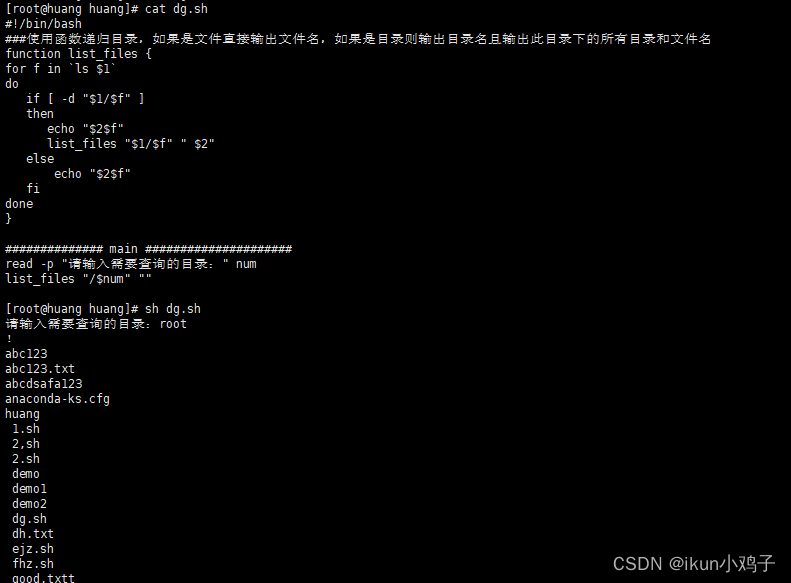

I trained only on a mask dataset here; the detection results are as follows:

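ONNX export

The trained model files are in the exp folder (runs/train/exp8/weights in my case); export them with export.py.

Note that opset_version should not be set too high; I use 11: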

torch.onnx.export(model, img, f, verbose=False, opset_version=11, input_names=['images'],

Modify the model path and the inference image size in export.py. The image size must be a multiple of 32; I use 640x640 here. Opening the exported model in Netron gives:
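The multiple-of-32 requirement follows from the largest downsampling factor of the network (32). As a minimal sketch, a size can be checked or rounded up before it is written into export.py; the helper names `check_input_size` and `round_up_to_multiple` are made up for illustration and are not part of YOLOv5-Lite.

```python
def round_up_to_multiple(size: int, base: int = 32) -> int:
    """Return the smallest multiple of `base` that is >= size."""
    if size <= 0:
        raise ValueError("size must be positive")
    return ((size + base - 1) // base) * base


def check_input_size(width: int, height: int, base: int = 32) -> bool:
    """True when both sides are exact multiples of `base`."""
    return width % base == 0 and height % base == 0


if __name__ == "__main__":
    print(check_input_size(640, 640))   # the size used in this post
    print(round_up_to_multiple(600))    # next valid size for a 600-pixel side
```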

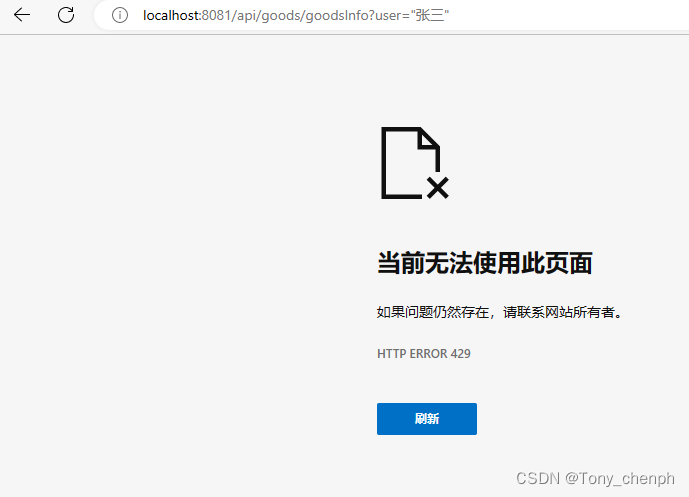

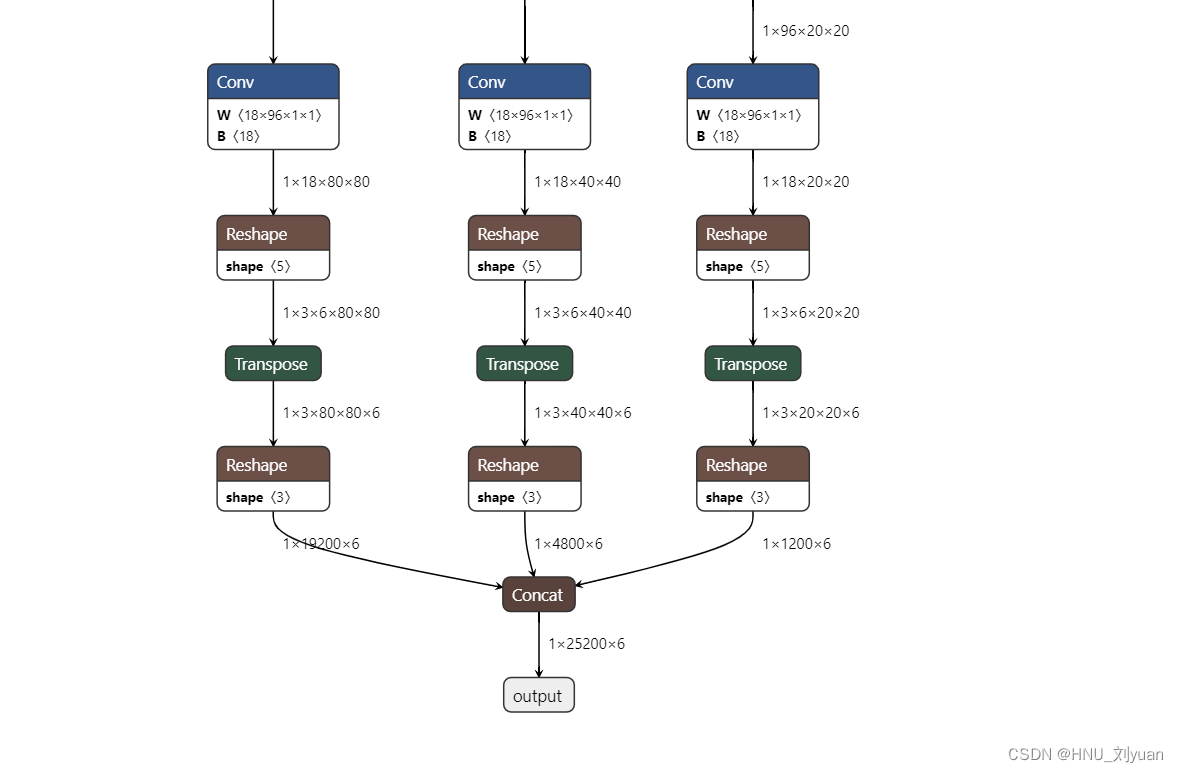

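The exported model's output combines three scales, i.e. the input downsampled by 8, 16, and 32. The predictions of the three scales are concatenated into a single output with 25200 rows. Each row has 6 values: center x, center y, width w, height h, the objectness score, and the probability that the object is a mask.

In general this dimension is 5 + the number of classes.

$$25200 = 3 \times \left[(640/8)^2 + (640/16)^2 + (640/32)^2\right]$$

The factor 3 is the number of preset anchors per grid cell, so 25200 is the total number of preset anchor boxes; see the YOLO papers for details.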
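The row count can be reproduced with a few lines of arithmetic. This is only a worked example; `count_predictions` is a hypothetical helper, and the defaults (640 input, strides 8/16/32, 3 anchors per cell) are the values used in this post.

```python
def count_predictions(img_size=640, strides=(8, 16, 32), anchors_per_cell=3):
    """Return the number of predictions per scale and their total."""
    per_scale = [anchors_per_cell * (img_size // s) ** 2 for s in strides]
    return per_scale, sum(per_scale)


if __name__ == "__main__":
    per_scale, total = count_predictions()
    print(per_scale, total)
```

For a 640x640 input the three scales contribute 19200, 4800, and 1200 rows, which add up to 25200.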

ONNX model simplification

The original project does not provide ONNX simplification code. It is short, so here it is:

from onnxsim import simplify

import onnx

in_path='/home/liuyuan/YOLOv5-Lite/runs/train/exp8/weights/best.onnx'

onnx_model = onnx.load(in_path) # load onnx model

graph = onnx_model.graph

model_simp, check = simplify(onnx_model)

assert check, "Simplified ONNX model could not be validated"

output_path='/home/liuyuan/YOLOv5-Lite/runs/train/exp8/weights/simplify.onnx'

onnx.save(model_simp, output_path)

print('finished exporting onnx')

Opening the simplified model shows:

The output part is clearly more compact. That said, rknn may also apply ONNX optimizations by default.

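Python deployment

The deployment code is shown below. After connecting the development board, set the model paths and run it. The part worth attention is post-processing, which I wrote with reference to cpp_demo/onnxruntime/v5lite.cpp in the YOLOv5-Lite project.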

import cv2
import numpy as np
from rknn.api import RKNN

# Grid sizes of the three output scales for a 640x640 input (strides 8, 16, 32)
GRID0 = 80
GRID1 = 40
GRID2 = 20
LISTSIZE = 6   # values per prediction: x, y, w, h, objectness, class score
SPAN = 3       # anchors per grid cell
NUM_CLS = 1
MAX_BOXES = 500
OBJ_THRESH = 0.5
NMS_THRESH = 0.6
IMG_SIZE = 640

CLASSES = ["mask"]

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def nms_boxes(boxes, scores):
    """Suppress non-maximal boxes.

    # Arguments
        boxes: ndarray, boxes of objects as [x, y, w, h].
        scores: ndarray, scores of objects.

    # Returns
        keep: ndarray, index of effective boxes.
    """
    x = boxes[:, 0]
    y = boxes[:, 1]
    w = boxes[:, 2]
    h = boxes[:, 3]

    areas = w * h
    order = scores.argsort()[::-1]

    keep = []
    while order.size > 0:
        i = order[0]
        keep.append(i)

        # Intersection of the best box with all remaining boxes
        xx1 = np.maximum(x[i], x[order[1:]])
        yy1 = np.maximum(y[i], y[order[1:]])
        xx2 = np.minimum(x[i] + w[i], x[order[1:]] + w[order[1:]])
        yy2 = np.minimum(y[i] + h[i], y[order[1:]] + h[order[1:]])

        w1 = np.maximum(0.0, xx2 - xx1 + 0.00001)
        h1 = np.maximum(0.0, yy2 - yy1 + 0.00001)
        inter = w1 * h1

        ovr = inter / (areas[i] + areas[order[1:]] - inter)
        inds = np.where(ovr <= NMS_THRESH)[0]
        order = order[inds + 1]
    keep = np.array(keep)
    return keep

def yolov3_post_process(input_data):
    # The name looks like a leftover from a YOLOv3 example. This function decodes
    # the YOLOv5-Lite output, whose rows are ordered by scale, anchor, grid row
    # and grid column.
    stride = [8, 16, 32]
    anchors = [[10, 13], [16, 30], [33, 23], [30, 61], [62, 45],
               [59, 119], [116, 90], [156, 198], [373, 326]]

    generate_boxes = []
    pred_index = 0
    ratiow = 1
    ratioh = 1
    print(input_data.shape)
    for n in range(3):
        num_grid_x = int(IMG_SIZE / stride[n])
        num_grid_y = int(IMG_SIZE / stride[n])
        for q in range(3):
            anchor_w = anchors[n*3+q][0]
            anchor_h = anchors[n*3+q][1]
            for i in range(num_grid_y):
                for j in range(num_grid_x):
                    preds = input_data[pred_index]
                    box_score = preds[4]
                    if box_score > OBJ_THRESH:
                        # Pick the class with the highest score
                        class_score = 0
                        class_ind = 0
                        for k in range(NUM_CLS):
                            if preds[k+5] > class_score:
                                class_score = preds[k+5]
                                class_ind = k
                        # Decode center and size in input-image pixels
                        cx = (preds[0]*2-0.5+j)*stride[n]
                        cy = (preds[1]*2-0.5+i)*stride[n]
                        w = np.power(preds[2]*2, 2)*anchor_w
                        h = np.power(preds[3]*2, 2)*anchor_h
                        xmin = (cx - 0.5*w)*ratiow
                        ymin = (cy - 0.5*h)*ratioh
                        generate_boxes.append([xmin, ymin, w, h, class_score, class_ind])
                    pred_index += 1
    print(generate_boxes)
    if not generate_boxes:
        # Nothing passed OBJ_THRESH; indexing an empty array below would fail
        return None, None, None

    generate_boxes = np.array(generate_boxes)
    classes = generate_boxes[:, 5]

    # Run NMS separately for each class
    nboxes, nclasses, nscores = [], [], []
    for c in set(classes):
        inds = np.where(classes == c)
        b = generate_boxes[inds][:, 0:4]
        s = generate_boxes[inds][:, 4]
        cls = generate_boxes[inds][:, 5]
        keep = nms_boxes(b, s)
        nboxes.append(b[keep])
        nclasses.append(cls[keep])
        nscores.append(s[keep])

    boxes = np.concatenate(nboxes)
    classes = np.concatenate(nclasses)
    scores = np.concatenate(nscores)
    return boxes, classes, scores

def draw(image, boxes, scores, classes):
    """Draw the boxes on the image.

    # Argument:
        image: original image.
        boxes: ndarray, boxes of objects as [x, y, w, h].
        scores: ndarray, scores of objects.
        classes: ndarray, classes of objects.
    """
    for box, score, cl in zip(boxes, scores, classes):
        x, y, w, h = box
        cl = int(cl)
        print('class: {}, score: {}'.format(CLASSES[cl], score))
        print('box coordinate left,top,right,bottom: [{}, {}, {}, {}]'.format(x, y, x+w, y+h))
        # Round to the nearest pixel and clip to the image
        left = max(0, int(np.floor(x + 0.5)))
        top = max(0, int(np.floor(y + 0.5)))
        right = min(image.shape[1], int(np.floor(x + w + 0.5)))
        bottom = min(image.shape[0], int(np.floor(y + h + 0.5)))

        cv2.rectangle(image, (left, top), (right, bottom), (255, 0, 0), 2)
        cv2.putText(image, '{0} {1:.2f}'.format(CLASSES[cl], score),
                    (left, top - 6),
                    cv2.FONT_HERSHEY_SIMPLEX,
                    0.6, (0, 0, 255), 2)
    cv2.imwrite("result.jpg", image)

if __name__ == '__main__':
    ONNX_MODEL = './simplify.onnx'
    RKNN_MODEL_PATH = './yolov5.rknn'
    im_file = './mask.jpg'  # image to run inference on
    DATASET = './dataset.txt'

    # Create RKNN object
    rknn = RKNN()

    NEED_BUILD_MODEL = True
    if NEED_BUILD_MODEL:
        # Load ONNX model
        print('--> Loading model')
        ret = rknn.load_onnx(model=ONNX_MODEL)
        if ret != 0:
            print('load onnx model failed!')
            exit(ret)
        print('done')

        rknn.config(reorder_channel='0 1 2', mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]], target_platform='rv1126')

        # Build model
        print('--> Building model')
        ret = rknn.build(do_quantization=True, dataset=DATASET)
        if ret != 0:
            print('build model failed.')
            exit(ret)
        print('done')

        # Export rknn model
        print('--> Export RKNN model')
        ret = rknn.export_rknn(RKNN_MODEL_PATH)
        if ret != 0:
            print('Export rknn model failed.')
            exit(ret)
        print('done')
    else:
        # Direct load rknn model
        print('Loading RKNN model')
        ret = rknn.load_rknn(RKNN_MODEL_PATH)
        if ret != 0:
            print('load rknn model failed.')
            exit(ret)
        print('done')

    print('--> init runtime')
    # ret = rknn.init_runtime()
    ret = rknn.init_runtime(target='rv1126')
    if ret != 0:
        print('init runtime failed.')
        exit(ret)
    print('done')

    img = cv2.imread(im_file)
    img = cv2.resize(img, (IMG_SIZE, IMG_SIZE))
    img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

    # inference
    print('--> inference')
    outputs = rknn.inference(inputs=[img])
    print('done')

    boxes, classes, scores = yolov3_post_process(outputs[0][0])

    # Draw on a resized copy, because the boxes are in 640x640 coordinates
    image = cv2.imread(im_file)
    image = cv2.resize(image, (IMG_SIZE, IMG_SIZE))
    if boxes is not None:
        draw(image, boxes, scores, classes)
    cv2.imwrite("result.jpg", image)

    rknn.release()
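The script passes `./dataset.txt` to `rknn.build` for quantization, but the post does not show how that file is produced. As far as I know, RKNN-Toolkit expects a plain text file with one calibration image path per line; treat that format as an assumption and check it against your toolkit version. A minimal sketch that builds such a list from a folder of images (the function name `write_dataset_list` and the folder name are made up for illustration):

```python
from pathlib import Path

# Assumed set of image extensions to include in the calibration list
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def write_dataset_list(image_dir, output_file="dataset.txt", limit=None):
    """Write one image path per line and return how many were written."""
    image_dir = Path(image_dir)
    paths = sorted(
        p for p in image_dir.iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    if limit is not None:
        paths = paths[:limit]
    text = "".join(f"{p}\n" for p in paths)
    Path(output_file).write_text(text, encoding="utf-8")
    return len(paths)


if __name__ == "__main__":
    # "./calib_images" is a hypothetical folder of representative images
    if Path("./calib_images").is_dir():
        print(write_dataset_list("./calib_images", "dataset.txt", limit=100))
```

Images that resemble the deployment scene usually make better calibration data than random ones.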

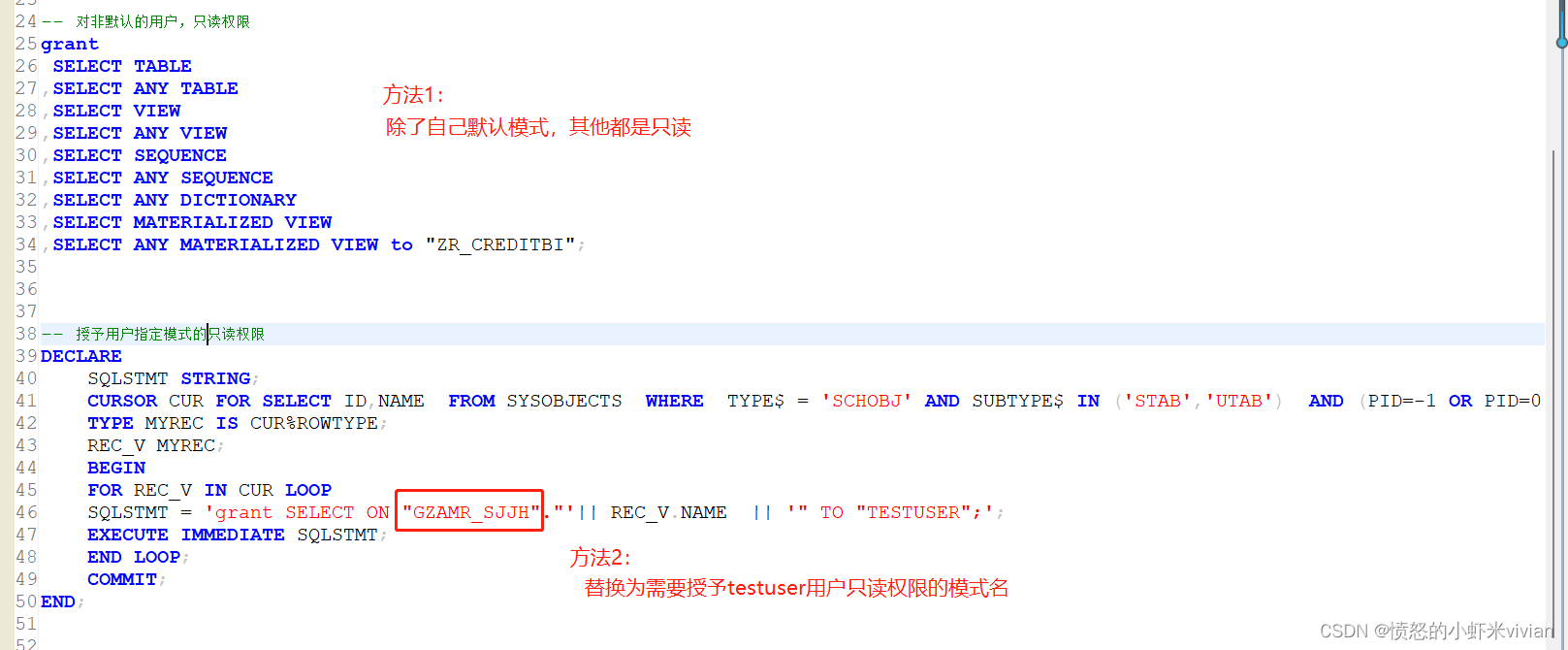

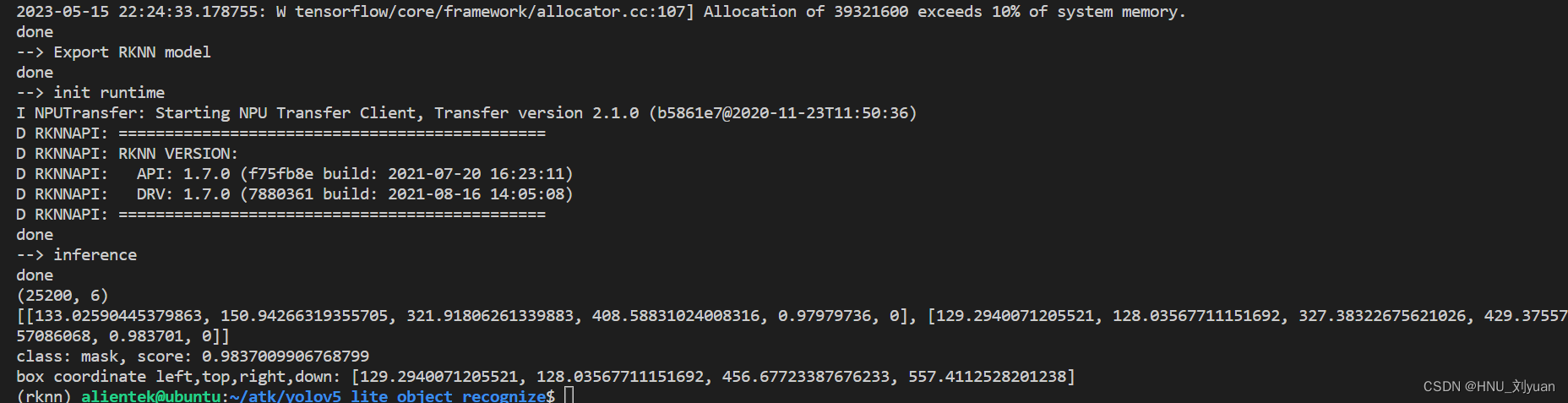

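The run output is as follows:

As the figure shows, only one mask is detected in this image. The result is saved as result.jpg for easy viewing; we download it with adb pull and open it: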
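Note that the script resizes the image to 640x640 without preserving the aspect ratio, so the saved boxes are in 640x640 coordinates. If you want to draw on the original image instead, each box can be scaled back per axis. A minimal sketch, where `scale_box_to_original` is a hypothetical helper and boxes use the `[x, y, w, h]` layout of the script:

```python
def scale_box_to_original(box, orig_w, orig_h, img_size=640):
    """Map an (x, y, w, h) box from the resized square image to the original."""
    x, y, w, h = box
    sx = orig_w / img_size  # horizontal scale factor
    sy = orig_h / img_size  # vertical scale factor
    return (x * sx, y * sy, w * sx, h * sy)


if __name__ == "__main__":
    # A box in the 640x640 image mapped back to a 1280x720 original
    print(scale_box_to_original((320, 160, 64, 32), 1280, 720))
```

This only applies to a plain resize; a letterbox-style resize with padding would also need the padding offsets subtracted.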

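The part that deserves the most attention is post-processing. It requires a solid understanding of how YOLO detection works, and the C++ deployment has to perform exactly the same steps.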
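To make the decoding rule easier to see, here is a vectorized NumPy sketch of the same arithmetic as the nested loops in the script. It assumes, as the script does, that the model output is already sigmoid-activated, has shape (25200, 5 + classes), and is ordered by scale, anchor, grid row, grid column. The names `build_grid` and `decode` are illustrative only, and the anchors are copied from the script.

```python
import numpy as np

STRIDES = (8, 16, 32)
ANCHORS = np.array([[[10, 13], [16, 30], [33, 23]],
                    [[30, 61], [62, 45], [59, 119]],
                    [[116, 90], [156, 198], [373, 326]]], dtype=np.float32)


def build_grid(img_size=640):
    """Per-row [grid_x, grid_y, stride, anchor_w, anchor_h], same order as the loops."""
    blocks = []
    for n, s in enumerate(STRIDES):
        g = img_size // s
        ys, xs = np.meshgrid(np.arange(g), np.arange(g), indexing="ij")
        for q in range(3):
            blocks.append(np.stack([
                xs.ravel(), ys.ravel(),
                np.full(g * g, s),
                np.full(g * g, ANCHORS[n, q, 0]),
                np.full(g * g, ANCHORS[n, q, 1]),
            ], axis=1))
    return np.concatenate(blocks).astype(np.float32)


def decode(pred, obj_thresh=0.5, img_size=640):
    """Return rows of [xmin, ymin, w, h, class_score, class_index]."""
    grid = build_grid(img_size)
    keep = pred[:, 4] > obj_thresh          # objectness filter
    p, g = pred[keep], grid[keep]
    cx = (p[:, 0] * 2 - 0.5 + g[:, 0]) * g[:, 2]
    cy = (p[:, 1] * 2 - 0.5 + g[:, 1]) * g[:, 2]
    w = (p[:, 2] * 2) ** 2 * g[:, 3]
    h = (p[:, 3] * 2) ** 2 * g[:, 4]
    cls = p[:, 5:].argmax(axis=1)
    score = p[:, 5:].max(axis=1)
    return np.stack([cx - w / 2, cy - h / 2, w, h, score, cls], axis=1)


if __name__ == "__main__":
    fake = np.zeros((25200, 6))
    fake[22607] = [0.5, 0.5, 0.5, 0.5, 0.9, 0.8]  # scale 1, anchor 2, row 5, col 7
    print(decode(fake))
```

Like the script, this keeps the class score alone as the box score rather than multiplying it by objectness; NMS still has to be applied to the result.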

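C++ deployment

As with Python, the hard part of the C++ deployment is post-processing; model loading is much the same. The detection result comes back as a raw pointer that we have to decode by hand, following the same reference example. The code is given below. Note that it has some dependencies; of course, you can also just download the provided project: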

/*-------------------------------------------

Includes

-------------------------------------------*/

#include <stdio.h>

#include <stdint.h>

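#include <stdlib.h>

#include <string.h> // memset, memcpy

#include <math.h> // powf

#include <algorithm> // std::sort, std::remove_if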

#include <fstream>

#include <iostream>

#include <sstream>

#include <sys/time.h>

// #include <time.h>

#include <chrono>

#define STB_IMAGE_IMPLEMENTATION

#include "stb/stb_image.h"

#define STB_IMAGE_RESIZE_IMPLEMENTATION

#include <stb/stb_image_resize.h>

#include "rknn_api.h"

#include <vector>

#include "opencv2/core/core.hpp"

#include "opencv2/imgproc/imgproc.hpp"

#include "opencv2/highgui/highgui.hpp"

#include <opencv2/opencv.hpp>

#include "rga.h"

#include "drm_func.h"

#include "rga_func.h"

#include "rknn_api.h"

using namespace std;

int num_class = 1;

int img_size = 640;

float objThreshold = 0.5;

float class_score = 0.5;

float nmsThreshold = 0.5;

std::vector<string> class_names = {"mask"};

std::vector<int> stride = {8,16,32};

std::vector<std::vector<int>> anchors = {{10,13,16,30,33,23},{30,61,62,45,59,119},{116,90,156,198,373,326}};

typedef struct BoxInfo

{

float x1;

float y1;

float x2;

float y2;

float score;

int label;

} BoxInfo;

void nms(vector<BoxInfo>& input_boxes)

{

sort(input_boxes.begin(), input_boxes.end(), [](BoxInfo a, BoxInfo b) { return a.score > b.score; });

vector<float> vArea(input_boxes.size());

for (int i = 0; i < int(input_boxes.size()); ++i)

{

vArea[i] = (input_boxes.at(i).x2 - input_boxes.at(i).x1 + 1)

* (input_boxes.at(i).y2 - input_boxes.at(i).y1 + 1);

}

vector<bool> isSuppressed(input_boxes.size(), false);

for (int i = 0; i < int(input_boxes.size()); ++i)

{

if (isSuppressed[i]) { continue; }

for (int j = i + 1; j < int(input_boxes.size()); ++j)

{

if (isSuppressed[j]) { continue; }

float xx1 = (max)(input_boxes[i].x1, input_boxes[j].x1);

float yy1 = (max)(input_boxes[i].y1, input_boxes[j].y1);

float xx2 = (min)(input_boxes[i].x2, input_boxes[j].x2);

float yy2 = (min)(input_boxes[i].y2, input_boxes[j].y2);

float w = (max)(float(0), xx2 - xx1 + 1);

float h = (max)(float(0), yy2 - yy1 + 1);

float inter = w * h;

float ovr = inter / (vArea[i] + vArea[j] - inter);

if (ovr >= nmsThreshold)

{

isSuppressed[j] = true;

}

}

}

// return post_nms;

int idx_t = 0;

input_boxes.erase(remove_if(input_boxes.begin(), input_boxes.end(), [&idx_t, &isSuppressed](const BoxInfo& f) { return isSuppressed[idx_t++]; }), input_boxes.end());

}

/*-------------------------------------------

Functions

-------------------------------------------*/

static void printRKNNTensor(rknn_tensor_attr *attr)

{

printf("index=%d name=%s n_dims=%d dims=[%d %d %d %d] n_elems=%d size=%d fmt=%d type=%d qnt_type=%d fl=%d zp=%d scale=%f\n",

attr->index, attr->name, attr->n_dims, attr->dims[3], attr->dims[2], attr->dims[1], attr->dims[0],

attr->n_elems, attr->size, 0, attr->type, attr->qnt_type, attr->fl, attr->zp, attr->scale);

}

static unsigned char *load_model(const char *filename, int *model_size)

{

FILE *fp = fopen(filename, "rb");

if (fp == nullptr)

{

printf("fopen %s fail!\n", filename);

return NULL;

}

fseek(fp, 0, SEEK_END);

int model_len = ftell(fp);

unsigned char *model = (unsigned char *)malloc(model_len);

fseek(fp, 0, SEEK_SET);

if (model_len != fread(model, 1, model_len, fp))

{

printf("fread %s fail!\n", filename);

free(model);

fclose(fp); // close the file on the error path as well

return NULL;

}

*model_size = model_len;

if (fp)

{

fclose(fp);

}

return model;

}

static int rknn_GetTop(

float *pfProb,

float *pfMaxProb,

uint32_t *pMaxClass,

uint32_t outputCount,

uint32_t topNum)

{

uint32_t i, j;

#define MAX_TOP_NUM 20

if (topNum > MAX_TOP_NUM)

return 0;

memset(pfMaxProb, 0, sizeof(float) * topNum);

memset(pMaxClass, 0xff, sizeof(uint32_t) * topNum);

for (j = 0; j < topNum; j++)

{

for (i = 0; i < outputCount; i++)

{

if ((i == *(pMaxClass + 0)) || (i == *(pMaxClass + 1)) || (i == *(pMaxClass + 2)) ||

(i == *(pMaxClass + 3)) || (i == *(pMaxClass + 4)))

{

continue;

}

if (pfProb[i] > *(pfMaxProb + j))

{

*(pfMaxProb + j) = pfProb[i];

*(pMaxClass + j) = i;

}

}

}

return 1;

}

static unsigned char *load_image(const char *image_path, rknn_tensor_attr *input_attr)

{

int req_height = 0;

int req_width = 0;

int req_channel = 0;

switch (input_attr->fmt)

{

case RKNN_TENSOR_NHWC:

req_height = input_attr->dims[2];

req_width = input_attr->dims[1];

req_channel = input_attr->dims[0];

break;

case RKNN_TENSOR_NCHW:

req_height = input_attr->dims[1];

req_width = input_attr->dims[0];

req_channel = input_attr->dims[2];

break;

default:

printf("meet unsupported layout\n");

return NULL;

}

printf("w=%d,h=%d,c=%d, fmt=%d\n", req_width, req_height, req_channel, input_attr->fmt);

int height = 0;

int width = 0;

int channel = 0;

unsigned char *image_data = stbi_load(image_path, &width, &height, &channel, req_channel);

if (image_data == NULL)

{

printf("load image failed!\n");

return NULL;

}

if (width != req_width || height != req_height)

{

unsigned char *image_resized = (unsigned char *)STBI_MALLOC(req_width * req_height * req_channel);

if (!image_resized)

{

printf("malloc image failed!\n");

STBI_FREE(image_data);

return NULL;

}

if (stbir_resize_uint8(image_data, width, height, 0, image_resized, req_width, req_height, 0, req_channel) != 1)

{

printf("resize image failed!\n");

STBI_FREE(image_data);

STBI_FREE(image_resized);

return NULL;

}

STBI_FREE(image_data);

image_data = image_resized;

}

return image_data;

}

/*-------------------------------------------

Main Function

-------------------------------------------*/

int main(int argc, char **argv)

{

rknn_context ctx;

int ret;

int model_len = 0;

unsigned char *model;

if (argc != 3)

{

printf("Usage: %s <rknn model> <image>\n", argv[0]);

return -1;

}

const char *model_path = argv[1];

const char *img_path = argv[2];

// Load RKNN Model

model = load_model(model_path, &model_len);

ret = rknn_init(&ctx, model, model_len, 0);

if (ret < 0)

{

printf("rknn_init fail! ret=%d\n", ret);

return -1;

}

// Get Model Input Output Info

rknn_input_output_num io_num;

ret = rknn_query(ctx, RKNN_QUERY_IN_OUT_NUM, &io_num, sizeof(io_num));

if (ret != RKNN_SUCC)

{

printf("rknn_query fail! ret=%d\n", ret);

return -1;

}

printf("model input num: %d, output num: %d\n", io_num.n_input, io_num.n_output);

printf("input tensors:\n");

rknn_tensor_attr input_attrs[io_num.n_input];

memset(input_attrs, 0, sizeof(input_attrs));

for (int i = 0; i < io_num.n_input; i++)

{

input_attrs[i].index = i;

ret = rknn_query(ctx, RKNN_QUERY_INPUT_ATTR, &(input_attrs[i]), sizeof(rknn_tensor_attr));

if (ret != RKNN_SUCC)

{

printf("rknn_query fail! ret=%d\n", ret);

return -1;

}

printRKNNTensor(&(input_attrs[i]));

}

printf("output tensors:\n");

rknn_tensor_attr output_attrs[io_num.n_output];

memset(output_attrs, 0, sizeof(output_attrs));

for (int i = 0; i < io_num.n_output; i++)

{

output_attrs[i].index = i;

ret = rknn_query(ctx, RKNN_QUERY_OUTPUT_ATTR, &(output_attrs[i]), sizeof(rknn_tensor_attr));

if (ret != RKNN_SUCC)

{

printf("rknn_query fail! ret=%d\n", ret);

return -1;

}

printRKNNTensor(&(output_attrs[i]));

printf("%d, %d, %d, %d\n",output_attrs[i].dims[0],output_attrs[i].dims[1],output_attrs[i].dims[2],output_attrs[i].dims[3]);

}

vector<BoxInfo> generate_boxes;

rknn_output outputs[io_num.n_output];

memset(outputs, 0, sizeof(outputs));

unsigned char *input_data = NULL;

rga_context rga_ctx;

drm_context drm_ctx;

memset(&rga_ctx, 0, sizeof(rga_context));

memset(&drm_ctx, 0, sizeof(drm_context));

// DRM alloc buffer

int drm_fd = -1;

int buf_fd = -1; // converted from buffer handle

unsigned int handle;

size_t actual_size = 0;

void *drm_buf = NULL;

cv::Mat input_img = cv::imread(img_path);

int video_width = input_img.cols;

int video_height = input_img.rows;

int channel = input_img.channels();

int width = img_size;

int height = img_size;

drm_fd = drm_init(&drm_ctx);

drm_buf = drm_buf_alloc(&drm_ctx, drm_fd, video_width, video_height, channel * 8, &buf_fd, &handle, &actual_size);

void *resize_buf = malloc(height * width * channel);

// init rga context

RGA_init(&rga_ctx);

uint32_t input_model_image_size = width * height * channel;

// Set Input Data

rknn_input inputs[1];

memset(inputs, 0, sizeof(inputs));

inputs[0].index = 0;

inputs[0].type = RKNN_TENSOR_UINT8;

inputs[0].size = input_model_image_size;

inputs[0].fmt = RKNN_TENSOR_NHWC;

for(int run_times =0;run_times<10;run_times++)

{

generate_boxes.clear(); // start each run with an empty box list

// clock_t start,end;

// start = clock();

auto start=std::chrono::steady_clock::now();

// Load image

// input_data = load_image(img_path, &input_attrs[0]);

// if (!input_data)

// {

// return -1;

// }

cv::Mat input_img = cv::imread(img_path);

cv::cvtColor(input_img, input_img, cv::COLOR_BGR2RGB);

// clock_t load_img_time = clock();

auto load_img_time=std::chrono::steady_clock::now();

double dr_ms=std::chrono::duration<double,std::milli>(load_img_time-start).count();

std::cout<<"load_img_time = "<< dr_ms << std::endl;

// cout<<"load_img_time = "<<double(load_img_time-start)/CLOCKS_PER_SEC*1000<<"ms"<<endl;

/*

// Set Input Data

rknn_input inputs[1];

memset(inputs, 0, sizeof(inputs));

inputs[0].index = 0;

inputs[0].type = RKNN_TENSOR_UINT8;

inputs[0].size = input_attrs[0].size;

inputs[0].fmt = RKNN_TENSOR_NHWC;

inputs[0].buf = input_img.data;//input_data;

ret = rknn_inputs_set(ctx, io_num.n_input, inputs);

if (ret < 0)

{

printf("rknn_input_set fail! ret=%d\n", ret);

return -1;

}

*/

memcpy(drm_buf, (uint8_t *)input_img.data , video_width * video_height * channel);

img_resize_slow(&rga_ctx, drm_buf, video_width, video_height, resize_buf, width, height);

inputs[0].buf = resize_buf;

ret = rknn_inputs_set(ctx, io_num.n_input, inputs);

if (ret < 0)

{

printf("ERROR: rknn_inputs_set fail! ret=%d\n", ret);

return -1;

}

auto pre_time=std::chrono::steady_clock::now();

dr_ms=std::chrono::duration<double,std::milli>(pre_time-load_img_time).count();

std::cout<<"pre_time = "<< dr_ms << std::endl;

// Run

printf("rknn_run\n");

ret = rknn_run(ctx, nullptr);

if (ret < 0)

{

printf("rknn_run fail! ret=%d\n", ret);

return -1;

}

auto rknn_run_time=std::chrono::steady_clock::now();

dr_ms=std::chrono::duration<double,std::milli>(rknn_run_time-pre_time).count();

std::cout<<"rknn_run_time = "<< dr_ms << std::endl;

// Get Output

for (int i = 0; i < io_num.n_output; i++)

{

outputs[i].want_float = 1;

}

ret = rknn_outputs_get(ctx, io_num.n_output, outputs, NULL);

if (ret < 0)

{

printf("ERROR: rknn_outputs_get fail! ret=%d\n", ret);

return -1;

}

// resize ratio

float ratioh = 1.0, ratiow = 1.0;

int n = 0, q = 0, i = 0, j = 0, k = 0; ///xmin,ymin,xmax,ymax,box_score,class_score

// clock_t post_time = clock();

const int nout = num_class + 5;

float *preds = (float *)outputs[0].buf;

for (n = 0; n < 3; n++) ///

{

int num_grid_x = (int)(img_size / stride[n]);

int num_grid_y = (int)(img_size / stride[n]);

for (q = 0; q < 3; q++) ///anchor

{

const float anchor_w = anchors[n][q * 2];

const float anchor_h = anchors[n][q * 2 + 1];

for (i = 0; i < num_grid_y; i++)

{

for (j = 0; j < num_grid_x; j++)

{

float box_score = preds[4];

if (box_score > objThreshold)

{

float class_score = 0;

int class_ind = 0;

for (k = 0; k < num_class; k++)

{

if (preds[k + 5] > class_score)

{

class_score = preds[k + 5];

class_ind = k;

}

}

//if (class_score > this->confThreshold)

//{

float cx = (preds[0] * 2.f - 0.5f + j) * stride[n]; ///cx

float cy = (preds[1] * 2.f - 0.5f + i) * stride[n]; ///cy

float w = powf(preds[2] * 2.f, 2.f) * anchor_w; ///w

float h = powf(preds[3] * 2.f, 2.f) * anchor_h; ///h

float xmin = (cx - 0.5 * w)*ratiow;

float ymin = (cy - 0.5 * h)*ratioh;

float xmax = (cx + 0.5 * w)*ratiow;

float ymax = (cy + 0.5 * h)*ratioh;

//printf("%f,%f,%f,%f,%f,%d\n",xmin, ymin, xmax, ymax, class_score, class_ind);

generate_boxes.push_back(BoxInfo{ xmin, ymin, xmax, ymax, class_score, class_ind });

//}

}

preds += nout;

}

}

}

}

nms(generate_boxes);

auto end_time = std::chrono::steady_clock::now();

dr_ms=std::chrono::duration<double,std::milli>(end_time-start).count();

std::cout<<"end_time = "<< dr_ms << std::endl;

// Release the output buffers of this run before the next rknn_outputs_get

rknn_outputs_release(ctx, io_num.n_output, outputs);

}

cv::Mat frame = cv::imread(img_path);

cv::resize(frame,frame,cv::Size(img_size,img_size));

for (size_t i = 0; i < generate_boxes.size(); ++i)

{

int xmin = int(generate_boxes[i].x1);

int ymin = int(generate_boxes[i].y1);

cv::rectangle(frame, cv::Point(xmin, ymin), cv::Point(int(generate_boxes[i].x2), int(generate_boxes[i].y2)), cv::Scalar(0, 0, 255), 2);

std::string str_num = std::to_string(generate_boxes[i].score);

std::string label = str_num.substr(0, str_num.find(".") + 3);

label = class_names[generate_boxes[i].label] + ":" + label;

cv::putText(frame, label, cv::Point(xmin, ymin - 5), cv::FONT_HERSHEY_SIMPLEX, 0.75, cv::Scalar(0, 255, 0), 1);

}

cv::imwrite("output.jpg",frame);

free(resize_buf);

drm_buf_destroy(&drm_ctx, drm_fd, buf_fd, handle, drm_buf, actual_size);

drm_deinit(&drm_ctx, drm_fd);

RGA_deinit(&rga_ctx);

// Release

if (ctx >= 0)

{

rknn_destroy(ctx);

}

if (model)

{

free(model);

}

if (input_data)

{

stbi_image_free(input_data);

}

return 0;

}
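The C++ post-processing above walks the raw output pointer in steps of `num_class + 5` floats, so the position of any prediction in the buffer is fully determined by its scale, anchor, and grid cell. The sketch below computes that position in Python, which can help when checking a single prediction against the Python results. `flat_offset` is a hypothetical helper; the defaults match the values in this post.

```python
def flat_offset(n, q, i, j, img_size=640, strides=(8, 16, 32),
                anchors_per_cell=3, num_class=1):
    """Return (row index, float offset) for scale n, anchor q, grid row i, col j."""
    row = 0
    # Skip all predictions of the earlier (finer) scales
    for s in strides[:n]:
        row += anchors_per_cell * (img_size // s) ** 2
    g = img_size // strides[n]
    # Within one scale: anchors are outermost, then rows, then columns
    row += q * g * g + i * g + j
    return row, row * (num_class + 5)


if __name__ == "__main__":
    row, offset = flat_offset(1, 2, 5, 7)
    print(row, offset)  # preds[offset + 4] would be the objectness of that cell
```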

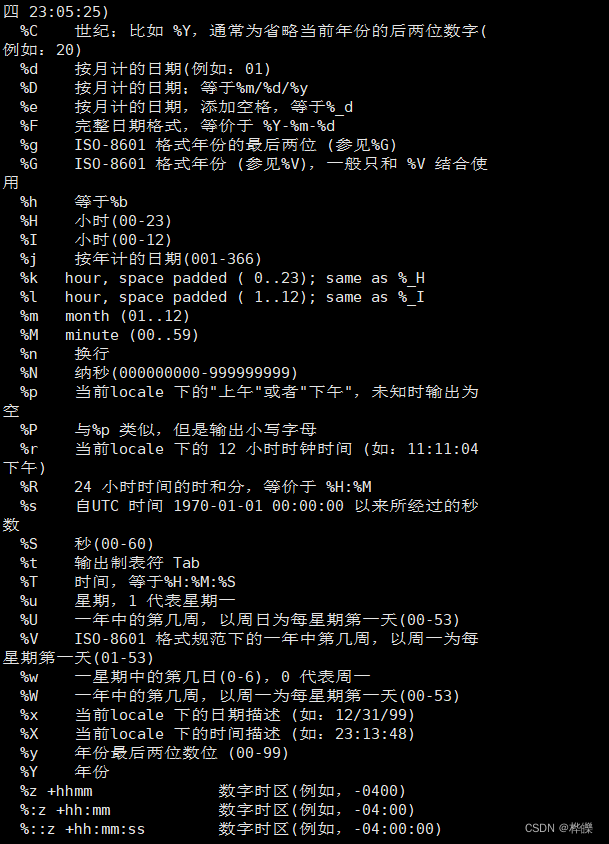

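If you do not have an OpenCV environment, you can load the image directly with load_image. The resize step can use either RGA or OpenCV; oddly, RGA was not much faster in my case, which I will look into when I get the chance. The program above runs inference on the same image 10 times and prints the image loading, preprocessing, and model inference times, plus the total elapsed time including post-processing (printed as end_time).

After downloading the project, change the RV1109_TOOL_CHAIN path in build.sh and CMakeLists.txt, then copy the folder under install to the development board.

For a 640x640 image, one inference takes 21 ms, which is very fast.

Project repository: https://github.com/liuyuan000/Rv1126_YOLOv5-Lite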