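After implementing a deep network, it is easy to slip up somewhere in the derivatives, because backpropagation through many layers is intricate. Before using the model for training, we can run a gradient check to verify the backward pass.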

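The gradient check proceeds as follows:

First, for each parameter $\theta_i$, approximate the gradient of the loss $J$ numerically with a two-sided difference:

$$gradapprox_i = \frac{J(\theta_1, \dots, \theta_i + \epsilon, \dots) - J(\theta_1, \dots, \theta_i - \epsilon, \dots)}{2\epsilon}$$

Then run backpropagation to compute the gradient of the loss, grad, and measure the error between the two with the following formula:

$$difference = \frac{\| grad - gradapprox \|_2}{\| grad \|_2 + \| gradapprox \|_2}$$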
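As a side note on why a two-sided difference is a reasonable choice for the numerical gradient, a Taylor expansion of a scalar cost $J$ (assumed here to be smooth around $\theta$, which is an assumption and not something the original states) suggests that its error shrinks with $\epsilon^2$, while a one-sided difference only shrinks with $\epsilon$:

```latex
\begin{aligned}
J(\theta+\epsilon) &= J(\theta) + \epsilon J'(\theta) + \tfrac{\epsilon^2}{2} J''(\theta) + \tfrac{\epsilon^3}{6} J'''(\theta) + O(\epsilon^4) \\
J(\theta-\epsilon) &= J(\theta) - \epsilon J'(\theta) + \tfrac{\epsilon^2}{2} J''(\theta) - \tfrac{\epsilon^3}{6} J'''(\theta) + O(\epsilon^4) \\
\frac{J(\theta+\epsilon) - J(\theta-\epsilon)}{2\epsilon} &= J'(\theta) + \tfrac{\epsilon^2}{6} J'''(\theta) + O(\epsilon^3) \\
\frac{J(\theta+\epsilon) - J(\theta)}{\epsilon} &= J'(\theta) + \tfrac{\epsilon}{2} J''(\theta) + O(\epsilon^2)
\end{aligned}
```

The even-order terms cancel in the two-sided version, which is why it tends to be the more accurate estimate for the same $\epsilon$.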

In general, with $\epsilon = 10^{-7}$, an error on the order of $10^{-7}$ or smaller means the backward pass is almost certainly correct.
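To get a feel for these magnitudes, here is a minimal one-dimensional sketch. The toy cost `cost` and the helper names `two_sided_gradient` and `relative_difference` are hypothetical and are not part of the code in this post; they only illustrate how a correct and an incorrect derivative compare against the numerical estimate.

```python
def two_sided_gradient(f, theta, epsilon=1e-7):
    """Approximate df/dtheta at theta with a two-sided difference."""
    return (f(theta + epsilon) - f(theta - epsilon)) / (2 * epsilon)


def relative_difference(grad, gradapprox):
    """Relative error between an analytic and a numerical gradient (scalars)."""
    return abs(grad - gradapprox) / (abs(grad) + abs(gradapprox))


def cost(theta):
    # Hypothetical toy cost: J(theta) = theta ** 3
    return theta ** 3


theta = 2.0
gradapprox = two_sided_gradient(cost, theta)
good_grad = 3 * theta ** 2       # correct derivative
bad_grad = 2 * 3 * theta ** 2    # deliberately wrong by a factor of 2

print(relative_difference(good_grad, gradapprox))  # tiny, far below 1e-6
print(relative_difference(bad_grad, gradapprox))   # about 1/3
```

A gradient that is off by a constant factor of 2 gives a relative difference near 1/3, many orders of magnitude above the threshold, so this kind of bug is hard to miss.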

Now for the code, assuming a 3-layer network.

First, gc_utils.py holds the helper functions needed to convert between parameter formats:

# -*- coding: utf-8 -*-
import numpy as np


def sigmoid(x):
    """
    Compute the sigmoid of x

    Arguments:
    x -- A scalar or numpy array of any size.

    Return:
    s -- sigmoid(x)
    """
    s = 1 / (1 + np.exp(-x))
    return s


def relu(x):
    """
    Compute the relu of x

    Arguments:
    x -- A scalar or numpy array of any size.

    Return:
    s -- relu(x)
    """
    s = np.maximum(0, x)
    return s


def dictionary_to_vector(parameters):
    """
    Roll all our parameters dictionary into a single vector satisfying our specific required shape.
    """
    keys = []
    count = 0
    for key in ["W1", "b1", "W2", "b2", "W3", "b3"]:
        # flatten the parameter into a column vector of shape (n, 1)
        new_vector = np.reshape(parameters[key], (-1, 1))
        # record which parameter each entry of the vector came from
        keys = keys + [key] * new_vector.shape[0]
        if count == 0:
            theta = new_vector
        else:
            theta = np.concatenate((theta, new_vector), axis=0)
        count = count + 1
    return theta, keys


def vector_to_dictionary(theta):
    """
    Unroll all our parameters dictionary from a single vector satisfying our specific required shape.
    """
    # The slice boundaries are hard-coded for the 3-layer network used here.
    parameters = {}
    parameters["W1"] = theta[:20].reshape((5, 4))
    parameters["b1"] = theta[20:25].reshape((5, 1))
    parameters["W2"] = theta[25:40].reshape((3, 5))
    parameters["b2"] = theta[40:43].reshape((3, 1))
    parameters["W3"] = theta[43:46].reshape((1, 3))
    parameters["b3"] = theta[46:47].reshape((1, 1))
    return parameters


def gradients_to_vector(gradients):
    """
    Roll all our gradients dictionary into a single vector satisfying our specific required shape.
    """
    count = 0
    for key in ["dW1", "db1", "dW2", "db2", "dW3", "db3"]:
        # flatten the gradient into a column vector of shape (n, 1)
        new_vector = np.reshape(gradients[key], (-1, 1))
        if count == 0:
            theta = new_vector
        else:
            theta = np.concatenate((theta, new_vector), axis=0)
        count = count + 1
    return theta

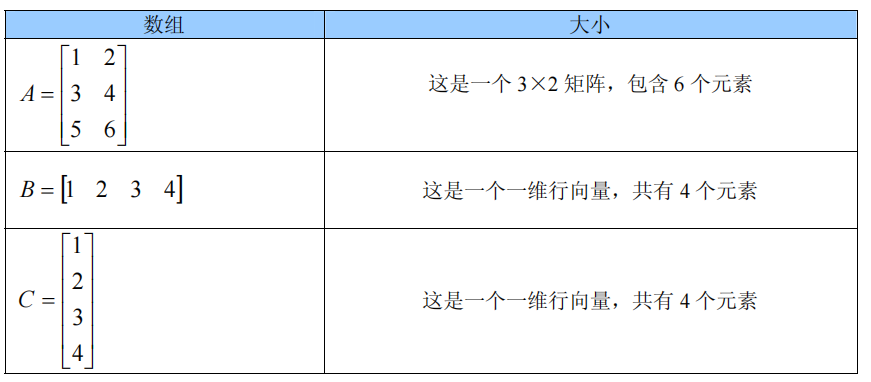

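The function dictionary_to_vector() converts the "parameters" dictionary into a single column vector (theta in the code) by reshaping every parameter (W1, b1, W2, b2, W3, b3) into a column vector and concatenating them. Its inverse, vector_to_dictionary(), rebuilds the "parameters" dictionary, which forward propagation needs in order to compute the loss.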
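The round trip between dictionary and vector can be sketched in a self-contained way as follows. The names `SHAPES`, `flatten_params`, and `unflatten_params` are hypothetical and are not part of gc_utils.py; the sketch drives both directions from one shape table (using the layer sizes of this post's example) instead of hard-coded slice indices.

```python
import numpy as np

# Hypothetical shape table, matching the 3-layer example in this post.
SHAPES = {"W1": (5, 4), "b1": (5, 1), "W2": (3, 5),
          "b2": (3, 1), "W3": (1, 3), "b3": (1, 1)}


def flatten_params(parameters):
    """Stack every parameter into one column vector, in SHAPES order."""
    return np.concatenate([parameters[k].reshape(-1, 1) for k in SHAPES], axis=0)


def unflatten_params(theta):
    """Split the column vector back into a dictionary using SHAPES."""
    parameters, start = {}, 0
    for key, shape in SHAPES.items():
        size = int(np.prod(shape))
        parameters[key] = theta[start:start + size].reshape(shape)
        start += size
    return parameters


rng = np.random.default_rng(0)
params = {k: rng.standard_normal(s) for k, s in SHAPES.items()}
theta = flatten_params(params)
restored = unflatten_params(theta)

print(theta.shape)  # (47, 1)
print(all(np.array_equal(params[k], restored[k]) for k in SHAPES))  # True
```

The 47 entries are 20 + 5 + 15 + 3 + 3 + 1, which matches the slice boundaries used in vector_to_dictionary().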

The test code follows.

First, add the example that the test will use.

import numpy as np
import gc_utils


def gradient_check_n_test_case():
    np.random.seed(1)
    x = np.random.randn(4, 3)
    y = np.array([1, 1, 0])
    W1 = np.random.randn(5, 4)
    b1 = np.random.randn(5, 1)
    W2 = np.random.randn(3, 5)
    b2 = np.random.randn(3, 1)
    W3 = np.random.randn(1, 3)
    b3 = np.random.randn(1, 1)
    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2,
                  "W3": W3,
                  "b3": b3}
    return x, y, parameters

Next come forward propagation and backward propagation:

def forward_propagation_n(X, Y, parameters):
    """
    Implement the forward propagation shown in the figure (and compute the cost).

    Arguments:
    X - training set of m examples
    Y - labels for the m examples
    parameters - Python dictionary containing the parameters "W1", "b1", "W2", "b2", "W3", "b3":
        W1 - weight matrix of shape (5, 4)
        b1 - bias vector of shape (5, 1)
        W2 - weight matrix of shape (3, 5)
        b2 - bias vector of shape (3, 1)
        W3 - weight matrix of shape (1, 3)
        b3 - bias vector of shape (1, 1)

    Returns:
    cost - the cost function (logistic cost)
    cache - intermediate values needed by backward propagation
    """
    m = X.shape[1]
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]

    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    Z1 = np.dot(W1, X) + b1
    A1 = gc_utils.relu(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = gc_utils.relu(Z2)
    Z3 = np.dot(W3, A2) + b3
    A3 = gc_utils.sigmoid(Z3)

    # compute the cost
    logprobs = np.multiply(-np.log(A3), Y) + np.multiply(-np.log(1 - A3), 1 - Y)
    cost = (1 / m) * np.sum(logprobs)

    cache = (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3)
    return cost, cache

def backward_propagation_n(X, Y, cache):

"""

实现图中所示的反向传播。

参数:

X - 输入数据点(输入节点数量,1)

Y - 标签

cache - 来自forward_propagation_n()的cache输出

返回:

gradients - 一个字典,其中包含与每个参数、激活和激活前变量相关的成本梯度。

"""

m = X.shape[1]

(Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache

dZ3 = A3 - Y

dW3 = 1. / m * np.dot(dZ3, A2.T)

db3 = 1. / m * np.sum(dZ3, axis=1, keepdims=True)

dA2 = np.dot(W3.T, dZ3)

dZ2 = np.multiply(dA2, np.int64(A2 > 0))

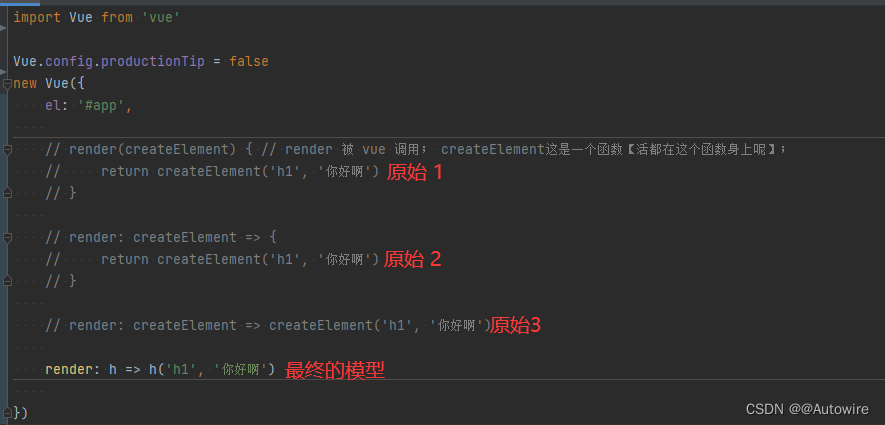

dW2 = 1. / m * np.dot(dZ2, A1.T) * 2 # Should not multiply by 2

# dW2 = 1. / m * np.dot(dZ2, A1.T)

db2 = 1. / m * np.sum(dZ2, axis=1, keepdims=True)

dA1 = np.dot(W2.T, dZ2)

dZ1 = np.multiply(dA1, np.int64(A1 > 0))

dW1 = 1. / m * np.dot(dZ1, X.T)

db1 = 4. / m * np.sum(dZ1, axis=1, keepdims=True) # Should not multiply by 4

# db1 = 1. / m * np.sum(dZ1, axis=1, keepdims=True)

gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,

"dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,

"dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}

return gradients

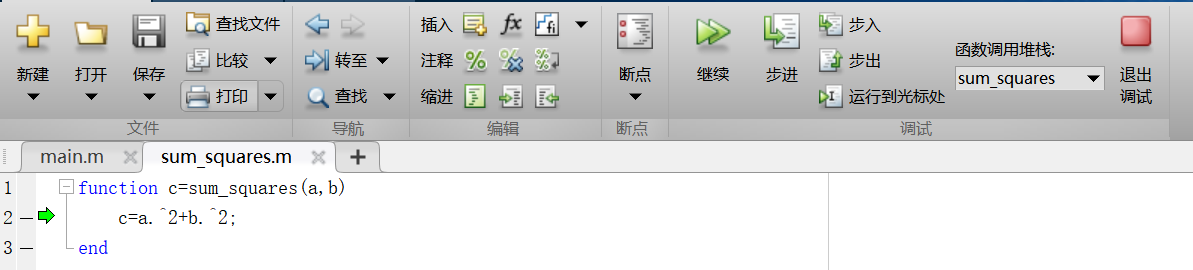

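Now for the gradient check itself:

The rough logic is to loop over every parameter, use the two-sided difference to compute that parameter's entry of gradapprox, and then compute the error from the complete gradapprox vector.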

def gradient_check_n(parameters, gradients, X, Y, epsilon=1e-7):
    """
    Check whether backward_propagation_n correctly computes the gradient of the cost returned by forward_propagation_n.

    Arguments:
    parameters - Python dictionary containing the parameters "W1", "b1", "W2", "b2", "W3", "b3"
    gradients - output of backward_propagation_n, containing the gradients of the cost with respect to the parameters
    X - input data, shape (input size, number of examples)
    Y - labels
    epsilon - small shift applied to each parameter to compute the approximate gradient

    Returns:
    difference - relative difference between the approximate gradient and the backpropagation gradient
    """
    # Set up the flattened parameters and gradients
    parameters_values, _ = gc_utils.dictionary_to_vector(parameters)  # the keys are not needed here
    grad = gc_utils.gradients_to_vector(gradients)
    num_parameters = parameters_values.shape[0]
    J_plus = np.zeros((num_parameters, 1))
    J_minus = np.zeros((num_parameters, 1))
    gradapprox = np.zeros((num_parameters, 1))

    # Compute gradapprox
    for i in range(num_parameters):
        # Compute J_plus[i]: the cost with parameter i shifted by +epsilon
        thetaplus = np.copy(parameters_values)  # Step 1
        thetaplus[i][0] = thetaplus[i][0] + epsilon  # Step 2
        J_plus[i], _ = forward_propagation_n(X, Y, gc_utils.vector_to_dictionary(thetaplus))  # Step 3, the cache is not needed

        # Compute J_minus[i]: the cost with parameter i shifted by -epsilon
        thetaminus = np.copy(parameters_values)  # Step 1
        thetaminus[i][0] = thetaminus[i][0] - epsilon  # Step 2
        J_minus[i], _ = forward_propagation_n(X, Y, gc_utils.vector_to_dictionary(thetaminus))  # Step 3, the cache is not needed

        # Compute gradapprox[i]
        gradapprox[i] = (J_plus[i] - J_minus[i]) / (2 * epsilon)

    # Compare gradapprox with the backpropagation gradient via the relative difference
    numerator = np.linalg.norm(grad - gradapprox)  # Step 1'
    denominator = np.linalg.norm(grad) + np.linalg.norm(gradapprox)  # Step 2'
    difference = numerator / denominator  # Step 3'

    if difference < 1e-6:
        print("Gradient check: gradients look correct!")
    else:
        print("Gradient check: difference exceeds the threshold!")
    print(difference)

    return difference

X, Y, parameters = gradient_check_n_test_case()  # small hand-made test dataset
cost, cache = forward_propagation_n(X, Y, parameters)
gradients = backward_propagation_n(X, Y, cache)
difference = gradient_check_n(parameters, gradients, X, Y)

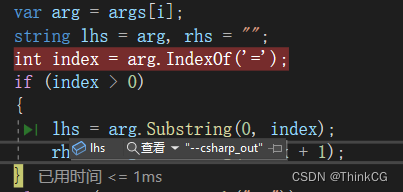

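Running it gives:

Gradient check: difference exceeds the threshold!

0.2850931566540251

Backpropagation clearly has a problem. On inspection, the errors are in dW2 and db1. After fixing them and running again: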
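One possible way to narrow down which parameters are at fault is to compute the relative difference separately for each parameter name. The sketch below is an assumption and not part of the original code: `per_key_difference` is a hypothetical helper, and it relies on a `keys` list with one parameter name per vector entry, like the one dictionary_to_vector() returns. The data is synthetic so the block runs on its own.

```python
import numpy as np


def per_key_difference(grad, gradapprox, keys):
    """Relative difference between grad and gradapprox, grouped by parameter name."""
    keys = np.array(keys)
    report = {}
    for name in dict.fromkeys(keys.tolist()):  # unique names, in original order
        mask = keys == name
        g, ga = grad[mask], gradapprox[mask]
        denom = np.linalg.norm(g) + np.linalg.norm(ga)
        report[name] = np.linalg.norm(g - ga) / denom if denom > 0 else 0.0
    return report


# Synthetic example: 9 entries belonging to three parameters.
keys = ["W1"] * 4 + ["b1"] * 2 + ["W2"] * 3
gradapprox = np.arange(1.0, 10.0).reshape(-1, 1)
grad = gradapprox.copy()
grad[6:9] *= 2  # simulate a bug that doubles the W2 gradient

report = per_key_difference(grad, gradapprox, keys)
for name, diff in report.items():
    print(name, diff)
```

In this synthetic case only W2 reports a large difference (about 1/3), while W1 and b1 report 0, which points directly at the block to inspect.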

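Gradient check: gradients look correct!

1.1885552035482147e-07

Note that a deep network inevitably has a very large number of parameters, so the check takes a long time to compute. Gradient checking is therefore normally turned off during training: run it once before training, and start training only after it passes.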
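The cost comes from running two forward passes per parameter. One common way to reduce it, which is not what the code in this post does and is shown here only as an assumption-laden sketch, is to check a random subset of coordinates. The helper `sampled_gradient_check` and the toy cost are hypothetical. The trade-off is that a bug touching only unsampled coordinates would go unnoticed.

```python
import numpy as np


def sampled_gradient_check(cost_fn, theta, grad, num_samples=10, epsilon=1e-7, seed=0):
    """Compare grad with a two-sided estimate on a random subset of coordinates."""
    rng = np.random.default_rng(seed)
    n = theta.shape[0]
    idx = rng.choice(n, size=min(num_samples, n), replace=False)
    gradapprox = np.zeros(len(idx))
    for j, i in enumerate(idx):
        theta_plus = theta.copy()
        theta_plus[i] += epsilon
        theta_minus = theta.copy()
        theta_minus[i] -= epsilon
        gradapprox[j] = (cost_fn(theta_plus) - cost_fn(theta_minus)) / (2 * epsilon)
    sampled = grad[idx]
    return np.linalg.norm(sampled - gradapprox) / (
        np.linalg.norm(sampled) + np.linalg.norm(gradapprox))


def toy_cost(t):
    # Hypothetical toy cost J(theta) = sum(theta ** 2), whose gradient is 2 * theta.
    return np.sum(t ** 2)


theta = np.linspace(-1.0, 1.0, 200)
good = sampled_gradient_check(toy_cost, theta, 2 * theta)  # correct gradient
bad = sampled_gradient_check(toy_cost, theta, 4 * theta)   # gradient wrong by a factor of 2
print(good < 1e-6, bad > 0.3)
```

Here only 20 cost evaluations are needed instead of 400, at the price of checking 10 of the 200 coordinates.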