目录

kubeadm 和二进制安装 k8s 适用场景分析

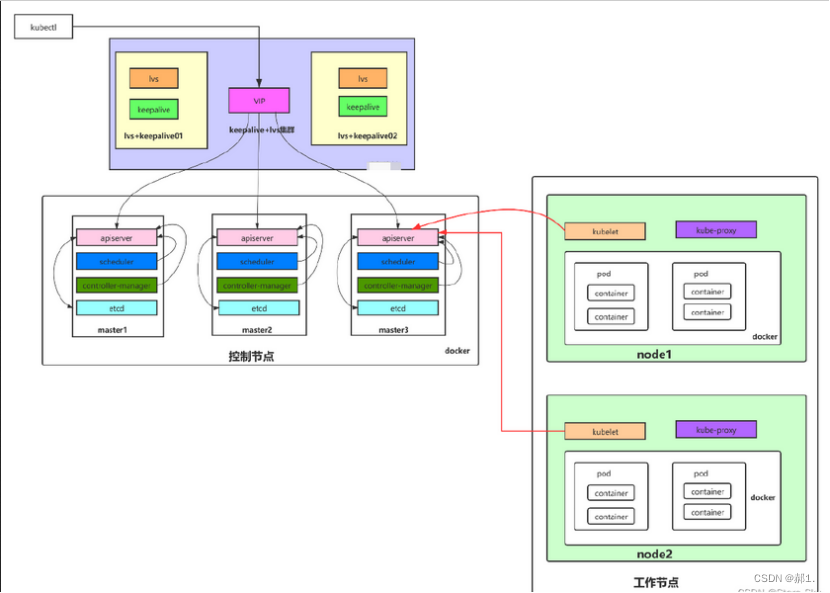

多 master 节点高可用架构图

集群环境准备

部署过程

修改主机内核参数(所有节点)

配置阿里云的repo源(所有节点)

配置国内安装 docker 和 containerd 的阿里云的 repo 源

配置安装 k8s 组件需要的阿里云的 repo 源(所有节点)

主机系统优化(所有节点)

开启 ipvs(所有节点)

升级 Linux 内核(所有节点)

配置免密登录(在 k8s-master1 上操作 )

安装 Docker 和容器运行时 containerd(所有节点)

配置 docker 镜像加速器和驱动(所有节点)

搭建 etcd 集群(在 master1 上操作)

部署 etcd 集群

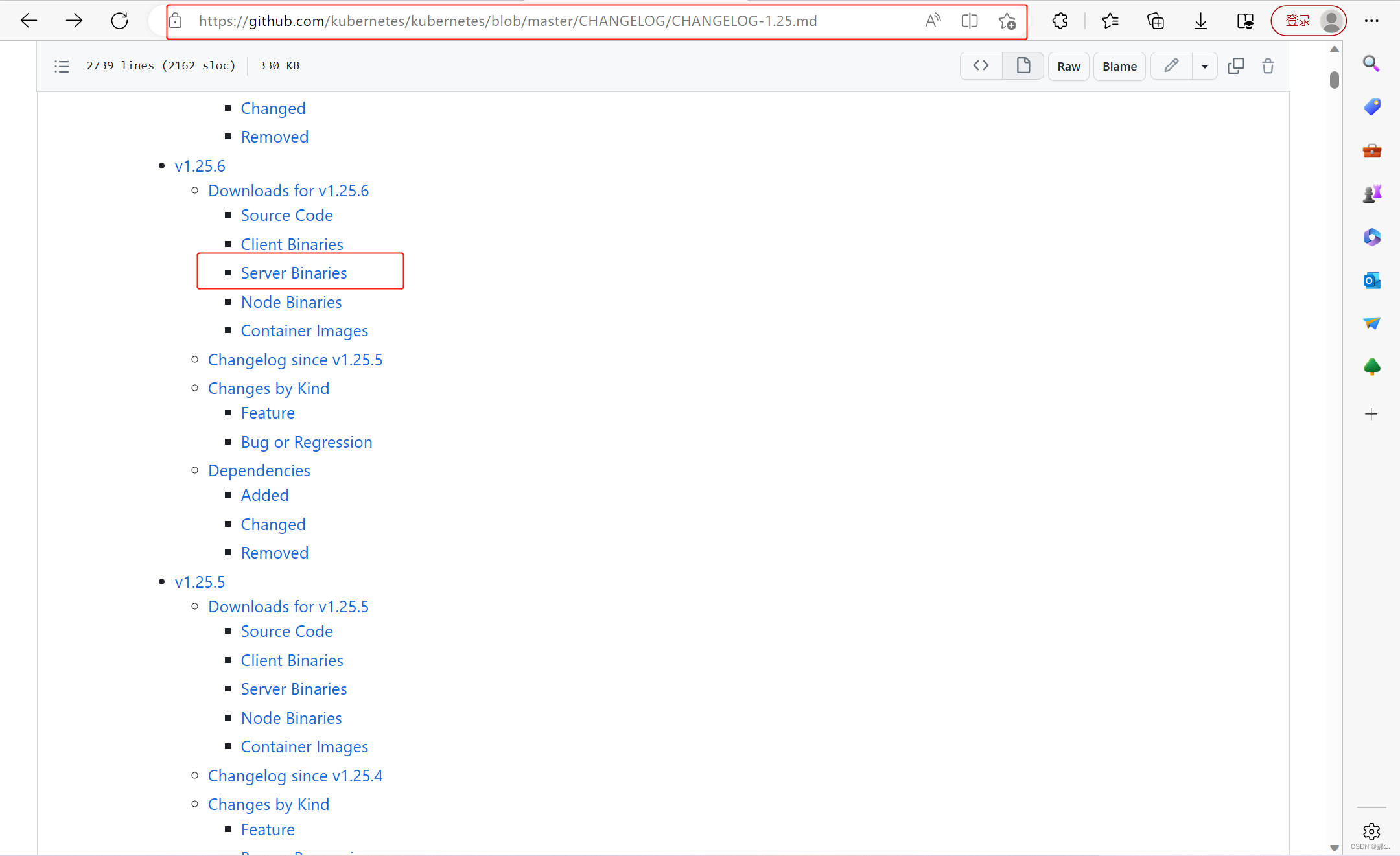

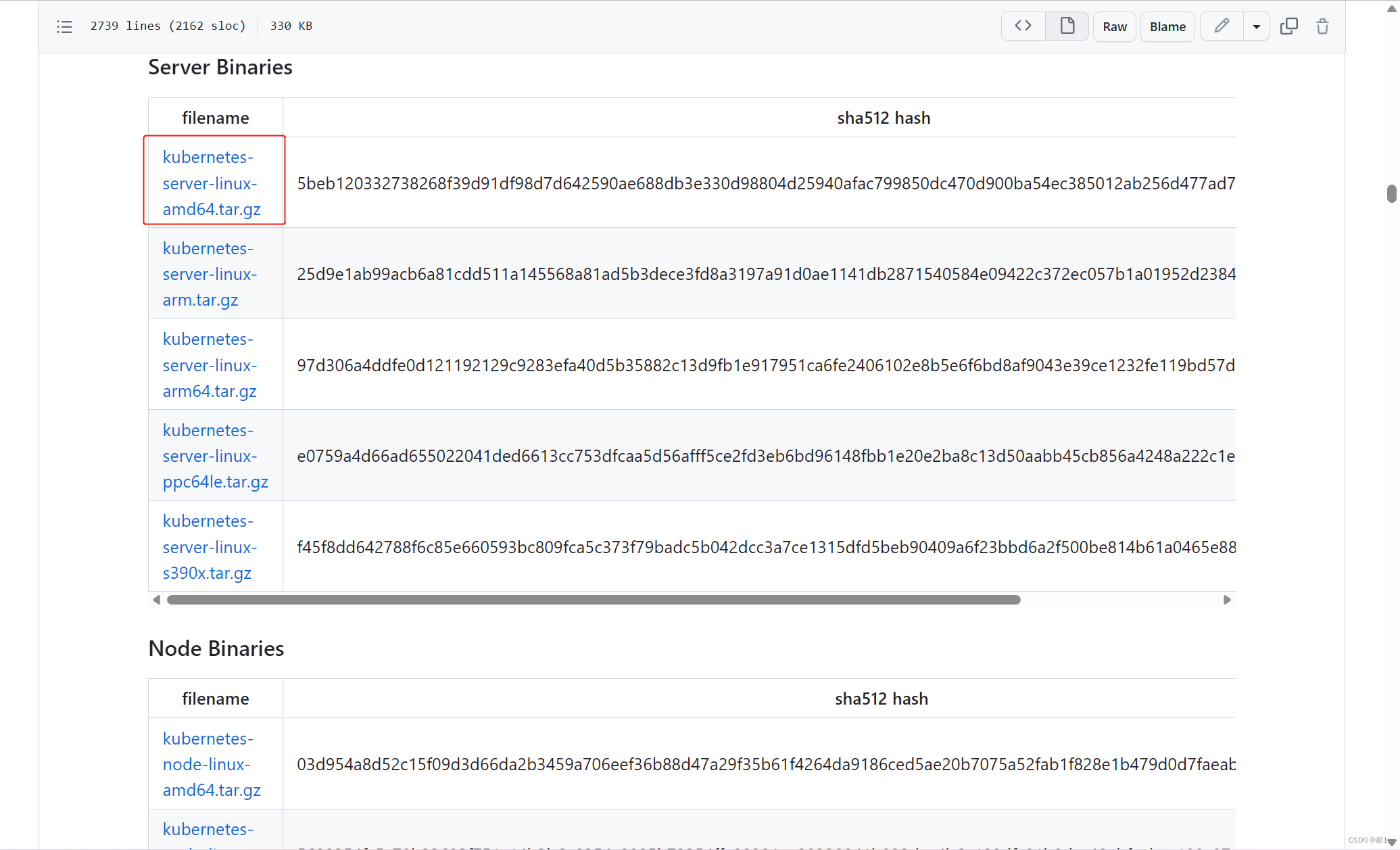

Kubernetes 软件包下载

部署 api-server

生成 apiserver 证书及 token 文件

创建 apiserver 服务配置文件

创建 apiserver 服务管理配置文件

部署 kubectl

部署 kube-controller-manager

部署 kube-scheduler

工作节点(worker node)部署

部署 kubelet(在 k8s-master1 上操作)

部署 kube-proxy

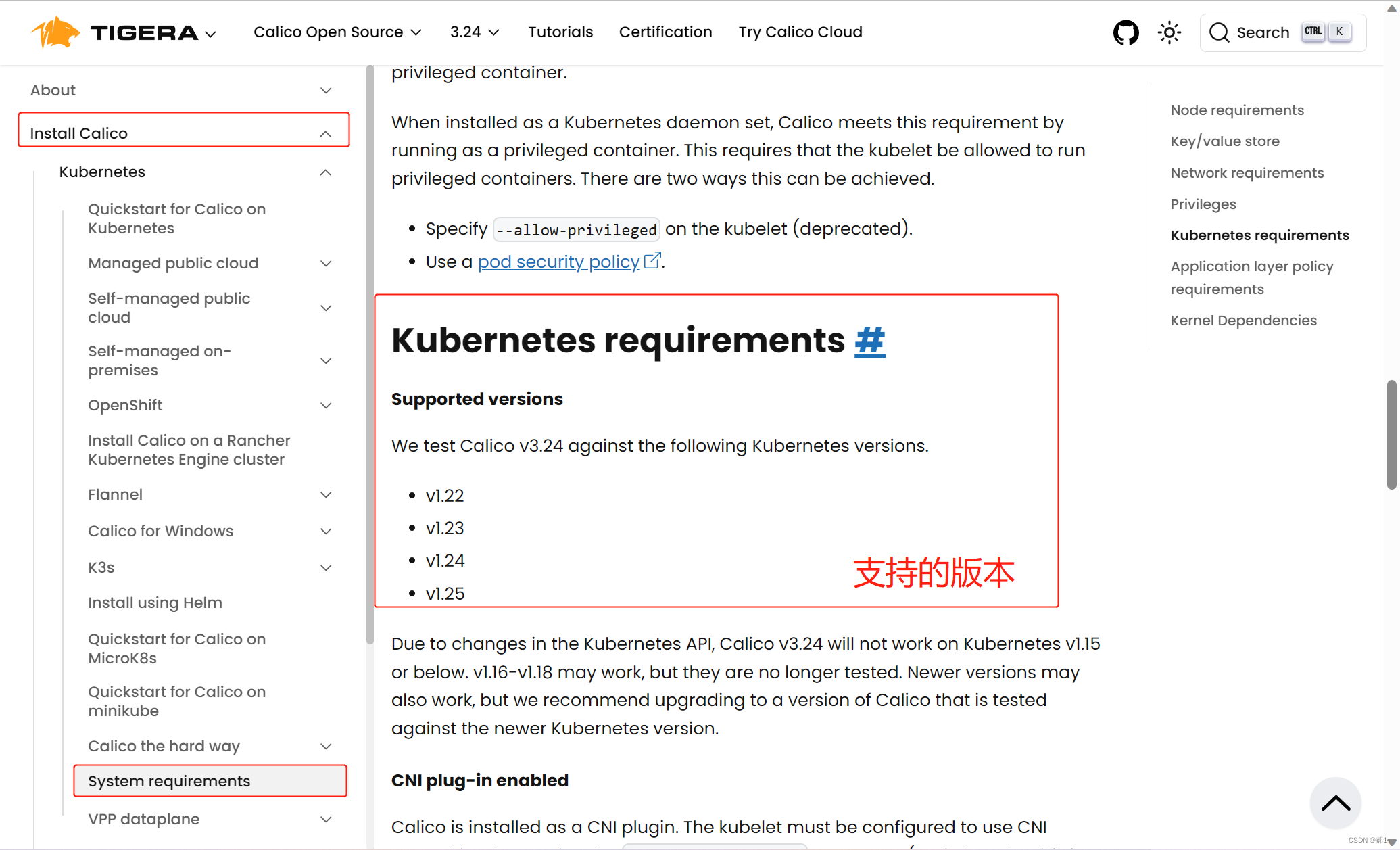

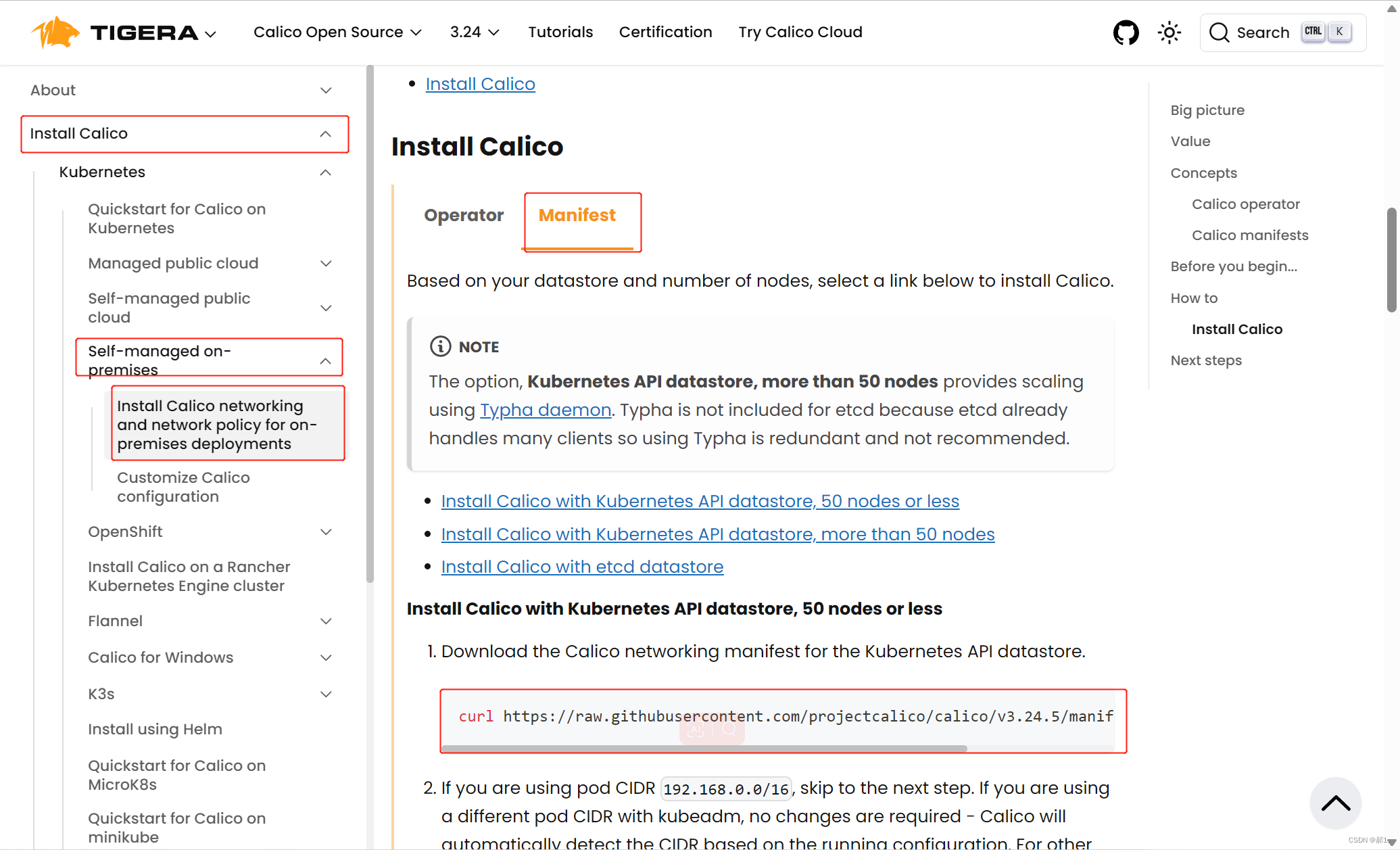

网络组件部署 Calico

下载 calico.yaml

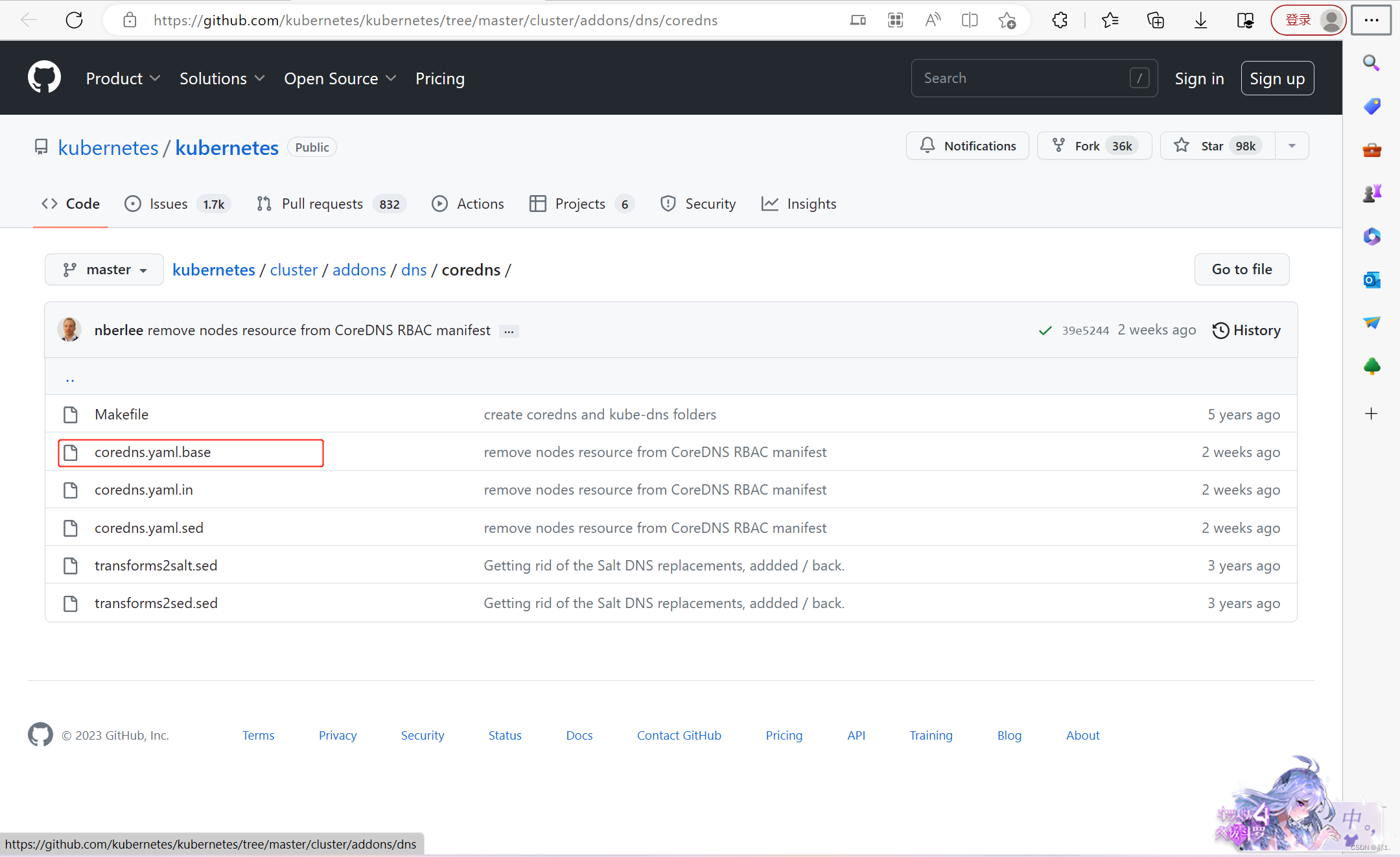

部署 CoreDNS

安装 keepalived+nginx 实现 k8s apiserver 高可用

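kubeadm vs. binary installation of k8s: when to use each

kubeadm is the official open-source tool for quickly bootstrapping a Kubernetes cluster, and it is currently the more convenient and recommended approach. Two commands, kubeadm init and kubeadm join, are enough to create a cluster. When k8s is initialized with kubeadm, the control-plane components run as pods and can therefore recover from failures automatically.

Because kubeadm automates the deployment, it creates the certificates and component manifests for you. That simplifies the work, but it also hides many details, so you get little exposure to the individual modules. If you do not understand the k8s architecture and components well, problems are harder to troubleshoot.

kubeadm suits scenarios where k8s is deployed frequently or where a high degree of automation is required.

Binary installation: you download the binary packages of each component from the official site and install them by hand, which also gives you a more complete understanding of Kubernetes.

Both kubeadm and binary installation are suitable for production and run stably there; which one to choose depends on the needs of the project.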

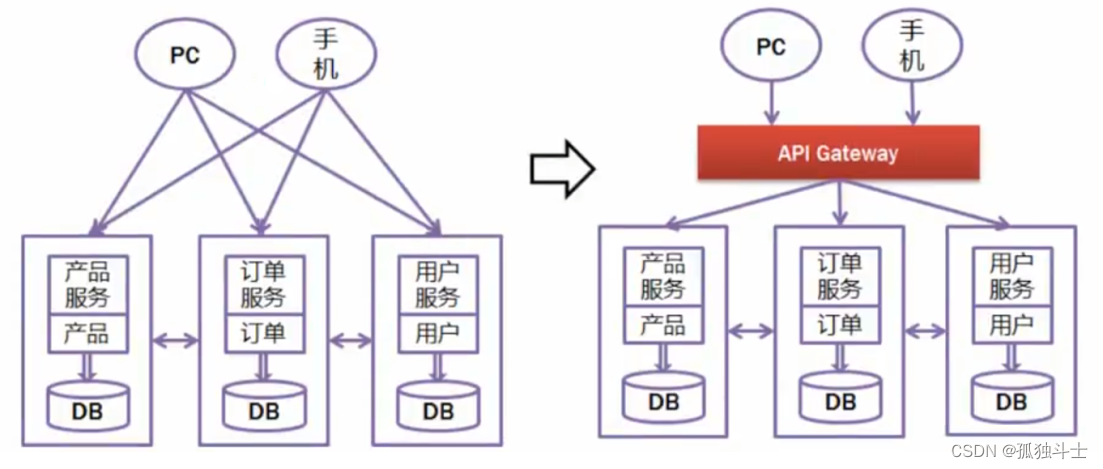

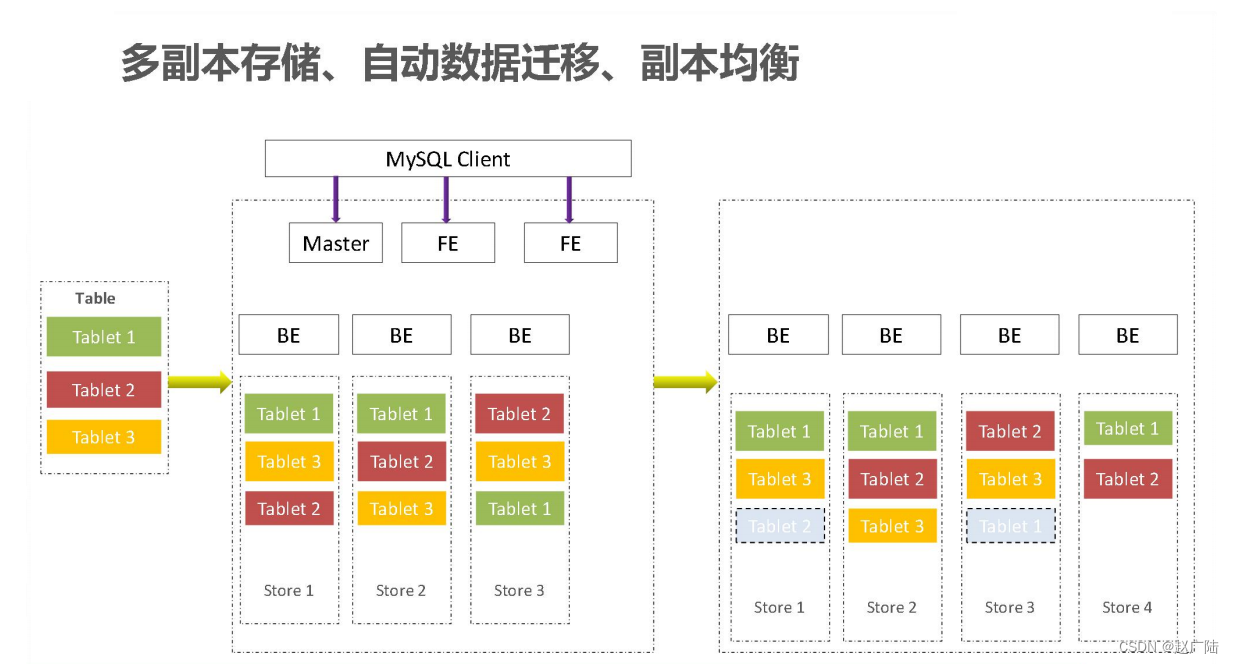

Multi-master high-availability architecture diagram

Cluster environment preparation

- Operating system: CentOS 7.9

- Minimum configuration: 2 GiB RAM / 2 vCPU / 30 GB disk

- VM network: bridged mode

- k8s version: 1.25.x

| k8s cluster role | IP | Hostname | Installed components |
|---|---|---|---|
| Control-plane node (master) | 192.168.100.11 | k8s-master1 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker, keepalived, nginx |
| Control-plane node (master) | 192.168.100.12 | k8s-master2 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker, keepalived, nginx |
| Control-plane node (master) | 192.168.100.13 | k8s-master3 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubelet, kube-proxy, docker, keepalived, nginx |
| Worker node (node) | 192.168.100.14 | k8s-node1 | kubelet, kube-proxy, docker, calico, coredns |
| Worker node (node) | 192.168.100.15 | k8s-node2 | kubelet, kube-proxy, docker, calico, coredns |
| Virtual IP (VIP) | 192.168.100.100 | k8s-master-lb | |

Deployment procedure

# Set the hostname (run the matching command on each host)

hostnamectl set-hostname k8s-master1

hostnamectl set-hostname k8s-master2

hostnamectl set-hostname k8s-master3

hostnamectl set-hostname k8s-node1

hostnamectl set-hostname k8s-node2

# Run the following on every host

[root@k8s-master2 ~]# cat <<END>> /etc/hosts

> 192.168.100.11 k8s-master1

> 192.168.100.12 k8s-master2

> 192.168.100.100 k8s-master-lb

> 192.168.100.13 k8s-master3

> 192.168.100.14 k8s-node1

> 192.168.100.15 k8s-node2

> END
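Appending with >> adds the same entries again every time the step is repeated. A minimal sketch of an idempotent alternative follows; the helper name add_host_entry is hypothetical and is not part of the original procedure.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME  (hypothetical helper)
# Appends "IP NAME" to FILE only if NAME is not already mapped there.
add_host_entry() {
    file=$1 ip=$2 name=$3
    # Look for NAME as a whole field (column 2 or later) on a non-comment line.
    if awk -v n="$name" '
        !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }
    ' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Intended use on a node (as root):
#   add_host_entry /etc/hosts 192.168.100.11 k8s-master1
```

Running it twice with the same arguments leaves a single line for that host name.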

# Disable swap

[root@k8s-master1 ~]# swapoff -a

[root@k8s-master1 ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab

[root@k8s-master1 ~]# free -m

Modify host kernel parameters (all nodes)

# Load the overlay and br_netfilter modules

[root@k8s-master1 ~]# cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF

[root@k8s-master1 ~]# modprobe overlay

[root@k8s-master1 ~]# modprobe br_netfilter

# Verify that the module is loaded:

[root@k8s-master1 ~]# lsmod | grep br_netfilter

br_netfilter 22256 0

bridge 151336 1 br_netfilter

# Set the kernel parameters

[root@k8s-master1 ~]# echo "modprobe br_netfilter" >> /etc/profile

[root@k8s-master1 ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

# Apply the kernel parameters that were just written

[root@k8s-master1 ~]# sysctl -p /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1
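To confirm that the file contains what the later steps rely on, a check along these lines could be used. check_sysctl_conf is a hypothetical helper; it only inspects the file passed to it and does not query the running kernel.

```shell
#!/bin/sh
# check_sysctl_conf FILE  (hypothetical helper)
# Succeeds only if the three keys this guide needs are set to 1 in FILE.
check_sysctl_conf() {
    conf=$1
    missing=0
    for key in net.bridge.bridge-nf-call-ip6tables \
               net.bridge.bridge-nf-call-iptables \
               net.ipv4.ip_forward; do
        # Use the last assignment of the key and strip blanks around the value.
        val=$(awk -F= -v k="$key" '
            { gsub(/[ \t]/, "", $1) }
            $1 == k { v = $2 }
            END { gsub(/[ \t]/, "", v); print v }
        ' "$conf")
        if [ "$val" != "1" ]; then
            echo "not set to 1: $key"
            missing=1
        fi
    done
    return "$missing"
}

# Intended use on a node:
#   check_sysctl_conf /etc/sysctl.d/k8s.conf && echo "sysctl file looks complete"
```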

# Load the modules automatically at boot

[root@k8s-master1 ~]# systemctl enable --now systemd-modules-load.service

Configure the Aliyun repo sources (all nodes)

Configure the Aliyun repo source for installing Docker and containerd

[root@k8s-master1 ~]# yum install yum-utils -y

[root@k8s-master1 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Configure the Aliyun repo source for the k8s components (all nodes)

cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

EOF

Host system tuning (all nodes)

# Raise the resource limits

[root@k8s-master1 ~]# ulimit -SHn 65535

[root@k8s-master1 ~]# cat <<EOF >> /etc/security/limits.conf

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF
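A soft limit that is larger than its hard limit cannot take effect as written, so it is worth checking the pairs before relying on them. The following is a sketch only: check_limits is a hypothetical helper, and it assumes values are either plain numbers or the word unlimited.

```shell
#!/bin/sh
# check_limits FILE  (hypothetical helper)
# Reports every "<domain> <item>" pair whose soft value exceeds its hard value.
check_limits() {
    awk '
        /^[ \t]*#/ || NF < 4 { next }        # skip comments and short lines
        { key = $1 " " $3 }                  # e.g. "* nofile"
        $2 == "soft" { soft[key] = $4 }
        $2 == "hard" { hard[key] = $4 }
        END {
            bad = 0
            for (k in soft) {
                if (!(k in hard)) continue
                if (hard[k] == "unlimited") continue
                if (soft[k] == "unlimited" || soft[k] + 0 > hard[k] + 0) {
                    print "soft > hard: " k " (" soft[k] " > " hard[k] ")"
                    bad = 1
                }
            }
            exit bad
        }
    ' "$1"
}

# Intended use on a node:
#   check_limits /etc/security/limits.conf
```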

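Enable ipvs (all nodes)

Without ipvs, kube-proxy forwards packets with iptables, which is less efficient when there are many Services, so enabling ipvs is commonly recommended:

# Write the ipvs module-loading script

[root@k8s-master1 ~]# vim /etc/sysconfig/modules/ipvs.modules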

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in ${ipvs_modules}; do

/sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1

if [ $? -eq 0 ]; then

/sbin/modprobe ${kernel_module}

fi

done

[root@k8s-master1 ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26583 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0

ip_vs_lblcr 12922 0

ip_vs_lblc 12819 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs_wlc 12519 0

ip_vs_lc 12516 0

ip_vs 145458 22 ip_vs_dh,ip_vs_lc,ip_vs_nq,ip_vs_rr,ip_vs_sh,ip_vs_ftp,ip_vs_sed,ip_vs_wlc,ip_vs_wrr,ip_vs_lblcr,ip_vs_lblc

nf_conntrack 139264 2 ip_vs,nf_nat

libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack

# Suppress the mail notification

# Removes the "You have new mail in /var/spool/mail/root" message

echo "unset MAILCHECK" >> /etc/profile

source /etc/profile

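# Empty the mailbox to free the space it occupies

cat /dev/null > /var/spool/mail/root

Upgrade the Linux kernel (all nodes)

# CentOS 7.9 ships kernel 3.10. For Kubernetes 1.24 and later, this guide upgrades the Linux kernel to a 5.x release.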

[root@k8s-master1 ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

[root@k8s-master1 ~]# yum install https://www.elrepo.org/elrepo-release-7.el7.elrepo.noarch.rpm -y

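# List the available kernel packages: kernel-ml is the mainline (latest stable) kernel, kernel-lt is the long-term support kernel

[root@k8s-master1 ~]# yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

# Install the latest long-term support kernel (recommended)

[root@k8s-master1 ~]# yum --enablerepo=elrepo-kernel install -y kernel-lt

# List the kernels currently installed on the system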

[root@k8s-master1 ~]# awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

0 : CentOS Linux (5.4.242-1.el7.elrepo.x86_64) 7 (Core)

1 : CentOS Linux (3.10.0-1160.el7.x86_64) 7 (Core)

2 : CentOS Linux (0-rescue-e28c60d9e458469ebed5e1bc6967f765) 7 (Core)
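grub2-set-default takes the index shown in the listing above. If the kernel order differs between hosts, the index can be looked up instead of assumed. A sketch, with grub_entry_index as a hypothetical helper that reuses the same awk field splitting:

```shell
#!/bin/sh
# grub_entry_index CFG PATTERN  (hypothetical helper)
# Prints the zero-based index of the first menuentry whose title contains
# PATTERN; returns non-zero if no entry matches.
grub_entry_index() {
    cfg=$1 pattern=$2
    awk -F"'" -v p="$pattern" '
        $1 == "menuentry " {
            if (index($2, p) > 0) { print i + 0; found = 1; exit }
            i++
        }
        END { if (!found) exit 1 }
    ' "$cfg"
}

# Intended use on a node (as root):
#   grub2-set-default "$(grub_entry_index /etc/grub2.cfg elrepo)"
```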

# Change the default boot kernel on all nodes

[root@k8s-master1 ~]# grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

Generating grub configuration file ...

Found linux image: /boot/vmlinuz-5.4.242-1.el7.elrepo.x86_64

Found initrd image: /boot/initramfs-5.4.242-1.el7.elrepo.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-1160.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-1160.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-e28c60d9e458469ebed5e1bc6967f765

Found initrd image: /boot/initramfs-0-rescue-e28c60d9e458469ebed5e1bc6967f765.img

done

[root@k8s-master1 ~]# grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

# Check that the default kernel is 5.4

[root@k8s-master1 ~]# grubby --default-kernel

/boot/vmlinuz-5.4.242-1.el7.elrepo.x86_64

# Reboot all nodes, then check that the running kernel is 5.4

[root@k8s-master1 ~]# reboot

[root@k8s-master1 ~]# uname -r

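5.4.242-1.el7.elrepo.x86_64

Configure passwordless SSH login (run on k8s-master1)

k8s-master1 logs in to the other nodes without a password. During installation, the configuration files and certificates are all generated on k8s-master1, and the cluster is also managed from k8s-master1. Set up the key as follows: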

[root@k8s-master1 ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:5ud1cdQnESsN+MpWjgzhDXQIKO1xxNfcOa57ClhQBZk root@k8s-master1

The key's randomart image is:

+---[RSA 2048]----+

| . +oo+Ooo..o. |

| . + o.E.+ +o o.|

| o o.o + o..+ +|

| . .o . +. o.|

| S+ * . .|

| = B . o |

| . o.... . |

| +.... |

| oo |

+----[SHA256]-----+

[root@k8s-master1 ~]#

# Answer yes, then enter the password of each host

[root@k8s-master1 ~]# for i in k8s-master1 k8s-master2 k8s-master3 k8s-node1 k8s-node2;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

Install Docker and the containerd container runtime (all nodes)

[root@k8s-master1 ~]# yum -y install docker-ce docker-ce-cli containerd.io docker-compose-plugin

# Start Docker now and enable it at boot

[root@k8s-master1 ~]# systemctl enable docker.service --now

# Next, generate the containerd configuration file

[root@k8s-master1 ~]# mkdir -p /etc/containerd

[root@k8s-master1 ~]# containerd config default > /etc/containerd/config.toml

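# Edit the configuration file:

[root@k8s-master1 ~]# vim /etc/containerd/config.toml

# Change SystemdCgroup = false to SystemdCgroup = true

# Change sandbox_image = "k8s.gcr.io/pause:3.6" to sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.7"

# After editing, the two lines read as follows (the leading numbers are the line numbers shown by vim and may differ between containerd versions):

61 sandbox_image="registry.aliyuncs.com/google_containers/pause:3.7"

125 SystemdCgroup = true

# Start containerd now and enable it at boot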

[root@k8s-master1 ~]# systemctl enable containerd --now

Created symlink from /etc/systemd/system/multi-user.target.wants/containerd.service to /usr/lib/systemd/system/containerd.service.

# Configure crictl, which is used to debug Kubernetes nodes

[root@k8s-master1 ~]# cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

[root@k8s-master1 ~]# systemctl restart containerd

Configure the containerd registry mirrors

[root@k8s-master1 ~]# vim /etc/containerd/config.toml

145 config_path = "/etc/containerd/certs.d"

[root@k8s-master1 ~]# mkdir /etc/containerd/certs.d/docker.io/ -p

[root@k8s-master1 ~]# vim /etc/containerd/certs.d/docker.io/hosts.toml

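[host."https://vh3bm52y.mirror.aliyuncs.com"]

capabilities = ["pull"]

[host."https://registry.docker-cn.com"]

capabilities = ["pull"]

# Restart containerd

[root@k8s-master1 ~]# systemctl restart containerd

Configure the Docker registry mirrors and cgroup driver (all nodes)

# Change Docker's cgroup driver from the default cgroupfs to systemd; Docker and the kubelet must use the same cgroup driver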

[root@k8s-master1 ~]# mkdir -p /etc/docker

[root@k8s-master1 ~]# vim /etc/docker/daemon.json

{

"registry-mirrors":["https://hlfcd01t.mirror.aliyuncs.com","https://registry.docker-cn.com","https://docker.mirrors.ustc.edu.cn","https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

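[root@k8s-master1 ~]# systemctl daemon-reload && systemctl restart docker

Set up the etcd cluster (run on master1)

# etcd is a highly available key-value store that holds the state of k8s resources and network information; all changes to the data in etcd go through the API server

# Create the etcd working directories

[root@k8s-master1 ~]# mkdir -p /etc/etcd

[root@k8s-master1 ~]# mkdir -p /etc/etcd/ssl

[root@k8s-master1 ~]# mkdir -p /var/lib/etcd/default.etcd

# Install the cfssl certificate-signing tools

cfssl is an open-source PKI/TLS toolkit from CloudFlare, written in Go. Its main programs are:

cfssl, the CFSSL command-line tool;

cfssljson, which takes the JSON output of cfssl and writes the certificate, key, CSR, and bundle to files.

# The certificate steps below run in the working directory /data/work

[root@k8s-master1 ~]# mkdir -p /data/work && cd /data/work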

[root@k8s-master1 work]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@k8s-master1 work]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@k8s-master1 work]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@k8s-master1 work]# ls

cfssl-certinfo_linux-amd64 cfssljson_linux-amd64 cfssl_linux-amd64

[root@k8s-master1 work]# chmod +x cfssl*

[root@k8s-master1 work]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@k8s-master1 work]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@k8s-master1 work]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

[root@k8s-master1 work]# cfssl version

Version: 1.2.0

Revision: dev

Runtime: go1.6

# Create the CA certificate signing request file

[root@k8s-master1 work]# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

],

"ca": {

"expiry": "87600h"

}

}

# Generate the CA certificate

[root@k8s-master1 work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2023/05/03 15:41:21 [INFO] generating a new CA key and certificate from CSR

2023/05/03 15:41:21 [INFO] generate received request

2023/05/03 15:41:21 [INFO] received CSR

2023/05/03 15:41:21 [INFO] generating key: rsa-2048

2023/05/03 15:41:21 [INFO] encoded CSR

2023/05/03 15:41:21 [INFO] signed certificate with serial number 283743141116621611026606940086884917874781978486

# Configure the CA signing policy

[root@k8s-master1 work]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

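# server auth: clients can use this CA to verify the certificates presented by servers.

# client auth: servers can use this CA to verify the certificates presented by clients.

# Create the etcd certificate signing request file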

[root@k8s-master1 work]# vim etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.100.11",

"192.168.100.12",

"192.168.100.13",

"192.168.100.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}]

}

# The IPs in the hosts field above are the internal communication IPs of all etcd nodes; you can reserve a few extra IPs to make later scale-out easier
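When IPs are reserved for future scale-out, writing the hosts array by hand becomes error-prone. A small sketch that prints the array from a list of addresses; csr_hosts_json is a hypothetical helper, and its output still has to be pasted into etcd-csr.json by hand.

```shell
#!/bin/sh
# csr_hosts_json HOST...  (hypothetical helper)
# Prints the arguments as a JSON array suitable for the "hosts" field of a CSR.
csr_hosts_json() {
    printf '['
    sep=''
    for h in "$@"; do
        printf '%s"%s"' "$sep" "$h"
        sep=', '
    done
    printf ']\n'
}

# The addresses used in this guide:
csr_hosts_json 127.0.0.1 192.168.100.11 192.168.100.12 192.168.100.13 192.168.100.100
```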

# Generate the etcd certificate

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2023/05/03 15:45:23 [INFO] generate received request

2023/05/03 15:45:23 [INFO] received CSR

2023/05/03 15:45:23 [INFO] generating key: rsa-2048

2023/05/03 15:45:24 [INFO] encoded CSR

2023/05/03 15:45:24 [INFO] signed certificate with serial number 10818560920006802419549402238020307296834685735

2023/05/03 15:45:24 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master1 work]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd.csr etcd-csr.json etcd-key.pem etcd.pem

Deploy the etcd cluster

[root@k8s-master1 ~]# wget https://github.com/etcd-io/etcd/releases/download/v3.5.2/etcd-v3.5.2-linux-amd64.tar.gz

[root@k8s-master1 ~]# tar -zxvf etcd-v3.5.2-linux-amd64.tar.gz

[root@k8s-master1 ~]# cp -p etcd-v3.5.2-linux-amd64/etcd* /usr/local/bin/

# Distribute the binaries to the other masters

[root@k8s-master1 ~]# scp etcd-v3.5.2-linux-amd64/etcd* k8s-master2:/usr/local/bin/

[root@k8s-master1 ~]# scp etcd-v3.5.2-linux-amd64/etcd* k8s-master3:/usr/local/bin/

[root@k8s-master1 ~]# cat > /etc/etcd/etcd.conf <<"EOF"

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.11:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.11:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.11:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.100.11:2380,etcd2=https://192.168.100.12:2380,etcd3=https://192.168.100.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

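# ETCD_NAME: node name, unique within the cluster

# ETCD_DATA_DIR: data directory

# ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

# ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

# ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

# ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

# ETCD_INITIAL_CLUSTER: addresses of all cluster members

# ETCD_INITIAL_CLUSTER_TOKEN: cluster token

# ETCD_INITIAL_CLUSTER_STATE: state when joining; new for a new cluster, existing to join an existing cluster (used when scaling out)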
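Only the node name and the node's own IP differ between the three members, so the file can also be generated per node rather than copied and edited by hand. A minimal sketch, assuming the member names and addresses used in this guide; render_etcd_conf is a hypothetical helper.

```shell
#!/bin/sh
# render_etcd_conf NAME IP  (hypothetical helper)
# Prints an etcd.conf for one member; only NAME and IP vary per node.
render_etcd_conf() {
    name=$1 ip=$2
    # Member list taken from this guide; adjust it if your addresses differ.
    cluster="etcd1=https://192.168.100.11:2380,etcd2=https://192.168.100.12:2380,etcd3=https://192.168.100.13:2380"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,http://127.0.0.1:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Example: print the file for the second member.
render_etcd_conf etcd2 192.168.100.12
```

The output could be redirected into /etc/etcd/etcd.conf on the matching node.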

[root@k8s-master1 ~]# cat > /usr/lib/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

[root@k8s-master1 ~]# cd /data/work/

[root@k8s-master1 work]# cp ca*.pem /etc/etcd/ssl/

[root@k8s-master1 work]# cp etcd*.pem /etc/etcd/ssl/

[root@k8s-master1 work]# ls /etc/etcd/ssl/

ca-key.pem ca.pem etcd-key.pem etcd.pem

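# Copy the etcd configuration to the other master nodes (the /etc/etcd/ssl directory must already exist on them); the etcd node name and IP addresses then have to be edited by hand on each node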

[root@k8s-master1 ~]# for i in k8s-master2 k8s-master3;do scp /etc/etcd/etcd.conf $i:/etc/etcd/; done;

etcd.conf 100% 535 372.8KB/s 00:00

etcd.conf 100% 535 287.5KB/s 00:00

[root@k8s-master1 ~]# for i in k8s-master2 k8s-master3; do scp /etc/etcd/ssl/* $i:/etc/etcd/ssl; done

ca-key.pem 100% 1679 725.6KB/s 00:00

ca.pem 100% 1359 1.5MB/s 00:00

etcd-key.pem 100% 1675 1.7MB/s 00:00

etcd.pem 100% 1444 1.2MB/s 00:00

ca-key.pem 100% 1679 569.7KB/s 00:00

ca.pem 100% 1359 1.3MB/s 00:00

etcd-key.pem 100% 1675 2.0MB/s 00:00

etcd.pem 100% 1444 2.1MB/s 00:00

[root@k8s-master1 ~]# for i in k8s-master2 k8s-master3;do scp /usr/lib/systemd/system/etcd.service $i:/usr/lib/systemd/system/; done

etcd.service 100% 634 221.0KB/s 00:00

etcd.service 100% 634 454.2KB/s 00:00

# Edit the configuration file on each node

# On 192.168.100.12

[root@k8s-master2 ~]# mkdir -p /var/lib/etcd/

[root@k8s-master2 ~]# vim /etc/etcd/etcd.conf

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.12:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.12:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.12:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.12:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.100.11:2380,etcd2=https://192.168.100.12:2380,etcd3=https://192.168.100.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

# On 192.168.100.13

[root@k8s-master3 ~]# mkdir -p /var/lib/etcd/

[root@k8s-master3 ~]# vim /etc/etcd/etcd.conf

#[Member]

ETCD_NAME="etcd3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.100.13:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.100.13:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.100.13:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.100.13:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://192.168.100.11:2380,etcd2=https://192.168.100.12:2380,etcd3=https://192.168.100.13:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

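# Start etcd (run on all three masters; the first member does not report ready until enough members have started to form a quorum)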

[root@k8s-master1 ~]# systemctl daemon-reload

[root@k8s-master1 ~]# systemctl enable --now etcd.service

[root@k8s-master1 ~]# systemctl status etcd

# Check the cluster health

[root@k8s-master1 ~]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://192.168.100.11:2379,https://192.168.100.12:2379,https://192.168.100.13:2379 endpoint health

+-----------------------------+--------+-------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+-----------------------------+--------+-------------+-------+

| https://192.168.100.13:2379 | true | 67.397037ms | |

| https://192.168.100.11:2379 | true | 69.496046ms | |

| https://192.168.100.12:2379 | true | 64.810984ms | |

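+-----------------------------+--------+-------------+-------+

Download the Kubernetes packages

Download kubernetes-server-linux-amd64.tar.gz from the server binaries listed in CHANGELOG-1.25.md in the kubernetes/kubernetes repository on GitHub.

# Upload the package to k8s-master1, then extract it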

[root@k8s-master1 ~]# tar -zxvf kubernetes-server-linux-amd64.tar.gz

[root@k8s-master1 ~]# cd kubernetes/server/bin/

[root@k8s-master1 bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

# Distribute the binaries

[root@k8s-master1 bin]# scp kube-apiserver kube-controller-manager kube-scheduler kubectl k8s-master2:/usr/local/bin/

[root@k8s-master1 bin]# scp kube-apiserver kube-controller-manager kube-scheduler kubectl k8s-master3:/usr/local/bin/

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-master1:/usr/local/bin

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-master2:/usr/local/bin

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-master3:/usr/local/bin

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-node1:/usr/local/bin

[root@k8s-master1 bin]# scp kubelet kube-proxy k8s-node2:/usr/local/bin

[root@k8s-master1 bin]#

# Create the directories on the cluster nodes (all nodes)

[root@k8s-master2 ~]# mkdir -p /etc/kubernetes/

[root@k8s-master2 ~]# mkdir -p /etc/kubernetes/ssl

[root@k8s-master2 ~]# mkdir -p /var/log/kubernetes

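Deploy the api-server

apiserver: exposes the k8s API. It is the external interface of the whole system and the only entry point for resource operations, called by clients and by the other components. It provides create, read, update, and delete operations on all k8s resource objects (Pod, Deployment, Service, and so on), acts as the data bus and data hub of the system, provides authentication, authorization, admission control, and API registration and discovery, and persists objects to etcd. Think of it as the "service counter".

# Create the apiserver certificate signing request file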

[root@k8s-master1 bin]# cd /data/work/

[root@k8s-master1 work]# cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.100.11",

"192.168.100.12",

"192.168.100.13",

"192.168.100.14",

"192.168.100.15",

"192.168.100.100",

"10.255.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

]

}

EOF
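The hosts list above includes 10.255.0.1, the first address of a 10.255.0.0/16 service network. If a different service CIDR is chosen, the address to put in the CSR can be derived as in this sketch. first_service_ip is a hypothetical helper and assumes the CIDR is written with its network base address (no masking is applied).

```shell
#!/bin/sh
# first_service_ip CIDR  (hypothetical helper)
# Prints the first usable address of an IPv4 CIDR, e.g. 10.255.0.0/16 -> 10.255.0.1.
first_service_ip() {
    cidr=$1
    net=${cidr%/*}              # drop the "/prefix" part
    old_ifs=$IFS
    IFS=.
    set -- $net                 # split the address into its four octets
    IFS=$old_ifs
    # Pack the octets into one integer, add 1, then unpack again.
    n=$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 + 1 ))
    printf '%d.%d.%d.%d\n' \
        $(( (n >> 24) & 255 )) $(( (n >> 16) & 255 )) \
        $(( (n >> 8) & 255 ))  $(( n & 255 ))
}

first_service_ip 10.255.0.0/16
```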

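# If the hosts field is not empty, it must list every IP (including the VIP) and domain name authorized to use the certificate. Because this certificate is used by the Kubernetes master cluster, include the IPs of all the nodes; to make later scale-out easier, you can add a few reserved IPs. Also include the first IP of the service network (normally the first IP of the range given to kube-apiserver with service-cluster-ip-range, here 10.255.0.1).

Generate the apiserver certificate and token file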

[root@k8s-master1 work]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

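# Notes:

Enabling the TLS bootstrapping mechanism

Once TLS authentication is enabled on the master apiserver, the kubelet on every node needs a valid certificate signed by the CA that the apiserver uses before it can talk to the apiserver. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster more complex.

To simplify this, Kubernetes introduced TLS bootstrapping to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet's certificate is signed dynamically through the apiserver. This approach is strongly recommended on nodes. It is currently used mainly for the kubelet; for kube-proxy we still issue a single certificate ourselves.

Bootstrapping exists in many systems: a bootstrap program is typically preconfigured and loaded at power-on or system start to set up a given environment. The kubelet can likewise load such a configuration file at startup, with content similar to the following: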

apiVersion: v1

clusters: null

contexts:

- context:

cluster: kubernetes

user: kubelet-bootstrap

name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kubelet-bootstrap

user: {}

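How TLS bootstrapping works:

1. The role of TLS

TLS encrypts the communication and prevents eavesdropping by a man in the middle. If the certificate is not trusted, no connection to the apiserver can be established at all, let alone any check of whether the client may request particular content.

2. The role of RBAC

Once TLS has secured the communication, permissions are handled by RBAC (other authorization models, such as ABAC, can also be used). RBAC defines which APIs a user or group (the subject) may call. When combined with TLS client certificates, the apiserver reads the CN field of the client certificate as the user name and the O field as the group.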
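To see which user and group a client certificate will be mapped to, its subject can be printed (for example with openssl x509 -noout -subject -in on the certificate file) and the CN and O fields picked out. The sketch below only parses a subject string; subject_field is a hypothetical helper, and it assumes the comma-separated "KEY = value" layout printed by OpenSSL 1.1.1 and later, with no commas inside values. Older OpenSSL releases print a slash-separated subject that would need different parsing.

```shell
#!/bin/sh
# subject_field SUBJECT FIELD  (hypothetical helper)
# Prints the value of FIELD (for example CN or O) from a certificate subject
# written as "C = CN, ST = Beijing, O = system:masters, CN = admin".
subject_field() {
    printf '%s\n' "$1" |
        sed 's/^subject= *//' |     # drop the prefix printed by openssl, if any
        tr ',' '\n' |               # one KEY = value pair per line
        sed 's/^ *//' |
        awk -F' *= *' -v f="$2" '$1 == f { print $2; exit }'
}

subject='C = CN, ST = Beijing, L = Beijing, O = system:masters, OU = system, CN = admin'
echo "user:  $(subject_field "$subject" CN)"
echo "group: $(subject_field "$subject" O)"
```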

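kubelet first-start flow

TLS bootstrapping lets the kubelet request a certificate from the apiserver and then use it to connect to the apiserver. So how does the kubelet connect the first time it starts, when it has no certificate yet?

The apiserver configuration points to a token.csv file that defines a preset user. That user's token, together with the CA certificate of the apiserver, is written into the bootstrap.kubeconfig file used by the kubelet. On its first request, the kubelet uses the CA certificate in bootstrap.kubeconfig to establish a trusted TLS connection to the apiserver, and uses the user token in bootstrap.kubeconfig to declare its identity to the apiserver for RBAC authorization.

token.csv format:

# Format: token,user name,UID,group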

3940fd7fbb391d1b4d861ad17a1f0613,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
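A short sketch of producing and sanity-checking such a line. Both function names are hypothetical, and the pattern only mirrors the layout used in this guide (a 32-character hex token and a quoted group), not every form the apiserver accepts.

```shell
#!/bin/sh
# new_bootstrap_token_line  (hypothetical helper)
# Prints one token.csv line with a random 16-byte token rendered as 32 hex digits.
new_bootstrap_token_line() {
    token=$(head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n')
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token"
}

# valid_token_line LINE  (hypothetical helper)
# Succeeds if LINE looks like: <32 hex digits>,<user>,<uid>,"<group>"
valid_token_line() {
    printf '%s\n' "$1" | grep -Eq '^[0-9a-f]{32},[^,]+,[^,]+,"[^"]+"$'
}

line=$(new_bootstrap_token_line)
valid_token_line "$line" && echo "token line looks well-formed"
```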

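On first start, the kubelet may report a 401 error saying it is not authorized to access the apiserver. By default, the kubelet declares its identity with the preset user token in bootstrap.kubeconfig and then creates a CSR. Unless we do something about it, however, that user has no permissions at all, not even to create a CSR. So a ClusterRoleBinding must be created that binds the preset user kubelet-bootstrap to the built-in ClusterRole system:node-bootstrapper, allowing it to submit CSRs.

Create the apiserver configuration file

[root@k8s-master1 work]# mkdir -p /etc/kubernetes/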

[root@k8s-master1 work]# cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=192.168.100.11 \

--secure-port=6443 \

--advertise-address=192.168.100.11 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.255.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://192.168.100.11:2379,https://192.168.100.12:2379,https://192.168.100.13:2379 \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=4"

EOF

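Notes:

--logtostderr: log to standard error instead of files (set to false here so that logs go to --log-dir)

--v: log verbosity level

--log-dir: log directory

--etcd-servers: etcd cluster endpoints

--bind-address: listen address

--secure-port: HTTPS port

--advertise-address: address advertised to the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

--token-auth-file: bootstrap token file

--service-node-port-range: port range allocated to NodePort Services by default

--kubelet-client-xxx: client certificate the apiserver uses to access the kubelet

--tls-xxx-file: apiserver HTTPS certificate

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit log settings

Create the apiserver systemd unit file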

[root@k8s-master1 work]# cat > /usr/lib/systemd/system/kube-apiserver.service << "EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

# Copy the files to the master nodes

[root@k8s-master1 work]# mkdir -p /etc/kubernetes/ssl

[root@k8s-master1 work]# cp ca*.pem /etc/kubernetes/ssl/

[root@k8s-master1 work]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@k8s-master1 work]# cp token.csv /etc/kubernetes/

[root@k8s-master1 work]# scp /etc/kubernetes/token.csv k8s-master2:/etc/kubernetes

[root@k8s-master1 work]# scp /etc/kubernetes/token.csv k8s-master3:/etc/kubernetes

[root@k8s-master1 work]# scp /etc/kubernetes/ssl/kube-apiserver*.pem k8s-master2:/etc/kubernetes/ssl

[root@k8s-master1 work]# scp /etc/kubernetes/ssl/kube-apiserver*.pem k8s-master3:/etc/kubernetes/ssl

[root@k8s-master1 work]# scp /etc/kubernetes/ssl/ca*.pem k8s-master2:/etc/kubernetes/ssl

[root@k8s-master1 work]# scp /etc/kubernetes/ssl/ca*.pem k8s-master3:/etc/kubernetes/ssl

[root@k8s-master1 work]# scp /etc/kubernetes/kube-apiserver.conf k8s-master2:/etc/kubernetes/

[root@k8s-master1 work]# scp /etc/kubernetes/kube-apiserver.conf k8s-master3:/etc/kubernetes/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-apiserver.service k8s-master2:/usr/lib/systemd/system/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-apiserver.service k8s-master3:/usr/lib/systemd/system/

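# On master2 and master3, change the --bind-address and --advertise-address IPs in /etc/kubernetes/kube-apiserver.conf to the local IP, then start kube-apiserver on all three masters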
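The per-node edit can be scripted so that only the two address flags change and the --etcd-servers list stays untouched. A sketch under that assumption; localize_apiserver_conf is a hypothetical helper, and it keeps a .bak copy of the original file.

```shell
#!/bin/sh
# localize_apiserver_conf FILE IP  (hypothetical helper)
# Rewrites --bind-address and --advertise-address in FILE to IP.
# Other flags, including --etcd-servers, are left as they are.
localize_apiserver_conf() {
    conf=$1 ip=$2
    sed -E -i.bak \
        -e "s/(--bind-address=)[0-9.]+/\1${ip}/" \
        -e "s/(--advertise-address=)[0-9.]+/\1${ip}/" \
        "$conf"
}

# Intended use on k8s-master2 (as root):
#   localize_apiserver_conf /etc/kubernetes/kube-apiserver.conf 192.168.100.12
```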

[root@k8s-master1 work]# systemctl daemon-reload

[root@k8s-master1 work]# systemctl enable --now kube-apiserver

[root@k8s-master1 work]# systemctl status kube-apiserver

[root@k8s-master1 work]# curl --insecure https://192.168.100.11:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

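}

The 401 above is the expected state at this point: the request simply has not been authenticated.

Deploy kubectl

kubectl is the client tool for operating on k8s resources (create, delete, update, query, and so on). How does kubectl know which cluster to connect to? It needs a kubeconfig file such as /etc/kubernetes/admin.conf (this guide places it at ~/.kube/config). kubectl uses the settings in this file to access k8s resources; the file records the cluster to access and the certificates to use.

# Create the kubectl certificate signing request file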

[root@k8s-master1 work]# cat > admin-csr.json << "EOF"

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

EOF

Note: kube-apiserver later uses RBAC to authorize requests from clients (such as kubelet, kube-proxy, and Pods). kube-apiserver predefines some RBAC bindings; for example, the ClusterRoleBinding cluster-admin binds the group system:masters to the ClusterRole cluster-admin, which grants permission to call every kube-apiserver API. The O field sets the certificate's group to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because its group is the pre-authorized system:masters, it is granted access to all APIs.

Note: this admin certificate is used later to generate the administrator's kubeconfig file. RBAC is now the generally recommended way to control roles and permissions in Kubernetes; Kubernetes uses the certificate's CN field as the User and its O field as the Group. "O" must be system:masters, otherwise the later kubectl create clusterrolebinding command fails.

# Generate the certificate files

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

# Copy the files to the target directory

[root@k8s-master1 work]# cp admin*.pem /etc/kubernetes/ssl/

# Generate the kubeconfig file

# Set the cluster parameters

[root@k8s-master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.100.11:6443 --kubeconfig=kube.config

# Set the client credentials

[root@k8s-master1 work]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

# Set the context parameters

[root@k8s-master1 work]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

# Set the current context

[root@k8s-master1 work]# kubectl config use-context kubernetes --kubeconfig=kube.config

# Install the kubectl configuration file and create the role binding

[root@k8s-master1 work]# mkdir -p ~/.kube

[root@k8s-master1 work]# cp kube.config ~/.kube/config

[root@k8s-master1 work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/config

# Show the cluster information

[root@k8s-master1 work]# kubectl cluster-info

Kubernetes control plane is running at https://192.168.100.11:6443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

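# Show the status of the cluster components (scheduler and controller-manager are reported Unhealthy here because they have not been deployed yet)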

[root@k8s-master1 work]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "https://127.0.0.1:10259/healthz": dial tcp 127.0.0.1:10259: connect: connection refused

controller-manager Unhealthy Get "https://127.0.0.1:10257/healthz": dial tcp 127.0.0.1:10257: connect: connection refused

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

etcd-2 Healthy {"health":"true","reason":""}

# List the resource objects in all namespaces

[root@k8s-master1 work]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.255.0.1 <none> 443/TCP 14m

# Copy the kubectl configuration file to the other master nodes

[root@k8s-master2 ~]# mkdir /root/.kube

[root@k8s-master3 ~]# mkdir /root/.kube

[root@k8s-master1 ~]# scp /root/.kube/config k8s-master2:/root/.kube/

[root@k8s-master1 ~]# scp /root/.kube/config k8s-master3:/root/.kube/

# Configure kubectl command completion

# Run on all three masters

# To enable completion in the current bash shell, first install the bash-completion package

[root@k8s-master1 ~]# yum install -y bash-completion

[root@k8s-master1 ~]# source /usr/share/bash-completion/bash_completion

# Enable completion in the current shell, then add it to ~/.bashrc to make it permanent

[root@k8s-master1 ~]# source <(kubectl completion bash)

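[root@k8s-master1 ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

Deploy kube-controller-manager

controller-manager: the management and control center inside the cluster. It manages Nodes, Pod replicas, service endpoints (Endpoint), namespaces (Namespace), service accounts (ServiceAccount), and resource quotas (ResourceQuota). When a Node goes down unexpectedly, the Controller Manager detects it promptly and runs automated repair, keeping the cluster in its desired state. It interacts with the apiserver, continuously monitors and maintains the health of the cluster's controllers, and handles and recovers from failures. Think of it as the "chief steward".

# Create the kube-controller-manager certificate signing request file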

[root@k8s-master1 work]# cat > kube-controller-manager-csr.json << "EOF"

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"192.168.100.11",

"192.168.100.12",

"192.168.100.13",

"192.168.100.100"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

EOF

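Notes:

The hosts list contains the IPs of all kube-controller-manager nodes, plus the VIP 192.168.100.100;

CN is system:kube-controller-manager;

O is system:kube-controller-manager. The built-in ClusterRoleBinding system:kube-controller-manager, whose subject is the user system:kube-controller-manager (matched against the certificate CN), grants kube-controller-manager the permissions it needs.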

#Generate the kube-controller-manager certificate

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

#Create kube-controller-manager.kubeconfig for kube-controller-manager

#Set the cluster parameters

[root@k8s-master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.100.11:6443 --kubeconfig=kube-controller-manager.kubeconfig

#Set the client authentication parameters

[root@k8s-master1 work]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

#Set the context parameters

[root@k8s-master1 work]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

#Set the current context

[root@k8s-master1 work]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

#Create the kube-controller-manager configuration file

[root@k8s-master1 work]# cat > kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS=" \

--secure-port=10257 \

--bind-address=127.0.0.1 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.255.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=10.0.0.0/16 \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--horizontal-pod-autoscaler-sync-period=10s \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

EOF

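--secure-port=10257, --bind-address=127.0.0.1: listen on the loopback interface, port 10257, for https requests such as /metrics and /healthz;

--kubeconfig: path to the kubeconfig file that kube-controller-manager uses to connect to and authenticate with kube-apiserver;

--authentication-kubeconfig and --authorization-kubeconfig (not set in the configuration above): kube-controller-manager uses them to connect to the apiserver and to authenticate and authorize client requests. kube-controller-manager no longer uses --tls-ca-file to verify the client certificates of https metrics requests. If these two kubeconfig parameters are not configured, client requests to the kube-controller-manager https port are rejected (insufficient permissions).

--cluster-signing-*-file: the CA certificate and key used to sign certificates created through TLS Bootstrap;

--experimental-cluster-signing-duration (not set above; named --cluster-signing-duration since v1.19): validity period of TLS Bootstrap certificates;

--root-ca-file: the CA certificate placed into each container's ServiceAccount, used to verify the kube-apiserver certificate;

--service-account-private-key-file: the private key that signs ServiceAccount tokens; it must pair with the public key given to kube-apiserver through --service-account-key-file;

--service-cluster-ip-range: the Service Cluster IP range; it must match the parameter of the same name on kube-apiserver;

--leader-elect=true: run in clustered mode with leader election; the instance elected leader does the work while the others stay blocked on standby;

--controllers=*,bootstrapsigner,tokencleaner: the list of enabled controllers; tokencleaner automatically removes expired Bootstrap tokens;

--horizontal-pod-autoscaler-*: parameters of the Horizontal Pod Autoscaler controller; --horizontal-pod-autoscaler-sync-period=10s sets how often it re-evaluates its targets;

--tls-cert-file, --tls-private-key-file: the server certificate and private key used when serving metrics over https;

--use-service-account-credentials=true: each controller inside kube-controller-manager uses its own ServiceAccount to access kube-apiserver.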

# Create the systemd service unit file

[root@k8s-master1 work]# cat > /usr/lib/systemd/system/kube-controller-manager.service << "EOF"

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

#Sync the files to the cluster master nodes

[root@k8s-master1 work]# cp kube-controller-manager*.pem /etc/kubernetes/ssl/

[root@k8s-master1 work]# cp kube-controller-manager.kubeconfig /etc/kubernetes/

[root@k8s-master1 work]# cp kube-controller-manager.conf /etc/kubernetes/

[root@k8s-master1 work]# scp kube-controller-manager*.pem k8s-master2:/etc/kubernetes/ssl/

[root@k8s-master1 work]# scp kube-controller-manager*.pem k8s-master3:/etc/kubernetes/ssl/

[root@k8s-master1 work]# scp kube-controller-manager.kubeconfig kube-controller-manager.conf k8s-master2:/etc/kubernetes/

[root@k8s-master1 work]# scp kube-controller-manager.kubeconfig kube-controller-manager.conf k8s-master3:/etc/kubernetes/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-controller-manager.service k8s-master2:/usr/lib/systemd/system/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-controller-manager.service k8s-master3:/usr/lib/systemd/system/

#Start the service (run on all three masters)

[root@k8s-master1 work]# systemctl daemon-reload

[root@k8s-master1 work]# systemctl enable --now kube-controller-manager

[root@k8s-master1 work]# systemctl status kube-controller-manager

[root@k8s-master1 work]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Unhealthy Get "https://127.0.0.1:10259/healthz": dial tcp 127.0.0.1:10259: connect: connection refused

controller-manager Healthy ok

etcd-0 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

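etcd-2 Healthy {"health":"true","reason":""}

Deploy kube-scheduler

scheduler: responsible for Pod scheduling in the k8s cluster. Through the apiserver it watches for newly created Pods, finds all worker nodes that satisfy each Pod's requirements, and runs the scheduling logic. Once a node is chosen, it binds the Pod to that node. Think of it as the "dispatch room".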

#Create the kube-scheduler certificate signing request file

[root@k8s-master1 work]# cat > kube-scheduler-csr.json << "EOF"

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.100.11",

"192.168.100.12",

"192.168.100.13",

"192.168.100.100"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

EOF

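Notes:

The hosts list contains the IPs of all kube-scheduler nodes and the VIP;

CN is system:kube-scheduler;

O is system:kube-scheduler. The built-in ClusterRoleBinding system:kube-scheduler, whose subject is the user system:kube-scheduler (matched against the certificate CN), grants kube-scheduler the permissions it needs.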

#Generate the kube-scheduler certificate

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

#Create the kubeconfig for kube-scheduler (set the cluster parameters first)

[root@k8s-master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.100.11:6443 --kubeconfig=kube-scheduler.kubeconfig

#Set the client authentication parameters

[root@k8s-master1 work]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

#Set the context parameters

[root@k8s-master1 work]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

#Set the current context

[root@k8s-master1 work]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

#Create the service configuration file

[root@k8s-master1 work]# cat > kube-scheduler.conf << "EOF"

KUBE_SCHEDULER_OPTS=" \

--kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \

--leader-elect=true \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2"

EOF

#Create the systemd service unit file

[root@k8s-master1 work]# cat > /usr/lib/systemd/system/kube-scheduler.service << "EOF"

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

#Sync the files to the cluster master nodes

[root@k8s-master1 work]# cp kube-scheduler*.pem /etc/kubernetes/ssl/

[root@k8s-master1 work]# cp kube-scheduler.kubeconfig /etc/kubernetes/

[root@k8s-master1 work]# cp kube-scheduler.conf /etc/kubernetes/

[root@k8s-master1 work]# scp kube-scheduler*.pem k8s-master2:/etc/kubernetes/ssl/

[root@k8s-master1 work]# scp kube-scheduler*.pem k8s-master3:/etc/kubernetes/ssl/

[root@k8s-master1 work]# scp kube-scheduler.kubeconfig kube-scheduler.conf k8s-master2:/etc/kubernetes/

[root@k8s-master1 work]# scp kube-scheduler.kubeconfig kube-scheduler.conf k8s-master3:/etc/kubernetes/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-scheduler.service k8s-master2:/usr/lib/systemd/system/

[root@k8s-master1 work]# scp /usr/lib/systemd/system/kube-scheduler.service k8s-master3:/usr/lib/systemd/system/

#Start the service (on all three masters)

[root@k8s-master1 work]# systemctl daemon-reload

[root@k8s-master1 work]# systemctl enable --now kube-scheduler

[root@k8s-master1 work]# systemctl status kube-scheduler

[root@k8s-master1 work]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

etcd-0 Healthy {"health":"true","reason":""}

controller-manager Healthy ok

etcd-2 Healthy {"health":"true","reason":""}

etcd-1 Healthy {"health":"true","reason":""}

scheduler Healthy ok

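Worker node deployment

Deploy kubelet (run on k8s-master1)

kubelet: the kubelet on every Node periodically calls the API Server's REST interface to report its own status, and the API Server stores that node status in etcd. kubelet also watches Pod information through the API Server and manages the Pods on its Node accordingly: creating, deleting, and updating them.

#Create kubelet-bootstrap.kubeconfig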

[root@k8s-master1 work]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

[root@k8s-master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.100.11:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 work]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 work]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

[root@k8s-master1 work]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

# Grant the bootstrap user permission to request certificates

[root@k8s-master1 work]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

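#Create the kubelet configuration file

# "cgroupDriver": "systemd" must match the cgroup driver of the container runtime configured earlier (docker/containerd). Replace address with the IP of your own master1.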

[root@k8s-master1 work]# cat > kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "192.168.100.11",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.255.0.2"]

}

EOF

#Create the kubelet systemd service unit file

[root@k8s-master1 work]# cat > /usr/lib/systemd/system/kubelet.service << "EOF"

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/usr/local/bin/kubelet \

--bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \

--cert-dir=/etc/kubernetes/ssl \

--kubeconfig=/etc/kubernetes/kubelet.kubeconfig \

--config=/etc/kubernetes/kubelet.json \

--container-runtime-endpoint=unix:///run/containerd/containerd.sock \

--pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.2 \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

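--container-runtime-endpoint: socket of the container runtime, here containerd (the old --network-plugin flag is not used; it was removed together with dockershim in v1.24)

--kubeconfig: used to connect to the apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

--config: the configuration parameter file

--cert-dir: directory where the kubelet certificates are generated

--pod-infra-container-image: image of the pause container that holds each Pod's network namespace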

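#Sync the files to the cluster nodes. Note: after copying, the address field in kubelet.json on each node must be changed by hand to that host's own IP address.

#If you do not want kubelet on the master nodes, send the files only to the worker nodes and install it there.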

[root@k8s-master1 work]# cp kubelet-bootstrap.kubeconfig kubelet.json /etc/kubernetes/

[root@k8s-master1 work]# for i in k8s-master2 k8s-master3 k8s-node1 k8s-node2;do scp /etc/kubernetes/kubelet-bootstrap.kubeconfig /etc/kubernetes/kubelet.json $i:/etc/kubernetes/;done

[root@k8s-master1 work]# for i in k8s-master2 k8s-master3 k8s-node1 k8s-node2;do scp ca.pem $i:/etc/kubernetes/ssl/;done

[root@k8s-master1 work]# for i in k8s-master2 k8s-master3 k8s-node1 k8s-node2;do scp /usr/lib/systemd/system/kubelet.service $i:/usr/lib/systemd/system/;done

#Change the address IP on each node

[root@k8s-master2 ~]# vim /etc/kubernetes/kubelet.json

[root@k8s-master3 ~]# vim /etc/kubernetes/kubelet.json

[root@k8s-node1 ~]# vim /etc/kubernetes/kubelet.json

[root@k8s-node2 ~]# vim /etc/kubernetes/kubelet.json
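
Editing the address by hand on four hosts is easy to get wrong, so the change can also be scripted. The following is a minimal sketch, not part of the original procedure: set_kubelet_address is a hypothetical helper name, and it assumes GNU sed and the single-line "address": "..." layout of the kubelet.json created above. The demo runs on a temporary copy, not on /etc/kubernetes.

```bash
#!/usr/bin/env bash
# Hypothetical helper (an illustration, not from the original guide):
# set the "address" value in a kubelet.json file to the given IP.
set_kubelet_address() {
  local file="$1" ip="$2"
  # Rewrite only the value of the "address" key; every other key is untouched.
  sed -i -E "s#(\"address\":[[:space:]]*\")[^\"]*(\")#\1${ip}\2#" "$file"
}

# Demo on a throwaway file so nothing under /etc is modified.
demo_file="$(mktemp)"
printf '{\n"address": "192.168.100.11",\n"port": 10250\n}\n' > "$demo_file"
set_kubelet_address "$demo_file" "192.168.100.12"
cat "$demo_file"
rm -f "$demo_file"
```

On a real node the call would be something like set_kubelet_address /etc/kubernetes/kubelet.json 192.168.100.12, with the IP taken from the host table at the top of this guide.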

#Create the working directory and start the service (run on all nodes)

[root@k8s-master1 work]# mkdir -p /var/lib/kubelet

[root@k8s-master1 work]# systemctl daemon-reload

[root@k8s-master1 work]# systemctl enable --now kubelet

#Verify the cluster state

#Each node has submitted a CSR. If a request is Pending, approve the bootstrap request:

[root@k8s-master1 work]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-EFeCRq-RmJISKRqAYV6lCmwdAHGLlbqZ42tfVt-I7I8 54s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

node-csr-LBbsiFL2BVxwdARw3h5oTlF560m2UcWMBdcXpCMXpJg 61s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

node-csr-_mBJt9-2c0ozEGfFvhR0IiuAfl4H2zHDS0kCpCSMRYs 57s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

node-csr-evzqSpMfq_7Vrnz3gVZlN7pd8Rq6DJLQRaw0o5BDou4 64s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

node-csr-qsPcjRatznU5_zrL9kJAOXDuNbXecnn6nhRV68mus6M 58s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Pending

#How to approve:

Syntax: kubectl certificate approve followed by a NAME from the kubectl get csr output

[root@k8s-master1 work]# kubectl certificate approve node-csr-EFeCRq-RmJISKRqAYV6lCmwdAHGLlbqZ42tfVt-I7I8

certificatesigningrequest.certificates.k8s.io/node-csr-EFeCRq-RmJISKRqAYV6lCmwdAHGLlbqZ42tfVt-I7I8 approved

[root@k8s-master1 work]# kubectl certificate approve node-csr-LBbsiFL2BVxwdARw3h5oTlF560m2UcWMBdcXpCMXpJg

certificatesigningrequest.certificates.k8s.io/node-csr-LBbsiFL2BVxwdARw3h5oTlF560m2UcWMBdcXpCMXpJg approved

[root@k8s-master1 work]# kubectl certificate approve node-csr-_mBJt9-2c0ozEGfFvhR0IiuAfl4H2zHDS0kCpCSMRYs

certificatesigningrequest.certificates.k8s.io/node-csr-_mBJt9-2c0ozEGfFvhR0IiuAfl4H2zHDS0kCpCSMRYs approved

[root@k8s-master1 work]# kubectl certificate approve node-csr-evzqSpMfq_7Vrnz3gVZlN7pd8Rq6DJLQRaw0o5BDou4

certificatesigningrequest.certificates.k8s.io/node-csr-evzqSpMfq_7Vrnz3gVZlN7pd8Rq6DJLQRaw0o5BDou4 approved

[root@k8s-master1 work]# kubectl certificate approve node-csr-qsPcjRatznU5_zrL9kJAOXDuNbXecnn6nhRV68mus6M

certificatesigningrequest.certificates.k8s.io/node-csr-qsPcjRatznU5_zrL9kJAOXDuNbXecnn6nhRV68mus6M approved
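
With more than a few nodes, copying each CSR name by hand gets tedious. As a sketch under stated assumptions, the filter below picks the Pending names out of kubectl get csr output; pending_csr_names is a hypothetical helper, and the demo feeds it captured sample text (with made-up short names) instead of talking to a cluster.

```bash
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get csr` output on stdin and print
# the NAME column of every request whose CONDITION column is Pending.
pending_csr_names() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Demo with sample output; node-csr-a and node-csr-b are illustrative names.
pending_csr_names <<'EOF'
NAME        AGE   SIGNERNAME                                    REQUESTOR           REQUESTEDDURATION   CONDITION
node-csr-a  54s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   <none>              Pending
node-csr-b  61s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   <none>              Approved,Issued
EOF
```

Against a live cluster this could be combined as kubectl get csr | pending_csr_names | xargs -r -n 1 kubectl certificate approve. Review the list before approving, since this approves every pending request.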

# Check again that the condition has become Approved,Issued

[root@k8s-master1 work]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR REQUESTEDDURATION CONDITION

node-csr-EFeCRq-RmJISKRqAYV6lCmwdAHGLlbqZ42tfVt-I7I8 4m31s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued

node-csr-LBbsiFL2BVxwdARw3h5oTlF560m2UcWMBdcXpCMXpJg 4m38s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued

node-csr-_mBJt9-2c0ozEGfFvhR0IiuAfl4H2zHDS0kCpCSMRYs 4m34s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued

node-csr-evzqSpMfq_7Vrnz3gVZlN7pd8Rq6DJLQRaw0o5BDou4 4m41s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued

node-csr-qsPcjRatznU5_zrL9kJAOXDuNbXecnn6nhRV68mus6M 4m35s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap <none> Approved,Issued

[root@k8s-master1 work]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 NotReady <none> 3m8s v1.25.6

k8s-master2 NotReady <none> 30s v1.25.6

k8s-master3 NotReady <none> 31s v1.25.6

k8s-node1 NotReady <none> 31s v1.25.6

k8s-node2 NotReady <none> 31s v1.25.6

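Note: STATUS is NotReady because no network plugin has been installed yet.

Deploy kube-proxy

kube-proxy: provides network proxying and load balancing and is the key component behind Service communication and load balancing. kube-proxy creates the proxy for Pods: it fetches all Service information from the apiserver and sets up forwarding accordingly, routing requests sent to a Service on to its backend Pods. This gives Kubernetes its cluster-level virtual forwarding network.

#Create the kube-proxy certificate signing request file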

[root@k8s-master1 work]# cat > kube-proxy-csr.json << "EOF"

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "k8s",

"OU": "system"

}

]

}

EOF

#Generate the certificate

[root@k8s-master1 work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

#Create the kubeconfig file

[root@k8s-master1 work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://192.168.100.11:6443 --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 work]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 work]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master1 work]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

#Create the service configuration file

[root@k8s-master1 work]# cat > kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 192.168.100.11

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.0.0.0/16 # Pod CIDR; same value as --cluster-cidr of kube-controller-manager

healthzBindAddress: 192.168.100.11:10256

kind: KubeProxyConfiguration

metricsBindAddress: 192.168.100.11:10249

mode: "ipvs"

EOF

#Create the systemd service unit file

[root@k8s-master1 work]# cat > /usr/lib/systemd/system/kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yaml \

--alsologtostderr=true \

--logtostderr=false \

--log-dir=/var/log/kubernetes \

--v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

#Sync the files to the cluster nodes

#If you do not want kube-proxy on the master nodes, send the files only to the worker nodes and install it there.

[root@k8s-master1 work]# cp kube-proxy*.pem /etc/kubernetes/ssl/

[root@k8s-master1 work]# cp kube-proxy.kubeconfig kube-proxy.yaml /etc/kubernetes/

[root@k8s-master1 work]# for i in k8s-master2 k8s-master3 k8s-node1 k8s-node2;do scp kube-proxy.kubeconfig kube-proxy.yaml $i:/etc/kubernetes/;done

[root@k8s-master1 work]# for i in k8s-master2 k8s-master3 k8s-node1 k8s-node2;do scp /usr/lib/systemd/system/kube-proxy.service $i:/usr/lib/systemd/system/;done

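Note: after copying, manually change the three address fields in kube-proxy.yaml (bindAddress, healthzBindAddress, metricsBindAddress) to the current host's IP.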

[root@k8s-master2 ~]# vim /etc/kubernetes/kube-proxy.yaml

[root@k8s-master3 ~]# vim /etc/kubernetes/kube-proxy.yaml

[root@k8s-node1 ~]# vim /etc/kubernetes/kube-proxy.yaml

[root@k8s-node2 ~]# vim /etc/kubernetes/kube-proxy.yaml
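
The three address fields can also be rewritten in one pass instead of in vim. This is a sketch only: set_kube_proxy_addresses is a hypothetical helper, and it assumes GNU sed and the top-level key layout of the kube-proxy.yaml created above. The ports after the colon are kept as they are.

```bash
#!/usr/bin/env bash
# Hypothetical helper: point bindAddress, healthzBindAddress and
# metricsBindAddress in a kube-proxy.yaml at the given host IP.
set_kube_proxy_addresses() {
  local file="$1" ip="$2"
  sed -i -E \
    -e "s#^(bindAddress:[[:space:]]*).*#\1${ip}#" \
    -e "s#^(healthzBindAddress:[[:space:]]*)[^:]*(:[0-9]+)#\1${ip}\2#" \
    -e "s#^(metricsBindAddress:[[:space:]]*)[^:]*(:[0-9]+)#\1${ip}\2#" \
    "$file"
}

# Demo on a temporary file that mimics the relevant lines.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
bindAddress: 192.168.100.11
healthzBindAddress: 192.168.100.11:10256
metricsBindAddress: 192.168.100.11:10249
mode: "ipvs"
EOF
set_kube_proxy_addresses "$demo_file" "192.168.100.14"
cat "$demo_file"
rm -f "$demo_file"
```

On each node the real target would be /etc/kubernetes/kube-proxy.yaml, followed by a restart of kube-proxy if it is already running.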

#Start the service (on all nodes)

[root@k8s-master1 work]# mkdir -p /var/lib/kube-proxy

[root@k8s-master1 work]# systemctl daemon-reload

[root@k8s-master1 work]# systemctl enable --now kube-proxy

[root@k8s-master1 work]# systemctl status kube-proxy

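Deploy the Calico network component

Official documentation: System requirements | Calico Documentation (tigera.io)

Download calico.yaml

Open this link in a browser to download the file, then upload it to master1: https://raw.githubusercontent.com/projectcalico/calico/v3.24.5/manifests/calico.yaml

#Change the pod CIDR in calico.yaml

In vim command mode, type /192 to jump straight to the address that needs changing (the file is long, so scrolling for it is tedious). Uncomment the two lines and set value to our pod CIDR: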

[root@k8s-master1 ~]# vim calico.yaml

- name: CALICO_IPV4POOL_CIDR

  value: "10.0.0.0/16"
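
The same edit can be made without opening vim. The sketch below assumes GNU sed and that the downloaded manifest still carries the two lines commented out as "# - name: CALICO_IPV4POOL_CIDR" followed by "#   value: ..." (check your copy first, since that layout is an assumption about the upstream file). set_calico_pool_cidr is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Hypothetical helper: uncomment CALICO_IPV4POOL_CIDR and its value line,
# then set the value to the given pod CIDR.
set_calico_pool_cidr() {
  local file="$1" cidr="$2"
  sed -i -E "/^[[:space:]]*# - name: CALICO_IPV4POOL_CIDR\$/{
    s|# ||
    n
    s|# ||
    s|(value: )\".*\"|\1\"${cidr}\"|
  }" "$file"
}

# Demo on a two-line excerpt that mimics the commented-out block.
demo_file="$(mktemp)"
printf '            # - name: CALICO_IPV4POOL_CIDR\n            #   value: "192.168.0.0/16"\n' > "$demo_file"
set_calico_pool_cidr "$demo_file" "10.0.0.0/16"
cat "$demo_file"
rm -f "$demo_file"
```

Removing the two-character "# " prefix from both lines keeps name and value aligned under the same list item, which is what YAML requires here. The CIDR must match --cluster-cidr on kube-controller-manager.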

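#Pre-pull the required images (optional; you can go straight to the next step)

ctr is the CLI that ships with containerd, and it has the concept of namespaces. Kubernetes-related images live in the k8s.io namespace by default, so specify the k8s.io namespace when importing images: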

[root@k8s-master1 ~]# grep image calico.yaml

image: docker.io/calico/cni:v3.24.5

imagePullPolicy: IfNotPresent

image: docker.io/calico/cni:v3.24.5

imagePullPolicy: IfNotPresent

image: docker.io/calico/node:v3.24.5

imagePullPolicy: IfNotPresent

image: docker.io/calico/node:v3.24.5

imagePullPolicy: IfNotPresent

image: docker.io/calico/kube-controllers:v3.24.5

imagePullPolicy: IfNotPresent
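
The grep output repeats each image several times. As a small sketch, the helper below reduces a manifest to its distinct image references, which is the list you would pre-pull; list_manifest_images is a hypothetical name and the demo uses an excerpt shaped like the output above.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print each distinct image referenced on an
# "image:" line of the given manifest, sorted.
list_manifest_images() {
  awk '$1 == "image:" { print $2 }' "$1" | sort -u
}

# Demo on an excerpt that mimics the grep output shown above.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
          image: docker.io/calico/cni:v3.24.5
          imagePullPolicy: IfNotPresent
          image: docker.io/calico/cni:v3.24.5
          image: docker.io/calico/node:v3.24.5
          image: docker.io/calico/kube-controllers:v3.24.5
EOF
list_manifest_images "$demo_file"
rm -f "$demo_file"
```

On a node, each printed reference could then be pulled into the right namespace with ctr -n k8s.io images pull followed by the reference, assuming the node can reach the registry.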

#Install the calico network

[root@k8s-master1 ~]# kubectl apply -f calico.yaml

#Verify the result

[root@k8s-master1 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-798cc86c47-mrpl8 1/1 Running 5 (2m21s ago) 12m

kube-system calico-node-6747n 1/1 Running 0 12m

kube-system calico-node-8tgkw 1/1 Running 0 12m

kube-system calico-node-qrvvx 1/1 Running 0 12m

kube-system calico-node-scmc9 1/1 Running 0 12m

kube-system calico-node-z4g4t 1/1 Running 0 12m

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready <none> 68m v1.25.6

k8s-master2 Ready <none> 65m v1.25.6

k8s-master3 Ready <none> 65m v1.25.6

k8s-node1 Ready <none> 65m v1.25.6

k8s-node2 Ready <none> 65m v1.25.6

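Deploy CoreDNS

Download the manifest from the official repository: kubernetes/cluster/addons/dns/coredns at master · kubernetes/kubernetes · GitHub

#Edit coredns.yaml

#1. Replace the cluster domain placeholder __DNS__DOMAIN__ with cluster.local

kubernetes __DNS__DOMAIN__ in-addr.arpa ip6.arpa {

After the change: kubernetes cluster.local in-addr.arpa ip6.arpa {

#2. Change the CoreDNS image from the registry.k8s.io address to the Docker Hub address, which is easier to pull

image: registry.k8s.io/coredns/coredns:v1.10.0

After the change: image: coredns/coredns:1.10.0

#3. Set the pod memory limit; 300Mi is enough (this step is optional)

memory: __DNS__MEMORY__LIMIT__

After the change: memory: 300Mi

#4. Set the CoreDNS service IP. It is usually the second address of the service CIDR (10.255.0.0/16, as planned at the start), i.e. 10.255.0.2, which is also the clusterDNS value in kubelet.json; the first address belongs to the apiserver service

clusterIP: __DNS__SERVER__

After the change: clusterIP: 10.255.0.2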
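
The three placeholder substitutions above can be applied in one command. This is a minimal sketch: render_coredns_manifest is a hypothetical helper, it only covers the placeholders (the image line from step 2 still needs its own edit), and it writes the result to stdout so the template is left untouched.

```bash
#!/usr/bin/env bash
# Hypothetical helper: fill in the CoreDNS template placeholders and
# print the rendered manifest on stdout.
render_coredns_manifest() {
  local src="$1" domain="$2" mem_limit="$3" dns_ip="$4"
  sed -e "s|__DNS__DOMAIN__|${domain}|g" \
      -e "s|__DNS__MEMORY__LIMIT__|${mem_limit}|g" \
      -e "s|__DNS__SERVER__|${dns_ip}|g" \
      "$src"
}

# Demo on a three-line excerpt instead of the full template.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
kubernetes __DNS__DOMAIN__ in-addr.arpa ip6.arpa {
memory: __DNS__MEMORY__LIMIT__
clusterIP: __DNS__SERVER__
EOF
render_coredns_manifest "$demo_file" cluster.local 300Mi 10.255.0.2
rm -f "$demo_file"
```

A typical use would redirect the output into coredns.yaml and then apply that file as in the next step.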

#Install coredns

[root@k8s-master1 ~]# kubectl apply -f coredns.yaml

[root@k8s-master1 ~]# kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-798cc86c47-mrpl8 1/1 Running 5 (11h ago) 11h

kube-system calico-node-6747n 1/1 Running 0 11h

kube-system calico-node-8tgkw 1/1 Running 0 11h

kube-system calico-node-qrvvx 1/1 Running 0 11h

kube-system calico-node-scmc9 1/1 Running 0 11h

kube-system calico-node-z4g4t 1/1 Running 0 11h

kube-system coredns-65bc566d6f-ptpjx 1/1 Running 0 36s

#Label the nodes so that ROLES shows a role name

[root@k8s-master1 ~]# kubectl label nodes k8s-master1 node-role.kubernetes.io/master=master

[root@k8s-master1 ~]# kubectl label nodes k8s-node1 node-role.kubernetes.io/work=work

[root@k8s-master1 ~]# kubectl label nodes k8s-node2 node-role.kubernetes.io/work=work

[root@k8s-master1 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 12h v1.25.6

k8s-master2 Ready <none> 12h v1.25.6

k8s-master3 Ready <none> 12h v1.25.6

k8s-node1 Ready work 12h v1.25.6

k8s-node2 Ready work 12h v1.25.6

#Test CoreDNS name resolution

[root@k8s-master1 ~]# cd /data/work/

[root@k8s-master1 work]# cat<<EOF | kubectl apply -f -

apiVersion: v1

kind: Pod

metadata:

  name: busybox

  namespace: default

spec:

  containers:

  - name: busybox

    image: busybox:1.28

    command:

    - sleep

    - "3600"

    imagePullPolicy: IfNotPresent

  restartPolicy: Always

EOF

#First check that the pod was created successfully

[root@k8s-master1 work]# kubectl get pods

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 0 75s

#The output below shows that the pod can reach the external network and resolve names

[root@k8s-master1 work]# kubectl exec busybox -it -- sh

/ # ping www.baidu.com

64 bytes from 182.61.200.6: seq=0 ttl=127 time=84.916 ms

64 bytes from 182.61.200.6: seq=1 ttl=127 time=35.237 ms

64 bytes from 182.61.200.6: seq=2 ttl=127 time=43.888 ms

64 bytes from 182.61.200.6: seq=3 ttl=127 time=69.534 ms

64 bytes from 182.61.200.6: seq=4 ttl=127 time=33.419 ms

/ # nslookup kubernetes.default.svc.cluster.local

Server: 10.255.0.2

Address 1: 10.255.0.2 kube-dns.kube-system.svc.cluster.local

Name: kubernetes.default.svc.cluster.local

Address 1: 10.255.0.1 kubernetes.default.svc.cluster.local

Install keepalived+nginx for k8s apiserver high availability

#Install nginx and keepalived (on all master nodes)

[root@k8s-master1 ~]# yum install -y nginx keepalived nginx-all-modules.noarch

#nginx configuration (identical on all three master nodes)

[root@k8s-master1 ~]# cat /etc/nginx/nginx.conf

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer 4 load balancing across the apiserver instances on the three masters

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.100.11:6443;

server 192.168.100.12:6443;

server 192.168.100.13:6443;

}

server {

listen 16443;

proxy_pass k8s-apiserver;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 4096;

include /etc/nginx/mime.types;

default_type application/octet-stream;

include /etc/nginx/conf.d/*.conf;

server {

listen 80;

listen [::]:80;

server_name _;

root /usr/share/nginx/html;

include /etc/nginx/default.d/*.conf;

error_page 404 /404.html;

location = /404.html {

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

}

}

}

#Configure keepalived (on all masters)

#Note: the master and backup configurations differ

#master1

#Pay attention to the values that need changing

[root@k8s-master1 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

vrrp_skip_check_adv_addr

# vrrp_strict

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh" # script run on each check; tests whether nginx is running

interval 2 # interval between script runs, in seconds

weight 2 # weight

}

vrrp_instance VI_1 {

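state MASTER # master or backup role

interface ens33 # network interface of this host

virtual_router_id 51 # VRRP router ID; unique per instance, identical on master and backups

priority 100 # priority; the node with the higher value takes the VIP. Set 90 on the backup servers

advert_int 1 # interval between VRRP advertisements; default 1 second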

authentication {

auth_type PASS # authentication type and password; MASTER and BACKUP must use the same password to communicate

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100 # virtual IP (VIP); several may be listed, one per line

}

track_script {

check_nginx # run the check script

}

}

#master2

[root@k8s-master2 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

vrrp_skip_check_adv_addr

# vrrp_strict

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100

}

track_script {

check_nginx

}

}

#master3

[root@k8s-master3 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

vrrp_skip_check_adv_addr

# vrrp_strict

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100

}

track_script {

check_nginx

}

}

#Health check script (create on all three masters)

[root@k8s-master3 ~]# vim /etc/keepalived/check_nginx.sh

#!/bin/bash

#1. Check whether Nginx is alive

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

#2. If it is not, try to start Nginx

systemctl start nginx

sleep 2

#3. After waiting 2 seconds, check the Nginx status again

counter=`ps -C nginx --no-header | wc -l`

#4. If Nginx is still down, stop Keepalived so that the VIP fails over

if [ $counter -eq 0 ]; then

systemctl stop keepalived

fi

fi

#Make the script executable

[root@k8s-master3 ~]# chmod +x /etc/keepalived/check_nginx.sh

#Start nginx and keepalived (on all master nodes)

[root@k8s-master1 ~]# systemctl daemon-reload

[root@k8s-master1 ~]# systemctl enable --now nginx

[root@k8s-master1 ~]# systemctl enable --now keepalived

#Check whether the VIP is bound

Run ip a and look for 192.168.100.100 on the ens33 interface.
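
When checking several masters in turn, a small filter saves reading the full ip a output. This is a sketch only: holds_vip is a hypothetical helper that reads address listings on stdin, and the demo feeds it sample lines shaped like ip output instead of querying a real interface.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed when the given IPv4 address appears as an
# "inet <address>/<prefix>" entry in the text read from stdin.
holds_vip() {
  local vip_re="${1//./\\.}"   # escape dots so they match literally
  grep -qE "inet ${vip_re}/"
}

# Demo with sample lines (illustrative, not captured from a real host).
sample='    inet 192.168.100.11/24 brd 192.168.100.255 scope global ens33
    inet 192.168.100.100/32 scope global ens33'
if printf '%s\n' "$sample" | holds_vip 192.168.100.100; then
  echo "VIP is bound on this host"
fi
```

On a master the live check would be ip -4 addr show | holds_vip 192.168.100.100; it should succeed on exactly one master at a time.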

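#Test keepalived

Stop keepalived on master1 first; the VIP then moves to master2 or master3, which share the same priority (90):

At this point every worker node component still connects to master1. Unless they are switched to the VIP so that traffic goes through the load balancer, the master remains a single point of failure.

So the next step is to change the component configuration files on all worker nodes (the nodes listed by kubectl get node) from 192.168.100.11:6443 to 192.168.100.100:16443 (the VIP and the nginx listen port).

#On node1 and node2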

[root@k8s-node1 ~]# sed -i 's#192.168.100.11:6443#192.168.100.100:16443#' /etc/kubernetes/kubelet-bootstrap.kubeconfig

[root@k8s-node1 ~]# sed -i 's#192.168.100.11:6443#192.168.100.100:16443#' /etc/kubernetes/kubelet.json

[root@k8s-node1 ~]# sed -i 's#192.168.100.11:6443#192.168.100.100:16443#' /etc/kubernetes/kubelet.kubeconfig

[root@k8s-node1 ~]# sed -i 's#192.168.100.11:6443#192.168.100.100:16443#' /etc/kubernetes/kube-proxy.kubeconfig

[root@k8s-node1 ~]# sed -i 's#192.168.100.11:6443#192.168.100.100:16443#' /etc/kubernetes/kube-proxy.yaml

[root@k8s-node1 ~]# systemctl restart kubelet kube-proxy
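
The repeated sed calls can be folded into one helper that loops over the files. This is a sketch under stated assumptions: switch_apiserver_endpoint is a hypothetical name, GNU sed is assumed, and only files that contain an https://host:port server URL (the kubeconfig files) are actually changed; files without a match are left as they are.

```bash
#!/usr/bin/env bash
# Hypothetical helper: replace the apiserver endpoint old -> new in every
# listed file that exists. Endpoints are given as host:port.
switch_apiserver_endpoint() {
  local old="$1" new="$2"
  shift 2
  local old_re="${old//./\\.}"   # escape dots so they match literally
  local f
  for f in "$@"; do
    [ -f "$f" ] || continue      # skip files this node does not have
    sed -i "s#https://${old_re}#https://${new}#g" "$f"
  done
}

# Demo on a temporary kubeconfig-like file.
demo_file="$(mktemp)"
printf 'server: https://192.168.100.11:6443\n' > "$demo_file"
switch_apiserver_endpoint 192.168.100.11:6443 192.168.100.100:16443 "$demo_file"
cat "$demo_file"
rm -f "$demo_file"
```

On a worker node the file list would be /etc/kubernetes/kubelet-bootstrap.kubeconfig, /etc/kubernetes/kubelet.kubeconfig and /etc/kubernetes/kube-proxy.kubeconfig, followed by systemctl restart kubelet kube-proxy as above.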