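Deep Learning Training Camp

- Learning Content

- Original Article

- Environment

- Preparation

- Setting Up the GPU

- Loading the Data

- Creating the Test Set

- Inspecting Data Types and Normalizing the Data

- Data Augmentation

- Augmenting Data by Embedding It in the Model

- Model Training

- Visualizing the Results

- Custom Data Augmentation

- Viewing the Augmented Images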

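Learning Content

In deep learning, preparing a dataset is a complex process, and it is hard to guarantee that every image contributes much to what the model learns. Applying data augmentation to the images you already have is therefore very important.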

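Original Article

- 🍨 This post is a learning-record blog from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍦 Reference article: 365天深度学习训练营-第P10周:实现数据增强 (Week P10: Implementing Data Augmentation)

- 🍖 Original author: K同学啊 (tutoring and custom projects available)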

Environment

- Language: Python 3.9.13

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2

- Dataset link: cat and dog data

Preparation

Setting Up the GPU

import matplotlib.pyplot as plt
import numpy as np

# Suppress warnings
import warnings
warnings.filterwarnings('ignore')

from tensorflow.keras import layers
import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # Allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

# Print the GPU information to confirm that a GPU is available
print(gpus)

Loading the Data

Place the images in separate folders, one per class, and gather those folders under a single directory named animal_data.

data_dir = "animal_data"
img_height = 224
img_width = 224
batch_size = 32

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.3,
    subset="training",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 3400 files belonging to 2 classes.

Using 2380 files for training.

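val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.3,
    subset="validation",  # must be "validation"; the logged run used "training" here
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 3400 files belonging to 2 classes.

Using 2380 files for training.

Note: the output above was recorded with subset="training" in this call, so in the logged run val_ds contained the same 2380 files as train_ds. With subset="validation" the call selects the remaining 30% of the files instead.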
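To make the file counts in the log easier to check, the arithmetic behind validation_split can be sketched in plain Python. This is only a sketch, assuming the validation share is the file count times validation_split truncated to an integer, and that the last batch may be partial. The helper name split_counts is made up for illustration and is not part of Keras.

```python
import math

def split_counts(n_files, validation_split, batch_size):
    """Return (train_files, val_files, train_batches, val_batches).

    Assumption: the validation share is n_files * validation_split,
    truncated to an integer, and the final batch may be partial.
    """
    val_files = int(n_files * validation_split)
    train_files = n_files - val_files
    train_batches = math.ceil(train_files / batch_size)
    val_batches = math.ceil(val_files / batch_size)
    return train_files, val_files, train_batches, val_batches

train_files, val_files, train_batches, val_batches = split_counts(3400, 0.3, 32)
print(train_files, val_files)      # file counts for the two subsets
print(train_batches, val_batches)  # batch counts for the two subsets
```

For 3400 files this gives 2380 training files, which matches the log, and 75 training batches. The remaining 1020 files would form 32 validation batches.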

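Creating the Test Set

The dataset does not come with a test set, so one has to be created manually by splitting off part of the validation set.

val_batches = tf.data.experimental.cardinality(val_ds)

# Use the first fifth of the validation batches as the test set and keep the rest for validation
test_ds = val_ds.take(val_batches // 5)
val_ds = val_ds.skip(val_batches // 5)

print('Number of validation batches: %d' % tf.data.experimental.cardinality(val_ds))
print('Number of test batches: %d' % tf.data.experimental.cardinality(test_ds))

The output is as follows:

Number of validation batches: 60

Number of test batches: 15

The validation set and the test set contain 60 and 15 batches, respectively.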
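The take/skip split can be illustrated without TensorFlow. The sketch below uses a plain list of 75 integers as a stand-in for the 75 batches in the logged run. The helpers take and skip are illustrative imitations written for this example, not the tf.data methods themselves.

```python
from itertools import islice

def take(batches, n):
    """Imitate Dataset.take: keep the first n elements."""
    return list(islice(batches, n))

def skip(batches, n):
    """Imitate Dataset.skip: drop the first n elements."""
    return list(islice(batches, n, None))

val_batches = list(range(75))   # stand-in for 75 batches
n_test = len(val_batches) // 5  # one fifth of the batches goes to the test set

test_part = take(val_batches, n_test)
val_part = skip(val_batches, n_test)

print(len(val_part), len(test_part))
# The two parts do not share any batch.
assert not set(val_part) & set(test_part)
```

Because take keeps the leading batches and skip drops exactly those same batches, the two parts cover the original sequence without overlap.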

Inspecting Data Types and Normalizing the Data

class_names = train_ds.class_names
print(class_names)

['cat', 'dog']

Normalize the data:

AUTOTUNE = tf.data.AUTOTUNE

def preprocess_image(image, label):
    # Scale pixel values from [0, 255] to [0, 1]
    return (image / 255.0, label)

# Apply the normalization to every dataset
train_ds = train_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)
val_ds = val_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)
test_ds = test_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

# Cache the data and prefetch batches while the model is training
train_ds = train_ds.cache().prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)
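The effect of dividing by 255 can be checked on a small array. The sketch below uses NumPy and a randomly generated stand-in batch rather than the real images, so the shapes and values are assumptions made for illustration.

```python
import numpy as np

# Stand-in for one batch of images: 2 images, 4x4 pixels, 3 channels,
# stored as floats in the range [0, 255].
rng = np.random.default_rng(0)
batch = rng.integers(0, 256, size=(2, 4, 4, 3)).astype("float32")

scaled = batch / 255.0  # the same operation preprocess_image applies

print(batch.min(), batch.max())    # range before scaling
print(scaled.min(), scaled.max())  # range after scaling
```

The shape is unchanged and every value ends up between 0 and 1, which is the range plt.imshow expects for floating-point images.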

Viewing the dataset:

plt.figure(figsize=(15, 10))  # Figure width 15, height 10

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(5, 8, i + 1)
        plt.imshow(images[i])
        plt.title(class_names[labels[i]])
        plt.axis("off")

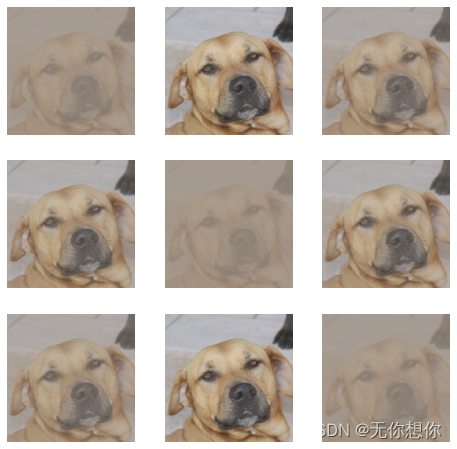

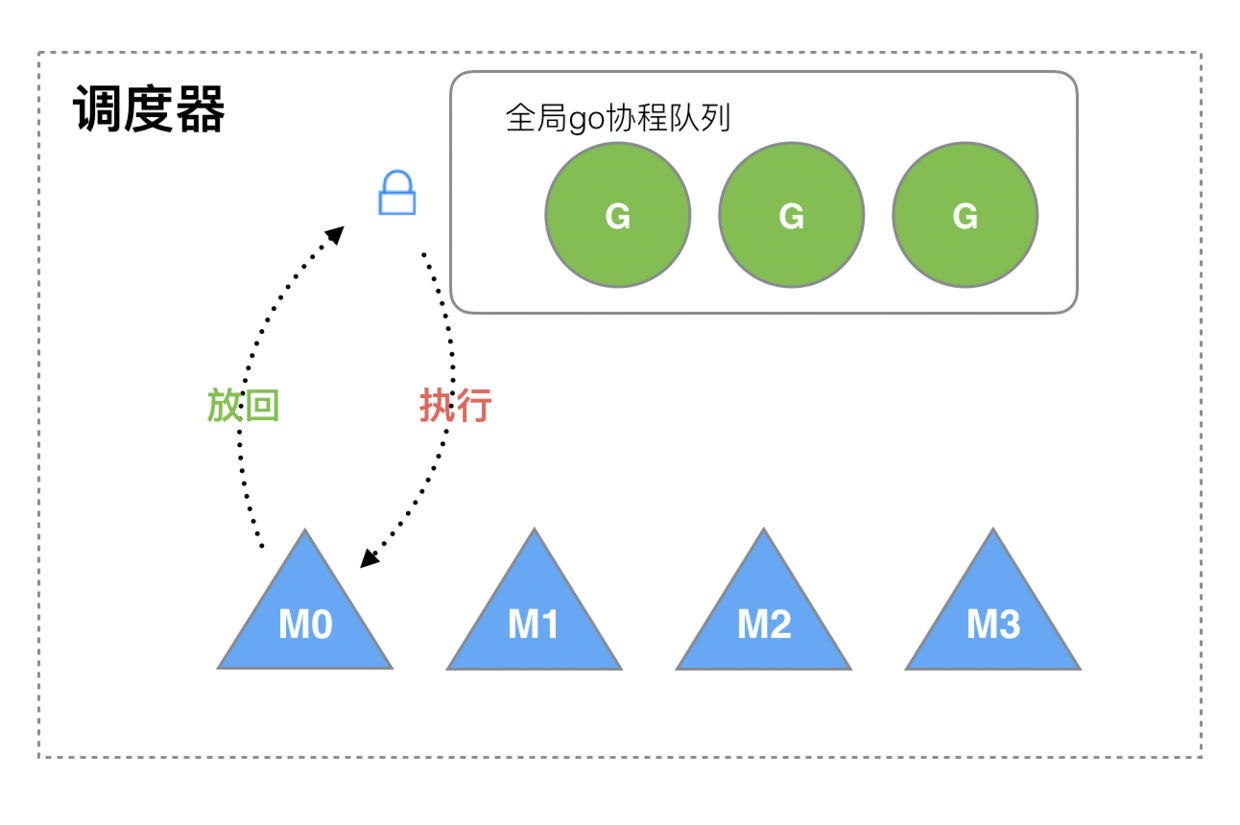

Data Augmentation

# Randomly flip the images horizontally and vertically, then rotate them by a random angle
data_augmentation = tf.keras.Sequential([
    tf.keras.layers.experimental.preprocessing.RandomFlip("horizontal_and_vertical"),
    tf.keras.layers.experimental.preprocessing.RandomRotation(0.2),
])

# Add the image to a batch.
# images and i are left over from the display loop above, so this picks the last image shown.
image = tf.expand_dims(images[i], 0)

plt.figure(figsize=(8, 8))

for i in range(9):
    augmented_image = data_augmentation(image)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0])
    plt.axis("off")
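To see what the two augmentation layers do to the pixel grid, the flips can be reproduced on a tiny array with NumPy. This is an illustration of the geometric operations only, not of the Keras layers themselves. The rotation line relies on the documented meaning of the RandomRotation factor as a fraction of a full turn.

```python
import numpy as np

# A tiny 2x3 single-channel "image" so the effect of each flip is easy to see.
image = np.array([[1, 2, 3],
                  [4, 5, 6]])

flipped_horizontal = np.flip(image, axis=1)  # mirror left to right
flipped_vertical = np.flip(image, axis=0)    # mirror top to bottom

# RandomRotation(0.2) draws an angle in [-0.2 * 2*pi, 0.2 * 2*pi],
# so the largest rotation in degrees is 0.2 of a full turn.
max_angle_degrees = 0.2 * 360

print(flipped_horizontal)
print(flipped_vertical)
print(max_angle_degrees)
```

With a factor of 0.2, each image is rotated by up to 72 degrees in either direction.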

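Augmenting Data by Embedding It in the Model

model = tf.keras.Sequential([
    data_augmentation,
    layers.Conv2D(16, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
])

- With this approach the augmentation layers run on the device together with the rest of the model, so augmentation benefits from GPU acceleration.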

Model Training

Before training starts, the model has to be defined and compiled.

model = tf.keras.Sequential([
    layers.Conv2D(16, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Conv2D(32, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Conv2D(64, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Flatten(),
    layers.Dense(128, activation='relu'),
    layers.Dense(len(class_names))  # No activation: the model outputs raw logits
])

model.compile(optimizer='adam',
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
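Because the last Dense layer has no activation and the loss is built with from_logits=True, the model outputs raw logits rather than probabilities. The sketch below shows, with NumPy and made-up logit values, how such outputs could be turned into probabilities and class names. The softmax helper and the numbers are assumptions for illustration; a real run would obtain the logits from model.predict.

```python
import numpy as np

class_names = ['cat', 'dog']

def softmax(logits):
    """Convert raw logits to probabilities along the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)  # for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

# Made-up logits for two images (one row per image, one column per class).
logits = np.array([[2.0, -1.0],
                   [0.5, 1.5]])

probabilities = softmax(logits)
predicted = [class_names[i] for i in probabilities.argmax(axis=-1)]

print(probabilities)
print(predicted)
```

Each row of probabilities sums to 1, and the predicted class is the one with the largest logit.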

Start the actual training:

epochs = 20

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/20

75/75 [==============================] - 35s 462ms/step - loss: 2.0268e-05 - accuracy: 1.0000 - val_loss: 1.8425e-05 - val_accuracy: 1.0000

Epoch 2/20

75/75 [==============================] - 34s 461ms/step - loss: 1.7937e-05 - accuracy: 1.0000 - val_loss: 1.6272e-05 - val_accuracy: 1.0000

Epoch 3/20

75/75 [==============================] - 35s 461ms/step - loss: 1.5871e-05 - accuracy: 1.0000 - val_loss: 1.4373e-05 - val_accuracy: 1.0000

Epoch 4/20

75/75 [==============================] - 34s 450ms/step - loss: 1.4039e-05 - accuracy: 1.0000 - val_loss: 1.2682e-05 - val_accuracy: 1.0000

Epoch 5/20

75/75 [==============================] - 34s 450ms/step - loss: 1.2429e-05 - accuracy: 1.0000 - val_loss: 1.1195e-05 - val_accuracy: 1.0000

Epoch 6/20

75/75 [==============================] - 35s 462ms/step - loss: 1.1014e-05 - accuracy: 1.0000 - val_loss: 9.8961e-06 - val_accuracy: 1.0000

Epoch 7/20

75/75 [==============================] - 34s 450ms/step - loss: 9.7220e-06 - accuracy: 1.0000 - val_loss: 8.6961e-06 - val_accuracy: 1.0000

Epoch 8/20

75/75 [==============================] - 34s 455ms/step - loss: 8.5416e-06 - accuracy: 1.0000 - val_loss: 7.6252e-06 - val_accuracy: 1.0000

Epoch 9/20

75/75 [==============================] - 34s 459ms/step - loss: 7.5130e-06 - accuracy: 1.0000 - val_loss: 6.7169e-06 - val_accuracy: 1.0000

Epoch 10/20

75/75 [==============================] - 34s 460ms/step - loss: 6.6338e-06 - accuracy: 1.0000 - val_loss: 5.9490e-06 - val_accuracy: 1.0000

Epoch 11/20

75/75 [==============================] - 34s 457ms/step - loss: 5.8835e-06 - accuracy: 1.0000 - val_loss: 5.2946e-06 - val_accuracy: 1.0000

Epoch 12/20

75/75 [==============================] - 34s 456ms/step - loss: 5.2507e-06 - accuracy: 1.0000 - val_loss: 4.7294e-06 - val_accuracy: 1.0000

Epoch 13/20

...

Epoch 19/20

75/75 [==============================] - 34s 449ms/step - loss: 2.5978e-06 - accuracy: 1.0000 - val_loss: 2.3737e-06 - val_accuracy: 1.0000

Epoch 20/20

75/75 [==============================] - 34s 449ms/step - loss: 2.3849e-06 - accuracy: 1.0000 - val_loss: 2.1841e-06 - val_accuracy: 1.0000

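Strangely, the accuracy is very high and the loss is extremely small, which differs from the results in the original author's post. My first guess was that the dataset is much larger (3400 images here versus only a few hundred originally) and that the augmentation used is fairly simple, so the training results look very good. The logs point to a more likely cause, though. val_ds was split into 60 + 15 = 75 batches, exactly the 75 training steps per epoch, which means the validation and test data in this run were drawn from the same 2380 files as the training data (both datasets were loaded with subset="training"). The validation and test scores therefore measure how well the model has memorized its training images rather than how well it generalizes. In addition, the loss is already about 2e-05 in the first logged epoch, which suggests that fit was called on a model that had already been trained earlier in the same notebook session.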

loss, acc = model.evaluate(test_ds)

print("Accuracy", acc)

15/15 [==============================] - 1s 83ms/step - loss: 1.9960e-06 - accuracy: 1.0000

Accuracy 1.0

Visualizing the Results

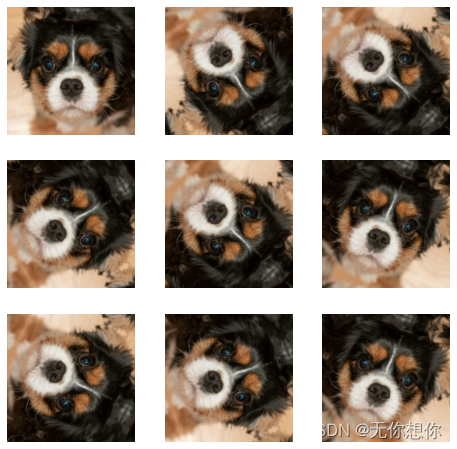
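The plotting code for the figure above is not included in the post. A minimal sketch of how such curves could be drawn is given below, assuming the history object returned by model.fit exposes a history dictionary with the keys loss, val_loss, accuracy, and val_accuracy. The function name plot_history is made up for this example.

```python
import matplotlib.pyplot as plt

def plot_history(history_dict):
    """Plot accuracy and loss curves from a Keras-style history dictionary.

    Assumption: history_dict has the keys "loss", "val_loss",
    "accuracy", and "val_accuracy", each holding one value per epoch.
    """
    epochs_range = range(1, len(history_dict["loss"]) + 1)
    fig, (ax_acc, ax_loss) = plt.subplots(1, 2, figsize=(12, 4))

    ax_acc.plot(epochs_range, history_dict["accuracy"], label="Training Accuracy")
    ax_acc.plot(epochs_range, history_dict["val_accuracy"], label="Validation Accuracy")
    ax_acc.set_title("Training and Validation Accuracy")
    ax_acc.legend(loc="lower right")

    ax_loss.plot(epochs_range, history_dict["loss"], label="Training Loss")
    ax_loss.plot(epochs_range, history_dict["val_loss"], label="Validation Loss")
    ax_loss.set_title("Training and Validation Loss")
    ax_loss.legend(loc="upper right")

    return fig

# In the notebook this would be called as: plot_history(history.history)
```

Returning the figure makes it easy to save the plot with fig.savefig if it needs to be attached to a post.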

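Custom Data Augmentation

Here the main thing you can vary is the range the random seed is drawn from.

import random

# This is a place where you can experiment freely
def aug_img(image):
    # Build the seed for the stateless op; random.randint(5, 10) returns an integer from 5 to 10 inclusive
    seed = (random.randint(5, 10), 0)
    # Randomly change the image contrast
    stateless_random_contrast = tf.image.stateless_random_contrast(image, lower=0.1, upper=1.0, seed=seed)
    return stateless_random_contrast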
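Contrast adjustment in tf.image scales each pixel's distance from its channel mean by a contrast factor, and the stateless random variant picks that factor from the given range based on the seed. The deterministic part can be sketched in NumPy as follows. The function adjust_contrast below is a stand-in written for this example with fixed factors and a randomly generated image, not the TensorFlow implementation.

```python
import numpy as np

def adjust_contrast(image, factor):
    """Scale each pixel's distance from its channel mean by `factor`.

    `image` has shape (height, width, channels).
    """
    mean = image.mean(axis=(0, 1), keepdims=True)  # one mean per channel
    return (image - mean) * factor + mean

rng = np.random.default_rng(0)
image = rng.uniform(0, 255, size=(4, 4, 3))  # stand-in image

low_contrast = adjust_contrast(image, 0.1)  # factor near the lower bound used above
unchanged = adjust_contrast(image, 1.0)     # a factor of 1 leaves the image as is

print(image.std(), low_contrast.std())  # the spread of pixel values shrinks
```

Because a stateless op returns the same result for the same seed, and the seed in aug_img is drawn from only six integers (5 to 10), aug_img can produce at most six distinct versions of a given image.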

Viewing the Augmented Images

# The images were normalized to [0, 1] earlier, so scale back to [0, 255] before augmenting
image = tf.expand_dims(images[3]*255, 0)

print("Min and max pixel values:", image.numpy().min(), image.numpy().max())

Min and max pixel values: 2.4591687 241.47968

plt.figure(figsize=(8, 8))

for i in range(9):
    augmented_image = aug_img(image)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")