1 Model download

Pre-warmed copies of the models can be downloaded from the mirror below, which is faster (the artget method is recommended):

https://mirrors.tools.huawei.com/mirrorDetail/67b75986118b030fb5934fc7?mirrorName=huggingface&catalog=llms

Alternatively, download the model from the official Hugging Face hub.
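For the Hugging Face route, below is a minimal sketch that uses the `huggingface-cli download` command from the `huggingface_hub` package. It makes two assumptions: the repository id is `Qwen/QwQ-32B`, and the files should land under the host model directory that section 2.1 mounts into the container. `local_dir_for` and `download_model` are hypothetical helper names.

```bash
#!/usr/bin/env bash
# Sketch only: fetch a model from the Hugging Face hub into the host model directory.
# Assumptions: `pip install huggingface_hub` provides huggingface-cli, and
# MODEL_ROOT is the host directory mounted into the container (section 2.1).
MODEL_ROOT="${MODEL_ROOT:-/xxx/models/llmmodels}"

# Hypothetical helper: "Qwen/QwQ-32B" + "/root" -> "/root/QwQ-32B"
local_dir_for() {
  local repo_id=$1 root=$2
  # Keep only the part after the last "/" as the directory name.
  printf '%s/%s\n' "$root" "${repo_id##*/}"
}

# Hypothetical helper: download every file of the repo into MODEL_ROOT/<model name>.
download_model() {
  local dest
  dest=$(local_dir_for "$1" "$MODEL_ROOT") || return 1
  mkdir -p "$dest" || return 1
  huggingface-cli download "$1" --local-dir "$dest"
}
```

Usage, assuming the file is saved as `download.sh`: `source download.sh && download_model Qwen/QwQ-32B`. With the default root, this writes to `/xxx/models/llmmodels/QwQ-32B`. The container sees that directory as `/usr1/project/models/QwQ-32B`.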

2 Installing vllm-ascend

2.1 Using the vllm + vllm-ascend base image

Base image: https://quay.io/repository/ascend/vllm-ascend?tab=tags&tag=latest

Pull the image. The official v0.7.3 release has not been published yet, so the dev tag is used:

```bash
docker pull quay.io/ascend/vllm-ascend:v0.7.3-dev
```

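Start the container.

QwQ-32B needs more than 70 GB of device memory, so it runs on two 64 GB cards (`/dev/davinci0` and `/dev/davinci1` in the command below).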
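The two-card requirement can be sanity-checked with rough arithmetic. The sketch below makes three assumptions:

- the weights are stored in BF16 (2 bytes per parameter);
- the model has roughly 32 billion parameters;
- about 90% of each card is usable, which matches vLLM's default `--gpu-memory-utilization` of 0.9.

Under those assumptions, the weights alone take about 64 GB, which is more than one 64 GB card can offer, so two cards are needed. The helper names are hypothetical. `device_flags` also assumes the cards are numbered contiguously from `davinci0`.

```bash
#!/usr/bin/env bash
# Back-of-the-envelope sketch, not a sizing tool.
# Assumptions: BF16 weights (2 bytes/param), ~32e9 parameters,
# and ~90% of each card usable (vLLM default --gpu-memory-utilization 0.9).

# Weight footprint in GB: billions of params * bytes per param.
weights_gb() {
  echo $(( $1 * $2 ))
}

# Minimum number of cards so that required_gb fits in the usable share of each card.
# Args: required_gb card_gb usable_percent
cards_needed() {
  local required_gb=$1 card_gb=$2 usable_pct=$3
  local usable_gb=$(( card_gb * usable_pct / 100 ))
  # Integer ceiling division: (a + b - 1) / b
  echo $(( (required_gb + usable_gb - 1) / usable_gb ))
}

# Emit one --device flag per card, davinci0 .. davinci<n-1>, for docker run.
device_flags() {
  local n=$1 i=0 flags=""
  while [ "$i" -lt "$n" ]; do
    flags="$flags --device /dev/davinci$i"
    i=$(( i + 1 ))
  done
  echo "${flags# }"   # drop the leading space
}
```

For example, `cards_needed 70 64 90` prints `2`. `device_flags 2` prints the two `--device` flags for the cards used in the `docker run` command.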

```bash
docker run -itd --net=host --name vllm-ascend-QwQ-32B \
  --device /dev/davinci0 \
  --device /dev/davinci1 \
  --device /dev/davinci_manager \
  --device /dev/devmm_svm \
  --device /dev/hisi_hdc \
  -v /usr/local/dcmi:/usr/local/dcmi \
  -v /usr/local/bin/npu-smi:/usr/local/bin/npu-smi \
  -v /usr/local/Ascend/driver/lib64/:/usr/local/Ascend/driver/lib64/ \
  -v /usr/local/Ascend/driver/version.info:/usr/local/Ascend/driver/version.info \
  -v /etc/ascend_install.info:/etc/ascend_install.info \
  -v /xxx/models/llmmodels:/usr1/project/models \
  quay.io/ascend/vllm-ascend:v0.7.3-dev bash
```

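`/xxx/models/llmmodels` is the host directory that holds the models, and `/usr1/project/models` is where it is mounted inside the container. Because the container is started detached (`-d`), enter it with `docker exec -it vllm-ascend-QwQ-32B bash` before running the commands in section 3.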
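Every model path passed to `vllm serve` must be a container-side path. The sketch below, with hypothetical helper names, maps a host path under the mounted directory to its in-container path. It also checks, from the host, that the model and the NPUs are visible in the running container. `npu-smi info` relies on the `npu-smi` binary mounted above.

```bash
#!/usr/bin/env bash
# Sketch: relate host paths to container paths for the -v model mount above.
HOST_MODEL_DIR="/xxx/models/llmmodels"   # host side of -v (placeholder kept from the guide)
CTR_MODEL_DIR="/usr1/project/models"     # container side of -v
CONTAINER_NAME="vllm-ascend-QwQ-32B"

# Hypothetical helper: print the in-container path for a host path under host_root.
to_container_path() {
  local host_root=$1 ctr_root=$2 host_path=$3
  case $host_path in
    "$host_root"|"$host_root"/*)
      # Strip the host prefix and prepend the container mount point.
      printf '%s%s\n' "$ctr_root" "${host_path#"$host_root"}" ;;
    *)
      echo "error: $host_path is not under $host_root" >&2
      return 1 ;;
  esac
}

# Hypothetical helper: confirm the model directory and the NPUs are visible in the container.
check_container() {
  local model_path
  model_path=$(to_container_path "$HOST_MODEL_DIR" "$CTR_MODEL_DIR" "$1") || return 1
  docker exec "$CONTAINER_NAME" ls "$model_path" &&
    docker exec "$CONTAINER_NAME" npu-smi info
}
```

For example, `check_container /xxx/models/llmmodels/QwQ-32B` lists `/usr1/project/models/QwQ-32B` inside `vllm-ascend-QwQ-32B` and then prints the NPU status.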

2.2 Building and installing from source

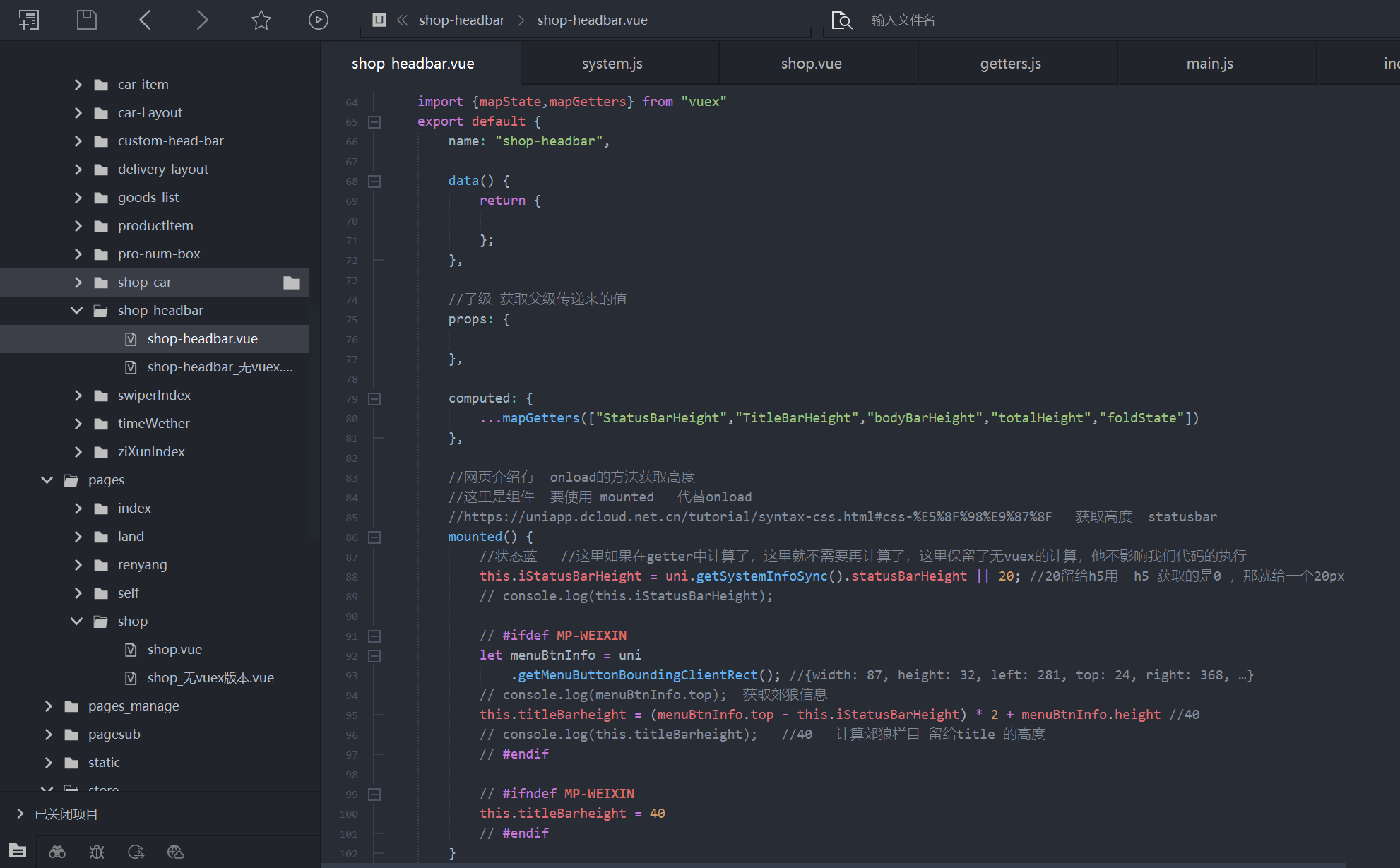

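```bash
# Install vLLM (VLLM_TARGET_DEVICE=empty installs vLLM without compiled device kernels;
# the Ascend backend comes from vllm-ascend; the extra index serves CPU PyTorch wheels)
git clone --depth 1 --branch v0.8.4 https://github.com/vllm-project/vllm
cd vllm
VLLM_TARGET_DEVICE=empty pip install . --extra-index https://download.pytorch.org/whl/cpu/
cd ..

# Install vLLM Ascend (editable install from the v0.8.4rc1 tag)
git clone --depth 1 --branch v0.8.4rc1 https://github.com/vllm-project/vllm-ascend.git
cd vllm-ascend
pip install -e . --extra-index https://download.pytorch.org/whl/cpu/
cd ..
```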

For details, see: https://vllm-ascend.readthedocs.io/en/latest/installation.html

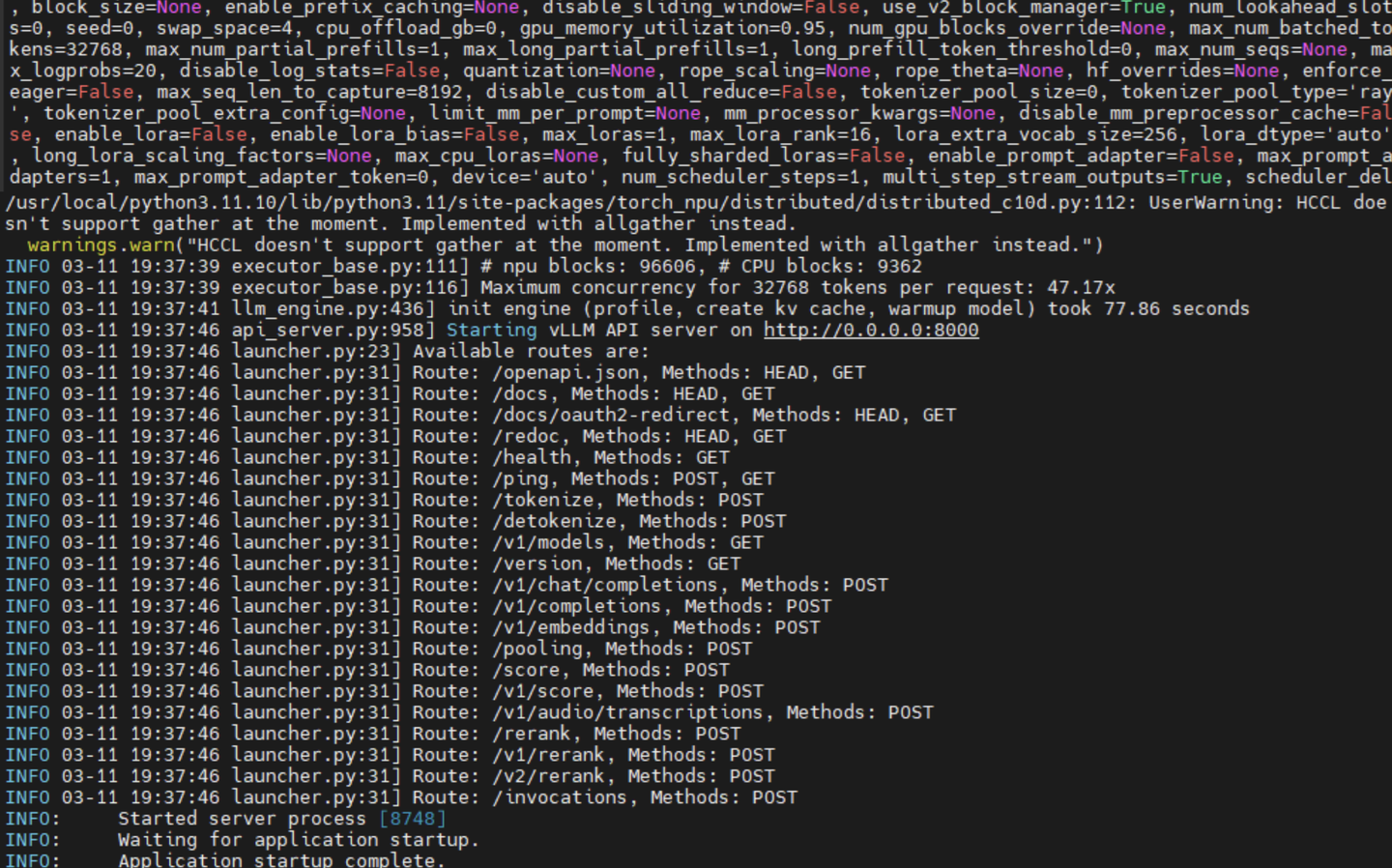
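After the source build, a quick check that the pinned versions were actually installed can save debugging later. This sketch reads the `Version:` field from `pip show`. It assumes the reported version starts with the tag number, possibly followed by a local suffix, so it compares prefixes only. All helper names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: confirm the source builds installed the pinned versions.
# Assumption: the version reported by `pip show` starts with the tag number
# (a local suffix may follow), so only the prefix is compared.

# Hypothetical helper: read `pip show` output on stdin and print the Version field.
parse_pip_version() {
  sed -n 's/^Version: //p'
}

# Hypothetical helper: succeed if version $1 starts with the expected prefix $2.
version_matches() {
  case $1 in
    "$2"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical helper: report whether package $1 is installed at a version starting with $2.
check_installed() {
  local pkg=$1 expected=$2 version
  version=$(pip show "$pkg" 2>/dev/null | parse_pip_version)
  if version_matches "$version" "$expected"; then
    echo "$pkg $version OK"
  else
    echo "$pkg: expected $expected*, found '${version:-not installed}'" >&2
    return 1
  fi
}
```

Usage: `check_installed vllm 0.8.4 && check_installed vllm-ascend 0.8.4rc1`. The prefix match is deliberately loose; for example, `0.8.40` would also match `0.8.4`.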

3 Launching the model

Start an OpenAI-compatible API server:

```bash
vllm serve /usr1/project/models/QwQ-32B \
  --tensor_parallel_size 2 \
  --served-model-name "QwQ-32B" \
  --max-num-seqs 256 \
  --max-model-len=4096 \
  --host xx.xx.xx.xx \
  --port 8001 &
```

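- `/usr1/project/models/QwQ-32B`: path to the model inside the container.
- `tensor_parallel_size`: must equal the number of cards mounted into the container (2 here).
- `served-model-name`: the model name that clients must pass in API requests.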
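Because `--tensor_parallel_size` has to match the number of cards, it can be derived inside the container instead of hard-coded. The sketch assumes each mounted card appears as `/dev/davinci<N>`; control nodes such as `/dev/davinci_manager` are skipped. `count_npus` and `check_tp` are hypothetical helpers.

```bash
#!/usr/bin/env bash
# Sketch: derive or verify --tensor_parallel_size from the NPUs visible in the container.
# Assumption: each mounted card is a /dev/davinci<digits> node.

# Hypothetical helper: count davinci<N> nodes under a device directory (default /dev).
count_npus() {
  local dev_dir=${1:-/dev} n=0 f
  for f in "$dev_dir"/davinci[0-9]*; do
    # If nothing matches, the glob stays literal and -e is false.
    if [ -e "$f" ]; then
      n=$(( n + 1 ))
    fi
  done
  echo "$n"
}

# Hypothetical helper: fail early if the requested TP size differs from the card count.
check_tp() {
  local requested=$1 available
  available=$(count_npus "${2:-/dev}")
  if [ "$requested" -ne "$available" ]; then
    echo "tensor_parallel_size=$requested but $available NPU(s) are visible" >&2
    return 1
  fi
}
```

Inside the container, `check_tp 2` fails early if only one card is visible. `--tensor_parallel_size "$(count_npus)"` can replace the literal `2` in the `vllm serve` command.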

For the meaning of the remaining parameters, see the official vLLM documentation.
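Once the server is up, clients use the standard OpenAI routes, and the `model` field must equal the `--served-model-name` value. A minimal curl sketch follows. It assumes the server address from the `vllm serve` command; the `xx.xx.xx.xx` placeholder must be replaced. `chat_body`, `list_models`, and `chat` are hypothetical helpers. The request body is built with `printf`, so the prompt must not contain double quotes or backslashes. A JSON tool such as `jq` would be safer for arbitrary text.

```bash
#!/usr/bin/env bash
# Sketch: call the OpenAI-compatible endpoint started above.
# Assumptions: the server listens on the --host/--port from vllm serve,
# and MODEL_NAME matches --served-model-name.
API_BASE="${API_BASE:-http://xx.xx.xx.xx:8001}"
MODEL_NAME="${MODEL_NAME:-QwQ-32B}"

# Hypothetical helper: build a minimal chat-completions request body.
# The prompt is inserted verbatim, so it must not contain " or \.
chat_body() {
  printf '{"model": "%s", "messages": [{"role": "user", "content": "%s"}], "max_tokens": %s}\n' \
    "$1" "$2" "${3:-256}"
}

# List the served models; the "id" field should equal --served-model-name.
list_models() {
  curl -s "$API_BASE/v1/models"
}

# Send one chat request (prompt, optional max_tokens) and print the raw JSON response.
chat() {
  curl -s "$API_BASE/v1/chat/completions" \
    -H "Content-Type: application/json" \
    -d "$(chat_body "$MODEL_NAME" "$1" "$2")"
}
```

For example, `list_models` should report `QwQ-32B` as the model id, and `chat "Hello" 128` returns the raw JSON response.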