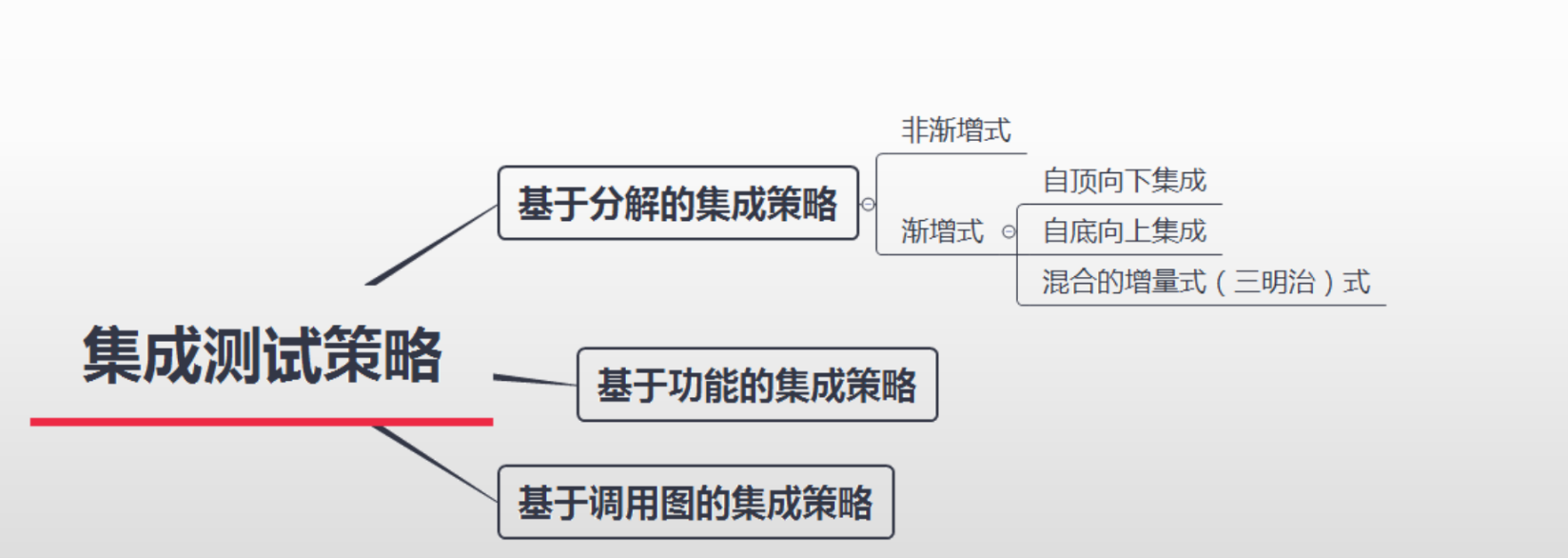

Figure 2. Residual block structure of the 18-layer and 34-layer networks

Figure 3. Residual block structure of the 50-layer, 101-layer, and 152-layer networks

import torch
import torch.nn as nn


# Residual block for the 18- and 34-layer networks.
# The ResNet class below receives it through its `block` argument.
class BasicBlock(nn.Module):
    '''
    conv1: stride=1 corresponds to the solid-line shortcut (height and width unchanged);
           stride=2 corresponds to the dashed-line shortcut (height and width halved).
    conv2: stride is always 1.
    '''
    expansion = 1  # channel multiplier: the block outputs out_channels * expansion channels

    def __init__(self, in_channels, out_channels, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = nn.Conv2d(in_channels=out_channels, out_channels=out_channels, kernel_size=3, stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)
        self.downsample = downsample

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        # Dashed-line shortcut: project the input so its shape matches `out`
        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)
        return out
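
The stride notes in the `BasicBlock` docstring follow from the usual convolution output-size formula, floor((n + 2p - k) / s) + 1. The snippet below is a minimal plain-Python sketch of that arithmetic, not part of the original model; `conv_out_size` is a hypothetical helper name introduced here, and it assumes dilation 1.

```python
def conv_out_size(n, kernel_size, stride, padding):
    """Spatial size after a convolution with dilation 1 (floor division, as in nn.Conv2d)."""
    return (n + 2 * padding - kernel_size) // stride + 1


# 3x3 convolution with padding=1, the setting used by conv1 and conv2 in BasicBlock
same = conv_out_size(56, kernel_size=3, stride=1, padding=1)    # solid-line case
halved = conv_out_size(56, kernel_size=3, stride=2, padding=1)  # dashed-line case

# 1x1 convolution with stride 2 and no padding, the setting of the shortcut projection
shortcut = conv_out_size(56, kernel_size=1, stride=2, padding=0)

print(same, halved, shortcut)  # prints: 56 28 28
```

With stride 2 the main path and the 1x1 shortcut projection both map 56 to 28, which is why the two tensors can be added element-wise.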

# Residual block for the 50-, 101-, and 152-layer networks
class Bottleneck(nn.Module):
    expansion = 4  # the last 1x1 convolution outputs out_channels * 4 channels

    def __init__(self, in_channels, out_channels, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        # 1x1 convolution: reduce the channel count
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        # 3x3 convolution: the only layer that may change height and width
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, stride=stride, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)
        # 1x1 convolution: expand the channel count
        self.conv3 = nn.Conv2d(out_channels, out_channels * self.expansion, kernel_size=1, stride=1, bias=False)
        self.bn3 = nn.BatchNorm2d(out_channels * self.expansion)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample

    def forward(self, x):
        identity = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)
        # No ReLU here: the activation is applied once, after the shortcut is added

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)
        return out
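
To see why the deeper networks use the 1x1, 3x3, 1x1 bottleneck, it helps to count convolution weights. The following is a rough sketch under stated assumptions: bias-free convolutions as in the listing, BatchNorm parameters ignored, and a 256-channel input with bottleneck width 64. `conv_weights` is a hypothetical helper, not something defined in the original code.

```python
def conv_weights(in_ch, out_ch, k):
    """Number of weights in a bias-free k x k convolution."""
    return in_ch * out_ch * k * k


expansion = 4
width = 64                 # `out_channels` passed to Bottleneck
in_ch = width * expansion  # 256 channels entering the block

# Bottleneck: 1x1 reduce, 3x3 at the reduced width, 1x1 expand
bottleneck = (
    conv_weights(in_ch, width, 1)
    + conv_weights(width, width, 3)
    + conv_weights(width, width * expansion, 1)
)

# For comparison: two 3x3 convolutions kept at 256 channels (BasicBlock style)
basic_at_256 = 2 * conv_weights(in_ch, in_ch, 3)

print(bottleneck, basic_at_256)  # prints: 69632 1179648
```

Under these assumptions the bottleneck needs roughly 17 times fewer convolution weights than a two-layer 3x3 block at the same input and output width.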

class ResNet(nn.Module):

    def __init__(self, block, layer_nums, num_classes=1000, include_top=True):
        '''
        :param block: residual block class to use (BasicBlock or Bottleneck)
        :param layer_nums: number of blocks in each stage, e.g. [3, 4, 6, 3] for the 34-layer network
        :param num_classes: number of outputs of the final fully connected layer
        :param include_top: whether to append the average-pooling and fully connected head
        '''
        super(ResNet, self).__init__()
        self.include_top = include_top
        self.in_channel = 64  # after conv1, 64 channels enter the residual blocks

        # The first argument is the number of channels of your input data (3 for RGB images)
        self.conv1 = nn.Conv2d(3, self.in_channel, kernel_size=7, stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(self.in_channel)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)  # halves height and width

        # conv2_x: per the figure, this stage keeps stride 1 at every depth; only the channel count differs
        self.layer1 = self._make_layer(block, 64, layer_nums[0])
        self.layer2 = self._make_layer(block, 128, layer_nums[1], stride=2)  # conv3_x
        self.layer3 = self._make_layer(block, 256, layer_nums[2], stride=2)  # conv4_x
        self.layer4 = self._make_layer(block, 512, layer_nums[3], stride=2)  # conv5_x

        if self.include_top:
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.fc = nn.Linear(512 * block.expansion, num_classes)

    def _make_layer(self, block, out_channels, block_num, stride=1):
        '''
        :param block: residual block class to use
        :param out_channels: base output channels of the stage (multiplied by block.expansion)
        :param block_num: number of residual blocks in the stage; 3 means the block is stacked 3 times
        :param stride: stride of the first block in the stage (default 1)
        '''
        downsample = None
        # A projection shortcut is needed whenever the spatial size or the channel count changes
        if stride != 1 or self.in_channel != out_channels * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.in_channel, out_channels * block.expansion, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels * block.expansion)
            )

        layers = []
        # Only the first block of a stage may downsample
        layers.append(block(self.in_channel, out_channels, stride, downsample))
        self.in_channel = out_channels * block.expansion
        for _ in range(1, block_num):
            layers.append(block(self.in_channel, out_channels))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        if self.include_top:
            x = self.avgpool(x)
            x = torch.flatten(x, 1)  # flatten everything except the batch dimension: (N, C, 1, 1) -> (N, C)
            x = self.fc(x)

        return x
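
The condition in `_make_layer` decides which stages get a projection shortcut. As an illustration only, the plain-Python sketch below replays that bookkeeping for both block types without building any layers; `stage_plan` is a hypothetical helper, and the widths and strides are copied from the four `_make_layer` calls in `__init__`.

```python
def stage_plan(expansion, widths=(64, 128, 256, 512), strides=(1, 2, 2, 2)):
    """Return (in_channels, out_channels, needs_downsample) for the first block of each stage."""
    in_channel = 64  # channels leaving the stem (conv1 + max-pool)
    plan = []
    for width, stride in zip(widths, strides):
        out_channel = width * expansion
        # Same test as in _make_layer: spatial size or channel count changes
        needs_downsample = stride != 1 or in_channel != out_channel
        plan.append((in_channel, out_channel, needs_downsample))
        in_channel = out_channel  # later blocks in the stage start from here
    return plan


for name, expansion in (("BasicBlock", 1), ("Bottleneck", 4)):
    print(name, stage_plan(expansion))
```

With `BasicBlock` the first stage needs no projection (64 channels in, 64 out, stride 1). With `Bottleneck` even the first stage does, because the block expands 64 channels to 256.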

def resnet18(num_classes=1000, include_top=True):
    return ResNet(BasicBlock, [2, 2, 2, 2], num_classes=num_classes, include_top=include_top)


def resnet34(num_classes=1000, include_top=True):
    return ResNet(BasicBlock, [3, 4, 6, 3], num_classes=num_classes, include_top=include_top)
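
The network names can be recovered from the `layer_nums` lists by counting weighted layers: the stem convolution, every convolution inside the residual blocks, and the final fully connected layer. This is a small sketch of that count; `resnet_depth` is a hypothetical helper, shortcut projections are not counted, and the block counts for the 50-, 101-, and 152-layer variants are the standard ones from the ResNet paper, not something defined in the listing above.

```python
def resnet_depth(layer_nums, convs_per_block):
    """Weighted layers: stem conv + convolutions in all residual blocks + final fc."""
    return 1 + convs_per_block * sum(layer_nums) + 1


# (blocks per stage, convolutions per block): BasicBlock has 2, Bottleneck has 3
configs = {
    "resnet18": ([2, 2, 2, 2], 2),
    "resnet34": ([3, 4, 6, 3], 2),
    "resnet50": ([3, 4, 6, 3], 3),     # assumed standard configuration
    "resnet101": ([3, 4, 23, 3], 3),   # assumed standard configuration
    "resnet152": ([3, 8, 36, 3], 3),   # assumed standard configuration
}

depths = {name: resnet_depth(nums, convs) for name, (nums, convs) in configs.items()}
print(depths)
```

For `resnet18` this gives 1 + 2 * 8 + 1 = 18, and for `resnet34` 1 + 2 * 16 + 1 = 34.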

if __name__ == '__main__':
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    input_tensor = torch.rand(1, 3, 224, 224).to(device)  # Ensure input tensor is on the correct device
    model = resnet18(num_classes=1000, include_top=True).to(device)  # Explicitly pass num_classes and include_top
    # print(model)
    print(model(input_tensor).shape)

Reference source:

deep-learning-for-image-processing/pytorch_classification/Test5_resnet/model.py at master · WZMIAOMIAO/deep-learning-for-image-processing