First, download the GitHub project: https://github.com/Lednik7/CLIP-ONNX

In clip_onnx/utils.py, change opset_version=12 on line 61 to opset_version=15, then run the test script:

import clip
from PIL import Image
import numpy as np
from clip_onnx import clip_onnx

# ONNX export does not support CUDA, so load the model on the CPU
model, preprocess = clip.load("ViT-B/32", device="cpu", jit=False)

# batch first
image = preprocess(Image.open("CLIP.png")).unsqueeze(0).cpu()  # [1, 3, 224, 224]
image_onnx = image.detach().cpu().numpy().astype(np.float32)

# batch first
text = clip.tokenize(["a diagram", "a dog", "a cat"]).cpu()  # [3, 77]
text_onnx = text.detach().cpu().numpy().astype(np.int32)

# Export the visual and textual encoders to ONNX and run them
onnx_model = clip_onnx(model, visual_path="clip_visual.onnx", textual_path="clip_textual.onnx")
onnx_model.convert2onnx(image, text, verbose=True)
onnx_model.start_sessions(providers=["CPUExecutionProvider"])  # CPU mode

image_features = onnx_model.encode_image(image_onnx)
text_features = onnx_model.encode_text(text_onnx)

logits_per_image, logits_per_text = onnx_model(image_onnx, text_onnx)
probs = logits_per_image.softmax(dim=-1).detach().cpu().numpy()
print("Label probs:", probs)

# Reload the exported ONNX files without the PyTorch model
onnx_model = clip_onnx(None)
onnx_model.load_onnx(visual_path="clip_visual.onnx", textual_path="clip_textual.onnx", logit_scale=100.0000)  # model.logit_scale.exp()
onnx_model.start_sessions(providers=["CPUExecutionProvider"])

image_features = onnx_model.encode_image(image_onnx)
text_features = onnx_model.encode_text(text_onnx)

logits_per_image, logits_per_text = onnx_model(image_onnx, text_onnx)
probs = logits_per_image.softmax(dim=-1).detach().cpu().numpy()
print("Label probs:", probs)
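The manual edit to clip_onnx/utils.py described before the script could also be applied programmatically. The following is only a sketch: `bump_opset` is a hypothetical helper, and the sample line is made up for illustration rather than copied from the repository, so check the real file before relying on it.

```python
import re


def bump_opset(source_text, old=12, new=15):
    """Return source_text with opset_version=<old> rewritten to opset_version=<new>.

    Hypothetical helper: it only looks for the literal keyword argument and
    raises if nothing matched, so a silent no-op cannot go unnoticed.
    """
    pattern = rf"opset_version\s*=\s*{old}\b"
    patched, count = re.subn(pattern, f"opset_version={new}", source_text)
    if count == 0:
        raise ValueError(f"opset_version={old} not found")
    return patched


# Made-up line standing in for the export settings in clip_onnx/utils.py.
sample = "export_args = dict(opset_version=12, do_constant_folding=True)"
print(bump_opset(sample))

# To patch the real file, read it, pass the text through bump_opset, and write it back:
#   from pathlib import Path
#   path = Path("clip_onnx/utils.py")
#   path.write_text(bump_opset(path.read_text()))
```

Raising on a missing match is a deliberate choice here: if a later version of the repository already uses a different opset, the script fails loudly instead of pretending the patch was applied.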

Inference with onnxruntime

import clip
from PIL import Image
import numpy as np
import onnxruntime

# The CLIP model is loaded here only for its preprocess function
model, preprocess = clip.load("ViT-B/32", device="cpu", jit=False)

image = preprocess(Image.open("CLIP.png")).unsqueeze(0).cpu()  # [1, 3, 224, 224]
image_input = image.detach().cpu().numpy().astype(np.float32)

text = clip.tokenize(["a diagram", "a dog", "a cat"]).cpu()  # [3, 77]
text_input = text.detach().cpu().numpy().astype(np.int32)


class clip_onnx:
    def __init__(self, model=None, visual_path: str = "clip_visual.onnx", textual_path: str = "clip_textual.onnx", logit_scale=None):
        self.model = model
        self.visual_path = visual_path
        self.textual_path = textual_path
        self.logit_scale = logit_scale

    def start_sessions(self, providers=['TensorrtExecutionProvider', 'CUDAExecutionProvider', 'CPUExecutionProvider']):
        self.visual_session = onnxruntime.InferenceSession(self.visual_path, providers=providers)
        self.textual_session = onnxruntime.InferenceSession(self.textual_path, providers=providers)

    def __call__(self, image, text, device: str = "cpu"):
        # Run both encoders; each session has a single input and a single output
        onnx_input_image = {self.visual_session.get_inputs()[0].name: image}
        image_features, = self.visual_session.run(None, onnx_input_image)
        onnx_input_text = {self.textual_session.get_inputs()[0].name: text}
        text_features, = self.textual_session.run(None, onnx_input_text)

        # L2-normalize the features so the dot product is a cosine similarity
        image_features = image_features / np.linalg.norm(image_features, axis=-1, keepdims=True)
        text_features = text_features / np.linalg.norm(text_features, axis=-1, keepdims=True)

        logits_per_image = self.logit_scale * image_features @ text_features.T
        logits_per_text = logits_per_image.T
        return logits_per_image, logits_per_text


onnx_model = clip_onnx(visual_path="clip_visual.onnx", textual_path="clip_textual.onnx", logit_scale=100.0000)
onnx_model.start_sessions(providers=["CPUExecutionProvider"])
logits_per_image, logits_per_text = onnx_model(image_input, text_input)


def softmax(x, axis=-1):
    # Subtract the maximum before exponentiating for numerical stability
    e_x = np.exp(x - np.max(x, axis=axis, keepdims=True))
    return e_x / np.sum(e_x, axis=axis, keepdims=True)


probs = softmax(logits_per_image)
print("Label probs:", probs)
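To make the post-processing in `__call__` concrete, here is a dependency-free sketch of the same three steps (L2 normalization, scaled cosine similarity, softmax) on tiny made-up vectors. The feature values are invented for illustration and have nothing to do with real CLIP embeddings; the point is only to show how the logit_scale of 100.0 used above sharpens the resulting distribution compared with a scale of 1.0.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec]


def softmax(values):
    """Numerically stable softmax over a flat list."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def clip_probs(image_vec, text_vecs, logit_scale):
    """Cosine similarity of one image vector against each text vector,
    multiplied by logit_scale and passed through softmax."""
    image_unit = l2_normalize(image_vec)
    logits = []
    for text_vec in text_vecs:
        text_unit = l2_normalize(text_vec)
        cosine = sum(a * b for a, b in zip(image_unit, text_unit))
        logits.append(logit_scale * cosine)
    return softmax(logits)


# Made-up 3-dimensional "features"; real CLIP ViT-B/32 features have 512 dimensions.
image_vec = [1.0, 2.0, 0.5]
text_vecs = [[1.0, 1.8, 0.4], [2.0, 0.1, 1.0], [0.2, 0.3, 2.0]]

flat = clip_probs(image_vec, text_vecs, logit_scale=1.0)
sharp = clip_probs(image_vec, text_vecs, logit_scale=100.0)
print("scale 1.0:  ", [round(p, 4) for p in flat])
print("scale 100.0:", [round(p, 4) for p in sharp])
```

Cosine similarities all lie in [-1, 1], so without the scale factor the softmax output stays fairly flat; multiplying by 100 spreads the logits far enough apart that the best match dominates.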

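Inference with TensorRT

For TensorRT inference to work, you also need to comment out the dynamic_axes entry on line 63 of clip_onnx/utils.py and then regenerate the ONNX models.

Convert the ONNX models to engine files with trtexec: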

/docker_share/TensorRT-8.6.1.6/bin/trtexec --onnx=./clip_textual.onnx --saveEngine=./clip_textual.engine

/docker_share/TensorRT-8.6.1.6/bin/trtexec --onnx=./clip_visual.onnx --saveEngine=./clip_visual.engine
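If you prefer to drive the two conversions from Python, the commands above can be assembled as argument lists. This is a minimal sketch: `trtexec_command` is a hypothetical helper, the trtexec path is the one from this post and will differ on other machines, and only the `--onnx` and `--saveEngine` flags shown above are used.

```python
import shlex
from pathlib import Path

# Path taken from the commands above; adjust it to your own TensorRT install.
TRTEXEC = "/docker_share/TensorRT-8.6.1.6/bin/trtexec"


def trtexec_command(onnx_path, trtexec=TRTEXEC):
    """Build the trtexec argument list for one ONNX file.

    The engine is written next to the ONNX file with an .engine suffix.
    """
    onnx_path = Path(onnx_path)
    engine_path = onnx_path.with_suffix(".engine")
    return [trtexec, f"--onnx={onnx_path}", f"--saveEngine={engine_path}"]


commands = [trtexec_command(name) for name in ("clip_textual.onnx", "clip_visual.onnx")]
for cmd in commands:
    print(shlex.join(cmd))
    # On a machine with TensorRT installed, run the conversion with:
    #   import subprocess
    #   subprocess.run(cmd, check=True)
```

The actual `subprocess.run` call is left commented out so the snippet can be inspected on a machine without TensorRT.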

import clip
from PIL import Image
import numpy as np
import tensorrt as trt
import pycuda.autoinit  # initializes CUDA and creates a context on import
import pycuda.driver as cuda

# The CLIP model is loaded here only for its preprocess function
model, preprocess = clip.load("ViT-B/32", device="cpu", jit=False)

image = preprocess(Image.open("CLIP.png")).unsqueeze(0).cpu()  # [1, 3, 224, 224]
image_input = image.detach().cpu().numpy().astype(np.float32)

text = clip.tokenize(["a diagram", "a dog", "a cat"]).cpu()  # [3, 77]
text_input = text.detach().cpu().numpy().astype(np.int32)


class clip_tensorrt:
    def __init__(self, model=None, visual_path: str = "clip_visual.engine", textual_path: str = "clip_textual.engine", logit_scale=None):
        self.model = model
        self.visual_path = visual_path
        self.textual_path = textual_path
        self.logit_scale = logit_scale

    def start_engines(self):
        logger = trt.Logger(trt.Logger.WARNING)

        # Visual encoder: deserialize the engine, then allocate page-locked host
        # buffers and matching device buffers for binding 0 (input) and 1 (output)
        with open(self.visual_path, "rb") as f, trt.Runtime(logger) as runtime:
            self.visual_engine = runtime.deserialize_cuda_engine(f.read())
        self.visual_context = self.visual_engine.create_execution_context()
        self.visual_inputs_host = cuda.pagelocked_empty(trt.volume(self.visual_context.get_binding_shape(0)), dtype=np.float32)
        self.visual_outputs_host = cuda.pagelocked_empty(trt.volume(self.visual_context.get_binding_shape(1)), dtype=np.float32)
        self.visual_inputs_device = cuda.mem_alloc(self.visual_inputs_host.nbytes)
        self.visual_outputs_device = cuda.mem_alloc(self.visual_outputs_host.nbytes)
        self.visual_stream = cuda.Stream()

        # Textual encoder: same steps, but the input tokens are int32
        with open(self.textual_path, "rb") as f, trt.Runtime(logger) as runtime:
            self.textual_engine = runtime.deserialize_cuda_engine(f.read())
        self.textual_context = self.textual_engine.create_execution_context()
        self.textual_inputs_host = cuda.pagelocked_empty(trt.volume(self.textual_context.get_binding_shape(0)), dtype=np.int32)
        self.textual_outputs_host = cuda.pagelocked_empty(trt.volume(self.textual_context.get_binding_shape(1)), dtype=np.float32)
        self.textual_inputs_device = cuda.mem_alloc(self.textual_inputs_host.nbytes)
        self.textual_outputs_device = cuda.mem_alloc(self.textual_outputs_host.nbytes)
        self.textual_stream = cuda.Stream()

    def __call__(self, image, text):
        # Visual encoder: host -> device, run, device -> host.
        # Reuse the execution context created in start_engines() instead of creating a new one per call.
        np.copyto(self.visual_inputs_host, image.ravel())
        cuda.memcpy_htod_async(self.visual_inputs_device, self.visual_inputs_host, self.visual_stream)
        self.visual_context.execute_async_v2(bindings=[int(self.visual_inputs_device), int(self.visual_outputs_device)], stream_handle=self.visual_stream.handle)
        cuda.memcpy_dtoh_async(self.visual_outputs_host, self.visual_outputs_device, self.visual_stream)
        self.visual_stream.synchronize()

        # Textual encoder: same sequence on its own stream
        np.copyto(self.textual_inputs_host, text.ravel())
        cuda.memcpy_htod_async(self.textual_inputs_device, self.textual_inputs_host, self.textual_stream)
        self.textual_context.execute_async_v2(bindings=[int(self.textual_inputs_device), int(self.textual_outputs_device)], stream_handle=self.textual_stream.handle)
        cuda.memcpy_dtoh_async(self.textual_outputs_host, self.textual_outputs_device, self.textual_stream)
        self.textual_stream.synchronize()

        # The shapes are hard-coded because the engines were built without dynamic axes:
        # 1 image and 3 text prompts, each with a 512-dimensional feature vector
        image_features = self.visual_outputs_host.reshape(1, 512)
        text_features = self.textual_outputs_host.reshape(3, 512)

        # L2-normalize the features so the dot product is a cosine similarity
        image_features = image_features / np.linalg.norm(image_features, axis=-1, keepdims=True)
        text_features = text_features / np.linalg.norm(text_features, axis=-1, keepdims=True)

        logits_per_image = self.logit_scale * image_features @ text_features.T
        logits_per_text = logits_per_image.T
        return logits_per_image, logits_per_text


trt_model = clip_tensorrt(visual_path="clip_visual.engine", textual_path="clip_textual.engine", logit_scale=100.0000)
trt_model.start_engines()
logits_per_image, logits_per_text = trt_model(image_input, text_input)


def softmax(x, axis=-1):
    # Subtract the maximum before exponentiating for numerical stability
    e_x = np.exp(x - np.max(x, axis=axis, keepdims=True))
    return e_x / np.sum(e_x, axis=axis, keepdims=True)


probs = softmax(logits_per_image)
print("Label probs:", probs)
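Because the engines are built with fixed shapes, the size of every host and device buffer allocated in the script is known ahead of time. The sketch below works through that arithmetic with the standard library only: the element count is the product of the dimensions (which is what `trt.volume` computes), multiplied by the item size of the dtype. The `buffer_nbytes` helper and the binding labels are illustrative names, not part of TensorRT or PyCUDA.

```python
import math

# Bytes per element for the two dtypes used in the script.
ITEMSIZE = {"float32": 4, "int32": 4}


def buffer_nbytes(shape, dtype):
    """Bytes needed for a dense buffer of the given shape and dtype."""
    return math.prod(shape) * ITEMSIZE[dtype]


# Shapes as they appear in the script: one image, three text prompts,
# 77 tokens per prompt, 512-dimensional output features.
bindings = {
    "visual input": ((1, 3, 224, 224), "float32"),
    "visual output": ((1, 512), "float32"),
    "textual input": ((3, 77), "int32"),
    "textual output": ((3, 512), "float32"),
}

for name, (shape, dtype) in bindings.items():
    print(f"{name:15s} {str(shape):18s} {dtype:8s} {buffer_nbytes(shape, dtype):>8d} bytes")
```

Changing the number of prompts or the batch size changes these sizes, so the ONNX models and engines would have to be regenerated (or built with dynamic shapes) to accept different inputs.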

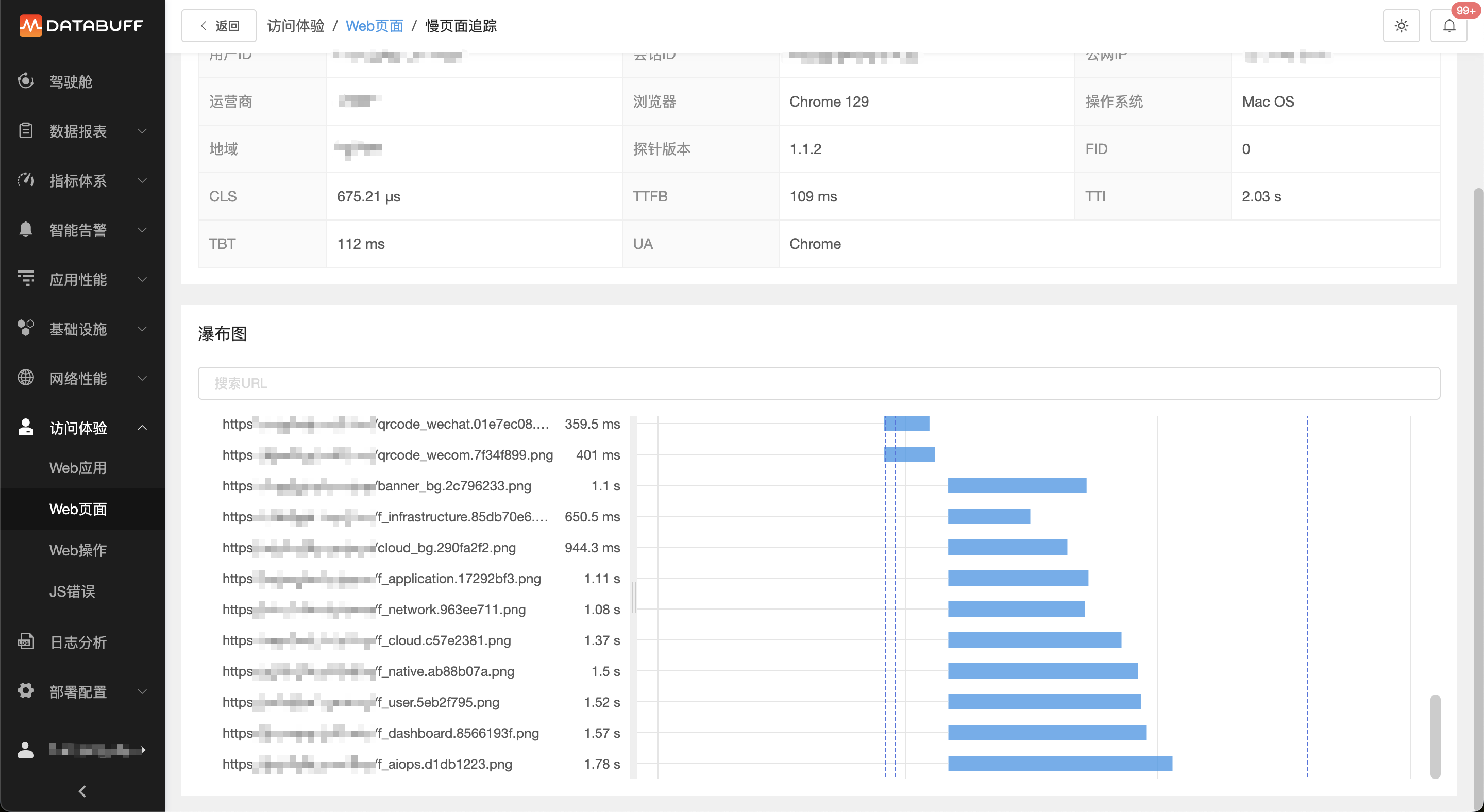

CLIP.png

A successful run prints the following result:

Label probs: [[0.9927926 0.00421789 0.00298956]]
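The probabilities are in the same order as the prompts passed to `clip.tokenize`. As a small illustration, the snippet below pairs the numbers reported above with their labels and ranks them; `rank_labels` is a hypothetical helper written for this example.

```python
labels = ["a diagram", "a dog", "a cat"]
# Values copied from the output above (one row per image).
probs = [[0.9927926, 0.00421789, 0.00298956]]


def rank_labels(labels, row):
    """Pair each label with its probability, highest probability first."""
    return sorted(zip(labels, row), key=lambda pair: pair[1], reverse=True)


ranked = rank_labels(labels, probs[0])
for label, p in ranked:
    print(f"{label}: {p:.4f}")
```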