Skeleton-Based Action Recognition: Paper Reproduction

All resources referenced in this article are available on the 传知代码 platform.

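Preface

Definition of skeleton-based action recognition

Skeleton-based action recognition identifies and interprets human actions by analyzing the motion trajectories and poses of the human skeleton. It is an important research direction in computer vision and pattern recognition, with broad applications such as human-computer interaction, intelligent surveillance, and motion analysis.

Any discussion of skeleton-based action recognition has to mention OpenPose. OpenPose is a deep-learning-based pose estimation algorithm that detects and tracks human keypoints and pose information in images or video. It was developed by a research team at Carnegie Mellon University and aims at accurate, real-time pose estimation for multiple people. To run pose estimation, you only need to point the project at the path of a video or image.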

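Paper overview

The paper reproduced here is "Channel-wise Topology Refinement Graph Convolution for Skeleton-Based Action Recognition", published at ICCV 2021. Paper link: CTR-GCN

Over the past two years it has become the baseline for most skeleton-based action recognition papers at top journals and conferences, for example HD-GCN (ICCV 2023), InfoGCN (CVPR 2022), and GAP (ICCV 2023). How does CTR-GCN improve on the previous-generation baseline, 2s-AGCN? Link: 2s-AGCN

It proposes a new channel-wise topology refinement graph convolution (CTR-GC) that dynamically learns different topologies and effectively aggregates joint features in different channels for skeleton-based action recognition.

CTR-GC models channel-wise topologies by learning a shared topology as a generic prior for all channels and refining it with channel-specific correlations for each channel.

Combining CTR-GC with temporal modeling modules, the authors build a powerful graph convolutional network.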

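In short, CTR-GCN makes two main contributions:

First, it proposes a channel-wise topology refinement module. By compressing and then aggregating along the channel dimension, the module extracts features with a different graph topology for each channel.

Second, CTR-GC is combined with a simplified multi-scale temporal convolution module (MS-TCN) to form the CTR-GCN architecture. The model has a small parameter count and improves substantially over the baseline.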

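Backbone architecture analysis and code walkthrough

Overall architecture

The overall CTR-GCN architecture consists of three parts, shown in the figure below:

Channel-wise topology modeling (blue)

The correlation modeling function M, here built on the tanh activation, refines the topology from the input features. Three 1x1 convolutions first produce three features x1, x2, and x3 with different channel sizes; tanh is then applied to the pairwise differences between x1 and x2.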

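    # From __init__: the four 1x1 convolutions used by CTR-GC
    self.conv1 = nn.Conv2d(self.in_channels, self.rel_channels, kernel_size=1)
    self.conv2 = nn.Conv2d(self.in_channels, self.rel_channels, kernel_size=1)
    self.conv3 = nn.Conv2d(self.in_channels, self.out_channels, kernel_size=1)
    self.conv4 = nn.Conv2d(self.rel_channels, self.out_channels, kernel_size=1)

    # From forward: x1 and x2 are averaged over time, x3 keeps the full (N, C, T, V) shape
    x1, x2, x3 = self.conv1(x).mean(-2), self.conv2(x).mean(-2), self.conv3(x)
    # Pairwise differences between joints, squashed by tanh
    x1 = self.tanh(x1.unsqueeze(-1) - x2.unsqueeze(-2))
    # Scale the refinement by alpha and add the shared topology A
    x1 = self.conv4(x1) * alpha + (A.unsqueeze(0).unsqueeze(0) if A is not None else 0)  # N,C,V,V
    # Aggregate joint features with the channel-wise topologies
    x1 = torch.einsum('ncuv,nctv->nctu', x1, x3)
    return x1

Feature transformation (pink)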

The channel dimension is reduced here, which prepares the features for channel-wise topology refinement.

    self.in_channels = in_channels
    self.out_channels = out_channels
    if in_channels == 3 or in_channels == 9:
        # Very narrow inputs (3 or 9 channels) use fixed widths
        self.rel_channels = 8
        self.mid_channels = 16
    else:
        self.rel_channels = in_channels // rel_reduction
        self.mid_channels = in_channels // mid_reduction

Channel dimension enhancement (yellow)

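Each of the three CTR-GC branches refines its topology using the learnable scalar alpha. Their outputs are summed, batch-normalized, added to the residual, and passed through ReLU to give the output y.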

    def forward(self, x):
        y = None
        if self.adaptive:
            A = self.PA
        else:
            A = self.A.cuda(x.get_device())
        # num_subset is 3 here, matching the three CTR-GCs in Figure 3(a)
        for i in range(self.num_subset):
            z = self.convs[i](x, A[i], self.alpha)
            y = z + y if y is not None else z
        y = self.bn(y)
        y += self.down(x)
        y = self.relu(y)
        return y
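To make the tensor shapes in the three steps above easier to follow, here is a minimal NumPy sketch of the same operations. It is an illustration under stated assumptions, not the author's implementation: NumPy stands in for PyTorch, the 1x1 convolutions are replaced by plain bias-free weight matrices, and the names `conv1x1`, `ctr_gc_sketch`, and `w1` to `w4` are hypothetical.

```python
import numpy as np


def conv1x1(x, w):
    """Bias-free 1x1 convolution written as a channel-mixing product.

    x: array of shape (N, C_in, ...), w: weight matrix of shape (C_out, C_in).
    """
    # tensordot contracts the channel axis and yields (C_out, N, ...);
    # moveaxis puts the batch axis back in front.
    return np.moveaxis(np.tensordot(w, x, axes=([1], [1])), 0, 1)


def ctr_gc_sketch(x, A, w1, w2, w3, w4, alpha=1.0):
    """x: (N, C_in, T, V) features, A: (V, V) shared topology."""
    x1 = conv1x1(x, w1).mean(axis=-2)  # (N, R, V), averaged over time
    x2 = conv1x1(x, w2).mean(axis=-2)  # (N, R, V)
    x3 = conv1x1(x, w3)                # (N, C_out, T, V)
    # Pairwise differences between joints u and v, squashed by tanh
    rel = np.tanh(x1[..., :, None] - x2[..., None, :])  # (N, R, V, V)
    # Lift to C_out channels, scale by alpha, add the shared topology
    topo = conv1x1(rel, w4) * alpha + A[None, None]     # (N, C_out, V, V)
    # Aggregate joint features with one topology per channel
    return np.einsum('ncuv,nctv->nctu', topo, x3)       # (N, C_out, T, V)


rng = np.random.default_rng(0)
N, C_in, C_out, R, T, V = 2, 3, 64, 8, 16, 25
x = rng.standard_normal((N, C_in, T, V))
A = np.eye(V)
w1 = rng.standard_normal((R, C_in))
w2 = rng.standard_normal((R, C_in))
w3 = rng.standard_normal((C_out, C_in))
w4 = rng.standard_normal((C_out, R))
print(ctr_gc_sketch(x, A, w1, w2, w3, w4).shape)  # (2, 64, 16, 25)
```

With `alpha=0` the refinement term vanishes and every channel falls back to the shared topology `A`; alpha therefore controls how far each channel may depart from the shared prior.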

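Module introduction

The blue part of figure (a) is spatial modeling. The spatial modeling module is built from CTR-GC basic blocks, whose structure is shown in figure (b). The yellow part of figure (a) is the simplified multi-scale temporal modeling, which removes some of the convolution branches of the original MS-TCN architecture.

CTR-GC: the basic unit of spatial modeling. The code is as follows: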

    import torch
    import torch.nn as nn

    # conv_init and bn_init are initialization helpers assumed to be defined elsewhere in the same file.
    # rel_reduction and mid_reduction are the channel reduction ratios used inside the sub-modules for the
    # pairwise relation features and the intermediate features; they control the parameter count.
    class CTRGC(nn.Module):
        def __init__(self, in_channels, out_channels, rel_reduction=8, mid_reduction=1):
            super(CTRGC, self).__init__()
            self.in_channels = in_channels
            self.out_channels = out_channels
            if in_channels == 3 or in_channels == 9:
                self.rel_channels = 8
                self.mid_channels = 16
            else:
                self.rel_channels = in_channels // rel_reduction
                self.mid_channels = in_channels // mid_reduction
            self.conv1 = nn.Conv2d(self.in_channels, self.rel_channels, kernel_size=1)
            self.conv2 = nn.Conv2d(self.in_channels, self.rel_channels, kernel_size=1)
            self.conv3 = nn.Conv2d(self.in_channels, self.out_channels, kernel_size=1)
            self.conv4 = nn.Conv2d(self.rel_channels, self.out_channels, kernel_size=1)
            self.tanh = nn.Tanh()
            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    conv_init(m)
                elif isinstance(m, nn.BatchNorm2d):
                    bn_init(m, 1)

        def forward(self, x, A=None, alpha=1):
            # .mean(-2) averages over the second-to-last dimension (T for an (N, C, T, V) input)
            x1, x2, x3 = self.conv1(x).mean(-2), self.conv2(x).mean(-2), self.conv3(x)
            x1 = self.tanh(x1.unsqueeze(-1) - x2.unsqueeze(-2))
            x1 = self.conv4(x1) * alpha + (A.unsqueeze(0).unsqueeze(0) if A is not None else 0)  # N,C,V,V
            x1 = torch.einsum('ncuv,nctv->nctu', x1, x3)
            return x1

Spatial modeling: built from CTR-GC and a residual connection. The residual connection preserves part of the original features.

    import numpy as np
    import torch
    import torch.nn as nn
    from torch.autograd import Variable

    # conv_init and bn_init are initialization helpers assumed to be defined elsewhere in the same file.
    class unit_gcn(nn.Module):
        def __init__(self, in_channels, out_channels, A, coff_embedding=4, adaptive=True, residual=True):
            super(unit_gcn, self).__init__()
            inter_channels = out_channels // coff_embedding
            self.inter_c = inter_channels
            self.out_c = out_channels
            self.in_c = in_channels
            self.adaptive = adaptive
            self.num_subset = A.shape[0]
            # One CTR-GC per adjacency subset
            self.convs = nn.ModuleList()
            for i in range(self.num_subset):
                self.convs.append(CTRGC(in_channels, out_channels))

            if residual:
                if in_channels != out_channels:
                    self.down = nn.Sequential(
                        nn.Conv2d(in_channels, out_channels, 1),
                        nn.BatchNorm2d(out_channels)
                    )
                else:
                    self.down = lambda x: x
            else:
                self.down = lambda x: 0
            if self.adaptive:
                # Learnable adjacency, initialized from A
                self.PA = nn.Parameter(torch.from_numpy(A.astype(np.float32)))
            else:
                self.A = Variable(torch.from_numpy(A.astype(np.float32)), requires_grad=False)
            # alpha starts at zero, so training begins from the shared topology alone
            self.alpha = nn.Parameter(torch.zeros(1))
            self.bn = nn.BatchNorm2d(out_channels)
            self.soft = nn.Softmax(-2)
            self.relu = nn.ReLU(inplace=True)

            for m in self.modules():
                if isinstance(m, nn.Conv2d):
                    conv_init(m)
                elif isinstance(m, nn.BatchNorm2d):
                    bn_init(m, 1)
            bn_init(self.bn, 1e-6)

        def forward(self, x):
            y = None
            if self.adaptive:
                A = self.PA
            else:
                A = self.A.cuda(x.get_device())
            # num_subset is 3 here, matching the three CTR-GCs in Figure 3(a)
            for i in range(self.num_subset):
                z = self.convs[i](x, A[i], self.alpha)
                y = z + y if y is not None else z
            y = self.bn(y)
            y += self.down(x)
            y = self.relu(y)
            return y

Temporal modeling: a simplified version of the MS-TCN architecture.

    import torch
    import torch.nn as nn

    # TemporalConv and weights_init are helpers assumed to be defined elsewhere in the same file.
    class MultiScale_TemporalConv(nn.Module):
        def __init__(self,
                     in_channels,
                     out_channels,
                     kernel_size=3,
                     stride=1,
                     dilations=[1,2,3,4],
                     residual=True,
                     residual_kernel_size=1):
            super().__init__()
            # out_channels must be divisible by the number of branches (len(dilations) + 2)
            assert out_channels % (len(dilations) + 2) == 0, '# out channels should be multiples of # branches'

            # Multiple branches of temporal convolution
            # Number of branches = number of dilation rates + 2
            self.num_branches = len(dilations) + 2
            # Channels per branch = out_channels / number of branches.
            # Equal widths let the branch outputs be concatenated back to out_channels.
            branch_channels = out_channels // self.num_branches
            if type(kernel_size) == list:
                assert len(kernel_size) == len(dilations)
            else:
                kernel_size = [kernel_size]*len(dilations)

            # Temporal Convolution branches
            self.branches = nn.ModuleList([
                nn.Sequential(
                    nn.Conv2d(in_channels, branch_channels, kernel_size=1, padding=0),
                    nn.BatchNorm2d(branch_channels),
                    nn.ReLU(inplace=True),
                    TemporalConv(
                        branch_channels,
                        branch_channels,
                        kernel_size=ks,
                        stride=stride,
                        dilation=dilation),
                )
                for ks, dilation in zip(kernel_size, dilations)
            ])

            # Additional Max & 1x1 branch
            self.branches.append(nn.Sequential(
                nn.Conv2d(in_channels, branch_channels, kernel_size=1, padding=0),
                nn.BatchNorm2d(branch_channels),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=(3,1), stride=(stride,1), padding=(1,0)),
                nn.BatchNorm2d(branch_channels)  # open question: why is a second BN needed here?
            ))

            self.branches.append(nn.Sequential(
                nn.Conv2d(in_channels, branch_channels, kernel_size=1, padding=0, stride=(stride,1)),
                nn.BatchNorm2d(branch_channels)
            ))

            # Residual connection
            if not residual:
                self.residual = lambda x: 0
            elif (in_channels == out_channels) and (stride == 1):
                self.residual = lambda x: x
            else:
                self.residual = TemporalConv(in_channels, out_channels, kernel_size=residual_kernel_size, stride=stride)

            # initialize
            self.apply(weights_init)

        def forward(self, x):
            # Input dim: (N,C,T,V)
            res = self.residual(x)
            branch_outs = []
            for tempconv in self.branches:
                out = tempconv(x)
                branch_outs.append(out)

            # Concatenate the branch outputs along the channel dimension
            out = torch.cat(branch_outs, dim=1)
            out += res
            return out
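The divisibility assertion at the top of `MultiScale_TemporalConv` is easy to trip over when changing channel widths or dilation lists. The small helper below reproduces only that channel arithmetic; `branch_layout` and the branch labels are hypothetical names used for illustration and do not appear in the model code.

```python
def branch_layout(out_channels, dilations=(1, 2, 3, 4)):
    """Return the channel width of each branch, or raise if the split is uneven.

    Mirrors the rule in the module: one dilated-conv branch per dilation rate,
    plus a max-pool branch and a plain 1x1 branch.
    """
    num_branches = len(dilations) + 2
    if out_channels % num_branches != 0:
        raise ValueError(
            f"out_channels={out_channels} is not a multiple of {num_branches} branches"
        )
    branch_channels = out_channels // num_branches
    names = [f"dilated_conv_d{d}" for d in dilations] + ["maxpool", "conv1x1"]
    return {name: branch_channels for name in names}


# Two dilation rates give 4 branches, so 64 output channels split into 4 x 16.
print(branch_layout(64, dilations=(1, 2)))
```

With the default four dilation rates there are six branches, so a width such as 64 would be rejected; either the dilation list or the channel width has to be chosen so that the split is even.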

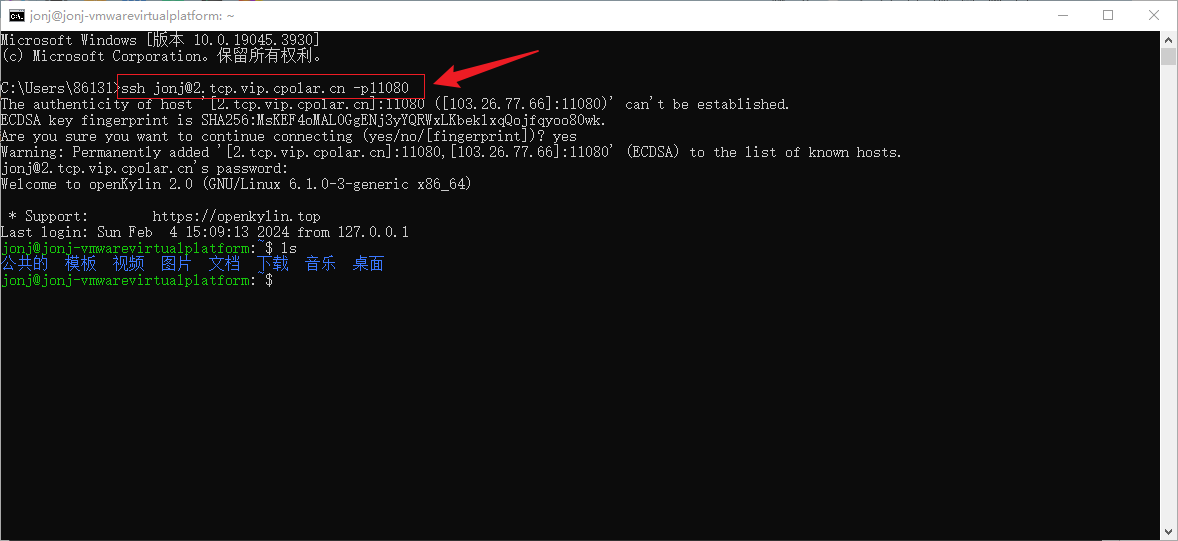

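Code deployment

This section describes how to deploy the code. You can also refer to the README file; the steps here are explained together with illustrations.

Dataset download

Request the dataset from this link: NTU-RGB-D, then download the skeleton-only datasets: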

- nturgbd_skeletons_s001_to_s017.zip (NTU RGB+D 60)

- nturgbd_skeletons_s018_to_s032.zip (NTU RGB+D 120)

- Extract the downloaded files to the following directory: ./data/nturgbd_raw

NW-UCLA

- Dataset link: NW-UCLA

- Extract all_sqe and move it to ./data/NW-UCLA

Arrange the downloaded data in the following directory structure:

- data/
  - NW-UCLA/
    - all_sqe
      ... # raw data of NW-UCLA
  - ntu/
  - ntu120/
  - nturgbd_raw/
    - nturgb+d_skeletons/ # from `nturgbd_skeletons_s001_to_s017.zip`
      ...
    - nturgb+d_skeletons120/ # from `nturgbd_skeletons_s018_to_s032.zip`
      ...
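Before running the preprocessing scripts it can help to confirm that the layout above is in place. The following is a small standard-library sketch; `EXPECTED_DIRS` and `missing_dirs` are hypothetical names, and the list simply restates the directories described above.

```python
from pathlib import Path

# Directories expected under ./data, taken from the layout described above
EXPECTED_DIRS = [
    "NW-UCLA/all_sqe",
    "ntu",
    "ntu120",
    "nturgbd_raw/nturgb+d_skeletons",
    "nturgbd_raw/nturgb+d_skeletons120",
]


def missing_dirs(data_root):
    """Return the expected sub-directories that do not exist under data_root."""
    root = Path(data_root)
    return [d for d in EXPECTED_DIRS if not (root / d).is_dir()]


if __name__ == "__main__":
    missing = missing_dirs("./data")
    if missing:
        print("Missing directories:", ", ".join(missing))
    else:
        print("Data layout looks complete.")
```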

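Generating skeleton data

Generate the NTU RGB+D 60 or NTU RGB+D 120 dataset:

Note: the paths in each script must be changed to the location where your dataset is stored. Absolute paths can be used directly.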

cd ./data/ntu # or cd ./data/ntu120

# Get skeleton of each performer

python get_raw_skes_data.py

# Remove the bad skeleton

python get_raw_denoised_data.py

# Transform the skeleton to the center of the first frame

python seq_transformation.py
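The internals of `seq_transformation.py` are not shown in this article, so the following is only an assumption about what "transform the skeleton to the center of the first frame" amounts to: every joint in every frame is translated so that a chosen reference joint of the first frame sits at the origin. The function name `center_on_first_frame` and the default `origin_joint=1` are hypothetical.

```python
import numpy as np


def center_on_first_frame(seq, origin_joint=1):
    """Translate a skeleton sequence so the reference joint of frame 0 is the origin.

    seq: array of shape (T, V, 3) holding T frames of V joints in 3D.
    origin_joint: index of the reference joint (an assumption, not taken from the script).
    """
    origin = seq[0, origin_joint].copy()  # (3,) position in the first frame
    return seq - origin                   # broadcast over frames and joints


rng = np.random.default_rng(0)
sequence = rng.standard_normal((5, 25, 3))
centered = center_on_first_frame(sequence)
print(np.allclose(centered[0, 1], 0.0))  # True
```

Because the same offset is subtracted everywhere, distances between joints and motion across frames are unchanged; only the global position of the performer is removed.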

(Note) When all data has been generated correctly, the directory looks as follows:

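Training and testing

Training

Modify the configuration file (important)

Method 1: once the configuration file has been edited, run the following command:

python main.py --config '<absolute path of the config file you just edited>'

Method 2: set the parameters directly on the command line, as follows: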

# Example: training CTRGCN on NTU RGB+D 120 cross subject with GPU 0

python main.py --config config/nturgbd120-cross-subject/default.yaml --work-dir work_dir/ntu120/csub/ctrgcn --device 0

# Example: training provided baseline on NTU RGB+D 120 cross subject

python main.py --config config/nturgbd120-cross-subject/default.yaml --model model.baseline.Model --work-dir work_dir/ntu120/csub/baseline --device 0

# Example: training CTRGCN on NTU RGB+D 120 cross subject under bone modality

python main.py --config config/nturgbd120-cross-subject/default.yaml --train_feeder_args bone=True --test_feeder_args bone=True --work-dir work_dir/ntu120/csub/ctrgcn_bone --device 0

To train the model on the NW-UCLA dataset with a different modality, change data_path in train_feeder_args and test_feeder_args in the configuration file to "bone", "motion", or "bone motion", then run:

python main.py --config config/ucla/default.yaml --work-dir work_dir/ucla/ctrgcn_xxx --device 0
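The article does not show how the bone and motion modalities are derived from joint coordinates. A common construction, given here only as a hedged sketch, takes a bone as the vector from a parent joint to its child and motion as the difference between consecutive frames. The function names `to_bone` and `to_motion` and the example `pairs` are hypothetical and are not the project's feeder code.

```python
import numpy as np


def to_bone(joints, pairs):
    """Bone modality: child joint minus parent joint.

    joints: array of shape (C, T, V); pairs: iterable of (child, parent) indices.
    Joints that never appear as a child keep a zero bone vector.
    """
    bone = np.zeros_like(joints)
    for child, parent in pairs:
        bone[:, :, child] = joints[:, :, child] - joints[:, :, parent]
    return bone


def to_motion(data):
    """Motion modality: difference between consecutive frames, last frame zero."""
    motion = np.zeros_like(data)
    motion[:, :-1] = data[:, 1:] - data[:, :-1]
    return motion


# Toy skeleton: 3 joints in a chain 0 -> 1 -> 2, 4 frames, 3 coordinates
joints = np.arange(3 * 4 * 3, dtype=float).reshape(3, 4, 3)
pairs = [(1, 0), (2, 1)]
bone = to_bone(joints, pairs)
bone_motion = to_motion(bone)  # the "bone motion" modality combines both steps
print(bone.shape, bone_motion.shape)
```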

To train your own model, put your model file your_model.py into the ./model directory and run:

# Example: training your own model on NTU RGB+D 120 cross subject

python main.py --config config/nturgbd120-cross-subject/default.yaml --model model.your_model.Model --work-dir work_dir/ntu120/csub/your_model --device 0

Testing

To test a trained model saved in <work_dir>, run the following command:

python main.py --config <work_dir>/config.yaml --work-dir <work_dir> --phase test --save-score True --weights <work_dir>/xxx.pt --device 0

To ensemble the results of different modalities, run the following in the project directory:

# Example: ensemble four modalities of CTRGCN on NTU RGB+D 120 cross subject

python ensemble.py --datasets ntu120/xsub --joint-dir work_dir/ntu120/csub/ctrgcn --bone-dir work_dir/ntu120/csub/ctrgcn_bone --joint-motion-dir work_dir/ntu120/csub/ctrgcn_motion --bone-motion-dir work_dir/ntu120/csub/ctrgcn_bone_motion
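Conceptually, ensembling modalities means combining the per-class scores saved by each model and taking the arg-max of the combined score. The sketch below shows a weighted sum under stated assumptions: the function names and the weights are illustrative only and are not read from `ensemble.py`.

```python
import numpy as np


def ensemble_scores(scores, weights):
    """Weighted sum of per-modality score matrices, then arg-max per sample.

    scores: list of arrays of shape (num_samples, num_classes), one per modality.
    weights: one float per modality (illustrative values, not the project's).
    """
    total = sum(w * s for w, s in zip(weights, scores))
    return total.argmax(axis=1)


def top1_accuracy(pred, labels):
    """Fraction of samples whose predicted class equals the label."""
    return float((pred == labels).mean())


joint = np.array([[0.6, 0.4], [0.4, 0.6]])
bone = np.array([[0.3, 0.7], [0.2, 0.8]])
labels = np.array([0, 1])

pred = ensemble_scores([joint, bone], weights=[1.0, 0.1])
print(pred, top1_accuracy(pred, labels))
```

The weights decide how much each modality can override the others: with equal weights the bone scores above would flip the first sample to class 1, while a small bone weight leaves the joint prediction in place.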

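Model optimization and innovation

Modify the classes in ./model/ctr-gcn.py. Because the CTR-GCN architecture closely follows that of 2s-AGCN, attention modules or plug-and-play convolution modules, such as dilated convolution or large-kernel convolution, can be inserted between layers or into the spatial and temporal modeling. Detailed source-code comments are included in the attachment.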

    # Define the self-attention layer
    class SelfAttention(nn.Module):
        def __init__(self, in_channels, out_channels):
            super(SelfAttention, self).__init__()
            # The kernel_size of q, k, v must be 1
            self.conv_q = nn.Conv2d(in_channels, out_channels, 1)
            self.conv_k = nn.Conv2d(in_channels, out_channels, 1)
            self.conv_v = nn.Conv2d(in_channels, out_channels, 1)

    # Add the self-attention layer to the temporal modeling part.
    # This is the forward of MultiScale_TemporalConv, i.e. "Temporal modeling" in Figure 3(a);
    # self.selfattention is assumed to be a SelfAttention instance created in __init__.
    def forward(self, x):
        # Input dim: (N,C,T,V)
        res = self.residual(x)
        branch_outs = []
        for tempconv in self.branches:
            out = tempconv(x)
            branch_outs.append(out)
        # Concatenate all branch outputs along dim=1 (channels)
        out = torch.cat(branch_outs, dim=1)
        # Try adding self-attention on top of the multi-scale temporal convolution
        out = self.selfattention(out) + out
        out += res
        return out
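The `SelfAttention` class above only defines the three 1x1 projections; how they are combined is not shown. One possible reading, given purely as an assumption, is scaled dot-product attention over all T*V positions. The NumPy sketch below illustrates that computation, with weight matrices `wq`, `wk`, and `wv` standing in for `conv_q`, `conv_k`, and `conv_v`; the function names are hypothetical.

```python
import numpy as np


def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention_sketch(x, wq, wk, wv):
    """Scaled dot-product self-attention over the T*V positions of x.

    x: (N, C, T, V); wq, wk, wv: (C_out, C) matrices acting like 1x1 convolutions.
    Returns an array of shape (N, C_out, T, V).
    """
    n, c, t, v = x.shape
    tokens = x.reshape(n, c, t * v)             # (N, C, L) with L = T*V
    q = np.einsum('oc,ncl->nol', wq, tokens)    # (N, C_out, L)
    k = np.einsum('oc,ncl->nol', wk, tokens)    # (N, C_out, L)
    val = np.einsum('oc,ncl->nol', wv, tokens)  # (N, C_out, L)
    # Similarity between every pair of positions, scaled by sqrt(C_out)
    scores = np.einsum('ncl,ncm->nlm', q, k) / np.sqrt(q.shape[1])
    attn = softmax(scores, axis=-1)             # each row sums to 1
    out = np.einsum('nlm,ncm->ncl', attn, val)  # weighted sum of values
    return out.reshape(n, -1, t, v)


rng = np.random.default_rng(0)
x = rng.standard_normal((2, 8, 4, 5))
wq, wk, wv = (rng.standard_normal((8, 8)) for _ in range(3))
out = self_attention_sketch(x, wq, wk, wv) + x  # residual, as in the forward above
print(out.shape)  # (2, 8, 4, 5)
```

The residual sum requires the attention output to have the same number of channels as its input, so in this reading `out_channels` must equal `in_channels`. Attention over all T*V positions costs memory quadratic in T*V; attending over joints or frames separately would be a cheaper variant.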
