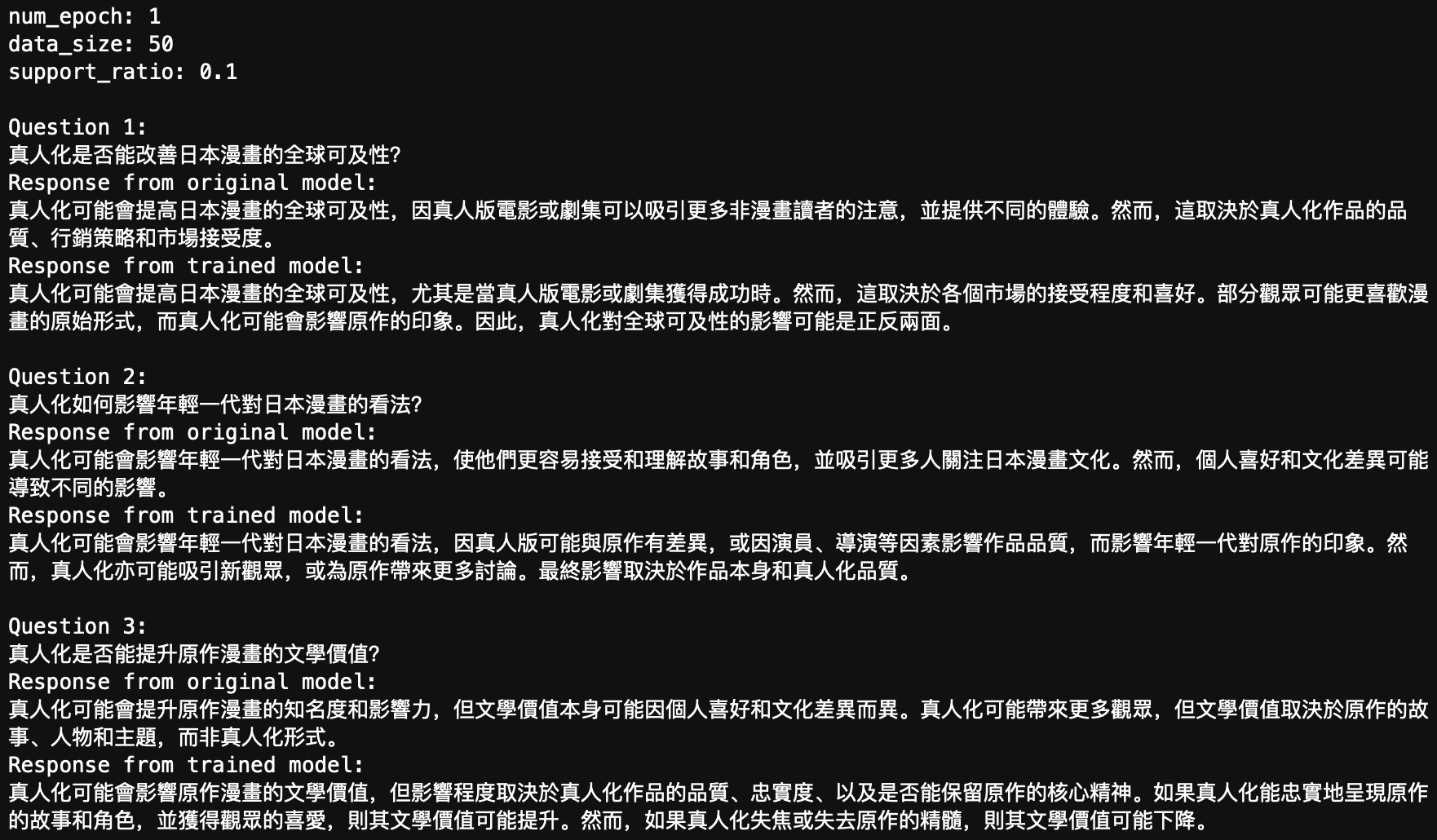

1. How GRU works

1.1 Reset gate and update gate
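As a sketch that assumes the notation of the `gru` function in section 2, the two gates can be written as follows. Here $\mathbf{X}_t$ is the minibatch input at time step $t$, $\mathbf{H}_{t-1}$ is the previous hidden state, and $\sigma$ is the sigmoid function, so every gate entry lies in $(0, 1)$. The subscripted symbols mirror the code's variable names (`W_xr`, `W_hr`, `b_r`, `W_xz`, `W_hz`, `b_z`).

$$
\begin{aligned}
\mathbf{R}_t &= \sigma(\mathbf{X}_t \mathbf{W}_{xr} + \mathbf{H}_{t-1} \mathbf{W}_{hr} + \mathbf{b}_r), \\
\mathbf{Z}_t &= \sigma(\mathbf{X}_t \mathbf{W}_{xz} + \mathbf{H}_{t-1} \mathbf{W}_{hz} + \mathbf{b}_z).
\end{aligned}
$$

$\mathbf{R}_t$ is the reset gate and $\mathbf{Z}_t$ is the update gate. Both have the same shape as the hidden state, (batch size, number of hidden units).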

1.2 Candidate hidden state
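Assuming the same notation as the `gru` function in section 2 (where the candidate is called `H_tilda`), the candidate hidden state can be sketched as below. $\mathbf{R}_t$ is the reset gate with entries in $(0, 1)$, and $\odot$ denotes elementwise multiplication.

$$
\tilde{\mathbf{H}}_t = \tanh\bigl(\mathbf{X}_t \mathbf{W}_{xh} + (\mathbf{R}_t \odot \mathbf{H}_{t-1}) \mathbf{W}_{hh} + \mathbf{b}_h\bigr)
$$

When an entry of $\mathbf{R}_t$ is close to 0, the matching entry of the previous state is largely ignored in the candidate. When it is close to 1, the expression reduces to an ordinary RNN update.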

1.3 Hidden state
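Following the line `H = Z * H + (1 - Z) * H_tilda` in the `gru` function of section 2, the new hidden state can be written as an elementwise blend of the old state and the candidate. $\mathbf{Z}_t$ is the update gate and $\tilde{\mathbf{H}}_t$ is the candidate hidden state.

$$
\mathbf{H}_t = \mathbf{Z}_t \odot \mathbf{H}_{t-1} + (1 - \mathbf{Z}_t) \odot \tilde{\mathbf{H}}_t
$$

With $\mathbf{Z}_t$ near 1 the old state is carried over almost unchanged, which lets information survive across many time steps. With $\mathbf{Z}_t$ near 0 the state is replaced by the candidate.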

2. GRU implementation in code

# Import packages
import torch
from torch import nn
import dltools

# Load the data
batch_size, num_steps = 32, 35
train_iter, vocab = dltools.load_data_time_machine(batch_size, num_steps)

# Helper function: initialize the model parameters
def get_params(vocab_size, num_hiddens, device):
    num_inputs = num_outputs = vocab_size

    def normal(shape):
        # Small Gaussian weights (standard deviation 0.01)
        return torch.randn(size=shape, device=device) * 0.01

    def three():
        # Input-to-hidden weight, hidden-to-hidden weight, and bias
        return (normal((num_inputs, num_hiddens)),
                normal((num_hiddens, num_hiddens)),
                torch.zeros(num_hiddens, device=device))

    # Update gate parameters
    W_xz, W_hz, b_z = three()
    # Reset gate parameters
    W_xr, W_hr, b_r = three()
    # Candidate hidden state parameters
    W_xh, W_hh, b_h = three()
    # Output layer parameters
    W_hq = normal((num_hiddens, num_outputs))
    b_q = torch.zeros(num_outputs, device=device)
    params = [W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q]
    for param in params:
        param.requires_grad_(True)
    return params
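To make the parameter shapes concrete, here is a small sketch that counts the trainable values `get_params` creates. The function name `gru_scratch_param_count` and the vocabulary size of 28 are assumptions made for illustration; the real size is `len(vocab)`.

```python
def gru_scratch_param_count(vocab_size, num_hiddens):
    """Count the scalars created by get_params (hypothetical helper)."""
    # Each of the three groups (update gate, reset gate, candidate state) has
    # one input-to-hidden weight, one hidden-to-hidden weight, and one bias.
    per_group = (vocab_size * num_hiddens
                 + num_hiddens * num_hiddens
                 + num_hiddens)
    # Output layer: W_hq plus b_q
    output_layer = num_hiddens * vocab_size + vocab_size
    return 3 * per_group + output_layer


# Assumed vocabulary size of 28; num_hiddens = 256 as in the training code
count = gru_scratch_param_count(28, 256)
print(count)
```

The three gate groups dominate the count, so the GRU cell holds roughly three times the parameters of a plain RNN cell with the same hidden size.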

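# Function: initialize the hidden state
def init_gru_state(batch_size, num_hiddens, device):
    # Return a one-element tuple (note the trailing comma): gru() unpacks it with `H, = state`
    return (torch.zeros((batch_size, num_hiddens), device=device), )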

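# Function: build the GRU network
def gru(inputs, state, params):
    W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h, W_hq, b_q = params
    H, = state
    outputs = []
    # inputs is iterated over its first dimension, one time step per iteration
    for X in inputs:
        # Update gate
        Z = torch.sigmoid((X @ W_xz) + (H @ W_hz) + b_z)
        # Reset gate
        R = torch.sigmoid((X @ W_xr) + (H @ W_hr) + b_r)
        # Candidate hidden state
        H_tilda = torch.tanh((X @ W_xh) + ((R * H) @ W_hh) + b_h)
        # New hidden state: blend of the old state and the candidate
        H = Z * H + (1 - Z) * H_tilda
        # Output layer
        Y = H @ W_hq + b_q
        outputs.append(Y)
    return torch.cat(outputs, dim=0), (H, )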
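To see what the update gate does, the sketch below runs a single time step with the same equations as the loop body of `gru` and pushes the update-gate bias to an extreme value. The helper `gru_step`, the configurable `three(bias_value)`, and the toy sizes are assumptions introduced for this example only and are not part of `dltools`.

```python
import torch


def gru_step(X, H, params):
    """One GRU time step, using the same equations as the loop body of gru()."""
    W_xz, W_hz, b_z, W_xr, W_hr, b_r, W_xh, W_hh, b_h = params
    Z = torch.sigmoid(X @ W_xz + H @ W_hz + b_z)           # update gate
    R = torch.sigmoid(X @ W_xr + H @ W_hr + b_r)           # reset gate
    H_tilda = torch.tanh(X @ W_xh + (R * H) @ W_hh + b_h)  # candidate state
    return Z * H + (1 - Z) * H_tilda, H_tilda


torch.manual_seed(0)
batch_size, num_inputs, num_hiddens = 2, 7, 4  # toy sizes, chosen for illustration


def three(bias_value):
    # Same layout as three() in get_params, but with a configurable bias value
    return [torch.randn(num_inputs, num_hiddens) * 0.01,
            torch.randn(num_hiddens, num_hiddens) * 0.01,
            torch.full((num_hiddens,), bias_value)]


X = torch.randn(batch_size, num_inputs)
H_prev = torch.rand(batch_size, num_hiddens)

# Large positive update-gate bias: Z is close to 1, so the old state is kept
keep_params = three(20.0) + three(0.0) + three(0.0)
H_keep, _ = gru_step(X, H_prev, keep_params)

# Large negative update-gate bias: Z is close to 0, so the candidate takes over
replace_params = three(-20.0) + three(0.0) + three(0.0)
H_replace, H_candidate = gru_step(X, H_prev, replace_params)

print(torch.allclose(H_keep, H_prev, atol=1e-6))
print(torch.allclose(H_replace, H_candidate, atol=1e-6))
```

In training the gate values are learned rather than forced, so each hidden unit ends up somewhere between these two extremes at every time step.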

# Training and prediction
vocab_size, num_hiddens, device = len(vocab), 256, dltools.try_gpu()
num_epochs, lr = 500, 5
model = dltools.RNNModelScratch(len(vocab), num_hiddens, device, get_params, init_gru_state, gru)
dltools.train_ch8(model, train_iter, vocab, lr, num_epochs, device)

3. Concise PyTorch implementation: GRU with the built-in nn.GRU layer

num_inputs = vocab_size
# Create the network layer
gru_layer = nn.GRU(num_inputs, num_hiddens)
# Build the model
model = dltools.RNNModel(gru_layer, len(vocab))
# Move the model to the device
model = model.to(device)
# Train the model
dltools.train_ch8(model, train_iter, vocab, lr, num_epochs, device)
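As a quick check of what `nn.GRU` takes and returns, the sketch below feeds it a random batch without any `dltools` wrapper. The vocabulary size of 28 is an assumed toy value; the other sizes match the hyperparameters used above.

```python
import torch
from torch import nn

vocab_size, num_hiddens = 28, 256   # vocab_size is an assumed toy value
num_steps, batch_size = 35, 32

gru_layer = nn.GRU(vocab_size, num_hiddens)

# nn.GRU expects (num_steps, batch_size, input_size) unless batch_first=True
X = torch.rand(num_steps, batch_size, vocab_size)
# Initial state has shape (num_layers * num_directions, batch_size, hidden_size)
state = torch.zeros(1, batch_size, num_hiddens)

Y, state_new = gru_layer(X, state)
print(Y.shape)          # hidden states for every time step
print(state_new.shape)  # hidden state after the last time step
```

`Y` contains hidden states, not vocabulary logits, so a separate output layer is still needed; presumably `dltools.RNNModel` adds one, but that is an assumption about the helper. Note also that PyTorch's `nn.GRU` applies the reset gate after the hidden-to-hidden matrix product and keeps two bias vectors per gate, so its parameters do not map one-to-one onto the from-scratch version in section 2.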

4. Personal understanding of the key points
