Table of Contents

Overview

Hands-on Lab

Overview

Motivation for Small Language Models (SLMs)

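- Efficiency: SLMs are more computationally efficient. They need less memory and storage, and they run faster because they have fewer parameters to process.

- Cost: SLMs are cheaper to train and deploy. This puts them within reach of a wider range of businesses and makes them suitable for edge-computing applications.

- Customizability: SLMs are better suited to specialized applications. Compared with large models, they are easier to fine-tune for specific tasks.

- Untapped potential: Large models have shown clear advantages, but the potential of small models trained on large datasets has not been fully explored. SLMs aim to show that small models can reach high performance when trained on enough data.

- Inference efficiency: Smaller models are usually more efficient at inference time, which matters when deploying models in resource-constrained real-world applications. The gains include faster response times and lower compute and energy costs.

- Research accessibility: Many SLMs are open source, and all are small. This makes them usable by researchers who lack the resources to run larger models, and it provides a platform for experimentation and innovation in language-model research without massive compute.

- Architectural and optimization advances: SLMs use a range of architectural and speed optimizations to improve computational efficiency. These optimizations let SLMs train faster and with less memory, which makes training feasible on common GPUs.

- Open-source contributions: Many SLM authors publish their model checkpoints and code. This contributes to the open-source community and supports further progress and applications by others.

- End-user applications: Strong performance and a compact size make SLMs suitable for end-user applications, including on mobile devices, where they provide a lightweight platform for a wide range of uses.

- Training data and process: SLM training aims to be efficient and reproducible. It uses a mix of natural-language and code data, with the goal of making pretraining accessible and transparent.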

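Phi-3 (Microsoft Research)

Phi-3 is an SLM created by Microsoft Research and is the successor to Phi-2. Phi-3 performs well on several public benchmarks (for example, it reaches 69% on MMLU) and supports contexts of up to 128K tokens. The 3.8B Phi-3-mini model was first released at the end of April 2024. Phi-3-small, Phi-3-medium, and Phi-3-vision were introduced in May at the Microsoft Build conference.

- Phi-3-mini is a 3.8B-parameter language model (128K and 4K context variants).

- Phi-3-small is a 7B-parameter language model (128K and 8K context variants).

- Phi-3-medium is a 14B-parameter language model (128K and 4K context variants).

- Phi-3-vision is a 4.2B-parameter multimodal model with language and vision capabilities.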

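In this example, we will learn how to fine-tune phi-3-mini-4k-instruct with QLoRA (from the paper "QLoRA: Efficient Finetuning of Quantized LLMs") using Flash Attention. QLoRA is an efficient fine-tuning technique. It quantizes a pretrained language model to 4 bits, freezes it, and attaches small trainable "low-rank adapters", which are the only weights that get fine-tuned. This makes it possible to fine-tune models with up to 65 billion parameters on a single GPU (a 48 GB GPU in the paper). Despite this efficiency, QLoRA matches the performance of full 16-bit fine-tuning, and the paper reported state-of-the-art results on language tasks.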
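A quick back-of-the-envelope calculation shows where QLoRA's memory savings come from. The sketch below counts weight storage only. It assumes that activations, the KV cache, adapter optimizer state, and per-block quantization constants can all be ignored. It applies this estimate to Phi-3-mini's 3.8B parameters and to the 65B figure above. The helper `weight_memory_gb` is hypothetical.

```python
# Back-of-the-envelope estimate of *weight* memory only.
# Assumption: ignores activations, KV cache, LoRA optimizer state,
# and per-block quantization constants (which add a little for 4-bit).

GB = 1e9  # decimal gigabytes


def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to store num_params weights at the given precision."""
    return num_params * bits_per_param / 8 / GB


for name, params in [("Phi-3-mini", 3.8e9), ("65B model", 65e9)]:
    bf16 = weight_memory_gb(params, 16)
    nf4 = weight_memory_gb(params, 4)
    print(f"{name}: 16-bit ~ {bf16:.1f} GB, 4-bit ~ {nf4:.1f} GB")
# Phi-3-mini: 16-bit ~ 7.6 GB, 4-bit ~ 1.9 GB
# 65B model: 16-bit ~ 130.0 GB, 4-bit ~ 32.5 GB
```

For the 65B case, the 16-bit weights alone exceed any single GPU. The 4-bit weights fit in 48 GB with room left for activations and the adapters.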

Hands-on Lab

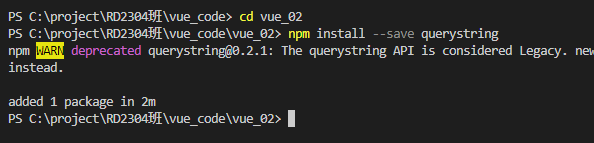

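[Step 1: Preparation]

Let's prepare the dataset. In this example, we download 2% of the `train_sft` split of the ultrachat dataset (`HuggingFaceH4/ultrachat_200k`).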

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> datasets <span style="color:#00e0e0">import</span> load_dataset

<span style="color:#00e0e0">from</span> random <span style="color:#00e0e0">import</span> randrange

<span style="color:#d4d0ab"># Load dataset from the hub</span>

dataset <span style="color:#00e0e0">=</span> load_dataset<span style="color:#fefefe">(</span><span style="color:#abe338">"HuggingFaceH4/ultrachat_200k"</span><span style="color:#fefefe">,</span> split<span style="color:#00e0e0">=</span><span style="color:#abe338">'train_sft[:2%]'</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">f"dataset size: </span><span style="color:#fefefe">{</span><span style="color:#abe338">len</span><span style="color:#fefefe">(</span>dataset<span style="color:#fefefe">)</span><span style="color:#fefefe">}</span><span style="color:#abe338">"</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span>dataset<span style="color:#fefefe">[</span>randrange<span style="color:#fefefe">(</span><span style="color:#abe338">len</span><span style="color:#fefefe">(</span>dataset<span style="color:#fefefe">)</span><span style="color:#fefefe">)</span><span style="color:#fefefe">]</span><span style="color:#fefefe">)</span></code></span></span>

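Next, we split this smaller slice of the dataset into training and test sets. For instruction tuning, each structured example must be turned into a task described through instructions. In this lab, that formatting happens later in the training script: there, `apply_chat_template` converts each sample's `messages` list into a single formatted string.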

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">import</span> os

dataset <span style="color:#00e0e0">=</span> dataset<span style="color:#fefefe">.</span>train_test_split<span style="color:#fefefe">(</span>test_size<span style="color:#00e0e0">=</span><span style="color:#00e0e0">0.2</span><span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Make sure the output folder exists before writing the JSON Lines files</span>

os<span style="color:#fefefe">.</span>makedirs<span style="color:#fefefe">(</span><span style="color:#abe338">"data"</span><span style="color:#fefefe">,</span> exist_ok<span style="color:#00e0e0">=</span><span style="color:#00e0e0">True</span><span style="color:#fefefe">)</span>

train_dataset <span style="color:#00e0e0">=</span> dataset<span style="color:#fefefe">[</span><span style="color:#abe338">'train'</span><span style="color:#fefefe">]</span>

train_dataset<span style="color:#fefefe">.</span>to_json<span style="color:#fefefe">(</span><span style="color:#abe338">"data/train.jsonl"</span><span style="color:#fefefe">)</span>

test_dataset <span style="color:#00e0e0">=</span> dataset<span style="color:#fefefe">[</span><span style="color:#abe338">'test'</span><span style="color:#fefefe">]</span>

test_dataset<span style="color:#fefefe">.</span>to_json<span style="color:#fefefe">(</span><span style="color:#abe338">"data/eval.jsonl"</span><span style="color:#fefefe">)</span></code></span></span>
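To show the on-disk format, here is a stdlib-only sketch that mimics what the cell above does. It shuffles the rows, holds out 20% for testing, and writes each split as one JSON object per line. The toy records imitate the `messages` column of ultrachat_200k, a list of role/content dicts. The helper `split_and_save` and the sample contents are hypothetical. In the real pipeline, `Dataset.train_test_split` and `to_json` do this work.

```python
import json
import math
import os
import random
import tempfile


def split_and_save(records, test_size, out_dir, seed=0):
    """Shuffle records, hold out a test fraction, and write both splits as JSON Lines."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_test = math.ceil(len(shuffled) * test_size)  # round the test count up
    test_rows, train_rows = shuffled[:n_test], shuffled[n_test:]
    paths = {}
    for name, rows in (("train", train_rows), ("eval", test_rows)):
        path = os.path.join(out_dir, f"{name}.jsonl")
        with open(path, "w", encoding="utf-8") as f:
            for row in rows:
                # One JSON object per line, a layout load_dataset("json", ...) can read back.
                f.write(json.dumps(row, ensure_ascii=False) + "\n")
        paths[name] = path
    return paths


# Hypothetical toy records shaped like the "messages" column of ultrachat_200k.
records = [
    {"messages": [
        {"role": "user", "content": f"Question {i}?"},
        {"role": "assistant", "content": f"Answer {i}."},
    ]}
    for i in range(10)
]

paths = split_and_save(records, test_size=0.2, out_dir=tempfile.mkdtemp())
with open(paths["train"], encoding="utf-8") as f:
    train_rows = [json.loads(line) for line in f]
print(len(train_rows))  # 8
```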

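The cell above holds out 20% of the rows for testing and saves both splits as JSON Lines files under `data/`. Next, let's import the Azure ML SDK, which we use to create the required components.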

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#d4d0ab"># import required libraries</span>

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>identity <span style="color:#00e0e0">import</span> DefaultAzureCredential<span style="color:#fefefe">,</span> InteractiveBrowserCredential

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> MLClient<span style="color:#fefefe">,</span> Input

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>dsl <span style="color:#00e0e0">import</span> pipeline

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> load_component

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> command

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> Data

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> Output

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>constants <span style="color:#00e0e0">import</span> AssetTypes</code></span></span>

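Now let's create the workspace client. The code first tries `MLClient.from_config`, which reads the workspace details from a `config.json` file. If that fails, it uses the subscription ID, resource group, and workspace name that you fill in manually.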

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python">credential <span style="color:#00e0e0">=</span> DefaultAzureCredential<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

workspace_ml_client <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">None</span>

<span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

    workspace_ml_client <span style="color:#00e0e0">=</span> MLClient<span style="color:#fefefe">.</span>from_config<span style="color:#fefefe">(</span>credential<span style="color:#fefefe">)</span>

<span style="color:#00e0e0">except</span> Exception <span style="color:#00e0e0">as</span> ex<span style="color:#fefefe">:</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span>ex<span style="color:#fefefe">)</span>

    subscription_id <span style="color:#00e0e0">=</span> <span style="color:#abe338">"Enter your subscription_id"</span>

    resource_group <span style="color:#00e0e0">=</span> <span style="color:#abe338">"Enter your resource_group"</span>

    workspace <span style="color:#00e0e0">=</span> <span style="color:#abe338">"Enter your workspace name"</span>

    workspace_ml_client <span style="color:#00e0e0">=</span> MLClient<span style="color:#fefefe">(</span>credential<span style="color:#fefefe">,</span> subscription_id<span style="color:#fefefe">,</span> resource_group<span style="color:#fefefe">,</span> workspace<span style="color:#fefefe">)</span></code></span></span>
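The `try` branch only succeeds if a workspace `config.json` can be found. You can usually download this file from the workspace page in the Azure portal. The sketch below writes such a file by hand. It assumes the file holds the three keys `subscription_id`, `resource_group`, and `workspace_name`. The helper `write_workspace_config` and the placeholder values are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def write_workspace_config(subscription_id, resource_group, workspace_name, directory="."):
    """Write a workspace config.json with the keys MLClient.from_config() is assumed to read."""
    config = {
        "subscription_id": subscription_id,
        "resource_group": resource_group,
        "workspace_name": workspace_name,
    }
    path = Path(directory) / "config.json"
    path.write_text(json.dumps(config, indent=2), encoding="utf-8")
    return path


# Placeholder values. A temporary folder is used here; in the notebook you would
# write to the folder you run it from (directory=".").
path = write_workspace_config(
    "<subscription-id>", "<resource-group>", "<workspace-name>",
    directory=tempfile.mkdtemp(),
)
print(path.read_text(encoding="utf-8"))
```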

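Here we create a custom training environment. It is based on the curated `acft-hf-nlp-gpu` image, plus the extra packages listed in a conda file.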

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> Environment<span style="color:#fefefe">,</span> BuildContext

env_docker_image <span style="color:#00e0e0">=</span> Environment<span style="color:#fefefe">(</span>

image<span style="color:#00e0e0">=</span><span style="color:#abe338">"mcr.microsoft.com/azureml/curated/acft-hf-nlp-gpu:latest"</span><span style="color:#fefefe">,</span>

conda_file<span style="color:#00e0e0">=</span><span style="color:#abe338">"environment/conda.yml"</span><span style="color:#fefefe">,</span>

name<span style="color:#00e0e0">=</span><span style="color:#abe338">"llm-training"</span><span style="color:#fefefe">,</span>

description<span style="color:#00e0e0">=</span><span style="color:#abe338">"Environment created for llm training."</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

workspace_ml_client<span style="color:#fefefe">.</span>environments<span style="color:#fefefe">.</span>create_or_update<span style="color:#fefefe">(</span>env_docker_image<span style="color:#fefefe">)</span></code></span></span>

Let's look at the conda file referenced above, `environment/conda.yml`:

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-yaml"><span style="color:#ffd700">name</span><span style="color:#fefefe">:</span> model<span style="color:#fefefe">-</span>env

<span style="color:#ffd700">channels</span><span style="color:#fefefe">:</span>

  <span style="color:#fefefe">-</span> conda<span style="color:#fefefe">-</span>forge

<span style="color:#ffd700">dependencies</span><span style="color:#fefefe">:</span>

  <span style="color:#fefefe">-</span> python=3.8

  <span style="color:#fefefe">-</span> pip=24.0

  <span style="color:#fefefe">-</span> <span style="color:#ffd700">pip</span><span style="color:#fefefe">:</span>

      <span style="color:#fefefe">-</span> bitsandbytes==0.43.1

      <span style="color:#fefefe">-</span> transformers~=4.41

      <span style="color:#fefefe">-</span> peft~=0.11

      <span style="color:#fefefe">-</span> accelerate~=0.30

      <span style="color:#fefefe">-</span> trl==0.8.6

      <span style="color:#fefefe">-</span> einops==0.8.0

      <span style="color:#fefefe">-</span> datasets==2.19.1

      <span style="color:#fefefe">-</span> wandb==0.17.0

      <span style="color:#fefefe">-</span> mlflow==2.13.0

      <span style="color:#fefefe">-</span> azureml<span style="color:#fefefe">-</span>mlflow==1.56.0

      <span style="color:#fefefe">-</span> torchvision==0.18.0</code></span></span>
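The pins above mix exact versions (`==`) with compatible-release specifiers (`~=`). Under PEP 440, `~=4.41` means `>=4.41, ==4.*`, so any 4.x release from 4.41 onward is accepted. The simplified checker below illustrates that rule for plain numeric versions only. `compatible_release` is a hypothetical helper that ignores pre-releases, post-releases, and local tags; in practice pip (via the `packaging` library) does this resolution for you.

```python
from typing import Tuple


def _parse(version: str) -> Tuple[int, ...]:
    """Split a purely numeric version string such as '4.41.2' into integers."""
    return tuple(int(part) for part in version.split("."))


def compatible_release(version: str, spec: str) -> bool:
    """Simplified PEP 440 '~=' check: version >= spec and shares spec's prefix minus its last part."""
    v, s = _parse(version), _parse(spec)
    if len(s) < 2:
        raise ValueError("'~=' needs at least two release components")
    prefix = s[:-1]
    # Pad with zeros so that e.g. 4.41 and 4.41.0 compare as equal.
    width = max(len(v), len(s))
    v_pad = v + (0,) * (width - len(v))
    s_pad = s + (0,) * (width - len(s))
    return v[: len(prefix)] == prefix and v_pad >= s_pad


print(compatible_release("4.44.2", "4.41"))  # True
print(compatible_release("5.0.0", "4.41"))   # False
print(compatible_release("0.12.0", "0.11"))  # True
```

The last line has a practical consequence. Because `peft~=0.11` has only two components, it also accepts later 0.x releases such as 0.12. A three-component pin such as `~=0.11.1` (meaning `>=0.11.1, ==0.11.*`) or an exact `==` pin keeps the range tighter.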

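[Step 2: Training]

Let's look at the training script. It follows the method introduced by Tim Dettmers et al. in the paper "QLoRA: Efficient Finetuning of Quantized LLMs". QLoRA reduces the memory footprint of large language models during fine-tuning without sacrificing performance. In short, QLoRA works as follows:

- Quantize the pretrained model to 4 bits and freeze it.

- Attach small, trainable adapter layers (LoRA).

- Fine-tune only the adapter layers, using the frozen quantized model for context.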
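To see why only a small fraction of the weights is trained, here is a framework-free sketch of a LoRA-adapted linear layer with toy sizes. The frozen weight W is left untouched, and the layer output becomes W·x + (α/r)·B·A·x. Only A (r×k) and B (d×r) are trained, and B starts at zero so training begins from the pretrained behaviour. `matvec`, `lora_forward`, and `lora_param_count` are hypothetical helpers, not PEFT APIs. The 3072×3072 projection size is an assumption used only for scale. Note also that `train.py` as shown loads the model in bfloat16 and never passes the imported `BitsAndBytesConfig`, so the 4-bit quantization step is not actually applied as written. The adapter math below is the same either way.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # list of rows


def matvec(m: Matrix, x: Vector) -> Vector:
    """Multiply a matrix (given as rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float, r: int) -> Vector:
    """h = W x + (alpha / r) * B (A x). W stays frozen; only A (r x k) and B (d x r) train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def lora_param_count(d: int, k: int, r: int) -> int:
    """Trainable parameters LoRA adds to one d x k weight: A has r*k, B has d*r."""
    return r * (d + k)


# Toy layer: d = k = 2, rank r = 1, alpha = 2 (so the scale alpha / r is 2).
W = [[1.0, 0.0], [0.0, 1.0]]   # frozen "pretrained" weight
A = [[0.5, 0.5]]               # r x k, trainable
B = [[0.0], [0.0]]             # d x r, trainable, starts at zero
x = [2.0, 4.0]
print(lora_forward(W, A, B, x, alpha=2, r=1))  # [2.0, 4.0]: with B = 0 the output equals W x

# Scale of the savings, assuming one 3072 x 3072 projection and r = 16 as in peft_config.
d = k = 3072
full, lora = d * k, lora_param_count(d, k, r=16)
print(f"{lora:,} trainable vs {full:,} frozen ({lora / full:.2%})")
# 98,304 trainable vs 9,437,184 frozen (1.04%)
```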

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">%</span><span style="color:#00e0e0">%</span>writefile src<span style="color:#00e0e0">/</span>train<span style="color:#fefefe">.</span>py

<span style="color:#00e0e0">import</span> os

<span style="color:#d4d0ab">#import mlflow</span>

<span style="color:#00e0e0">import</span> argparse

<span style="color:#00e0e0">import</span> sys

<span style="color:#00e0e0">import</span> logging

<span style="color:#00e0e0">import</span> datasets

<span style="color:#00e0e0">from</span> datasets <span style="color:#00e0e0">import</span> load_dataset

<span style="color:#00e0e0">from</span> peft <span style="color:#00e0e0">import</span> LoraConfig

<span style="color:#00e0e0">import</span> torch

<span style="color:#00e0e0">import</span> transformers

<span style="color:#00e0e0">from</span> trl <span style="color:#00e0e0">import</span> SFTTrainer

<span style="color:#00e0e0">from</span> transformers <span style="color:#00e0e0">import</span> AutoModelForCausalLM<span style="color:#fefefe">,</span> AutoTokenizer<span style="color:#fefefe">,</span> TrainingArguments<span style="color:#fefefe">,</span> BitsAndBytesConfig

logger <span style="color:#00e0e0">=</span> logging<span style="color:#fefefe">.</span>getLogger<span style="color:#fefefe">(</span>__name__<span style="color:#fefefe">)</span>

<span style="color:#d4d0ab">###################</span>

<span style="color:#d4d0ab"># Hyper-parameters</span>

<span style="color:#d4d0ab">###################</span>

training_config <span style="color:#00e0e0">=</span> <span style="color:#fefefe">{</span>

<span style="color:#abe338">"bf16"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">True</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"do_eval"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">False</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"learning_rate"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">5.0e-06</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"log_level"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"info"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"logging_steps"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">20</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"logging_strategy"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"steps"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"lr_scheduler_type"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"cosine"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"num_train_epochs"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"max_steps"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">-</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"output_dir"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"./checkpoint_dir"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"overwrite_output_dir"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">True</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"per_device_eval_batch_size"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">4</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"per_device_train_batch_size"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">4</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"remove_unused_columns"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">True</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"save_steps"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">100</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"save_total_limit"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"seed"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">0</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"gradient_checkpointing"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">True</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"gradient_checkpointing_kwargs"</span><span style="color:#fefefe">:</span><span style="color:#fefefe">{</span><span style="color:#abe338">"use_reentrant"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">False</span><span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"gradient_accumulation_steps"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"warmup_ratio"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">0.2</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">}</span>

peft_config <span style="color:#00e0e0">=</span> <span style="color:#fefefe">{</span>

<span style="color:#abe338">"r"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">16</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"lora_alpha"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">32</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"lora_dropout"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">0.05</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"bias"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"none"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"task_type"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"CAUSAL_LM"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"target_modules"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"all-linear"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"modules_to_save"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">None</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">}</span>

train_conf <span style="color:#00e0e0">=</span> TrainingArguments<span style="color:#fefefe">(</span><span style="color:#00e0e0">**</span>training_config<span style="color:#fefefe">)</span>

peft_conf <span style="color:#00e0e0">=</span> LoraConfig<span style="color:#fefefe">(</span><span style="color:#00e0e0">**</span>peft_config<span style="color:#fefefe">)</span>

<span style="color:#d4d0ab">###############</span>

<span style="color:#d4d0ab"># Setup logging</span>

<span style="color:#d4d0ab">###############</span>

logging<span style="color:#fefefe">.</span>basicConfig<span style="color:#fefefe">(</span>

<span style="color:#abe338">format</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"%(asctime)s - %(levelname)s - %(name)s - %(message)s"</span><span style="color:#fefefe">,</span>

datefmt<span style="color:#00e0e0">=</span><span style="color:#abe338">"%Y-%m-%d %H:%M:%S"</span><span style="color:#fefefe">,</span>

handlers<span style="color:#00e0e0">=</span><span style="color:#fefefe">[</span>logging<span style="color:#fefefe">.</span>StreamHandler<span style="color:#fefefe">(</span>sys<span style="color:#fefefe">.</span>stdout<span style="color:#fefefe">)</span><span style="color:#fefefe">]</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

log_level <span style="color:#00e0e0">=</span> train_conf<span style="color:#fefefe">.</span>get_process_log_level<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

logger<span style="color:#fefefe">.</span>setLevel<span style="color:#fefefe">(</span>log_level<span style="color:#fefefe">)</span>

datasets<span style="color:#fefefe">.</span>utils<span style="color:#fefefe">.</span>logging<span style="color:#fefefe">.</span>set_verbosity<span style="color:#fefefe">(</span>log_level<span style="color:#fefefe">)</span>

transformers<span style="color:#fefefe">.</span>utils<span style="color:#fefefe">.</span>logging<span style="color:#fefefe">.</span>set_verbosity<span style="color:#fefefe">(</span>log_level<span style="color:#fefefe">)</span>

transformers<span style="color:#fefefe">.</span>utils<span style="color:#fefefe">.</span>logging<span style="color:#fefefe">.</span>enable_default_handler<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

transformers<span style="color:#fefefe">.</span>utils<span style="color:#fefefe">.</span>logging<span style="color:#fefefe">.</span>enable_explicit_format<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Log on each process a small summary</span>

logger<span style="color:#fefefe">.</span>warning<span style="color:#fefefe">(</span>

<span style="color:#abe338">f"Process rank: </span><span style="color:#fefefe">{</span>train_conf<span style="color:#fefefe">.</span>local_rank<span style="color:#fefefe">}</span><span style="color:#abe338">, device: </span><span style="color:#fefefe">{</span>train_conf<span style="color:#fefefe">.</span>device<span style="color:#fefefe">}</span><span style="color:#abe338">, n_gpu: </span><span style="color:#fefefe">{</span>train_conf<span style="color:#fefefe">.</span>n_gpu<span style="color:#fefefe">}</span><span style="color:#abe338">"</span>

<span style="color:#00e0e0">+</span> <span style="color:#abe338">f" distributed training: </span><span style="color:#fefefe">{</span><span style="color:#abe338">bool</span><span style="color:#fefefe">(</span>train_conf<span style="color:#fefefe">.</span>local_rank <span style="color:#00e0e0">!=</span> <span style="color:#00e0e0">-</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">)</span><span style="color:#fefefe">}</span><span style="color:#abe338">, 16-bits training: </span><span style="color:#fefefe">{</span>train_conf<span style="color:#fefefe">.</span>fp16<span style="color:#fefefe">}</span><span style="color:#abe338">"</span>

<span style="color:#fefefe">)</span>

logger<span style="color:#fefefe">.</span>info<span style="color:#fefefe">(</span><span style="color:#abe338">f"Training/evaluation parameters </span><span style="color:#fefefe">{</span>train_conf<span style="color:#fefefe">}</span><span style="color:#abe338">"</span><span style="color:#fefefe">)</span>

logger<span style="color:#fefefe">.</span>info<span style="color:#fefefe">(</span><span style="color:#abe338">f"PEFT parameters </span><span style="color:#fefefe">{</span>peft_conf<span style="color:#fefefe">}</span><span style="color:#abe338">"</span><span style="color:#fefefe">)</span>

<span style="color:#d4d0ab">################</span>

<span style="color:#d4d0ab"># Model Loading</span>

<span style="color:#d4d0ab">################</span>

checkpoint_path <span style="color:#00e0e0">=</span> <span style="color:#abe338">"microsoft/Phi-3-mini-4k-instruct"</span>

<span style="color:#d4d0ab"># checkpoint_path = "microsoft/Phi-3-mini-128k-instruct"</span>

model_kwargs <span style="color:#00e0e0">=</span> <span style="color:#abe338">dict</span><span style="color:#fefefe">(</span>

use_cache<span style="color:#00e0e0">=</span><span style="color:#00e0e0">False</span><span style="color:#fefefe">,</span>

trust_remote_code<span style="color:#00e0e0">=</span><span style="color:#00e0e0">True</span><span style="color:#fefefe">,</span>

attn_implementation<span style="color:#00e0e0">=</span><span style="color:#abe338">"flash_attention_2"</span><span style="color:#fefefe">,</span> <span style="color:#d4d0ab"># load the model with FlashAttention-2 support</span>

torch_dtype<span style="color:#00e0e0">=</span>torch<span style="color:#fefefe">.</span>bfloat16<span style="color:#fefefe">,</span>

device_map<span style="color:#00e0e0">=</span><span style="color:#00e0e0">None</span>

<span style="color:#fefefe">)</span>

model <span style="color:#00e0e0">=</span> AutoModelForCausalLM<span style="color:#fefefe">.</span>from_pretrained<span style="color:#fefefe">(</span>checkpoint_path<span style="color:#fefefe">,</span> <span style="color:#00e0e0">**</span>model_kwargs<span style="color:#fefefe">)</span>

tokenizer <span style="color:#00e0e0">=</span> AutoTokenizer<span style="color:#fefefe">.</span>from_pretrained<span style="color:#fefefe">(</span>checkpoint_path<span style="color:#fefefe">)</span>

tokenizer<span style="color:#fefefe">.</span>model_max_length <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">2048</span>

tokenizer<span style="color:#fefefe">.</span>pad_token <span style="color:#00e0e0">=</span> tokenizer<span style="color:#fefefe">.</span>unk_token <span style="color:#d4d0ab"># use unk rather than eos token to prevent endless generation</span>

tokenizer<span style="color:#fefefe">.</span>pad_token_id <span style="color:#00e0e0">=</span> tokenizer<span style="color:#fefefe">.</span>convert_tokens_to_ids<span style="color:#fefefe">(</span>tokenizer<span style="color:#fefefe">.</span>pad_token<span style="color:#fefefe">)</span>

tokenizer<span style="color:#fefefe">.</span>padding_side <span style="color:#00e0e0">=</span> <span style="color:#abe338">'right'</span>

<span style="color:#d4d0ab">##################</span>

<span style="color:#d4d0ab"># Data Processing</span>

<span style="color:#d4d0ab">##################</span>

<span style="color:#00e0e0">def</span> <span style="color:#ffd700">apply_chat_template</span><span style="color:#fefefe">(</span>

    example<span style="color:#fefefe">,</span>

    tokenizer<span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">:</span>

    messages <span style="color:#00e0e0">=</span> example<span style="color:#fefefe">[</span><span style="color:#abe338">"messages"</span><span style="color:#fefefe">]</span>

    <span style="color:#d4d0ab"># Add an empty system message if there is none</span>

    <span style="color:#00e0e0">if</span> messages<span style="color:#fefefe">[</span><span style="color:#00e0e0">0</span><span style="color:#fefefe">]</span><span style="color:#fefefe">[</span><span style="color:#abe338">"role"</span><span style="color:#fefefe">]</span> <span style="color:#00e0e0">!=</span> <span style="color:#abe338">"system"</span><span style="color:#fefefe">:</span>

        messages<span style="color:#fefefe">.</span>insert<span style="color:#fefefe">(</span><span style="color:#00e0e0">0</span><span style="color:#fefefe">,</span> <span style="color:#fefefe">{</span><span style="color:#abe338">"role"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"system"</span><span style="color:#fefefe">,</span> <span style="color:#abe338">"content"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">""</span><span style="color:#fefefe">}</span><span style="color:#fefefe">)</span>

    example<span style="color:#fefefe">[</span><span style="color:#abe338">"text"</span><span style="color:#fefefe">]</span> <span style="color:#00e0e0">=</span> tokenizer<span style="color:#fefefe">.</span>apply_chat_template<span style="color:#fefefe">(</span>

        messages<span style="color:#fefefe">,</span> tokenize<span style="color:#00e0e0">=</span><span style="color:#00e0e0">False</span><span style="color:#fefefe">,</span> add_generation_prompt<span style="color:#00e0e0">=</span><span style="color:#00e0e0">False</span><span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">return</span> example

<span style="color:#00e0e0">def</span> <span style="color:#ffd700">main</span><span style="color:#fefefe">(</span>args<span style="color:#fefefe">)</span><span style="color:#fefefe">:</span>

    train_dataset <span style="color:#00e0e0">=</span> load_dataset<span style="color:#fefefe">(</span><span style="color:#abe338">'json'</span><span style="color:#fefefe">,</span> data_files<span style="color:#00e0e0">=</span>args<span style="color:#fefefe">.</span>train_file<span style="color:#fefefe">,</span> split<span style="color:#00e0e0">=</span><span style="color:#abe338">'train'</span><span style="color:#fefefe">)</span>

    test_dataset <span style="color:#00e0e0">=</span> load_dataset<span style="color:#fefefe">(</span><span style="color:#abe338">'json'</span><span style="color:#fefefe">,</span> data_files<span style="color:#00e0e0">=</span>args<span style="color:#fefefe">.</span>eval_file<span style="color:#fefefe">,</span> split<span style="color:#00e0e0">=</span><span style="color:#abe338">'train'</span><span style="color:#fefefe">)</span>

    column_names <span style="color:#00e0e0">=</span> <span style="color:#abe338">list</span><span style="color:#fefefe">(</span>train_dataset<span style="color:#fefefe">.</span>features<span style="color:#fefefe">)</span>

    processed_train_dataset <span style="color:#00e0e0">=</span> train_dataset<span style="color:#fefefe">.</span><span style="color:#abe338">map</span><span style="color:#fefefe">(</span>

        apply_chat_template<span style="color:#fefefe">,</span>

        fn_kwargs<span style="color:#00e0e0">=</span><span style="color:#fefefe">{</span><span style="color:#abe338">"tokenizer"</span><span style="color:#fefefe">:</span> tokenizer<span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

        num_proc<span style="color:#00e0e0">=</span><span style="color:#00e0e0">10</span><span style="color:#fefefe">,</span>

        remove_columns<span style="color:#00e0e0">=</span>column_names<span style="color:#fefefe">,</span>

        desc<span style="color:#00e0e0">=</span><span style="color:#abe338">"Applying chat template to train_sft"</span><span style="color:#fefefe">,</span>

    <span style="color:#fefefe">)</span>

    processed_test_dataset <span style="color:#00e0e0">=</span> test_dataset<span style="color:#fefefe">.</span><span style="color:#abe338">map</span><span style="color:#fefefe">(</span>

        apply_chat_template<span style="color:#fefefe">,</span>

        fn_kwargs<span style="color:#00e0e0">=</span><span style="color:#fefefe">{</span><span style="color:#abe338">"tokenizer"</span><span style="color:#fefefe">:</span> tokenizer<span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

        num_proc<span style="color:#00e0e0">=</span><span style="color:#00e0e0">10</span><span style="color:#fefefe">,</span>

        remove_columns<span style="color:#00e0e0">=</span>column_names<span style="color:#fefefe">,</span>

        desc<span style="color:#00e0e0">=</span><span style="color:#abe338">"Applying chat template to test_sft"</span><span style="color:#fefefe">,</span>

    <span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab">###########</span>

    <span style="color:#d4d0ab"># Training</span>

    <span style="color:#d4d0ab">###########</span>

    trainer <span style="color:#00e0e0">=</span> SFTTrainer<span style="color:#fefefe">(</span>

        model<span style="color:#00e0e0">=</span>model<span style="color:#fefefe">,</span>

        args<span style="color:#00e0e0">=</span>train_conf<span style="color:#fefefe">,</span>

        peft_config<span style="color:#00e0e0">=</span>peft_conf<span style="color:#fefefe">,</span>

        train_dataset<span style="color:#00e0e0">=</span>processed_train_dataset<span style="color:#fefefe">,</span>

        eval_dataset<span style="color:#00e0e0">=</span>processed_test_dataset<span style="color:#fefefe">,</span>

        max_seq_length<span style="color:#00e0e0">=</span><span style="color:#00e0e0">2048</span><span style="color:#fefefe">,</span>

        dataset_text_field<span style="color:#00e0e0">=</span><span style="color:#abe338">"text"</span><span style="color:#fefefe">,</span>

        tokenizer<span style="color:#00e0e0">=</span>tokenizer<span style="color:#fefefe">,</span>

        packing<span style="color:#00e0e0">=</span><span style="color:#00e0e0">True</span>

    <span style="color:#fefefe">)</span>

    train_result <span style="color:#00e0e0">=</span> trainer<span style="color:#fefefe">.</span>train<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    metrics <span style="color:#00e0e0">=</span> train_result<span style="color:#fefefe">.</span>metrics

    trainer<span style="color:#fefefe">.</span>log_metrics<span style="color:#fefefe">(</span><span style="color:#abe338">"train"</span><span style="color:#fefefe">,</span> metrics<span style="color:#fefefe">)</span>

    trainer<span style="color:#fefefe">.</span>save_metrics<span style="color:#fefefe">(</span><span style="color:#abe338">"train"</span><span style="color:#fefefe">,</span> metrics<span style="color:#fefefe">)</span>

    trainer<span style="color:#fefefe">.</span>save_state<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab">#############</span>

    <span style="color:#d4d0ab"># Evaluation</span>

    <span style="color:#d4d0ab">#############</span>

    tokenizer<span style="color:#fefefe">.</span>padding_side <span style="color:#00e0e0">=</span> <span style="color:#abe338">'left'</span>

    metrics <span style="color:#00e0e0">=</span> trainer<span style="color:#fefefe">.</span>evaluate<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    metrics<span style="color:#fefefe">[</span><span style="color:#abe338">"eval_samples"</span><span style="color:#fefefe">]</span> <span style="color:#00e0e0">=</span> <span style="color:#abe338">len</span><span style="color:#fefefe">(</span>processed_test_dataset<span style="color:#fefefe">)</span>

    trainer<span style="color:#fefefe">.</span>log_metrics<span style="color:#fefefe">(</span><span style="color:#abe338">"eval"</span><span style="color:#fefefe">,</span> metrics<span style="color:#fefefe">)</span>

    trainer<span style="color:#fefefe">.</span>save_metrics<span style="color:#fefefe">(</span><span style="color:#abe338">"eval"</span><span style="color:#fefefe">,</span> metrics<span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab"># ############</span>

    <span style="color:#d4d0ab"># # Save model</span>

    <span style="color:#d4d0ab"># ############</span>

    os<span style="color:#fefefe">.</span>makedirs<span style="color:#fefefe">(</span>args<span style="color:#fefefe">.</span>model_dir<span style="color:#fefefe">,</span> exist_ok<span style="color:#00e0e0">=</span><span style="color:#00e0e0">True</span><span style="color:#fefefe">)</span>

    torch<span style="color:#fefefe">.</span>save<span style="color:#fefefe">(</span>model<span style="color:#fefefe">,</span> os<span style="color:#fefefe">.</span>path<span style="color:#fefefe">.</span>join<span style="color:#fefefe">(</span>args<span style="color:#fefefe">.</span>model_dir<span style="color:#fefefe">,</span> <span style="color:#abe338">"model.pt"</span><span style="color:#fefefe">)</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">def</span> <span style="color:#ffd700">parse_args</span><span style="color:#fefefe">(</span><span style="color:#fefefe">)</span><span style="color:#fefefe">:</span>

    <span style="color:#d4d0ab"># setup argparse</span>

    parser <span style="color:#00e0e0">=</span> argparse<span style="color:#fefefe">.</span>ArgumentParser<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab"># add arguments</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--train-file"</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">str</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"Input data for training"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--eval-file"</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">str</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"Input data for eval"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--model-dir"</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">str</span><span style="color:#fefefe">,</span> default<span style="color:#00e0e0">=</span><span style="color:#abe338">"./"</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"output directory for model"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--epochs"</span><span style="color:#fefefe">,</span> default<span style="color:#00e0e0">=</span><span style="color:#00e0e0">10</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">int</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"number of epochs"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span>

        <span style="color:#abe338">"--batch-size"</span><span style="color:#fefefe">,</span>

        default<span style="color:#00e0e0">=</span><span style="color:#00e0e0">16</span><span style="color:#fefefe">,</span>

        <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">int</span><span style="color:#fefefe">,</span>

        <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"mini batch size for each gpu/process"</span><span style="color:#fefefe">,</span>

    <span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--learning-rate"</span><span style="color:#fefefe">,</span> default<span style="color:#00e0e0">=</span><span style="color:#00e0e0">0.001</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">float</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"learning rate"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span><span style="color:#abe338">"--momentum"</span><span style="color:#fefefe">,</span> default<span style="color:#00e0e0">=</span><span style="color:#00e0e0">0.9</span><span style="color:#fefefe">,</span> <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">float</span><span style="color:#fefefe">,</span> <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"momentum"</span><span style="color:#fefefe">)</span>

    parser<span style="color:#fefefe">.</span>add_argument<span style="color:#fefefe">(</span>

        <span style="color:#abe338">"--print-freq"</span><span style="color:#fefefe">,</span>

        default<span style="color:#00e0e0">=</span><span style="color:#00e0e0">200</span><span style="color:#fefefe">,</span>

        <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">int</span><span style="color:#fefefe">,</span>

        <span style="color:#abe338">help</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"frequency of printing training statistics"</span><span style="color:#fefefe">,</span>

    <span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab"># parse args</span>

    args <span style="color:#00e0e0">=</span> parser<span style="color:#fefefe">.</span>parse_args<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab"># return args</span>

    <span style="color:#00e0e0">return</span> args

<span style="color:#d4d0ab"># run script</span>

<span style="color:#00e0e0">if</span> __name__ <span style="color:#00e0e0">==</span> <span style="color:#abe338">"__main__"</span><span style="color:#fefefe">:</span>

    <span style="color:#d4d0ab"># parse args</span>

    args <span style="color:#00e0e0">=</span> parse_args<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#d4d0ab"># call main function</span>

    main<span style="color:#fefefe">(</span>args<span style="color:#fefefe">)</span></code></span></span>
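The `SFTTrainer` call above sets `packing=True` together with `max_seq_length=2048`. Roughly speaking, packing concatenates tokenized examples, separated by an end-of-sequence token, and slices the resulting stream into fixed-length blocks so that little compute is spent on padding. The sketch below illustrates one simple packing strategy in plain Python; it is not TRL's actual implementation, and the token IDs, `eos_id`, and the helper name `pack_sequences` are made up for illustration.

```python
from typing import List


def pack_sequences(examples: List[List[int]], eos_id: int, block_size: int) -> List[List[int]]:
    """Concatenate tokenized examples, separated by eos_id, and cut the
    stream into blocks of exactly block_size tokens.

    In this simplified sketch a trailing partial block is dropped.
    """
    stream: List[int] = []
    for ids in examples:
        stream.extend(ids)
        stream.append(eos_id)  # mark the boundary between examples

    n_full = len(stream) // block_size
    return [stream[i * block_size:(i + 1) * block_size] for i in range(n_full)]


# Three toy "tokenized" examples with hypothetical IDs; eos_id=0, block_size=4
toy = [[11, 12, 13], [21, 22], [31, 32, 33, 34]]
blocks = pack_sequences(toy, eos_id=0, block_size=4)
print(blocks)  # → [[11, 12, 13, 0], [21, 22, 0, 31], [32, 33, 34, 0]]
```

Note that a block can straddle two examples (the second block above), which is why the separator token between examples matters.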

Next, let's create a compute cluster for training.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> AmlCompute

<span style="color:#d4d0ab"># If you have a specific compute size to work with change it here. By default we use the 1 x A100 compute from the above list</span>

compute_cluster_size <span style="color:#00e0e0">=</span> <span style="color:#abe338">"Standard_NC24ads_A100_v4"</span> <span style="color:#d4d0ab"># 1 x A100 (80GB)</span>

<span style="color:#d4d0ab"># If you already have a GPU cluster, put its name here. Otherwise a new cluster with this name is created.</span>

compute_cluster <span style="color:#00e0e0">=</span> <span style="color:#abe338">"gpu-a100"</span>

<span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

    compute <span style="color:#00e0e0">=</span> ml_client<span style="color:#fefefe">.</span>compute<span style="color:#fefefe">.</span>get<span style="color:#fefefe">(</span>compute_cluster<span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"The compute cluster already exists! Reusing it for the current run"</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">except</span> Exception <span style="color:#00e0e0">as</span> ex<span style="color:#fefefe">:</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span>

        <span style="color:#abe338">f"Looks like the compute cluster doesn't exist. Creating a new one with compute size </span><span style="color:#fefefe">{</span>compute_cluster_size<span style="color:#fefefe">}</span><span style="color:#abe338">!"</span>

    <span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

        <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"Attempt #1 - Trying to create a dedicated compute"</span><span style="color:#fefefe">)</span>

        compute <span style="color:#00e0e0">=</span> AmlCompute<span style="color:#fefefe">(</span>

            name<span style="color:#00e0e0">=</span>compute_cluster<span style="color:#fefefe">,</span>

            size<span style="color:#00e0e0">=</span>compute_cluster_size<span style="color:#fefefe">,</span>

            tier<span style="color:#00e0e0">=</span><span style="color:#abe338">"Dedicated"</span><span style="color:#fefefe">,</span>

            max_instances<span style="color:#00e0e0">=</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span> <span style="color:#d4d0ab"># For multi node training set this to an integer value more than 1</span>

        <span style="color:#fefefe">)</span>

        ml_client<span style="color:#fefefe">.</span>compute<span style="color:#fefefe">.</span>begin_create_or_update<span style="color:#fefefe">(</span>compute<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>wait<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">except</span> Exception <span style="color:#00e0e0">as</span> e<span style="color:#fefefe">:</span>

        <span style="color:#d4d0ab"># Report the actual failure instead of a bare "Error"</span>

        <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">f"Error creating the compute cluster: </span><span style="color:#fefefe">{</span>e<span style="color:#fefefe">}</span><span style="color:#abe338">"</span><span style="color:#fefefe">)</span></code></span></span>

Some helpful tips:

- The LoRA rank does not need to be high (such as r=256). In our experience, 8 or 16 is sufficient as a baseline.

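- If the training dataset is small, it is better to set alpha equal to the rank (alpha = r). The common choices of alpha = 2*r or 4*r tend to make training unstable on small datasets.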

- Use a small learning rate with LoRA. Learning rates such as 1e-3 or 2e-4 are not recommended; we start from 8e-5 or 5e-5.

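- Before increasing the batch size, make sure you have enough GPU memory: with long contexts (e.g., 8K tokens) you can run out of memory (OOM). Gradient checkpointing and gradient accumulation let you increase the effective batch size.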

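- If batch size and memory are tight, you don't have to stick with Adam, including low-bit Adam. Adam needs extra GPU memory to store its first- and second-moment estimates. SGD (stochastic gradient descent) converges more slowly, but plain SGD without momentum needs no extra optimizer memory.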
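The tips above can be made concrete with some back-of-the-envelope arithmetic. The sketch below assumes the standard LoRA formulation, in which adapting a `d_out × d_in` weight adds `r × (d_in + d_out)` trainable parameters and the low-rank update is scaled by `alpha / r`; it takes the effective batch size as per-device batch × gradient-accumulation steps × number of GPUs, and assumes standard Adam keeps two fp32 state values (8 bytes) per trainable parameter. The helper names and the 4096 × 4096 layer size are hypothetical and are not Phi-3's actual dimensions.

```python
def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight: B (d_out x r) + A (r x d_in)."""
    return r * (d_in + d_out)


def lora_scale(alpha: float, r: int) -> float:
    """Scaling applied to the low-rank update B @ A; alpha == r gives 1.0."""
    return alpha / r


def effective_batch_size(per_device: int, grad_accum_steps: int, num_gpus: int = 1) -> int:
    """Number of samples that contribute to each optimizer step."""
    return per_device * grad_accum_steps * num_gpus


def optimizer_state_bytes(trainable_params: int, optimizer: str) -> int:
    """Rough optimizer-state memory only (weights, gradients, activations ignored).

    "adam": two fp32 moments per parameter (8 bytes);
    "sgd": plain SGD without momentum keeps no state.
    """
    bytes_per_param = {"adam": 8, "sgd": 0}
    return trainable_params * bytes_per_param[optimizer]


# Hypothetical 4096 x 4096 projection adapted with LoRA
p16 = lora_params(4096, 4096, 16)    # 131072
p256 = lora_params(4096, 4096, 256)  # 2097152, i.e. 16x more
print(p16, p256)
print(lora_scale(16, 16), lora_scale(32, 16))                 # 1.0 2.0
print(effective_batch_size(per_device=2, grad_accum_steps=8))  # 16
print(optimizer_state_bytes(p16, "adam"), optimizer_state_bytes(p16, "sgd"))  # 1048576 0
```

With alpha equal to r the update is applied at scale 1.0, while alpha = 2r doubles its magnitude; one plausible intuition for the instability on small datasets is that this acts like a larger step size on the adapter.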

Now let's submit a command job that runs the training script above on the AML compute cluster we just created.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> command

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml <span style="color:#00e0e0">import</span> Input

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> ResourceConfiguration

job <span style="color:#00e0e0">=</span> command<span style="color:#fefefe">(</span>

inputs<span style="color:#00e0e0">=</span><span style="color:#abe338">dict</span><span style="color:#fefefe">(</span>

train_file<span style="color:#00e0e0">=</span>Input<span style="color:#fefefe">(</span>

<span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"uri_file"</span><span style="color:#fefefe">,</span>

path<span style="color:#00e0e0">=</span><span style="color:#abe338">"data/train.jsonl"</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

eval_file<span style="color:#00e0e0">=</span>Input<span style="color:#fefefe">(</span>

<span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"uri_file"</span><span style="color:#fefefe">,</span>

path<span style="color:#00e0e0">=</span><span style="color:#abe338">"data/eval.jsonl"</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

epoch<span style="color:#00e0e0">=</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

batchsize<span style="color:#00e0e0">=</span><span style="color:#00e0e0">64</span><span style="color:#fefefe">,</span>

lr <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">0.01</span><span style="color:#fefefe">,</span>

momentum <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">0.9</span><span style="color:#fefefe">,</span>

prtfreq <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">200</span><span style="color:#fefefe">,</span>

output <span style="color:#00e0e0">=</span> <span style="color:#abe338">"./outputs"</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

code<span style="color:#00e0e0">=</span><span style="color:#abe338">"./src"</span><span style="color:#fefefe">,</span> <span style="color:#d4d0ab"># local path where the code is stored</span>

compute <span style="color:#00e0e0">=</span> <span style="color:#abe338">'gpu-a100'</span><span style="color:#fefefe">,</span>

command<span style="color:#00e0e0">=</span><span style="color:#abe338">"accelerate launch train.py --train-file ${{inputs.train_file}} --eval-file ${{inputs.eval_file}} --epochs ${{inputs.epoch}} --batch-size ${{inputs.batchsize}} --learning-rate ${{inputs.lr}} --momentum ${{inputs.momentum}} --print-freq ${{inputs.prtfreq}} --model-dir ${{inputs.output}}"</span><span style="color:#fefefe">,</span>

environment<span style="color:#00e0e0">=</span><span style="color:#abe338">"azureml://registries/azureml/environments/acft-hf-nlp-gpu/versions/52"</span><span style="color:#fefefe">,</span>

distribution<span style="color:#00e0e0">=</span><span style="color:#fefefe">{</span>

<span style="color:#abe338">"type"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"PyTorch"</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"process_count_per_instance"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

returned_job <span style="color:#00e0e0">=</span> workspace_ml_client<span style="color:#fefefe">.</span>jobs<span style="color:#fefefe">.</span>create_or_update<span style="color:#fefefe">(</span>job<span style="color:#fefefe">)</span>

workspace_ml_client<span style="color:#fefefe">.</span>jobs<span style="color:#fefefe">.</span>stream<span style="color:#fefefe">(</span>returned_job<span style="color:#fefefe">.</span>name<span style="color:#fefefe">)</span></code></span></span>
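To see what the job actually runs, it helps to expand the `${{inputs.…}}` placeholders by hand. The sketch below only illustrates the substitution; it is not how Azure ML resolves inputs internally. In a real job, `uri_file` inputs such as `train_file` are replaced by a path on the compute node, so hypothetical paths are used here. It then parses the resulting arguments with a parser that mirrors `parse_args()` in `train.py`, showing how an option such as `--train-file` becomes `args.train_file`. The helper names `expand` and `build_parser` are illustrative.

```python
import argparse
import re
import shlex

COMMAND = (
    "accelerate launch train.py --train-file ${{inputs.train_file}} "
    "--eval-file ${{inputs.eval_file}} --epochs ${{inputs.epoch}} "
    "--batch-size ${{inputs.batchsize}} --learning-rate ${{inputs.lr}} "
    "--momentum ${{inputs.momentum}} --print-freq ${{inputs.prtfreq}} "
    "--model-dir ${{inputs.output}}"
)

# Hypothetical resolved values; on Azure ML, uri_file inputs become paths on the compute.
INPUTS = {
    "train_file": "/mnt/inputs/train.jsonl",
    "eval_file": "/mnt/inputs/eval.jsonl",
    "epoch": 1,
    "batchsize": 64,
    "lr": 0.01,
    "momentum": 0.9,
    "prtfreq": 200,
    "output": "./outputs",
}


def expand(command: str, inputs: dict) -> str:
    """Replace each ${{inputs.name}} with str(inputs[name]); a missing key raises KeyError."""
    return re.sub(r"\$\{\{inputs\.(\w+)\}\}", lambda m: str(inputs[m.group(1)]), command)


def build_parser() -> argparse.ArgumentParser:
    """Mirror of the arguments defined in train.py's parse_args()."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--train-file", type=str)
    parser.add_argument("--eval-file", type=str)
    parser.add_argument("--model-dir", type=str, default="./")
    parser.add_argument("--epochs", default=10, type=int)
    parser.add_argument("--batch-size", default=16, type=int)
    parser.add_argument("--learning-rate", default=0.001, type=float)
    parser.add_argument("--momentum", default=0.9, type=float)
    parser.add_argument("--print-freq", default=200, type=int)
    return parser


argv = shlex.split(expand(COMMAND, INPUTS))
# Skip "accelerate launch train.py"; the rest is what train.py receives.
script_args = build_parser().parse_args(argv[3:])
print(script_args.train_file, script_args.epochs, script_args.batch_size, script_args.model_dir)
# → /mnt/inputs/train.jsonl 1 64 ./outputs
```

In this sketch, a placeholder whose name has no matching key in `INPUTS` (for example `epochs` instead of `epoch`) surfaces immediately as a `KeyError`.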

Let's check the job outputs.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#d4d0ab"># check if the `trained_model` output is available</span>

job_name <span style="color:#00e0e0">=</span> returned_job<span style="color:#fefefe">.</span>name

<span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"pipeline job outputs: "</span><span style="color:#fefefe">,</span> workspace_ml_client<span style="color:#fefefe">.</span>jobs<span style="color:#fefefe">.</span>get<span style="color:#fefefe">(</span>job_name<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>outputs<span style="color:#fefefe">)</span></code></span></span>

[Step 3: Endpoint]

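Once the model has been fine-tuned, register the model produced by the job in the workspace so that it can be deployed to an endpoint.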

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> Model

<span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>constants <span style="color:#00e0e0">import</span> AssetTypes

run_model <span style="color:#00e0e0">=</span> Model<span style="color:#fefefe">(</span>

path<span style="color:#00e0e0">=</span><span style="color:#abe338">f"azureml://jobs/</span><span style="color:#fefefe">{</span>job_name<span style="color:#fefefe">}</span><span style="color:#abe338">/outputs/artifacts/paths/outputs/mlflow_model_folder"</span><span style="color:#fefefe">,</span>

name<span style="color:#00e0e0">=</span><span style="color:#abe338">"phi-3-finetuned"</span><span style="color:#fefefe">,</span>

description<span style="color:#00e0e0">=</span><span style="color:#abe338">"Model created from run."</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">type</span><span style="color:#00e0e0">=</span>AssetTypes<span style="color:#fefefe">.</span>MLFLOW_MODEL<span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

model <span style="color:#00e0e0">=</span> workspace_ml_client<span style="color:#fefefe">.</span>models<span style="color:#fefefe">.</span>create_or_update<span style="color:#fefefe">(</span>run_model<span style="color:#fefefe">)</span></code></span></span>

Let's create the endpoint.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> <span style="color:#fefefe">(</span>

ManagedOnlineEndpoint<span style="color:#fefefe">,</span>

IdentityConfiguration<span style="color:#fefefe">,</span>

ManagedIdentityConfiguration<span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Check if the endpoint already exists in the workspace</span>

<span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

    endpoint <span style="color:#00e0e0">=</span> workspace_ml_client<span style="color:#fefefe">.</span>online_endpoints<span style="color:#fefefe">.</span>get<span style="color:#fefefe">(</span>endpoint_name<span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"---Endpoint already exists---"</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">except</span> Exception<span style="color:#fefefe">:</span>

    <span style="color:#d4d0ab"># Create an online endpoint if it doesn't exist</span>

    <span style="color:#d4d0ab"># Define the endpoint</span>

    endpoint <span style="color:#00e0e0">=</span> ManagedOnlineEndpoint<span style="color:#fefefe">(</span>

        name<span style="color:#00e0e0">=</span>endpoint_name<span style="color:#fefefe">,</span>

        description<span style="color:#00e0e0">=</span><span style="color:#abe338">f"Test endpoint for </span><span style="color:#fefefe">{</span>model<span style="color:#fefefe">.</span>name<span style="color:#fefefe">}</span><span style="color:#abe338">"</span><span style="color:#fefefe">,</span>

        <span style="color:#d4d0ab"># Attach the user-assigned identity only when one is configured</span>

        identity<span style="color:#00e0e0">=</span>IdentityConfiguration<span style="color:#fefefe">(</span>

            <span style="color:#abe338">type</span><span style="color:#00e0e0">=</span><span style="color:#abe338">"user_assigned"</span><span style="color:#fefefe">,</span>

            user_assigned_identities<span style="color:#00e0e0">=</span><span style="color:#fefefe">[</span>ManagedIdentityConfiguration<span style="color:#fefefe">(</span>resource_id<span style="color:#00e0e0">=</span>uai_id<span style="color:#fefefe">)</span><span style="color:#fefefe">]</span><span style="color:#fefefe">,</span>

        <span style="color:#fefefe">)</span>

        <span style="color:#00e0e0">if</span> uai_id <span style="color:#00e0e0">!=</span> <span style="color:#abe338">""</span>

        <span style="color:#00e0e0">else</span> <span style="color:#00e0e0">None</span><span style="color:#fefefe">,</span>

    <span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Trigger the endpoint creation</span>

<span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

    workspace_ml_client<span style="color:#fefefe">.</span>begin_create_or_update<span style="color:#fefefe">(</span>endpoint<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>wait<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"\n---Endpoint created successfully---\n"</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">except</span> Exception <span style="color:#00e0e0">as</span> err<span style="color:#fefefe">:</span>

    <span style="color:#00e0e0">raise</span> RuntimeError<span style="color:#fefefe">(</span>

        <span style="color:#abe338">f"Endpoint creation failed. Detailed Response:\n</span><span style="color:#fefefe">{</span>err<span style="color:#fefefe">}</span><span style="color:#abe338">"</span>

    <span style="color:#fefefe">)</span> <span style="color:#00e0e0">from</span> err</code></span></span>

Once the endpoint has been created, we can move on to creating the deployment.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#d4d0ab"># Initialize deployment parameters</span>

deployment_name <span style="color:#00e0e0">=</span> <span style="color:#abe338">"phi3-deploy"</span>

sku_name <span style="color:#00e0e0">=</span> <span style="color:#abe338">"Standard_NC6s_v3"</span> <span style="color:#d4d0ab"># a GPU SKU from the NCv3 series; choose one your quota allows</span>

REQUEST_TIMEOUT_MS <span style="color:#00e0e0">=</span> <span style="color:#00e0e0">90000</span>

deployment_env_vars <span style="color:#00e0e0">=</span> <span style="color:#fefefe">{</span>

<span style="color:#abe338">"SUBSCRIPTION_ID"</span><span style="color:#fefefe">:</span> subscription_id<span style="color:#fefefe">,</span>

<span style="color:#abe338">"RESOURCE_GROUP_NAME"</span><span style="color:#fefefe">:</span> resource_group<span style="color:#fefefe">,</span>

<span style="color:#abe338">"UAI_CLIENT_ID"</span><span style="color:#fefefe">:</span> uai_client_id<span style="color:#fefefe">,</span>

<span style="color:#fefefe">}</span></code></span></span>

For inference, we use a different base image.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> Model<span style="color:#fefefe">,</span> Environment

env <span style="color:#00e0e0">=</span> Environment<span style="color:#fefefe">(</span>

image<span style="color:#00e0e0">=</span><span style="color:#abe338">'mcr.microsoft.com/azureml/curated/foundation-model-inference:latest'</span><span style="color:#fefefe">,</span>

inference_config<span style="color:#00e0e0">=</span><span style="color:#fefefe">{</span>

<span style="color:#abe338">"liveness_route"</span><span style="color:#fefefe">:</span> <span style="color:#fefefe">{</span><span style="color:#abe338">"port"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">5001</span><span style="color:#fefefe">,</span> <span style="color:#abe338">"path"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"/"</span><span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"readiness_route"</span><span style="color:#fefefe">:</span> <span style="color:#fefefe">{</span><span style="color:#abe338">"port"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">5001</span><span style="color:#fefefe">,</span> <span style="color:#abe338">"path"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"/"</span><span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#abe338">"scoring_route"</span><span style="color:#fefefe">:</span> <span style="color:#fefefe">{</span><span style="color:#abe338">"port"</span><span style="color:#fefefe">:</span> <span style="color:#00e0e0">5001</span><span style="color:#fefefe">,</span> <span style="color:#abe338">"path"</span><span style="color:#fefefe">:</span> <span style="color:#abe338">"/score"</span><span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">}</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span></code></span></span>

Let's deploy the model.

<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#00e0e0">from</span> azure<span style="color:#fefefe">.</span>ai<span style="color:#fefefe">.</span>ml<span style="color:#fefefe">.</span>entities <span style="color:#00e0e0">import</span> <span style="color:#fefefe">(</span>

OnlineRequestSettings<span style="color:#fefefe">,</span>

CodeConfiguration<span style="color:#fefefe">,</span>

ManagedOnlineDeployment<span style="color:#fefefe">,</span>

ProbeSettings<span style="color:#fefefe">,</span>

Environment

<span style="color:#fefefe">)</span>

deployment <span style="color:#00e0e0">=</span> ManagedOnlineDeployment<span style="color:#fefefe">(</span>

name<span style="color:#00e0e0">=</span>deployment_name<span style="color:#fefefe">,</span>

endpoint_name<span style="color:#00e0e0">=</span>endpoint_name<span style="color:#fefefe">,</span>

model<span style="color:#00e0e0">=</span>model<span style="color:#fefefe">.</span><span style="color:#abe338">id</span><span style="color:#fefefe">,</span>

instance_type<span style="color:#00e0e0">=</span>sku_name<span style="color:#fefefe">,</span>

instance_count<span style="color:#00e0e0">=</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

<span style="color:#d4d0ab">#code_configuration=code_configuration,</span>

environment <span style="color:#00e0e0">=</span> env<span style="color:#fefefe">,</span>

environment_variables<span style="color:#00e0e0">=</span>deployment_env_vars<span style="color:#fefefe">,</span>

request_settings<span style="color:#00e0e0">=</span>OnlineRequestSettings<span style="color:#fefefe">(</span>request_timeout_ms<span style="color:#00e0e0">=</span>REQUEST_TIMEOUT_MS<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

liveness_probe<span style="color:#00e0e0">=</span>ProbeSettings<span style="color:#fefefe">(</span>

failure_threshold<span style="color:#00e0e0">=</span><span style="color:#00e0e0">30</span><span style="color:#fefefe">,</span>

success_threshold<span style="color:#00e0e0">=</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

period<span style="color:#00e0e0">=</span><span style="color:#00e0e0">100</span><span style="color:#fefefe">,</span>

initial_delay<span style="color:#00e0e0">=</span><span style="color:#00e0e0">500</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

readiness_probe<span style="color:#00e0e0">=</span>ProbeSettings<span style="color:#fefefe">(</span>

failure_threshold<span style="color:#00e0e0">=</span><span style="color:#00e0e0">30</span><span style="color:#fefefe">,</span>

success_threshold<span style="color:#00e0e0">=</span><span style="color:#00e0e0">1</span><span style="color:#fefefe">,</span>

period<span style="color:#00e0e0">=</span><span style="color:#00e0e0">100</span><span style="color:#fefefe">,</span>

initial_delay<span style="color:#00e0e0">=</span><span style="color:#00e0e0">500</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span><span style="color:#fefefe">,</span>

<span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Trigger the deployment creation</span>

<span style="color:#00e0e0">try</span><span style="color:#fefefe">:</span>

    workspace_ml_client<span style="color:#fefefe">.</span>begin_create_or_update<span style="color:#fefefe">(</span>deployment<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>wait<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

    <span style="color:#00e0e0">print</span><span style="color:#fefefe">(</span><span style="color:#abe338">"\n---Deployment created successfully---\n"</span><span style="color:#fefefe">)</span>

<span style="color:#00e0e0">except</span> Exception <span style="color:#00e0e0">as</span> err<span style="color:#fefefe">:</span>

    <span style="color:#00e0e0">raise</span> RuntimeError<span style="color:#fefefe">(</span>

        <span style="color:#abe338">f"Deployment creation failed. Detailed Response:\n</span><span style="color:#fefefe">{</span>err<span style="color:#fefefe">}</span><span style="color:#abe338">"</span>

    <span style="color:#fefefe">)</span> <span style="color:#00e0e0">from</span> err</code></span></span>
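The probe settings above are deliberately generous because loading the model can take a while. As a rough guide, assume `initial_delay` and `period` are in seconds, probes fire at `initial_delay`, `initial_delay + period`, and so on, and the container is declared unhealthy after `failure_threshold` consecutive failures (per-probe timeouts ignored). Under those assumptions the platform waits about `initial_delay + (failure_threshold - 1) × period` seconds before giving up. The sketch below only does that arithmetic; the helper name is hypothetical and the result is an approximation, not the platform's exact scheduling.

```python
def probe_give_up_seconds(initial_delay: int, period: int, failure_threshold: int) -> int:
    """Approximate seconds from container start until a probe is declared failed.

    Assumes probes fire at initial_delay, initial_delay + period, ... and that the
    failure_threshold-th consecutive failure ends the wait; probe timeouts are ignored.
    """
    return initial_delay + (failure_threshold - 1) * period


# Values used for both probes in the deployment above
seconds = probe_give_up_seconds(initial_delay=500, period=100, failure_threshold=30)
print(seconds, round(seconds / 60, 1))  # 3400 56.7

# REQUEST_TIMEOUT_MS is in milliseconds: 90000 ms is 90 seconds per scoring request
print(90000 / 1000)  # 90.0
```

So with these settings the model has close to an hour to load before the deployment is considered unhealthy, while each individual scoring request must finish within 90 seconds.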

To delete the deployment and the endpoint, run the code below.

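<span style="background-color:#2b2b2b"><span style="color:#f8f8f2"><code class="language-python"><span style="color:#d4d0ab"># Delete the deployment first and wait for it to finish, then delete the endpoint</span>

workspace_ml_client<span style="color:#fefefe">.</span>online_deployments<span style="color:#fefefe">.</span>begin_delete<span style="color:#fefefe">(</span>name <span style="color:#00e0e0">=</span> deployment_name<span style="color:#fefefe">,</span>

endpoint_name <span style="color:#00e0e0">=</span> endpoint_name<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>wait<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span>

<span style="color:#d4d0ab"># Use the public online_endpoints operations rather than the private _online_endpoints attribute</span>

workspace_ml_client<span style="color:#fefefe">.</span>online_endpoints<span style="color:#fefefe">.</span>begin_delete<span style="color:#fefefe">(</span>name <span style="color:#00e0e0">=</span> endpoint_name<span style="color:#fefefe">)</span><span style="color:#fefefe">.</span>wait<span style="color:#fefefe">(</span><span style="color:#fefefe">)</span></code></span></span>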