Spring Boot Kafka integration: consuming messages from a specified topic, partition, and offset

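- 1. Consumer

- 2. Producer

- 3. Configuration class

- 4. Configuration file

- 5. Entity class

- 6. Utility class

- 7. Test class

- 8. First test (19 records read)

- 9. Second test (3 records read)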

1. Consumer

The consumer reads data for the topic and consumer group defined in the configuration file: topic = "${kafka.topic.name}", groupId = "${kafka.consumer.group}".
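To make the placeholder syntax concrete, here is a minimal sketch of how a `${...}` placeholder maps to a value from the configuration file. It is a simplified illustration, not Spring's actual resolver; the class `PlaceholderSketch` and the method `resolve` are hypothetical names, and the property values are taken from the configuration file in section 4.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: a simplified model of ${key} substitution.
class PlaceholderSketch {
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{([^}]+)\\}");

    // Replaces each ${key} with its value from props; unknown keys are left as-is.
    static String resolve(String text, Map<String, String> props) {
        Matcher m = PLACEHOLDER.matcher(text);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String value = props.getOrDefault(m.group(1), m.group(0));
            m.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        Map<String, String> props = new HashMap<>();
        props.put("kafka.topic.name", "helloTopic");
        props.put("kafka.consumer.group", "helloGroup");
        System.out.println(resolve("${kafka.topic.name}", props));
        System.out.println(resolve("${kafka.consumer.group}", props));
    }
}
```

With the values from section 4, the listener therefore subscribes to `helloTopic` as a member of `helloGroup`.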

package com.power.consumer;

import com.power.model.User;
import com.power.util.JSONUtils;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.kafka.annotation.PartitionOffset;
import org.springframework.kafka.annotation.TopicPartition;
import org.springframework.kafka.support.Acknowledgment;
import org.springframework.kafka.support.KafkaHeaders;
import org.springframework.messaging.handler.annotation.Header;
import org.springframework.messaging.handler.annotation.Payload;
import org.springframework.stereotype.Component;

@Component
public class EventConsumer {

    // Partitions 0, 1, and 2 are read without an explicit offset;
    // partitions 3 and 4 are read starting from offset 3.
    @KafkaListener(groupId = "${kafka.consumer.group}",
            topicPartitions = {
                    @TopicPartition(
                            topic = "${kafka.topic.name}",
                            partitions = {"0", "1", "2"},
                            partitionOffsets = {
                                    @PartitionOffset(partition = "3", initialOffset = "3"),
                                    @PartitionOffset(partition = "4", initialOffset = "3")
                            })
            })
    public void onEvent5(@Payload String userJson,
                         @Header(value = KafkaHeaders.RECEIVED_TOPIC) String topic,
                         // Note: Spring Kafka 3.x names this constant RECEIVED_PARTITION.
                         @Header(value = KafkaHeaders.RECEIVED_PARTITION_ID) String partition,
                         ConsumerRecord<String, String> record,
                         Acknowledgment ack) {
        try {
            User user = JSONUtils.toBean(userJson, User.class);
            System.out.println("Received event 5: " + user + ", topic: " + topic + ", partition: " + partition);
            // Business processing is done; acknowledge to the Kafka broker.
            // A manual acknowledgment tells the broker that the message has been received.
            // By default, acknowledgment is automatic.
            ack.acknowledge();
            // After a manual acknowledgment, the next consumer start resumes from the new offset;
            // without it, the next start resumes from the old offset.
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

2. Producer

package com.power.producer;

import com.power.model.User;
import com.power.util.JSONUtils;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.stereotype.Component;

import javax.annotation.Resource;
import java.util.Date;

@Component
public class EventProducer {

    @Resource
    private KafkaTemplate<String, Object> kafkaTemplate;

    // Sends 25 User records as JSON to helloTopic with keys k0..k24.
    // Named sendEvent5 so that it matches the call in the test class.
    public void sendEvent5() {
        for (int i = 0; i < 25; i++) {
            User user = User.builder().id(i).phone("1567676767" + i).birthday(new Date()).build();
            String userJson = JSONUtils.toJSON(user);
            kafkaTemplate.send("helloTopic", "k" + i, userJson);
        }
    }
}
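The producer sends keyed records (`k0` to `k24`), so the partition of each record is derived from its key rather than assigned round-robin, which is why the five partitions end up with uneven record counts. The following is a rough sketch of that mapping, assuming the default partitioner and string keys serialized as UTF-8. The `murmur2` method is written from memory to mirror the hash used by the Kafka client, so treat it as an approximation; the class and method names are hypothetical.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical sketch of key-based partition selection (not the Kafka client itself).
class KeyPartitionSketch {

    // 32-bit murmur2 hash, modeled on the variant the Kafka client applies to key bytes.
    static int murmur2(byte[] data) {
        int length = data.length;
        final int seed = 0x9747b28c;
        final int m = 0x5bd1e995;
        final int r = 24;
        int h = seed ^ length;
        for (int i = 0; i < length / 4; i++) {
            int i4 = i * 4;
            int k = (data[i4] & 0xff) + ((data[i4 + 1] & 0xff) << 8)
                    + ((data[i4 + 2] & 0xff) << 16) + ((data[i4 + 3] & 0xff) << 24);
            k *= m;
            k ^= k >>> r;
            k *= m;
            h *= m;
            h ^= k;
        }
        int tail = length & ~3; // index of the first leftover byte
        switch (length % 4) {
            case 3:
                h ^= (data[tail + 2] & 0xff) << 16; // intentional fall through
            case 2:
                h ^= (data[tail + 1] & 0xff) << 8;  // intentional fall through
            case 1:
                h ^= data[tail] & 0xff;
                h *= m;
        }
        h ^= h >>> 13;
        h *= m;
        h ^= h >>> 15;
        return h;
    }

    // Clears the sign bit of the hash, then takes it modulo the partition count.
    static int partitionFor(String key, int numPartitions) {
        int hash = murmur2(key.getBytes(StandardCharsets.UTF_8));
        return (hash & 0x7fffffff) % numPartitions;
    }

    // Counts how many of the keys k0..k(messages-1) land in each partition.
    static int[] countPerPartition(int messages, int numPartitions) {
        int[] counts = new int[numPartitions];
        for (int i = 0; i < messages; i++) {
            counts[partitionFor("k" + i, numPartitions)]++;
        }
        return counts;
    }

    public static void main(String[] args) {
        int[] counts = countPerPartition(25, 5);
        for (int p = 0; p < counts.length; p++) {
            System.out.println("partition " + p + ": " + counts[p] + " records");
        }
    }
}
```

The same key always maps to the same partition, so rerunning the producer adds records to the partitions in the same proportions.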

3. Configuration class

package com.power.config;

import org.apache.kafka.clients.admin.NewTopic;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

@Configuration
public class KafkaConfig {

    // Creates helloTopic with 5 partitions and a replication factor of 1.
    @Bean
    public NewTopic newTopic() {
        return new NewTopic("helloTopic", 5, (short) 1);
    }
}

4. Configuration file

spring:
  application:
    # Application name
    name: spring-boot-02-kafka-base
  # Kafka connection address (ip + port)
  kafka:
    bootstrap-servers: <your-server-IP>:9092
    # Consumer
    consumer:
      auto-offset-reset: earliest
    # Message listener configuration
    listener:
      ack-mode: manual
# Custom configuration
kafka:
  topic:
    name: helloTopic
  consumer:
    group: helloGroup

5. Entity class

package com.power.model;

import lombok.AllArgsConstructor;
import lombok.Builder;
import lombok.Data;
import lombok.NoArgsConstructor;

import java.util.Date;

@Builder
@AllArgsConstructor
@NoArgsConstructor
@Data
public class User {

    private Integer id;

    private String phone;

    private Date birthday;
}

6. Utility class

package com.power.util;

import com.fasterxml.jackson.core.JsonProcessingException;
import com.fasterxml.jackson.databind.ObjectMapper;

public class JSONUtils {

    private static final ObjectMapper OBJECTMAPPER = new ObjectMapper();

    // Serializes an object to a JSON string.
    public static String toJSON(Object object) {
        try {
            return OBJECTMAPPER.writeValueAsString(object);
        } catch (JsonProcessingException e) {
            throw new RuntimeException(e);
        }
    }

    // Deserializes a JSON string into an instance of the given class.
    public static <T> T toBean(String json, Class<T> clazz) {
        try {
            return OBJECTMAPPER.readValue(json, clazz);
        } catch (JsonProcessingException e) {
            throw new RuntimeException(e);
        }
    }
}

7. Test class

package com.power;

import com.power.producer.EventProducer;
import org.junit.jupiter.api.Test;
import org.springframework.boot.test.context.SpringBootTest;

import javax.annotation.Resource;

@SpringBootTest
public class SpringBoot02KafkaBaseApplication {

    @Resource
    private EventProducer eventProducer;

    @Test
    void sendEvent5() {
        eventProducer.sendEvent5();
    }
}

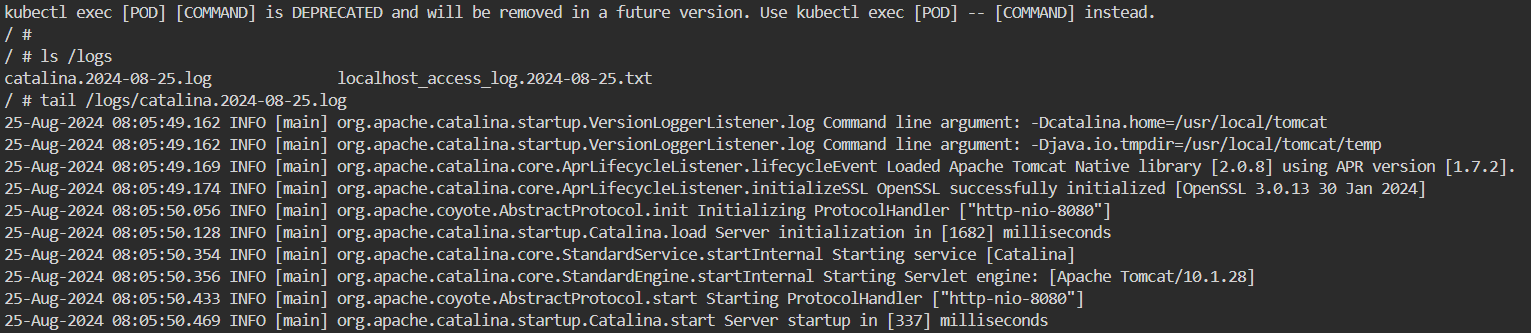

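8. First test (19 records read)

The listener reads all records in partitions 0, 1, and 2, and reads partitions 3 and 4 starting from offset 3, as declared in the @KafkaListener annotation.

6 + 4 + 6 + 3 + 0 = 19 records. Assuming the topic held only the 25 records sent by the test, this implies that partition 3 holds 6 records (offsets 3 to 5 are read) and partition 4 holds 3 records (nothing exists at offset 3 or later).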
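The count can be checked with a few lines of arithmetic. In this sketch the per-partition record counts for partitions 3 and 4 (6 and 3) are an assumption inferred from the 25 records sent; the other counts and the start offsets come from the sum above and from the listener annotation. The class and method names are hypothetical.

```java
// Hypothetical sketch: how many records a consumer sees for given start offsets.
class ReadCountSketch {

    // Records readable from one partition when reading starts at startOffset.
    static long readable(long recordsInPartition, long startOffset) {
        return Math.max(0, recordsInPartition - startOffset);
    }

    // Sums the readable records over all partitions.
    static long totalReadable(long[] recordsPerPartition, long[] startOffsets) {
        long total = 0;
        for (int p = 0; p < recordsPerPartition.length; p++) {
            total += readable(recordsPerPartition[p], startOffsets[p]);
        }
        return total;
    }

    public static void main(String[] args) {
        long[] recordsPerPartition = {6, 4, 6, 6, 3}; // assumed; partitions 3 and 4 are inferred
        long[] startOffsets = {0, 0, 0, 3, 3};        // partitions 3 and 4 start at offset 3
        System.out.println(totalReadable(recordsPerPartition, startOffsets)); // 6 + 4 + 6 + 3 + 0 = 19
    }
}
```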

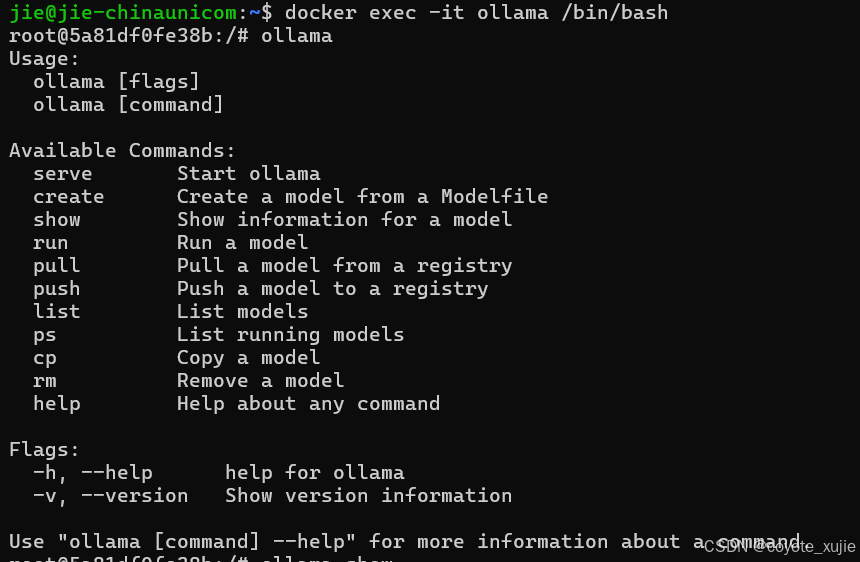

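9. Second test (3 records read)

The offsets committed during the first run are already stored for the consumer group, so the records in partitions 0, 1, and 2 are not read again, even though the configuration file sets auto-offset-reset to earliest. That setting only applies when the group has no committed offset. The 3 records that are still read are presumably offsets 3 to 5 of partition 3, because an explicit initialOffset is applied again each time the listener starts.

To read from the beginning again, change the consumer group id.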
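The two runs can be modeled with a small simulation. This is only a sketch of the behavior described in this article, not Kafka or Spring code: the per-partition record counts (6, 4, 6, 6, 3) are assumed, partitions 3 and 4 are assumed to restart at offset 3 on every run, and the names `RestartSketch`, `run`, and `helloGroup2` are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical simulation of committed offsets per consumer group.
class RestartSketch {
    private static final long[] LOG_END = {6, 4, 6, 6, 3}; // assumed records per partition
    private static final long EXPLICIT_START = 3;          // initialOffset for partitions 3 and 4

    // Committed offsets, keyed by "group:partition".
    private final Map<String, Long> committed = new HashMap<>();

    // Simulates one consumer start for the given group and returns the number of records read.
    long run(String group) {
        long read = 0;
        for (int p = 0; p < LOG_END.length; p++) {
            String key = group + ":" + p;
            long start = (p >= 3)
                    ? EXPLICIT_START                  // explicit offset, applied on every start
                    : committed.getOrDefault(key, 0L); // earliest when nothing is committed
            read += Math.max(0, LOG_END[p] - start);
            committed.put(key, Math.max(start, LOG_END[p])); // models ack.acknowledge()
        }
        return read;
    }

    public static void main(String[] args) {
        RestartSketch sim = new RestartSketch();
        System.out.println(sim.run("helloGroup"));  // first run: 19
        System.out.println(sim.run("helloGroup"));  // second run: 3
        System.out.println(sim.run("helloGroup2")); // new group id: 19
    }
}
```

In this model a new group id has no committed offsets, so partitions 0, 1, and 2 are read from the start again.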
