简介

本文主要内容是《视觉SLAM十四讲》(第二版)第7章的习题解答,并介绍了在解答习题中的一下思考和总结的经验。本文代码部分参考了:HW-of-SLAMBOOK2

1、除了本书介绍的ORB特征点,你还能找到哪些特征点?请说说SIFT或SURF的原理,并对比它们与ORB之间的优劣。

除ORB特征点外还存在大量优秀的特征点,例如:

SIFT (Scale-Invariant Feature Transform):对尺度和旋转不变,能够在不同的图像变换中识别关键点。

SURF (Speeded-Up Robust Features):比SIFT更快,具有良好的旋转和尺度不变性。

FAST (Features from Accelerated Segment Test):提供快速特征点检测,常用于实时应用。

Harris corner detection:经典的角点检测方法,检测图像中的角点。

KAZE 和 AKAZE: 针对多尺度特征提取的方法,具有较强的鲁棒性。

下面简要介绍SIFT和SURF算法步骤,详情请参考《学习OpenCV3》p472。

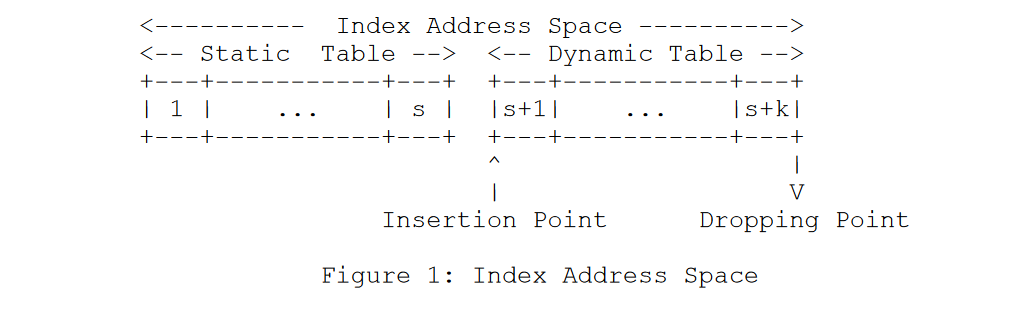

SIFT算法的初始阶段会计算输入图像和一组高斯核(这组高斯核的尺寸依次变大)之间的卷积,这赋予SIFT特征尺度不变的特性,同时也是SIFT算法如此命名的原因。然后将这些卷积和另一组卷积(图像与更大的高斯核卷积的结果)组合起来。该过程的结果是一组新的图像,这组图像和高斯(DoG)算子的差相类似。每幅图像中的像素其所在图像中的相邻像素(有8个)、上下图像中对应的自身位置及其相邻位置的像素进行比较(上层9个像素,下层9个像素)。如果一个像素高斯卷积的差比这些相邻的26个像素都高,就会被认为是高斯算子的差尺度空间极值。

图 1

如图1所示:

- SIFT首先用各种大小的高斯内核卷积原始图像。

- 在相邻尺寸的卷积之间计算差分图像来定位尺度空间极值。

- 在差分图像中,在相同层和相邻层中将每个像素与其所有邻居进行比较。如果高斯信号的差比三层图像上所有相邻像素都强,则认为该像素是尺度空间极值。

关于特征点的描述,SIFT取特征点16*16的邻域块,再将其划分为4*4的子区域,然后对梯度方向进行划分成8个区间并计算,这样在每个子区域内会得到4*4*8=128维的特征向量,向量元素大小为每个梯度方向的区间权值。在得到特征向量后,对邻域的特征向量进行了归一化处理,归一化的方向是计算邻域关键点的主方向,并根据主方向将邻域旋转至特定方向,并根据邻域各像素大小把邻域缩放至指定尺度。

图2

如图2所示:

- 从图像(A)中提取SIFT特征。

- 该特征具有大小和方向,如(B)所示。

- 特征周围的区域被划分为块(C)。

- 并且对于每个块,针对小区(D)中的每个像素计算方向导数。

- 这些方向导数被聚合成每个块(E)的直方图。

- 所有块的所有直方图中的每个bin中的幅度被级联成特征(F)的向量描述符。

SURF对SIFT进行了改进,通过引入积分图和箱式滤波器加速Hessian矩阵的计算,通过比较Hessian矩阵行列式的大小来选择特征点的位置和尺度。

图3

- 两个连续高斯核的差异图3中(A)所示。

- 在中心(B)中显示出了离散的9x9滤波器核,其近似于垂直方向上的二阶导数。

- 图(C)显示了DoG滤波器核心的一个盒滤波器近似值

像SIFT一样,SURF包括一个特征的方向概念,通过再次使用积分图像来估计特征周围区域的局部体积梯度。使用一对简单的Haar小波(图4)来近似局部梯度并将这些小波应用于尺度空间的不同区域发现极值区域。

- 通过分析发现尺度空间极值的区域(B)来确定图像的SURF方向(A)。

- 使用两个简单的小波(C)来近似局部梯度。

- 与该极值附近许多位置的图像进行卷积(在B中由实心圆表示的区域内规则采样的虚线框)。

- 以这种方式测量的所有梯度的分析中提取最终方向(D)。

关于特征点的描述,SURF基于Harr小波设定了特征点的主方向,并充分利用积分图构建64维的特征描述子。

图5

- 通过估计400个子单元中的每一个的梯度来计算SURF特征。

- 特征周围的区域首先划分为4x4的单元格(A)。

- 将每个单元划分成25个子单元格,并为每个子单元估计方向导数(B)。

- 然后将子单元的方向导数求和,以计算大网格(C)中的每个单元格的四个值。

| 优点 | 缺点 | |

| SIFT | 特征稳定、对旋转、尺度变换和亮度保持不变性,对视角变换、噪声也有一定程度的稳定性 | 实时性不高,对边缘光滑目标的特征的点提取能力较弱 |

| SURF | 对 SIFT 进行了改进,在保证性能的情况下提升了算法的效率(约一个数量级) | 在求取特征点主方向时过于依赖局部区域像素点的梯度方向,导致得到的主方向可能存在较大误差,使得特征描述子不准 |

| ORB | 计算速度较快,实时性强,比SIFT的效率高约两个数量级 | 对尺度变换的应对能力较差 |

2、设计程序调用OpenCV中的其他种类特征点,统计在提取1000个特征点时在你的机器上所用的时间。

程序调用了ORB、SIFT、SURF和KAZE特征点,其中SURF和KAZE无法指定提取特征点的个数,因此通过调整阈值使得输出的特征点数量接近1000个。

另外,因为SURF算法是有专利的,因此需要额外安装opencv-contrib才能使用,需要配置cmake选项OPENCV_ENABLE_NONFREE = NO,这样generate后编译才能使用SURF。(因为笔者的OpenCV是3.4.6版,该版本因为专利原因并没有SIFT特征点,故没有测试SIFT,但是参考代码中给出了这部分代码)

//OPENCV_ENABLE_NONFREE:BOOL如果不开启在使用sift/surf等算法时会报错

//因为我是用c++进行编程 所以用INSTALL_C_EXAMPLES=ON,如果想用python则替换为INSTALL_PYTHON_EXAMPLES=ON

//OPENCV_EXTRA_MODULES_PATH后面跟的是opencv_contrib-4.1.2的路径,记得照着自己的电脑路径改一下

//如果不需要装opencv_contrib-4.1.2 那就删掉OPENCV_EXTRA_MODULES_PATH

cmake -D CMAKE_BUILD_TYPE=RELEASE -D CMAKE_INSTALL_PREFIX=/usr/local -D OPENCV_ENABLE_NONFREE:BOOL=ON -D INSTALL_C_EXAMPLES=ON -D OPENCV_EXTRA_MODULES_PATH=~/opencv-4.1.2/opencv_contrib-4.1.2/modules ..

详细请参考:解决OpenCV xfeatures2d_SURF -213:功能/功能未实现。

Code

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/xfeatures2d/nonfree.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <chrono>

using namespace std;

using namespace cv;

int main(int argc, char **argv) {

if (argc != 2) {

cout << "usage: feature_extraction img" << endl;

return 1;

}

//-- 读取图像

Mat img = imread(argv[1], CV_LOAD_IMAGE_COLOR);

assert(img.data != nullptr);

//-- 初始化

std::vector<KeyPoint> keypoints_orb,keypoints_sift,keypoints_surf,keypoints_kaze;

Ptr<FeatureDetector> detector_orb = ORB::create(1000);

Ptr<FeatureDetector> detector_sift= SIFT::create(1000);

Ptr<FeatureDetector> detector_surf= xfeatures2d::SURF::create(400);

Ptr<FeatureDetector> detector_kaze= KAZE::create();

//-- Orb特征点

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

detector_orb->detect(img, keypoints_orb);

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout<<"number of keypoints="<<keypoints_orb.size()<<endl;

cout<<"time of orb="<<time_used.count()<<endl;

cout<<"***************************************"<<endl;

Mat outimg_orb;

drawKeypoints(img,keypoints_orb, outimg_orb, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("ORB features", outimg_orb);

//-- Sift特征点

t1 = chrono::steady_clock::now();

detector_sift->detect(img, keypoints_sift);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout<<"number of keypoints="<<keypoints_sift.size()<<endl;

cout<<"time of sift="<<time_used.count()<<endl;

cout<<"***************************************"<<endl;

Mat outimg_sift;

drawKeypoints(img,keypoints_sift, outimg_sift, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("SIFT features", outimg_sift);

//-- Surf特征点

t1 = chrono::steady_clock::now();

detector_surf->detect(img, keypoints_surf);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout<<"number of keypoints="<<keypoints_surf.size()<<endl;

cout<<"time of surf="<<time_used.count()<<endl;

cout<<"***************************************"<<endl;

Mat outimg_surf;

drawKeypoints(img,keypoints_surf, outimg_surf, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("SURF features", outimg_surf);

//-- Kaze特征点

t1 = chrono::steady_clock::now();

detector_surf->detect(img, keypoints_kaze);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout<<"number of keypoints="<<keypoints_kaze.size()<<endl;

cout<<"time of kazef="<<time_used.count()<<endl;

cout<<"***************************************"<<endl;

Mat outimg_kaze;

drawKeypoints(img,keypoints_kaze, outimg_kaze, Scalar::all(-1), DrawMatchesFlags::DEFAULT);

imshow("KAZE features", outimg_kaze);

waitKey(0);

return 0;

}

3、我们发现,OpenCV提供的ORB特征点在图像中分布不够均匀。你是否能够找到或提出让特征点分布更均匀的方法?

参考ORB-SLAM中做法:

1、根据总的图像金字塔层级数和待提取的特征点总数,计算图像金字塔中每个层级需要提取的特征点数量。

2、划分格子,在ORB-SLAM2中格子固定尺寸为30像素×30

像素。

3、对每个格子提取FAST角点,如果初始的FAST 角点阈值没有检测到角点,则降低FAST角点阈值,这样在弱纹理区域也能提取到更多的角点。如果降低一次阈值后还是提取不到角点,则不在这个格子里提取,这样可以避免提取到质量特别差的角点。

4、使用四叉树均匀地选取 FAST 角点,直到达到特征点总数。

详细介绍请参考:《视觉惯性SLAM:理论与源码解析》p95

4、研究FLANN为何能够快速处理匹配问题,除了FLANN,还有哪些可以加速匹配的手段?

术语FLANN是指用于近似近邻计算的快速库。本身提供了各种算法,用于在高维空间中查找(或至少近似地找到)最近邻点。这正是图像处理需要的描述符匹配。OpenCV也为FLANN提供了一个接口。

FLANN采用了近似算法,能够以较低的计算成本找到与查询点相近的点。这意味着在大规模数据集中,即使牺牲了一定的精度(与精确算法相比),也能够显著减少计算时间。

1. 多种数据结构:FLANN提供了多种高效的数据结构,如KD树(k-dimensional tree)、LSH(Locality-Sensitive Hashing)和这是由于它支持多种类型的距离度量(如欧几里得距离、汉明距离等)。这些数据结构可以根据具体应用场景的特点来选择最合适的,从而优化查询时间。

以FLANN中的KD树为例,在进行特征点匹配前,KD树根据特征点描述子的信息进行构建,当搜索某个特征点度量距离最近的特征点时,可以回溯之前构建的KD树辅助搜索,从而降低搜索的复杂度(即充分利用前面搜索得到的信息)。

2. 适用于高维数据:FLANN设计之初就考虑到了高维数据的问题,特别是在图像匹配中,高维特征向量(如SIFT、SURF)是常见的。FLANN在高维空间中的性能表现优于一般的最近邻搜索方法。

其他加速手段:预排序图像检索、GPU加速和FGPA加速等。

5、把演示程序使用的EPnP改成其他PnP方法,并研究它们的工作原理。

阅读OpenCV官方文档可以知道solvePnP函数中最后的flags是指定求解PnP问题方式的参数,默认是SOLVEPNP_ITERATIVE ,即通过LM方法迭代优化,只需设置为其他参数,如SOLVEPNP_UPNP 可以更改求解方法。

具体在程序中的修改是:

solvePnP(pts_3d, pts2_2d, K, Mat(), r, t, false, SOLVEPNP_SQPNP);

以下是多种解决PnP问题的方法的工作原理:

| 求解方法 | 工作原理 |

| DLT | 通过 OR分解求解空间点三维坐标与其对应特征点的像素坐标的线性方程得到 3D 到 2D 点对的运动。 |

| P3P | 利用三角形相似性质,求解投影点在相机坐标系下的3D坐标,最终将问题转换为 3D 到 3D 的位姿估计问题。 |

| EPNP | 将空间中的三维点表示为4个控制点的组合,并求得控制点在相机坐标系中的坐标,最终将问题转换为3D 到 3D 的位姿估计问题。 |

| UPNP | 与 EPNP的原理类似,在求解过程中同时估计相机的焦距 |

6、在PnP优化中,将第一个相机的观测也考虑进来,程序应如何书写?最后结果会有何变化?

将第一个相机的观测考虑进去的程序修改思路:原先不考虑第一个相机的位姿前提是设定第一个相机的坐标系为世界坐标系,而考虑第一个相机的观测时,则第一个相机的位姿节点是未知的,因此需将第一个相机的位姿作为新增的待优化节点,连接的是一元边,可以说两个顶点是孤立的,但此时假定3D点是是世界坐标系的坐标,这相当于在一次优化中分别对相机1和2做了一次PNP求解。因此最后优化输出的vertex_pose应该是两个相机的相对世界坐标系的位姿。

Code

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <Eigen/Core>

#include <g2o/core/base_vertex.h>

#include <g2o/core/base_unary_edge.h>

#include <g2o/core/sparse_optimizer.h>

#include <g2o/core/block_solver.h>

#include <g2o/core/solver.h>

#include <g2o/core/optimization_algorithm_gauss_newton.h>

#include <g2o/solvers/dense/linear_solver_dense.h>

#include <sophus/se3.hpp>

#include <chrono>

using namespace std;

using namespace cv;

void find_feature_matches(

const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches);

// 像素坐标转相机归一化坐标

Point2d pixel2cam(const Point2d &p, const Mat &K);

// BA by g2o

typedef vector<Eigen::Vector2d, Eigen::aligned_allocator<Eigen::Vector2d>> VecVector2d;

typedef vector<Eigen::Vector3d, Eigen::aligned_allocator<Eigen::Vector3d>> VecVector3d;

void bundleAdjustmentG2O(

const VecVector3d &points_3d,

const VecVector2d &points1_2d,

const VecVector2d &points2_2d,

const Mat &K,

Sophus::SE3d &pose

);

// BA by gauss-newton

void bundleAdjustmentGaussNewton(

const VecVector3d &points_3d,

const VecVector2d &points_2d,

const Mat &K,

Sophus::SE3d &pose

);

int main(int argc, char **argv) {

if (argc != 5) {

cout << "usage: pose_estimation_3d2d img1 img2 depth1 depth2" << endl;

return 1;

}

//-- 读取图像

Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR);

assert(img_1.data && img_2.data && "Can not load images!");

vector<KeyPoint> keypoints_1, keypoints_2;

vector<DMatch> matches;

find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);

cout << "一共找到了" << matches.size() << "组匹配点" << endl;

// 建立3D点

Mat d1 = imread(argv[3], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

vector<Point3f> pts_3d;

vector<Point2f> pts1_2d,pts2_2d;

for (DMatch m:matches) {

ushort d = d1.ptr<unsigned short>(int(keypoints_1[m.queryIdx].pt.y))[int(keypoints_1[m.queryIdx].pt.x)];

if (d == 0) // bad depth

continue;

float dd = d / 5000.0;

Point2d p1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);

pts_3d.push_back(Point3f(p1.x * dd, p1.y * dd, dd));//第一个相机观察到的3D点坐标

pts1_2d.push_back(keypoints_1[m.queryIdx].pt);//特征点在第一个相机中的投影

pts2_2d.push_back(keypoints_2[m.trainIdx].pt);//特征点在第二个相机中的投影

}

cout << "3d-2d pairs: " << pts_3d.size() << endl;

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

Mat r, t;

solvePnP(pts_3d, pts2_2d, K, Mat(), r, t, false, SOLVEPNP_SQPNP); // 调用OpenCV 的 PnP 求解,可选择EPNP,DLS等方法

Mat R;

cv::Rodrigues(r, R); // r为旋转向量形式,用Rodrigues公式转换为矩阵

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t << endl;

VecVector3d pts_3d_eigen;

VecVector2d pts1_2d_eigen,pts2_2d_eigen;

for (size_t i = 0; i < pts_3d.size(); ++i) {

pts_3d_eigen.push_back(Eigen::Vector3d(pts_3d[i].x, pts_3d[i].y, pts_3d[i].z));

pts1_2d_eigen.push_back(Eigen::Vector2d(pts1_2d[i].x, pts1_2d[i].y));//新增部分:求取第一个相机拍摄照片特征点的像素坐标

pts2_2d_eigen.push_back(Eigen::Vector2d(pts2_2d[i].x, pts2_2d[i].y));

}

cout << "calling bundle adjustment by gauss newton" << endl;

Sophus::SE3d pose_gn;

t1 = chrono::steady_clock::now();

bundleAdjustmentGaussNewton(pts_3d_eigen, pts2_2d_eigen, K, pose_gn);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp by gauss newton cost time: " << time_used.count() << " seconds." << endl;

cout << "calling bundle adjustment by g2o" << endl;

Sophus::SE3d pose_g2o;

t1 = chrono::steady_clock::now();

bundleAdjustmentG2O(pts_3d_eigen, pts1_2d_eigen,pts2_2d_eigen, K, pose_g2o);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp by g2o cost time: " << time_used.count() << " seconds." << endl;

return 0;

}

void find_feature_matches(const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches) {

//-- 初始化

Mat descriptors_1, descriptors_2;

// used in OpenCV3

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// use this if you are in OpenCV2

// Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- 第一步:检测 Oriented FAST 角点位置

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- 第二步:根据角点位置计算 BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

//-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> match;

// BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, match);

//-- 第四步:匹配点对筛选

double min_dist = 10000, max_dist = 0;

//找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = match[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

for (int i = 0; i < descriptors_1.rows; i++) {

if (match[i].distance <= max(2 * min_dist, 30.0)) {

matches.push_back(match[i]);

}

}

}

Point2d pixel2cam(const Point2d &p, const Mat &K) {

return Point2d

(

(p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),

(p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)

);

}

void bundleAdjustmentGaussNewton(

const VecVector3d &points_3d,

const VecVector2d &points_2d,

const Mat &K,

Sophus::SE3d &pose) {

typedef Eigen::Matrix<double, 6, 1> Vector6d;

const int iterations = 10;

double cost = 0, lastCost = 0;

double fx = K.at<double>(0, 0);

double fy = K.at<double>(1, 1);

double cx = K.at<double>(0, 2);

double cy = K.at<double>(1, 2);

for (int iter = 0; iter < iterations; iter++) {

Eigen::Matrix<double, 6, 6> H = Eigen::Matrix<double, 6, 6>::Zero();

Vector6d b = Vector6d::Zero();

cost = 0;

// compute cost

for (int i = 0; i < points_3d.size(); i++) {

Eigen::Vector3d pc = pose * points_3d[i];

double inv_z = 1.0 / pc[2];

double inv_z2 = inv_z * inv_z;

Eigen::Vector2d proj(fx * pc[0] / pc[2] + cx, fy * pc[1] / pc[2] + cy);

Eigen::Vector2d e = points_2d[i] - proj;

cost += e.squaredNorm();

Eigen::Matrix<double, 2, 6> J;

J << -fx * inv_z,

0,

fx * pc[0] * inv_z2,

fx * pc[0] * pc[1] * inv_z2,

-fx - fx * pc[0] * pc[0] * inv_z2,

fx * pc[1] * inv_z,

0,

-fy * inv_z,

fy * pc[1] * inv_z2,

fy + fy * pc[1] * pc[1] * inv_z2,

-fy * pc[0] * pc[1] * inv_z2,

-fy * pc[0] * inv_z;

H += J.transpose() * J;

b += -J.transpose() * e;

}

Vector6d dx;

dx = H.ldlt().solve(b);

if (isnan(dx[0])) {

cout << "result is nan!" << endl;

break;

}

if (iter > 0 && cost >= lastCost) {

// cost increase, update is not good

cout << "cost: " << cost << ", last cost: " << lastCost << endl;

break;

}

// update your estimation

pose = Sophus::SE3d::exp(dx) * pose;

lastCost = cost;

cout << "iteration " << iter << " cost=" << std::setprecision(12) << cost << endl;

if (dx.norm() < 1e-6) {

// converge

break;

}

}

cout << "pose by g-n: \n" << pose.matrix() << endl;

}

/// vertex and edges used in g2o ba

class VertexPose : public g2o::BaseVertex<6, Sophus::SE3d> {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

virtual void setToOriginImpl() override {

_estimate = Sophus::SE3d();

}

/// left multiplication on SE3

virtual void oplusImpl(const double *update) override {

Eigen::Matrix<double, 6, 1> update_eigen;

update_eigen << update[0], update[1], update[2], update[3], update[4], update[5];

_estimate = Sophus::SE3d::exp(update_eigen) * _estimate;

}

virtual bool read(istream &in) override {}

virtual bool write(ostream &out) const override {}

};

//1元边,测量值维度是2,对应测量值类型为Eigen::Vector2d,顶点对应的数据类型都是VertexPose

class EdgeProjection : public g2o::BaseUnaryEdge<2, Eigen::Vector2d, VertexPose> {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

EdgeProjection(const Eigen::Vector3d &pos, const Eigen::Matrix3d &K) : _pos3d(pos), _K(K) {}

virtual void computeError() override {

const VertexPose *v = static_cast<VertexPose *> (_vertices[0]);

Sophus::SE3d T = v->estimate();

Eigen::Vector3d pos_pixel = _K * (T * _pos3d);

pos_pixel /= pos_pixel[2];

_error = _measurement - pos_pixel.head<2>();

}

virtual void linearizeOplus() override {

const VertexPose *v = static_cast<VertexPose *> (_vertices[0]);

Sophus::SE3d T = v->estimate();

Eigen::Vector3d pos_cam = T * _pos3d;

double fx = _K(0, 0);

double fy = _K(1, 1);

double cx = _K(0, 2);

double cy = _K(1, 2);

double X = pos_cam[0];

double Y = pos_cam[1];

double Z = pos_cam[2];

double Z2 = Z * Z;

_jacobianOplusXi

<< -fx / Z, 0, fx * X / Z2, fx * X * Y / Z2, -fx - fx * X * X / Z2, fx * Y / Z,

0, -fy / Z, fy * Y / (Z * Z), fy + fy * Y * Y / Z2, -fy * X * Y / Z2, -fy * X / Z;

}

virtual bool read(istream &in) override {}

virtual bool write(ostream &out) const override {}

private:

Eigen::Vector3d _pos3d;

Eigen::Matrix3d _K;

};

//新增部分:points1_2d,将第一个相机拍摄图片的特征点像素坐标传递进BA函数中计算测量量

void bundleAdjustmentG2O(

const VecVector3d &points_3d,

const VecVector2d &points1_2d,

const VecVector2d &points2_2d,

const Mat &K,

Sophus::SE3d &pose) {

// 构建图优化,先设定g2o

typedef g2o::BlockSolver<g2o::BlockSolverTraits<6, 3>> BlockSolverType; // pose is 6, landmark is 3

typedef g2o::LinearSolverDense<BlockSolverType::PoseMatrixType> LinearSolverType; // 线性求解器类型

// 梯度下降方法,可以从GN, LM, DogLeg 中选

auto solver = new g2o::OptimizationAlgorithmGaussNewton(

g2o::make_unique<BlockSolverType>(g2o::make_unique<LinearSolverType>()));

g2o::SparseOptimizer optimizer; // 图模型

optimizer.setAlgorithm(solver); // 设置求解器

optimizer.setVerbose(true); // 打开调试输出

// 新增部分:vertex --(0,0,0) 第一个相机的位姿此时是未知的,待优化

VertexPose *vertex_pose0 = new VertexPose(); // camera vertex_pose

vertex_pose0->setId(0);

Eigen::Matrix3d R=Eigen::Matrix3d::Identity();

Eigen::Vector3d t(0,0,0);

Sophus::SE3d SE3_Rt(R,t);

vertex_pose0->setEstimate(SE3_Rt);

optimizer.addVertex(vertex_pose0);

// vertex --第二个相机的位姿,待优化

VertexPose *vertex_pose = new VertexPose(); // camera vertex_pose

vertex_pose->setId(1);

vertex_pose->setEstimate(Sophus::SE3d());

optimizer.addVertex(vertex_pose);

// K

Eigen::Matrix3d K_eigen;

K_eigen <<

K.at<double>(0, 0), K.at<double>(0, 1), K.at<double>(0, 2),

K.at<double>(1, 0), K.at<double>(1, 1), K.at<double>(1, 2),

K.at<double>(2, 0), K.at<double>(2, 1), K.at<double>(2, 2);

// edges

int index = 1;

//新增部分:第一个相机作为顶点连接的边

for (size_t i = 0; i < points1_2d.size(); ++i) {

auto p2d = points1_2d[i];

auto p3d = points_3d[i];

EdgeProjection *edge = new EdgeProjection(p3d, K_eigen);

edge->setId(index);

edge->setVertex(0, vertex_pose0);

edge->setMeasurement(p2d);

edge->setInformation(Eigen::Matrix2d::Identity());

optimizer.addEdge(edge);

index++;

}

//第二个相机作为顶点连接的边

for (size_t i = 0; i < points2_2d.size(); ++i) {

auto p2d = points2_2d[i];

auto p3d = points_3d[i];

EdgeProjection *edge = new EdgeProjection(p3d, K_eigen);

edge->setId(index);

edge->setVertex(0, vertex_pose);

edge->setMeasurement(p2d);

edge->setInformation(Eigen::Matrix2d::Identity());

optimizer.addEdge(edge);

index++;

}

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

optimizer.setVerbose(true);

optimizer.initializeOptimization();

optimizer.optimize(10);

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "optimization costs time: " << time_used.count() << " seconds." << endl;

cout << "pose estimated of camera1 by g2o =\n" << vertex_pose0->estimate().matrix() << endl;

cout<<"********************************************************************************************"<<endl;

cout << "pose estimated of camera2 by g2o =\n" << vertex_pose->estimate().matrix() << endl;

cout<<"********************************************************************************************"<<endl;

pose = vertex_pose0->estimate().inverse()*vertex_pose->estimate();//此时待求的两个相机之间的相对位姿

cout << "pose estimated by g2o =\n" << pose.matrix() << endl;

cout<<"********************************************************************************************"<<endl;

}

可见因为是利用相机 1恢复的3D点坐标,所以pose1的R几乎为单位阵,t几乎为0向量。

因此在加入第一个相机的观测后优化得到的位姿也应该和世界坐标系非常接近,从而使得两个相机间的相对位姿变化极小。加入pose1优化后的相当坐标基本不变。

7、在ICP程序中,将空间点也作为优化变量考虑进来,程序应如何书写?最后结果会有何变化?

1.在将空间点作为优化变量考虑后,此时图优化的边应该为二元边,二元边的其中一个顶点为原来的李代数位姿,另一个顶点是在相机二坐标系下的空间点三维坐标,因此需要新增顶点类型VertexPoint;

2.修改一元边g2o::BaseUnaryEdge为二元边g2o::BaseBinaryEdge,需要将头文件#include <g2o/core/base_unary_edge.h>改为#include <g2o/core/base_binary_edge.h>;

3.二元边中误差的计算需要修改,测量值为相机一坐标系下的空间点三维坐标值,与之作差的是需要优化的位姿乘以相机二坐标系下的空间点三维坐标,即将其转化到世界坐标系下;

4.构建图优化模型时设置两个顶点,按二元边中顶点的定义顺序来设置,本文先VertexPose后VertexPoint;

5.最后新增了对重投影误差的计算,并将优化后的相机二坐标系下的空间点三维坐标应用于计算。

Code

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <Eigen/Core>

#include <Eigen/Dense>

#include <Eigen/Geometry>

#include <Eigen/SVD>

#include <g2o/core/base_vertex.h>

#include <g2o/core/base_binary_edge.h>

#include <g2o/core/block_solver.h>

#include <g2o/core/optimization_algorithm_gauss_newton.h>

#include <g2o/core/optimization_algorithm_levenberg.h>

#include <g2o/solvers/dense/linear_solver_dense.h>

#include <chrono>

#include <sophus/se3.hpp>

using namespace std;

using namespace cv;

void find_feature_matches(

const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches);

// 像素坐标转相机归一化坐标

Point2d pixel2cam(const Point2d &p, const Mat &K);

void pose_estimation_3d3d(

const vector<Point3f> &pts1,

const vector<Point3f> &pts2,

Mat &R, Mat &t

);

void bundleAdjustment(

const vector<Point3f> &points_3d,

vector<Point3f> &points_2d,

Mat &R, Mat &t

);

/// vertex and edges used in g2o ba

class VertexPose : public g2o::BaseVertex<6, Sophus::SE3d> {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

virtual void setToOriginImpl() override {

_estimate = Sophus::SE3d();

}

/// left multiplication on SE3

virtual void oplusImpl(const double *update) override {

Eigen::Matrix<double, 6, 1> update_eigen;

update_eigen << update[0], update[1], update[2], update[3], update[4], update[5];

_estimate = Sophus::SE3d::exp(update_eigen) * _estimate;

}

virtual bool read(istream &in) override {}

virtual bool write(ostream &out) const override {}

};

/// 新增的空间点顶点

class VertexPoint : public g2o::BaseVertex<3, Eigen::Vector3d> {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

virtual void setToOriginImpl() override {

_estimate = Eigen::Vector3d(0,0,0);

}

/// 空间点

virtual void oplusImpl(const double *update) override {

_estimate += Eigen::Vector3d(update[0], update[1], update[2]) ;

}

virtual bool read(istream &in) override {}

virtual bool write(ostream &out) const override {}

};

/// g2o edge-- 修改为二元边,两个顶点为相机位姿VertexPose和空间点坐标VertexPoint

class EdgeProjectXYZRGBDPoseAndPoint : public g2o::BaseBinaryEdge<3, Eigen::Vector3d, VertexPose, VertexPoint> {

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW;

//EdgeProjectXYZRGBDPoseAndPoint(const Eigen::Vector3d &point) : _point(point) {}

virtual void computeError() override {

const VertexPose *pose = static_cast<const VertexPose *> ( _vertices[0] );

const VertexPoint *point = static_cast<const VertexPoint *> ( _vertices[1] );

_error = _measurement - pose->estimate() * point->estimate();

//_measurement指世界坐标系下(即第一组相机的坐标系)第一组相机求得的三维点

//pose->estimate() * point->estimate()指从第二个相机的坐标系下转化到世界坐标系下第二组相机求得的三维点

}

// 因为我们不知道雅克比矩阵,这里可以不写,g2o会自动求导,不过速度会下降

// virtual void linearizeOplus() override {

// VertexPose *pose = static_cast<VertexPose *>(_vertices[0]);

// Sophus::SE3d T = pose->estimate();

// Eigen::Vector3d xyz_trans = T * _point;

// _jacobianOplusXi.block<3, 3>(0, 0) = -Eigen::Matrix3d::Identity();

// _jacobianOplusXi.block<3, 3>(0, 3) = Sophus::SO3d::hat(xyz_trans);

// }

bool read(istream &in) {}

bool write(ostream &out) const {}

protected:

Eigen::Vector3d _point;

};

int main(int argc, char **argv) {

if (argc != 5) {

cout << "usage: pose_estimation_3d3d img1 img2 depth1 depth2" << endl;

return 1;

}

//-- 读取图像

Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR);

vector<KeyPoint> keypoints_1, keypoints_2;

vector<DMatch> matches;

find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);

cout << "一共找到了" << matches.size() << "组匹配点" << endl;

// 建立3D点

Mat depth1 = imread(argv[3], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat depth2 = imread(argv[4], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

vector<Point3f> pts1, pts2;

for (DMatch m:matches) {

ushort d1 = depth1.ptr<unsigned short>(int(keypoints_1[m.queryIdx].pt.y))[int(keypoints_1[m.queryIdx].pt.x)];

ushort d2 = depth2.ptr<unsigned short>(int(keypoints_2[m.trainIdx].pt.y))[int(keypoints_2[m.trainIdx].pt.x)];

if (d1 == 0 || d2 == 0) // bad depth

continue;

Point2d p1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);

Point2d p2 = pixel2cam(keypoints_2[m.trainIdx].pt, K);

float dd1 = float(d1) / 5000.0;

float dd2 = float(d2) / 5000.0;

pts1.push_back(Point3f(p1.x * dd1, p1.y * dd1, dd1));

pts2.push_back(Point3f(p2.x * dd2, p2.y * dd2, dd2));

}

cout << "3d-3d pairs: " << pts1.size() << endl;

Mat R, t;

pose_estimation_3d3d(pts1, pts2, R, t);

cout << "ICP via SVD results: " << endl;

cout << "R = " << R << endl;

cout << "t = " << t << endl;

cout << "R_inv = " << R.t() << endl;

cout << "t_inv = " << -R.t() * t << endl;

cout << "calling bundle adjustment" << endl;

double error_total = 0;

for(size_t i = 0; i < pts1.size(); i++)

{

cv::Mat error = (Mat_<double>(3, 1) << pts1[i].x, pts1[i].y, pts1[i].z) - (R * (Mat_<double>(3, 1) << pts2[i].x, pts2[i].y, pts2[i].z) + t);

error_total += norm(error);

}

cout << "SVD total error is " << error_total << endl;

Mat R1, t1;

bundleAdjustment(pts1, pts2, R1, t1);

// verify p1 = R * p2 + t

// for (int i = 0; i < 5; i++) {

// cout << "p1 = " << pts1[i] << endl;

// cout << "p2 = " << pts2[i] << endl;

// cout << "(R*p2+t) = " <<

// R * (Mat_<double>(3, 1) << pts2[i].x, pts2[i].y, pts2[i].z) + t

// << endl;

// cout << endl;

// }

error_total = 0;

for(size_t i = 0; i < pts1.size(); i++)

{

cv::Mat error = (Mat_<double>(3, 1) << pts1[i].x, pts1[i].y, pts1[i].z) - (R1 * (Mat_<double>(3, 1) << pts2[i].x, pts2[i].y, pts2[i].z) + t1);

error_total += norm(error);

}

cout << "total error is " << error_total << endl;

}

void find_feature_matches(const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches) {

//-- 初始化

Mat descriptors_1, descriptors_2;

// used in OpenCV3

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// use this if you are in OpenCV2

// Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- 第一步:检测 Oriented FAST 角点位置

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- 第二步:根据角点位置计算 BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

//-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> match;

// BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, match);

//-- 第四步:匹配点对筛选

double min_dist = 10000, max_dist = 0;

//找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = match[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

for (int i = 0; i < descriptors_1.rows; i++) {

if (match[i].distance <= max(2 * min_dist, 30.0)) {

matches.push_back(match[i]);

}

}

}

Point2d pixel2cam(const Point2d &p, const Mat &K) {

return Point2d(

(p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),

(p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)

);

}

void pose_estimation_3d3d(const vector<Point3f> &pts1,

const vector<Point3f> &pts2,

Mat &R, Mat &t) {

Point3f p1, p2; // center of mass

int N = pts1.size();

for (int i = 0; i < N; i++) {

p1 += pts1[i];

p2 += pts2[i];

}

p1 = Point3f(Vec3f(p1) / N);

p2 = Point3f(Vec3f(p2) / N);

vector<Point3f> q1(N), q2(N); // remove the center

for (int i = 0; i < N; i++) {

q1[i] = pts1[i] - p1;

q2[i] = pts2[i] - p2;

}

// compute q1*q2^T

Eigen::Matrix3d W = Eigen::Matrix3d::Zero();

for (int i = 0; i < N; i++) {

W += Eigen::Vector3d(q1[i].x, q1[i].y, q1[i].z) * Eigen::Vector3d(q2[i].x, q2[i].y, q2[i].z).transpose();

}

cout << "W=" << W << endl;

// SVD on W

Eigen::JacobiSVD<Eigen::Matrix3d> svd(W, Eigen::ComputeFullU | Eigen::ComputeFullV);

Eigen::Matrix3d U = svd.matrixU();

Eigen::Matrix3d V = svd.matrixV();

cout << "U=" << U << endl;

cout << "V=" << V << endl;

Eigen::Matrix3d R_ = U * (V.transpose());

if (R_.determinant() < 0) {

R_ = -R_;

}

Eigen::Vector3d t_ = Eigen::Vector3d(p1.x, p1.y, p1.z) - R_ * Eigen::Vector3d(p2.x, p2.y, p2.z);

// convert to cv::Mat

R = (Mat_<double>(3, 3) <<

R_(0, 0), R_(0, 1), R_(0, 2),

R_(1, 0), R_(1, 1), R_(1, 2),

R_(2, 0), R_(2, 1), R_(2, 2)

);

t = (Mat_<double>(3, 1) << t_(0, 0), t_(1, 0), t_(2, 0));

}

void bundleAdjustment(

const vector<Point3f> &pts1,

vector<Point3f> &pts2,

Mat &R, Mat &t) {

// 构建图优化,先设定g2o

typedef g2o::BlockSolverX BlockSolverType;

typedef g2o::LinearSolverDense<BlockSolverType::PoseMatrixType> LinearSolverType; // 线性求解器类型

// 梯度下降方法,可以从GN, LM, DogLeg 中选

auto solver = new g2o::OptimizationAlgorithmLevenberg(

g2o::make_unique<BlockSolverType>(g2o::make_unique<LinearSolverType>()));

g2o::SparseOptimizer optimizer; // 图模型

optimizer.setAlgorithm(solver); // 设置求解器

optimizer.setVerbose(true); // 打开调试输出

// vertex李代数位姿

VertexPose *pose = new VertexPose(); // camera pose

pose->setId(0);

pose->setEstimate(Sophus::SE3d());

optimizer.addVertex(pose);

// vertex空间点

for (size_t i = 0; i < pts2.size(); i++){

VertexPoint *point = new VertexPoint(); // camera pose

point->setId(i+1);

point->setEstimate(Eigen::Vector3d(pts2[i].x, pts2[i].y, pts2[i].z));

optimizer.addVertex(point);

}

// edges pts1.size()=pts2.size()

for (size_t i = 0; i < pts1.size(); i++) {

EdgeProjectXYZRGBDPoseAndPoint *edge = new EdgeProjectXYZRGBDPoseAndPoint();

edge->setVertex(0, pose);

edge->setVertex(1, dynamic_cast<VertexPoint *> ( optimizer.vertex ( i+1 ) ));

edge->setMeasurement(Eigen::Vector3d(pts1[i].x, pts1[i].y, pts1[i].z));

edge->setInformation(Eigen::Matrix3d::Identity());

optimizer.addEdge(edge);

}

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

optimizer.initializeOptimization();

optimizer.optimize(10);

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "optimization costs time: " << time_used.count() << " seconds." << endl;

cout << endl << "after optimization:" << endl;

cout << "T=\n" << pose->estimate().matrix() << endl;

// convert to cv::Mat

Eigen::Matrix3d R_ = pose->estimate().rotationMatrix();

Eigen::Vector3d t_ = pose->estimate().translation();

R = (Mat_<double>(3, 3) <<

R_(0, 0), R_(0, 1), R_(0, 2),

R_(1, 0), R_(1, 1), R_(1, 2),

R_(2, 0), R_(2, 1), R_(2, 2)

);

t = (Mat_<double>(3, 1) << t_(0, 0), t_(1, 0), t_(2, 0));

for(size_t i = 0; i < pts2.size(); i++)

{

Eigen::Vector3d vertex_point = dynamic_cast<VertexPoint *> ( optimizer.vertex ( i+1 ) )->estimate();

pts2[i] = Point3f(vertex_point(0),vertex_point(1),vertex_point(2));

}

}

输出结果:

Note:虽然位姿并未发生明显改变,但是点云总体误差明显减小,这代表了目前3D点更加接近,建图效果更好。在稠密建图时可以考虑增加此部分。

8、在特征点匹配过程中,不可避免地会遇到误匹配的情况。如果我们把错误匹配输入到PnP或ICP中,会发生怎样的情况?你能想到哪些避免误匹配的方法?

通过设置不同的距离阈值,可以得到较多的误匹配,当将误匹配输入PnP求解后,对比正确结果可以得知,解得的旋转矩阵和平移向量完全是错误的。

然后将存在错误匹配的信息利用单应矩阵进行RANSAC筛选,再进行PnP求解。

单应性矩阵描述的是针对同一事物,在不同的视角下拍摄的两幅图像之间的关系。所以同一物体在不同位置成像的特征点也可以用单应矩阵描述变换。

RANSAC算法介绍请参考:特征点匹配——使用基础矩阵、单应性矩阵的RANSAC算法去除误匹配点对

Code

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <Eigen/Core>

#include <g2o/core/base_vertex.h>

#include <g2o/core/base_unary_edge.h>

#include <g2o/core/sparse_optimizer.h>

#include <g2o/core/block_solver.h>

#include <g2o/core/solver.h>

#include <g2o/core/optimization_algorithm_gauss_newton.h>

#include <g2o/solvers/dense/linear_solver_dense.h>

#include <sophus/se3.hpp>

#include <chrono>

using namespace std;

using namespace cv;

void find_feature_matches(

const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches,

double threshold

);

// 像素坐标转相机归一化坐标

Point2d pixel2cam(const Point2d &p, const Mat &K);

int main(int argc, char **argv) {

if (argc != 5) {

cout << "usage: pose_estimation_3d2d img1 img2 depth1 depth2" << endl;

return 1;

}

//-- 读取图像

Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR);

assert(img_1.data && img_2.data && "Can not load images!");

//正确匹配

vector<KeyPoint> keypoints_1, keypoints_2;

vector<DMatch> matches;

double threshold=30;

find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches,threshold);

cout << "当距离为"<<threshold <<",一共找到了" << matches.size() << "组匹配点" << endl;

// 建立3D点

Mat d1 = imread(argv[3], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

vector<Point3f> pts_3d;

vector<Point2f> pts_2d;

for (DMatch m:matches) {

ushort d = d1.ptr<unsigned short>(int(keypoints_1[m.queryIdx].pt.y))[int(keypoints_1[m.queryIdx].pt.x)];

if (d == 0) // bad depth

continue;

float dd = d / 5000.0;

Point2d p1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);

pts_3d.push_back(Point3f(p1.x * dd, p1.y * dd, dd));

pts_2d.push_back(keypoints_2[m.trainIdx].pt);

}

cout << "3d-2d pairs: " << pts_3d.size() << endl;

Mat img_match;

drawMatches(img_1, keypoints_1, img_2, keypoints_2, matches, img_match);

imshow("all matches", img_match);

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

Mat r, t;

solvePnP(pts_3d, pts_2d, K, Mat(), r, t, false); // 调用OpenCV 的 PnP 求解,可选择EPNP,DLS等方法

Mat R;

cv::Rodrigues(r, R); // r为旋转向量形式,用Rodrigues公式转换为矩阵

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t << endl;

//******************************************//

//****************存在误匹配****************//

vector<KeyPoint> bad_keypoints_1, bad_keypoints_2;

vector<DMatch> bad_matches;

threshold=60;

find_feature_matches(img_1, img_2, bad_keypoints_1, bad_keypoints_2, bad_matches,threshold);

cout << "当距离为"<<threshold <<",一共找到了" << bad_matches.size() << "组匹配点" << endl;

// 建立3D点

vector<Point3f> bad_pts_3d;

vector<Point2f> bad_pts_2d;

for (DMatch m:bad_matches) {

ushort d = d1.ptr<unsigned short>(int(bad_keypoints_1[m.queryIdx].pt.y))[int(bad_keypoints_1[m.queryIdx].pt.x)];

if (d == 0) // bad depth

continue;

float dd = d / 5000.0;

Point2d p1 = pixel2cam(bad_keypoints_1[m.queryIdx].pt, K);

bad_pts_3d.push_back(Point3f(p1.x * dd, p1.y * dd, dd));

bad_pts_2d.push_back(bad_keypoints_2[m.trainIdx].pt);

}

cout << "3d-2d pairs: " << bad_pts_3d.size() << endl;

Mat bad_img_match;

drawMatches(img_1, bad_keypoints_1, img_2, bad_keypoints_2, bad_matches, bad_img_match);

imshow("bad matches", bad_img_match);

t1 = chrono::steady_clock::now();

solvePnP(bad_pts_3d, bad_pts_2d, K, Mat(), r, t, false); // 调用OpenCV 的 PnP 求解,可选择EPNP,DLS等方法

cv::Rodrigues(r, R); // r为旋转向量形式,用Rodrigues公式转换为矩阵

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t << endl;

// waitKey(0);

//******************************************//

//****************存在误匹配,利用单应矩阵RANSAC****************//

vector<KeyPoint> h_keypoints_1, h_keypoints_2;

vector<DMatch> h_matches;

threshold=1000;

find_feature_matches(img_1, img_2, h_keypoints_1, h_keypoints_2, h_matches,threshold);

// 添加匹配的点到 vectors

std::vector<Point2f> points1;

std::vector<Point2f> points2;

for (const auto& match : h_matches) {

points1.push_back(h_keypoints_1[match.queryIdx].pt);

points2.push_back(h_keypoints_2[match.trainIdx].pt);

}

// 使用 RANSAC 去除误匹配

std::vector<uchar> inliers;

Mat homography = findHomography(points1, points2, RANSAC, 3, inliers);

// 根据 inliers 过滤匹配点

std::vector<DMatch> inlier_matches;

for (size_t i = 0; i < inliers.size(); i++) {

if (inliers[i]) {

inlier_matches.push_back(h_matches[i]);

}

}

cout << "RANSAC: " << inlier_matches.size() << "组匹配点" << endl;

// 绘制匹配结果

Mat img_matches;

drawMatches(img_1, h_keypoints_1, img_2, h_keypoints_2, inlier_matches, img_matches);

// 显示最终结果

imshow("Matches after RANSAC", img_matches);

// 建立3D点

vector<Point3f> h_pts_3d;

vector<Point2f> h_pts_2d;

for (DMatch m:inlier_matches) {

ushort d = d1.ptr<unsigned short>(int(h_keypoints_1[m.queryIdx].pt.y))[int(h_keypoints_2[m.queryIdx].pt.x)];

if (d == 0) // bad depth

continue;

float dd = d / 5000.0;

Point2d p1 = pixel2cam(h_keypoints_1[m.queryIdx].pt, K);

h_pts_3d.push_back(Point3f(p1.x * dd, p1.y * dd, dd));

h_pts_2d.push_back(h_keypoints_2[m.trainIdx].pt);

}

t1 = chrono::steady_clock::now();

solvePnP(h_pts_3d, h_pts_2d, K, Mat(), r, t, false); // 调用OpenCV 的 PnP 求解,可选择EPNP,DLS等方法(SOLVEPNP_EPNP)

cv::Rodrigues(r, R); // r为旋转向量形式,用Rodrigues公式转换为矩阵

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t << endl;

waitKey(0);

return 0;

}

void find_feature_matches(const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches,

double threshold

) {

//-- 初始化

Mat descriptors_1, descriptors_2;

// used in OpenCV3

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// use this if you are in OpenCV2

// Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- 第一步:检测 Oriented FAST 角点位置

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- 第二步:根据角点位置计算 BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

//-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> match;

// BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, match);

//-- 第四步:匹配点对筛选

double min_dist = 10000, max_dist = 0;

//找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = match[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

for (int i = 0; i < descriptors_1.rows; i++) {

if (match[i].distance <= max(2 * min_dist, threshold)) {

matches.push_back(match[i]);

}

}

}

Point2d pixel2cam(const Point2d &p, const Mat &K) {

return Point2d

(

(p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),

(p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)

);

}

// #include <opencv2/opencv.hpp>

// #include <opencv2/features2d.hpp>

// #include <opencv2/highgui.hpp>

// using namespace cv;

// using namespace std;

// int main() {

// // 读取图像

// Mat img1 = imread("image1.jpg", IMREAD_GRAYSCALE);

// Mat img2 = imread("image2.jpg", IMREAD_GRAYSCALE);

// if (img1.empty() || img2.empty()) {

// cout << "Could not open or find the images!" << endl;

// return -1;

// }

// // 初始化 ORB 特征检测器

// Ptr<ORB> orb = ORB::create();

// // 棻特征点以及描述子

// std::vector<KeyPoint> keypoints1, keypoints2;

// Mat descriptors1, descriptors2;

// orb->detectAndCompute(img1, noArray(), keypoints1, descriptors1);

// orb->detectAndCompute(img2, noArray(), keypoints2, descriptors2);

// // 进行特征匹配

// std::vector<DMatch> matches;

// Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create(DescriptorMatcher::BRUTEFORCE_HAMMING);

// matcher->match(descriptors1, descriptors2, matches);

// // 添加匹配的点到 vectors

// std::vector<Point2f> points1;

// std::vector<Point2f> points2;

// for (const auto& match : matches) {

// points1.push_back(keypoints1[match.queryIdx].pt);

// points2.push_back(keypoints2[match.trainIdx].pt);

// }

// // 使用 RANSAC 去除误匹配

// std::vector<uchar> inliers;

// Mat homography = findHomography(points1, points2, RANSAC, 3, inliers);

// // 根据 inliers 过滤匹配点

// std::vector<DMatch> inlier_matches;

// for (size_t i = 0; i < inliers.size(); i++) {

// if (inliers[i]) {

// inlier_matches.push_back(matches[i]);

// }

// }

// // 绘制匹配结果

// Mat img_matches;

// drawMatches(img1, keypoints1, img2, keypoints2, inlier_matches, img_matches);

// // 显示最终结果

// imshow("Matches after RANSAC", img_matches);

// waitKey(0);

// return 0;

// }

Note:虽然RANSAC筛选后t在第一维相比最初版本仍有差距,但如果对比书中2D-2D的例程来说,只有RANSAC这此结果与2D-2D结果相差一个比例因子s。其它结果在第一维均不符合比例关系。此次求解PnP采用了OpenCV的EPnP解法。

9、使用Sophus的SE3类,自己设计g2o的节点和边,实现PnP和ICP的优化。

关于使用Sophus的SE3类设计g2o的节点和边的实现,参考书中例程或第6和7题的代码均可。

SE3d使用双精度浮点数(double),而SE3可能会有不同的实现(如使用浮点数或其他数值类型)

10、在Ceres中实现PnP和ICP的优化。

安装Ceres流程编写即可,具体参考下列代码。

Code

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <ceres/ceres.h>

#include <ceres/rotation.h>

#include <Eigen/Core>

#include <Eigen/Geometry>

#include "sophus/se3.hpp"

#include <chrono>

using namespace std;

using namespace Eigen;

using namespace cv;

void find_feature_matches(

const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches);

// 像素坐标转相机归一化坐标

Point2d pixel2cam(const Point2d &p, const Mat &K);

//Ref:http://www.ceres-solver.org/nnls_tutorial.html#bundle-adjustment

struct PnPReprojectionError {

PnPReprojectionError(Point2f pts_2d, Point3f pts_3d)

: _pts_2d(pts_2d), _pts_3d(pts_3d) {}

template <typename T>

bool operator()(const T* const rotation_vector,

const T* const translation_vector,

T* residuals) const {

T p_transformed[3], p_origin[3];

p_origin[0]=T(_pts_3d.x);

p_origin[1]=T(_pts_3d.y);

p_origin[2]=T(_pts_3d.z);

ceres::AngleAxisRotatePoint(rotation_vector, p_origin, p_transformed);

//旋转后加上平移向量

p_transformed[0] += translation_vector[0];

p_transformed[1] += translation_vector[1];

p_transformed[2] += translation_vector[2];

//归一化

T xp = p_transformed[0] / p_transformed[2];

T yp = p_transformed[1] / p_transformed[2];

double fx=520.9, fy=521.0, cx=325.1, cy=249.7;

// Compute final projected point position.

T predicted_x = fx * xp + cx;

T predicted_y = fy * yp + cy;

// The error is the difference between the predicted and observed position.

residuals[0] = T(_pts_2d.x) - predicted_x;

residuals[1] = T(_pts_2d.y) - predicted_y;

return true;

}

// 2,3,3指输出维度(residuals)为2

//待优化变量(rotation_vector,translation_vector)维度分别为3

static ceres::CostFunction* Create(const Point2f _pts_2d,

const Point3f _pts_3d) {

return (new ceres::AutoDiffCostFunction<PnPReprojectionError, 2, 3, 3>(

new PnPReprojectionError(_pts_2d, _pts_3d)));

}

Point2f _pts_2d;

Point3f _pts_3d;

};

int main(int argc, char **argv){

if (argc != 5) {

cout << "usage: pose_estimation_3d2d img1 img2 depth1 depth2" << endl;

return 1;

}

//-- 读取图像

Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR);

assert(img_1.data && img_2.data && "Can not load images!");

vector<KeyPoint> keypoints_1, keypoints_2;

vector<DMatch> matches;

find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);

cout << "一共找到了" << matches.size() << "组匹配点" << endl;

// 建立3D点

Mat d1 = imread(argv[3], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

vector<Point3f> pts_3d;

vector<Point2f> pts_2d;

vector<Vector3d> pts_3d_eigen;

vector<Vector2d> pts_2d_eigen;

for (DMatch m:matches) {

ushort d = d1.ptr<unsigned short>(int(keypoints_1[m.queryIdx].pt.y))[int(keypoints_1[m.queryIdx].pt.x)];

if (d == 0) // bad depth

continue;

float dd = d / 5000.0;

Point2d p1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);

pts_3d.push_back(Point3f(p1.x * dd, p1.y * dd, dd));//第一个相机观察到的3D点坐标

pts_2d.push_back(keypoints_2[m.trainIdx].pt);//特征点在第二个相机中的投影

pts_3d_eigen.push_back(Vector3d(p1.x * dd, p1.y * dd, dd));

pts_2d_eigen.push_back(Vector2d(keypoints_2[m.trainIdx].pt.x, keypoints_2[m.trainIdx].pt.y));

}

cout << "3d-2d pairs: " << pts_3d.size() << endl;

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

Mat r, t;

solvePnP(pts_3d, pts_2d, K, Mat(), r, t, false); // 调用OpenCV 的 PnP 求解,可选择EPNP,DLS等方法

Mat R;

cv::Rodrigues(r, R); // r为旋转向量形式,用Rodrigues公式转换为矩阵

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in opencv cost time: " << time_used.count() << " seconds." << endl;

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t << endl;

cout << endl;

double r_ceres[3]={0,0,0};

double t_ceres[3]={0,0,0};

ceres::Problem problem;

for (size_t i = 0; i < pts_2d.size(); ++i) {

ceres::CostFunction* cost_function =

PnPReprojectionError::Create(pts_2d[i],pts_3d[i]);

problem.AddResidualBlock(cost_function,

nullptr /* squared loss */,

r_ceres,

t_ceres);

}

t1 = chrono::steady_clock::now();

ceres::Solver::Options options;

options.linear_solver_type = ceres::DENSE_SCHUR;

options.minimizer_progress_to_stdout = true;

ceres::Solver::Summary summary;

ceres::Solve(options, &problem, &summary);

std::cout << summary.BriefReport() << "\n";

Mat r_ceres_cv=(Mat_<double>(3, 1) <<r_ceres[0], r_ceres[1], r_ceres[2]);

Mat t_ceres_cv=(Mat_<double>(3, 1) <<t_ceres[0], t_ceres[1], t_ceres[2]);

cv::Rodrigues(r_ceres_cv, R);

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t_ceres_cv << endl;

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in ceres cost time: " << time_used.count() << " seconds." << endl<< endl;

return 0;

}

void find_feature_matches(const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches) {

//-- 初始化

Mat descriptors_1, descriptors_2;

// used in OpenCV3

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// use this if you are in OpenCV2

// Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- 第一步:检测 Oriented FAST 角点位置

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- 第二步:根据角点位置计算 BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

//-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> match;

// BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, match);

//-- 第四步:匹配点对筛选

double min_dist = 10000, max_dist = 0;

//找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = match[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

for (int i = 0; i < descriptors_1.rows; i++) {

if (match[i].distance <= max(2 * min_dist, 30.0)) {

matches.push_back(match[i]);

}

}

}

Point2d pixel2cam(const Point2d &p, const Mat &K) {

return Point2d

(

(p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),

(p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)

);

}

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/features2d/features2d.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <ceres/ceres.h>

#include <ceres/rotation.h>

#include <Eigen/Core>

#include <Eigen/Geometry>

#include "sophus/se3.hpp"

#include <chrono>

using namespace std;

using namespace Eigen;

using namespace cv;

void find_feature_matches(

const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches);

// 像素坐标转相机归一化坐标

Point2d pixel2cam(const Point2d &p, const Mat &K);

void pose_estimation_3d3d(

const vector<Point3f> &pts1,

const vector<Point3f> &pts2,

Mat &R, Mat &t

);

//Ref:http://www.ceres-solver.org/nnls_tutorial.html#bundle-adjustment

struct ICPReprojectionError {

ICPReprojectionError(Point3f pts1_3d, Point3f pts2_3d)

: _pts1_3d(pts1_3d), _pts2_3d(pts2_3d) {}

template <typename T>

bool operator()(const T* const rotation_vector,

const T* const translation_vector,

T* residuals) const {

T p_transformed[3], p_origin[3];

p_origin[0]=T(_pts2_3d.x);

p_origin[1]=T(_pts2_3d.y);

p_origin[2]=T(_pts2_3d.z);

ceres::AngleAxisRotatePoint(rotation_vector, p_origin, p_transformed);

//旋转后加上平移向量

p_transformed[0] += translation_vector[0];

p_transformed[1] += translation_vector[1];

p_transformed[2] += translation_vector[2];

//计算error

residuals[0] = T(_pts1_3d.x) - p_transformed[0];

residuals[1] = T(_pts1_3d.y) - p_transformed[1];

residuals[2] = T(_pts1_3d.z) - p_transformed[2];

return true;

}

// 3,3,3指输出维度(residuals)为3

//待优化变量(rotation_vector,translation_vector)维度分别为3

static ceres::CostFunction* Create(const Point3f _pts1_3d,

const Point3f _pts2_3d) {

return (new ceres::AutoDiffCostFunction<ICPReprojectionError, 3, 3, 3>(

new ICPReprojectionError(_pts1_3d, _pts2_3d)));

}

Point3f _pts1_3d;

Point3f _pts2_3d;

};

int main(int argc, char **argv){

if (argc != 5) {

cout << "usage: pose_estimation_3d3d img1 img2 depth1 depth2" << endl;

return 1;

}

//-- 读取图像

Mat img_1 = imread(argv[1], CV_LOAD_IMAGE_COLOR);

Mat img_2 = imread(argv[2], CV_LOAD_IMAGE_COLOR);

vector<KeyPoint> keypoints_1, keypoints_2;

vector<DMatch> matches;

find_feature_matches(img_1, img_2, keypoints_1, keypoints_2, matches);

cout << "一共找到了" << matches.size() << "组匹配点" << endl;

// 建立3D点

Mat depth1 = imread(argv[3], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat depth2 = imread(argv[4], CV_LOAD_IMAGE_UNCHANGED); // 深度图为16位无符号数,单通道图像

Mat K = (Mat_<double>(3, 3) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1);

vector<Point3f> pts1, pts2;

vector<Vector3d> pts1_eigen, pts2_eigen;

for (DMatch m:matches) {

ushort d1 = depth1.ptr<unsigned short>(int(keypoints_1[m.queryIdx].pt.y))[int(keypoints_1[m.queryIdx].pt.x)];

ushort d2 = depth2.ptr<unsigned short>(int(keypoints_2[m.trainIdx].pt.y))[int(keypoints_2[m.trainIdx].pt.x)];

if (d1 == 0 || d2 == 0) // bad depth

continue;

Point2d p1 = pixel2cam(keypoints_1[m.queryIdx].pt, K);

Point2d p2 = pixel2cam(keypoints_2[m.trainIdx].pt, K);

float dd1 = float(d1) / 5000.0;

float dd2 = float(d2) / 5000.0;

pts1.push_back(Point3f(p1.x * dd1, p1.y * dd1, dd1));

pts2.push_back(Point3f(p2.x * dd2, p2.y * dd2, dd2));

pts1_eigen.push_back(Vector3d(p1.x * dd1, p1.y * dd1, dd1));

pts2_eigen.push_back(Vector3d(p2.x * dd2, p2.y * dd2, dd2));

}

cout << "3d-3d pairs: " << pts1.size() << endl;

Mat R, t;

pose_estimation_3d3d(pts1, pts2, R, t);

cout << "ICP via SVD results: " << endl;

cout << "R = " << R << endl;

cout << "t = " << t << endl;

cout << endl;

double r_ceres[3]={0,0,0};

double t_ceres[3]={0,0,0};

ceres::Problem problem;

for (size_t i = 0; i < pts1.size(); ++i) {

ceres::CostFunction* cost_function =

ICPReprojectionError::Create(pts1[i],pts2[i]);

problem.AddResidualBlock(cost_function,

nullptr /* squared loss */,

r_ceres,

t_ceres);

}

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

ceres::Solver::Options options;

options.linear_solver_type = ceres::DENSE_SCHUR;

options.minimizer_progress_to_stdout = true;

ceres::Solver::Summary summary;

ceres::Solve(options, &problem, &summary);

std::cout << summary.BriefReport() << "\n";

Mat r_ceres_cv=(Mat_<double>(3, 1) <<r_ceres[0], r_ceres[1], r_ceres[2]);

Mat t_ceres_cv=(Mat_<double>(3, 1) <<t_ceres[0], t_ceres[1], t_ceres[2]);

cv::Rodrigues(r_ceres_cv, R);

cout << "R=" << endl << R << endl;

cout << "t=" << endl << t_ceres_cv << endl;

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "solve pnp in ceres cost time: " << time_used.count() << " seconds." << endl;

return 0;

}

void find_feature_matches(const Mat &img_1, const Mat &img_2,

std::vector<KeyPoint> &keypoints_1,

std::vector<KeyPoint> &keypoints_2,

std::vector<DMatch> &matches) {

//-- 初始化

Mat descriptors_1, descriptors_2;

// used in OpenCV3

Ptr<FeatureDetector> detector = ORB::create();

Ptr<DescriptorExtractor> descriptor = ORB::create();

// use this if you are in OpenCV2

// Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );

// Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

//-- 第一步:检测 Oriented FAST 角点位置

detector->detect(img_1, keypoints_1);

detector->detect(img_2, keypoints_2);

//-- 第二步:根据角点位置计算 BRIEF 描述子

descriptor->compute(img_1, keypoints_1, descriptors_1);

descriptor->compute(img_2, keypoints_2, descriptors_2);

//-- 第三步:对两幅图像中的BRIEF描述子进行匹配,使用 Hamming 距离

vector<DMatch> match;

// BFMatcher matcher ( NORM_HAMMING );

matcher->match(descriptors_1, descriptors_2, match);

//-- 第四步:匹配点对筛选

double min_dist = 10000, max_dist = 0;

//找出所有匹配之间的最小距离和最大距离, 即是最相似的和最不相似的两组点之间的距离

for (int i = 0; i < descriptors_1.rows; i++) {

double dist = match[i].distance;

if (dist < min_dist) min_dist = dist;

if (dist > max_dist) max_dist = dist;

}

printf("-- Max dist : %f \n", max_dist);

printf("-- Min dist : %f \n", min_dist);

//当描述子之间的距离大于两倍的最小距离时,即认为匹配有误.但有时候最小距离会非常小,设置一个经验值30作为下限.

for (int i = 0; i < descriptors_1.rows; i++) {

if (match[i].distance <= max(2 * min_dist, 30.0)) {

matches.push_back(match[i]);

}

}

}

Point2d pixel2cam(const Point2d &p, const Mat &K) {

return Point2d

(

(p.x - K.at<double>(0, 2)) / K.at<double>(0, 0),

(p.y - K.at<double>(1, 2)) / K.at<double>(1, 1)

);

}

void pose_estimation_3d3d(const vector<Point3f> &pts1,

const vector<Point3f> &pts2,

Mat &R, Mat &t) {

Point3f p1, p2; // center of mass

int N = pts1.size();

for (int i = 0; i < N; i++) {

p1 += pts1[i];

p2 += pts2[i];

}

p1 = Point3f(Vec3f(p1) / N);

p2 = Point3f(Vec3f(p2) / N);

vector<Point3f> q1(N), q2(N); // remove the center

for (int i = 0; i < N; i++) {

q1[i] = pts1[i] - p1;

q2[i] = pts2[i] - p2;

}

// compute q1*q2^T

Eigen::Matrix3d W = Eigen::Matrix3d::Zero();

for (int i = 0; i < N; i++) {

W += Eigen::Vector3d(q1[i].x, q1[i].y, q1[i].z) * Eigen::Vector3d(q2[i].x, q2[i].y, q2[i].z).transpose();

}

cout << "W=" << W << endl;

// SVD on W

Eigen::JacobiSVD<Eigen::Matrix3d> svd(W, Eigen::ComputeFullU | Eigen::ComputeFullV);

Eigen::Matrix3d U = svd.matrixU();

Eigen::Matrix3d V = svd.matrixV();

cout << "U=" << U << endl;

cout << "V=" << V << endl;

Eigen::Matrix3d R_ = U * (V.transpose());

if (R_.determinant() < 0) {

R_ = -R_;

}

Eigen::Vector3d t_ = Eigen::Vector3d(p1.x, p1.y, p1.z) - R_ * Eigen::Vector3d(p2.x, p2.y, p2.z);

// convert to cv::Mat

R = (Mat_<double>(3, 3) <<

R_(0, 0), R_(0, 1), R_(0, 2),

R_(1, 0), R_(1, 1), R_(1, 2),

R_(2, 0), R_(2, 1), R_(2, 2)

);

t = (Mat_<double>(3, 1) << t_(0, 0), t_(1, 0), t_(2, 0));

}