Contents

1. Background

2. Example

2.1 Requirement

2.2 Implementation

FlowBean class

Mapper class

Reducer class

Driver class

3. Summary

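1. Background

TreeMap is backed by a red-black tree and keeps its entries sorted by key. By default it uses the key's natural ordering (numeric order for Integer, lexicographic order for String). If the key is a custom class, the class can implement Comparable and define the order in compareTo, or a Comparator can be passed to the TreeMap constructor.

firstKey() returns the first key in the TreeMap, which is the smallest key under the map's ordering.

lastKey() returns the last key in the TreeMap, which is the largest key under the map's ordering.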
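To make the ordering rules concrete, here is a minimal sketch. The class name `TreeMapOrderingDemo`, the phone numbers and the flow totals are made up for illustration; only `TreeMap`, `firstKey()`, `lastKey()` and `Comparator.reverseOrder()` come from the JDK.

```java
import java.util.Comparator;
import java.util.TreeMap;

class TreeMapOrderingDemo {

    // Inserts three (total flow -> phone number) pairs in arbitrary order.
    static void fill(TreeMap<Long, String> map) {
        map.put(300L, "13500000003");
        map.put(100L, "13500000001");
        map.put(200L, "13500000002");
    }

    // Natural ordering of Long: keys are sorted from smallest to largest.
    static TreeMap<Long, String> ascending() {
        TreeMap<Long, String> map = new TreeMap<>();
        fill(map);
        return map;
    }

    // Reversed ordering supplied through a Comparator: largest key first.
    static TreeMap<Long, String> descending() {
        TreeMap<Long, String> map = new TreeMap<>(Comparator.reverseOrder());
        fill(map);
        return map;
    }

    public static void main(String[] args) {
        TreeMap<Long, String> asc = ascending();
        System.out.println(asc.firstKey() + " " + asc.lastKey());   // prints "100 300"

        TreeMap<Long, String> desc = descending();
        System.out.println(desc.firstKey() + " " + desc.lastKey()); // prints "300 100"
    }
}
```

Note that "first" and "last" follow the map's ordering, not the numeric size of the key: once the order is reversed, lastKey() is the smallest value.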

2. Example

2.1 Requirement

2.2 Implementation

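The setup() and cleanup() methods:

1. setup(): the MapReduce framework calls this method exactly once per Map task, before the first call to map(). It is the place to initialize variables and resources in one go. If that initialization were done inside map(), the Mapper would repeat it for every input line, which is wasteful.

2. cleanup(): the MapReduce framework calls this method exactly once per Map task, after the last call to map(). It is the place to release variables and resources. If resources were released inside map(), they would be released after every line and re-initialized before the next one, again wasting work.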
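The call order can be sketched in plain Java without a Hadoop dependency. `LifecycleSketch` and `CountingTask` below are hypothetical stand-ins, not Hadoop classes; they only record which hook ran. Hadoop's own Mapper.run() drives its hooks in the same order: setup(), then map() once per record, then cleanup().

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class LifecycleSketch {

    // Hypothetical stand-in for a Mapper: each hook just logs its own name.
    static class CountingTask {
        final List<String> calls = new ArrayList<>();

        void setup() { calls.add("setup"); }

        void map(String line) { calls.add("map:" + line); }

        void cleanup() { calls.add("cleanup"); }

        // One task run: setup once, map once per record, cleanup once at the end.
        void run(List<String> split) {
            setup();
            try {
                for (String line : split) {
                    map(line);
                }
            } finally {
                cleanup();
            }
        }
    }

    // Runs one task over the given lines and returns the recorded call order.
    static List<String> trace(List<String> split) {
        CountingTask task = new CountingTask();
        task.run(split);
        return task.calls;
    }

    public static void main(String[] args) {
        // prints "[setup, map:a, map:b, map:c, cleanup]"
        System.out.println(trace(Arrays.asList("a", "b", "c")));
    }
}
```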

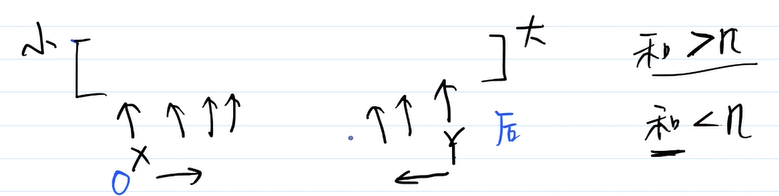

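This example relies on cleanup(): map() and reduce() only keep the TreeMap trimmed to n entries, while cleanup() writes the TreeMap's entries out to the next stage. That output has to happen only once, so it belongs in cleanup().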
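The trimming step on its own can be sketched as follows. This is an illustration under simplifying assumptions, not the article's Mapper: the keys are plain Long totals instead of FlowBean objects, the value is a placeholder, and the name `BoundedTopN` is made up.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.TreeMap;

class BoundedTopN {

    // Keeps the n largest totals seen so far. Keys sort in descending order,
    // so lastKey() is always the smallest total and is the one to evict.
    static List<Long> topN(long[] totals, int n) {
        TreeMap<Long, Boolean> top = new TreeMap<>(Comparator.reverseOrder());
        for (long total : totals) {
            top.put(total, Boolean.TRUE);      // placeholder value; only keys matter here
            if (top.size() > n) {
                top.remove(top.lastKey());     // drop the current minimum
            }
        }
        return new ArrayList<>(top.keySet()); // largest first
    }

    public static void main(String[] args) {
        // prints "[42, 19, 7]"
        System.out.println(topN(new long[] {5, 42, 7, 19, 3, 42}, 3));
    }
}
```

The map never holds more than n + 1 entries, whatever the input size. The value 42 occurs twice in the input but only once in the result, because a map stores each key once. The FlowBean in this article behaves the same way: two beans with the same sumFlow compare as equal, so the TreeMap keeps a single entry for them.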

FlowBean class

    package com.atguigu.mr.top;

    import java.io.DataInput;
    import java.io.DataOutput;
    import java.io.IOException;

    import org.apache.hadoop.io.WritableComparable;

    public class FlowBean implements WritableComparable<FlowBean> {

        private long upFlow;
        private long downFlow;
        private long sumFlow;

        // No-arg constructor, needed so the framework can create instances when deserializing
        public FlowBean() {
            super();
        }

        public FlowBean(long upFlow, long downFlow) {
            super();
            this.upFlow = upFlow;
            this.downFlow = downFlow;
            // Keep the total consistent with its two parts
            this.sumFlow = upFlow + downFlow;
        }

        // Serialization: the field order must match readFields()
        @Override
        public void write(DataOutput out) throws IOException {
            out.writeLong(upFlow);
            out.writeLong(downFlow);
            out.writeLong(sumFlow);
        }

        // Deserialization: read the fields in the order write() wrote them
        @Override
        public void readFields(DataInput in) throws IOException {
            upFlow = in.readLong();
            downFlow = in.readLong();
            sumFlow = in.readLong();
        }

        public long getUpFlow() {
            return upFlow;
        }

        public void setUpFlow(long upFlow) {
            this.upFlow = upFlow;
        }

        public long getDownFlow() {
            return downFlow;
        }

        public void setDownFlow(long downFlow) {
            this.downFlow = downFlow;
        }

        public long getSumFlow() {
            return sumFlow;
        }

        public void setSumFlow(long sumFlow) {
            this.sumFlow = sumFlow;
        }

        @Override
        public String toString() {
            return upFlow + "\t" + downFlow + "\t" + sumFlow;
        }

        // Sets both parts and recomputes the total
        public void set(long downFlow2, long upFlow2) {
            downFlow = downFlow2;
            upFlow = upFlow2;
            sumFlow = downFlow2 + upFlow2;
        }

        // Descending by total flow: the bean with the larger sumFlow sorts first.
        // Beans with equal sumFlow compare as equal, so a TreeMap keeps only one entry for them.
        @Override
        public int compareTo(FlowBean bean) {
            return Long.compare(bean.getSumFlow(), this.sumFlow);
        }
    }

Mapper class

    package com.atguigu.mr.top;

    import java.io.IOException;
    import java.util.Map;
    import java.util.TreeMap;

    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    public class TopNMapper extends Mapper<LongWritable, Text, FlowBean, Text> {

        // TreeMap used as the data container (it keeps its entries sorted by key)
        private TreeMap<FlowBean, Text> flowMap = new TreeMap<FlowBean, Text>();

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // A fresh key and value per record: the TreeMap stores references, so they must not be reused
            FlowBean kBean = new FlowBean();
            Text v = new Text();

            // 1 Read one line
            String line = value.toString();

            // 2 Split it on tabs
            String[] fields = line.split("\t");

            // 3 Wrap the fields: phone number, upstream flow, downstream flow, total flow
            String phoneNum = fields[0];
            long upFlow = Long.parseLong(fields[1]);
            long downFlow = Long.parseLong(fields[2]);
            long sumFlow = Long.parseLong(fields[3]);

            kBean.setDownFlow(downFlow);
            kBean.setUpFlow(upFlow);
            kBean.setSumFlow(sumFlow);
            v.set(phoneNum);

            // 4 Add the record to the TreeMap
            flowMap.put(kBean, v);

            // 5 Cap the TreeMap at 10 entries: once it grows past 10, remove the entry with the smallest total.
            //   FlowBean sorts in descending order, so the smallest total is at lastKey(), not firstKey().
            if (flowMap.size() > 10) {
                flowMap.remove(flowMap.lastKey());
            }
        }

        @Override
        protected void cleanup(Context context) throws IOException, InterruptedException {
            // 6 Iterate over the TreeMap and emit its entries
            for (Map.Entry<FlowBean, Text> entry : flowMap.entrySet()) {
                context.write(entry.getKey(), entry.getValue());
            }
        }
    }

Reducer class

    package com.atguigu.mr.top;

    import java.io.IOException;
    import java.util.Map;
    import java.util.TreeMap;

    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class TopNReducer extends Reducer<FlowBean, Text, Text, FlowBean> {

        // TreeMap used as the data container (it keeps its entries sorted by key)
        private TreeMap<FlowBean, Text> flowMap = new TreeMap<FlowBean, Text>();

        @Override
        protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            for (Text value : values) {
                // Copy key and value into new objects, because the framework reuses the ones it passes in
                FlowBean bean = new FlowBean();
                bean.set(key.getDownFlow(), key.getUpFlow());

                // 1 Add the record to the TreeMap
                flowMap.put(bean, new Text(value));

                // 2 Cap the TreeMap at 10 entries: once it grows past 10, remove the entry with the smallest total.
                //   FlowBean sorts in descending order, so the smallest total is at lastKey(), not firstKey().
                if (flowMap.size() > 10) {
                    flowMap.remove(flowMap.lastKey());
                }
            }
        }

        @Override
        protected void cleanup(Context context) throws IOException, InterruptedException {
            // 3 Iterate over the TreeMap and emit (phone number, flow bean) pairs
            for (Map.Entry<FlowBean, Text> entry : flowMap.entrySet()) {
                context.write(entry.getValue(), entry.getKey());
            }
        }
    }

Driver class

    package com.atguigu.mr.top;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class TopNDriver {

        public static void main(String[] args) throws Exception {
            // Hard-coded input and output paths for a local test run;
            // remove this line to take the paths from the command line
            args = new String[]{"e:/output1","e:/output3"};

            // 1 Get the configuration and create the job instance
            Configuration configuration = new Configuration();
            Job job = Job.getInstance(configuration);

            // 2 Tell the job which jar contains this program
            job.setJarByClass(TopNDriver.class);

            // 3 Set the Mapper and Reducer classes for this job
            job.setMapperClass(TopNMapper.class);
            job.setReducerClass(TopNReducer.class);

            // A global TopN needs a single reducer; 1 is also the default
            job.setNumReduceTasks(1);

            // 4 Set the key and value types of the Mapper output
            job.setMapOutputKeyClass(FlowBean.class);
            job.setMapOutputValueClass(Text.class);

            // 5 Set the key and value types of the final output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(FlowBean.class);

            // 6 Set the input directory and the output directory of the job
            FileInputFormat.setInputPaths(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));

            // 7 Submit the job with its configuration and jar, then wait for it to finish
            boolean result = job.waitForCompletion(true);
            System.exit(result ? 0 : 1);
        }
    }

3. Summary

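Steps for implementing TopN with MapReduce:

(1) Use a TreeMap for sorting: put each incoming record into the TreeMap, and whenever its size exceeds n, remove the entry at firstKey() or lastKey(), depending on whether the keys are sorted in ascending or descending order.

(2) Each Mapper first computes the TopN of its own input; the Reducer then merges these per-Mapper TopN results and computes the final TopN. The filtering runs in parallel across the Mappers, and each Mapper sends at most n records through the shuffle, so much less data crosses the network and the whole job runs faster.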
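The two steps can be simulated in plain Java. The sketch below is an illustration only: `TwoStageTopN` is a made-up name, the "splits" are in-memory lists rather than HDFS input splits, the totals are assumed to be distinct, and no Hadoop classes are involved.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.TreeMap;

class TwoStageTopN {

    // Step (1), as run on each "mapper": keep only the n largest totals of one split.
    static List<Long> localTopN(List<Long> split, int n) {
        TreeMap<Long, Boolean> top = new TreeMap<>(Comparator.reverseOrder());
        for (Long total : split) {
            top.put(total, Boolean.TRUE);
            if (top.size() > n) {
                top.remove(top.lastKey()); // descending order: lastKey() is the minimum
            }
        }
        return new ArrayList<>(top.keySet());
    }

    // Step (2), as run on the single "reducer": merge the local winners and trim again.
    static List<Long> globalTopN(List<List<Long>> splits, int n) {
        List<Long> candidates = new ArrayList<>();
        for (List<Long> split : splits) {
            candidates.addAll(localTopN(split, n)); // at most n records per split are "shuffled"
        }
        return localTopN(candidates, n);
    }

    public static void main(String[] args) {
        List<List<Long>> splits = Arrays.asList(
                Arrays.asList(10L, 80L, 30L, 60L),
                Arrays.asList(70L, 20L, 90L, 40L));
        // Local results are [80, 60] and [90, 70]; prints "[90, 80]"
        System.out.println(globalTopN(splits, 2));
    }
}
```

In this run the reducer side sees 4 candidates instead of all 8 records. The result is still exact, because any record in the global top n must also be in the top n of its own split.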

Reference: mapreduce求topN - hdc520 - 博客园 (cnblogs.com)