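Hello everyone, I'm 微学AI. Today I'll walk through lesson 15 of the AI Algorithm Engineer (Intermediate) course: common network models, their design principles, and annotated code.

This article covers common network models, their design principles, and their code, including the classic LeNet, AlexNet, VggNet, GoogLeNet, InceptionNet, ResNet, DenseNet, DarkNet, and MobileNet. In short: LeNet is one of the earliest convolutional neural networks and targets handwritten digit recognition; AlexNet popularized the ReLU activation and Dropout and markedly improved image recognition accuracy; VggNet reaches greater depth by stacking convolutional layers; GoogLeNet's Inception module increases both depth and width; ResNet uses residual learning to make very deep networks trainable; DenseNet improves parameter efficiency through feature reuse; DarkNet is the efficient backbone of the YOLO family; and MobileNet uses depthwise separable convolution to make deployment on mobile devices practical.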

Contents

- I. LeNet
- II. AlexNet
- III. VggNet
- IV. GoogLeNet (InceptionNet v1)
- V. ResNet
- VI. DenseNet
- VII. DarkNet
- VIII. MobileNet
- IX. Summary

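I. LeNet

- Mathematical principles

LeNet is a convolutional neural network (CNN) for handwritten digit recognition, built from convolutional layers, pooling layers, and fully connected layers. The underlying math is the convolution operation and the pooling operation.

- Code walkthrough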

    import torch.nn as nn
    import torch.nn.functional as F


    class LeNet(nn.Module):
        def __init__(self):
            super(LeNet, self).__init__()
            self.conv1 = nn.Conv2d(1, 6, 5)
            self.conv2 = nn.Conv2d(6, 16, 5)
            # 16*4*4 matches a 1x28x28 input (e.g. MNIST) after two conv + pool stages
            self.fc1 = nn.Linear(16*4*4, 120)
            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            x = F.max_pool2d(F.relu(self.conv1(x)), (2, 2))
            x = F.max_pool2d(F.relu(self.conv2(x)), (2, 2))
            x = x.view(-1, self.num_flat_features(x))
            x = F.relu(self.fc1(x))
            x = F.relu(self.fc2(x))
            x = self.fc3(x)
            return x

        def num_flat_features(self, x):
            size = x.size()[1:]  # all dimensions except the batch dimension
            num_features = 1
            for s in size:
                num_features *= s
            return num_features

    net = LeNet()
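To see where the `16*4*4` in `fc1` comes from, it helps to trace the spatial size by hand. The sketch below uses the standard output-size formula floor((n + 2p - k) / s) + 1 and assumes a 28x28 single-channel input (MNIST-sized). The helper name `out_size` is made up for illustration and is not part of PyTorch.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Spatial size after a convolution or pooling layer (floor mode)."""
    return (n + 2 * padding - kernel) // stride + 1


n = 28                                # assumed input height and width
n = out_size(n, kernel=5)             # conv1, 5x5, no padding -> 24
n = out_size(n, kernel=2, stride=2)   # 2x2 max pooling        -> 12
n = out_size(n, kernel=5)             # conv2, 5x5, no padding -> 8
n = out_size(n, kernel=2, stride=2)   # 2x2 max pooling        -> 4
flat_features = 16 * n * n            # 16 channels out of conv2
print(n, flat_features)               # 4 256
```

With the classic 32x32 LeNet-5 input the same trace ends at 5 instead of 4, so `fc1` would need `16*5*5` inputs.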

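II. AlexNet

- Mathematical principles

AlexNet was the first deep convolutional neural network to win the ImageNet competition (ILSVRC 2012). It popularized the ReLU activation and used local response normalization (LRN) and overlapping max pooling. The code below keeps ReLU, overlapping pooling (3x3 windows with stride 2), and Dropout, but leaves out LRN.

- Code walkthrough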

    class AlexNet(nn.Module):
        def __init__(self, num_classes=1000):
            super(AlexNet, self).__init__()
            self.features = nn.Sequential(
                nn.Conv2d(3, 96, kernel_size=11, stride=4, padding=2),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2),
                nn.Conv2d(96, 256, kernel_size=5, padding=2),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2),
                nn.Conv2d(256, 384, kernel_size=3, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(384, 384, kernel_size=3, padding=1),
                nn.ReLU(inplace=True),
                nn.Conv2d(384, 256, kernel_size=3, padding=1),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(kernel_size=3, stride=2),
            )
            self.classifier = nn.Sequential(
                nn.Dropout(),
                nn.Linear(256 * 6 * 6, 4096),
                nn.ReLU(inplace=True),
                nn.Dropout(),
                nn.Linear(4096, 4096),
                nn.ReLU(inplace=True),
                nn.Linear(4096, num_classes),
            )

        def forward(self, x):
            x = self.features(x)
            x = x.view(x.size(0), -1)
            x = self.classifier(x)
            return x

    net = AlexNet()
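A quick parameter count shows where AlexNet's capacity sits, and why it is natural for the code above to apply Dropout in the classifier. The sketch below counts weights and biases by hand for the layer sizes used above with `num_classes=1000`. The helper names `conv_params` and `linear_params` are illustrative only.

```python
def conv_params(c_in, c_out, k):
    """Weights plus biases of a k x k convolution."""
    return c_in * c_out * k * k + c_out


def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


# (in_channels, out_channels, kernel_size) of the five conv layers
convs = [(3, 96, 11), (96, 256, 5), (256, 384, 3), (384, 384, 3), (384, 256, 3)]
# (in_features, out_features) of the three linear layers
linears = [(256 * 6 * 6, 4096), (4096, 4096), (4096, 1000)]

feature_params = sum(conv_params(*c) for c in convs)
classifier_params = sum(linear_params(*l) for l in linears)
total_params = feature_params + classifier_params

print(feature_params)     # 3747200
print(classifier_params)  # 58631144
print(total_params)       # 62378344
print(round(classifier_params / total_params, 2))  # 0.94
```

Roughly 94% of the parameters live in the three fully connected layers, which is the part of the network most prone to overfitting.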

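III. VggNet

- Mathematical principles

VggNet builds a deep network by repeating one simple convolutional pattern. It showed that stacking small (3x3) convolution kernels is enough to build a deep, accurate network.

- Code walkthrough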

    class VGG(nn.Module):
        def __init__(self, features, num_classes=1000):
            super(VGG, self).__init__()
            self.features = features
            self.classifier = nn.Sequential(
                nn.Linear(512 * 7 * 7, 4096),
                nn.ReLU(True),
                nn.Dropout(),
                nn.Linear(4096, 4096),
                nn.ReLU(True),
                nn.Dropout(),
                nn.Linear(4096, num_classes),
            )

        def forward(self, x):
            x = self.features(x)
            x = x.view(x.size(0), -1)
            x = self.classifier(x)
            return x

    def make_layers(cfg, batch_norm=False):
        layers = []
        in_channels = 3
        for v in cfg:
            if v == 'M':
                layers += [nn.MaxPool2d(kernel_size=2, stride=2)]
            else:
                conv2d = nn.Conv2d(in_channels, v, kernel_size=3, padding=1)
                if batch_norm:
                    layers += [conv2d, nn.BatchNorm2d(v), nn.ReLU(inplace=True)]
                else:
                    layers += [conv2d, nn.ReLU(inplace=True)]
                in_channels = v
        return nn.Sequential(*layers)

    cfg = {
        'VGG11': [64, 'M', 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],
        'VGG13': [64, 64, 'M', 128, 128, 'M', 256, 256, 'M', 512, 512, 'M', 512, 512, 'M'],
        'VGG16': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 'M', 512, 512, 512, 'M', 512, 512, 512, 'M'],
        'VGG19': [64, 64, 'M', 128, 128, 'M', 256, 256, 256, 256, 'M', 512, 512, 512, 512, 'M', 512, 512, 512, 512, 'M'],
    }

    vgg11 = VGG(make_layers(cfg['VGG11']))
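The case for small kernels can be checked with a little arithmetic: a stack of 3x3 convolutions sees the same input window as one larger kernel, but with fewer weights. The sketch below is a back-of-the-envelope calculation, assuming stride-1 layers with the same channel count in and out. Both helper names are hypothetical.

```python
def stacked_receptive_field(num_layers, kernel=3):
    """Receptive field of num_layers stacked stride-1 convolutions."""
    rf = 1
    for _ in range(num_layers):
        rf += kernel - 1
    return rf


def conv_weights(channels, kernel, num_layers=1):
    """Weight count (biases ignored) with `channels` inputs and outputs per layer."""
    return num_layers * channels * channels * kernel * kernel


channels = 512  # example width, as in the deepest stages of the configs above
print(stacked_receptive_field(2), stacked_receptive_field(3))  # 5 7

three_3x3 = conv_weights(channels, kernel=3, num_layers=3)
one_7x7 = conv_weights(channels, kernel=7)
print(three_3x3, one_7x7)  # 7077888 12845056
```

Three 3x3 layers cover a 7x7 window with about 55% of the weights of a single 7x7 layer, and they add two extra nonlinearities along the way.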

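IV. GoogLeNet (InceptionNet v1)

- Mathematical principles

GoogLeNet introduced the Inception module, which runs convolutions of different kernel sizes and a pooling branch in parallel and concatenates their outputs along the channel dimension. This increases the network's depth and width while keeping the computational cost under control.

- Code walkthrough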

    import torch  # needed for torch.cat


    class Inception(nn.Module):
        def __init__(self, in_planes, n1x1, n3x3red, n3x3, n5x5red, n5x5, pool_planes):
            super(Inception, self).__init__()
            # Branch 1: 1x1 conv
            self.b1 = nn.Sequential(
                nn.Conv2d(in_planes, n1x1, kernel_size=1),
                nn.ReLU(True),
            )
            # Branch 2: 1x1 reduction, then 3x3 conv
            self.b2 = nn.Sequential(
                nn.Conv2d(in_planes, n3x3red, kernel_size=1),
                nn.ReLU(True),
                nn.Conv2d(n3x3red, n3x3, kernel_size=3, padding=1),
                nn.ReLU(True),
            )
            # Branch 3: 1x1 reduction, then 5x5 conv
            self.b3 = nn.Sequential(
                nn.Conv2d(in_planes, n5x5red, kernel_size=1),
                nn.ReLU(True),
                nn.Conv2d(n5x5red, n5x5, kernel_size=5, padding=2),
                nn.ReLU(True),
            )
            # Branch 4: 3x3 max pooling, then 1x1 conv
            self.b4 = nn.Sequential(
                nn.MaxPool2d(3, stride=1, padding=1),
                nn.Conv2d(in_planes, pool_planes, kernel_size=1),
                nn.ReLU(True),
            )

        def forward(self, x):
            y1 = self.b1(x)
            y2 = self.b2(x)
            y3 = self.b3(x)
            y4 = self.b4(x)
            # All branches keep the spatial size, so they can be joined on the channel axis
            return torch.cat([y1, y2, y3, y4], 1)

    class GoogLeNet(nn.Module):
        def __init__(self):
            super(GoogLeNet, self).__init__()
            # Simplified stem: a single 3x3 conv, no auxiliary classifiers
            self.pre_layers = nn.Sequential(
                nn.Conv2d(3, 192, kernel_size=3, padding=1),
                nn.ReLU(True),
            )
            self.a3 = Inception(192, 64, 96, 128, 16, 32, 32)
            self.b3 = Inception(256, 128, 128, 192, 32, 96, 64)
            self.maxpool = nn.MaxPool2d(3, stride=2, padding=1)
            self.a4 = Inception(480, 192, 96, 208, 16, 48, 64)
            self.b4 = Inception(512, 160, 112, 224, 24, 64, 64)
            self.c4 = Inception(512, 128, 128, 256, 24, 64, 64)
            self.d4 = Inception(512, 112, 144, 288, 32, 64, 64)
            self.e4 = Inception(528, 256, 160, 320, 32, 128, 128)
            self.a5 = Inception(832, 256, 160, 320, 32, 128, 128)
            self.b5 = Inception(832, 384, 192, 384, 48, 128, 128)
            # Global average pooling; the adaptive form makes the head independent of input size
            # (a fixed 8x8 window would only fit 32x32 inputs)
            self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.linear = nn.Linear(1024, 1000)

        def forward(self, x):
            out = self.pre_layers(x)
            out = self.a3(out)
            out = self.b3(out)
            out = self.maxpool(out)
            out = self.a4(out)
            out = self.b4(out)
            out = self.c4(out)
            out = self.d4(out)
            out = self.e4(out)
            out = self.maxpool(out)
            out = self.a5(out)
            out = self.b5(out)
            out = self.avgpool(out)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out

    net = GoogLeNet()
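Two pieces of bookkeeping make the Inception design easier to follow: each module's output width is the sum of its four branch widths, and the 1x1 "reduce" convolutions are what keep the larger kernels affordable. The sketch below checks both using the module configurations from the GoogLeNet class above. The helper name `inception_out_channels` is made up for illustration.

```python
# (in_planes, n1x1, n3x3red, n3x3, n5x5red, n5x5, pool_planes), copied from GoogLeNet above
configs = [
    ("a3", (192, 64, 96, 128, 16, 32, 32)),
    ("b3", (256, 128, 128, 192, 32, 96, 64)),
    ("a4", (480, 192, 96, 208, 16, 48, 64)),
    ("b4", (512, 160, 112, 224, 24, 64, 64)),
    ("c4", (512, 128, 128, 256, 24, 64, 64)),
    ("d4", (512, 112, 144, 288, 32, 64, 64)),
    ("e4", (528, 256, 160, 320, 32, 128, 128)),
    ("a5", (832, 256, 160, 320, 32, 128, 128)),
    ("b5", (832, 384, 192, 384, 48, 128, 128)),
]


def inception_out_channels(cfg):
    """Concatenated width: 1x1 branch + 3x3 branch + 5x5 branch + pooling branch."""
    _, n1x1, _, n3x3, _, n5x5, pool_planes = cfg
    return n1x1 + n3x3 + n5x5 + pool_planes


channels = 192  # width after the stem conv
for name, cfg in configs:
    assert cfg[0] == channels, f"{name} expects {cfg[0]} input channels, got {channels}"
    channels = inception_out_channels(cfg)
print(channels)  # 1024, the input width of the final linear layer

# Weight count of the 5x5 branch in a3, with and without the 1x1 reduction
direct_5x5 = 192 * 32 * 5 * 5
reduced_5x5 = 192 * 16 * 1 * 1 + 16 * 32 * 5 * 5
print(direct_5x5, reduced_5x5)  # 153600 15872
```

In this one branch the 1x1 reduction cuts the weight count by roughly a factor of ten, which is how the module can afford to run several kernel sizes side by side.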

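V. ResNet

- Mathematical principles

ResNet introduced residual learning to address the difficulty of training deep networks. Each block computes y = F(x) + x, where F is a small stack of layers and the added x is a skip (shortcut) connection. The shortcut lets gradients flow directly back to earlier layers, which mitigates the vanishing gradient problem.

- Code walkthrough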

    class BasicBlock(nn.Module):
        expansion = 1

        def __init__(self, in_planes, planes, stride=1):
            super(BasicBlock, self).__init__()
            self.conv1 = nn.Conv2d(in_planes, planes, kernel_size=3, stride=stride, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(planes)
            self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=1, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(planes)

            # Identity shortcut by default; use a 1x1 conv when the shape changes
            self.shortcut = nn.Sequential()
            if stride != 1 or in_planes != self.expansion*planes:
                self.shortcut = nn.Sequential(
                    nn.Conv2d(in_planes, self.expansion*planes, kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(self.expansion*planes)
                )

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.bn2(self.conv2(out))
            out += self.shortcut(x)  # residual connection: F(x) + x
            out = F.relu(out)
            return out

    class ResNet(nn.Module):
        def __init__(self, block, num_blocks, num_classes=1000):
            super(ResNet, self).__init__()
            self.in_planes = 64

            self.conv1 = nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(64)
            self.layer1 = self._make_layer(block, 64, num_blocks[0], stride=1)
            self.layer2 = self._make_layer(block, 128, num_blocks[1], stride=2)
            self.layer3 = self._make_layer(block, 256, num_blocks[2], stride=2)
            self.layer4 = self._make_layer(block, 512, num_blocks[3], stride=2)
            self.linear = nn.Linear(512*block.expansion, num_classes)

        def _make_layer(self, block, planes, num_blocks, stride):
            # Only the first block of a stage downsamples
            strides = [stride] + [1]*(num_blocks-1)
            layers = []
            for stride in strides:
                layers.append(block(self.in_planes, planes, stride))
                self.in_planes = planes * block.expansion
            return nn.Sequential(*layers)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)))
            out = self.layer1(out)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.layer4(out)
            # Global average pooling; a fixed 4x4 window would only fit 32x32 inputs
            out = F.adaptive_avg_pool2d(out, 1)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out

    def ResNet18():
        return ResNet(BasicBlock, [2,2,2,2])

    net = ResNet18()
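A toy calculation gives some intuition for why the shortcut helps gradients. The sketch below deliberately simplifies things: every layer is a scalar linear map with a small weight, and BatchNorm and ReLU are ignored. It is an illustration of the chain rule, not a model of real ResNet training, and both function names are hypothetical.

```python
def plain_gradient(weights):
    """d(output)/d(input) for stacked scalar layers y = w * x."""
    grad = 1.0
    for w in weights:
        grad *= w          # chain rule: each layer contributes a factor w
    return grad


def residual_gradient(weights):
    """Same stack, but each layer is y = x + w * x (identity shortcut)."""
    grad = 1.0
    for w in weights:
        grad *= 1.0 + w    # the shortcut adds 1 to every factor
    return grad


weights = [0.1] * 20       # 20 layers with small weights
plain = plain_gradient(weights)
residual = residual_gradient(weights)
print(plain)               # on the order of 1e-20: the gradient has vanished
print(round(residual, 2))  # 6.73: the gradient survives
```

Without shortcuts the gradient is a product of small factors and collapses toward zero. With shortcuts each factor is 1 plus something, so the signal reaching early layers stays usable.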

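VI. DenseNet

- Mathematical principles

DenseNet connects each layer to all preceding layers within a dense block, rather than only to the layer before it, by concatenating their feature maps. Because every layer can reuse earlier features, each layer only needs to add a small number of new channels (the growth rate), which reduces the parameter count.

- Code walkthrough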

    import math
    import torch  # needed for torch.cat


    class Bottleneck(nn.Module):
        def __init__(self, in_planes, growth_rate):
            super(Bottleneck, self).__init__()
            self.bn1 = nn.BatchNorm2d(in_planes)
            self.conv1 = nn.Conv2d(in_planes, 4*growth_rate, kernel_size=1, bias=False)
            self.bn2 = nn.BatchNorm2d(4*growth_rate)
            self.conv2 = nn.Conv2d(4*growth_rate, growth_rate, kernel_size=3, padding=1, bias=False)

        def forward(self, x):
            out = self.conv1(F.relu(self.bn1(x)))
            out = self.conv2(F.relu(self.bn2(out)))
            # Dense connection: append growth_rate new channels to the input
            out = torch.cat([out,x], 1)
            return out

    class Transition(nn.Module):
        def __init__(self, in_planes, out_planes):
            super(Transition, self).__init__()
            self.bn = nn.BatchNorm2d(in_planes)
            self.conv = nn.Conv2d(in_planes, out_planes, kernel_size=1, bias=False)

        def forward(self, x):
            out = self.conv(F.relu(self.bn(x)))
            out = F.avg_pool2d(out, 2)  # halve the spatial size between dense blocks
            return out

    class DenseNet(nn.Module):
        def __init__(self, block, nblocks, growth_rate=12, reduction=0.5, num_classes=1000):
            super(DenseNet, self).__init__()
            self.growth_rate = growth_rate

            num_planes = 2*growth_rate
            self.conv1 = nn.Conv2d(3, num_planes, kernel_size=3, padding=1, bias=False)

            self.dense1 = self._make_dense_layers(block, num_planes, nblocks[0])
            num_planes += nblocks[0]*growth_rate
            out_planes = int(math.floor(num_planes*reduction))
            self.trans1 = Transition(num_planes, out_planes)
            num_planes = out_planes

            self.dense2 = self._make_dense_layers(block, num_planes, nblocks[1])
            num_planes += nblocks[1]*growth_rate
            out_planes = int(math.floor(num_planes*reduction))
            self.trans2 = Transition(num_planes, out_planes)
            num_planes = out_planes

            self.dense3 = self._make_dense_layers(block, num_planes, nblocks[2])
            num_planes += nblocks[2]*growth_rate
            out_planes = int(math.floor(num_planes*reduction))
            self.trans3 = Transition(num_planes, out_planes)
            num_planes = out_planes

            self.dense4 = self._make_dense_layers(block, num_planes, nblocks[3])
            num_planes += nblocks[3]*growth_rate

            self.bn = nn.BatchNorm2d(num_planes)
            self.linear = nn.Linear(num_planes, num_classes)

        def _make_dense_layers(self, block, in_planes, nblock):
            layers = []
            for i in range(nblock):
                layers.append(block(in_planes, self.growth_rate))
                in_planes += self.growth_rate
            return nn.Sequential(*layers)

        def forward(self, x):
            out = self.conv1(x)
            out = self.trans1(self.dense1(out))
            out = self.trans2(self.dense2(out))
            out = self.trans3(self.dense3(out))
            out = self.dense4(out)
            # Global average pooling; a fixed 4x4 window would only fit 32x32 inputs
            out = F.adaptive_avg_pool2d(F.relu(self.bn(out)), 1)
            out = out.view(out.size(0), -1)
            out = self.linear(out)
            return out

    def DenseNet121():
        return DenseNet(Bottleneck, [6,12,24,16], growth_rate=32)

    net = DenseNet121()
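The channel arithmetic in `DenseNet.__init__` is easier to follow as a standalone trace. The sketch below mirrors that bookkeeping for the DenseNet121 configuration above: every layer in a dense block appends `growth_rate` channels, and every transition scales the width by `reduction`. The function name `densenet_channels` is made up for illustration.

```python
import math


def densenet_channels(nblocks, growth_rate, reduction=0.5):
    """Channel count after the stem, then after each dense block and transition."""
    num_planes = 2 * growth_rate                 # stem width
    trace = [num_planes]
    for i, n in enumerate(nblocks):
        num_planes += n * growth_rate            # each layer appends growth_rate channels
        trace.append(num_planes)
        if i != len(nblocks) - 1:                # no transition after the last block
            num_planes = int(math.floor(num_planes * reduction))
            trace.append(num_planes)
    return trace


trace = densenet_channels([6, 12, 24, 16], growth_rate=32)
print(trace)  # [64, 256, 128, 512, 256, 1024, 512, 1024]
```

The last entry, 1024, is the width fed into the final BatchNorm and linear layer.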

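VII. DarkNet

- Mathematical principles

Darknet is an open-source neural network framework used by the YOLO (You Only Look Once) object detection system; the name also refers to the backbone networks YOLO is built on. The backbone is a stack of convolutional layers with varying filter counts, kernel sizes, strides, and padding.

- Code walkthrough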

    class DarknetBlock(nn.Module):
        # Simplified residual block: 3x3 conv that doubles the channels, then 1x1 conv back.
        # The original Darknet-53 block uses a 1x1 reduction followed by a 3x3 conv, with LeakyReLU.
        def __init__(self, in_channels):
            super(DarknetBlock, self).__init__()
            self.conv1 = nn.Conv2d(in_channels, in_channels * 2, kernel_size=3, stride=1, padding=1)
            self.bn1 = nn.BatchNorm2d(in_channels * 2)
            self.conv2 = nn.Conv2d(in_channels * 2, in_channels, kernel_size=1, stride=1, padding=0)
            self.bn2 = nn.BatchNorm2d(in_channels)

        def forward(self, x):
            out = F.relu(self.bn1(self.conv1(x)), inplace=True)
            out = F.relu(self.bn2(self.conv2(out)), inplace=True)
            # Out-of-place add: ReLU's backward pass needs its output unmodified,
            # so an in-place `out += x` here would raise an autograd error during training
            out = out + x
            return out

    class Darknet(nn.Module):
        def __init__(self, num_classes=1000):
            super(Darknet, self).__init__()
            self.conv1 = nn.Conv2d(3, 32, kernel_size=3, stride=1, padding=1)
            self.bn1 = nn.BatchNorm2d(32)
            self.layer1 = self._make_layer(32, 64, 1)
            self.layer2 = self._make_layer(64, 128, 2)
            self.layer3 = self._make_layer(128, 256, 8)
            self.layer4 = self._make_layer(256, 512, 8)
            self.layer5 = self._make_layer(512, 1024, 4)
            self.global_avgpool = nn.AdaptiveAvgPool2d((1, 1))
            self.fc = nn.Linear(1024, num_classes)

        def _make_layer(self, in_channels, out_channels, num_blocks):
            layers = []
            # Stride-2 conv halves the resolution and widens the channels
            layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=2, padding=1))
            layers.append(nn.BatchNorm2d(out_channels))
            layers.append(nn.ReLU(inplace=True))
            for i in range(num_blocks):
                layers.append(DarknetBlock(out_channels))
            return nn.Sequential(*layers)

        def forward(self, x):
            x = F.relu(self.bn1(self.conv1(x)), inplace=True)
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)
            x = self.layer5(x)
            x = self.global_avgpool(x)
            x = x.view(x.size(0), -1)
            x = self.fc(x)
            return x

    net = Darknet()
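The stage layout used above (1, 2, 8, 8, and 4 residual blocks) is the one commonly known as Darknet-53, the YOLOv3 backbone, and a quick count shows where the "53" comes from. The sketch below tallies the layers and traces the spatial size through the five stride-2 convolutions. The input sizes 416 and 256 are only example values, and `downsampled` is a hypothetical helper.

```python
stage_blocks = [1, 2, 8, 8, 4]    # num_blocks passed to _make_layer above

conv_layers = 1                    # the stem conv1
for n in stage_blocks:
    conv_layers += 1               # one stride-2 downsampling conv per stage
    conv_layers += 2 * n           # two convs per DarknetBlock
total_layers = conv_layers + 1     # plus the final fully connected layer
print(conv_layers, total_layers)   # 52 53


def downsampled(size, num_stages=len(stage_blocks)):
    """Spatial size after num_stages 3x3 convs with stride 2 and padding 1."""
    for _ in range(num_stages):
        size = (size + 2 * 1 - 3) // 2 + 1
    return size


print(downsampled(416), downsampled(256))  # 13 8
```

Five halvings give an overall stride of 32, so a 416x416 image ends up as a 13x13 feature map before global pooling.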

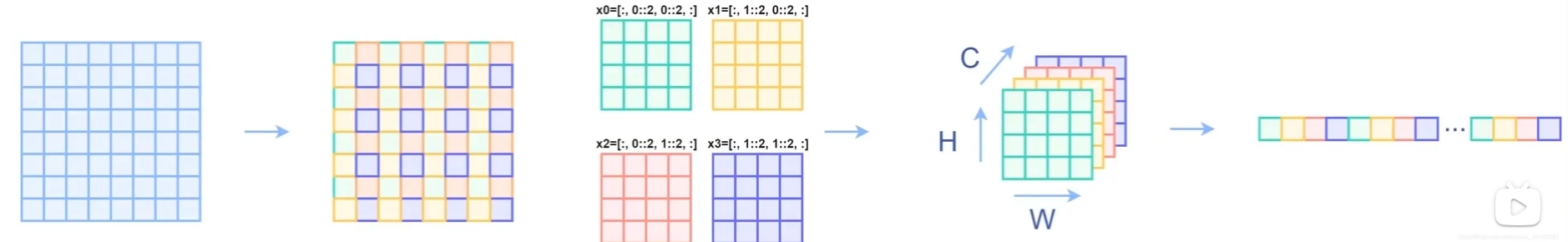

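VIII. MobileNet

- Mathematical principles

MobileNet is built on depthwise separable convolution, which factors a standard convolution into a depthwise convolution (one filter per input channel) followed by a pointwise (1x1) convolution. This sharply reduces both the parameter count and the amount of computation.

- Code walkthrough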

    class DepthwiseSeparableConv(nn.Module):
        def __init__(self, in_channels, out_channels, stride):
            super(DepthwiseSeparableConv, self).__init__()
            # groups=in_channels gives one 3x3 filter per input channel
            self.depthwise = nn.Conv2d(in_channels, in_channels, kernel_size=3, stride=stride, padding=1, groups=in_channels)
            # 1x1 conv mixes information across channels
            self.pointwise = nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=1)

        def forward(self, x):
            x = self.depthwise(x)
            x = self.pointwise(x)
            return x

    class MobileNet(nn.Module):
        # (out_channels, stride) per block, following the MobileNet v1 layout.
        # Strides are listed explicitly: inferring stride 2 whenever the width grows
        # would also downsample the first 32 -> 64 block, which v1 does not do.
        cfg = [(64, 1), (128, 2), (128, 1), (256, 2), (256, 1), (512, 2),
               (512, 1), (512, 1), (512, 1), (512, 1), (512, 1), (1024, 2), (1024, 1)]

        def __init__(self, num_classes=1000):
            super(MobileNet, self).__init__()
            self.conv1 = nn.Conv2d(3, 32, kernel_size=3, stride=2, padding=1)
            self.bn1 = nn.BatchNorm2d(32)
            self.layers = self._make_layers(32, num_classes)

        def _make_layers(self, in_channels, num_classes):
            layers = []
            for out_channels, stride in self.cfg:
                layers.append(DepthwiseSeparableConv(in_channels, out_channels, stride))
                layers.append(nn.BatchNorm2d(out_channels))
                layers.append(nn.ReLU(inplace=True))
                in_channels = out_channels
            layers.append(nn.AdaptiveAvgPool2d((1, 1)))
            # A 1x1 conv on the pooled 1x1 map acts as the final classifier
            layers.append(nn.Conv2d(in_channels, num_classes, kernel_size=1))
            return nn.Sequential(*layers)

        def forward(self, x):
            x = F.relu(self.bn1(self.conv1(x)), inplace=True)
            x = self.layers(x)
            x = x.view(x.size(0), -1)
            return x

    net = MobileNet()
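The saving from depthwise separable convolution is easy to quantify. The sketch below compares weight counts (biases ignored) for a standard 3x3 convolution and its depthwise separable replacement, taking the 512-to-512 blocks of the network above as an example. Both function names are hypothetical.

```python
def standard_conv_weights(c_in, c_out, k=3):
    """Weights of a standard k x k convolution."""
    return k * k * c_in * c_out


def separable_conv_weights(c_in, c_out, k=3):
    """Weights of a depthwise k x k conv followed by a pointwise 1x1 conv."""
    depthwise = k * k * c_in      # one k x k filter per input channel
    pointwise = c_in * c_out      # 1x1 conv that mixes channels
    return depthwise + pointwise


c_in, c_out = 512, 512
std = standard_conv_weights(c_in, c_out)
sep = separable_conv_weights(c_in, c_out)
print(std, sep)                # 2359296 266752
print(round(sep / std, 3))     # 0.113, i.e. 1/c_out + 1/k**2
```

Since both layer types are applied at every output position, the multiply-add count shrinks by the same ratio, roughly 8 to 9 times for 3x3 kernels.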

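IX. Summary

The code above shows how to build a range of neural network models in PyTorch. Each model has its own structure and design rationale and suits different application scenarios; in practice, choose a model based on the task requirements and the available hardware. For reasons of space, complete training and evaluation code is not included, but the definitions above can serve as a starting point for building and training these models.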