文章目录

- 网络介绍

- 网络结构

- 部分实现

- 对应网络结构

- 模型训练

- shuffleNet的优缺点总结

- 优点

- 不足

网络介绍

ShuffleNet主要应用在移动端,所以模型的设计目标就是利用有限的计算资源来达到最好的模型精度。ShuffleNetV1的设计核心是引入了两种操作:Pointwise Group Convolution和Channel Shuffle,这在保持精度的同时大大降低了模型的计算量。ShuffleNet在保持不低的准确率的前提下,将参数量几乎降低到了最小,因此其运算速度较快,单位参数量对模型准确率的贡献非常高。

网络结构

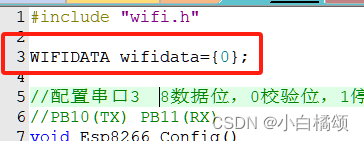

部分实现

class ShuffleV1Block(nn.Cell):

def __init__(self, inp, oup, group, first_group, mid_channels, ksize, stride):

super(ShuffleV1Block, self).__init__()

self.stride = stride

pad = ksize // 2

self.group = group

if stride == 2:

outputs = oup - inp

else:

outputs = oup

self.relu = nn.ReLU()

branch_main_1 = [

GroupConv(in_channels=inp, out_channels=mid_channels,

kernel_size=1, stride=1, pad_mode="pad", pad=0,

groups=1 if first_group else group),

nn.BatchNorm2d(mid_channels),

nn.ReLU(),

]

branch_main_2 = [

nn.Conv2d(mid_channels, mid_channels, kernel_size=ksize, stride=stride,

pad_mode='pad', padding=pad, group=mid_channels,

weight_init='xavier_uniform', has_bias=False),

nn.BatchNorm2d(mid_channels),

GroupConv(in_channels=mid_channels, out_channels=outputs,

kernel_size=1, stride=1, pad_mode="pad", pad=0,

groups=group),

nn.BatchNorm2d(outputs),

]

self.branch_main_1 = nn.SequentialCell(branch_main_1)

self.branch_main_2 = nn.SequentialCell(branch_main_2)

if stride == 2:

self.branch_proj = nn.AvgPool2d(kernel_size=3, stride=2, pad_mode='same')

def construct(self, old_x):

left = old_x

right = old_x

out = old_x

right = self.branch_main_1(right)

if self.group > 1:

right = self.channel_shuffle(right)

right = self.branch_main_2(right)

if self.stride == 1:

out = self.relu(left + right)

elif self.stride == 2:

left = self.branch_proj(left)

out = ops.cat((left, right), 1)

out = self.relu(out)

return out

def channel_shuffle(self, x):

batchsize, num_channels, height, width = ops.shape(x)

group_channels = num_channels // self.group

x = ops.reshape(x, (batchsize, group_channels, self.group, height, width))

x = ops.transpose(x, (0, 2, 1, 3, 4))

x = ops.reshape(x, (batchsize, num_channels, height, width))

return x

对应网络结构

模型训练

import time

import mindspore

import numpy as np

from mindspore import Tensor, nn

from mindspore.train import ModelCheckpoint, CheckpointConfig, TimeMonitor, LossMonitor, Model, Top1CategoricalAccuracy, Top5CategoricalAccuracy

def train():

mindspore.set_context(mode=mindspore.PYNATIVE_MODE, device_target="Ascend")

net = ShuffleNetV1(model_size="2.0x", n_class=10)

loss = nn.CrossEntropyLoss(weight=None, reduction='mean', label_smoothing=0.1)

min_lr = 0.0005

base_lr = 0.05

lr_scheduler = mindspore.nn.cosine_decay_lr(min_lr,

base_lr,

batches_per_epoch*250,

batches_per_epoch,

decay_epoch=250)

lr = Tensor(lr_scheduler[-1])

optimizer = nn.Momentum(params=net.trainable_params(), learning_rate=lr, momentum=0.9, weight_decay=0.00004, loss_scale=1024)

loss_scale_manager = ms.amp.FixedLossScaleManager(1024, drop_overflow_update=False)

model = Model(net, loss_fn=loss, optimizer=optimizer, amp_level="O3", loss_scale_manager=loss_scale_manager)

callback = [TimeMonitor(), LossMonitor()]

save_ckpt_path = "./"

config_ckpt = CheckpointConfig(save_checkpoint_steps=batches_per_epoch, keep_checkpoint_max=5)

ckpt_callback = ModelCheckpoint("shufflenetv1", directory=save_ckpt_path, config=config_ckpt)

callback += [ckpt_callback]

print("============== Starting Training ==============")

start_time = time.time()

# 由于时间原因,epoch = 5,可根据需求进行调整

model.train(5, dataset, callbacks=callback)

use_time = time.time() - start_time

hour = str(int(use_time // 60 // 60))

minute = str(int(use_time // 60 % 60))

second = str(int(use_time % 60))

print("total time:" + hour + "h " + minute + "m " + second + "s")

print("============== Train Success ==============")

if __name__ == '__main__':

train()

shuffleNet的优缺点总结

优点

- 轻量化设计:ShuffleNet通过减少参数和计算量,使得模型更适合在资源受限的设备上运行。

- 高效的通道重排:ShuffleNet引入了一种高效的通道重排机制,称为"Shuffle",以提高模型的表达能力,同时保持参数数量较低。

- 性能与速度的平衡:ShuffleNet在保持较低延迟的同时,提供了与较大模型相当的准确性,这使得它在实时应用中非常有用。

- 适用于移动设备:由于其轻量化的特性,ShuffleNet非常适合在移动设备和嵌入式系统中使用。

- 参数共享:ShuffleNet通过在网络中使用参数共享技术,进一步减少了模型的大小和计算成本。

- 灵活性:ShuffleNet提供了不同版本的模型,允许研究者和开发者根据特定应用的需求选择合适的模型大小。

不足

- 准确率折损:由于模型的轻量化设计,ShuffleNet在某些复杂任务上可能无法达到最高精度。

- 特定任务的局限性:在一些需要非常高精度或对模型容量要求较高的任务中,ShuffleNet可能不是最佳选择。

- 对输入尺寸敏感:ShuffleNet的性能可能对输入图像的尺寸较为敏感,这可能需要在设计网络时进行额外的考虑。

此章节学习到此结束,感谢昇思平台。