Embedding介绍

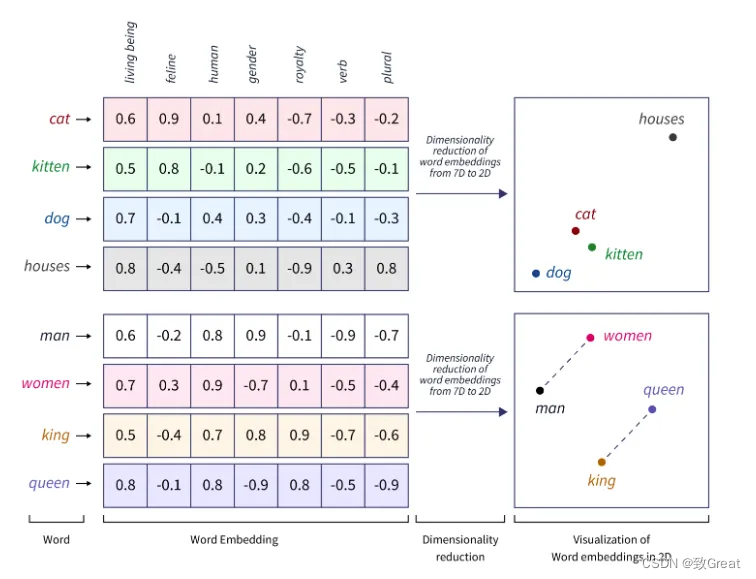

词向量是 NLP 中的一种表示形式,其中词汇表中的单词或短语被映射到实数向量。它们用于捕获高维空间中单词之间的语义和句法相似性。

在词嵌入的背景下,我们可以将单词表示为高维空间中的向量,其中每个维度对应一个特定的特征,例如“生物”、“猫科动物”、“人类”、“性别”等。每个单词在每个维度上都分配有一个数值,通常在 -1 到 1 之间,表示该词与该特征的关联程度。

例如,“猫”这个词在“猫科动物”维度上可能具有较高的正值,而在“人类”维度上具有接近于零的值,这反映了它与猫科动物的紧密关联性,而与人类的关联性较低。

这种数值表示使我们能够捕捉单词之间的语义关系并对其执行数学运算,例如计算单词之间的相似度或将其用作 NLP 任务中 ML 模型的输入。

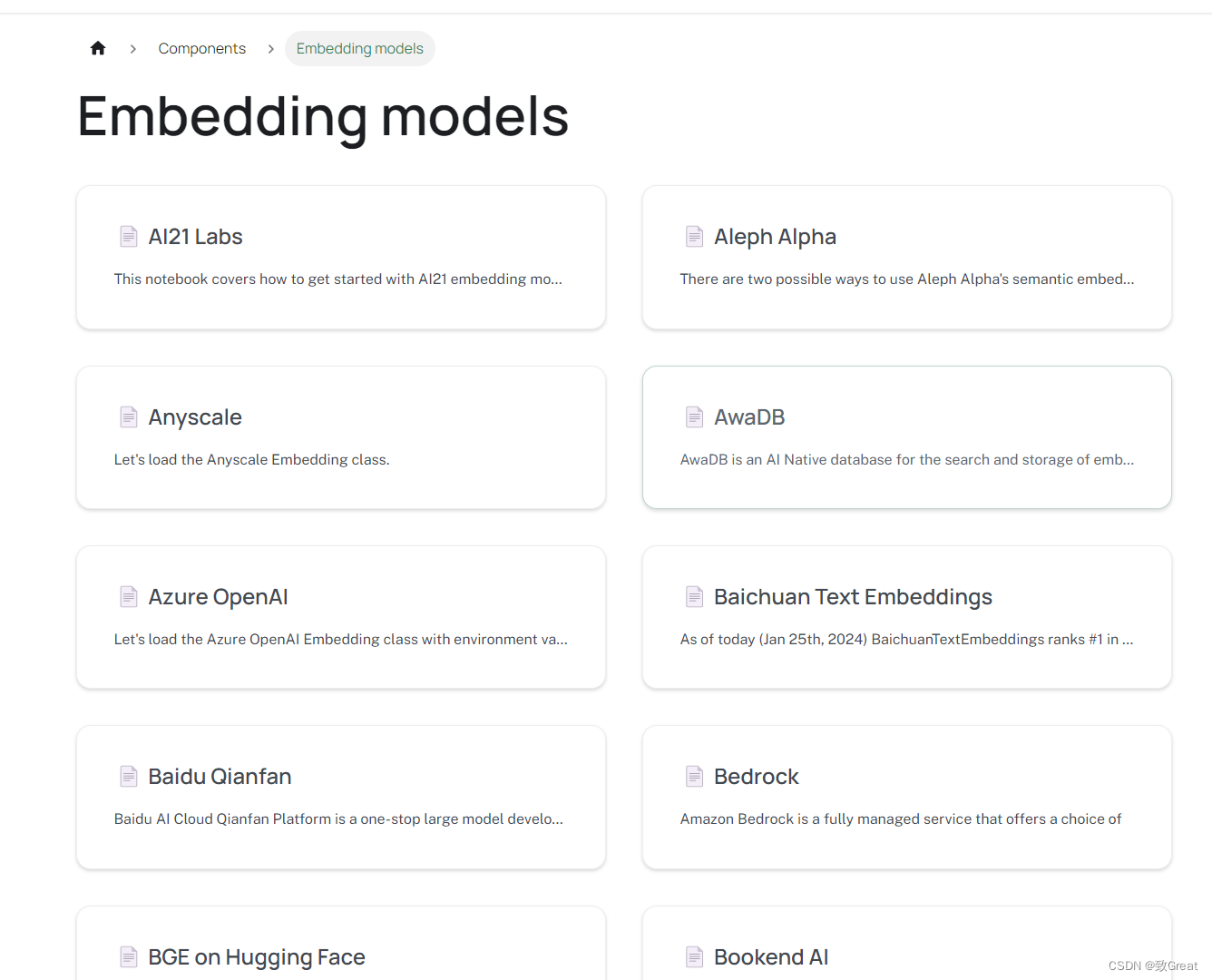

LangChain 可容纳来自不同来源的多种嵌入。

OpenAI

import os

os.environ["OPENAI_API_KEY"] = "your-key"

from langchain_openai import OpenAIEmbeddings

embeddings = OpenAIEmbeddings()

text = "Text"

text_embedding = embeddings.embed_query(text)

print(text_embedding)

"""

[-0.0006077770551231004,

-0.02036312831034526,

0.0015661947077772864,

-0.0008398058726938265,

0.00801365303172794,

0.01648443640533639,

-0.015071485112588635,

-0.006794635682304868,

-0.009232670381151012,

-0.004512441507728793,

0.00296615975583046,

0.02781575545470095,

-0.004290802116650396,

0.009204965399058554,

-0.007286398183123463,

0.01896402857732122,

0.03457576177203527,

0.01469746878566298,

0.03812199202928964,

-0.033024282774857694,

-0.014143370075136358,

-0.0016640276929606461,

-0.00023289462736494386,

-0.009856030615586264,

-0.018867061139997622,

...

-0.0007159994667987885,

-0.024920590413974295,

0.009017956769934473,

0.005336663327995613,

...]

"""

print(len(text_embedding))

"""

1536

"""

HuggingFace

from langchain_community.embeddings import HuggingFaceEmbeddings

embedding_path = r'H:\pretrained_models\bert\english\paraphrase-multilingual-mpnet-base-v2'

embeddings = HuggingFaceEmbeddings(model_name=embedding_path)

text = "This is a test document."

text_embedding = embeddings.embed_query(text)

print(len(text_embedding)) # 768

from langchain_google_genai import GoogleGenerativeAIEmbeddings

os.environ["GOOGLE_API_KEY"] = "your-key"

embeddings = GoogleGenerativeAIEmbeddings(model="models/embedding-001")

text_embedding = embeddings.embed_query("hello, world!")

print(text_embedding) # 768

更多Embedding可以查看https://python.langchain.com/v0.2/docs/integrations/text_embedding/

计算相似性

我们可以使用嵌入来计算文本的相似度。

word_list = ["Cat", "Dog", "Car""Truck","Computer","Laptop","Apple","Orange", "Music","Dance"]

embedding_model = OpenAIEmbeddings()

embeds = [embedding_model.embed_query(word) for word in word_list]

embeds

"""

[[-0.008174207879591734,

-0.007511803310590743,

-0.00995655437174355,

-0.024788951157780095,

-0.012790553094547429,

0.006654775143594856,

-0.0015151649503578363,

-0.03783217392596492,

-0.014422662356334227,

-0.026250339680779597,

0.017154227704543168,

0.046327340706031526,

0.0035646922858117093,

0.004240754467349556,

-0.032287098019987186,

-0.004592443287070655,

0.03955306057962428,

0.005261676778755394,

0.00789422251521935,

-0.015501631209043845,

-0.023723641081760536,

0.0053197228543978925,

0.014873371253461594,

-0.012141805905252653,

-0.006781109980413554,

...

0.00566348496318421,

0.01855802589283819,

0.00531267762533671,

0.02393075147421956,

...]]

"""

我们引入另一个单词并计算相似度。

input_word = "Lion"

input_embed = embedding_model.embed_query(input_word)

import numpy as np

def cosine_similarity(a, b):

return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

similarity = cosine_similarity(embeds[0], input_embed)

print(similarity) #0.8400893968591456

from sklearn.metrics.pairwise import cosine_similarity

similarity = cosine_similarity(np.array([embeds[0]]), np.array([input_embed]))

print(similarity) #array([[0.8400894]])

sims = [cosine_similarity(np.array([emb]), np.array([input_embed])) for emb in embeds]

"""

[array([[0.8400894]]),

array([[0.80272758]]),

array([[0.79536215]]),

array([[0.81627175]]),

array([[0.82762581]]),

array([[0.81705796]]),

array([[0.82609729]]),

array([[0.78917449]]),

array([[0.79970112]])]

"""

考虑文本存储在 CSV 文件中,我们计划将其用作评估输入相似性的参考。

from langchain.document_loaders.csv_loader import CSVLoader

loader = CSVLoader(file_path='data.csv', csv_args={

'delimiter': ',',

'quotechar': '"',

'fieldnames': ['Words']

})

data = loader.load()

data

"""

[Document(page_content='Words: Words', metadata={'source': 'data.csv', 'row': 0}),

Document(page_content='Words: Cat', metadata={'source': 'data.csv', 'row': 1}),

Document(page_content='Words: Dog', metadata={'source': 'data.csv', 'row': 2}),

Document(page_content='Words: CarTruck', metadata={'source': 'data.csv', 'row': 3}),

Document(page_content='Words: Computer', metadata={'source': 'data.csv', 'row': 4}),

Document(page_content='Words: Laptop', metadata={'source': 'data.csv', 'row': 5}),

Document(page_content='Words: Apple', metadata={'source': 'data.csv', 'row': 6}),

Document(page_content='Words: Orange', metadata={'source': 'data.csv', 'row': 7}),

Document(page_content='Words: Music', metadata={'source': 'data.csv', 'row': 8}),

Document(page_content='Words: Dance', metadata={'source': 'data.csv', 'row': 9})]

"""

CSVLoader 类用于从 CSV 文件加载数据。我们将在系列后面介绍装载机。 我们可以利用FAISS结合LangChain来创建一个向量存储。

embeddings = OpenAIEmbeddings()

from langchain_community.vectorstores import FAISS

db = FAISS.from_documents(data, embeddings)

user_input = "Lion"

results = db.similarity_search(user_input)

results

"""

[Document(page_content='Words: Cat', metadata={'source': 'data.csv', 'row': 1}),

Document(page_content='Words: Apple', metadata={'source': 'data.csv', 'row': 6}),

Document(page_content='Words: Dog', metadata={'source': 'data.csv', 'row': 2}),

Document(page_content='Words: Orange', metadata={'source': 'data.csv', 'row': 7})]

"""

![[Cloud Networking] Layer 2 Protocol](https://img-blog.csdnimg.cn/direct/70300f65f3f8422498f323c38858672f.png)

![Java面向对象-[封装、继承、多态、权限修饰符]](https://img-blog.csdnimg.cn/direct/ee0b16d8512b4735b994de318f1b1783.gif#pic_center)