Description

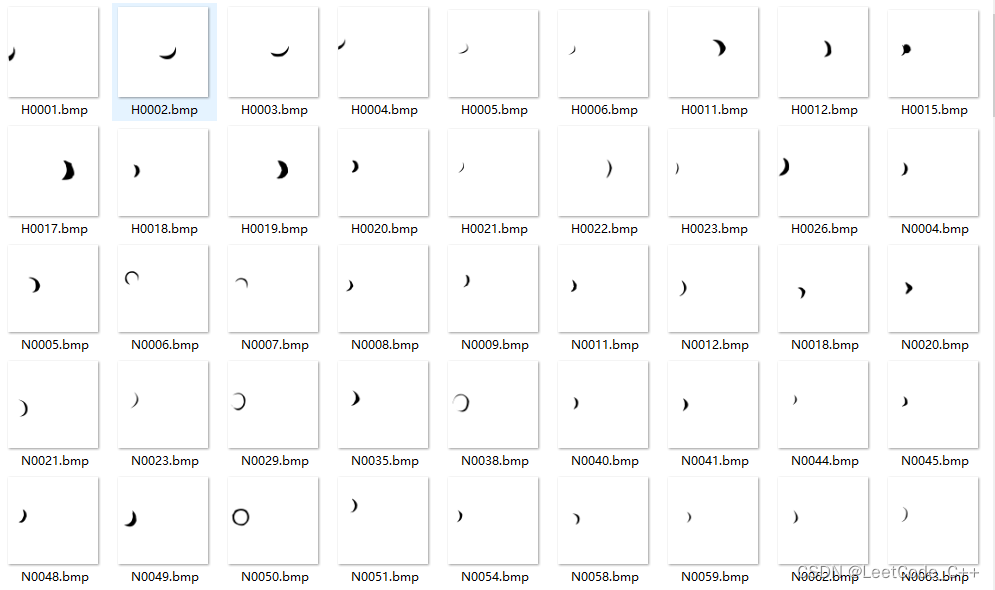

A medical dataset for pathologic myopia (PM) containing fundus (retinal) images from 1,200 subjects: 400 images each for the training, validation, and test sets.

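Background:

Myopia has become a global health burden, and more than 35% of people with myopia have high myopia. Myopia elongates the optical axis of the eye and can cause lesions of the retina or choroid. As the degree of myopia increases, high myopia can progress to pathologic changes, including degeneration of the retina or choroid, atrophy of the optic disc region, lacquer cracks, and Fuchs spots. Detecting these changes early and starting treatment is therefore very important.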

Dataset preparation

training.zip: the training images and their labels

validation.zip: the validation images

valid_gt.zip: the validation labels
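
The training archive bundles labels with the images, and the code in this post notes that file names starting with N are normal fundus images while those starting with P are pathologic. A minimal sketch of turning that convention into a numeric label follows. The function name `label_from_filename` and the 0/1 encoding are assumptions made for illustration; they are not part of the original code.

```python
import os


def label_from_filename(path):
    """Return 0 for a normal image (N prefix) and 1 for a pathologic one (P prefix).

    The 0/1 encoding is an assumption; the post only states which prefix
    means normal and which means pathologic.
    """
    name = os.path.basename(path)
    prefix = name[:1].upper()
    if prefix == 'N':
        return 0
    if prefix == 'P':
        return 1
    # Only the N and P prefixes are documented in the post, so reject the rest.
    raise ValueError('unrecognized file name prefix: {!r}'.format(name))


print(label_from_filename('N0012.jpg'))
print(label_from_filename('P0095.jpg'))
```

Raising on unknown prefixes is a deliberate choice here: silently mapping an undocumented prefix to either class would hide labeling mistakes.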

Partial code:

import os
import numpy as np
import matplotlib.pyplot as plt
%matplotlib inline
from PIL import Image

DATADIR = '/home/aistudio/work/palm/PALM-Training400/PALM-Training400'
# File names starting with N are normal fundus images; those starting with P are pathologic
file1 = 'N0012.jpg'
file2 = 'P0095.jpg'

# Read the images and convert them to NumPy arrays
img1 = Image.open(os.path.join(DATADIR, file1))
img1 = np.array(img1)
img2 = Image.open(os.path.join(DATADIR, file2))
img2 = np.array(img2)

# Plot the two images side by side
plt.figure(figsize=(16, 8))
f = plt.subplot(121)
f.set_title('Normal', fontsize=20)
plt.imshow(img1)
f = plt.subplot(122)
f.set_title('PM', fontsize=20)
plt.imshow(img2)
plt.show()

# Classify eye-disease images with LeNet

import os
import random
import paddle
import paddle.fluid as fluid
import numpy as np

DATADIR = '/home/aistudio/work/palm/PALM-Training400/PALM-Training400'
DATADIR2 = '/home/aistudio/work/palm/PALM-Validation400'
CSVFILE = '/home/aistudio/work/palm/PALM-Validation-GT/labels.csv'

# Define the training procedure
# Note: data_loader and valid_data_loader are not defined in this excerpt
def train(model):
    with fluid.dygraph.guard():
        print('start training ... ')
        model.train()
        epoch_num = 5
        # Define the optimizer
        opt = fluid.optimizer.Momentum(learning_rate=0.001, momentum=0.9, parameter_list=model.parameters())
        # Create the training and validation data readers
        train_loader = data_loader(DATADIR, batch_size=10, mode='train')
        valid_loader = valid_data_loader(DATADIR2, CSVFILE)
        for epoch in range(epoch_num):
            for batch_id, data in enumerate(train_loader()):
                x_data, y_data = data
                img = fluid.dygraph.to_variable(x_data)
                label = fluid.dygraph.to_variable(y_data)
                # Forward pass: compute the logits
                logits = model(img)
                # Compute the loss
                loss = fluid.layers.sigmoid_cross_entropy_with_logits(logits, label)
                avg_loss = fluid.layers.mean(loss)

                if batch_id % 10 == 0:
                    print("epoch: {}, batch_id: {}, loss is: {}".format(epoch, batch_id, avg_loss.numpy()))
                # Backpropagate, update the weights, and clear the gradients
                avg_loss.backward()
                opt.minimize(avg_loss)
                model.clear_gradients()

            # Validate at the end of every epoch
            model.eval()
            accuracies = []
            losses = []
            for batch_id, data in enumerate(valid_loader()):
                x_data, y_data = data
                img = fluid.dygraph.to_variable(x_data)
                label = fluid.dygraph.to_variable(y_data)
                # Forward pass: compute the logits
                logits = model(img)
                # Binary classification: the sigmoid output is split into two classes at 0.5
                # Compute the predicted probability and the loss
                pred = fluid.layers.sigmoid(logits)
                loss = fluid.layers.sigmoid_cross_entropy_with_logits(logits, label)
                # Probability of the negative class: 1 - pred
                pred2 = pred * (-1.0) + 1.0
                # Concatenate both class probabilities along axis 1
                pred = fluid.layers.concat([pred2, pred], axis=1)
                acc = fluid.layers.accuracy(pred, fluid.layers.cast(label, dtype='int64'))
                accuracies.append(acc.numpy())
                losses.append(loss.numpy())
            print("[validation] accuracy/loss: {}/{}".format(np.mean(accuracies), np.mean(losses)))
            # Switch back to training mode for the next epoch
            model.train()

        # Save the model parameters (written to mnist.pdparams)
        fluid.save_dygraph(model.state_dict(), 'mnist')
        # Save the optimizer state (written to mnist.pdopt)
        fluid.save_dygraph(opt.state_dict(), 'mnist')
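
train() computes its loss with `fluid.layers.sigmoid_cross_entropy_with_logits`, which takes raw logits rather than probabilities. As a rough illustration of what a loss of this kind computes, here is a NumPy sketch of binary cross-entropy on logits in a numerically stable form. The helper name `stable_sigmoid_bce` is hypothetical, and the sketch is not Paddle's implementation.

```python
import numpy as np


def stable_sigmoid_bce(logits, labels):
    """Element-wise binary cross-entropy computed directly from logits.

    Uses max(x, 0) - x * z + log(1 + exp(-|x|)), which equals
    -z * log(sigmoid(x)) - (1 - z) * log(1 - sigmoid(x)) but avoids
    overflow for logits with a large magnitude.
    """
    x = np.asarray(logits, dtype=np.float64)
    z = np.asarray(labels, dtype=np.float64)
    return np.maximum(x, 0.0) - x * z + np.log1p(np.exp(-np.abs(x)))


# Three samples with one logit each, shaped (N, 1) like the model output
logits = np.array([[2.0], [-1.0], [0.0]])
labels = np.array([[1.0], [0.0], [1.0]])
loss = stable_sigmoid_bce(logits, labels)
print(loss.ravel(), loss.mean())
```

Working from logits is the reason the network's last layer has no activation: applying a sigmoid first and then taking a logarithm loses precision when the output saturates.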

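# Define the evaluation procedure
def evaluation(model, params_file_path):
    with fluid.dygraph.guard():
        print('start evaluation .......')
        # Load the model parameters
        model_state_dict, _ = fluid.load_dygraph(params_file_path)
        model.load_dict(model_state_dict)

        model.eval()
        # Reuse the validation reader from train(); it is not defined in this excerpt
        eval_loader = valid_data_loader(DATADIR2, CSVFILE)

        acc_set = []
        avg_loss_set = []
        for batch_id, data in enumerate(eval_loader()):
            x_data, y_data = data
            img = fluid.dygraph.to_variable(x_data)
            label = fluid.dygraph.to_variable(y_data)
            # LeNet.forward takes only the image and returns logits
            logits = model(img)
            # Build the two-class probabilities and compute the accuracy, as in train()
            pred = fluid.layers.sigmoid(logits)
            pred2 = pred * (-1.0) + 1.0
            pred = fluid.layers.concat([pred2, pred], axis=1)
            acc = fluid.layers.accuracy(pred, fluid.layers.cast(label, dtype='int64'))
            # Compute the loss
            loss = fluid.layers.sigmoid_cross_entropy_with_logits(logits, label)
            avg_loss = fluid.layers.mean(loss)
            acc_set.append(float(acc.numpy()))
            avg_loss_set.append(float(avg_loss.numpy()))
        # Average the accuracy and loss over all batches
        acc_val_mean = np.array(acc_set).mean()
        avg_loss_val_mean = np.array(avg_loss_set).mean()

        print('loss={}, acc={}'.format(avg_loss_val_mean, acc_val_mean))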
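
The validation loop in train() turns the single sigmoid output p into a two-column tensor [1 - p, p] so that an accuracy op expecting per-class scores can be applied. The NumPy sketch below mirrors that idea; picking the larger column amounts to thresholding p at 0.5. `binary_accuracy` is a hypothetical helper for illustration, not a Paddle function.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def binary_accuracy(logits, labels):
    """Accuracy of a single-logit binary classifier.

    logits and labels both have shape (N, 1); labels hold 0.0 or 1.0.
    """
    p_pos = sigmoid(np.asarray(logits, dtype=np.float64))  # P(class 1), shape (N, 1)
    p_neg = 1.0 - p_pos                                    # P(class 0), shape (N, 1)
    probs = np.concatenate([p_neg, p_pos], axis=1)         # shape (N, 2)
    predicted = probs.argmax(axis=1)                       # class 1 when p_pos > 0.5
    target = np.asarray(labels).reshape(-1).astype(np.int64)
    return float((predicted == target).mean())


logits = np.array([[2.0], [-1.0], [0.5], [-3.0]])
labels = np.array([[1.0], [0.0], [0.0], [0.0]])
print(binary_accuracy(logits, labels))  # 0.75: the third sample is misclassified
```

In this sketch an exact tie (p equal to 0.5) goes to class 0, because `argmax` returns the first maximal index.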

# Import the required packages
import paddle
import paddle.fluid as fluid
import numpy as np
from paddle.fluid.dygraph.nn import Conv2D, Pool2D, Linear

# Define the LeNet network structure
class LeNet(fluid.dygraph.Layer):
    def __init__(self, name_scope, num_classes=1):
        super(LeNet, self).__init__(name_scope)

        # Convolution and pooling blocks: each convolution uses a sigmoid activation,
        # and each of the first two is followed by a 2x2 pooling layer
        self.conv1 = Conv2D(num_channels=3, num_filters=6, filter_size=5, act='sigmoid')
        self.pool1 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        self.conv2 = Conv2D(num_channels=6, num_filters=16, filter_size=5, act='sigmoid')
        self.pool2 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Third convolution layer
        self.conv3 = Conv2D(num_channels=16, num_filters=120, filter_size=4, act='sigmoid')
        # Fully connected layers: the first has 64 output units,
        # the second has one output unit per classification label
        self.fc1 = Linear(input_dim=300000, output_dim=64, act='sigmoid')
        self.fc2 = Linear(input_dim=64, output_dim=num_classes)

    # Forward pass of the network
    def forward(self, x):
        x = self.conv1(x)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = self.conv3(x)
        # Flatten to (batch_size, features) before the fully connected layers
        x = fluid.layers.reshape(x, [x.shape[0], -1])
        x = self.fc1(x)
        x = self.fc2(x)
        return x
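
fc1 is declared with `input_dim=300000`, which has to match the flattened output of conv3. The excerpt does not state the input image size, so the following is only a check under assumptions: a 224x224 input, unpadded stride-1 convolutions, and 2x2 pooling with stride 2. The helper names are hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    """Output length of an unpadded pooling layer along one spatial dimension."""
    return (size - kernel) // stride + 1


def lenet_flatten_dim(height, width):
    """Number of features entering fc1 for the LeNet defined above."""
    dims = []
    for size in (height, width):
        size = conv_out(size, 5)  # conv1, 5x5 kernel
        size = pool_out(size)     # pool1
        size = conv_out(size, 5)  # conv2, 5x5 kernel
        size = pool_out(size)     # pool2
        size = conv_out(size, 4)  # conv3, 4x4 kernel
        dims.append(size)
    return 120 * dims[0] * dims[1]  # conv3 has 120 output channels


print(lenet_flatten_dim(224, 224))  # 300000 = 120 * 50 * 50
```

Because pooling rounds down, a few nearby input sizes also produce 300000, so this confirms that 224x224 is consistent with the layer definition rather than proving it is the size the author used. Any other input resolution would require a different `input_dim`.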

if __name__ == '__main__':
    # Create the model
    with fluid.dygraph.guard():
        model = LeNet("LeNet_", num_classes=1)

    train(model)
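
train() relies on `data_loader` and `valid_data_loader`, which this excerpt does not include. The sketch below is not those functions; it only illustrates the contract the training loop appears to expect: a callable that returns an iterator of `(images, labels)` NumPy batches, with labels stored as float32 in shape (N, 1) so they line up with the single-logit output. `make_batch_reader` and its `samples` argument are hypothetical.

```python
import numpy as np


def _stack(images, labels):
    """Convert Python lists into the arrays a training step would consume."""
    x = np.array(images, dtype='float32')                  # (N, C, H, W)
    y = np.array(labels, dtype='float32').reshape(-1, 1)   # (N, 1)
    return x, y


def make_batch_reader(samples, batch_size=10):
    """Wrap (image, label) pairs in a reader that yields NumPy batches.

    samples: hypothetical list of (CHW float image, 0/1 label) pairs.
    """
    def reader():
        images, labels = [], []
        for image, label in samples:
            images.append(image)
            labels.append(label)
            if len(images) == batch_size:
                yield _stack(images, labels)
                images, labels = [], []
        if images:  # emit the final, possibly smaller batch
            yield _stack(images, labels)
    return reader


# Dummy data standing in for preprocessed fundus images
samples = [(np.zeros((3, 32, 32), dtype='float32'), i % 2) for i in range(25)]
loader = make_batch_reader(samples, batch_size=10)
for x, y in loader():
    print(x.shape, y.shape)
```

Returning a function instead of a generator lets the caller write `loader()` once per epoch, which is how train() uses `train_loader()` and `valid_loader()`.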