Original paper: download link for the original paper

1. About the paper:

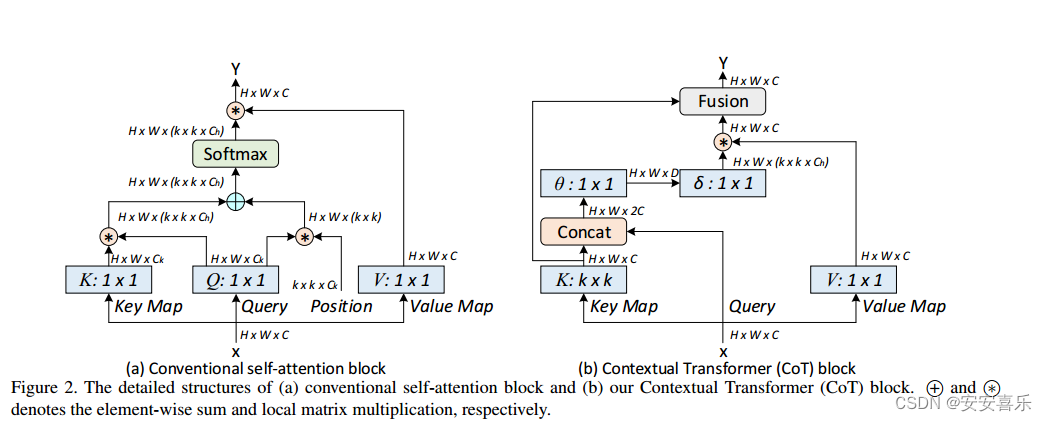

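Abstract (translated): Transformers with self-attention have revolutionized natural language processing, and have recently inspired Transformer-style architecture designs that achieve competitive results on many computer vision tasks. However, most existing designs apply self-attention directly over a 2D feature map and obtain the attention matrix from isolated query-key pairs at each spatial location, leaving the rich context among neighboring keys under-exploited. In this work, we design a novel Transformer-style module, the Contextual Transformer (CoT) block, for visual recognition. The design fully exploits the contextual information among input keys to guide the learning of a dynamic attention matrix, and thus strengthens visual representation. Technically, the CoT block first contextually encodes the input keys with a 3×3 convolution, which yields a static contextual representation of the input. The encoded keys are then concatenated with the input queries, and two consecutive 1×1 convolutions learn the dynamic multi-head attention matrix. The learned attention matrix is multiplied by the input values to produce the dynamic contextual representation of the input. Finally, the fusion of the static and dynamic contextual representations is taken as the output. The CoT block is appealing because it can readily replace each 3×3 convolution in ResNet architectures, yielding a Transformer-style backbone named Contextual Transformer Networks (CoTNet). Through extensive experiments over a wide range of applications (e.g., image recognition, object detection, and instance segmentation), we validate the superiority of CoTNet as a stronger backbone.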

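The authors propose a new Transformer-style building block, the Contextual Transformer (CoT), for image representation learning. The design goes beyond conventional self-attention by additionally using the contextual information among input keys to guide self-attention learning, which improves the representational power of deep networks. By replacing the 3×3 convolutions with CoT blocks throughout the architecture, the authors further present two Contextual Transformer Networks, CoTNet and CoTNeXt, derived from ResNet and ResNeXt respectively.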

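The "CoT" in CoTAttention stands for "Contextual Transformer". The module takes a single visual feature map as input. The keys are encoded by a k×k convolution to give the static context, this static context is concatenated with the queries, and two consecutive 1×1 convolutions turn the result into an attention map. The attention map is applied to the values to give the dynamic context, and the static and dynamic contexts are fused to form the output. According to the paper, backbones built from this block achieve strong results on image recognition, object detection, and instance segmentation.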
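The pipeline described in the abstract can be summarized in symbols. This is only a sketch: the symbol names ($X$ for the input feature map, $W_v$, $W_\theta$, $W_\delta$ for the 1×1 convolution weights, $\circledast$ for applying the attention to the values) are notation assumed here for illustration, and the final fusion is written as a plain sum, which is what the code in section 2.1 does with `return k1 + k2`.

```latex
\begin{aligned}
K &= X, \quad Q = X, \quad V = X W_v \\
K^{1} &= \mathrm{Conv}_{k \times k}(K) && \text{(static context)} \\
A &= [K^{1}, Q]\, W_{\theta} W_{\delta} && \text{(two consecutive } 1 \times 1 \text{ convolutions)} \\
K^{2} &= V \circledast A && \text{(dynamic context)} \\
Y &= K^{1} + K^{2} && \text{(fusion, taken here as a plain sum)}
\end{aligned}
```

In the implementation in section 2.1, $A$ is averaged over the $k \times k$ entries and normalized with a softmax over spatial positions, and $\circledast$ reduces to an element-wise product.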

2. Steps for adding CoTAttention to YOLOv8:

2.1 Append the following code to the end of /ultralytics/nn/modules/block.py

    class CoTAttention(nn.Module):
        """Contextual Transformer (CoT) attention. Uses torch, nn and F, which block.py imports at the top."""

        def __init__(self, dim=512, kernel_size=3):
            super().__init__()
            self.dim = dim
            self.kernel_size = kernel_size

            # Static context: k x k grouped convolution over the keys (dim must be divisible by groups=4)
            self.key_embed = nn.Sequential(
                nn.Conv2d(dim, dim, kernel_size=kernel_size, padding=kernel_size // 2, groups=4, bias=False),
                nn.BatchNorm2d(dim),
                nn.ReLU()
            )
            # Values: 1 x 1 convolution
            self.value_embed = nn.Sequential(
                nn.Conv2d(dim, dim, 1, bias=False),
                nn.BatchNorm2d(dim)
            )

            # Attention: two consecutive 1 x 1 convolutions over [static context, query]
            factor = 4
            self.attention_embed = nn.Sequential(
                nn.Conv2d(2 * dim, 2 * dim // factor, 1, bias=False),
                nn.BatchNorm2d(2 * dim // factor),
                nn.ReLU(),
                nn.Conv2d(2 * dim // factor, kernel_size * kernel_size * dim, 1)
            )

        def forward(self, x):
            bs, c, h, w = x.shape
            k1 = self.key_embed(x)  # bs,c,h,w (static context)
            v = self.value_embed(x).view(bs, c, -1)  # bs,c,h*w

            y = torch.cat([k1, x], dim=1)  # bs,2c,h,w
            att = self.attention_embed(y)  # bs,c*k*k,h,w
            att = att.reshape(bs, c, self.kernel_size * self.kernel_size, h, w)
            att = att.mean(2, keepdim=False).view(bs, c, -1)  # bs,c,h*w
            k2 = F.softmax(att, dim=-1) * v  # softmax over spatial positions, then weight the values
            k2 = k2.view(bs, c, h, w)  # dynamic context

            return k1 + k2  # fuse static and dynamic context
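To make the comments in `forward` easier to follow, the tensor shapes can be traced without running PyTorch. The helper below is a hypothetical sketch written for this walkthrough (`cot_shapes` is not part of ultralytics). It mirrors the layer definitions above and assumes the same defaults (`kernel_size=3`, `factor=4`, `groups=4`).

```python
def cot_shapes(bs, c, h, w, kernel_size=3, factor=4, groups=4):
    """Trace the tensor shapes produced inside CoTAttention.forward (illustrative helper)."""
    # nn.Conv2d with groups=4 needs the channel count to be divisible by 4.
    if c % groups != 0:
        raise ValueError(f"dim={c} must be divisible by groups={groups}")
    kk = kernel_size * kernel_size
    return {
        "k1": (bs, c, h, w),                        # static context from key_embed
        "v": (bs, c, h * w),                        # flattened values
        "y": (bs, 2 * c, h, w),                     # concat of k1 and x
        "att_hidden": (bs, 2 * c // factor, h, w),  # after the first 1x1 conv
        "att_raw": (bs, kk * c, h, w),              # after the second 1x1 conv
        "att": (bs, c, h * w),                      # after reshape and mean over k*k
        "out": (bs, c, h, w),                       # k1 + k2
    }


shapes = cot_shapes(bs=2, c=64, h=20, w=20)
print(shapes["att_raw"])  # (2, 576, 20, 20)
print(shapes["out"])      # (2, 64, 20, 20)
```

The output has the same shape as the input, which is why the module can be dropped in after any layer without changing the channel count seen by the next layer.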

2.2 In /ultralytics/nn/modules/block.py, add "CoTAttention" to the end of __all__ at the top of the file

    __all__ = (
        "DFL",
        "HGBlock",
        "HGStem",
        "SPP",
        "SPPF",
        "C1",
        "C2",
        "C3",
        "C2f",
        "C2fAttn",
        "ImagePoolingAttn",
        "ContrastiveHead",
        "BNContrastiveHead",
        "C3x",
        "C3TR",
        "C3Ghost",
        "GhostBottleneck",
        "Bottleneck",
        "BottleneckCSP",
        "Proto",
        "RepC3",
        "ResNetLayer",
        "RepNCSPELAN4",
        "ADown",
        "SPPELAN",
        "CBFuse",
        "CBLinear",
        "Silence",
        "CoTAttention",
    )

2.3 In /ultralytics/nn/modules/__init__.py, add CoTAttention to the end of the "from .block import (" list at the top of the file

from .block import (

C1,

C2,

C3,

C3TR,

DFL,

SPP,

SPPF,

Bottleneck,

BottleneckCSP,

C2f,

C2fAttn,

ImagePoolingAttn,

C3Ghost,

C3x,

GhostBottleneck,

HGBlock,

HGStem,

Proto,

RepC3,

ResNetLayer,

ContrastiveHead,

BNContrastiveHead,

RepNCSPELAN4,

ADown,

SPPELAN,

CBFuse,

CBLinear,

Silence,

CoTAttention,

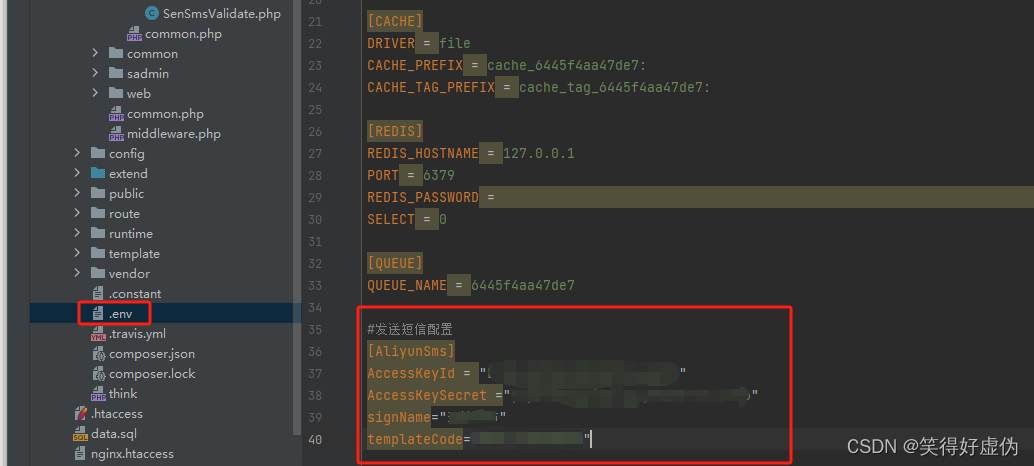

)2.4 在/ultralytics/nn/tasks.py

Add CoTAttention to the end of the import list:

    from ultralytics.nn.modules import (C1, C2, C3, C3TR, SPP, SPPF,
                                        Bottleneck, BottleneckCSP, C2f, C3Ghost, C3x, Classify, Concat, Conv,
                                        ConvTranspose, Detect, DWConv, DWConvTranspose2d, Ensemble,
                                        Focus, GhostBottleneck, GhostConv, Segment, CoTAttention)

Then, inside def parse_model(d, ch, verbose=True):, add the following branch:

    elif m is CoTAttention:
        c1, c2 = ch[f], args[0]
        if c2 != nc:
            c2 = make_divisible(min(c2, max_channels) * width, 8)
        args = [c1, *args[1:]]

2.5 yolov8_CoTAttention.yaml

    # Ultralytics YOLO 🚀, GPL-3.0 license
    # YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

    # Parameters
    nc: 4  # number of classes
    scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
      # [depth, width, max_channels]
      n: [0.33, 0.25, 1024]  # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
      s: [0.33, 0.50, 1024]  # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
      m: [0.67, 0.75, 768]  # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
      l: [1.00, 1.00, 512]  # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
      x: [1.00, 1.25, 512]  # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

    # YOLOv8.0n backbone
    backbone:
      # [from, repeats, module, args]
      - [-1, 1, Conv, [64, 3, 2]]  # 0-P1/2
      - [-1, 1, Conv, [128, 3, 2]]  # 1-P2/4
      - [-1, 3, C2f, [128, True]]
      - [-1, 1, Conv, [256, 3, 2]]  # 3-P3/8
      - [-1, 6, C2f, [256, True]]
      - [-1, 1, Conv, [512, 3, 2]]  # 5-P4/16
      - [-1, 6, C2f, [512, True]]
      - [-1, 1, Conv, [1024, 3, 2]]  # 7-P5/32
      - [-1, 3, C2f, [1024, True]]
      - [-1, 1, SPPF, [1024, 5]]  # 9

    # YOLOv8.0n head
    head:
      - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
      - [[-1, 6], 1, Concat, [1]]  # cat backbone P4
      - [-1, 3, C2f, [512]]  # 12

      - [-1, 1, nn.Upsample, [None, 2, 'nearest']]
      - [[-1, 4], 1, Concat, [1]]  # cat backbone P3
      - [-1, 3, C2f, [256]]  # 15 (P3/8-small)
      - [-1, 3, CoTAttention, [256]]  # 16

      - [-1, 1, Conv, [256, 3, 2]]
      - [[-1, 12], 1, Concat, [1]]  # cat head P4
      - [-1, 3, C2f, [512]]  # 19 (P4/16-medium)
      - [-1, 3, CoTAttention, [512]]  # 20

      - [-1, 1, Conv, [512, 3, 2]]
      - [[-1, 9], 1, Concat, [1]]  # cat head P5
      - [-1, 3, C2f, [1024]]  # 23 (P5/32-large)
      - [-1, 3, CoTAttention, [1024]]  # 24

      - [[16, 20, 24], 1, Detect, [nc]]  # Detect(P3, P4, P5)
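Because each inserted CoTAttention layer shifts the indices of every layer after it, it is worth checking the bookkeeping by hand. The sketch below copies the head into a plain Python list, finds the index of each CoTAttention layer, and applies the same channel formula as the elif branch added to parse_model. `make_divisible` here is a local stand-in written for this example (it rounds up to a multiple of the divisor), and `cot_layers`, `HEAD` and `BACKBONE_LAYERS` are hypothetical names, not ultralytics APIs.

```python
import math


def make_divisible(x, divisor):
    # Local stand-in (assumption): round x up to the nearest multiple of divisor.
    return math.ceil(x / divisor) * divisor


# Head of yolov8_CoTAttention.yaml as (from, module, args); the backbone occupies layers 0-9.
HEAD = [
    (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 6], "Concat", [1]),
    (-1, "C2f", [512]),
    (-1, "nn.Upsample", [None, 2, "nearest"]),
    ([-1, 4], "Concat", [1]),
    (-1, "C2f", [256]),
    (-1, "CoTAttention", [256]),
    (-1, "Conv", [256, 3, 2]),
    ([-1, 12], "Concat", [1]),
    (-1, "C2f", [512]),
    (-1, "CoTAttention", [512]),
    (-1, "Conv", [512, 3, 2]),
    ([-1, 9], "Concat", [1]),
    (-1, "C2f", [1024]),
    (-1, "CoTAttention", [1024]),
    ([16, 20, 24], "Detect", ["nc"]),
]
BACKBONE_LAYERS = 10


def cot_layers(head, width, max_channels, first_index=BACKBONE_LAYERS):
    """Map each CoTAttention layer index to the scaled channel count c2."""
    return {
        first_index + i: make_divisible(min(args[0], max_channels) * width, 8)
        for i, (_, module, args) in enumerate(head)
        if module == "CoTAttention"
    }


layers_n = cot_layers(HEAD, width=0.25, max_channels=1024)  # scale 'n'
detect_sources = HEAD[-1][0]
print(layers_n)                            # {16: 64, 20: 128, 24: 256}
print(sorted(layers_n) == detect_sources)  # True
```

For scale n the three CoTAttention layers sit at indices 16, 20 and 24, which matches the Detect layer's inputs, and their channel counts (64, 128, 256) are all divisible by 4 as the grouped convolution in key_embed requires. The preceding C2f layers use the same nominal widths, so the input channels c1 passed to CoTAttention equal these values.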