20240202 Using whisper.cpp in GPU mode on Ubuntu 20.04.6

2024/2/2 19:43

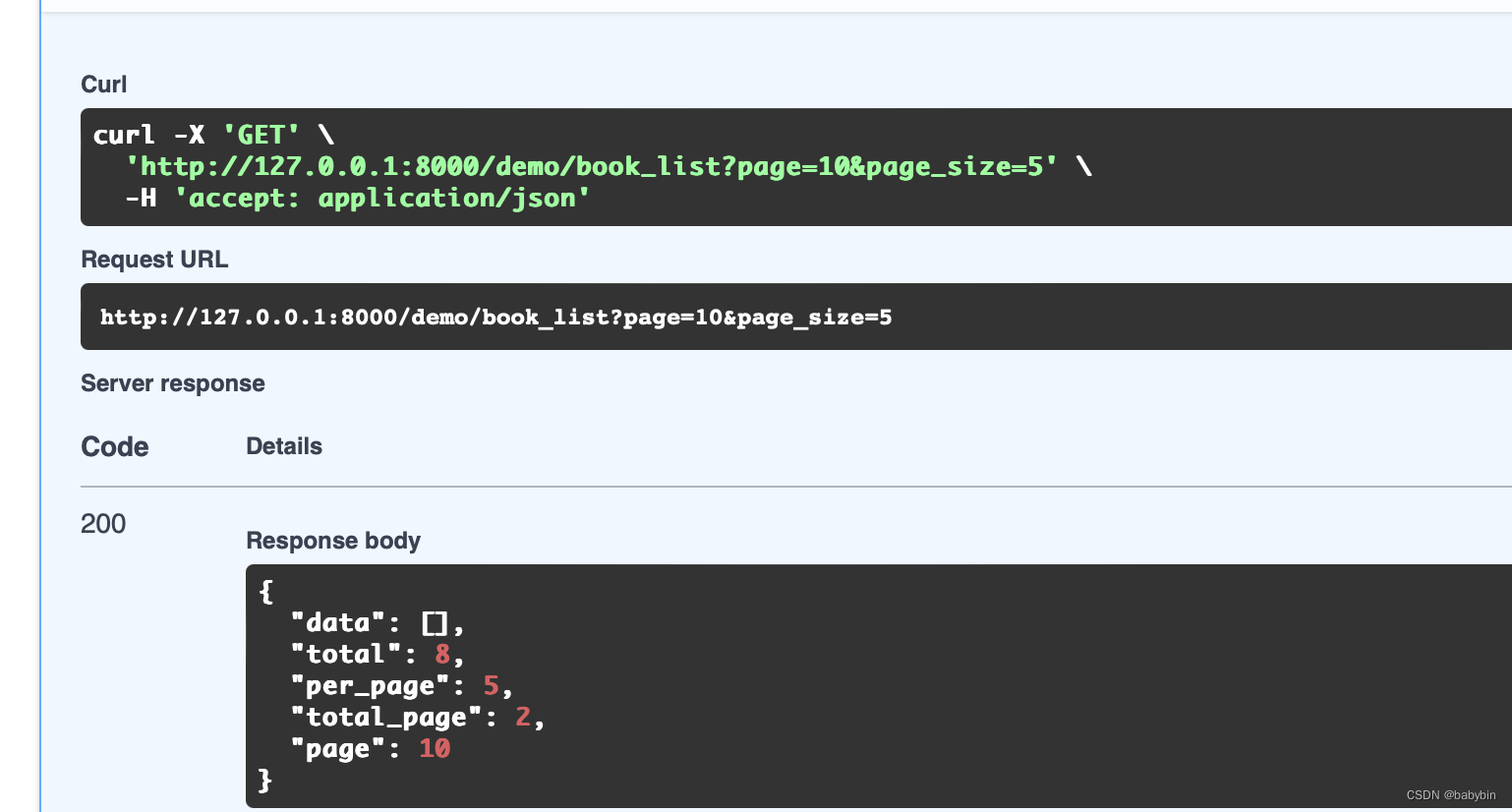

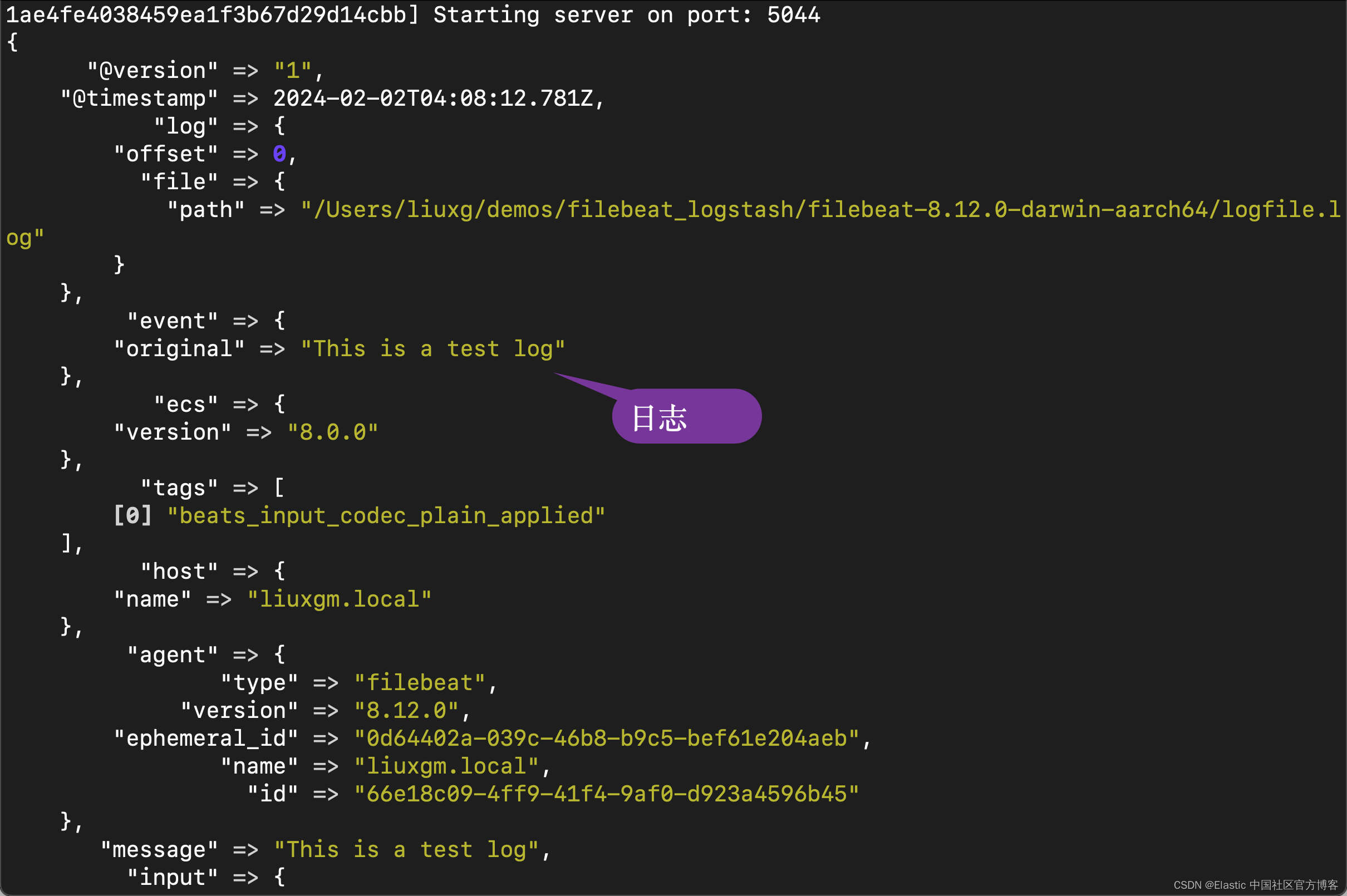

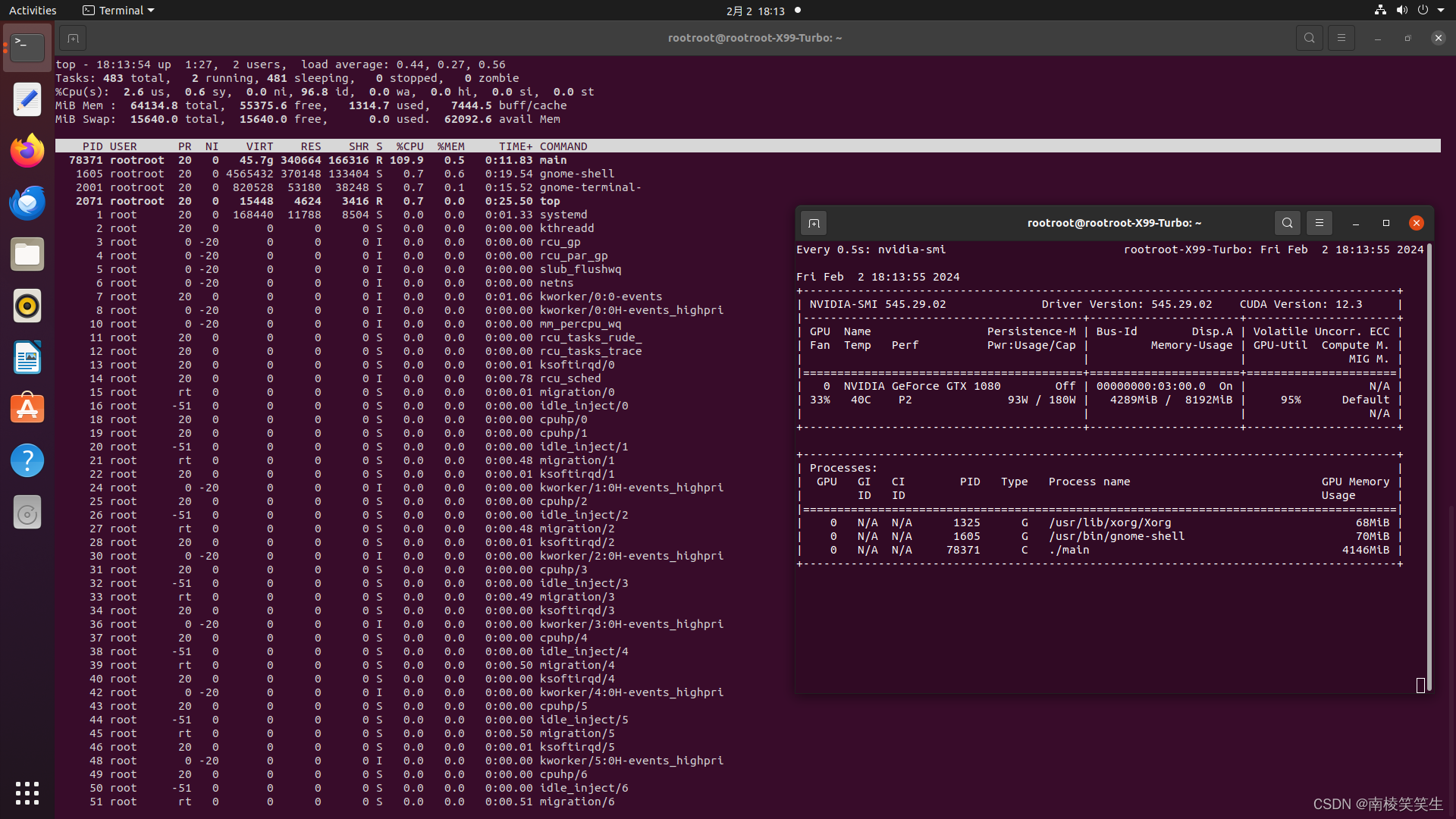

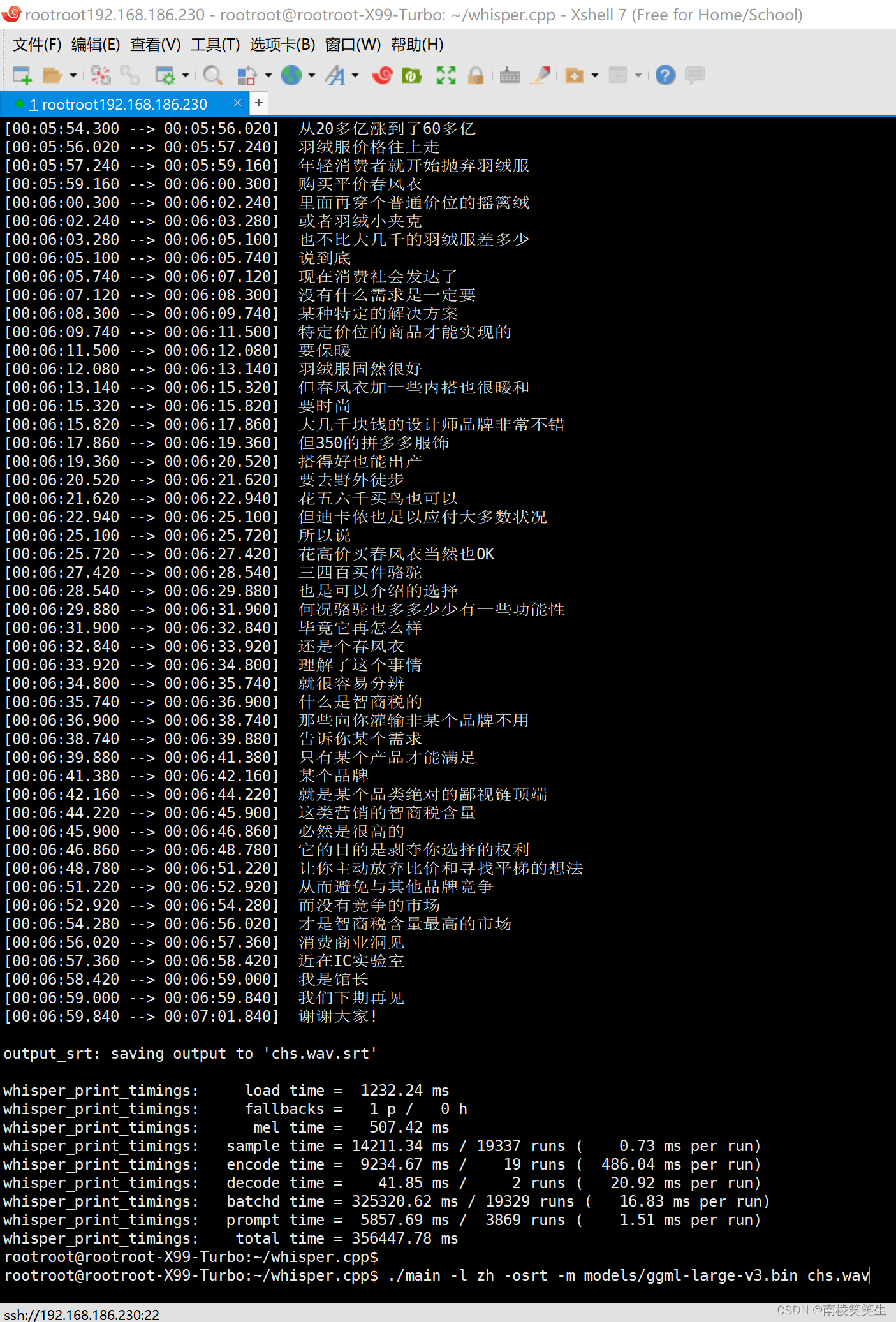

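[Conclusion: on Ubuntu 20.04.6 with a GTX 1080, the large model (large-v3) needed 356447.78 ms, i.e. 356.5 seconds or almost 6 minutes, to transcribe a 7-minute Chinese video! The efficiency is poor!]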
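A more telling way to read this number is the real-time factor: processing time divided by audio length. Both inputs come from the log further down (`total time = 356447.78 ms`, and `421.8 sec` for chs.wav). A minimal awk sketch; `rtf` is a hypothetical helper name, not part of whisper.cpp:

```shell
#!/usr/bin/env bash
# rtf: hypothetical helper that prints the real-time factor
#   $1 = total processing time in milliseconds ("total time" in whisper_print_timings)
#   $2 = audio duration in seconds
# A result below 1.0 means the transcription ran faster than real time.
rtf() {
    awk -v ms="$1" -v sec="$2" 'BEGIN { printf "%.3f\n", (ms / 1000) / sec }'
}
```

`rtf 356447.78 421.8` prints `0.845`, so the large-v3 run took about 85% of the audio's own duration.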

Prerequisite: you need to be able to reach overseas websites (e.g. through a proxy)! ^_^

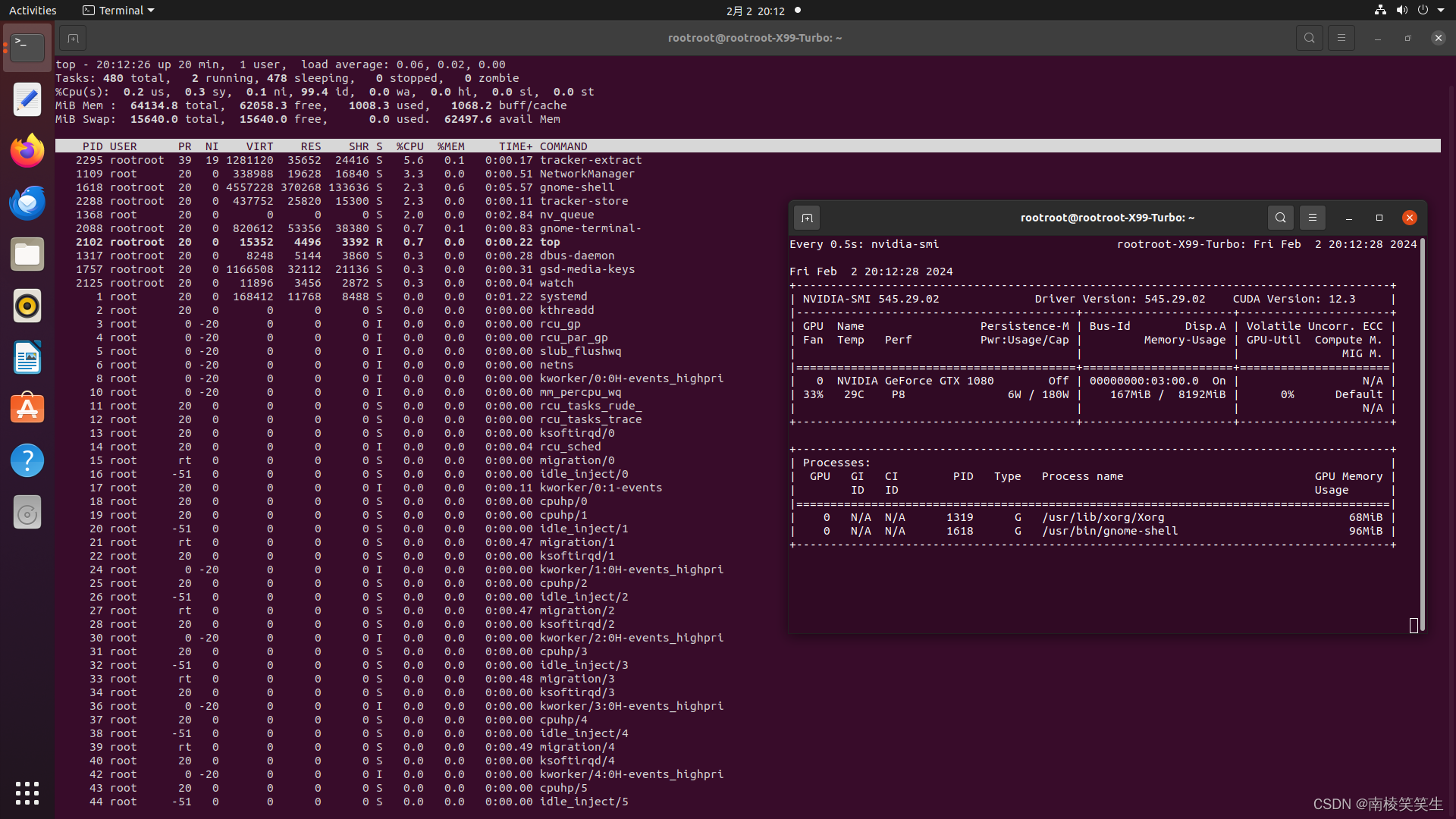

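1. First, you need an NVIDIA graphics card. Mine is a second-hand GTX 1080 bought on PDD (Pinduoduo) for ¥800 [and very likely an ex-mining card!].

2. Correctly install NVIDIA's latest 545-series driver, plus CUDA and cuDNN.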
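Before building, it helps to confirm that the driver and the CUDA toolkit are both visible from the shell: `nvidia-smi` ships with the driver and `nvcc` with the CUDA toolkit. A minimal presence check; the helper name is hypothetical, and it does not verify versions or cuDNN:

```shell
#!/usr/bin/env bash
# check_gpu_toolchain: hypothetical helper that reports whether the NVIDIA
# driver tool (nvidia-smi) and the CUDA compiler (nvcc) are on PATH.
# Returns non-zero if either one is missing; it only checks presence.
check_gpu_toolchain() {
    local missing=0 tool
    for tool in nvidia-smi nvcc; do
        if command -v "$tool" >/dev/null 2>&1; then
            echo "found: $tool"
        else
            echo "missing: $tool"
            missing=1
        fi
    done
    return "$missing"
}
```

If both are found, `nvidia-smi` shows the driver version and GPU, and `nvcc --version` the toolkit version. If `nvcc` is reported missing although CUDA is installed, the toolkit's `bin` directory (typically `/usr/local/cuda/bin`, the same prefix the build flags below use for headers) is probably not on `PATH`.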

3. Install Torch

4. Configure whisper

https://github.com/ggerganov/whisper.cpp

https://www.toutiao.com/article/7276732434920653312/

Hands-on with the C/C++ version of OpenAI's Whisper

First, download the code. Note: my OS is Ubuntu 20.04.6.

git clone https://github.com/ggerganov/whisper.cpp

After the clone finishes, enter the project directory:

cd whisper.cpp

Run the following script to download a model. Here I picked the base version to test English recognition first:

bash ./models/download-ggml-model.sh base.en

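However, several attempts all failed to download, with the following error message:

A quick search online turned up links that offer the models for download: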

https://github.com/ggerganov/whisper.cpp/tree/master/models

https://huggingface.co/ggerganov/whisper.cpp/tree/main

I chose to download all 35 files!

Once downloaded, copy the model file into the project's models directory:

cp ~/Downloads/ggml-base.en.bin ~/whisper.cpp/models/
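Instead of downloading all 35 files by hand, a single model can be fetched straight into `models/`. The `tree/main` link above is an HTML page; the raw file sits under `resolve/main`. This sketch assumes the repository keeps the `ggml-<name>.bin` naming shown there and that `wget` can reach huggingface.co; both helper names are hypothetical:

```shell
#!/usr/bin/env bash
# model_url: hypothetical helper that builds the direct download URL for a
# ggml model name such as base.en, medium or large-v3.
model_url() {
    printf 'https://huggingface.co/ggerganov/whisper.cpp/resolve/main/ggml-%s.bin\n' "$1"
}

# fetch_model: download one model into ./models (run from the whisper.cpp
# directory). -c resumes an interrupted download, -P sets the target folder.
fetch_model() {
    wget -c -P models "$(model_url "$1")"
}
```

For example, `fetch_model large-v3` should produce `models/ggml-large-v3.bin`, the file used in the Chinese test below.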

Next, run the following build commands (WHISPER_CUBLAS=1 enables the CUDA/cuBLAS GPU backend):

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ make clean

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ WHISPER_CUBLAS=1 make -j16

The output is as follows:

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ WHISPER_CUBLAS=1 make

I whisper.cpp build info:

I UNAME_S: Linux

I UNAME_P: x86_64

I UNAME_M: x86_64

I CFLAGS: -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I LDFLAGS: -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

I CC: cc (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

I CXX: g++ (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

nvcc --forward-unknown-to-host-compiler -arch=native -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -Wno-pedantic -c ggml-cuda.cu -o ggml-cuda.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -c ggml.c -o ggml.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -c ggml-alloc.c -o ggml-alloc.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -c ggml-backend.c -o ggml-backend.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -c ggml-quants.c -o ggml-quants.o

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -c whisper.cpp -o whisper.o

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include examples/main/main.cpp examples/common.cpp examples/common-ggml.cpp ggml-cuda.o ggml.o ggml-alloc.o ggml-backend.o ggml-quants.o whisper.o -o main -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

./main -h

usage: ./main [options] file0.wav file1.wav ...

options:

-h, --help [default] show this help message and exit

-t N, --threads N [4 ] number of threads to use during computation

-p N, --processors N [1 ] number of processors to use during computation

-ot N, --offset-t N [0 ] time offset in milliseconds

-on N, --offset-n N [0 ] segment index offset

-d N, --duration N [0 ] duration of audio to process in milliseconds

-mc N, --max-context N [-1 ] maximum number of text context tokens to store

-ml N, --max-len N [0 ] maximum segment length in characters

-sow, --split-on-word [false ] split on word rather than on token

-bo N, --best-of N [5 ] number of best candidates to keep

-bs N, --beam-size N [5 ] beam size for beam search

-wt N, --word-thold N [0.01 ] word timestamp probability threshold

-et N, --entropy-thold N [2.40 ] entropy threshold for decoder fail

-lpt N, --logprob-thold N [-1.00 ] log probability threshold for decoder fail

-debug, --debug-mode [false ] enable debug mode (eg. dump log_mel)

-tr, --translate [false ] translate from source language to english

-di, --diarize [false ] stereo audio diarization

-tdrz, --tinydiarize [false ] enable tinydiarize (requires a tdrz model)

-nf, --no-fallback [false ] do not use temperature fallback while decoding

-otxt, --output-txt [false ] output result in a text file

-ovtt, --output-vtt [false ] output result in a vtt file

-osrt, --output-srt [false ] output result in a srt file

-olrc, --output-lrc [false ] output result in a lrc file

-owts, --output-words [false ] output script for generating karaoke video

-fp, --font-path [/System/Library/Fonts/Supplemental/Courier New Bold.ttf] path to a monospace font for karaoke video

-ocsv, --output-csv [false ] output result in a CSV file

-oj, --output-json [false ] output result in a JSON file

-ojf, --output-json-full [false ] include more information in the JSON file

-of FNAME, --output-file FNAME [ ] output file path (without file extension)

-np, --no-prints [false ] do not print anything other than the results

-ps, --print-special [false ] print special tokens

-pc, --print-colors [false ] print colors

-pp, --print-progress [false ] print progress

-nt, --no-timestamps [false ] do not print timestamps

-l LANG, --language LANG [en ] spoken language ('auto' for auto-detect)

-dl, --detect-language [false ] exit after automatically detecting language

--prompt PROMPT [ ] initial prompt

-m FNAME, --model FNAME [models/ggml-base.en.bin] model path

-f FNAME, --file FNAME [ ] input WAV file path

-oved D, --ov-e-device DNAME [CPU ] the OpenVINO device used for encode inference

-ls, --log-score [false ] log best decoder scores of tokens

-ng, --no-gpu [false ] disable GPU

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include examples/bench/bench.cpp ggml-cuda.o ggml.o ggml-alloc.o ggml-backend.o ggml-quants.o whisper.o -o bench -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include examples/quantize/quantize.cpp examples/common.cpp examples/common-ggml.cpp ggml-cuda.o ggml.o ggml-alloc.o ggml-backend.o ggml-quants.o whisper.o -o quantize -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include examples/server/server.cpp examples/common.cpp examples/common-ggml.cpp ggml-cuda.o ggml.o ggml-alloc.o ggml-backend.o ggml-quants.o whisper.o -o server -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib
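The link lines above pass `-lcuda -lcublas ... -lcudart`, so a CUDA-enabled `main` should load libcublas at run time, while a plain CPU build would not. A quick sketch for telling the two apart; `uses_cublas` is a hypothetical helper, and it assumes the CUDA libraries are linked dynamically, as in these link lines:

```shell
#!/usr/bin/env bash
# uses_cublas: hypothetical helper; succeeds if the given binary is
# dynamically linked against libcublas (i.e. built with WHISPER_CUBLAS=1).
# A missing file or a missing ldd simply counts as "not a CUDA build".
uses_cublas() {
    ldd "$1" 2>/dev/null | grep -q 'libcublas'
}
```

Usage: `uses_cublas ./main && echo "CUDA build" || echo "CPU-only build"`.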

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ll

Once the build succeeds, you can run the test program, starting with the bundled sample audio [English]:

./main -f samples/jfk.wav

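The English sample was recognized correctly. Next, the Chinese audio chs.wav is transcribed with the large-v3 model (-l zh selects Chinese, -osrt also writes an SRT subtitle file):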

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ./main -l zh -osrt -m models/ggml-large-v3.bin chs.wav

whisper_init_from_file_with_params_no_state: loading model from 'models/ggml-large-v3.bin'

whisper_model_load: loading model

whisper_model_load: n_vocab = 51866

whisper_model_load: n_audio_ctx = 1500

whisper_model_load: n_audio_state = 1280

whisper_model_load: n_audio_head = 20

whisper_model_load: n_audio_layer = 32

whisper_model_load: n_text_ctx = 448

whisper_model_load: n_text_state = 1280

whisper_model_load: n_text_head = 20

whisper_model_load: n_text_layer = 32

whisper_model_load: n_mels = 128

whisper_model_load: ftype = 1

whisper_model_load: qntvr = 0

whisper_model_load: type = 5 (large v3)

whisper_model_load: adding 1609 extra tokens

whisper_model_load: n_langs = 100

ggml_init_cublas: GGML_CUDA_FORCE_MMQ: no

ggml_init_cublas: CUDA_USE_TENSOR_CORES: yes

ggml_init_cublas: found 1 CUDA devices:

Device 0: NVIDIA GeForce GTX 1080, compute capability 6.1, VMM: yes

whisper_backend_init: using CUDA backend

whisper_model_load: CUDA0 total size = 3094.86 MB (3 buffers)

whisper_model_load: model size = 3094.36 MB

whisper_backend_init: using CUDA backend

whisper_init_state: kv self size = 220.20 MB

whisper_init_state: kv cross size = 245.76 MB

whisper_init_state: compute buffer (conv) = 35.50 MB

whisper_init_state: compute buffer (encode) = 233.50 MB

whisper_init_state: compute buffer (cross) = 10.15 MB

whisper_init_state: compute buffer (decode) = 108.99 MB

system_info: n_threads = 4 / 36 | AVX = 1 | AVX2 = 1 | AVX512 = 0 | FMA = 1 | NEON = 0 | ARM_FMA = 0 | METAL = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | CUDA = 1 | COREML = 0 | OPENVINO = 0 |

main: processing 'chs.wav' (6748501 samples, 421.8 sec), 4 threads, 1 processors, 5 beams + best of 5, lang = zh, task = transcribe, timestamps = 1 ...

[00:00:00.040 --> 00:00:01.460] 前段时间有个巨石横火

[00:00:01.460 --> 00:00:02.860] 某某是男人最好的衣媒

[00:00:02.860 --> 00:00:04.800] 这里的某某可以替换为减肥

[00:00:04.800 --> 00:00:07.620] 长发 西装 考研 书唱 永结无间等等等等

[00:00:07.620 --> 00:00:09.320] 我听到最新的一个说法是

[00:00:09.320 --> 00:00:11.940] 微分碎盖加口罩加半框眼镜加冲锋衣

[00:00:11.940 --> 00:00:13.440] 等于男人最好的衣媒

[00:00:13.440 --> 00:00:14.420] 大概也就前几年

[00:00:14.420 --> 00:00:17.560] 冲锋衣还和格子衬衫并列为程序员穿搭精华

[00:00:17.560 --> 00:00:19.940] 紫红色冲锋衣还被誉为广场舞达妈标配

[00:00:19.940 --> 00:00:22.700] 骆驼牌还是我爹这个年纪的人才会愿意买的牌子

[00:00:22.700 --> 00:00:24.380] 不知道风向为啥变得这么快

[00:00:24.380 --> 00:00:26.680] 为啥这东西突然变成男生逆袭神器

[00:00:26.680 --> 00:00:27.660] 时尚潮流单品

[00:00:27.660 --> 00:00:29.580] 后来我翻了一下小红书就懂了

[00:00:29.580 --> 00:00:30.460] 时尚这个时期

[00:00:30.460 --> 00:00:31.620] 重点不在于衣服

[00:00:31.620 --> 00:00:32.160] 在于人

[00:00:32.160 --> 00:00:34.500] 现在小红书上面和冲锋衣相关的笔记

[00:00:34.500 --> 00:00:36.220] 照片里的男生都是这样的

[00:00:36.220 --> 00:00:36.880] 这样的

[00:00:36.880 --> 00:00:38.140] 还有这样的

[00:00:38.140 --> 00:00:39.460] 你们哪里是看穿搭的

[00:00:39.460 --> 00:00:40.540] 你们明明是看脸

[00:00:40.540 --> 00:00:41.780] 就这个造型这个年龄

[00:00:41.780 --> 00:00:43.920] 你换上老头衫也能穿出氛围感好吗

[00:00:43.920 --> 00:00:46.560] 我又想起了当年郭德纲老师穿计繁西的残剧

[00:00:46.560 --> 00:00:48.560] 这个世界对我们这些长得不好看的人

[00:00:48.560 --> 00:00:49.480] 还真是苛刻呢

[00:00:49.480 --> 00:00:52.100] 所以说我总结了一下冲锋衣传达的要领

[00:00:52.100 --> 00:00:54.200] 大概就是一张白净且人畜无汉的脸

[00:00:54.200 --> 00:00:55.120] 充足的发量

[00:00:55.120 --> 00:00:55.980] 纤细的体型

[00:00:55.980 --> 00:00:58.160] 当然身上的冲锋衣还得是骆驼的

[00:00:58.160 --> 00:00:59.320] 去年在户外用品界

[00:00:59.320 --> 00:01:01.100] 最顶流的既不是鸟像书

[00:01:01.100 --> 00:01:02.560] 也不是有校服之称的北面

[00:01:02.560 --> 00:01:04.120] 或者老台顶流哥伦比亚

[00:01:04.120 --> 00:01:04.800] 而是骆驼

[00:01:04.800 --> 00:01:06.980] 双十一骆驼在天猫户外服饰品类

[00:01:06.980 --> 00:01:08.860] 拿下销售额和销量双料冠军

[00:01:08.860 --> 00:01:09.980] 销量达到百万级

[00:01:09.980 --> 00:01:10.620] 在抖音

[00:01:10.620 --> 00:01:13.200] 骆驼销售同比增幅高达百分之296

[00:01:13.200 --> 00:01:15.920] 旗下主打的三合一高性价比冲锋衣成为爆品

[00:01:15.920 --> 00:01:17.260] 哪怕不看双十一

[00:01:17.260 --> 00:01:18.020] 随手一搜

[00:01:18.020 --> 00:01:21.040] 骆驼在冲锋衣的七日销售榜上都是图榜的存在

[00:01:21.040 --> 00:01:22.480] 这是线上的销售表现

[00:01:22.480 --> 00:01:24.200] 至于线下还是网友总结的好

[00:01:24.200 --> 00:01:26.740] 如今在南方街头的骆驼比沙漠里的都多

[00:01:26.740 --> 00:01:27.540] 爬个华山

[00:01:27.540 --> 00:01:28.320] 满山的骆驼

[00:01:28.320 --> 00:01:29.840] 随便逛个街撞山了

[00:01:29.840 --> 00:01:31.060] 至于骆驼为啥这么火

[00:01:31.060 --> 00:01:31.800] 便宜啊

[00:01:31.800 --> 00:01:33.400] 拿卖的最好的丁真同款

[00:01:33.400 --> 00:01:35.500] 幻影黑三合一冲锋衣举个例子

[00:01:35.500 --> 00:01:36.000] 线下买

[00:01:36.000 --> 00:01:37.440] 标牌价格2198

[00:01:37.440 --> 00:01:38.940] 但是跑到网上看一下

[00:01:38.940 --> 00:01:40.460] 标价就变成了699

[00:01:40.460 --> 00:01:41.220] 至于折扣

[00:01:41.220 --> 00:01:42.360] 日常也都是有的

[00:01:42.360 --> 00:01:43.440] 400出头就能买到

[00:01:43.440 --> 00:01:44.960] 甚至有时候能低到300价

[00:01:44.960 --> 00:01:46.140] 要是你还嫌贵

[00:01:46.140 --> 00:01:48.200] 路头还有200块出头的单层冲锋衣

[00:01:48.200 --> 00:01:49.080] 就这个价格

[00:01:49.080 --> 00:01:51.520] 搁上海恐怕还不够两次CityWalk的报名费

[00:01:51.520 --> 00:01:52.560] 看了这个价格

[00:01:52.560 --> 00:01:53.560] 再对比一下北面

[00:01:53.560 --> 00:01:54.640] 1000块钱起步

[00:01:54.640 --> 00:01:56.000] 你就能理解为啥北面

[00:01:56.000 --> 00:01:58.120] 这么快就被大学生踢出了校服序列了

[00:01:58.120 --> 00:02:00.380] 我不知道现在大学生每个月生活费多少

[00:02:00.380 --> 00:02:02.160] 反正按照我上学时候的生活费

[00:02:02.160 --> 00:02:03.200] 一个月不吃不喝

[00:02:03.200 --> 00:02:05.080] 也就买得起俩袖子加一个帽子

[00:02:05.080 --> 00:02:06.420] 难怪当年全是假北面

[00:02:06.420 --> 00:02:07.400] 现在都是真路头

[00:02:07.400 --> 00:02:08.640] 至少人家是正品啊

[00:02:08.640 --> 00:02:10.080] 我翻了一下社交媒体

[00:02:10.080 --> 00:02:12.060] 发现对路头的吐槽和买了路头的

[00:02:12.060 --> 00:02:13.340] 基本上是1比1的比例

[00:02:13.340 --> 00:02:15.040] 吐槽最多的就是衣服会掉色

[00:02:15.040 --> 00:02:15.960] 还会串色

[00:02:15.960 --> 00:02:17.100] 比如图增洗个几次

[00:02:17.100 --> 00:02:18.240] 穿个两天就掉光了

[00:02:18.240 --> 00:02:19.600] 比如不同仓库发的货

[00:02:19.600 --> 00:02:20.600] 质量参差不齐

[00:02:20.600 --> 00:02:22.300] 买衣服还得看户口拼出身

[00:02:22.300 --> 00:02:23.660] 至于什么做工比较差

[00:02:23.660 --> 00:02:24.300] 内胆多

[00:02:24.300 --> 00:02:24.880] 走线糙

[00:02:24.880 --> 00:02:26.380] 不防水之类的就更多了

[00:02:26.380 --> 00:02:27.360] 但是这些吐槽

[00:02:27.360 --> 00:02:29.160] 并不意味着会影响路头的销量

[00:02:29.160 --> 00:02:30.820] 甚至还会有不少自来水表示

[00:02:30.820 --> 00:02:32.680] 就这价格要啥自行车啊

[00:02:32.680 --> 00:02:34.080] 所谓性价比性价比

[00:02:34.080 --> 00:02:35.340] 脱离价位谈性能

[00:02:35.340 --> 00:02:36.980] 这就不符合消费者的需求嘛

[00:02:36.980 --> 00:02:38.480] 无数次价格战告诉我们

[00:02:38.480 --> 00:02:39.500] 只要肯降价

[00:02:39.500 --> 00:02:40.960] 就没有卖不出去的产品

[00:02:40.960 --> 00:02:41.820] 一件冲锋衣

[00:02:41.820 --> 00:02:43.500] 1000多你觉得平平无奇

[00:02:43.500 --> 00:02:44.900] 500多你觉得差点意思

[00:02:44.900 --> 00:02:46.480] 200块你就要秒下单了

[00:02:46.480 --> 00:02:48.520] 到99恐怕就要拼点手速了

[00:02:48.520 --> 00:02:49.560] 像冲锋衣这个品类

[00:02:49.560 --> 00:02:50.720] 本来价格跨度就大

[00:02:50.720 --> 00:02:52.660] 北面最便宜的Gortex冲锋衣

[00:02:52.660 --> 00:02:53.740] 价格3000起步

[00:02:53.740 --> 00:02:56.360] 大概是同品牌最便宜冲锋衣的三倍价格

[00:02:56.360 --> 00:02:57.060] 至于十足鸟

[00:02:57.060 --> 00:02:59.020] 搭载了Gortex的硬壳起步价

[00:02:59.020 --> 00:02:59.780] 就要到4500

[00:02:59.780 --> 00:03:01.080] 而且同样是Gortex

[00:03:01.080 --> 00:03:02.860] 内部也有不同的系列和档次

[00:03:02.860 --> 00:03:03.520] 做成衣服

[00:03:03.520 --> 00:03:05.780] 中间的差价恐怕就够买两件骆驼了

[00:03:05.780 --> 00:03:06.620] 至于智能控温

[00:03:06.620 --> 00:03:07.320] 防水拉链

[00:03:07.320 --> 00:03:07.900] 全压胶

[00:03:07.900 --> 00:03:09.760] 更加不可能出现在骆驼这里了

[00:03:09.760 --> 00:03:11.780] 至少不会是三四百的骆驼身上会有的

[00:03:11.780 --> 00:03:12.660] 有的价外的衣服

[00:03:12.660 --> 00:03:14.040] 买的就是一个放弃幻想

[00:03:14.040 --> 00:03:15.660] 吃到肚子里的科技鱼很活

[00:03:15.660 --> 00:03:16.840] 是能给你省钱的

[00:03:16.840 --> 00:03:18.320] 穿在身上的科技鱼很活

[00:03:18.320 --> 00:03:20.040] 装装件件都是要加钱的

[00:03:20.040 --> 00:03:21.440] 所以正如罗曼罗兰所说

[00:03:21.440 --> 00:03:23.040] 这世界上只有一种英雄主义

[00:03:23.040 --> 00:03:24.860] 就是在认清了骆驼的本质以后

[00:03:24.860 --> 00:03:26.060] 依然选择买骆驼

[00:03:26.060 --> 00:03:26.900] 关于骆驼的火爆

[00:03:26.900 --> 00:03:28.180] 我有一些小小的看法

[00:03:28.180 --> 00:03:28.960] 骆驼这个东西

[00:03:28.960 --> 00:03:30.220] 它其实就是个潮牌

[00:03:30.220 --> 00:03:31.940] 看看它的营销方式就知道了

[00:03:31.940 --> 00:03:32.920] 现在打开小红书

[00:03:32.920 --> 00:03:35.120] 日常可以看到骆驼穿搭是这样的

[00:03:35.120 --> 00:03:36.900] 加一点氛围感是这样的

[00:03:36.900 --> 00:03:37.400] 对比一下

[00:03:37.400 --> 00:03:39.240] 其他品牌的风格是这样的

[00:03:39.240 --> 00:03:40.020] 这样的

[00:03:40.020 --> 00:03:41.280] 其实对比一下就知道了

[00:03:41.280 --> 00:03:42.600] 其他品牌突出一个时程

[00:03:42.600 --> 00:03:44.240] 能防风就一定要讲防风

[00:03:44.240 --> 00:03:45.960] 能扛冻就一定要讲扛冻

[00:03:45.960 --> 00:03:47.340] 但骆驼在营销的时候

[00:03:47.340 --> 00:03:49.080] 主打的就是一个城市户外风

[00:03:49.080 --> 00:03:50.440] 虽然造型是春风衣

[00:03:50.440 --> 00:03:52.180] 但场景往往是在城市里

[00:03:52.180 --> 00:03:54.220] 哪怕在野外也要突出一个风和日丽

[00:03:54.220 --> 00:03:54.940] 阳光敏媚

[00:03:54.940 --> 00:03:56.500] 至少不会在明显的严寒

[00:03:56.500 --> 00:03:58.020] 高海拔或是恶劣气候下

[00:03:58.020 --> 00:04:00.160] 如果用一个词形容骆驼的营销风格

[00:04:00.160 --> 00:04:00.920] 那就是清洗

[00:04:00.920 --> 00:04:03.060] 或者说他很理解自己的消费者是谁

[00:04:03.060 --> 00:04:03.920] 需要什么产品

[00:04:03.920 --> 00:04:05.260] 从使用场景来说

[00:04:05.260 --> 00:04:06.600] 骆驼的消费者买春风衣

[00:04:06.600 --> 00:04:08.640] 不是真的有什么大风大雨要去应对

[00:04:08.640 --> 00:04:10.880] 春风衣的作用是下雨没带伞的时候

[00:04:10.880 --> 00:04:12.160] 临时顶个几分钟

[00:04:12.160 --> 00:04:13.700] 让你能图书馆跑回宿舍

[00:04:13.700 --> 00:04:14.940] 或者是冬天骑电动车

[00:04:14.940 --> 00:04:16.220] 被风吹得不行的时候

[00:04:16.220 --> 00:04:17.200] 稍微扛一下风

[00:04:17.200 --> 00:04:18.340] 不至于体感太冷

[00:04:18.340 --> 00:04:19.700] 当然他们也会出门

[00:04:19.700 --> 00:04:21.780] 但大部分时候也都是去别的城市

[00:04:21.780 --> 00:04:23.860] 或者在城市周边搞搞简单的徒步

[00:04:23.860 --> 00:04:24.920] 这种情况下

[00:04:24.920 --> 00:04:25.920] 穿个骆驼也就够了

[00:04:25.920 --> 00:04:27.220] 从购买动机来说

[00:04:27.220 --> 00:04:29.260] 骆驼就更没有必要上那些硬核科技了

[00:04:29.260 --> 00:04:30.920] 消费者买骆驼买的是个什么呢

[00:04:30.920 --> 00:04:32.240] 不是春风衣的功能性

[00:04:32.240 --> 00:04:33.380] 而是春风衣的造型

[00:04:33.380 --> 00:04:34.340] 宽松的版型

[00:04:34.340 --> 00:04:36.380] 能精准遮住微微隆起的小肚子

[00:04:36.380 --> 00:04:37.440] 棱角分明的质感

[00:04:37.440 --> 00:04:39.420] 能隐藏一切不完美的整体线条

[00:04:39.420 --> 00:04:41.260] 显瘦的副作用就是显年轻

[00:04:41.260 --> 00:04:42.600] 再配上一条牛仔裤

[00:04:42.600 --> 00:04:43.680] 配上一双大黄靴

[00:04:43.680 --> 00:04:45.100] 大学生的气质就出来了

[00:04:45.100 --> 00:04:47.700] 要是自拍的时候再配上大学宿舍洗漱台

[00:04:47.700 --> 00:04:49.380] 那永远擦不干净的镜子

[00:04:49.380 --> 00:04:50.840] 瞬间青春无敌了

[00:04:50.840 --> 00:04:51.700] 说的更直白一点

[00:04:51.700 --> 00:04:53.060] 人家买的是个锦铃神器

[00:04:53.060 --> 00:04:53.820] 所以说

[00:04:53.820 --> 00:04:55.860] 吐槽穿骆驼都是假户外爱好者的人

[00:04:55.860 --> 00:04:57.460] 其实并没有理解骆驼的定位

[00:04:57.460 --> 00:04:59.780] 骆驼其实是给了想要入门山系穿搭

[00:04:59.780 --> 00:05:01.740] 想要追逐流行的人一个最平价

[00:05:01.740 --> 00:05:02.980] 决策成本最低的选择

[00:05:02.980 --> 00:05:04.880] 至于那些真正的硬核户外爱好者

[00:05:04.880 --> 00:05:05.800] 骆驼既没有能力

[00:05:05.800 --> 00:05:07.080] 也没有打算触打他们

[00:05:07.080 --> 00:05:07.980] 反过来说

[00:05:07.980 --> 00:05:09.460] 那些自驾穿越边疆国道

[00:05:09.460 --> 00:05:11.680] 或者去阿尔卑斯山区登山探险的人

[00:05:11.680 --> 00:05:13.540] 也不太可能在户外服饰上省钱

[00:05:13.540 --> 00:05:14.900] 毕竟光是交通住宿

[00:05:14.900 --> 00:05:15.600] 请假出行

[00:05:15.600 --> 00:05:16.560] 成本就不低了

[00:05:16.560 --> 00:05:17.320] 对他们来说

[00:05:17.320 --> 00:05:19.140] 户外装备很多时候是保命用的

[00:05:19.140 --> 00:05:21.180] 也就不存在跟风凹造型的必要了

[00:05:21.180 --> 00:05:22.300] 最后我再说个题外话

[00:05:22.300 --> 00:05:23.320] 年轻人追捧骆驼

[00:05:23.320 --> 00:05:24.240] 一个隐藏的原因

[00:05:24.240 --> 00:05:25.940] 其实是羽绒服越来越贵了

[00:05:25.940 --> 00:05:26.620] 有媒体统计

[00:05:26.620 --> 00:05:28.440] 现在国产羽绒服的平均售价

[00:05:28.440 --> 00:05:29.880] 已经高达881元

[00:05:29.880 --> 00:05:31.140] 波斯灯均价最高

[00:05:31.140 --> 00:05:31.900] 接近2000元

[00:05:31.900 --> 00:05:32.880] 而且过去几年

[00:05:32.880 --> 00:05:34.800] 国产羽绒服品牌都在转向高端化

[00:05:34.800 --> 00:05:37.060] 羽绒服市场分为8000元以上的奢侈级

[00:05:37.060 --> 00:05:38.440] 2000元以下的大众级

[00:05:38.440 --> 00:05:39.740] 而在中间的高端级

[00:05:39.740 --> 00:05:41.220] 国产品牌一直没有存在感

[00:05:41.220 --> 00:05:42.140] 所以过去几年

[00:05:42.140 --> 00:05:43.520] 波斯灯天空人这些品牌

[00:05:43.520 --> 00:05:45.260] 都把2000元到8000元这个市场

[00:05:45.260 --> 00:05:46.560] 当成未来的发展趋势

[00:05:46.560 --> 00:05:47.980] 东芯证券研报显示

[00:05:47.980 --> 00:05:49.600] 从2018到2021年

[00:05:49.600 --> 00:05:52.080] 波斯灯均价4年涨幅达到60%以上

[00:05:52.080 --> 00:05:53.080] 过去5个财年

[00:05:53.080 --> 00:05:54.300] 这个品牌的营销开支

[00:05:54.300 --> 00:05:56.020] 从20多亿涨到了60多亿

[00:05:56.020 --> 00:05:57.240] 羽绒服价格往上走

[00:05:57.240 --> 00:05:59.160] 年轻消费者就开始抛弃羽绒服

[00:05:59.160 --> 00:06:00.300] 购买平价春风衣

[00:06:00.300 --> 00:06:02.240] 里面再穿个普通价位的摇篱绒

[00:06:02.240 --> 00:06:03.280] 或者羽绒小夹克

[00:06:03.280 --> 00:06:05.100] 也不比大几千的羽绒服差多少

[00:06:05.100 --> 00:06:05.740] 说到底

[00:06:05.740 --> 00:06:07.120] 现在消费社会发达了

[00:06:07.120 --> 00:06:08.300] 没有什么需求是一定要

[00:06:08.300 --> 00:06:09.740] 某种特定的解决方案

[00:06:09.740 --> 00:06:11.500] 特定价位的商品才能实现的

[00:06:11.500 --> 00:06:12.080] 要保暖

[00:06:12.080 --> 00:06:13.140] 羽绒服固然很好

[00:06:13.140 --> 00:06:15.320] 但春风衣加一些内搭也很暖和

[00:06:15.320 --> 00:06:15.820] 要时尚

[00:06:15.820 --> 00:06:17.860] 大几千块钱的设计师品牌非常不错

[00:06:17.860 --> 00:06:19.360] 但350的拼多多服饰

[00:06:19.360 --> 00:06:20.520] 搭得好也能出产

[00:06:20.520 --> 00:06:21.620] 要去野外徒步

[00:06:21.620 --> 00:06:22.940] 花五六千买鸟也可以

[00:06:22.940 --> 00:06:25.100] 但迪卡侬也足以应付大多数状况

[00:06:25.100 --> 00:06:25.720] 所以说

[00:06:25.720 --> 00:06:27.420] 花高价买春风衣当然也OK

[00:06:27.420 --> 00:06:28.540] 三四百买件骆驼

[00:06:28.540 --> 00:06:29.880] 也是可以介绍的选择

[00:06:29.880 --> 00:06:31.900] 何况骆驼也多多少少有一些功能性

[00:06:31.900 --> 00:06:32.840] 毕竟它再怎么样

[00:06:32.840 --> 00:06:33.920] 还是个春风衣

[00:06:33.920 --> 00:06:34.800] 理解了这个事情

[00:06:34.800 --> 00:06:35.740] 就很容易分辨

[00:06:35.740 --> 00:06:36.900] 什么是智商税的

[00:06:36.900 --> 00:06:38.740] 那些向你灌输非某个品牌不用

[00:06:38.740 --> 00:06:39.880] 告诉你某个需求

[00:06:39.880 --> 00:06:41.380] 只有某个产品才能满足

[00:06:41.380 --> 00:06:42.160] 某个品牌

[00:06:42.160 --> 00:06:44.220] 就是某个品类绝对的鄙视链顶端

[00:06:44.220 --> 00:06:45.900] 这类营销的智商税含量

[00:06:45.900 --> 00:06:46.860] 必然是很高的

[00:06:46.860 --> 00:06:48.780] 它的目的是剥夺你选择的权利

[00:06:48.780 --> 00:06:51.220] 让你主动放弃比价和寻找平梯的想法

[00:06:51.220 --> 00:06:52.920] 从而避免与其他品牌竞争

[00:06:52.920 --> 00:06:54.280] 而没有竞争的市场

[00:06:54.280 --> 00:06:56.020] 才是智商税含量最高的市场

[00:06:56.020 --> 00:06:57.360] 消费商业洞见

[00:06:57.360 --> 00:06:58.420] 近在IC实验室

[00:06:58.420 --> 00:06:59.000] 我是馆长

[00:06:59.000 --> 00:06:59.840] 我们下期再见

[00:06:59.840 --> 00:07:01.840] 谢谢大家!

output_srt: saving output to 'chs.wav.srt'

whisper_print_timings: load time = 1232.24 ms

whisper_print_timings: fallbacks = 1 p / 0 h

whisper_print_timings: mel time = 507.42 ms

whisper_print_timings: sample time = 14211.34 ms / 19337 runs ( 0.73 ms per run)

whisper_print_timings: encode time = 9234.67 ms / 19 runs ( 486.04 ms per run)

whisper_print_timings: decode time = 41.85 ms / 2 runs ( 20.92 ms per run)

whisper_print_timings: batchd time = 325320.62 ms / 19329 runs ( 16.83 ms per run)

whisper_print_timings: prompt time = 5857.69 ms / 3869 runs ( 1.51 ms per run)

whisper_print_timings: total time = 356447.78 ms
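To see where the 356 seconds go, each stage can be expressed as a share of `total time`. A small awk sketch that reads the `whisper_print_timings` lines from stdin; `timing_shares` is a hypothetical helper, and it assumes the `<stage> time = <ms> ms` layout shown above:

```shell
#!/usr/bin/env bash
# timing_shares: hypothetical helper that reads whisper.cpp log lines like
#   whisper_print_timings: batchd time = 325320.62 ms / 19329 runs ...
# on stdin and prints each stage's percentage of "total time".
timing_shares() {
    awk '
        /whisper_print_timings:/ && / time = / {
            # the word just before " time = " is the stage name
            split($0, parts, " time = ")
            n = split(parts[1], words, " ")
            stage = words[n]
            ms = parts[2] + 0              # leading number, e.g. 325320.62
            if (stage == "total") total = ms
            else { order[++k] = stage; t[stage] = ms }
        }
        END {
            if (total <= 0) exit 1         # no "total time" line found
            for (i = 1; i <= k; i++)
                printf "%-7s %6.1f%%\n", order[i], 100 * t[order[i]] / total
        }'
}
```

Fed the timing lines above, it reports `batchd` at about 91.3% and `encode` at about 2.6%, so nearly all of the time goes into batched decoding rather than encoding. Since this run used `5 beams + best of 5` (see the `main: processing` line), a natural next experiment is adding `-bs 1`, which should switch `main` to greedy decoding; its speed and accuracy on this card were not measured here.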

References:

https://blog.csdn.net/qq_43907505/article/details/135048613

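Open-source speech recognition: a faster-whisper deployment tutorial

Japanese source video: [obtained via hotbox]

https://www.bilibili.com/video/BV1fG4y1b74e/?vd_source=4a6b675fa22dfa306da59f67b1f22616

[Genshin Impact] Kamisato Ayaka's Japanese voice: who could resist a Shinobu Kocho?

Chinese source video: [obtained via 猫抓 (cat-catch)]

https://www.ixigua.com/7320445308314485283

2024-01-05 11:06 Chinese-made shell jackets are on a roll! How did hundred-yuan Camel (骆驼) jackets become bestsellers through marketing? - IC实验室 (IC Lab)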

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ffmpeg

ffmpeg version 4.2.7-0ubuntu0.1 Copyright (c) 2000-2022 the FFmpeg developers

usage: ffmpeg [options] [[infile options] -i infile]... {[outfile options] outfile}...

Use -h to get full help or, even better, run 'man ffmpeg'

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ffmpeg -i chi.mp4 -ar 16000 -ac 1 -c:a pcm_s16le chi.wav

ffmpeg version 4.2.7-0ubuntu0.1 Copyright (c) 2000-2022 the FFmpeg developers

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ffmpeg -i chs.mp4 -ar 16000 -ac 1 -c:a pcm_s16le chs.wav
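The conversion turns the video's audio into 16 kHz (`-ar 16000`), mono (`-ac 1`), 16-bit PCM (`-c:a pcm_s16le`) WAV, which is, as far as I know, the input format whisper.cpp's `main` expects at this version. Because the sample rate is fixed, the duration in the log is simply samples / 16000. A sketch with two hypothetical helpers; the wrapper derives the output name from the input, which avoids slips like the `chi.mp4` one above:

```shell
#!/usr/bin/env bash
# to_whisper_wav: hypothetical wrapper around the ffmpeg command above.
# Writes <input name without extension>.wav: 16 kHz, mono, 16-bit PCM.
to_whisper_wav() {
    local in="$1"
    local out="${in%.*}.wav"
    ffmpeg -i "$in" -ar 16000 -ac 1 -c:a pcm_s16le "$out"
}

# wav_seconds: hypothetical helper; duration in seconds of a 16 kHz recording
# given its sample count (e.g. "6748501 samples" reported for chs.wav).
wav_seconds() {
    awk -v n="$1" 'BEGIN { printf "%.1f\n", n / 16000 }'
}
```

`to_whisper_wav chs.mp4` writes `chs.wav`, and `wav_seconds 6748501` prints `421.8`, matching the `421.8 sec` in the `main: processing` line.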

The log is as follows:

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ make clean

I whisper.cpp build info:

I UNAME_S: Linux

I UNAME_P: x86_64

I UNAME_M: x86_64

I CFLAGS: -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3

I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3

I LDFLAGS:

I CC: cc (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

I CXX: g++ (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

rm -f *.o main stream command talk talk-llama bench quantize server lsp libwhisper.a libwhisper.so

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ll

total 19196

drwxrwxr-x 17 rootroot rootroot 4096 2月 2 17:46 ./

drwxr-xr-x 30 rootroot rootroot 4096 2月 2 16:49 ../

drwxrwxr-x 7 rootroot rootroot 4096 2月 2 16:49 bindings/

-rwx------ 1 rootroot rootroot 3465644 1月 12 01:28 chs.mp4*

-rw-rw-r-- 1 rootroot rootroot 13497126 2月 2 17:26 chs.wav

-rw-rw-r-- 1 rootroot rootroot 11821 2月 2 17:41 chs.wav使用CPU.srt

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 cmake/

-rw-rw-r-- 1 rootroot rootroot 19150 2月 2 16:49 CMakeLists.txt

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 coreml/

drwx------ 2 rootroot rootroot 4096 2月 2 17:45 CPU/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 .devops/

drwxrwxr-x 24 rootroot rootroot 4096 2月 2 16:49 examples/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 extra/

-rw-rw-r-- 1 rootroot rootroot 31647 2月 2 16:49 ggml-alloc.c

-rw-rw-r-- 1 rootroot rootroot 4055 2月 2 16:49 ggml-alloc.h

-rw-rw-r-- 1 rootroot rootroot 67212 2月 2 16:49 ggml-backend.c

-rw-rw-r-- 1 rootroot rootroot 11720 2月 2 16:49 ggml-backend.h

-rw-rw-r-- 1 rootroot rootroot 5874 2月 2 16:49 ggml-backend-impl.h

-rw-rw-r-- 1 rootroot rootroot 676115 2月 2 16:49 ggml.c

-rw-rw-r-- 1 rootroot rootroot 440093 2月 2 16:49 ggml-cuda.cu

-rw-rw-r-- 1 rootroot rootroot 2104 2月 2 16:49 ggml-cuda.h

-rw-rw-r-- 1 rootroot rootroot 85094 2月 2 16:49 ggml.h

-rw-rw-r-- 1 rootroot rootroot 7567 2月 2 16:49 ggml-impl.h

-rw-rw-r-- 1 rootroot rootroot 2358 2月 2 16:49 ggml-metal.h

-rw-rw-r-- 1 rootroot rootroot 150160 2月 2 16:49 ggml-metal.m

-rw-rw-r-- 1 rootroot rootroot 225659 2月 2 16:49 ggml-metal.metal

-rw-rw-r-- 1 rootroot rootroot 85693 2月 2 16:49 ggml-opencl.cpp

-rw-rw-r-- 1 rootroot rootroot 1386 2月 2 16:49 ggml-opencl.h

-rw-rw-r-- 1 rootroot rootroot 401791 2月 2 16:49 ggml-quants.c

-rw-rw-r-- 1 rootroot rootroot 13705 2月 2 16:49 ggml-quants.h

drwxrwxr-x 8 rootroot rootroot 4096 2月 2 16:49 .git/

drwxrwxr-x 3 rootroot rootroot 4096 2月 2 16:49 .github/

-rw-rw-r-- 1 rootroot rootroot 803 2月 2 16:49 .gitignore

-rw-rw-r-- 1 rootroot rootroot 96 2月 2 16:49 .gitmodules

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 grammars/

-rw-rw-r-- 1 rootroot rootroot 1072 2月 2 16:49 LICENSE

-rw-rw-r-- 1 rootroot rootroot 14883 2月 2 16:49 Makefile

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 17:24 models/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 openvino/

-rw-rw-r-- 1 rootroot rootroot 1776 2月 2 16:49 Package.swift

-rw-rw-r-- 1 rootroot rootroot 39115 2月 2 16:49 README.md

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 samples/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 spm-headers/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 tests/

-rw-rw-r-- 1 rootroot rootroot 232975 2月 2 16:49 whisper.cpp

-rw-rw-r-- 1 rootroot rootroot 30248 2月 2 16:49 whisper.h

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ll main

ls: cannot access 'main': No such file or directory

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ WHISPER_CLBLAST=1 make -j16

I whisper.cpp build info:

I UNAME_S: Linux

I UNAME_P: x86_64

I UNAME_M: x86_64

I CFLAGS: -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST

I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST

I LDFLAGS: -lclblast -lOpenCL

I CC: cc (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

I CXX: g++ (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c ggml-opencl.cpp -o ggml-opencl.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c ggml.c -o ggml.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c ggml-alloc.c -o ggml-alloc.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c ggml-backend.c -o ggml-backend.o

cc -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c ggml-quants.c -o ggml-quants.o

g++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CLBLAST -c whisper.cpp -o whisper.o

ggml-opencl.cpp:15:10: fatal error: clblast.h: No such file or directory

15 | #include <clblast.h>

| ^~~~~~~~~~~

compilation terminated.

make: *** [Makefile:255: ggml-opencl.o] Error 1

make: *** Waiting for unfinished jobs....
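The CLBlast build stops because `clblast.h` is not installed: `WHISPER_CLBLAST=1` needs the CLBlast development headers plus an OpenCL loader (note `-lclblast -lOpenCL` in LDFLAGS above). OpenBLAS, which the log goes on to install, is a different library (as far as I know it backs the separate `WHISPER_OPENBLAS=1` CPU option) and does not provide `clblast.h`. A pre-check sketch before running make; the helper name and the list of include directories are assumptions:

```shell
#!/usr/bin/env bash
# have_header: hypothetical pre-check; succeeds if a header exists in one of
# the usual include directories (plus the CUDA one used by this build).
have_header() {
    local dir
    for dir in /usr/include /usr/local/include /usr/local/cuda/include; do
        if [ -f "$dir/$1" ]; then
            return 0
        fi
    done
    return 1
}
```

Usage: `have_header clblast.h || echo "CLBlast headers missing"`. On Debian/Ubuntu the headers are packaged as `libclblast-dev` on newer releases; whether 20.04 carries it can be checked with `apt-cache policy libclblast-dev`, otherwise CLBlast has to be built from source.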

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ sudo apt-get install openblas

[sudo] password for rootroot:

Reading package lists... Done

Building dependency tree

Reading state information... Done

E: Unable to locate package openblas

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ sudo apt install openblas

Reading package lists... Done

Building dependency tree

Reading state information... Done

E: Unable to locate package openblas
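`apt-get install openblas` and `apt install openblas` both fail because Ubuntu has no package with that exact name. OpenBLAS is packaged under names such as `libopenblas-dev`, which the log shows installing successfully. The lines below are a small sketch for listing the real package names.

```shell
#!/usr/bin/env bash
# There is no Ubuntu package literally named "openblas"; list the real names.
openblas_pkgs=""
if command -v apt-cache >/dev/null 2>&1; then
    openblas_pkgs=$(apt-cache search --names-only openblas)
fi
echo "${openblas_pkgs:-apt-cache not available}"
```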

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ sudo apt-get install libopenblas-dev

Reading package lists... Done

Building dependency tree

Reading state information... Done

The following additional packages will be installed:

libopenblas-pthread-dev libopenblas0 libopenblas0-pthread

The following NEW packages will be installed:

libopenblas-dev libopenblas-pthread-dev libopenblas0 libopenblas0-pthread

0 upgraded, 4 newly installed, 0 to remove and 11 not upgraded.

Need to get 13.7 MB of archives.

After this operation, 153 MB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal-updates/universe amd64 libopenblas0-pthread amd64 0.3.8+ds-1ubuntu0.20.04.1 [9,127 kB]

Get:2 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal-updates/universe amd64 libopenblas0 amd64 0.3.8+ds-1ubuntu0.20.04.1 [5,892 B]

Get:3 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal-updates/universe amd64 libopenblas-pthread-dev amd64 0.3.8+ds-1ubuntu0.20.04.1 [4,526 kB]

Get:4 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal-updates/universe amd64 libopenblas-dev amd64 0.3.8+ds-1ubuntu0.20.04.1 [16.4 kB]

Fetched 13.7 MB in 2s (8,470 kB/s)

Selecting previously unselected package libopenblas0-pthread:amd64.

(Reading database ... 207405 files and directories currently installed.)

Preparing to unpack .../libopenblas0-pthread_0.3.8+ds-1ubuntu0.20.04.1_amd64.deb ...

Unpacking libopenblas0-pthread:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Selecting previously unselected package libopenblas0:amd64.

Preparing to unpack .../libopenblas0_0.3.8+ds-1ubuntu0.20.04.1_amd64.deb ...

Unpacking libopenblas0:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Selecting previously unselected package libopenblas-pthread-dev:amd64.

Preparing to unpack .../libopenblas-pthread-dev_0.3.8+ds-1ubuntu0.20.04.1_amd64.deb ...

Unpacking libopenblas-pthread-dev:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Selecting previously unselected package libopenblas-dev:amd64.

Preparing to unpack .../libopenblas-dev_0.3.8+ds-1ubuntu0.20.04.1_amd64.deb ...

Unpacking libopenblas-dev:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Setting up libopenblas0-pthread:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/libblas.so.3 to provide /usr/lib/x86_64-linux-gnu/libblas.so.3 (libblas.so.3-x86_64-linux-gnu) in auto mode

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/liblapack.so.3 to provide /usr/lib/x86_64-linux-gnu/liblapack.so.3 (liblapack.so.3-x86_64-linux-gnu) in auto mode

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/libopenblas.so.0 to provide /usr/lib/x86_64-linux-gnu/libopenblas.so.0 (libopenblas.so.0-x86_64-linux-gnu) in auto mode

Setting up libopenblas0:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Setting up libopenblas-pthread-dev:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/libblas.so to provide /usr/lib/x86_64-linux-gnu/libblas.so (libblas.so-x86_64-linux-gnu) in auto mode

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/liblapack.so to provide /usr/lib/x86_64-linux-gnu/liblapack.so (liblapack.so-x86_64-linux-gnu) in auto mode

update-alternatives: using /usr/lib/x86_64-linux-gnu/openblas-pthread/libopenblas.so to provide /usr/lib/x86_64-linux-gnu/libopenblas.so (libopenblas.so-x86_64-linux-gnu) in auto mode

Setting up libopenblas-dev:amd64 (0.3.8+ds-1ubuntu0.20.04.1) ...

Processing triggers for libc-bin (2.31-0ubuntu9.14) ...
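`libopenblas-dev` is now installed. It can only speed up whisper.cpp's CPU path, though, and it does not supply the `clblast.h` that the `WHISPER_CLBLAST=1` build needed. The sketch below shows where the CBLAS header was installed. It assumes the header ships in `libopenblas-pthread-dev`, one of the four packages just installed. The `WHISPER_OPENBLAS=1` build line in the comment is also an assumption about the Makefile of this period.

```shell
#!/usr/bin/env bash
# Show where the OpenBLAS CBLAS header was installed (if dpkg is available).
cblas_headers=""
if command -v dpkg >/dev/null 2>&1; then
    cblas_headers=$(dpkg -L libopenblas-pthread-dev 2>/dev/null | grep '/cblas\.h$')
fi
echo "cblas.h: ${cblas_headers:-not found}"

# Assumed CPU-only OpenBLAS build, run from ~/whisper.cpp:
#   make clean && WHISPER_OPENBLAS=1 make -j16
```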

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ WHISPER_CUBLAS=1 make -j16

expr: syntax error: unexpected argument ‘11.6’

I whisper.cpp build info:

I UNAME_S: Linux

I UNAME_P: x86_64

I UNAME_M: x86_64

I CFLAGS: -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I LDFLAGS: -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

I CC: cc (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

I CXX: g++ (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0

nvcc --forward-unknown-to-host-compiler -arch=all -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include -Wno-pedantic -c ggml-cuda.cu -o ggml-cuda.o

make: nvcc: Command not found

make: *** [Makefile:225: ggml-cuda.o] Error 127
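Both errors in this run come from the same cause: `nvcc` is not on `PATH`. The `make: nvcc: Command not found` line says so directly. The earlier `expr: syntax error: unexpected argument '11.6'` is probably the Makefile's nvcc version comparison receiving an empty version string. That explanation is an assumption based on the whisper.cpp Makefile of early 2024. The sketch mirrors that probe so it can be checked by hand.

```shell
#!/usr/bin/env bash
# Mirror the Makefile's nvcc version probe (assumption: it extracts "Vx.y.z"
# from `nvcc --version` and compares the result against 11.6 with expr).
nvcc_version=$(nvcc --version 2>/dev/null | grep -oE 'V[0-9]+\.[0-9]+\.[0-9]+' | cut -c2-)

if [ -z "$nvcc_version" ]; then
    # An empty version turns `expr $(NVCC_VERSION) \>= 11.6` into
    # `expr \>= 11.6`, which matches the syntax error printed above.
    echo "nvcc is not on PATH (the toolkit may still exist, e.g. under /usr/local/cuda/bin)"
else
    echo "nvcc release: $nvcc_version"
fi
```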

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ nvcc -v

Command 'nvcc' not found, but can be installed with:

sudo apt install nvidia-cuda-toolkit

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ sudo apt install nvidia-cuda-toolkit

Reading package lists... Done

Building dependency tree

Reading state information... Done

The following additional packages will be installed:

g++-8 javascript-common libaccinj64-10.1 libcublas10 libcublaslt10 libcudart10.1 libcufft10 libcufftw10 libcuinj64-10.1 libcupti-dev libcupti-doc libcupti10.1 libcurand10 libcusolver10 libcusolvermg10 libcusparse10 libjs-jquery libnppc10 libnppial10 libnppicc10

libnppicom10 libnppidei10 libnppif10 libnppig10 libnppim10 libnppist10 libnppisu10 libnppitc10 libnpps10 libnvblas10 libnvgraph10 libnvidia-compute-545 libnvidia-ml-dev libnvjpeg10 libnvrtc10.1 libnvtoolsext1 libnvvm3 libstdc++-8-dev libthrust-dev libvdpau-dev

node-html5shiv nvidia-cuda-dev nvidia-cuda-doc nvidia-cuda-gdb nvidia-opencl-dev nvidia-profiler nvidia-visual-profiler ocl-icd-opencl-dev opencl-c-headers

Suggested packages:

g++-8-multilib gcc-8-doc apache2 | lighttpd | httpd libstdc++-8-doc libvdpau-doc nodejs nvidia-driver | nvidia-tesla-440-driver | nvidia-tesla-418-driver libpoclu-dev

Recommended packages:

libnvcuvid1 nsight-compute nsight-systems

The following NEW packages will be installed:

g++-8 javascript-common libaccinj64-10.1 libcublas10 libcublaslt10 libcudart10.1 libcufft10 libcufftw10 libcuinj64-10.1 libcupti-dev libcupti-doc libcupti10.1 libcurand10 libcusolver10 libcusolvermg10 libcusparse10 libjs-jquery libnppc10 libnppial10 libnppicc10

libnppicom10 libnppidei10 libnppif10 libnppig10 libnppim10 libnppist10 libnppisu10 libnppitc10 libnpps10 libnvblas10 libnvgraph10 libnvidia-compute-545 libnvidia-ml-dev libnvjpeg10 libnvrtc10.1 libnvtoolsext1 libnvvm3 libstdc++-8-dev libthrust-dev libvdpau-dev

node-html5shiv nvidia-cuda-dev nvidia-cuda-doc nvidia-cuda-gdb nvidia-cuda-toolkit nvidia-opencl-dev nvidia-profiler nvidia-visual-profiler ocl-icd-opencl-dev opencl-c-headers

0 upgraded, 50 newly installed, 0 to remove and 11 not upgraded.

Need to get 1,111 MB/1,160 MB of archives.

After this operation, 3,056 MB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 file:/var/cuda-repo-ubuntu2004-12-3-local libnvidia-compute-545 545.23.08-0ubuntu1 [48.8 MB]

Err:1 file:/var/cuda-repo-ubuntu2004-12-3-local libnvidia-compute-545 545.23.08-0ubuntu1

File not found - /var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb (2: No such file or directory)

Get:2 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/universe amd64 libstdc++-8-dev amd64 8.4.0-3ubuntu2 [1,537 kB]

Get:3 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/universe amd64 g++-8 amd64 8.4.0-3ubuntu2 [10.1 MB]

Get:4 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/main amd64 javascript-common all 11 [6,066 B]

Get:5 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libaccinj64-10.1 amd64 10.1.243-3 [1,893 kB]

Get:6 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcublaslt10 amd64 10.1.243-3 [9,249 kB]

Get:7 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcublas10 amd64 10.1.243-3 [29.7 MB]

Get:8 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcudart10.1 amd64 10.1.243-3 [125 kB]

Get:9 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcufft10 amd64 10.1.243-3 [85.3 MB]

Get:10 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcufftw10 amd64 10.1.243-3 [124 kB]

Get:11 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcuinj64-10.1 amd64 10.1.243-3 [2,030 kB]

Get:12 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcupti10.1 amd64 10.1.243-3 [4,311 kB]

Get:13 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcurand10 amd64 10.1.243-3 [39.0 MB]

Get:14 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcusolver10 amd64 10.1.243-3 [44.5 MB]

Get:15 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcusolvermg10 amd64 10.1.243-3 [28.1 MB]

Get:16 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcusparse10 amd64 10.1.243-3 [56.8 MB]

Get:17 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/main amd64 libjs-jquery all 3.3.1~dfsg-3 [329 kB]

Get:18 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppc10 amd64 10.1.243-3 [123 kB]

Get:19 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppial10 amd64 10.1.243-3 [3,667 kB]

Get:20 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppicc10 amd64 10.1.243-3 [1,621 kB]

Get:21 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppicom10 amd64 10.1.243-3 [539 kB]

Get:22 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppidei10 amd64 10.1.243-3 [2,001 kB]

Get:23 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppif10 amd64 10.1.243-3 [22.0 MB]

Get:24 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppig10 amd64 10.1.243-3 [12.0 MB]

Get:25 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppim10 amd64 10.1.243-3 [2,694 kB]

Get:26 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppist10 amd64 10.1.243-3 [7,313 kB]

Get:27 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppisu10 amd64 10.1.243-3 [116 kB]

Get:28 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnppitc10 amd64 10.1.243-3 [802 kB]

Get:29 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnpps10 amd64 10.1.243-3 [2,970 kB]

Get:30 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvblas10 amd64 10.1.243-3 [129 kB]

Get:31 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvgraph10 amd64 10.1.243-3 [44.5 MB]

Get:32 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvidia-ml-dev amd64 10.1.243-3 [58.1 kB]

Get:33 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvjpeg10 amd64 10.1.243-3 [1,227 kB]

Get:34 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvrtc10.1 amd64 10.1.243-3 [6,307 kB]

Get:35 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/main amd64 libvdpau-dev amd64 1.3-1ubuntu2 [37.3 kB]

Get:36 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/universe amd64 node-html5shiv all 3.7.3+dfsg-3 [12.9 kB]

Get:37 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcupti-dev amd64 10.1.243-3 [4,779 kB]

Get:38 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libcupti-doc all 10.1.243-3 [2,117 kB]

Get:39 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvtoolsext1 amd64 10.1.243-3 [25.1 kB]

Get:40 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libnvvm3 amd64 10.1.243-3 [4,436 kB]

Get:41 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 libthrust-dev all 1.9.5-1 [526 kB]

Get:42 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-cuda-dev amd64 10.1.243-3 [420 MB]

Get:43 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-cuda-doc all 10.1.243-3 [102 MB]

Get:44 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-cuda-gdb amd64 10.1.243-3 [2,722 kB]

Get:45 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-profiler amd64 10.1.243-3 [2,673 kB]

Get:46 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/main amd64 opencl-c-headers all 2.2~2019.08.06-g0d5f18c-1 [29.9 kB]

Get:47 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/main amd64 ocl-icd-opencl-dev amd64 2.2.11-1ubuntu1 [2,512 B]

Get:48 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-opencl-dev amd64 10.1.243-3 [16.5 kB]

Get:49 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-cuda-toolkit amd64 10.1.243-3 [35.0 MB]

Get:50 http://mirrors.tuna.tsinghua.edu.cn/ubuntu focal/multiverse amd64 nvidia-visual-profiler amd64 10.1.243-3 [115 MB]

Fetched 1,111 MB in 29s (38.0 MB/s)

E: Failed to fetch file:/var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb File not found - /var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb (2: No such file or directory)

E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing?

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ sudo apt install nvidia-cuda-toolkit

Reading package lists... Done

Building dependency tree

Reading state information... Done

The following additional packages will be installed:

g++-8 javascript-common libaccinj64-10.1 libcublas10 libcublaslt10 libcudart10.1 libcufft10 libcufftw10 libcuinj64-10.1 libcupti-dev libcupti-doc libcupti10.1 libcurand10 libcusolver10 libcusolvermg10 libcusparse10 libjs-jquery libnppc10 libnppial10 libnppicc10

libnppicom10 libnppidei10 libnppif10 libnppig10 libnppim10 libnppist10 libnppisu10 libnppitc10 libnpps10 libnvblas10 libnvgraph10 libnvidia-compute-545 libnvidia-ml-dev libnvjpeg10 libnvrtc10.1 libnvtoolsext1 libnvvm3 libstdc++-8-dev libthrust-dev libvdpau-dev

node-html5shiv nvidia-cuda-dev nvidia-cuda-doc nvidia-cuda-gdb nvidia-opencl-dev nvidia-profiler nvidia-visual-profiler ocl-icd-opencl-dev opencl-c-headers

Suggested packages:

g++-8-multilib gcc-8-doc apache2 | lighttpd | httpd libstdc++-8-doc libvdpau-doc nodejs nvidia-driver | nvidia-tesla-440-driver | nvidia-tesla-418-driver libpoclu-dev

Recommended packages:

libnvcuvid1 nsight-compute nsight-systems

The following NEW packages will be installed:

g++-8 javascript-common libaccinj64-10.1 libcublas10 libcublaslt10 libcudart10.1 libcufft10 libcufftw10 libcuinj64-10.1 libcupti-dev libcupti-doc libcupti10.1 libcurand10 libcusolver10 libcusolvermg10 libcusparse10 libjs-jquery libnppc10 libnppial10 libnppicc10

libnppicom10 libnppidei10 libnppif10 libnppig10 libnppim10 libnppist10 libnppisu10 libnppitc10 libnpps10 libnvblas10 libnvgraph10 libnvidia-compute-545 libnvidia-ml-dev libnvjpeg10 libnvrtc10.1 libnvtoolsext1 libnvvm3 libstdc++-8-dev libthrust-dev libvdpau-dev

node-html5shiv nvidia-cuda-dev nvidia-cuda-doc nvidia-cuda-gdb nvidia-cuda-toolkit nvidia-opencl-dev nvidia-profiler nvidia-visual-profiler ocl-icd-opencl-dev opencl-c-headers

0 upgraded, 50 newly installed, 0 to remove and 11 not upgraded.

Need to get 0 B/1,160 MB of archives.

After this operation, 3,056 MB of additional disk space will be used.

Do you want to continue? [Y/n] y

Get:1 file:/var/cuda-repo-ubuntu2004-12-3-local libnvidia-compute-545 545.23.08-0ubuntu1 [48.8 MB]

Err:1 file:/var/cuda-repo-ubuntu2004-12-3-local libnvidia-compute-545 545.23.08-0ubuntu1

File not found - /var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb (2: No such file or directory)

E: Failed to fetch file:/var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb File not found - /var/cuda-repo-ubuntu2004-12-3-local/./libnvidia-compute-545_545.23.08-0ubuntu1_amd64.deb (2: No such file or directory)

E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing?
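Installing Ubuntu's `nvidia-cuda-toolkit` here is a detour. The package list shows it would pull CUDA 10.1.243 from the focal repositories, while CUDA 12.3 is already installed under `/usr/local/cuda`, as the directory listings below show. The `File not found` error has a separate cause: an apt source still points at the CUDA 12.3 local repository in `/var/cuda-repo-ubuntu2004-12-3-local`, and its `.deb` files are gone. The sketch below locates that entry. It assumes the entry lives somewhere under `/etc/apt/sources.list*`.

```shell
#!/usr/bin/env bash
# Find apt source files that still reference the removed CUDA local repo
# (path taken from the apt error above).
stale_repo="/var/cuda-repo-ubuntu2004-12-3-local"
stale_lists=$(grep -rl "$stale_repo" /etc/apt/sources.list /etc/apt/sources.list.d/ 2>/dev/null)

if [ -n "$stale_lists" ]; then
    echo "apt sources referencing $stale_repo:"
    echo "$stale_lists"
    echo "disable them (e.g. sudo mv FILE FILE.disabled), then run: sudo apt-get update"
else
    echo "no apt source references $stale_repo"
fi
```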

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ nvcc -v

Command 'nvcc' not found, but can be installed with:

sudo apt install nvidia-cuda-toolkit

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ cd /usr/local/

rootroot@rootroot-X99-Turbo:/usr/local$ ll

total 44

drwxr-xr-x 11 root root 4096 1月 15 17:10 ./

drwxr-xr-x 14 root root 4096 3月 16 2023 ../

drwxr-xr-x 2 root root 4096 1月 15 17:10 bin/

lrwxrwxrwx 1 root root 22 1月 15 17:10 cuda -> /etc/alternatives/cuda/

lrwxrwxrwx 1 root root 25 1月 15 17:10 cuda-12 -> /etc/alternatives/cuda-12/

drwxr-xr-x 15 root root 4096 1月 15 17:10 cuda-12.3/

drwxr-xr-x 2 root root 4096 3月 16 2023 etc/

drwxr-xr-x 2 root root 4096 3月 16 2023 games/

drwxr-xr-x 2 root root 4096 3月 16 2023 include/

drwxr-xr-x 4 root root 4096 12月 16 19:57 lib/

lrwxrwxrwx 1 root root 9 12月 16 18:23 man -> share/man/

drwxr-xr-x 2 root root 4096 3月 16 2023 sbin/

drwxr-xr-x 7 root root 4096 3月 16 2023 share/

drwxr-xr-x 2 root root 4096 3月 16 2023 src/
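`/usr/local/cuda` and `/usr/local/cuda-12` are symlinks into `/etc/alternatives`, and `cuda-12.3/` sits beside them. The sketch below resolves the `cuda` alternative to the real toolkit directory, so you can confirm which version it points at before changing `PATH`.

```shell
#!/usr/bin/env bash
# Resolve the alternatives chain behind /usr/local/cuda to the real directory.
cuda_root=$(readlink -f /usr/local/cuda 2>/dev/null)
if [ -n "$cuda_root" ] && [ -d "$cuda_root" ]; then
    echo "/usr/local/cuda -> $cuda_root"
else
    echo "/usr/local/cuda does not resolve to a directory"
    cuda_root=""
fi

# List every toolkit registered under the "cuda" alternative, if any.
if command -v update-alternatives >/dev/null 2>&1; then
    update-alternatives --display cuda 2>/dev/null || true
fi
```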

rootroot@rootroot-X99-Turbo:/usr/local$ cd cuda

rootroot@rootroot-X99-Turbo:/usr/local/cuda$ ll

total 136

drwxr-xr-x 15 root root 4096 1月 15 17:10 ./

drwxr-xr-x 11 root root 4096 1月 15 17:10 ../

drwxr-xr-x 3 root root 4096 1月 15 17:09 bin/

drwxr-xr-x 5 root root 4096 1月 15 17:07 compute-sanitizer/

drwxr-xr-x 3 root root 4096 1月 15 17:09 doc/

-rw-r--r-- 1 root root 160 10月 31 17:24 DOCS

-rw-r--r-- 1 root root 61498 10月 31 17:24 EULA.txt

drwxr-xr-x 4 root root 4096 1月 16 10:39 extras/

drwxr-xr-x 4 root root 4096 1月 15 17:09 gds/

lrwxrwxrwx 1 root root 28 10月 31 17:20 include -> targets/x86_64-linux/include/

lrwxrwxrwx 1 root root 24 10月 31 17:20 lib64 -> targets/x86_64-linux/lib/

drwxr-xr-x 7 root root 4096 1月 15 17:09 libnvvp/

drwxr-xr-x 2 root root 4096 1月 15 17:09 nsightee_plugins/

drwxr-xr-x 3 root root 4096 1月 15 17:09 nvml/

drwxr-xr-x 6 root root 4096 1月 15 17:07 nvvm/

-rw-r--r-- 1 root root 524 10月 31 17:24 README

drwxr-xr-x 3 root root 4096 1月 15 17:07 share/

drwxr-xr-x 2 root root 4096 1月 15 17:09 src/

drwxr-xr-x 3 root root 4096 1月 15 17:07 targets/

drwxr-xr-x 2 root root 4096 1月 15 17:07 tools/

-rw-r--r-- 1 root root 3037 11月 30 02:48 version.json

rootroot@rootroot-X99-Turbo:/usr/local/cuda$

rootroot@rootroot-X99-Turbo:/usr/local/cuda$ cd bin/

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ ll

total 159484

drwxr-xr-x 3 root root 4096 1月 15 17:09 ./

drwxr-xr-x 15 root root 4096 1月 15 17:10 ../

-rwxr-xr-x 1 root root 88848 11月 23 03:32 bin2c*

lrwxrwxrwx 1 root root 4 10月 31 21:25 computeprof -> nvvp*

-rwxr-xr-x 1 root root 112 10月 31 17:41 compute-sanitizer*

drwxr-xr-x 2 root root 4096 1月 15 17:07 crt/

-rwxr-xr-x 1 root root 7336920 11月 23 03:32 cudafe++*

-rwxr-xr-x 1 root root 15812648 10月 31 18:46 cuda-gdb*

-rwxr-xr-x 1 root root 812256 10月 31 18:46 cuda-gdbserver*

-rwxr-xr-x 1 root root 75928 10月 31 17:49 cu++filt*

-rwxr-xr-x 1 root root 536064 10月 31 17:46 cuobjdump*

-rwxr-xr-x 1 root root 802968 11月 23 03:32 fatbinary*

-rwxr-xr-x 1 root root 3826 11月 30 02:48 ncu*

-rwxr-xr-x 1 root root 3616 11月 30 02:48 ncu-ui*

-rwxr-xr-x 1 root root 1580 10月 31 17:36 nsight_ee_plugins_manage.sh*

-rwxr-xr-x 1 root root 197 11月 30 02:48 nsight-sys*

-rwxr-xr-x 1 root root 743 11月 30 02:48 nsys*

-rwxr-xr-x 1 root root 833 11月 30 02:48 nsys-ui*

-rwxr-xr-x 1 root root 21784968 11月 23 03:32 nvcc*

-rwxr-xr-x 1 root root 10456 11月 23 03:32 __nvcc_device_query*

-rw-r--r-- 1 root root 417 11月 23 03:32 nvcc.profile

-rwxr-xr-x 1 root root 50674712 10月 31 17:45 nvdisasm*

-rwxr-xr-x 1 root root 29746536 11月 23 03:32 nvlink*

-rwxr-xr-x 1 root root 6022464 10月 31 21:16 nvprof*

-rwxr-xr-x 1 root root 109536 10月 31 17:44 nvprune*

-rwxr-xr-x 1 root root 285 10月 31 21:25 nvvp*

-rwxr-xr-x 1 root root 29421152 11月 23 03:32 ptxas*

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ nvcc -v

Command 'nvcc' not found, but can be installed with:

sudo apt install nvidia-cuda-toolkit

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ ./nvcc -v

nvcc fatal : No input files specified; use option --help for more information

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ ll nvcc

-rwxr-xr-x 1 root root 21784968 11月 23 03:32 nvcc*

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ ./nvcc

bin2c cuda-gdb ncu nsys-ui nvlink

computeprof cuda-gdbserver ncu-ui nvcc nvprof

compute-sanitizer cu++filt nsight_ee_plugins_manage.sh __nvcc_device_query nvprune

crt/ cuobjdump nsight-sys nvcc.profile nvvp

cudafe++ fatbinary nsys nvdisasm ptxas

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ ./nvcc --version

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2023 NVIDIA Corporation

Built on Wed_Nov_22_10:17:15_PST_2023

Cuda compilation tools, release 12.3, V12.3.107

Build cuda_12.3.r12.3/compiler.33567101_0
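`./nvcc --version` reports CUDA 12.3, so the toolkit is installed and only missing from `PATH`. The sketch below follows NVIDIA's Linux post-installation steps and prepends the toolkit's `bin` and `lib64` directories for the current shell.

```shell
#!/usr/bin/env bash
# Put the CUDA toolkit under /usr/local/cuda on PATH for this shell.
cuda_home=/usr/local/cuda
export PATH="$cuda_home/bin${PATH:+:${PATH}}"
export LD_LIBRARY_PATH="$cuda_home/lib64${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}"

# nvcc should now resolve without the ./ prefix (if the toolkit is present).
if command -v nvcc >/dev/null 2>&1; then
    nvcc --version | tail -n 2
else
    echo "nvcc still not found; check that $cuda_home/bin/nvcc exists"
fi
```

To make the change permanent, append the two `export` lines to `~/.bashrc`. Then rerun `WHISPER_CUBLAS=1 make -j16` from `~/whisper.cpp`. The Makefile already passes `-I/usr/local/cuda/include` and `-L/usr/local/cuda/lib64`, as the build info above shows.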

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$

rootroot@rootroot-X99-Turbo:/usr/local/cuda/bin$ cd ..

rootroot@rootroot-X99-Turbo:/usr/local/cuda$ cd lib64/

rootroot@rootroot-X99-Turbo:/usr/local/cuda/lib64$ ll

total 4137208

drwxr-xr-x 4 root root 4096 1月 15 17:09 ./

drwxr-xr-x 4 root root 4096 1月 15 17:07 ../

drwxr-xr-x 6 root root 4096 1月 15 17:07 cmake/

lrwxrwxrwx 1 root root 19 10月 31 21:16 libaccinj64.so -> libaccinj64.so.12.3

lrwxrwxrwx 1 root root 23 10月 31 21:16 libaccinj64.so.12.3 -> libaccinj64.so.12.3.101

-rw-r--r-- 1 root root 2412184 10月 31 21:16 libaccinj64.so.12.3.101

-rw-r--r-- 1 root root 1493144 10月 31 20:51 libcheckpoint.so

lrwxrwxrwx 1 root root 17 10月 31 17:51 libcublasLt.so -> libcublasLt.so.12

lrwxrwxrwx 1 root root 23 10月 31 17:51 libcublasLt.so.12 -> libcublasLt.so.12.3.4.1

-rw-r--r-- 1 root root 518358624 10月 31 17:51 libcublasLt.so.12.3.4.1

-rw-r--r-- 1 root root 781766258 10月 31 17:51 libcublasLt_static.a

lrwxrwxrwx 1 root root 15 10月 31 17:51 libcublas.so -> libcublas.so.12

lrwxrwxrwx 1 root root 21 10月 31 17:51 libcublas.so.12 -> libcublas.so.12.3.4.1

-rw-r--r-- 1 root root 106679344 10月 31 17:51 libcublas.so.12.3.4.1

-rw-r--r-- 1 root root 168603496 10月 31 17:51 libcublas_static.a

-rw-r--r-- 1 root root 1647010 10月 31 17:48 libcudadevrt.a

lrwxrwxrwx 1 root root 15 10月 31 17:48 libcudart.so -> libcudart.so.12

lrwxrwxrwx 1 root root 21 10月 31 17:48 libcudart.so.12 -> libcudart.so.12.3.101

-rw-r--r-- 1 root root 703808 10月 31 17:48 libcudart.so.12.3.101

-rw-r--r-- 1 root root 1417724 10月 31 17:48 libcudart_static.a

lrwxrwxrwx 1 root root 14 10月 31 17:57 libcufft.so -> libcufft.so.11

lrwxrwxrwx 1 root root 21 10月 31 17:57 libcufft.so.11 -> libcufft.so.11.0.12.1

-rw-r--r-- 1 root root 177827520 10月 31 17:57 libcufft.so.11.0.12.1

-rw-r--r-- 1 root root 199432168 10月 31 17:57 libcufft_static.a

-rw-r--r-- 1 root root 199334148 10月 31 17:57 libcufft_static_nocallback.a

lrwxrwxrwx 1 root root 15 10月 31 17:57 libcufftw.so -> libcufftw.so.11

lrwxrwxrwx 1 root root 22 10月 31 17:57 libcufftw.so.11 -> libcufftw.so.11.0.12.1

-rw-r--r-- 1 root root 966600 10月 31 17:57 libcufftw.so.11.0.12.1

-rw-r--r-- 1 root root 79566 10月 31 17:57 libcufftw_static.a

lrwxrwxrwx 1 root root 19 10月 26 07:36 libcufile_rdma.so -> libcufile_rdma.so.1

lrwxrwxrwx 1 root root 23 10月 26 07:36 libcufile_rdma.so.1 -> libcufile_rdma.so.1.8.1

-rw-r--r-- 1 root root 43320 10月 26 07:36 libcufile_rdma.so.1.8.1

-rw-r--r-- 1 root root 65206 10月 26 07:36 libcufile_rdma_static.a

lrwxrwxrwx 1 root root 14 10月 26 07:36 libcufile.so -> libcufile.so.0

lrwxrwxrwx 1 root root 18 10月 26 07:36 libcufile.so.0 -> libcufile.so.1.8.1

-rw-r--r-- 1 root root 2993680 10月 26 07:36 libcufile.so.1.8.1

-rw-r--r-- 1 root root 24282190 10月 26 07:36 libcufile_static.a

-rw-r--r-- 1 root root 948952 10月 31 17:49 libcufilt.a

lrwxrwxrwx 1 root root 18 10月 31 21:16 libcuinj64.so -> libcuinj64.so.12.3

lrwxrwxrwx 1 root root 22 10月 31 21:16 libcuinj64.so.12.3 -> libcuinj64.so.12.3.101

-rw-r--r-- 1 root root 2832640 10月 31 21:16 libcuinj64.so.12.3.101

-rw-r--r-- 1 root root 30922 10月 31 17:48 libculibos.a

lrwxrwxrwx 1 root root 14 10月 31 20:51 libcupti.so -> libcupti.so.12

lrwxrwxrwx 1 root root 20 10月 31 20:51 libcupti.so.12 -> libcupti.so.2023.3.1

-rw-r--r-- 1 root root 7683440 10月 31 20:51 libcupti.so.2023.3.1

-rw-r--r-- 1 root root 19214978 10月 31 20:51 libcupti_static.a

lrwxrwxrwx 1 root root 15 11月 23 03:55 libcurand.so -> libcurand.so.10

lrwxrwxrwx 1 root root 23 11月 23 03:55 libcurand.so.10 -> libcurand.so.10.3.4.107

-rw-r--r-- 1 root root 96259504 11月 23 03:55 libcurand.so.10.3.4.107

-rw-r--r-- 1 root root 96328614 11月 23 03:55 libcurand_static.a

-rw-r--r-- 1 root root 16788330 10月 31 18:36 libcusolver_lapack_static.a

-rw-r--r-- 1 root root 1005514 10月 31 18:36 libcusolver_metis_static.a

lrwxrwxrwx 1 root root 19 10月 31 18:36 libcusolverMg.so -> libcusolverMg.so.11

lrwxrwxrwx 1 root root 27 10月 31 18:36 libcusolverMg.so.11 -> libcusolverMg.so.11.5.4.101

-rw-r--r-- 1 root root 83040368 10月 31 18:36 libcusolverMg.so.11.5.4.101

lrwxrwxrwx 1 root root 17 10月 31 18:36 libcusolver.so -> libcusolver.so.11

lrwxrwxrwx 1 root root 25 10月 31 18:36 libcusolver.so.11 -> libcusolver.so.11.5.4.101

-rw-r--r-- 1 root root 115640600 10月 31 18:36 libcusolver.so.11.5.4.101

-rw-r--r-- 1 root root 133576956 10月 31 18:36 libcusolver_static.a

lrwxrwxrwx 1 root root 17 10月 31 18:09 libcusparse.so -> libcusparse.so.12

lrwxrwxrwx 1 root root 25 10月 31 18:09 libcusparse.so.12 -> libcusparse.so.12.2.0.103

-rw-r--r-- 1 root root 267184960 10月 31 18:09 libcusparse.so.12.2.0.103

-rw-r--r-- 1 root root 299914796 10月 31 18:09 libcusparse_static.a

-rw-r--r-- 1 root root 1005514 10月 31 18:36 libmetis_static.a

lrwxrwxrwx 1 root root 13 10月 31 18:19 libnppc.so -> libnppc.so.12

lrwxrwxrwx 1 root root 19 10月 31 18:19 libnppc.so.12 -> libnppc.so.12.2.3.2

-rw-r--r-- 1 root root 1642992 10月 31 18:19 libnppc.so.12.2.3.2

-rw-r--r-- 1 root root 30686 10月 31 18:19 libnppc_static.a

lrwxrwxrwx 1 root root 15 10月 31 18:19 libnppial.so -> libnppial.so.12

lrwxrwxrwx 1 root root 21 10月 31 18:19 libnppial.so.12 -> libnppial.so.12.2.3.2

-rw-r--r-- 1 root root 17568560 10月 31 18:19 libnppial.so.12.2.3.2

-rw-r--r-- 1 root root 19071940 10月 31 18:19 libnppial_static.a

lrwxrwxrwx 1 root root 15 10月 31 18:19 libnppicc.so -> libnppicc.so.12

lrwxrwxrwx 1 root root 21 10月 31 18:19 libnppicc.so.12 -> libnppicc.so.12.2.3.2

-rw-r--r-- 1 root root 7500616 10月 31 18:19 libnppicc.so.12.2.3.2

-rw-r--r-- 1 root root 7041694 10月 31 18:19 libnppicc_static.a

lrwxrwxrwx 1 root root 16 10月 31 18:19 libnppidei.so -> libnppidei.so.12

lrwxrwxrwx 1 root root 22 10月 31 18:19 libnppidei.so.12 -> libnppidei.so.12.2.3.2

-rw-r--r-- 1 root root 11134104 10月 31 18:19 libnppidei.so.12.2.3.2

-rw-r--r-- 1 root root 11875304 10月 31 18:19 libnppidei_static.a

lrwxrwxrwx 1 root root 14 10月 31 18:19 libnppif.so -> libnppif.so.12

lrwxrwxrwx 1 root root 20 10月 31 18:19 libnppif.so.12 -> libnppif.so.12.2.3.2

-rw-r--r-- 1 root root 101066824 10月 31 18:19 libnppif.so.12.2.3.2

-rw-r--r-- 1 root root 103942380 10月 31 18:19 libnppif_static.a

lrwxrwxrwx 1 root root 14 10月 31 18:19 libnppig.so -> libnppig.so.12

lrwxrwxrwx 1 root root 20 10月 31 18:19 libnppig.so.12 -> libnppig.so.12.2.3.2

-rw-r--r-- 1 root root 41137040 10月 31 18:19 libnppig.so.12.2.3.2

-rw-r--r-- 1 root root 41987560 10月 31 18:19 libnppig_static.a

lrwxrwxrwx 1 root root 14 10月 31 18:19 libnppim.so -> libnppim.so.12

lrwxrwxrwx 1 root root 20 10月 31 18:19 libnppim.so.12 -> libnppim.so.12.2.3.2

-rw-r--r-- 1 root root 10322760 10月 31 18:19 libnppim.so.12.2.3.2

-rw-r--r-- 1 root root 9259562 10月 31 18:19 libnppim_static.a

lrwxrwxrwx 1 root root 15 10月 31 18:19 libnppist.so -> libnppist.so.12

lrwxrwxrwx 1 root root 21 10月 31 18:19 libnppist.so.12 -> libnppist.so.12.2.3.2

-rw-r--r-- 1 root root 38171728 10月 31 18:19 libnppist.so.12.2.3.2

-rw-r--r-- 1 root root 39228112 10月 31 18:19 libnppist_static.a

lrwxrwxrwx 1 root root 15 10月 31 18:19 libnppisu.so -> libnppisu.so.12

lrwxrwxrwx 1 root root 21 10月 31 18:19 libnppisu.so.12 -> libnppisu.so.12.2.3.2

-rw-r--r-- 1 root root 716168 10月 31 18:19 libnppisu.so.12.2.3.2

-rw-r--r-- 1 root root 11266 10月 31 18:19 libnppisu_static.a

lrwxrwxrwx 1 root root 15 10月 31 18:19 libnppitc.so -> libnppitc.so.12

lrwxrwxrwx 1 root root 21 10月 31 18:19 libnppitc.so.12 -> libnppitc.so.12.2.3.2

-rw-r--r-- 1 root root 5530224 10月 31 18:19 libnppitc.so.12.2.3.2

-rw-r--r-- 1 root root 4503836 10月 31 18:19 libnppitc_static.a

lrwxrwxrwx 1 root root 13 10月 31 18:19 libnpps.so -> libnpps.so.12

lrwxrwxrwx 1 root root 19 10月 31 18:19 libnpps.so.12 -> libnpps.so.12.2.3.2

-rw-r--r-- 1 root root 18105592 10月 31 18:19 libnpps.so.12.2.3.2

-rw-r--r-- 1 root root 17960158 10月 31 18:19 libnpps_static.a

lrwxrwxrwx 1 root root 15 10月 31 17:51 libnvblas.so -> libnvblas.so.12

lrwxrwxrwx 1 root root 21 10月 31 17:51 libnvblas.so.12 -> libnvblas.so.12.3.4.1

-rw-r--r-- 1 root root 728856 10月 31 17:51 libnvblas.so.12.3.4.1

lrwxrwxrwx 1 root root 18 10月 31 18:11 libnvJitLink.so -> libnvJitLink.so.12

lrwxrwxrwx 1 root root 24 10月 31 18:11 libnvJitLink.so.12 -> libnvJitLink.so.12.3.101

-rw-r--r-- 1 root root 52190720 10月 31 18:11 libnvJitLink.so.12.3.101

-rw-r--r-- 1 root root 63530708 10月 31 18:11 libnvJitLink_static.a

lrwxrwxrwx 1 root root 15 10月 31 17:49 libnvjpeg.so -> libnvjpeg.so.12

lrwxrwxrwx 1 root root 22 10月 31 17:49 libnvjpeg.so.12 -> libnvjpeg.so.12.3.0.81

-rw-r--r-- 1 root root 6705968 10月 31 17:49 libnvjpeg.so.12.3.0.81

-rw-r--r-- 1 root root 6828780 10月 31 17:49 libnvjpeg_static.a

-rw-r--r-- 1 root root 28538488 10月 31 20:51 libnvperf_host.so

-rw-r--r-- 1 root root 36274804 10月 31 20:51 libnvperf_host_static.a

-rw-r--r-- 1 root root 6018384 10月 31 20:51 libnvperf_target.so

-rw-r--r-- 1 root root 47925582 11月 23 03:32 libnvptxcompiler_static.a

lrwxrwxrwx 1 root root 25 11月 23 03:49 libnvrtc-builtins.so -> libnvrtc-builtins.so.12.3

lrwxrwxrwx 1 root root 29 11月 23 03:49 libnvrtc-builtins.so.12.3 -> libnvrtc-builtins.so.12.3.107

-rw-r--r-- 1 root root 6662024 11月 23 03:49 libnvrtc-builtins.so.12.3.107

-rw-r--r-- 1 root root 6681284 11月 23 03:49 libnvrtc-builtins_static.a

lrwxrwxrwx 1 root root 14 11月 23 03:49 libnvrtc.so -> libnvrtc.so.12

lrwxrwxrwx 1 root root 20 11月 23 03:49 libnvrtc.so.12 -> libnvrtc.so.12.3.107

-rw-r--r-- 1 root root 60792048 11月 23 03:49 libnvrtc.so.12.3.107

-rw-r--r-- 1 root root 75105270 11月 23 03:49 libnvrtc_static.a

lrwxrwxrwx 1 root root 18 10月 31 17:52 libnvToolsExt.so -> libnvToolsExt.so.1

lrwxrwxrwx 1 root root 22 10月 31 17:52 libnvToolsExt.so.1 -> libnvToolsExt.so.1.0.0

-rw-r--r-- 1 root root 40136 10月 31 17:52 libnvToolsExt.so.1.0.0

lrwxrwxrwx 1 root root 14 10月 31 17:37 libOpenCL.so -> libOpenCL.so.1

lrwxrwxrwx 1 root root 16 10月 31 17:37 libOpenCL.so.1 -> libOpenCL.so.1.0

lrwxrwxrwx 1 root root 18 10月 31 17:37 libOpenCL.so.1.0 -> libOpenCL.so.1.0.0

-rw-r--r-- 1 root root 30856 10月 31 17:37 libOpenCL.so.1.0.0

-rw-r--r-- 1 root root 912728 10月 31 20:51 libpcsamplingutil.so

drwxr-xr-x 2 root root 4096 1月 15 17:09 stubs/
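Each library in this listing is a chain of two symlinks plus one real file, for example libnpps.so -> libnpps.so.12 -> libnpps.so.12.2.3.2. By the usual Linux convention, the roles are:

- The unversioned name is what the linker resolves for `-lnpps` at build time.
- The major-versioned name (the SONAME) is what a built binary asks the dynamic loader for at run time.
- The fully versioned file holds the code.

The sketch below prints such a chain hop by hop. `show_chain` is a hypothetical name. It assumes a `readlink` that prints a link's immediate target, which GNU coreutils and BusyBox both provide.

```shell
#!/usr/bin/env bash
# Print a shared-library symlink chain hop by hop, e.g.
#   libnpps.so -> libnpps.so.12 -> libnpps.so.12.2.3.2
show_chain() {
  local path="$1" target out hops=0
  out="$(basename "$path")"
  # Stop after 40 hops so a circular link cannot loop forever
  while [ -L "$path" ] && [ "$hops" -lt 40 ]; do
    target="$(readlink "$path")"
    # Relative targets are relative to the directory containing the link
    case "$target" in
      /*) path="$target" ;;
      *)  path="$(dirname "$path")/$target" ;;
    esac
    out="$out -> $(basename "$path")"
    hops=$((hops + 1))
  done
  printf '%s\n' "$out"
}

# Usage: bash show_chain.sh /usr/local/cuda/lib64/libnpps.so [more libs...]
for lib in "$@"; do
  show_chain "$lib"
done
```

Based on the listing above, `bash show_chain.sh /usr/local/cuda/lib64/libnpps.so` should print `libnpps.so -> libnpps.so.12 -> libnpps.so.12.2.3.2`. If only the final file matters, `readlink -f` is enough. The hop-by-hop view also shows the intermediate SONAME link.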

rootroot@rootroot-X99-Turbo:/usr/local/cuda/lib64$ cd -

/usr/local/cuda

rootroot@rootroot-X99-Turbo:/usr/local/cuda$ cd ~/whisper.cpp/

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ ll

total 20728

drwxrwxr-x 17 rootroot rootroot 4096 2月 2 17:46 ./

drwxr-xr-x 30 rootroot rootroot 4096 2月 2 16:49 ../

drwxrwxr-x 7 rootroot rootroot 4096 2月 2 16:49 bindings/

-rwx------ 1 rootroot rootroot 3465644 1月 12 01:28 chs.mp4*

-rw-rw-r-- 1 rootroot rootroot 13497126 2月 2 17:26 chs.wav

-rw-rw-r-- 1 rootroot rootroot 11821 2月 2 17:41 chs.wav使用CPU.srt

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 cmake/

-rw-rw-r-- 1 rootroot rootroot 19150 2月 2 16:49 CMakeLists.txt

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 coreml/

drwx------ 2 rootroot rootroot 4096 2月 2 17:45 CPU/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 .devops/

drwxrwxr-x 24 rootroot rootroot 4096 2月 2 16:49 examples/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 extra/

-rw-rw-r-- 1 rootroot rootroot 31647 2月 2 16:49 ggml-alloc.c

-rw-rw-r-- 1 rootroot rootroot 4055 2月 2 16:49 ggml-alloc.h

-rw-rw-r-- 1 rootroot rootroot 20504 2月 2 17:46 ggml-alloc.o

-rw-rw-r-- 1 rootroot rootroot 67212 2月 2 16:49 ggml-backend.c

-rw-rw-r-- 1 rootroot rootroot 11720 2月 2 16:49 ggml-backend.h

-rw-rw-r-- 1 rootroot rootroot 5874 2月 2 16:49 ggml-backend-impl.h

-rw-rw-r-- 1 rootroot rootroot 58464 2月 2 17:46 ggml-backend.o

-rw-rw-r-- 1 rootroot rootroot 676115 2月 2 16:49 ggml.c

-rw-rw-r-- 1 rootroot rootroot 440093 2月 2 16:49 ggml-cuda.cu

-rw-rw-r-- 1 rootroot rootroot 2104 2月 2 16:49 ggml-cuda.h

-rw-rw-r-- 1 rootroot rootroot 85094 2月 2 16:49 ggml.h

-rw-rw-r-- 1 rootroot rootroot 7567 2月 2 16:49 ggml-impl.h

-rw-rw-r-- 1 rootroot rootroot 2358 2月 2 16:49 ggml-metal.h

-rw-rw-r-- 1 rootroot rootroot 150160 2月 2 16:49 ggml-metal.m

-rw-rw-r-- 1 rootroot rootroot 225659 2月 2 16:49 ggml-metal.metal

-rw-rw-r-- 1 rootroot rootroot 550040 2月 2 17:46 ggml.o

-rw-rw-r-- 1 rootroot rootroot 85693 2月 2 16:49 ggml-opencl.cpp

-rw-rw-r-- 1 rootroot rootroot 1386 2月 2 16:49 ggml-opencl.h

-rw-rw-r-- 1 rootroot rootroot 401791 2月 2 16:49 ggml-quants.c

-rw-rw-r-- 1 rootroot rootroot 13705 2月 2 16:49 ggml-quants.h

-rw-rw-r-- 1 rootroot rootroot 198024 2月 2 17:46 ggml-quants.o

drwxrwxr-x 8 rootroot rootroot 4096 2月 2 16:49 .git/

drwxrwxr-x 3 rootroot rootroot 4096 2月 2 16:49 .github/

-rw-rw-r-- 1 rootroot rootroot 803 2月 2 16:49 .gitignore

-rw-rw-r-- 1 rootroot rootroot 96 2月 2 16:49 .gitmodules

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 grammars/

-rw-rw-r-- 1 rootroot rootroot 1072 2月 2 16:49 LICENSE

-rw-rw-r-- 1 rootroot rootroot 14883 2月 2 16:49 Makefile

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 17:24 models/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 openvino/

-rw-rw-r-- 1 rootroot rootroot 1776 2月 2 16:49 Package.swift

-rw-rw-r-- 1 rootroot rootroot 39115 2月 2 16:49 README.md

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 samples/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 spm-headers/

drwxrwxr-x 2 rootroot rootroot 4096 2月 2 16:49 tests/

-rw-rw-r-- 1 rootroot rootroot 232975 2月 2 16:49 whisper.cpp

-rw-rw-r-- 1 rootroot rootroot 30248 2月 2 16:49 whisper.h

-rw-rw-r-- 1 rootroot rootroot 728384 2月 2 17:46 whisper.o
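The directory holds chs.mp4 next to chs.wav. whisper.cpp's main example expects 16 kHz WAV input, and the project README converts audio with a command along the lines of `ffmpeg -i input.mp3 -ar 16000 -ac 1 -c:a pcm_s16le output.wav`.

The file size fits that format. At 16 kHz mono 16-bit PCM, one second of audio takes 16,000 samples/s × 1 channel × 2 bytes = 32,000 bytes. So 13,497,126 / 32,000 ≈ 421.8 s, about 7 minutes, which matches the 7-minute video.

To read the header directly instead of inferring it, here is a sketch that reads the fields of the fmt chunk. It assumes the canonical layout with the fmt chunk at byte 12, which is where ffmpeg normally writes it, and a little-endian host. `wav_field` and `wav_info` are hypothetical names.

```shell
#!/usr/bin/env bash
# Read channel count, sample rate and bit depth from a canonical WAV header
# (the "fmt " chunk starting at byte 12).
# Assumes a little-endian host, because od decodes -tu2/-tu4 in native byte order.
wav_field() {  # wav_field FILE OFFSET SIZE
  od -An -tu"$3" -j"$2" -N"$3" "$1" | tr -d ' \n'
}

wav_info() {
  local f="$1" ch rate bits size
  ch="$(wav_field "$f" 22 2)"
  rate="$(wav_field "$f" 24 4)"
  bits="$(wav_field "$f" 34 2)"
  size="$(wc -c < "$f" | tr -d ' ')"
  # Whole seconds; header bytes are counted as audio, which is negligible here
  echo "channels=$ch rate=$rate bits=$bits seconds=$(( size / (rate * ch * bits / 8) ))"
}

# Usage: bash wav_info.sh chs.wav
for f in "$@"; do
  wav_info "$f"
done
```

If chs.wav is indeed 16 kHz mono 16-bit PCM, `bash wav_info.sh chs.wav` would print `channels=1 rate=16000 bits=16 seconds=421`.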

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ cd ..

rootroot@rootroot-X99-Turbo:~$

rootroot@rootroot-X99-Turbo:~$ cp .bashrc bak1.bashrc

rootroot@rootroot-X99-Turbo:~$ cd -

/home/rootroot/whisper.cpp

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ source ~/.bashrc

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ echo $PATH

/usr/local/cuda/bin:/home/rootroot/.local/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/snap/bin

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ nvcc --version

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2023 NVIDIA Corporation

Built on Wed_Nov_22_10:17:15_PST_2023

Cuda compilation tools, release 12.3, V12.3.107

Build cuda_12.3.r12.3/compiler.33567101_0
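`nvcc --version` now reports release 12.3 (V12.3.107). This confirms that /usr/local/cuda/bin, the first entry in PATH, supplies the compiler.

When repeating the setup on another machine, a small pre-build check can catch a missing or stale nvcc. This sketch only parses the output format shown above, and `cuda_release` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Pull the toolkit release number (e.g. "12.3") out of `nvcc --version` output on stdin
cuda_release() {
  sed -n 's/.*release \([0-9][0-9.]*\),.*/\1/p'
}

# Report which nvcc is on PATH and which release it belongs to
if command -v nvcc >/dev/null 2>&1; then
  echo "nvcc:    $(command -v nvcc)"
  echo "release: $(nvcc --version | cuda_release)"
else
  echo "nvcc not found on PATH" >&2
fi
```

On this machine it would be expected to report /usr/local/cuda/bin/nvcc and release 12.3.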

rootroot@rootroot-X99-Turbo:~/whisper.cpp$

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ echo $LD_LIBRARY_PATH

/usr/local/cuda/lib64:
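The log does not show the edit to ~/.bashrc made after the `cp .bashrc bak1.bashrc` backup. The echoed values are consistent with lines like `export PATH=/usr/local/cuda/bin:$PATH` and `export LD_LIBRARY_PATH=/usr/local/cuda/lib64:$LD_LIBRARY_PATH`.

The trailing colon in `/usr/local/cuda/lib64:` is exactly what the second line produces when LD_LIBRARY_PATH was empty beforehand. glibc's loader treats that empty entry as the current directory. NVIDIA's CUDA installation guide uses the `${VAR:+:${VAR}}` form instead, which adds the colon only when the old value is non-empty.

Here is a minimal idempotent sketch, assuming the toolkit root is /usr/local/cuda. `add_cuda_env` and its marker comment are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append CUDA environment settings to an rc file exactly once.
# Assumes the toolkit root is /usr/local/cuda, as in this installation.
add_cuda_env() {
  local rc="$1" cuda="${2:-/usr/local/cuda}"
  local marker='# cuda toolkit env (added by add_cuda_env)'
  # A previous run already added the block: do nothing
  if grep -qF "$marker" "$rc" 2>/dev/null; then
    return 0
  fi
  {
    printf '%s\n' "$marker"
    # ${VAR:+:${VAR}} adds the colon only when VAR is non-empty,
    # so no empty (current-directory) entry is created
    printf 'export PATH=%s/bin${PATH:+:${PATH}}\n' "$cuda"
    printf 'export LD_LIBRARY_PATH=%s/lib64${LD_LIBRARY_PATH:+:${LD_LIBRARY_PATH}}\n' "$cuda"
  } >> "$rc"
}

# Usage: bash add_cuda_env.sh ~/.bashrc   (then: source ~/.bashrc)
if [ $# -gt 0 ]; then
  add_cuda_env "$1"
fi
```

With LD_LIBRARY_PATH initially empty, sourcing the result gives `/usr/local/cuda/lib64` without the trailing colon.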

rootroot@rootroot-X99-Turbo:~/whisper.cpp$
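The LDFLAGS line in the build output below links the CUDA build against cuBLAS and the CUDA runtime (`-lcublas`, `-lcublasLt`, `-lcudart`), with `-L/usr/local/cuda/lib64`. `-L` only helps at link time. When the resulting binary starts, the dynamic loader has to find the same libraries again, typically through LD_LIBRARY_PATH or the ld.so cache. That is why the LD_LIBRARY_PATH check above matters.

After the build, a sketch like this can confirm where the libraries resolve. It reads `ldd` output and assumes the example binary is `./main`, its name in whisper.cpp at the time. `cuda_libs_ok` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Check `ldd` output (read from stdin) for the CUDA libraries named in LDFLAGS.
# Prints where each one resolves; returns non-zero if any is missing or unresolved.
cuda_libs_ok() {
  local text lib line status=0
  text="$(cat)"
  for lib in libcudart libcublas libcublasLt; do
    # "\.so" right after the name keeps libcublas from matching libcublasLt
    line="$(printf '%s\n' "$text" | grep "${lib}\.so" | head -n 1)"
    if [ -z "$line" ]; then
      echo "$lib: not linked" >&2
      status=1
    elif printf '%s\n' "$line" | grep -q 'not found'; then
      echo "$lib: not found at run time (check LD_LIBRARY_PATH)" >&2
      status=1
    else
      echo "$lib: ${line##*=> }"
    fi
  done
  return "$status"
}

# Usage: bash check_cuda_libs.sh ./main
if [ $# -gt 0 ]; then
  ldd "$1" | cuda_libs_ok
fi
```

With a correct setup, each reported path should point into /usr/local/cuda/lib64. A `not found` entry usually means LD_LIBRARY_PATH was not set in the shell that runs the binary.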

rootroot@rootroot-X99-Turbo:~/whisper.cpp$ WHISPER_CUBLAS=1 make -j16

I whisper.cpp build info:

I UNAME_S: Linux

I UNAME_P: x86_64

I UNAME_M: x86_64

I CFLAGS: -I. -O3 -DNDEBUG -std=c11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -D_XOPEN_SOURCE=600 -D_GNU_SOURCE -pthread -mavx -mavx2 -mfma -mf16c -msse3 -mssse3 -DGGML_USE_CUBLAS -I/usr/local/cuda/include -I/opt/cuda/include -I/targets/x86_64-linux/include

I LDFLAGS: -lcuda -lcublas -lculibos -lcudart -lcublasLt -lpthread -ldl -lrt -L/usr/local/cuda/lib64 -L/opt/cuda/lib64 -L/targets/x86_64-linux/lib

I CC: cc (Ubuntu 9.4.0-1ubuntu1~20.04.2) 9.4.0