Installing a TensorRT environment on Windows with Anaconda

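- 1 Installing TensorRT
- 1.1 Download the latest stable TensorRT 8.6.1 (the CUDA, cuDNN and other versions TensorRT requires are listed in reference 4)
- 1.2 Add TensorRT to the environment variables
- 1.3 Install the TensorRT dependencies
- 1.4 Install PyCUDA
- 1.5 Install PyTorch
- 2 Testing
- 2.1 Test a TensorRT sample (based mainly on reference 1)
- 2.2 Test whether trtexec works (based mainly on reference 2)
- 2.2.1 Generate the PyTorch model
- 2.2.2 Convert the PyTorch model to ONNX
- 2.2.3 Convert the ONNX model to a TensorRT engine
- 2.2.4 Test the generated TensorRT engine
- 3 Common problems
- 3.1 Mismatched CUDA and TensorRT versions
- 3.2 The cuBLAS/cuBLAS LT loaded at runtime differs from the one TensorRT was linked against
- 4 References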

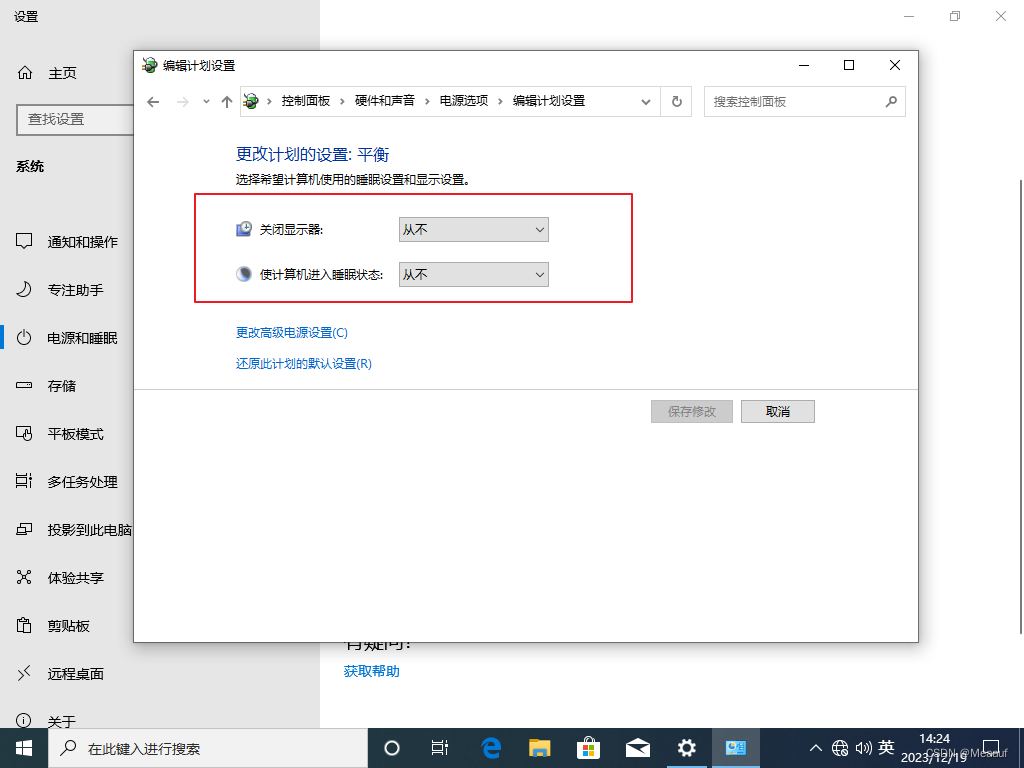

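This guide uses Windows 11 with CUDA 11.6.2 and cuDNN 8.8.0 installed. Strictly speaking, cuDNN 8.9.0 should have been downloaded, because TensorRT 8.6.1 corresponds to cuDNN 8.9.0 (see Figure 1). Installing Anaconda is not covered here; if you are unsure how to install it, see this article.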

Figure 1
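This setup ends up with cuDNN 8.8.0 while TensorRT 8.6.1 expects 8.9.0, so it can help to confirm which cuDNN is actually installed. The sketch below reads the `CUDNN_MAJOR`, `CUDNN_MINOR` and `CUDNN_PATCHLEVEL` macros from the cuDNN 8.x header `cudnn_version.h`.

It makes two assumptions:
- The cuDNN files were copied into the CUDA toolkit folder that the `CUDA_PATH` environment variable points to. This is a common Windows setup, but not a universal one.
- `parse_cudnn_version` is a hypothetical helper written for this illustration.

```python
import os
import re
from pathlib import Path
from typing import Optional, Tuple


def parse_cudnn_version(header_text: str) -> Optional[Tuple[int, int, int]]:
    """Extract (major, minor, patch) from the text of cudnn_version.h."""
    values = []
    for key in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(rf"#define\s+{key}\s+(\d+)", header_text)
        if match is None:
            return None  # not a cuDNN 8.x style header
        values.append(int(match.group(1)))
    return values[0], values[1], values[2]


if __name__ == "__main__":
    # Assumption: cuDNN was copied into %CUDA_PATH%\include
    header = Path(os.environ.get("CUDA_PATH", "")) / "include" / "cudnn_version.h"
    if header.is_file():
        print("cuDNN version:", parse_cudnn_version(header.read_text(errors="ignore")))
    else:
        print(f"{header} not found")
```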

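1 Installing TensorRT

1.1 Download the latest stable TensorRT 8.6.1 (the CUDA, cuDNN and other versions TensorRT requires are listed in reference 4)

According to NVIDIA's official documentation, TensorRT can only be installed on Windows with the Zip File Installation method.

- First, go to the TensorRT page on NVIDIA's website and log in. The available TensorRT versions are listed as in Figure 2; click TensorRT 8.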

Figure 2

- Then download the package shown in Figure 3: TensorRT 8.6 GA for Windows 10 and CUDA 11.0, 11.1, 11.2, 11.3, 11.4, 11.5, 11.6, 11.7 and 11.8 ZIP Package.

Figure 3

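1.2 Add TensorRT to the environment variables

- When the download finishes, extract the archive and open its lib subfolder (Figure 4). Add the path D:\TensorRT-8.6.1.6\lib to the system environment variables (the `Path` variable).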

Figure 4
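To confirm that the new entry is visible, for example from a freshly opened terminal, a small check like the one below can help. This is a sketch and not part of TensorRT:
- `find_on_path` is a hypothetical helper.
- It assumes the core TensorRT DLL in the `lib` folder of the 8.6 Windows ZIP package is named `nvinfer.dll`.

```python
import os
from pathlib import Path
from typing import List, Optional


def find_on_path(filename: str, path_value: Optional[str] = None) -> List[Path]:
    """Return every PATH directory that contains `filename`, in search order."""
    if path_value is None:
        path_value = os.environ.get("PATH", "")
    hits = []
    for entry in path_value.split(os.pathsep):
        entry = entry.strip().strip('"')  # Windows PATH entries may be quoted
        if entry and (Path(entry) / filename).is_file():
            hits.append(Path(entry))
    return hits


if __name__ == "__main__":
    # Assumption: nvinfer.dll is the core runtime DLL in TensorRT-8.6.1.6\lib
    found = find_on_path("nvinfer.dll")
    print(found if found else "nvinfer.dll is not on PATH; recheck the lib entry")
```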

1.3 Install the TensorRT dependencies

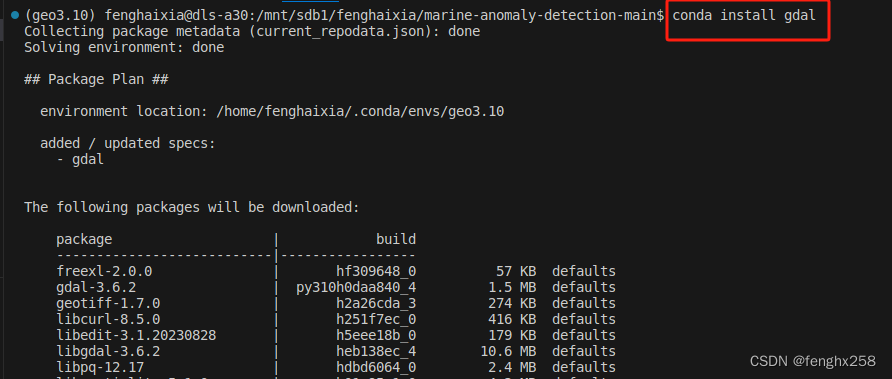

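Create an Anaconda environment with Python 3.10.12:

```shell
conda create -n tensorrt python==3.10.12
```

Activate the environment:

```shell
conda activate tensorrt
```

Go to the python subfolder of the extracted TensorRT folder and pick the whl file that matches your Python version (cp310 for Python 3.10, see Figure 5). Then run:

```shell
pip install D:\TensorRT-8.6.1.6\python\tensorrt-8.6.1-cp310-none-win_amd64.whl
```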

Figure 5

The `conda create` command above produces output like this:

```
C:\Users\Administrator>conda create -n tensorrt python==3.10.12
Collecting package metadata (current_repodata.json): done
Solving environment: unsuccessful attempt using repodata from current_repodata.json, retrying with next repodata source.
Collecting package metadata (repodata.json): done
Solving environment: done
```
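Picking the right wheel comes down to matching the `cpXY` tag in its file name with the running interpreter. A minimal sketch that does this automatically:
- It assumes the folder layout used in this article (`D:\TensorRT-8.6.1.6\python`).
- It only considers files whose names start with `tensorrt-`.
- `python_tag` and `pick_tensorrt_wheel` are hypothetical helpers.

```python
import sys
from pathlib import Path
from typing import Iterable, Optional


def python_tag(version_info=sys.version_info) -> str:
    """CPython wheel tag for a Python version, e.g. (3, 10) -> 'cp310'."""
    return f"cp{version_info[0]}{version_info[1]}"


def pick_tensorrt_wheel(wheel_names: Iterable[str], tag: Optional[str] = None) -> Optional[str]:
    """Return the main tensorrt wheel built for `tag` (default: this interpreter)."""
    tag = tag or python_tag()
    for name in sorted(wheel_names):
        # "tensorrt-" skips any other wheels that may sit in the same folder
        if name.startswith("tensorrt-") and f"-{tag}-" in name and name.endswith(".whl"):
            return name
    return None


if __name__ == "__main__":
    wheel_dir = Path(r"D:\TensorRT-8.6.1.6\python")  # folder used in this article
    names = [p.name for p in wheel_dir.glob("*.whl")] if wheel_dir.is_dir() else []
    choice = pick_tensorrt_wheel(names)
    if choice:
        print(f"pip install {wheel_dir / choice}")
    else:
        print(f"no {python_tag()} tensorrt wheel found in {wheel_dir}")
```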

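1.4 Install PyCUDA

Go to the PyCUDA download site, find PyCUDA and click it. This opens the page listing the PyCUDA builds (Figure 6). Download pycuda-2022.1+cuda116-cp310-cp310-win_amd64.whl, the build for CUDA 11.6 and Python 3.10.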

Figure 6

In the tensorrt conda environment, install PyCUDA with the following command:

```shell
pip install C:\Users\Administrator\Downloads\pycuda-2022.1+cuda116-cp310-cp310-win_amd64.whl
```

Output like the following means the installation succeeded:

```
(tensorrt) C:\Users\Administrator>pip install C:\Users\Administrator\Downloads\pycuda-2022.1+cuda116-cp310-cp310-win_amd64.whl
Looking in indexes: https://pypi.org/simple, https://pypi.ngc.nvidia.com
Processing c:\users\administrator\downloads\pycuda-2022.1+cuda116-cp310-cp310-win_amd64.whl
Collecting pytools>=2011.2 (from pycuda==2022.1+cuda116)
  Downloading pytools-2023.1.1-py2.py3-none-any.whl.metadata (2.7 kB)
Collecting appdirs>=1.4.0 (from pycuda==2022.1+cuda116)
  Downloading appdirs-1.4.4-py2.py3-none-any.whl (9.6 kB)
Collecting mako (from pycuda==2022.1+cuda116)
  Downloading Mako-1.3.0-py3-none-any.whl.metadata (2.9 kB)
Requirement already satisfied: platformdirs>=2.2.0 in c:\users\administrator\appdata\roaming\python\python310\site-packages (from pytools>=2011.2->pycuda==2022.1+cuda116) (4.1.0)
Collecting typing-extensions>=4.0 (from pytools>=2011.2->pycuda==2022.1+cuda116)
  Downloading typing_extensions-4.9.0-py3-none-any.whl.metadata (3.0 kB)
Collecting MarkupSafe>=0.9.2 (from mako->pycuda==2022.1+cuda116)
  Downloading MarkupSafe-2.1.4-cp310-cp310-win_amd64.whl.metadata (3.1 kB)
Downloading pytools-2023.1.1-py2.py3-none-any.whl (70 kB)
   ---------------------------------------- 70.6/70.6 kB 256.7 kB/s eta 0:00:00
Downloading Mako-1.3.0-py3-none-any.whl (78 kB)
   ---------------------------------------- 78.6/78.6 kB 1.5 MB/s eta 0:00:00
Downloading MarkupSafe-2.1.4-cp310-cp310-win_amd64.whl (17 kB)
Downloading typing_extensions-4.9.0-py3-none-any.whl (32 kB)
Installing collected packages: appdirs, typing-extensions, MarkupSafe, pytools, mako, pycuda
Successfully installed MarkupSafe-2.1.4 appdirs-1.4.4 mako-1.3.0 pycuda-2022.1+cuda116 pytools-2023.1.1 typing-extensions-4.9.0
```
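Two version tags in this setup both mean CUDA 11.6, matching the CUDA 11.6.2 installed at the start:
- `+cuda116` in the PyCUDA wheel name.
- `+cu116` in the PyTorch install command of Section 1.5.

A minimal sketch for comparing such tags with the installed toolkit follows. It assumes `CUDA_PATH` points to a folder ending in `v11.6`, which the NVIDIA Windows installer normally sets up; `cuda_tag` and `toolkit_tag` are hypothetical helpers.

```python
import os
import re
from typing import Optional


def cuda_tag(spec: str) -> Optional[str]:
    """'116' from 'pycuda-2022.1+cuda116-...whl' or 'torch==1.13.1+cu116'."""
    match = re.search(r"\+cu(?:da)?(\d+)", spec)
    return match.group(1) if match else None


def toolkit_tag(cuda_path: str) -> Optional[str]:
    """'116' from a CUDA_PATH such as '...\\CUDA\\v11.6'."""
    match = re.search(r"v(\d+)\.(\d+)$", cuda_path.rstrip("\\/"))
    return match.group(1) + match.group(2) if match else None


if __name__ == "__main__":
    installed = toolkit_tag(os.environ.get("CUDA_PATH", ""))
    for spec in ("pycuda-2022.1+cuda116-cp310-cp310-win_amd64.whl", "torch==1.13.1+cu116"):
        tag = cuda_tag(spec)
        status = "OK" if tag == installed else "MISMATCH"
        print(f"{spec}: cuda{tag} vs toolkit {installed} -> {status}")
```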

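1.5 Install PyTorch

Install PyTorch inside the tensorrt environment. Note that PyTorch must be installed with pip, not conda (see Section 3.2 for the reason):

```shell
pip install torch==1.13.1+cu116 torchvision==0.14.1+cu116 torchaudio==0.13.1 --extra-index-url https://download.pytorch.org/whl/cu116
```

After installation, check whether PyTorch can use CUDA:

```python
import torch

print(torch.cuda.is_available())  # True means CUDA is available
```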

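2 Testing

2.1 Test a TensorRT sample (based mainly on reference 1)

TensorRT ships sample code for testing. In the downloaded TensorRT folder, go to samples\python\network_api_pytorch_mnist. This is the handwritten-digit recognition (MNIST) sample shown in Figure 7.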

Figure 7

Copy this path, `cd` into it in the tensorrt environment, and run:

```shell
python sample.py
```

Output like the following means the test succeeded:

```
(tensorrt) D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist>python sample.py
Train Epoch: 1 [0/60000 (0%)] Loss: 2.292776
Train Epoch: 1 [6400/60000 (11%)] Loss: 0.694761
Train Epoch: 1 [12800/60000 (21%)] Loss: 0.316812
Train Epoch: 1 [19200/60000 (32%)] Loss: 0.101704
Train Epoch: 1 [25600/60000 (43%)] Loss: 0.087654
Train Epoch: 1 [32000/60000 (53%)] Loss: 0.230672
Train Epoch: 1 [38400/60000 (64%)] Loss: 0.189763
Train Epoch: 1 [44800/60000 (75%)] Loss: 0.157570
Train Epoch: 1 [51200/60000 (85%)] Loss: 0.043530
Train Epoch: 1 [57600/60000 (96%)] Loss: 0.107672
Test set: Average loss: 0.0927, Accuracy: 9732/10000 (97%)
Train Epoch: 2 [0/60000 (0%)] Loss: 0.049581
Train Epoch: 2 [6400/60000 (11%)] Loss: 0.063095
Train Epoch: 2 [12800/60000 (21%)] Loss: 0.086241
Train Epoch: 2 [19200/60000 (32%)] Loss: 0.100145
Train Epoch: 2 [25600/60000 (43%)] Loss: 0.087662
Train Epoch: 2 [32000/60000 (53%)] Loss: 0.064293
Train Epoch: 2 [38400/60000 (64%)] Loss: 0.053872
Train Epoch: 2 [44800/60000 (75%)] Loss: 0.153787
Train Epoch: 2 [51200/60000 (85%)] Loss: 0.065774
Train Epoch: 2 [57600/60000 (96%)] Loss: 0.067333
Test set: Average loss: 0.0520, Accuracy: 9835/10000 (98%)
[01/26/2024-20:43:07] [TRT] [W] CUDA lazy loading is not enabled. Enabling it can significantly reduce device memory usage and speed up TensorRT initialization. See "Lazy Loading" section of CUDA documentation https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#lazy-loading
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:112: DeprecationWarning: Use set_memory_pool_limit instead.
  config.max_workspace_size = common.GiB(1)
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:75: DeprecationWarning: Use add_convolution_nd instead.
  conv1 = network.add_convolution(
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:78: DeprecationWarning: Use stride_nd instead.
  conv1.stride = (1, 1)
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:80: DeprecationWarning: Use add_pooling_nd instead.
  pool1 = network.add_pooling(input=conv1.get_output(0), type=trt.PoolingType.MAX, window_size=(2, 2))
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:81: DeprecationWarning: Use stride_nd instead.
  pool1.stride = (2, 2)
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:85: DeprecationWarning: Use add_convolution_nd instead.
  conv2 = network.add_convolution(pool1.get_output(0), 50, (5, 5), conv2_w, conv2_b)
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:86: DeprecationWarning: Use stride_nd instead.
  conv2.stride = (1, 1)
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:88: DeprecationWarning: Use add_pooling_nd instead.
  pool2 = network.add_pooling(conv2.get_output(0), trt.PoolingType.MAX, (2, 2))
D:\TensorRT-8.6.1.6\samples\python\network_api_pytorch_mnist\sample.py:89: DeprecationWarning: Use stride_nd instead.
  pool2.stride = (2, 2)
[01/26/2024-20:43:11] [TRT] [W] CUDA lazy loading is not enabled. Enabling it can significantly reduce device memory usage and speed up TensorRT initialization. See "Lazy Loading" section of CUDA documentation https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#lazy-loading
Test Case: 2
Prediction: 2
```

2.2 Test whether trtexec works (based mainly on reference 2)

2.2.1 Generate the PyTorch model

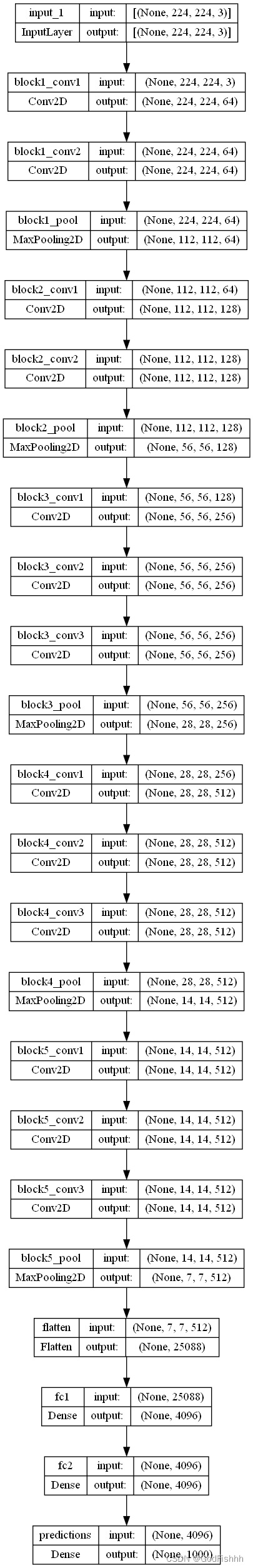

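Train the official PyTorch ResNet34 on the flower dataset to obtain a PyTorch model. The code comes from https://github.com/WZMIAOMIAO/deep-learning-for-image-processing/tree/master/pytorch_classification/Test5_resnet. The flower dataset is described in the README.md of that link's parent folder. Training produces resNet34.pth, as shown in Figure 8.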

Figure 8

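2.2.2 Convert the PyTorch model to ONNX

This example uses the official PyTorch ResNet34, that is, the resNet34.pth trained above. The script instantiates ResNet34 from torchvision, loads the weights trained on the flower_photos dataset, and converts the model to ONNX: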

```python
import torch
import torch.onnx
import onnx
import onnxruntime
import numpy as np
from torchvision.models import resnet34

device = torch.device("cpu")


def to_numpy(tensor):
    return tensor.detach().cpu().numpy() if tensor.requires_grad else tensor.cpu().numpy()


def main():
    weights_path = "resNet34.pth"
    onnx_file_name = "resnet34.onnx"
    batch_size = 1
    img_h = 224
    img_w = 224
    img_channel = 3

    # create model and load the trained weights
    # (weights=None replaces the deprecated pretrained=False in torchvision >= 0.13)
    model = resnet34(weights=None, num_classes=5)
    model.load_state_dict(torch.load(weights_path, map_location='cpu'))
    model.eval()

    # input to the model
    # [batch, channel, height, width]
    x = torch.rand(batch_size, img_channel, img_h, img_w, requires_grad=True)
    torch_out = model(x)

    # export the model
    torch.onnx.export(model,             # model being run
                      x,                 # model input (or a tuple for multiple inputs)
                      onnx_file_name,    # where to save the model (can be a file or file-like object)
                      input_names=["input"],
                      output_names=["output"],
                      verbose=False)

    # check onnx model
    onnx_model = onnx.load(onnx_file_name)
    onnx.checker.check_model(onnx_model)

    ort_session = onnxruntime.InferenceSession(onnx_file_name)

    # compute ONNX Runtime output prediction
    ort_inputs = {ort_session.get_inputs()[0].name: to_numpy(x)}
    ort_outs = ort_session.run(None, ort_inputs)

    # compare ONNX Runtime and Pytorch results
    # assert_allclose: Raises an AssertionError if two objects are not equal up to desired tolerance.
    np.testing.assert_allclose(to_numpy(torch_out), ort_outs[0], rtol=1e-03, atol=1e-05)

    print("Exported model has been tested with ONNXRuntime, and the result looks good!")


if __name__ == '__main__':
    main()
```

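Before running the code above, install onnx (onnx==1.12.0) and onnxruntime (onnxruntime==1.12.0) in the tensorrt environment. For which onnx and onnxruntime versions go together, see this link; for the latest versions, just search online.

```shell
pip install onnx==1.12.0
pip install onnxruntime==1.12.0
```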

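Note what happens after the PyTorch model is converted to ONNX. The script loads the exported model with ONNX Runtime, feeds it the same input, and compares the outputs before and after conversion with np.testing.assert_allclose. Here rtol is the relative tolerance and atol the absolute tolerance; if the difference exceeds them, an error is raised. After the conversion, a resnet34.onnx file is created in the current folder.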
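Per NumPy's documentation, `assert_allclose` checks, element by element, that |actual − desired| ≤ atol + rtol·|desired|. In the export script, `actual` is the PyTorch output and `desired` is the ONNX Runtime output.

The snippet below reproduces that rule on made-up numbers so the roles of `rtol=1e-3` and `atol=1e-5` are visible. `allclose_mask` is an illustrative helper, not a NumPy function.

```python
import numpy as np


def allclose_mask(actual, desired, rtol=1e-3, atol=1e-5):
    """Element-wise version of the test used by np.testing.assert_allclose."""
    actual = np.asarray(actual, dtype=np.float64)
    desired = np.asarray(desired, dtype=np.float64)
    # The tolerance scales with |desired| only, so the check is not symmetric
    return np.abs(actual - desired) <= atol + rtol * np.abs(desired)


desired = np.array([1.0, 10.0, 0.0])      # e.g. ONNX Runtime output
actual = np.array([1.0005, 10.02, 2e-5])  # e.g. PyTorch output
print(allclose_mask(actual, desired))     # [ True False False]
```

How each element fares:
- The first passes (0.0005 ≤ 0.00101).
- The second fails because 0.02 exceeds 0.01001.
- The third fails because, when `desired` is 0, only `atol` applies.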

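2.2.3 Convert the ONNX model to a TensorRT engine

There are several ways to convert ONNX to a TensorRT engine, and the simplest is the trtexec tool. Section 2.2.2 already converted the PyTorch ResNet34 to ONNX, so trtexec can turn it directly into a TensorRT engine:

```shell
trtexec --onnx=resnet34.onnx --saveEngine=trt_output/resnet34.trt
```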

where:

- --onnx is the path to the generated ONNX model
- --saveEngine is the path where the TensorRT engine is saved. The output folder must be created beforehand, otherwise trtexec reports an error.
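Because trtexec does not create the output folder, a small wrapper can create it first. This sketch is a convenience and not part of TensorRT:
- `build_engine` is a hypothetical helper.
- It only uses the `--onnx` and `--saveEngine` flags shown above, plus any extra flags you pass, such as trtexec's `--fp16`.
- It runs trtexec through `subprocess`, so `trtexec.exe` must already be on PATH.

```python
import shutil
import subprocess
from pathlib import Path
from typing import List, Sequence


def build_engine(onnx_path: str, engine_path, extra_args: Sequence[str] = (),
                 dry_run: bool = False) -> List[str]:
    """Run trtexec to turn an ONNX model into a TensorRT engine file."""
    engine_path = Path(engine_path)
    # trtexec does not create missing folders, so make sure the output folder exists
    engine_path.parent.mkdir(parents=True, exist_ok=True)
    cmd = ["trtexec", f"--onnx={onnx_path}", f"--saveEngine={engine_path}", *extra_args]
    if dry_run:
        return cmd  # only build the command, do not run it
    if shutil.which("trtexec") is None:
        raise FileNotFoundError("trtexec not found on PATH; add the TensorRT bin folder first")
    subprocess.run(cmd, check=True)  # raises CalledProcessError if trtexec fails
    return cmd


if __name__ == "__main__":
    if shutil.which("trtexec"):
        build_engine("resnet34.onnx", "trt_output/resnet34.trt")
    else:
        print("trtexec not found on PATH; add D:\\TensorRT-8.6.1.6\\bin first")
```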

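Before running trtexec, also add the folder that contains trtexec.exe, D:\TensorRT-8.6.1.6\bin, to the environment variables. The steps are not repeated here; Figure 9 shows the path to add.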

Figure 9

After adding the environment variable, close and reopen VS Code if you use it, then run the command above in its terminal. Alternatively, run the command in the Windows 11 cmd window; either way works. The output is as follows:

```
(tensorrt) C:\Users\Administrator\Desktop\resnet>trtexec --onnx=resnet34.onnx --saveEngine=trt_output/resnet34.trt
&&&& RUNNING TensorRT.trtexec [TensorRT v8601] # trtexec --onnx=resnet34.onnx --saveEngine=trt_output/resnet34.trt
[01/26/2024-22:16:49] [I] === Model Options ===
[01/26/2024-22:16:49] [I] Format: ONNX
[01/26/2024-22:16:49] [I] Model: resnet34.onnx
[01/26/2024-22:16:49] [I] Output:
[01/26/2024-22:16:49] [I] === Build Options ===
[01/26/2024-22:16:49] [I] Max batch: explicit batch
[01/26/2024-22:16:49] [I] Memory Pools: workspace: default, dlaSRAM: default, dlaLocalDRAM: default, dlaGlobalDRAM: default
[01/26/2024-22:16:49] [I] minTiming: 1
[01/26/2024-22:16:49] [I] avgTiming: 8
[01/26/2024-22:16:49] [I] Precision: FP32
[01/26/2024-22:16:49] [I] LayerPrecisions:
[01/26/2024-22:16:49] [I] Layer Device Types:
[01/26/2024-22:16:49] [I] Calibration:
[01/26/2024-22:16:49] [I] Refit: Disabled
[01/26/2024-22:16:49] [I] Version Compatible: Disabled
[01/26/2024-22:16:49] [I] TensorRT runtime: full
[01/26/2024-22:16:49] [I] Lean DLL Path:
[01/26/2024-22:16:49] [I] Tempfile Controls: { in_memory: allow, temporary: allow }
[01/26/2024-22:16:49] [I] Exclude Lean Runtime: Disabled
[01/26/2024-22:16:49] [I] Sparsity: Disabled
[01/26/2024-22:16:49] [I] Safe mode: Disabled
[01/26/2024-22:16:49] [I] Build DLA standalone loadable: Disabled
[01/26/2024-22:16:49] [I] Allow GPU fallback for DLA: Disabled
[01/26/2024-22:16:49] [I] DirectIO mode: Disabled
[01/26/2024-22:16:49] [I] Restricted mode: Disabled
[01/26/2024-22:16:49] [I] Skip inference: Disabled
[01/26/2024-22:16:49] [I] Save engine: trt_output/resnet34.trt
[01/26/2024-22:16:49] [I] Load engine:
[01/26/2024-22:16:49] [I] Profiling verbosity: 0
[01/26/2024-22:16:49] [I] Tactic sources: Using default tactic sources
[01/26/2024-22:16:49] [I] timingCacheMode: local
[01/26/2024-22:16:49] [I] timingCacheFile:
[01/26/2024-22:16:49] [I] Heuristic: Disabled
[01/26/2024-22:16:49] [I] Preview Features: Use default preview flags.
[01/26/2024-22:16:49] [I] MaxAuxStreams: -1
[01/26/2024-22:16:49] [I] BuilderOptimizationLevel: -1
[01/26/2024-22:16:49] [I] Input(s)s format: fp32:CHW
[01/26/2024-22:16:49] [I] Output(s)s format: fp32:CHW
[01/26/2024-22:16:49] [I] Input build shapes: model
[01/26/2024-22:16:49] [I] Input calibration shapes: model
[01/26/2024-22:16:49] [I] === System Options ===
......
[01/26/2024-22:17:07] [I] Average on 10 runs - GPU latency: 2.13367 ms - Host latency: 2.35083 ms (enqueue 0.329321 ms)
[01/26/2024-22:17:07] [I]
[01/26/2024-22:17:07] [I] === Performance summary ===
[01/26/2024-22:17:07] [I] Throughput: 408.164 qps
[01/26/2024-22:17:07] [I] Latency: min = 2.1969 ms, max = 11.5844 ms, mean = 2.34914 ms, median = 2.26282 ms, percentile(90%) = 2.5896 ms, percentile(95%) = 2.74451 ms, percentile(99%) = 3.15137 ms
[01/26/2024-22:17:07] [I] Enqueue Time: min = 0.214111 ms, max = 11.3787 ms, mean = 0.462134 ms, median = 0.360229 ms, percentile(90%) = 0.757202 ms, percentile(95%) = 0.912842 ms, percentile(99%) = 1.60339 ms
[01/26/2024-22:17:07] [I] H2D Latency: min = 0.20166 ms, max = 0.341309 ms, mean = 0.229284 ms, median = 0.223328 ms, percentile(90%) = 0.264771 ms, percentile(95%) = 0.274536 ms, percentile(99%) = 0.304443 ms
[01/26/2024-22:17:07] [I] GPU Compute Time: min = 1.9906 ms, max = 11.3264 ms, mean = 2.11385 ms, median = 2.0265 ms, percentile(90%) = 2.33765 ms, percentile(95%) = 2.4895 ms, percentile(99%) = 2.89893 ms
[01/26/2024-22:17:07] [I] D2H Latency: min = 0.00415039 ms, max = 0.0593262 ms, mean = 0.00600404 ms, median = 0.00463867 ms, percentile(90%) = 0.0119629 ms, percentile(95%) = 0.0145569 ms, percentile(99%) = 0.0292969 ms
[01/26/2024-22:17:07] [I] Total Host Walltime: 3.0037 s
[01/26/2024-22:17:07] [I] Total GPU Compute Time: 2.59158 s
[01/26/2024-22:17:07] [W] * GPU compute time is unstable, with coefficient of variance = 15.3755%.
[01/26/2024-22:17:07] [W] If not already in use, locking GPU clock frequency or adding --useSpinWait may improve the stability.
[01/26/2024-22:17:07] [I] Explanations of the performance metrics are printed in the verbose logs.
[01/26/2024-22:17:07] [I]
&&&& PASSED TensorRT.trtexec [TensorRT v8601] # trtexec --onnx=resnet34.onnx --saveEngine=trt_output/resnet34.trt
```

The generated TRT engine is shown in Figure 10:

Figure 10

2.2.4 Test the generated TensorRT engine

This test demo follows reference 2 and compares the ONNX and TensorRT outputs:

```python
import numpy as np
import tensorrt as trt
import onnxruntime
import pycuda.driver as cuda
import pycuda.autoinit


def normalize(image: np.ndarray) -> np.ndarray:
    """
    Normalize the image to the given mean and standard deviation
    """
    image = image.astype(np.float32)
    mean = (0.485, 0.456, 0.406)
    std = (0.229, 0.224, 0.225)
    image /= 255.0
    image -= mean
    image /= std
    return image


def onnx_inference(onnx_path: str, image: np.ndarray):
    # load onnx model
    ort_session = onnxruntime.InferenceSession(onnx_path)

    # compute onnx Runtime output prediction
    ort_inputs = {ort_session.get_inputs()[0].name: image}
    res_onnx = ort_session.run(None, ort_inputs)[0]
    return res_onnx


def trt_inference(trt_path: str, image: np.ndarray):
    # Load the network in Inference Engine
    trt_logger = trt.Logger(trt.Logger.WARNING)
    with open(trt_path, "rb") as f, trt.Runtime(trt_logger) as runtime:
        engine = runtime.deserialize_cuda_engine(f.read())

        with engine.create_execution_context() as context:
            # Set input shape based on image dimensions for inference
            context.set_binding_shape(engine.get_binding_index("input"), (1, 3, image.shape[-2], image.shape[-1]))
            # Allocate host and device buffers
            bindings = []
            for binding in engine:
                binding_idx = engine.get_binding_index(binding)
                size = trt.volume(context.get_binding_shape(binding_idx))
                dtype = trt.nptype(engine.get_binding_dtype(binding))
                if engine.binding_is_input(binding):
                    input_buffer = np.ascontiguousarray(image)
                    input_memory = cuda.mem_alloc(image.nbytes)
                    bindings.append(int(input_memory))
                else:
                    output_buffer = cuda.pagelocked_empty(size, dtype)
                    output_memory = cuda.mem_alloc(output_buffer.nbytes)
                    bindings.append(int(output_memory))

            stream = cuda.Stream()
            # Transfer input data to the GPU.
            cuda.memcpy_htod_async(input_memory, input_buffer, stream)
            # Run inference
            context.execute_async_v2(bindings=bindings, stream_handle=stream.handle)
            # Transfer prediction output from the GPU.
            cuda.memcpy_dtoh_async(output_buffer, output_memory, stream)
            # Synchronize the stream
            stream.synchronize()

            res_trt = np.reshape(output_buffer, (1, -1))

    return res_trt


def main():
    image_h = 224
    image_w = 224
    onnx_path = "resnet34.onnx"
    trt_path = "trt_output/resnet34.trt"

    image = np.random.randn(image_h, image_w, 3)
    normalized_image = normalize(image)

    # Convert the resized images to network input shape
    # [h, w, c] -> [c, h, w] -> [1, c, h, w]
    normalized_image = np.expand_dims(np.transpose(normalized_image, (2, 0, 1)), 0)

    onnx_res = onnx_inference(onnx_path, normalized_image)
    ir_res = trt_inference(trt_path, normalized_image)
    np.testing.assert_allclose(onnx_res, ir_res, rtol=1e-03, atol=1e-05)
    print("Exported model has been tested with TensorRT Runtime, and the result looks good!")


if __name__ == '__main__':
    main()
```

The output is:

```
(tensorrt) C:\Users\Administrator\Desktop\resnet>C:/ProgramData/anaconda3/envs/tensorrt/python.exe c:/Users/Administrator/Desktop/resnet/demo.py
[01/26/2024-22:19:08] [TRT] [W] CUDA lazy loading is not enabled. Enabling it can significantly reduce device memory usage and speed up TensorRT initialization. See "Lazy Loading" section of CUDA documentation https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#lazy-loading
c:\Users\Administrator\Desktop\resnet\demo.py:39: DeprecationWarning: Use get_tensor_name instead.
  context.set_binding_shape(engine.get_binding_index("input"), (1, 3, image.shape[-2], image.shape[-1]))
c:\Users\Administrator\Desktop\resnet\demo.py:39: DeprecationWarning: Use set_input_shape instead.
  context.set_binding_shape(engine.get_binding_index("input"), (1, 3, image.shape[-2], image.shape[-1]))
c:\Users\Administrator\Desktop\resnet\demo.py:43: DeprecationWarning: Use get_tensor_name instead.
  binding_idx = engine.get_binding_index(binding)
c:\Users\Administrator\Desktop\resnet\demo.py:44: DeprecationWarning: Use get_tensor_shape instead.
  size = trt.volume(context.get_binding_shape(binding_idx))
c:\Users\Administrator\Desktop\resnet\demo.py:45: DeprecationWarning: Use get_tensor_dtype instead.
  dtype = trt.nptype(engine.get_binding_dtype(binding))
c:\Users\Administrator\Desktop\resnet\demo.py:46: DeprecationWarning: Use get_tensor_mode instead.
  if engine.binding_is_input(binding):
Exported model has been tested with TensorRT Runtime, and the result looks good!
```

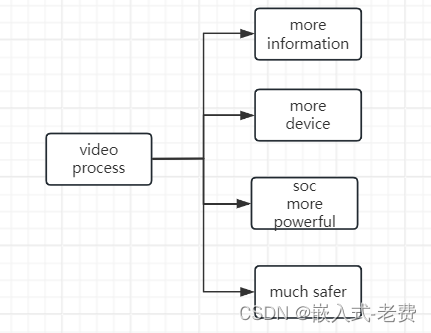

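The test has succeeded, although the output still has a few blemishes. For example, the "CUDA lazy loading is not enabled" warning can be removed in Windows 11 by adding a system variable named CUDA_MODULE_LOADING with the value LAZY, as shown in Figure 11: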

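Figure 11

After adding the variable, rerun the code. As the output below shows, the "CUDA lazy loading is not enabled" warning is gone.

The remaining warnings appear because this setup uses the latest TensorRT, 8.6.1. The demo comes from reference 2, which used TensorRT 8.2.5, and many 8.2.5 APIs are deprecated in 8.6.1 in favor of replacements. This is not addressed here.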

```
(tensorrt) C:\Users\Administrator\Desktop\resnet>C:/ProgramData/anaconda3/envs/tensorrt/python.exe c:/Users/Administrator/Desktop/resnet/demo.py
c:\Users\Administrator\Desktop\resnet\demo.py:39: DeprecationWarning: Use get_tensor_name instead.
  context.set_binding_shape(engine.get_binding_index("input"), (1, 3, image.shape[-2], image.shape[-1]))
c:\Users\Administrator\Desktop\resnet\demo.py:39: DeprecationWarning: Use set_input_shape instead.
  context.set_binding_shape(engine.get_binding_index("input"), (1, 3, image.shape[-2], image.shape[-1]))
c:\Users\Administrator\Desktop\resnet\demo.py:43: DeprecationWarning: Use get_tensor_name instead.
  binding_idx = engine.get_binding_index(binding)
c:\Users\Administrator\Desktop\resnet\demo.py:44: DeprecationWarning: Use get_tensor_shape instead.
  size = trt.volume(context.get_binding_shape(binding_idx))
c:\Users\Administrator\Desktop\resnet\demo.py:45: DeprecationWarning: Use get_tensor_dtype instead.
  dtype = trt.nptype(engine.get_binding_dtype(binding))
c:\Users\Administrator\Desktop\resnet\demo.py:46: DeprecationWarning: Use get_tensor_mode instead.
  if engine.binding_is_input(binding):
Exported model has been tested with TensorRT Runtime, and the result looks good!
```
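The remaining DeprecationWarnings point to the name-based I/O tensor API that replaced the binding-index API. Below is a sketch of `trt_inference` rewritten with that API. It has not been run against this article's engine.

Assumptions:
- TensorRT 8.6. The calls `num_io_tensors`, `get_tensor_name`, `get_tensor_mode`, `get_tensor_dtype`, `set_input_shape`, `get_tensor_shape`, `set_tensor_address` and `execute_async_v3` are available from 8.5 onward.
- An FP32 engine with a single input tensor, as in the ResNet34 example.
- The same PyCUDA setup as above.
- `trt_inference_v3` is a hypothetical name.

The sketch also sets `CUDA_MODULE_LOADING=LAZY` for the current process as an alternative to the system variable. This only takes effect if it runs before CUDA is initialized.

```python
import os

import numpy as np

# Per-process alternative to the CUDA_MODULE_LOADING system variable; it must be
# set before CUDA is initialized, i.e. before pycuda.autoinit is imported
os.environ.setdefault("CUDA_MODULE_LOADING", "LAZY")


def trt_inference_v3(trt_path: str, image: np.ndarray) -> np.ndarray:
    # Imported here so this module can be loaded without TensorRT or a GPU
    import pycuda.autoinit  # noqa: F401  creates the CUDA context
    import pycuda.driver as cuda
    import tensorrt as trt

    logger = trt.Logger(trt.Logger.WARNING)
    runtime = trt.Runtime(logger)
    with open(trt_path, "rb") as f:
        engine = runtime.deserialize_cuda_engine(f.read())
    context = engine.create_execution_context()

    names = [engine.get_tensor_name(i) for i in range(engine.num_io_tensors)]
    inputs = [n for n in names if engine.get_tensor_mode(n) == trt.TensorIOMode.INPUT]
    host_input = np.ascontiguousarray(image, dtype=np.float32)
    # Assumes a single input tensor; set its shape before querying output shapes
    context.set_input_shape(inputs[0], host_input.shape)

    stream = cuda.Stream()
    outputs = []         # (host buffer, device buffer) per output tensor
    device_buffers = []  # keeps every device allocation alive until inference ends
    for name in names:
        if name in inputs:
            device = cuda.mem_alloc(host_input.nbytes)
            cuda.memcpy_htod_async(device, host_input, stream)
        else:
            shape = context.get_tensor_shape(name)
            dtype = trt.nptype(engine.get_tensor_dtype(name))
            host = cuda.pagelocked_empty(trt.volume(shape), dtype)
            device = cuda.mem_alloc(host.nbytes)
            outputs.append((host, device))
        device_buffers.append(device)
        context.set_tensor_address(name, int(device))

    context.execute_async_v3(stream.handle)
    for host, device in outputs:
        cuda.memcpy_dtoh_async(host, device, stream)
    stream.synchronize()
    return np.reshape(outputs[0][0], (1, -1))
```

To try it, replace `trt_inference(trt_path, normalized_image)` in `main()` with `trt_inference_v3(trt_path, normalized_image)`.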

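3 Common problems

3.1 Mismatched CUDA and TensorRT versions

A CUDA/TensorRT version mismatch can make the program exit during the test in Section 2.2. When stepping through with breakpoints, the error below should appear at the line shown. Keep the CUDA and TensorRT versions as consistent as possible.

```
trt.volume(context.get_binding_shape(binding_idx))
[WinError 10054] An existing connection was forcibly closed by the remote host.
```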
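TensorRT, PyCUDA and PyTorch each depend on a particular CUDA version, so when the program exits unexpectedly it helps to print all the versions side by side. This is a sketch only:
- `collect_versions` is a hypothetical helper.
- It relies on `importlib.metadata` (Python 3.8+).
- If PyTorch imports, it also reads `torch.version.cuda` and `torch.backends.cudnn.version()`.
- It does not query the system CUDA toolkit; `nvcc --version` shows that.

```python
import platform
from importlib import metadata
from typing import Dict, Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Version of an installed distribution, or None if it is missing."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def collect_versions() -> Dict[str, object]:
    report: Dict[str, object] = {"python": platform.python_version()}
    for dist in ("tensorrt", "pycuda", "torch", "onnx", "onnxruntime"):
        report[dist] = installed_version(dist)
    try:
        import torch  # reports the CUDA/cuDNN versions this PyTorch build uses
        report["torch.version.cuda"] = torch.version.cuda
        report["torch.cudnn"] = torch.backends.cudnn.version()
        report["torch.cuda_available"] = torch.cuda.is_available()
    except (ImportError, OSError) as exc:  # OSError covers DLL load failures on Windows
        report["torch.import_error"] = repr(exc)
    return report


if __name__ == "__main__":
    for key, value in collect_versions().items():
        print(f"{key:22s} {value}")
```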

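3.2 The cuBLAS/cuBLAS LT loaded at runtime differs from the one TensorRT was linked against

This shows up as the following warning:

```
[TRT] TensorRT was linked against cuBLAS/cuBLAS LT 11.6.3 but loaded cuBLAS/cuBLAS LT 11.5.1
```

The most important fix has already been mentioned: in the tensorrt environment created with Anaconda, always install PyTorch with pip. If PyTorch is installed with conda, conda also installs cudatoolkit into the environment. Its version may differ from the CUDA installed on Windows 11, which leads to the problem above.

If the problem has a different cause and installing with pip does not fix it, see this article and this article.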
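To check whether conda has placed its own CUDA libraries in the environment, you can look at the environment's `conda-meta` folder. There, conda records each installed package as a `<name>-<version>-<build>.json` file.

This sketch makes two assumptions:
- It should run from the activated tensorrt environment, so that `sys.prefix` points at it.
- The package-name prefixes it checks (`cudatoolkit`, `cudnn`, `libcublas`) are illustrative and may not cover every CUDA-related package.

`conda_cuda_packages` is a hypothetical helper.

```python
import sys
from pathlib import Path
from typing import List, Sequence


def conda_cuda_packages(prefix=sys.prefix,
                        names: Sequence[str] = ("cudatoolkit", "cudnn", "libcublas")) -> List[str]:
    """List CUDA-related conda packages installed in the environment at `prefix`."""
    meta = Path(prefix) / "conda-meta"
    if not meta.is_dir():
        return []  # not a conda environment
    # conda records each package as <name>-<version>-<build>.json in conda-meta
    return sorted(p.stem for p in meta.glob("*.json") if p.name.startswith(tuple(names)))


if __name__ == "__main__":
    found = conda_cuda_packages()
    if found:
        print("conda-installed CUDA libraries may shadow the system CUDA:", found)
    else:
        print("no conda cudatoolkit/cudnn/libcublas packages in", sys.prefix)
```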

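4 References

- TensorRT (Part 1): Setting up a TensorRT environment with Windows + Anaconda (Python version)
- TensorRT installation notes (8.2.5)
- CUDA, cuDNN and other environment versions supported by TensorRT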