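Multivariate Time Series | MATLAB Implementation of DBO-LSTM: Multivariate Time Series Forecasting with an LSTM Network Tuned by the Dung Beetle Optimizer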

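Contents

- Multivariate Time Series | MATLAB Implementation of DBO-LSTM: Multivariate Time Series Forecasting with an LSTM Network Tuned by the Dung Beetle Optimizer

- Results at a Glance

- Overview

- Program Design

- References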

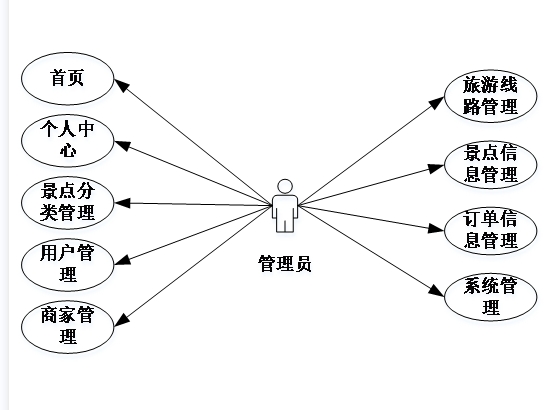

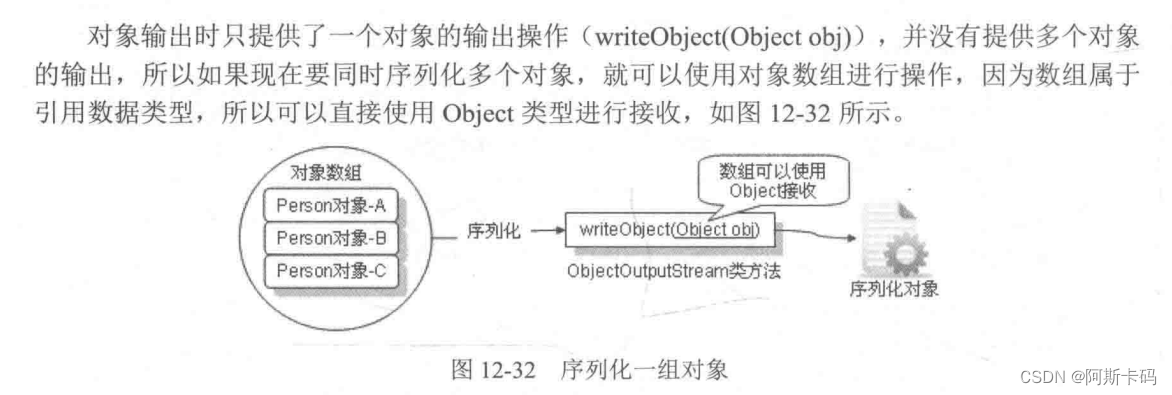

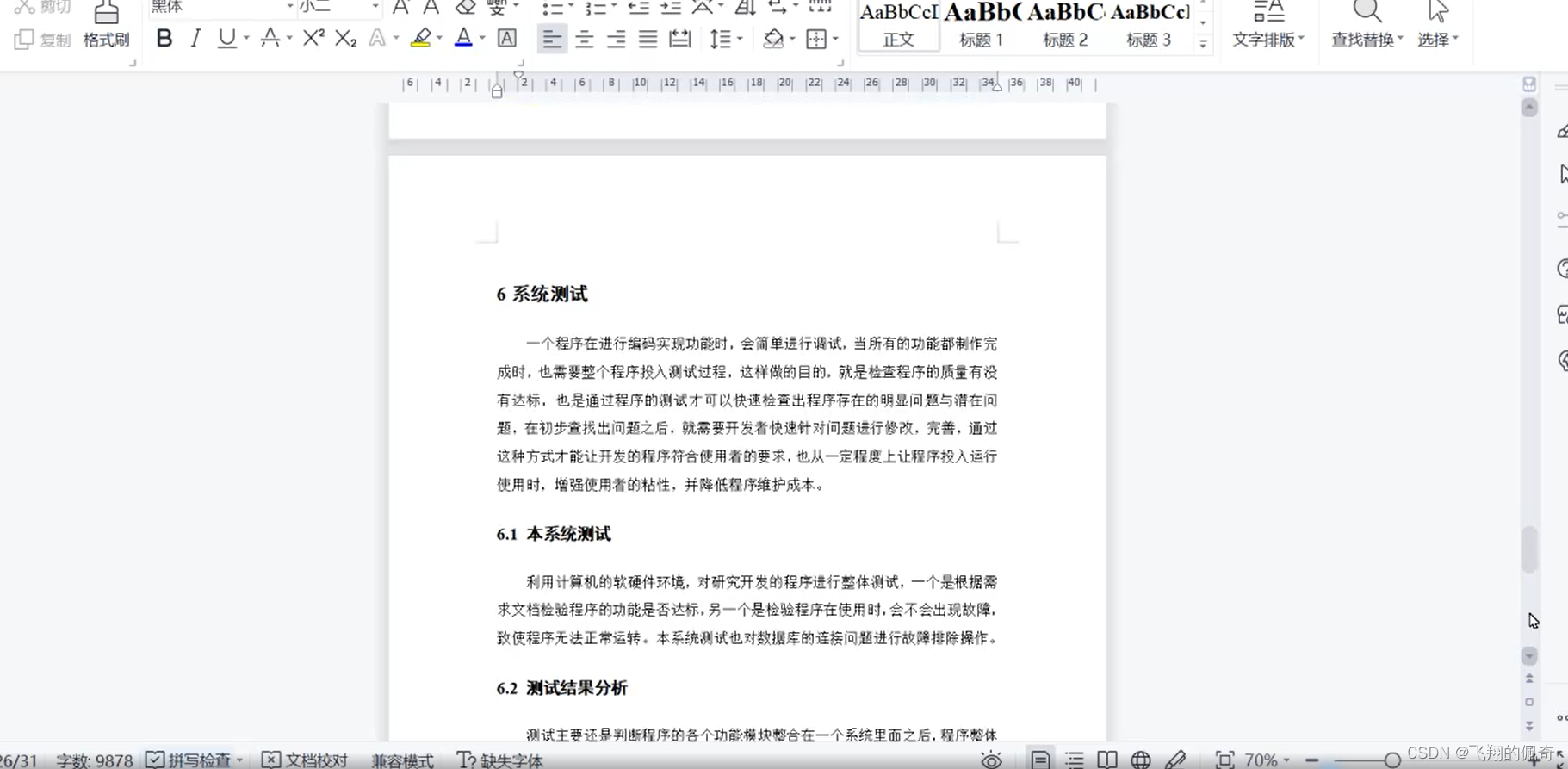

Results at a Glance

Overview

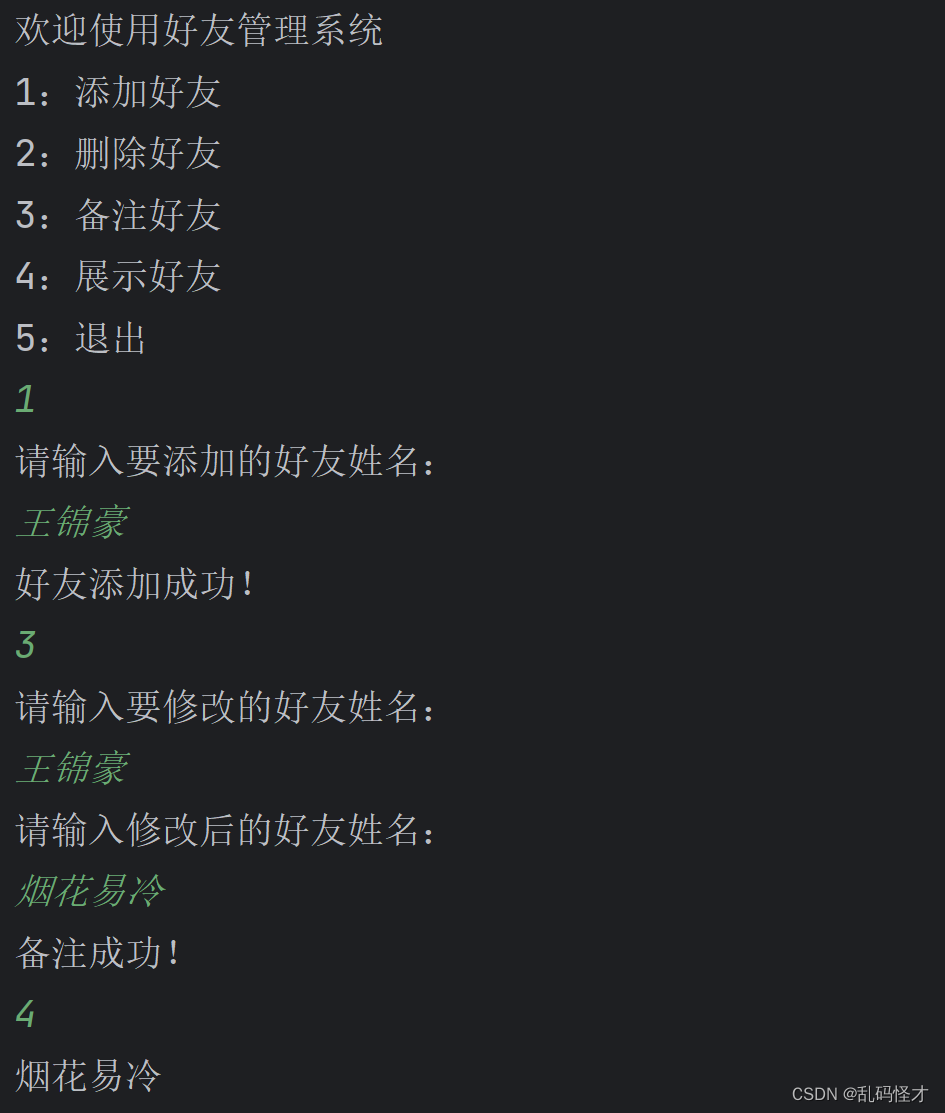

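1. A MATLAB implementation of DBO-LSTM for multivariate time series forecasting, in which the dung beetle optimizer (DBO) tunes a long short-term memory (LSTM) network. DBO searches over three LSTM hyperparameters: the initial learning rate, the number of hidden units, and the L2 regularization coefficient.

2. The runtime environment is MATLAB 2018b.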

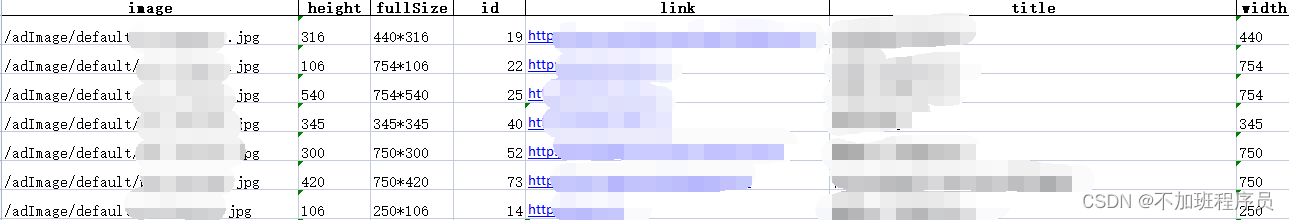

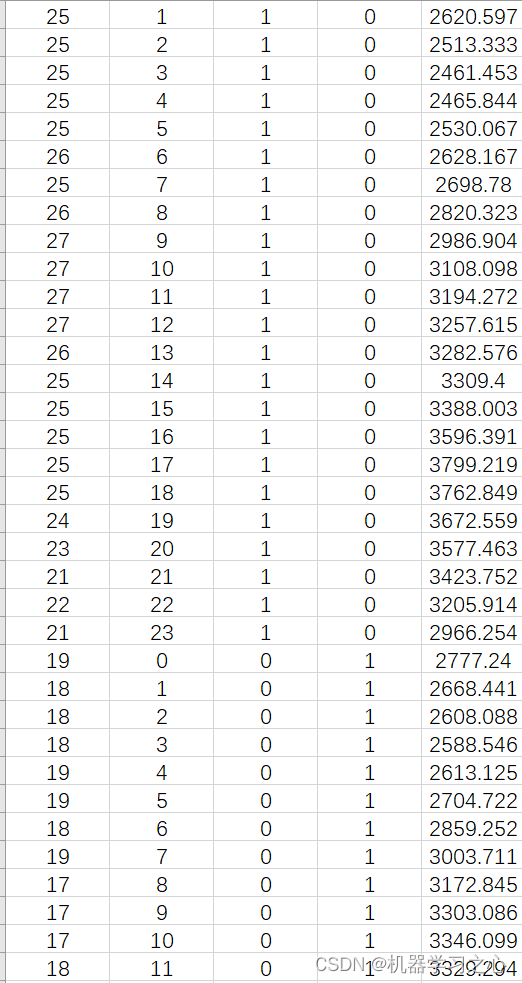

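3. The model takes multiple input features and predicts a single output variable, taking the influence of historical features into account (multivariate time series forecasting).

4. `data` is the dataset and main.m is the main program. Put all files in the same folder and run main.m.

5. The command window prints several evaluation metrics: R2, MSE, MAE, MAPE, and MBE.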
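The five metrics in item 5 can be computed in a few lines. The following is a minimal sketch, not the code from main.m: the sample values and variable names are hypothetical, and the sign convention for MBE (prediction minus observation) is an assumption.

```matlab
% Hypothetical observed and predicted values, for illustration only
T_true = [10 20 30 40];
T_pred = [12 18 33 39];

err  = T_pred - T_true;              % prediction error (assumed sign convention)
mse  = mean(err.^2);                 % mean squared error
mae  = mean(abs(err));               % mean absolute error
mape = mean(abs(err ./ T_true));     % mean absolute percentage error (T_true must be nonzero)
mbe  = mean(err);                    % mean bias error: positive means over-prediction
r2   = 1 - sum(err.^2) / sum((T_true - mean(T_true)).^2);   % coefficient of determination

fprintf('R2 = %.4f, MSE = %.4f, MAE = %.4f, MAPE = %.4f, MBE = %.4f\n', ...
        r2, mse, mae, mape, mbe);
```

MAPE divides by the observed values, so it is only meaningful when the target series contains no zeros.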

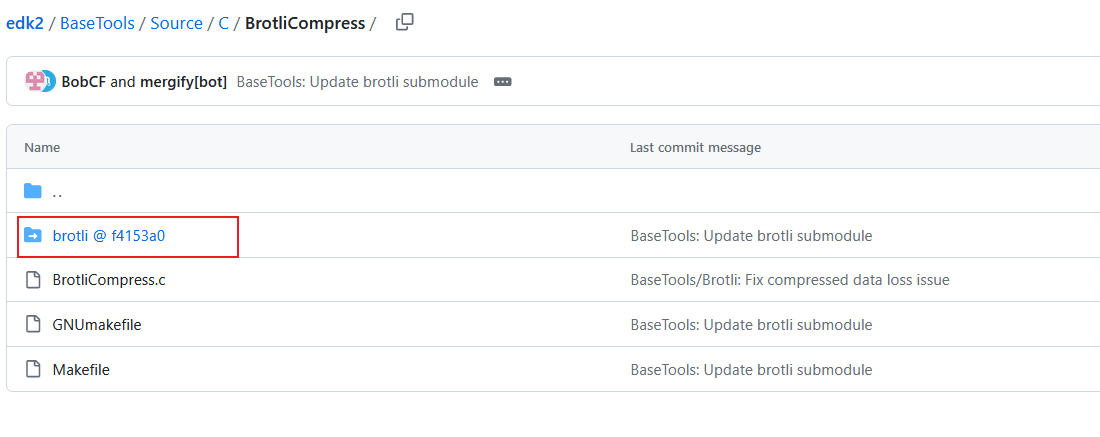

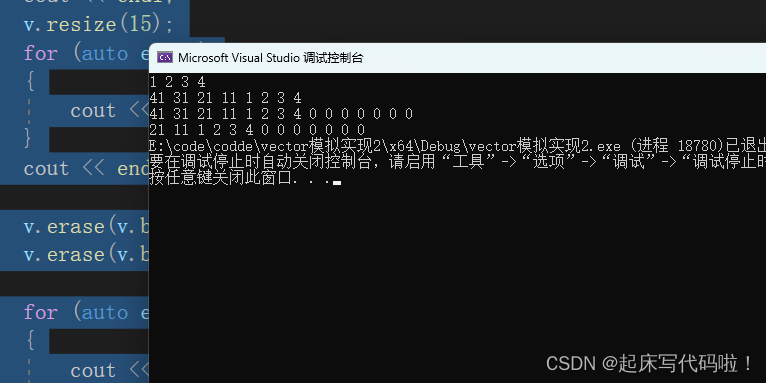
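"Taking the influence of historical features into account" (item 3 above) usually means turning the raw table into sliding windows before training. The sketch below shows one common way to do this. The toy data, the window length `kim`, and the horizon `zim` are assumptions for illustration; the data preparation in main.m is not shown in this post and may differ.

```matlab
% Hypothetical data: 6 time steps, 2 input features, target in the last column
data = [(1:6)', (11:16)', (101:106)'];
kim  = 2;    % number of past time steps used as history (assumed)
zim  = 1;    % forecast horizon in steps (assumed)

n_samples = size(data, 1) - kim - zim + 1;
n_cols    = size(data, 2);
X = zeros(n_samples, kim * n_cols);   % one flattened history window per row
Y = zeros(n_samples, 1);              % target value at the forecast step

for i = 1:n_samples
    window  = data(i : i + kim - 1, :);        % kim consecutive rows
    X(i, :) = reshape(window', 1, []);         % all columns of step 1, then step 2, ...
    Y(i)    = data(i + kim + zim - 1, end);    % last column, zim steps after the window
end
```

With these values the first sample is `X(1,:) = [1 11 101 2 12 102]` and its target is `Y(1) = 103`.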

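Program Design

- The complete program and data can be downloaded from the resources section: MATLAB implementation of DBO-LSTM, multivariate time series forecasting with an LSTM network tuned by the dung beetle optimizer.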

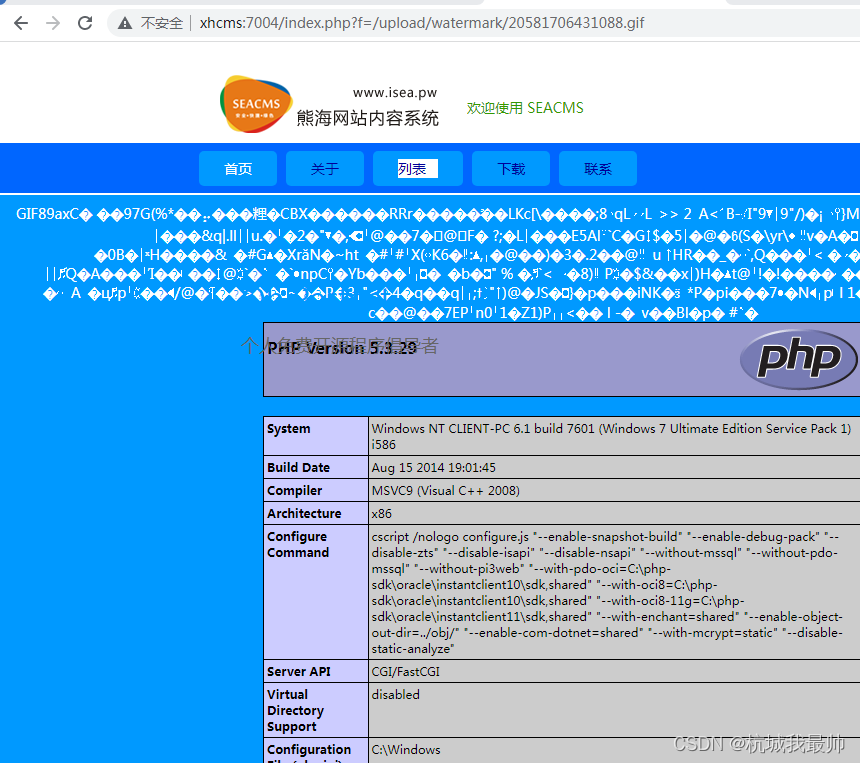

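%% Optimizer settings

SearchAgents_no = 5; % population size

Max_iteration = 8; % maximum number of iterations

dim = 3; % number of parameters to optimize

lb = [1e-4, 10, 1e-4]; % lower bounds (learning rate, hidden units, L2 regularization coefficient)

ub = [1e-2, 30, 1e-1]; % upper bounds (learning rate, hidden units, L2 regularization coefficient)

fitness = @(x)fical(x,p_train,t_train,f_); % objective function evaluated by DBO (defined in fical.m)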

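%% Record the best parameters

% Best_pos is the best position found by the DBO search (the optimizer call is not shown in this excerpt)

Best_pos(1, 2) = round(Best_pos(1, 2)); % the number of hidden units must be an integer

best_lr = Best_pos(1, 1); % best initial learning rate

best_hd = Best_pos(1, 2); % best number of hidden units

best_l2 = Best_pos(1, 3); % best L2 regularization coefficient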

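%% Build the model

% ---------------------- If you change the model structure, make the same change in fical.m --------------------------

layers = [

sequenceInputLayer(f_) % input layer with f_ features

lstmLayer(best_hd) % LSTM layer with best_hd hidden units

reluLayer % ReLU activation layer

fullyConnectedLayer(outdim) % fully connected layer with outdim outputs

regressionLayer]; % regression output layer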

%% Training options

% ---------------------- If you change the training options, make the same change in fical.m --------------------------

options = trainingOptions('adam', ... % Adam optimizer

'MaxEpochs', 500, ... % maximum number of epochs: 500

'InitialLearnRate', best_lr, ... % initial learning rate: best_lr

'LearnRateSchedule', 'piecewise', ... % piecewise learning-rate decay

'LearnRateDropFactor', 0.5, ... % learning-rate drop factor: 0.5

'LearnRateDropPeriod', 400, ... % after 400 epochs the learning rate becomes best_lr * 0.5

'Shuffle', 'every-epoch', ... % shuffle the training data every epoch

'ValidationPatience', Inf, ... % disable validation-based early stopping

'L2Regularization', best_l2, ... % L2 regularization coefficient

'Plots', 'training-progress', ... % plot the training progress

'Verbose', false);

%% Train the model

net = trainNetwork(p_train, t_train, layers, options);

%% Predict on the training and test sets

t_sim1 = predict(net, p_train);

t_sim2 = predict(net, p_test );

%% Reverse the normalization

T_sim1 = mapminmax('reverse', t_sim1, ps_output);

T_sim2 = mapminmax('reverse', t_sim2, ps_output);

T_sim1=double(T_sim1);

T_sim2=double(T_sim2);
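The last step above maps the network output back to the original scale with `mapminmax('reverse', ...)`. The sketch below reproduces the underlying min-max arithmetic in base MATLAB for a single row, to make the round trip explicit. The sample values and the target range [0, 1] are assumptions; the range actually stored in `ps_output` is not shown in this post.

```matlab
t_raw = [50 60 80 100];      % hypothetical target values
ymin = 0; ymax = 1;          % assumed normalization range

xmin = min(t_raw);
xmax = max(t_raw);

% Forward and reverse min-max mappings for one row of data
normalize   = @(x) (ymax - ymin) .* (x - xmin) ./ (xmax - xmin) + ymin;
denormalize = @(y) (y - ymin) .* (xmax - xmin) ./ (ymax - ymin) + xmin;

t_norm = normalize(t_raw);       % values scaled into [ymin, ymax]
t_back = denormalize(t_norm);    % recovers t_raw up to rounding error
```

The reverse mapping must use the minimum and maximum of the training targets, which is why the script passes the stored settings `ps_output` rather than recomputing them from the predictions.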

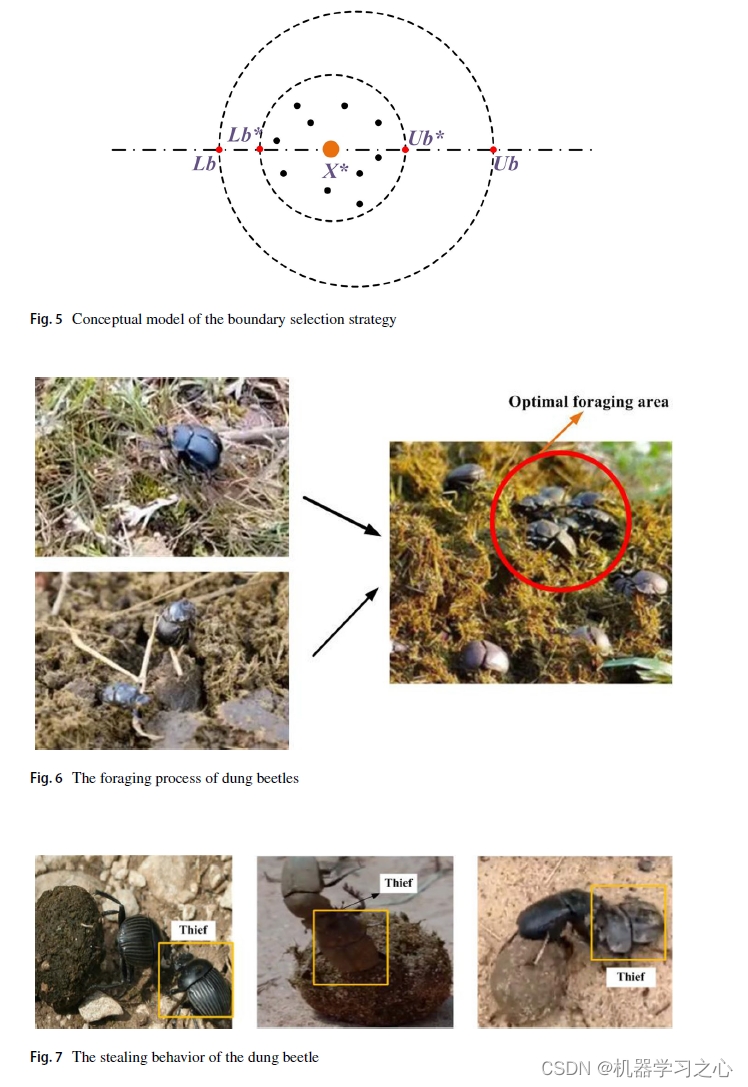
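For reference, the piecewise schedule configured in the training options (drop factor 0.5, drop period 400, 500 epochs) implies a single halving of the learning rate near the end of training. The sketch below works out the per-epoch rate, assuming the rate is multiplied by the factor once every 400 completed epochs; the value of `best_lr` is hypothetical.

```matlab
best_lr     = 0.005;   % hypothetical value inside the search range [1e-4, 1e-2]
drop_factor = 0.5;
drop_period = 400;
max_epochs  = 500;

epochs = 1:max_epochs;

% Number of completed drop periods before each epoch sets the exponent
lr = best_lr .* drop_factor .^ floor((epochs - 1) ./ drop_period);
```

Under this assumption, epochs 1 to 400 train at `best_lr` and epochs 401 to 500 train at `best_lr * 0.5`.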

pFit = fit;

pX = x;

XX=pX;

[ fMin, bestI ] = min( fit ); % fMin denotes the global optimum fitness value

bestX = x( bestI, : ); % bestX denotes the global optimum position corresponding to fMin

% Start updating the solutions.

for t = 1 : M

[fmax,B]=max(fit);

worse= x(B,:);

r2=rand(1);

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

for i = 1 : pNum

if(r2<0.9)

r1=rand(1);

a=rand(1,1);

if (a>0.1)

a=1;

else

a=-1;

end

x( i , : ) = pX( i , :)+0.3*abs(pX(i , : )-worse)+a*0.1*(XX( i , :)); % Equation (1)

else

aaa= randperm(180,1);

if ( aaa==0 ||aaa==90 ||aaa==180 )

x( i , : ) = pX( i , :); % keep the position unchanged when theta is 0, pi/2 or pi

else

theta= aaa*pi/180;

x( i , : ) = pX( i , :)+tan(theta).*abs(pX(i , : )-XX( i , :)); % Equation (2)

end

end

x( i , : ) = Bounds( x(i , : ), lb, ub );

fit( i ) = fobj( x(i , : ) );

end

[ fMMin, bestII ] = min( fit ); % fMMin denotes the current optimum fitness value

bestXX = x( bestII, : ); % bestXX denotes the current optimum position

R=1-t/M; % R decreases linearly and reaches 0 at the last iteration

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Xnew1 = bestXX.*(1-R);

Xnew2 =bestXX.*(1+R); %%% Equation (3)

Xnew1= Bounds( Xnew1, lb, ub );

Xnew2 = Bounds( Xnew2, lb, ub );

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

Xnew11 = bestX.*(1-R);

Xnew22 =bestX.*(1+R); %%% Equation (5)

Xnew11= Bounds( Xnew11, lb, ub );

Xnew22 = Bounds( Xnew22, lb, ub );

%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%%

for i = ( pNum + 1 ) :12 % Equation (4)
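The DBO listing above calls a `Bounds` helper that is not shown in this post. The sketch below uses a hypothetical stand-in, `clamp`, which simply clips each dimension to `[lb, ub]`, and applies it to one ball-rolling update (Equation (1)) with the bounds from the main script. The positions and the fixed direction coefficient `a` are made-up values for illustration; the real `Bounds` function may behave differently.

```matlab
lb = [1e-4, 10, 1e-4];   % lower bounds from the main script
ub = [1e-2, 30, 1e-1];   % upper bounds from the main script

% Hypothetical stand-in for Bounds: clip every dimension to [lb, ub]
clamp = @(s, lo, hi) min(max(s, lo), hi);

pX    = [5e-3, 26, 1e-2];   % hypothetical current position
worse = [9e-3, 12, 5e-2];   % hypothetical worst position in the population
XX    = pX;                 % previous-iteration position (assumed equal here)
a     = 1;                  % direction coefficient, fixed instead of random

x_new = pX + 0.3 * abs(pX - worse) + a * 0.1 * XX;   % Equation (1)
x_new = clamp(x_new, lb, ub);                        % hidden units 32.8 -> 30

x_new(2) = round(x_new(2));   % hidden units must be an integer for the LSTM layer
```

Here the second dimension overshoots the upper bound (32.8) and is clipped to 30, while the learning rate and regularization coefficient stay inside their ranges.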

References

[1] https://blog.csdn.net/kjm13182345320/article/details/129215161

[2] https://blog.csdn.net/kjm13182345320/article/details/128105718