Using Apache Zeppelin with Apache Airflow, Part 1

Contents

- Using Apache Zeppelin with Apache Airflow, Part 1

- Foreword

- I. Installing Airflow

- II. Steps

- 1. Goal

- 2. Writing the DAG

- 3. Loading and running the DAG

- Summary

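Foreword

Having covered how to use Zeppelin earlier, today I start chaining tasks together with Airflow.

Apache Airflow and Apache Zeppelin are two different tools built for different purposes. Airflow orchestrates and schedules workflows, while Zeppelin is a notebook tool for interactive data analysis and visualization. Their main uses differ, but they can be combined to cover more complex data processing and analysis needs.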

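Here are some ways to combine Airflow and Zeppelin:

- Airflow scheduling Zeppelin notebooks:

  - Write scheduled tasks in Airflow so that a Zeppelin notebook runs at a given time or when an event fires.

  - From an Airflow task, call Zeppelin's REST API (for example with a command-line tool such as curl) to trigger the notebook run.

- Data pipelines:

  - Use Airflow to orchestrate data processing and transformation tasks, such as extracting data from a source and then cleaning and transforming it.

  - Create notebooks in Zeppelin for further analysis, visualization, and report generation.

  - When the Airflow tasks finish, trigger the Zeppelin notebook so that the analysis runs on the latest data.

- Parameter passing:

  - Pass parameter values from Airflow to the Zeppelin notebook so that information is shared between tasks.

  - The Zeppelin notebook reads the values supplied by the Airflow task and adapts its analysis to them.

- Logging and monitoring:

  - Use Airflow to monitor workflow runs and inspect task logs and execution states.

  - Record and visualize key metrics of the Airflow workflows in Zeppelin to get a fuller picture of workflow performance.

- Integrating data stores:

  - Airflow can extract data from different sources and hand it to Zeppelin for further analysis.

  - Zeppelin can take the data produced by Airflow tasks and use it for deeper data mining and analysis.

Used together, each tool plays to its strength: Airflow provides scheduling and control, and Zeppelin provides interactive analysis and visualization on top of the same data.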
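To make the parameter-passing idea more concrete, here is a minimal sketch of how an Airflow task could prepare the HTTP request that runs a Zeppelin paragraph with parameters. It assumes that the run endpoint accepts an optional JSON body whose `params` map fills the paragraph's dynamic forms, which is how I understand the Zeppelin REST API; the host, the IDs, and the `run_date` parameter name are hypothetical. The request is only built, not sent.

```python
import json
import urllib.request


def build_run_paragraph_request(base_url, note_id, paragraph_id, params=None):
    """Build (but do not send) a POST request that runs one Zeppelin paragraph."""
    url = f"{base_url.rstrip('/')}/api/notebook/run/{note_id}/{paragraph_id}"
    # Assumption: Zeppelin reads dynamic-form values from the "params" map.
    payload = json.dumps({"params": params or {}}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical host, IDs and parameter name, for illustration only.
    request = build_run_paragraph_request(
        "http://localhost:8080", "NOTE_ID", "PARAGRAPH_ID", {"run_date": "2024-01-22"}
    )
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Passing the returned object to `urllib.request.urlopen` would actually send it; inside the notebook, the paragraph would then need a dynamic form with the same name to receive the value.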

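I. Installing Airflow

Installation guide:

https://airflow.apache.org/docs/apache-airflow/stable/start.html

On CentOS 7.9, Airflow fails at startup right after installation because the system SQLite library is too old. SQLite has to be set up as described here: https://airflow.apache.org/docs/apache-airflow/2.8.0/howto/set-up-database.html#setting-up-a-sqlite-database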

[root@slas bin]# airflow standalone
Traceback (most recent call last):
  File "/root/.pyenv/versions/3.9.10/bin/airflow", line 5, in <module>
    from airflow.__main__ import main
  File "/root/.pyenv/versions/3.9.10/lib/python3.9/site-packages/airflow/__init__.py", line 52, in <module>
    from airflow import configuration, settings
  File "/root/.pyenv/versions/3.9.10/lib/python3.9/site-packages/airflow/configuration.py", line 2326, in <module>
    conf.validate()
  File "/root/.pyenv/versions/3.9.10/lib/python3.9/site-packages/airflow/configuration.py", line 718, in validate
    self._validate_sqlite3_version()
  File "/root/.pyenv/versions/3.9.10/lib/python3.9/site-packages/airflow/configuration.py", line 824, in _validate_sqlite3_version
    raise AirflowConfigException(
airflow.exceptions.AirflowConfigException: error: SQLite C library too old (< 3.15.0). See https://airflow.apache.org/docs/apache-airflow/2.8.0/howto/set-up-database.html#setting-up-a-sqlite-database
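Before (or after) upgrading SQLite, it can help to check which SQLite C library the Python interpreter is actually linked against, since that is what Airflow validates. The sketch below uses only the standard `sqlite3` module; the 3.15.0 threshold is taken from the error message above, and the helper name is my own.

```python
import sqlite3

# Minimum version quoted in the AirflowConfigException message above.
MIN_SQLITE_VERSION = (3, 15, 0)


def sqlite_is_new_enough(version_info=None, minimum=MIN_SQLITE_VERSION):
    """Return True if the given (or the linked) SQLite version meets the minimum."""
    if version_info is None:
        # Version of the SQLite C library that this Python build uses.
        version_info = sqlite3.sqlite_version_info
    return tuple(version_info) >= tuple(minimum)


if __name__ == "__main__":
    print("SQLite library version:", sqlite3.sqlite_version)
    print("New enough for Airflow:", sqlite_is_new_enough())
```

Run it with the same interpreter that runs `airflow` (here the pyenv Python 3.9.10), because a different interpreter may be linked against a different SQLite library.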

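II. Steps

1. Goal

I want to build a simple demo with two nodes that does what the figure shows: read a CSV file, then deduplicate it:

Reading the CSV input is done in Airflow, and the deduplication is done in Zeppelin.

2. Writing the DAG

First, implement extract_data_script.py, a simple script that reads the specified columns from a CSV file and writes them to a new CSV file.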

import argparse

import pandas as pd


def extract_and_write_data(date, output_csv, columns_to_extract):
    # Read only the specified columns from that day's input file
    csv_file_path = f"/home/works/datasets/data_{date}.csv"
    df = pd.read_csv(csv_file_path, usecols=columns_to_extract)

    # Write the data to a new CSV file
    df.to_csv(output_csv, index=False)


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.add_argument("--date", type=str, required=True, help="Date parameter passed by Airflow")
    args = parser.parse_args()

    # Output CSV file path (replace with the actual path)
    output_csv_path = "/home/works/output/extracted_data.csv"

    # Columns to extract
    columns_to_extract = ['column1', 'column2', 'column3']

    # Extract the data and write it out
    extract_and_write_data(args.date, output_csv_path, columns_to_extract)
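Because the script builds the input path directly from `--date`, a malformed value only shows up later as a missing-file error. As an optional hardening step, the sketch below validates the argument up front. It assumes the date always arrives in the `YYYY-MM-DD` form that the `{{ ds }}` template renders; `iso_date` and `build_parser` are names I made up for the example.

```python
import argparse
from datetime import datetime


def iso_date(value):
    """argparse type: accept only zero-padded YYYY-MM-DD and return it unchanged."""
    message = f"expected YYYY-MM-DD, got {value!r}"
    try:
        parsed = datetime.strptime(value, "%Y-%m-%d").date()
    except ValueError:
        raise argparse.ArgumentTypeError(message) from None
    # strptime also accepts "2024-1-2"; reject anything not in canonical form.
    if parsed.isoformat() != value:
        raise argparse.ArgumentTypeError(message)
    return value


def build_parser():
    parser = argparse.ArgumentParser()
    parser.add_argument("--date", type=iso_date, required=True,
                        help="Date parameter passed by Airflow")
    return parser


if __name__ == "__main__":
    args = build_parser().parse_args(["--date", "2024-01-20"])
    # Same path pattern as in extract_data_script.py
    print(f"/home/works/datasets/data_{args.date}.csv")
```

With this in place, a value such as `20240122` makes the script exit immediately with a usage error instead of failing inside `pd.read_csv`.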

Next, create a Python notebook in Zeppelin containing the code that the Airflow DAG will invoke. It loads the data previously extracted into output/extracted_data.csv:

%python

# Import the required library
import pandas as pd

# Path of the data extracted earlier by the Airflow task
csv_file_path = "/home/works/output/extracted_data.csv"

# Read the CSV file
df = pd.read_csv(csv_file_path)

# Drop rows where column1 is empty
df = df[df['column1'].notnull()]

# Deduplicate on the combination of column2 and column3
deduplicated_df = df.drop_duplicates(subset=["column2", "column3"])

# Save the deduplicated result to a new CSV file
deduplicated_df.to_csv("/home/works/output/dd_data.csv", index=False)
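To make the order of operations explicit, here is a small pure-Python sketch of the same logic: filter out rows whose `column1` is missing, then keep the first row for each `(column2, column3)` pair, which matches the default `keep="first"` behaviour of `drop_duplicates`. It is only an illustration with made-up sample rows, not a replacement for the pandas code.

```python
def filter_and_deduplicate(rows, required="column1", keys=("column2", "column3")):
    """Drop rows with a missing `required` value, then keep the first row per key."""
    seen = set()
    result = []
    for row in rows:
        # Step 1: skip rows whose required column is missing.
        if row.get(required) is None:
            continue
        # Step 2: keep only the first row for each key combination.
        key = tuple(row[k] for k in keys)
        if key in seen:
            continue
        seen.add(key)
        result.append(row)
    return result


if __name__ == "__main__":
    # Hypothetical sample data, for illustration only.
    sample = [
        {"column1": "a", "column2": 1, "column3": "x"},
        {"column1": None, "column2": 2, "column3": "y"},  # filtered out
        {"column1": "b", "column2": 1, "column3": "x"},   # duplicate key (1, "x")
        {"column1": "c", "column2": 2, "column3": "y"},   # kept: earlier (2, "y") row was filtered
    ]
    for row in filter_and_deduplicate(sample):
        print(row)
```

The last sample row shows why the order matters: had the deduplication run before the filter, the `(2, "y")` key would have been claimed by the row with the missing `column1`, and no row with that key would have survived.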

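Save this Zeppelin notebook and write down its note ID and the paragraph ID; the Airflow DAG needs both to call the paragraph through the REST API.

Next, write zeppelin_integration.py in VSCode with the code below, and upload it to the $AIRFLOW_HOME/dags directory: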

from datetime import datetime, timedelta

from airflow import DAG
# Airflow 2.x import path; airflow.operators.bash_operator is deprecated
from airflow.operators.bash import BashOperator

default_args = {
    'owner': 'airflow',
    'depends_on_past': False,
    'start_date': datetime(2024, 1, 1),
    'email_on_failure': False,
    'email_on_retry': False,
    'retries': 1,
    'retry_delay': timedelta(minutes=5),
}

dag = DAG(
    'zeppelin_integration',
    default_args=default_args,
    schedule=timedelta(days=1),
)

# Task 1: extract the selected columns for the run's logical date ({{ ds }} renders as YYYY-MM-DD)
extract_data_task = BashOperator(
    task_id='extract_data',
    bash_command='python /home/works/z/extract_data_script.py --date {{ ds }}',
    dag=dag,
)

# Task 2: run the Zeppelin paragraph synchronously via the REST API
# (replace IP:PORT, the note ID and the paragraph ID with your own values)
run_zeppelin_notebook_task = BashOperator(
    task_id='run_zeppelin_notebook',
    bash_command='curl -X POST -HContent-Type:application/json http://IP:PORT/api/notebook/run/2JND7T68E/paragraph_1705372327640_1111015359',
    dag=dag,
)

# Set the task dependencies
extract_data_task >> run_zeppelin_notebook_task
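One caveat with the curl approach: without `--fail`, curl exits with 0 as long as the HTTP exchange completes, so the task may be marked successful even when the paragraph itself did not succeed. A possible refinement is to inspect the JSON that Zeppelin returns. The sketch below checks for the `status` and `body.code` fields seen in a successful response such as `{"status":"OK","body":{"code":"SUCCESS","msg":[]}}`; the shape of a failure response is my assumption, and `ZeppelinRunError` and `check_run_response` are hypothetical names.

```python
import json


class ZeppelinRunError(RuntimeError):
    """Raised when the Zeppelin response does not report a successful run."""


def check_run_response(body_text):
    """Parse Zeppelin's JSON reply and raise unless it reports OK / SUCCESS."""
    response = json.loads(body_text)
    status = response.get("status")
    body = response.get("body")
    # Assumption: a finished paragraph reports its result code under body.code.
    code = body.get("code") if isinstance(body, dict) else None
    if status != "OK" or code != "SUCCESS":
        raise ZeppelinRunError(
            f"paragraph run failed: status={status!r}, code={code!r}"
        )
    return response


if __name__ == "__main__":
    ok = '{"status":"OK","body":{"code":"SUCCESS","msg":[]}}'
    print(check_run_response(ok)["body"]["code"])
```

A function like this could be called from a Python-based task that sends the request itself, so that an unsuccessful paragraph raises an exception and Airflow's retry settings apply.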

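3. Loading and running the DAG

Test with the commands below: first run the file with Python to check that it parses and imports without errors, then list the tasks and test them one by one: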

# python zeppelin_integration.py

# airflow tasks list zeppelin_integration

extract_data

run_zeppelin_notebook

# airflow tasks test zeppelin_integration extract_data 20240122

[2024-01-22T08:57:45.805+0800] {dagbag.py:538} INFO - Filling up the DagBag from /root/airflow/dags

[2024-01-22T08:57:47.853+0800] {taskinstance.py:1957} INFO - Dependencies all met for dep_context=non-requeueable deps ti=<TaskInstance: zeppelin_integration.extract_data __airflow_temporary_run_2024-01-22T00:57:47.740537+00:00__ [None]>

[2024-01-22T08:57:47.860+0800] {taskinstance.py:1957} INFO - Dependencies all met for dep_context=requeueable deps ti=<TaskInstance: zeppelin_integration.extract_data __airflow_temporary_run_2024-01-22T00:57:47.740537+00:00__ [None]>

[2024-01-22T08:57:47.861+0800] {taskinstance.py:2171} INFO - Starting attempt 1 of 2

[2024-01-22T08:57:47.861+0800] {taskinstance.py:2250} WARNING - cannot record queued_duration for task extract_data because previous state change time has not been saved

[2024-01-22T08:57:47.862+0800] {taskinstance.py:2192} INFO - Executing <Task(BashOperator): extract_data> on 2024-01-20T00:00:00+00:00

[2024-01-22T08:57:47.900+0800] {taskinstance.py:2481} INFO - Exporting env vars: AIRFLOW_CTX_DAG_OWNER='airflow' AIRFLOW_CTX_DAG_ID='zeppelin_integration' AIRFLOW_CTX_TASK_ID='extract_data' AIRFLOW_CTX_EXECUTION_DATE='2024-01-20T00:00:00+00:00' AIRFLOW_CTX_TRY_NUMBER='1' AIRFLOW_CTX_DAG_RUN_ID='__airflow_temporary_run_2024-01-22T00:57:47.740537+00:00__'

[2024-01-22T08:57:47.904+0800] {subprocess.py:63} INFO - Tmp dir root location: /tmp

[2024-01-22T08:57:47.905+0800] {subprocess.py:75} INFO - Running command: ['/bin/bash', '-c', 'python /home/works/z/extract_data_script.py --date 2024-01-20']

[2024-01-22T08:57:47.914+0800] {subprocess.py:86} INFO - Output:

[2024-01-22T08:57:48.553+0800] {subprocess.py:97} INFO - Command exited with return code 0

[2024-01-22T08:57:48.632+0800] {taskinstance.py:1138} INFO - Marking task as SUCCESS. dag_id=zeppelin_integration, task_id=extract_data, execution_date=20240120T000000, start_date=, end_date=20240122T005748

# airflow tasks test zeppelin_integration run_zeppelin_notebook 20240122

[2024-01-22T09:01:43.665+0800] {dagbag.py:538} INFO - Filling up the DagBag from /root/airflow/dags

[2024-01-22T09:01:45.835+0800] {taskinstance.py:1957} INFO - Dependencies all met for dep_context=non-requeueable deps ti=<TaskInstance: zeppelin_integration.run_zeppelin_notebook __airflow_temporary_run_2024-01-22T01:01:45.733341+00:00__ [None]>

[2024-01-22T09:01:45.843+0800] {taskinstance.py:1957} INFO - Dependencies all met for dep_context=requeueable deps ti=<TaskInstance: zeppelin_integration.run_zeppelin_notebook __airflow_temporary_run_2024-01-22T01:01:45.733341+00:00__ [None]>

[2024-01-22T09:01:45.844+0800] {taskinstance.py:2171} INFO - Starting attempt 1 of 2

[2024-01-22T09:01:45.844+0800] {taskinstance.py:2250} WARNING - cannot record queued_duration for task run_zeppelin_notebook because previous state change time has not been saved

[2024-01-22T09:01:45.845+0800] {taskinstance.py:2192} INFO - Executing <Task(BashOperator): run_zeppelin_notebook> on 2024-01-22T00:00:00+00:00

[2024-01-22T09:01:45.904+0800] {taskinstance.py:2481} INFO - Exporting env vars: AIRFLOW_CTX_DAG_OWNER='airflow' AIRFLOW_CTX_DAG_ID='zeppelin_integration' AIRFLOW_CTX_TASK_ID='run_zeppelin_notebook' AIRFLOW_CTX_EXECUTION_DATE='2024-01-22T00:00:00+00:00' AIRFLOW_CTX_TRY_NUMBER='1' AIRFLOW_CTX_DAG_RUN_ID='__airflow_temporary_run_2024-01-22T01:01:45.733341+00:00__'

[2024-01-22T09:01:45.909+0800] {subprocess.py:63} INFO - Tmp dir root location: /tmp

[2024-01-22T09:01:45.910+0800] {subprocess.py:75} INFO - Running command: ['/bin/bash', '-c', 'curl -X POST -HContent-Type:application/json http://100.100.30.220:8181/api/notebook/run/2JND7T68E/paragraph_1705372327640_1111015359']

[2024-01-22T09:01:45.921+0800] {subprocess.py:86} INFO - Output:

[2024-01-22T09:01:45.931+0800] {subprocess.py:93} INFO - % Total % Received % Xferd Average Speed Time Time Time Current

[2024-01-22T09:01:45.931+0800] {subprocess.py:93} INFO - Dload Upload Total Spent Left Speed

100 50 100 50 0 0 8 0 0:00:06 0:00:06 --:--:-- 12

[2024-01-22T09:01:52.003+0800] {subprocess.py:93} INFO - {"status":"OK","body":{"code":"SUCCESS","msg":[]}}

[2024-01-22T09:01:52.003+0800] {subprocess.py:97} INFO - Command exited with return code 0

[2024-01-22T09:01:52.098+0800] {taskinstance.py:1138} INFO - Marking task as SUCCESS. dag_id=zeppelin_integration, task_id=run_zeppelin_notebook, execution_date=20240122T000000, start_date=, end_date=20240122T010152

Finally, start the scheduler with the airflow scheduler command so that Airflow picks up the DAG.

# airflow scheduler

  ____________       _____________
 ____    |__( )_________  __/__  /________      __
____  /| |_  /__  ___/_  /_ __  /_  __ \_ | /| / /
___  ___ |  / _  /   _  __/ _  / / /_/ /_ |/ |/ /
 _/_/  |_/_/  /_/    /_/    /_/  \____/____/|__/

[2024-01-22T09:28:21.829+0800] {task_context_logger.py:63} INFO - Task context logging is enabled

[2024-01-22T09:28:21.831+0800] {executor_loader.py:115} INFO - Loaded executor: SequentialExecutor

[2024-01-22T09:28:21.868+0800] {scheduler_job_runner.py:808} INFO - Starting the scheduler

[2024-01-22T09:28:21.869+0800] {scheduler_job_runner.py:815} INFO - Processing each file at most -1 times

...

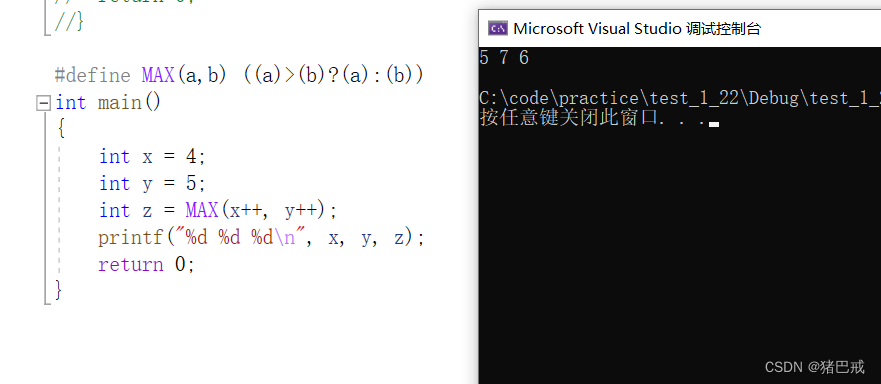

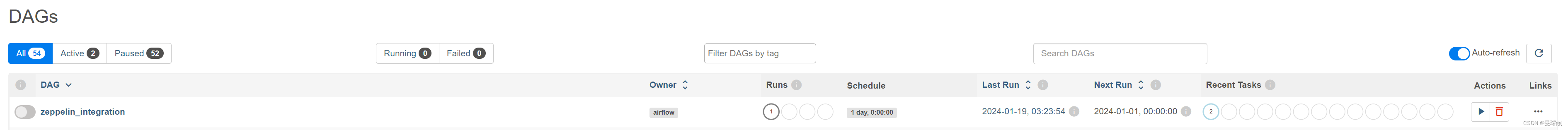

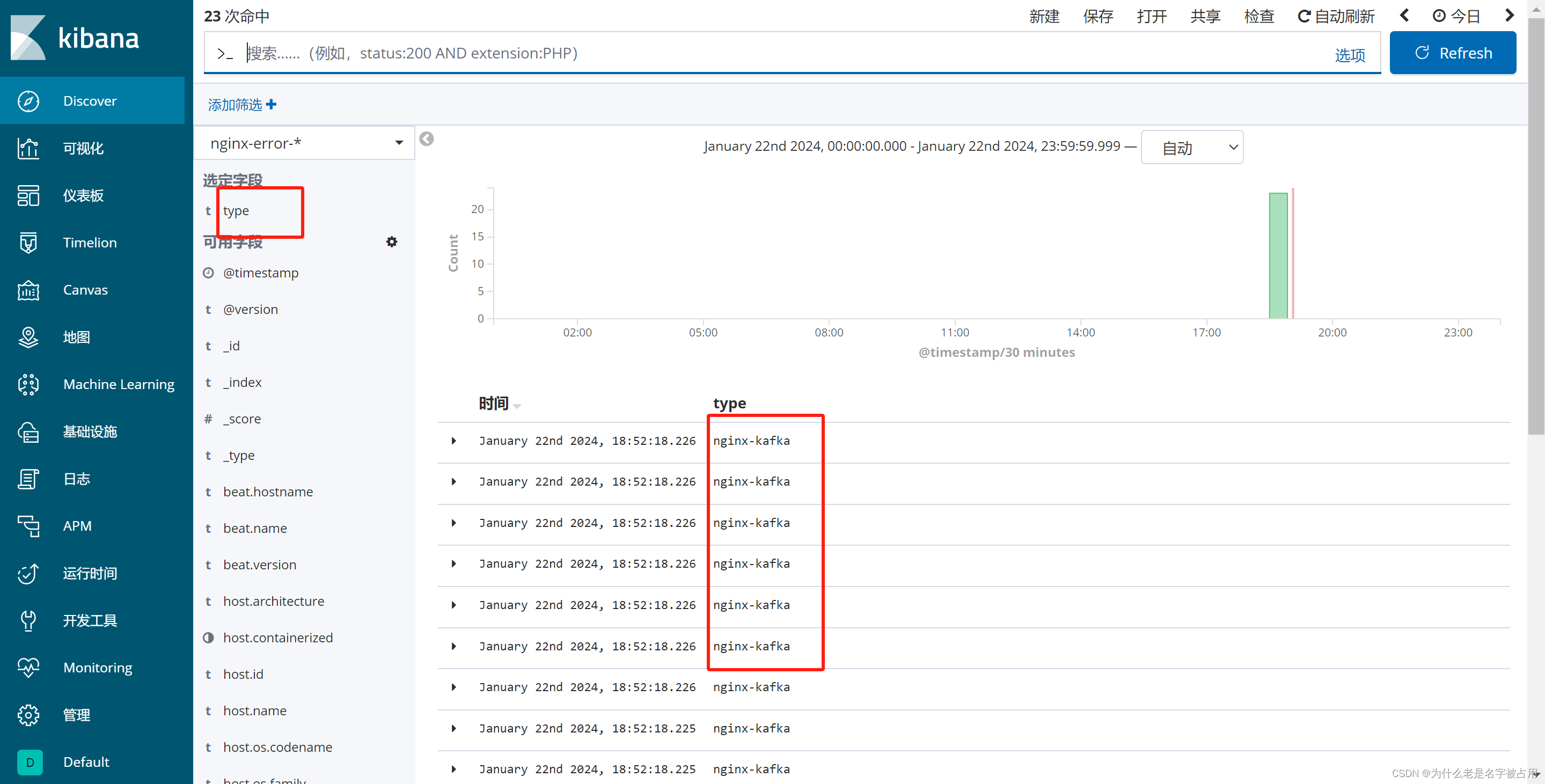

A new DAG appears in the web UI, as shown in the figure:

It can be triggered from the Actions column.

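Summary

That is all for today. Overall, integrating the two tools is quite straightforward, and I look forward to applying the combination to more use cases.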
