Background

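Tenants on our big data platform need to use UDFs. They connect with beeline, which means every query goes through HiveServer2 (HS2). With several HS2 instances, however, a function is not shared between them. The JAR first has to be uploaded to HDFS, and then CREATE FUNCTION has to be run manually on each HS2. After that, the function is permanent and survives HS2 restarts.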
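Because the registration has to be repeated on every HS2, it can be scripted instead of typed into beeline once per instance. The following is a minimal sketch under stated assumptions, not the procedure used here. The HS2 URLs, the user name `hive` and the HDFS paths are hypothetical. The Hive JDBC driver (`hive-jdbc`, class `org.apache.hive.jdbc.HiveDriver`) must be on the classpath at run time, and authentication such as Kerberos is left out. The sketch sends one `CREATE FUNCTION ... USING JAR` statement to each instance in turn. The BouncyCastle JAR is listed as a second resource because the UDF class `org.picc.encrypt.Sm3Fun` depends on it.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.Arrays;
import java.util.List;
import java.util.StringJoiner;

public class RegisterUdfOnAllHs2 {

    // Hypothetical values: replace with your own function name, user and HDFS paths.
    static final String FUNCTION_NAME = "sm3";
    static final String CLASS_NAME = "org.picc.encrypt.Sm3Fun";
    static final String USER = "hive";
    static final String[] JAR_URIS = {
            "hdfs:///user/hive/udf/sm3UDF-1.0.jar",
            "hdfs:///user/hive/udf/bcprov-jdk15on-1.68.jar"
    };

    // Hypothetical HS2 endpoints; the statement is executed on every one of them.
    static final List<String> HS2_URLS = Arrays.asList(
            "jdbc:hive2://hs2-node1:10000/default",
            "jdbc:hive2://hs2-node2:10000/default");

    // Builds: CREATE FUNCTION name AS 'class' USING JAR 'uri1', JAR 'uri2', ...
    public static String createFunctionSql(String name, String className, String... jarUris) {
        if (jarUris.length == 0) {
            throw new IllegalArgumentException("at least one JAR URI is required");
        }
        StringJoiner resources = new StringJoiner(", ");
        for (String uri : jarUris) {
            resources.add("JAR '" + uri + "'");
        }
        return "CREATE FUNCTION " + name + " AS '" + className + "' USING " + resources;
    }

    public static void main(String[] args) {
        try {
            // Loads the Hive JDBC driver; fails if hive-jdbc is not on the classpath.
            Class.forName("org.apache.hive.jdbc.HiveDriver");
        } catch (ClassNotFoundException e) {
            System.err.println("Hive JDBC driver not found on the classpath");
            return;
        }
        String sql = createFunctionSql(FUNCTION_NAME, CLASS_NAME, JAR_URIS);
        for (String url : HS2_URLS) {
            try (Connection conn = DriverManager.getConnection(url, USER, "");
                 Statement stmt = conn.createStatement()) {
                stmt.execute(sql);
                System.out.println("OK     " + url);
            } catch (SQLException e) {
                // Report and continue, so one failing instance does not stop the others.
                System.out.println("FAILED " + url + ": " + e.getMessage());
            }
        }
    }
}
```

Failures are reported per instance instead of aborting the loop, so the output shows which HS2 nodes rejected the statement.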

Investigation

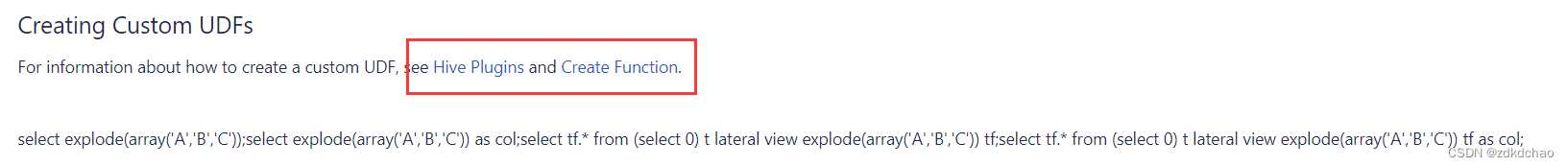

I started with the official Hive documentation:

https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF#LanguageManualUDF-CreatingCustomUDFs

Running ADD JAR and CREATE FUNCTION from beeline works, but the function only takes effect on the HS2 instance that executed them.

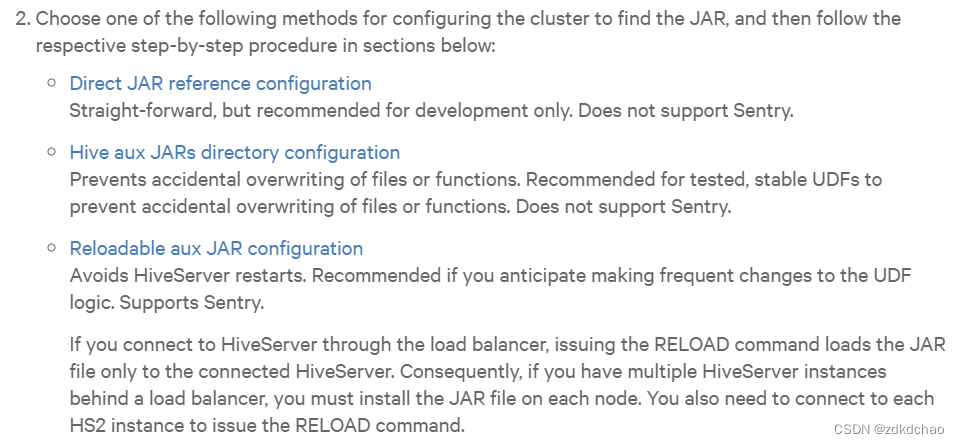

Next, I checked the CDH documentation.

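It says that when configuring UDFs you need to consider whether HS2 can be restarted and whether Sentry is enabled, and it lists three approaches: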

https://docs.cloudera.com/documentation/enterprise/latest/topics/cm_mc_hive_udf.html

Direct JAR reference configuration

Straight-forward, but recommended for development only. Does not support Sentry.

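I tried this approach. The function is permanent and still works after a restart, but it only takes effect on the current HS2. With multiple HS2 instances, CREATE FUNCTION has to be run on every one of them.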

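Although the documentation says this approach does not support Sentry, it caused no problems on our cluster, which has Sentry enabled: Sentry authorization still worked.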

pom.xml

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>org.example</groupId>
    <artifactId>sm3UDF</artifactId>
    <version>1.0</version>
    <packaging>jar</packaging>

    <name>sm3UDF</name>
    <url>http://maven.apache.org</url>

    <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    </properties>

    <!-- The *-cdh6.3.2 artifacts are published in Cloudera's repository, not Maven Central. -->
    <repositories>
        <repository>
            <id>cloudera</id>
            <url>https://repository.cloudera.com/artifactory/cloudera-repos/</url>
        </repository>
    </repositories>

    <dependencies>
        <!-- SM3 implementation. Unless BouncyCastle is already on the HS2 classpath,
             bundle it into the UDF JAR or register its JAR alongside the UDF JAR. -->
        <dependency>
            <groupId>org.bouncycastle</groupId>
            <artifactId>bcprov-jdk15on</artifactId>
            <version>1.68</version>
        </dependency>
        <!-- Hadoop and Hive are already on the HS2 classpath, so compile against them only. -->
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>3.1.1</version>
            <scope>provided</scope>
        </dependency>
        <!--
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.13.2</version>
            <scope>test</scope>
        </dependency>
        -->
        <dependency>
            <groupId>org.apache.hive</groupId>
            <artifactId>hive-exec</artifactId>
            <version>2.1.1-cdh6.3.2</version>
            <scope>provided</scope>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.1</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>
```

Sm3Fun.java

```java
package org.picc.encrypt;

import java.nio.charset.StandardCharsets;

import org.apache.commons.codec.binary.Hex;
import org.apache.hadoop.hive.ql.exec.UDF;
import org.bouncycastle.crypto.digests.SM3Digest;

/**
 * Hive UDF returning the SM3 digest of a string as 64 lowercase hex characters.
 */
public class Sm3Fun extends UDF {

    // Salted variant: SM3(saltBefore + text + saltAfter); null salts count as empty strings.
    // Hive's UDF bridge only resolves methods named evaluate(...), so this helper
    // is not callable from SQL as written.
    public static String sm3(String saltBefore, String text, String saltAfter) {
        if (text == null) {
            return null;
        }
        return sm3Hex(nullToEmpty(saltBefore), text, nullToEmpty(saltAfter));
    }

    // Entry point invoked by Hive for each row; NULL in, NULL out.
    public String evaluate(String text) {
        if (text == null) {
            return null;
        }
        return sm3Hex(text);
    }

    // Feeds the UTF-8 bytes of each part, in order, into a single SM3 digest
    // and hex-encodes the 32-byte result.
    private static String sm3Hex(String... parts) {
        SM3Digest digest = new SM3Digest();
        for (String part : parts) {
            byte[] bytes = part.getBytes(StandardCharsets.UTF_8);
            digest.update(bytes, 0, bytes.length);
        }
        byte[] hash = new byte[digest.getDigestSize()];
        digest.doFinal(hash, 0);
        return Hex.encodeHexString(hash);
    }

    private static String nullToEmpty(String s) {
        return s == null ? "" : s;
    }
}
```
