10.4.1 模型

Bahdanau 等人提出了一个没有严格单向对齐限制的可微注意力模型。在预测词元时,如果不是所有输入词元都相关,模型将仅对齐(或参与)输入序列中与当前预测相关的部分。这是通过将上下文变量视为注意力集中的输出来实现的。

新的基于注意力的模型与 9.7 节中的模型相同,只不过 9.7 节中的上下文变量 c \boldsymbol{c} c 在任何解码时间步 t ′ \boldsymbol{t'} t′ 都会被 c t ′ \boldsymbol{c}_{t'} ct′ 替换。假设输入序列中有 T \boldsymbol{T} T 个词元,解码时间步 t ′ \boldsymbol{t'} t′ 的上下文变量是注意力集中的输出:

c t ′ = ∑ t = 1 T α ( s t ′ − 1 , h t ) h t \boldsymbol{c}_{t'}=\sum^T_{t=1}{\alpha{(\boldsymbol{s}_{t'-1},\boldsymbol{h}_t)\boldsymbol{h}_t}} ct′=t=1∑Tα(st′−1,ht)ht

参数字典:

-

遵循与 9.7 节中的相同符号表达

-

时间步 t ′ − 1 \boldsymbol{t'-1} t′−1 时的解码器隐状态 s t ′ − 1 \boldsymbol{s}_{t'-1} st′−1 是查询

-

编码器隐状态 h t \boldsymbol{h}_t ht 既是键,也是值

-

注意力权重 α \alpha α 是使用上节所定义的加性注意力打分函数计算的

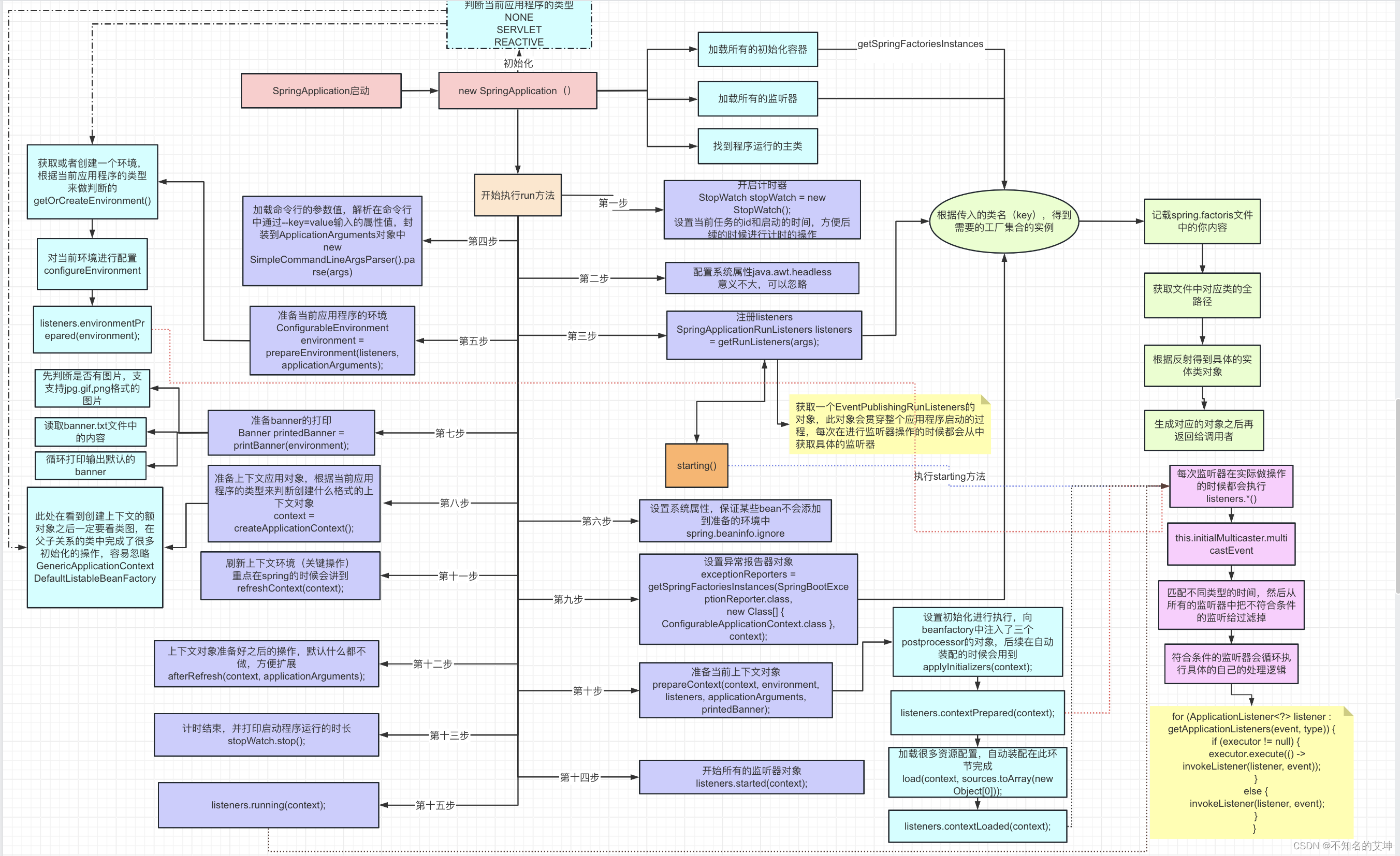

从图中可以看到,加入注意力机制后:

-

将编码器对每次词的输出作为 key 和 value

-

将解码器对上一个词的输出作为 querry

-

将注意力的输出和下一个词的词嵌入合并作为解码器输入

import torch

from torch import nn

from d2l import torch as d2l

10.4.2 定义注意力解码器

AttentionDecoder 类定义了带有注意力机制解码器的基本接口

#@save

class AttentionDecoder(d2l.Decoder):

"""带有注意力机制解码器的基本接口"""

def __init__(self, **kwargs):

super(AttentionDecoder, self).__init__(**kwargs)

@property

def attention_weights(self):

raise NotImplementedError

在 Seq2SeqAttentionDecoder 类中实现带有 Bahdanau 注意力的循环神经网络解码器。初始化解码器的状态,需要下面的输入:

-

编码器在所有时间步的最终层隐状态,将作为注意力的键和值;

-

上一时间步的编码器全层隐状态,将作为初始化解码器的隐状态;

-

编码器有效长度(排除在注意力池中填充词元)。

class Seq2SeqAttentionDecoder(AttentionDecoder):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqAttentionDecoder, self).__init__(**kwargs)

self.attention = d2l.AdditiveAttention(

num_hiddens, num_hiddens, num_hiddens, dropout)

self.embedding = nn.Embedding(vocab_size, embed_size)

self.rnn = nn.GRU(

embed_size + num_hiddens, num_hiddens, num_layers,

dropout=dropout)

self.dense = nn.Linear(num_hiddens, vocab_size)

def init_state(self, enc_outputs, enc_valid_lens, *args): # 新增 enc_valid_lens 表示有效长度

# outputs的形状为(batch_size,num_steps,num_hiddens).

# hidden_state的形状为(num_layers,batch_size,num_hiddens)

outputs, hidden_state = enc_outputs

return (outputs.permute(1, 0, 2), hidden_state, enc_valid_lens)

def forward(self, X, state):

# enc_outputs的形状为(batch_size,num_steps,num_hiddens).

# hidden_state的形状为(num_layers,batch_size,num_hiddens)

enc_outputs, hidden_state, enc_valid_lens = state

# 输出X的形状为(num_steps,batch_size,embed_size)

X = self.embedding(X).permute(1, 0, 2)

outputs, self._attention_weights = [], []

for x in X:

# query的形状为(batch_size,1,num_hiddens)

query = torch.unsqueeze(hidden_state[-1], dim=1) # 解码器最终隐藏层的上一个输出添加querry个数的维度后作为querry

# context的形状为(batch_size,1,num_hiddens)

context = self.attention(

query, enc_outputs, enc_outputs, enc_valid_lens) # 编码器的输出作为key和value

# 在特征维度上连结

x = torch.cat((context, torch.unsqueeze(x, dim=1)), dim=-1) # 并起来当解码器输入

# 将x变形为(1,batch_size,embed_size+num_hiddens)

out, hidden_state = self.rnn(x.permute(1, 0, 2), hidden_state)

outputs.append(out)

self._attention_weights.append(self.attention.attention_weights) # 存一下注意力权重

# 全连接层变换后,outputs的形状为 (num_steps,batch_size,vocab_size)

outputs = self.dense(torch.cat(outputs, dim=0))

return outputs.permute(1, 0, 2), [enc_outputs, hidden_state,

enc_valid_lens]

@property

def attention_weights(self):

return self._attention_weights

encoder = d2l.Seq2SeqEncoder(vocab_size=10, embed_size=8, num_hiddens=16,

num_layers=2)

encoder.eval()

decoder = Seq2SeqAttentionDecoder(vocab_size=10, embed_size=8, num_hiddens=16,

num_layers=2)

decoder.eval()

X = torch.zeros((4, 7), dtype=torch.long) # (batch_size,num_steps)

state = decoder.init_state(encoder(X), None)

output, state = decoder(X, state)

output.shape, len(state), state[0].shape, len(state[1]), state[1][0].shape

(torch.Size([4, 7, 10]), 3, torch.Size([4, 7, 16]), 2, torch.Size([4, 16]))

10.4.3 训练

embed_size, num_hiddens, num_layers, dropout = 32, 32, 2, 0.1

batch_size, num_steps = 64, 10

lr, num_epochs, device = 0.005, 250, d2l.try_gpu()

train_iter, src_vocab, tgt_vocab = d2l.load_data_nmt(batch_size, num_steps)

encoder = d2l.Seq2SeqEncoder(

len(src_vocab), embed_size, num_hiddens, num_layers, dropout)

decoder = Seq2SeqAttentionDecoder(

len(tgt_vocab), embed_size, num_hiddens, num_layers, dropout)

net = d2l.EncoderDecoder(encoder, decoder)

d2l.train_seq2seq(net, train_iter, lr, num_epochs, tgt_vocab, device)

loss 0.020, 7252.9 tokens/sec on cuda:0

engs = ['go .', "i lost .", 'he\'s calm .', 'i\'m home .']

fras = ['va !', 'j\'ai perdu .', 'il est calme .', 'je suis chez moi .']

for eng, fra in zip(engs, fras):

translation, dec_attention_weight_seq = d2l.predict_seq2seq(

net, eng, src_vocab, tgt_vocab, num_steps, device, True)

print(f'{eng} => {translation}, ',

f'bleu {d2l.bleu(translation, fra, k=2):.3f}')

go . => va !, bleu 1.000

i lost . => j'ai perdu ., bleu 1.000

he's calm . => il est mouillé ., bleu 0.658

i'm home . => je suis chez moi ., bleu 1.000

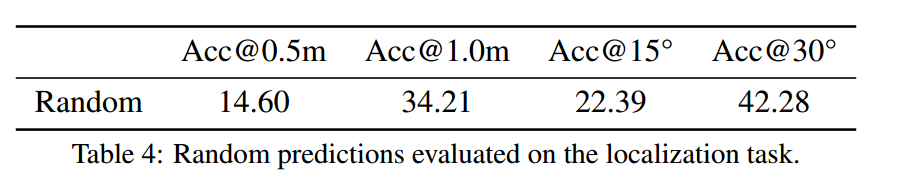

训练结束后,下面通过可视化注意力权重会发现,每个查询都会在键值对上分配不同的权重,这说明在每个解码步中,输入序列的不同部分被选择性地聚集在注意力池中。

attention_weights = torch.cat([step[0][0][0] for step in dec_attention_weight_seq], 0).reshape((

1, 1, -1, num_steps))

# 加上一个包含序列结束词元

d2l.show_heatmaps(

attention_weights[:, :, :, :len(engs[-1].split()) + 1].cpu(),

xlabel='Key positions', ylabel='Query positions')

练习

(1)在实验中用LSTM替换GRU。

class Seq2SeqEncoder_LSTM(d2l.Encoder):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqEncoder_LSTM, self).__init__(**kwargs)

self.embedding = nn.Embedding(vocab_size, embed_size)

self.lstm = nn.LSTM(embed_size, num_hiddens, num_layers, # 更换为 LSTM

dropout=dropout)

def forward(self, X, *args):

X = self.embedding(X)

X = X.permute(1, 0, 2)

output, state = self.lstm(X)

return output, state

class Seq2SeqAttentionDecoder_LSTM(AttentionDecoder):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqAttentionDecoder_LSTM, self).__init__(**kwargs)

self.attention = d2l.AdditiveAttention(

num_hiddens, num_hiddens, num_hiddens, dropout)

self.embedding = nn.Embedding(vocab_size, embed_size)

self.rnn = nn.LSTM(

embed_size + num_hiddens, num_hiddens, num_layers,

dropout=dropout)

self.dense = nn.Linear(num_hiddens, vocab_size)

def init_state(self, enc_outputs, enc_valid_lens, *args):

outputs, hidden_state = enc_outputs

return (outputs.permute(1, 0, 2), hidden_state, enc_valid_lens)

def forward(self, X, state):

enc_outputs, hidden_state, enc_valid_lens = state

X = self.embedding(X).permute(1, 0, 2)

outputs, self._attention_weights = [], []

for x in X:

query = torch.unsqueeze(hidden_state[-1][0], dim=1) # 解码器最终隐藏层的上一个输出添加querry个数的维度后作为querry

context = self.attention(

query, enc_outputs, enc_outputs, enc_valid_lens)

x = torch.cat((context, torch.unsqueeze(x, dim=1)), dim=-1)

out, hidden_state = self.rnn(x.permute(1, 0, 2), hidden_state)

outputs.append(out)

self._attention_weights.append(self.attention.attention_weights)

outputs = self.dense(torch.cat(outputs, dim=0))

return outputs.permute(1, 0, 2), [enc_outputs, hidden_state,

enc_valid_lens]

@property

def attention_weights(self):

return self._attention_weights

embed_size_LSTM, num_hiddens_LSTM, num_layers_LSTM, dropout_LSTM = 32, 32, 2, 0.1

batch_size_LSTM, num_steps_LSTM = 64, 10

lr_LSTM, num_epochs_LSTM, device_LSTM = 0.005, 250, d2l.try_gpu()

train_iter_LSTM, src_vocab_LSTM, tgt_vocab_LSTM = d2l.load_data_nmt(batch_size_LSTM, num_steps_LSTM)

encoder_LSTM = Seq2SeqEncoder_LSTM(

len(src_vocab_LSTM), embed_size_LSTM, num_hiddens_LSTM, num_layers_LSTM, dropout_LSTM)

decoder_LSTM = Seq2SeqAttentionDecoder_LSTM(

len(tgt_vocab_LSTM), embed_size_LSTM, num_hiddens_LSTM, num_layers_LSTM, dropout_LSTM)

net_LSTM = d2l.EncoderDecoder(encoder_LSTM, decoder_LSTM)

d2l.train_seq2seq(net_LSTM, train_iter_LSTM, lr_LSTM, num_epochs_LSTM, tgt_vocab_LSTM, device_LSTM)

loss 0.021, 7280.8 tokens/sec on cuda:0

engs = ['go .', "i lost .", 'he\'s calm .', 'i\'m home .']

fras = ['va !', 'j\'ai perdu .', 'il est calme .', 'je suis chez moi .']

for eng, fra in zip(engs, fras):

translation, dec_attention_weight_seq_LSTM = d2l.predict_seq2seq(

net_LSTM, eng, src_vocab_LSTM, tgt_vocab_LSTM, num_steps_LSTM, device_LSTM, True)

print(f'{eng} => {translation}, ',

f'bleu {d2l.bleu(translation, fra, k=2):.3f}')

go . => va !, bleu 1.000

i lost . => j'ai perdu ., bleu 1.000

he's calm . => puis-je <unk> <unk> ., bleu 0.000

i'm home . => je suis chez moi ., bleu 1.000

attention_weights_LSTM = torch.cat([step[0][0][0] for step in dec_attention_weight_seq_LSTM], 0).reshape((

1, 1, -1, num_steps_LSTM))

# 加上一个包含序列结束词元

d2l.show_heatmaps(

attention_weights_LSTM[:, :, :, :len(engs[-1].split()) + 1].cpu(),

xlabel='Key positions', ylabel='Query positions')

(2)修改实验以将加性注意力打分函数替换为缩放点积注意力,它如何影响训练效率?

class Seq2SeqAttentionDecoder_Dot(AttentionDecoder):

def __init__(self, vocab_size, embed_size, num_hiddens, num_layers,

dropout=0, **kwargs):

super(Seq2SeqAttentionDecoder, self).__init__(**kwargs)

self.attention = d2l.DotProductAttention( # 替换为缩放点积注意力

num_hiddens, num_hiddens, num_hiddens, dropout)

self.embedding = nn.Embedding(vocab_size, embed_size)

self.rnn = nn.GRU(

embed_size + num_hiddens, num_hiddens, num_layers,

dropout=dropout)

self.dense = nn.Linear(num_hiddens, vocab_size)

def init_state(self, enc_outputs, enc_valid_lens, *args):

outputs, hidden_state = enc_outputs

return (outputs.permute(1, 0, 2), hidden_state, enc_valid_lens)

def forward(self, X, state):

enc_outputs, hidden_state, enc_valid_lens = state

X = self.embedding(X).permute(1, 0, 2)

outputs, self._attention_weights = [], []

for x in X:

query = torch.unsqueeze(hidden_state[-1], dim=1)

context = self.attention(

query, enc_outputs, enc_outputs, enc_valid_lens)

x = torch.cat((context, torch.unsqueeze(x, dim=1)), dim=-1)

out, hidden_state = self.rnn(x.permute(1, 0, 2), hidden_state)

outputs.append(out)

self._attention_weights.append(self.attention.attention_weights)

outputs = self.dense(torch.cat(outputs, dim=0))

return outputs.permute(1, 0, 2), [enc_outputs, hidden_state,

enc_valid_lens]

@property

def attention_weights(self):

return self._attention_weights

embed_size_Dot, num_hiddens_Dot, num_layers_Dot, dropout_Dot = 32, 32, 2, 0.1

batch_size_Dot, num_steps_Dot = 64, 10

lr_Dot, num_epochs_Dot, device_Dot = 0.005, 250, d2l.try_gpu()

train_iter_Dot, src_vocab_Dot, tgt_vocab_Dot = d2l.load_data_nmt(batch_size_Dot, num_steps_Dot)

encoder_Dot = Seq2SeqEncoder_LSTM(

len(src_vocab_Dot), embed_size_LSTM, num_hiddens_Dot, num_layers_Dot, dropout_Dot)

decoder_Dot = Seq2SeqAttentionDecoder_LSTM(

len(tgt_vocab_Dot), embed_size_Dot, num_hiddens_Dot, num_layers_Dot, dropout_Dot)

net_Dot = d2l.EncoderDecoder(encoder_Dot, decoder_Dot)

d2l.train_seq2seq(net_Dot, train_iter_Dot, lr_Dot, num_epochs_Dot, tgt_vocab_Dot, device_Dot)

loss 0.021, 7038.8 tokens/sec on cuda:0

engs = ['go .', "i lost .", 'he\'s calm .', 'i\'m home .']

fras = ['va !', 'j\'ai perdu .', 'il est calme .', 'je suis chez moi .']

for eng, fra in zip(engs, fras):

translation, dec_attention_weight_seq_Dot = d2l.predict_seq2seq(

net_Dot, eng, src_vocab_Dot, tgt_vocab_Dot, num_steps_Dot, device_Dot, True)

print(f'{eng} => {translation}, ',

f'bleu {d2l.bleu(translation, fra, k=2):.3f}')

go . => va !, bleu 1.000

i lost . => j'ai perdu ., bleu 1.000

he's calm . => il est riche ., bleu 0.658

i'm home . => je suis chez moi ., bleu 1.000

attention_weights_Dot = torch.cat([step[0][0][0] for step in dec_attention_weight_seq_Dot], 0).reshape((

1, 1, -1, num_steps_Dot))

# 加上一个包含序列结束词元

d2l.show_heatmaps(

attention_weights_Dot[:, :, :, :len(engs[-1].split()) + 1].cpu(),

xlabel='Key positions', ylabel='Query positions')