WebRTC Audio/Video Calls: Calling the ossrs Video Call Service from iOS

For how to set up the ossrs service, see the earlier post: https://blog.csdn.net/gloryFlow/article/details/132257196

Here the iOS client uses GoogleWebRTC together with ossrs to implement video calling.

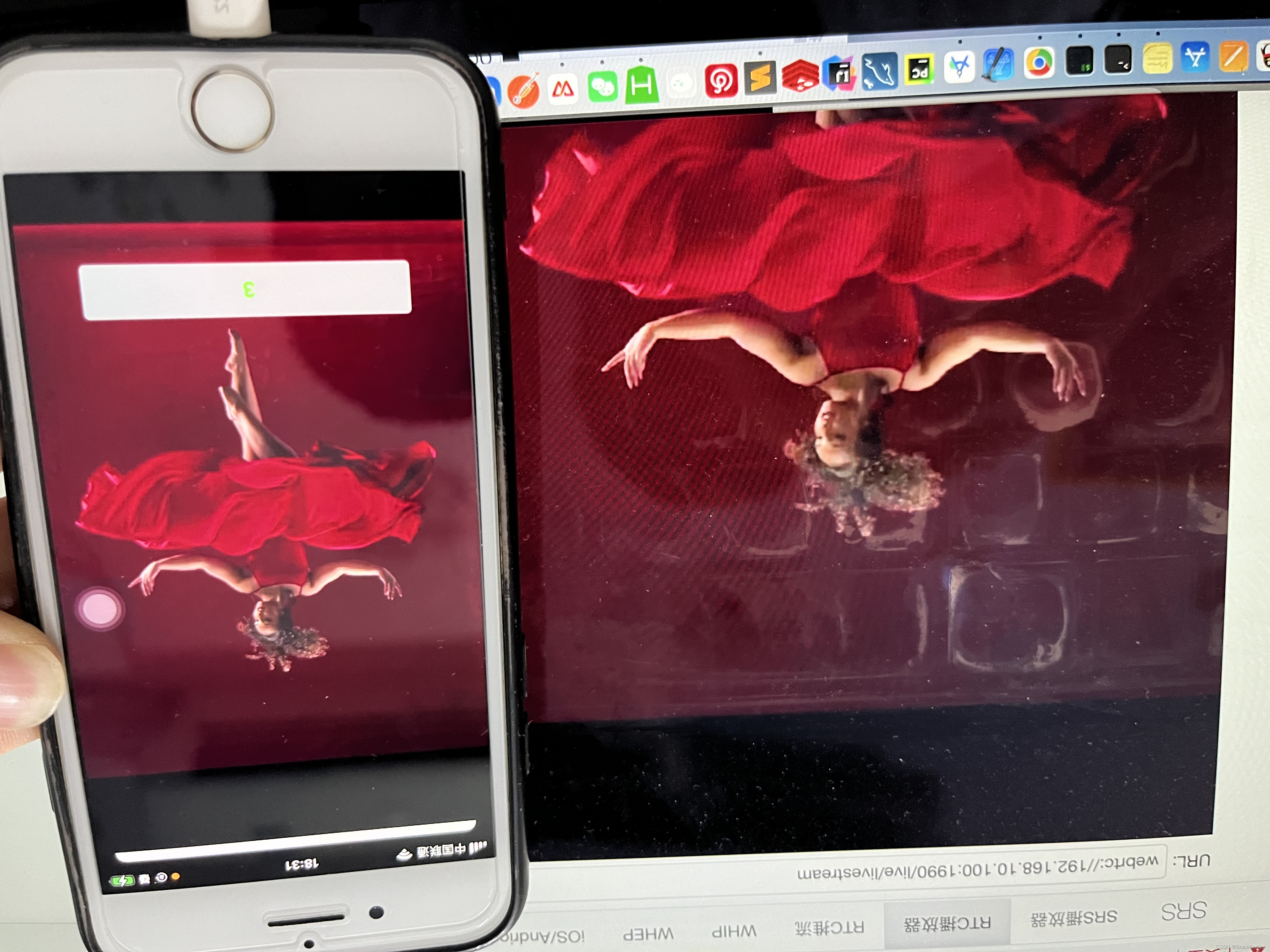

Screenshots of the iOS client calling ossrs

iOS client screenshot

ossrs screenshot

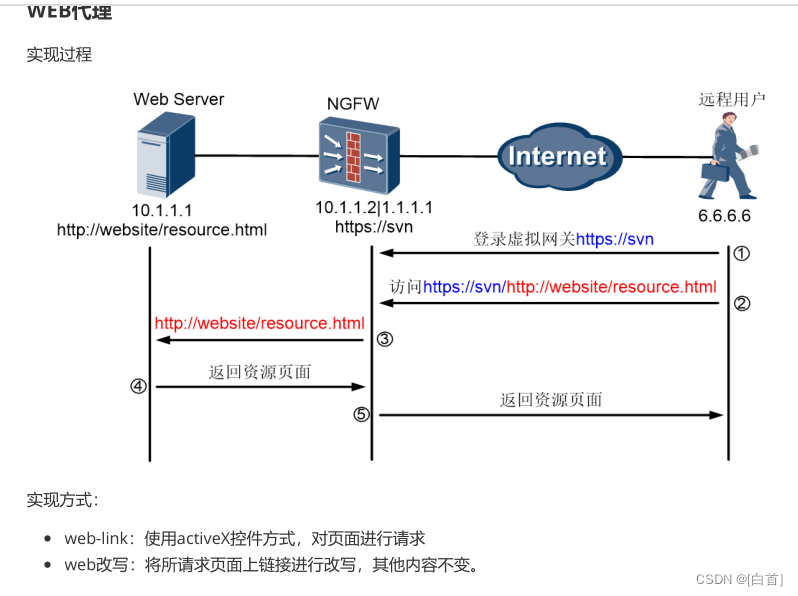

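1. What is WebRTC?

WebRTC (Web Real-Time Communications) is a real-time communication technology. It lets web applications or sites establish peer-to-peer connections between browsers without an intermediary and transmit video streams, audio streams, or any other data.

For more background, see https://zhuanlan.zhihu.com/p/421503695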

Key terms to know:

- NAT: Network Address Translation
- STUN: Session Traversal Utilities for NAT
- TURN: Traversal Using Relays around NAT
- ICE: Interactive Connectivity Establishment
- SDP: Session Description Protocol
- WebRTC: Web Real-Time Communications

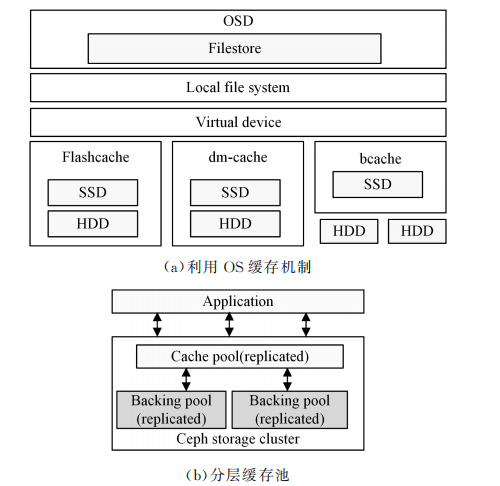

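The figure shows the WebRTC offer exchange flow.

2. Implementing the ossrs video call on iOS

First create the iOS project that will call the ossrs video call service. For pure P2P audio/video calls you would have to set up the signaling server and the STUN/TURN traversal and relay servers yourself. ossrs includes STUN/TURN traversal and relay functionality, so here the iOS client calls the ossrs service instead.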

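2.1 Permissions

Video calls through ossrs need camera and microphone access on iOS.

Add the following to Info.plist:

<key>NSCameraUsageDescription</key>

<string>The app needs access to the camera</string>

<key>NSMicrophoneUsageDescription</key>

<string>The app needs access to the microphone</string>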

2.2 Adding GoogleWebRTC to the project

The project depends on the GoogleWebRTC library. Add it to the Podfile, and note that the API differs somewhat between GoogleWebRTC versions.

target 'WebRTCApp' do

pod 'GoogleWebRTC'

pod 'ReactiveObjC'

pod 'SocketRocket'

pod 'HGAlertViewController', '~> 1.0.1'

end

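Then run pod install.

2.3 Main GoogleWebRTC APIs

Before using GoogleWebRTC, take a look at the main classes.

- RTCPeerConnection

RTCPeerConnection is the class WebRTC uses to build a peer-to-peer connection.

- RTCPeerConnectionFactory

RTCPeerConnectionFactory is the factory class that creates RTCPeerConnection instances.

- RTCVideoCapturer

RTCVideoCapturer is the video capturer that delivers camera frames. It can be replaced later with a custom capturer, which makes it easy to get the CMSampleBufferRef and apply beauty filters, virtual avatars, and similar processing.

- RTCVideoTrack

RTCVideoTrack is the video track.

- RTCAudioTrack

RTCAudioTrack is the audio track.

- RTCDataChannel

RTCDataChannel sets up a high-throughput, low-latency channel for transferring arbitrary data.

- RTCMediaStream

RTCMediaStream is a synchronized stream of media tracks (camera video and microphone audio).

- SDP

SDP stands for Session Description Protocol.

An SDP description consists of one or more lines of UTF-8 text. Each line starts with a one-character type, followed by an equals sign (=), followed by structured text containing a value or description whose format depends on the type. Here is an example SDP: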

v=0

o=alice 2890844526 2890844526 IN IP4

s=

c=IN IP4

t=0 0

m=audio 49170 RTP/AVP 0

a=rtpmap:0 PCMU/8000

m=video 51372 RTP/AVP 31

a=rtpmap:31 H261/90000

m=video 53000 RTP/AVP 32

a=rtpmap:32 MPV/90000

These are the main WebRTC API classes used in this post.
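To make the `<type>=<value>` line structure of SDP described above more concrete, here is a minimal sketch that splits an SDP string into type/value pairs using only Foundation. The function name `ParseSDPLines` is hypothetical and is not part of GoogleWebRTC. It does no validation beyond the basic line shape.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper (not a WebRTC API): splits SDP text into @[type, value]
// pairs, e.g. @"m=audio 49170 RTP/AVP 0" becomes @[@"m", @"audio 49170 RTP/AVP 0"].
static NSArray<NSArray<NSString *> *> *ParseSDPLines(NSString *sdp) {
    NSMutableArray<NSArray<NSString *> *> *result = [NSMutableArray array];
    // SDP lines normally end with CRLF; splitting on any newline character
    // also tolerates bare LF. Empty fragments are skipped below.
    NSArray<NSString *> *lines =
        [sdp componentsSeparatedByCharactersInSet:[NSCharacterSet newlineCharacterSet]];
    for (NSString *line in lines) {
        // A well-formed line is one type character, '=', then the value.
        if (line.length < 2 || [line characterAtIndex:1] != '=') {
            continue;
        }
        NSString *type = [line substringToIndex:1];
        NSString *value = [line substringFromIndex:2];
        [result addObject:@[type, value]];
    }
    return result;
}
```

A helper like this is mainly useful for logging or inspecting the offer and answer while debugging. The SDK itself consumes the SDP as an opaque string through RTCSessionDescription.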

2.4、使用WebRTC代码实现

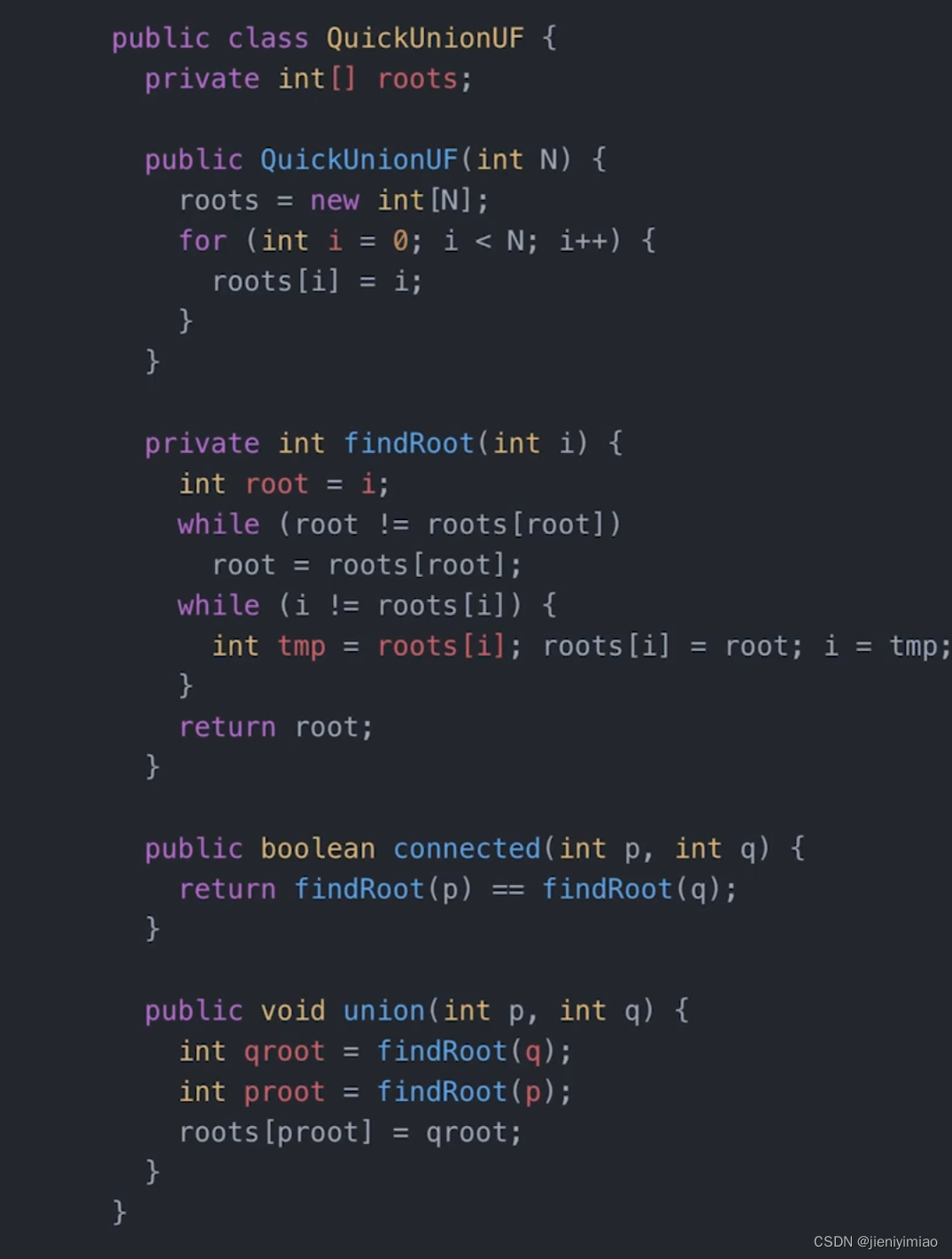

使用WebRTC实现P2P音视频流程如图

这里调用ossrs实现步骤如下

关键点设置

初始化RTCPeerConnectionFactory

#pragma mark - Lazy

- (RTCPeerConnectionFactory *)factory {

if (!_factory) {

RTCInitializeSSL();

RTCDefaultVideoEncoderFactory *videoEncoderFactory = [[RTCDefaultVideoEncoderFactory alloc] init];

RTCDefaultVideoDecoderFactory *videoDecoderFactory = [[RTCDefaultVideoDecoderFactory alloc] init];

_factory = [[RTCPeerConnectionFactory alloc] initWithEncoderFactory:videoEncoderFactory decoderFactory:videoDecoderFactory];

}

return _factory;

}

Create the RTCPeerConnection through the RTCPeerConnectionFactory

self.peerConnection = [self.factory peerConnectionWithConfiguration:newConfig constraints:constraints delegate:nil];

Add the RTCAudioTrack and RTCVideoTrack to the peerConnection

NSString *streamId = @"stream";

// Audio

RTCAudioTrack *audioTrack = [self createAudioTrack];

self.localAudioTrack = audioTrack;

RTCRtpTransceiverInit *audioTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];

audioTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;

audioTrackTransceiver.streamIds = @[streamId];

[self.peerConnection addTransceiverWithTrack:audioTrack init:audioTrackTransceiver];

// Video

RTCVideoTrack *videoTrack = [self createVideoTrack];

self.localVideoTrack = videoTrack;

RTCRtpTransceiverInit *videoTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];

videoTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;

videoTrackTransceiver.streamIds = @[streamId];

[self.peerConnection addTransceiverWithTrack:videoTrack init:videoTrackTransceiver];

Set up the camera capturer (RTCCameraVideoCapturer) and the file video capturer

- (RTCVideoTrack *)createVideoTrack {

RTCVideoSource *videoSource = [self.factory videoSource];

// In testing, sizes larger than 1920x1080 could not be played through SRS.

[videoSource adaptOutputFormatToWidth:1920 height:1080 fps:20];

// Use the file capturer on the simulator.

if (TARGET_IPHONE_SIMULATOR) {

self.videoCapturer = [[RTCFileVideoCapturer alloc] initWithDelegate:videoSource];

} else{

self.videoCapturer = [[RTCCameraVideoCapturer alloc] initWithDelegate:videoSource];

}

RTCVideoTrack *videoTrack = [self.factory videoTrackWithSource:videoSource trackId:@"video0"];

return videoTrack;

}

Display the locally captured camera video

The renderer passed to startCaptureLocalVideo: is an RTCEAGLVideoView.

- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer {

if (!self.isPublish) {

return;

}

if (!renderer) {

return;

}

if (!self.videoCapturer) {

return;

}

RTCVideoCapturer *capturer = self.videoCapturer;

if ([capturer isKindOfClass:[RTCCameraVideoCapturer class]]) {

if (!([RTCCameraVideoCapturer captureDevices].count > 0)) {

return;

}

AVCaptureDevice *frontCamera = RTCCameraVideoCapturer.captureDevices.firstObject;

// if (frontCamera.position != AVCaptureDevicePositionFront) {

// return;

// }

RTCCameraVideoCapturer *cameraVideoCapturer = (RTCCameraVideoCapturer *)capturer;

AVCaptureDeviceFormat *formatNilable;

NSArray *supportDeviceFormats = [RTCCameraVideoCapturer supportedFormatsForDevice:frontCamera];

NSLog(@"supportDeviceFormats:%@",supportDeviceFormats);

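// Index 4 is device-specific; fall back to the last format when fewer are reported.

formatNilable = supportDeviceFormats.count > 4 ? supportDeviceFormats[4] : supportDeviceFormats.lastObject;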

// if (supportDeviceFormats && supportDeviceFormats.count > 0) {

// NSMutableArray *formats = [NSMutableArray arrayWithCapacity:0];

// for (AVCaptureDeviceFormat *format in supportDeviceFormats) {

// CMVideoDimensions videoVideoDimensions = CMVideoFormatDescriptionGetDimensions(format.formatDescription);

// float width = videoVideoDimensions.width;

// float height = videoVideoDimensions.height;

// // only use 16:9 format.

// if ((width / height) >= (16.0/9.0)) {

// [formats addObject:format];

// }

// }

//

// if (formats.count > 0) {

// NSArray *sortedFormats = [formats sortedArrayUsingComparator:^NSComparisonResult(AVCaptureDeviceFormat *obj1, AVCaptureDeviceFormat *obj2) {

// CMVideoDimensions f1VD = CMVideoFormatDescriptionGetDimensions(obj1.formatDescription);

// CMVideoDimensions f2VD = CMVideoFormatDescriptionGetDimensions(obj2.formatDescription);

// float width1 = f1VD.width;

// float width2 = f2VD.width;

// float height2 = f2VD.height;

// // only use 16:9 format.

// if ((width2 / height2) >= (1.7)) {

// return NSOrderedAscending;

// } else {

// return NSOrderedDescending;

// }

// }];

//

// if (sortedFormats && sortedFormats.count > 0) {

// formatNilable = sortedFormats.lastObject;

// }

// }

// }

if (!formatNilable) {

return;

}

NSArray *formatArr = [RTCCameraVideoCapturer supportedFormatsForDevice:frontCamera];

for (AVCaptureDeviceFormat *format in formatArr) {

NSLog(@"AVCaptureDeviceFormat format:%@", format);

}

[cameraVideoCapturer startCaptureWithDevice:frontCamera format:formatNilable fps:20 completionHandler:^(NSError *error) {

NSLog(@"startCaptureWithDevice error:%@", error);

}];

}

if ([capturer isKindOfClass:[RTCFileVideoCapturer class]]) {

RTCFileVideoCapturer *fileVideoCapturer = (RTCFileVideoCapturer *)capturer;

[fileVideoCapturer startCapturingFromFileNamed:@"beautyPicture.mp4" onError:^(NSError * _Nonnull error) {

NSLog(@"startCaptureLocalVideo startCapturingFromFileNamed error:%@", error);

}];

}

[self.localVideoTrack addRenderer:renderer];

}
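The method above picks a capture format by a fixed position in the list of supported formats, and that position depends on the device. One alternative is to choose the format whose dimensions are closest to a target size. The sketch below assumes that approach; `ClosestFormat` is a hypothetical helper name, not part of GoogleWebRTC, and it relies only on AVFoundation and CoreMedia.

```objc
#import <AVFoundation/AVFoundation.h>
#import <CoreMedia/CoreMedia.h>
#include <limits.h>
#include <stdlib.h>

// Hypothetical helper: returns the format whose width and height are closest
// to the requested size, or nil when the list is empty.
static AVCaptureDeviceFormat *ClosestFormat(NSArray<AVCaptureDeviceFormat *> *formats,
                                            int32_t targetWidth,
                                            int32_t targetHeight) {
    AVCaptureDeviceFormat *best = nil;
    int bestDiff = INT_MAX;
    for (AVCaptureDeviceFormat *format in formats) {
        CMVideoDimensions dims =
            CMVideoFormatDescriptionGetDimensions(format.formatDescription);
        // Sum of absolute differences in width and height.
        int diff = abs(targetWidth - dims.width) + abs(targetHeight - dims.height);
        if (diff < bestDiff) {
            best = format;
            bestDiff = diff;
        }
    }
    return best;
}
```

It could be called with the array returned by `[RTCCameraVideoCapturer supportedFormatsForDevice:]` and a target such as 1280x720, and the result passed to `startCaptureWithDevice:format:fps:completionHandler:`. The target size is an assumption; pick one that the server can play.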

Create the offer (createOffer)

- (void)offer:(void (^)(RTCSessionDescription *sdp))completion {

if (self.isPublish) {

self.mediaConstrains = self.publishMediaConstrains;

} else {

self.mediaConstrains = self.playMediaConstrains;

}

RTCMediaConstraints *constrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.mediaConstrains optionalConstraints:self.optionalConstraints];

NSLog(@"peerConnection:%@",self.peerConnection);

__weak typeof(self) weakSelf = self;

[weakSelf.peerConnection offerForConstraints:constrains completionHandler:^(RTCSessionDescription * _Nullable sdp, NSError * _Nullable error) {

if (error) {

NSLog(@"offer offerForConstraints error:%@", error);

}

if (sdp) {

[weakSelf.peerConnection setLocalDescription:sdp completionHandler:^(NSError * _Nullable error) {

if (error) {

NSLog(@"offer setLocalDescription error:%@", error);

}

if (completion) {

completion(sdp);

}

}];

}

}];

}

Set the remote description (setRemoteDescription)

- (void)setRemoteSdp:(RTCSessionDescription *)remoteSdp completion:(void (^)(NSError * _Nullable error))completion {

[self.peerConnection setRemoteDescription:remoteSdp completionHandler:completion];

}

The complete code is as follows.

WebRTCClient.h

#import <Foundation/Foundation.h>

#import <WebRTC/WebRTC.h>

#import <UIKit/UIKit.h>

@protocol WebRTCClientDelegate;

@interface WebRTCClient : NSObject

@property (nonatomic, weak) id<WebRTCClientDelegate> delegate;

/**

Peer connection factory

*/

@property (nonatomic, strong) RTCPeerConnectionFactory *factory;

/**

Whether this client publishes (pushes) a stream

*/

@property (nonatomic, assign) BOOL isPublish;

/**

connect

*/

@property (nonatomic, strong) RTCPeerConnection *peerConnection;

/**

RTCAudioSession

*/

@property (nonatomic, strong) RTCAudioSession *rtcAudioSession;

/**

DispatchQueue

*/

@property (nonatomic) dispatch_queue_t audioQueue;

/**

mediaConstrains

*/

@property (nonatomic, strong) NSDictionary *mediaConstrains;

/**

publishMediaConstrains

*/

@property (nonatomic, strong) NSDictionary *publishMediaConstrains;

/**

playMediaConstrains

*/

@property (nonatomic, strong) NSDictionary *playMediaConstrains;

/**

optionalConstraints

*/

@property (nonatomic, strong) NSDictionary *optionalConstraints;

/**

Video capturer (camera or file)

*/

@property (nonatomic, strong) RTCVideoCapturer *videoCapturer;

/**

Local audio track

*/

@property (nonatomic, strong) RTCAudioTrack *localAudioTrack;

/**

localVideoTrack

*/

@property (nonatomic, strong) RTCVideoTrack *localVideoTrack;

/**

remoteVideoTrack

*/

@property (nonatomic, strong) RTCVideoTrack *remoteVideoTrack;

/**

RTCVideoRenderer

*/

@property (nonatomic, weak) id<RTCVideoRenderer> remoteRenderView;

/**

localDataChannel

*/

@property (nonatomic, strong) RTCDataChannel *localDataChannel;

/**

remoteDataChannel

*/

@property (nonatomic, strong) RTCDataChannel *remoteDataChannel;

- (instancetype)initWithPublish:(BOOL)isPublish;

- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer;

- (void)answer:(void (^)(RTCSessionDescription *sdp))completionHandler;

- (void)offer:(void (^)(RTCSessionDescription *sdp))completionHandler;

#pragma mark - Hide or show Video

- (void)hidenVideo;

- (void)showVideo;

#pragma mark - Mute or unmute Audio

- (void)muteAudio;

- (void)unmuteAudio;

- (void)speakOff;

- (void)speakOn;

- (void)changeSDP2Server:(RTCSessionDescription *)sdp

urlStr:(NSString *)urlStr

streamUrl:(NSString *)streamUrl

closure:(void (^)(BOOL isServerRetSuc))closure;

@end

@protocol WebRTCClientDelegate <NSObject>

- (void)webRTCClient:(WebRTCClient *)client didDiscoverLocalCandidate:(RTCIceCandidate *)candidate;

- (void)webRTCClient:(WebRTCClient *)client didChangeConnectionState:(RTCIceConnectionState)state;

- (void)webRTCClient:(WebRTCClient *)client didReceiveData:(NSData *)data;

@end

WebRTCClient.m

#import "WebRTCClient.h"

#import "HttpClient.h"

@interface WebRTCClient ()<RTCPeerConnectionDelegate, RTCDataChannelDelegate>

@property (nonatomic, strong) HttpClient *httpClient;

@end

@implementation WebRTCClient

- (instancetype)initWithPublish:(BOOL)isPublish {

self = [super init];

if (self) {

self.isPublish = isPublish;

self.httpClient = [[HttpClient alloc] init];

RTCMediaConstraints *constraints = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:nil optionalConstraints:self.optionalConstraints];

RTCConfiguration *newConfig = [[RTCConfiguration alloc] init];

newConfig.sdpSemantics = RTCSdpSemanticsUnifiedPlan;

self.peerConnection = [self.factory peerConnectionWithConfiguration:newConfig constraints:constraints delegate:nil];

[self createMediaSenders];

[self createMediaReceivers];

// srs not support data channel.

// self.createDataChannel()

[self configureAudioSession];

self.peerConnection.delegate = self;

}

return self;

}

- (void)createMediaSenders {

if (!self.isPublish) {

return;

}

NSString *streamId = @"stream";

// Audio

RTCAudioTrack *audioTrack = [self createAudioTrack];

self.localAudioTrack = audioTrack;

RTCRtpTransceiverInit *audioTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];

audioTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;

audioTrackTransceiver.streamIds = @[streamId];

[self.peerConnection addTransceiverWithTrack:audioTrack init:audioTrackTransceiver];

// Video

RTCVideoTrack *videoTrack = [self createVideoTrack];

self.localVideoTrack = videoTrack;

RTCRtpTransceiverInit *videoTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];

videoTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;

videoTrackTransceiver.streamIds = @[streamId];

[self.peerConnection addTransceiverWithTrack:videoTrack init:videoTrackTransceiver];

}

- (void)createMediaReceivers {

if (!self.isPublish) {

return;

}

if (self.peerConnection.transceivers.count > 0) {

RTCRtpTransceiver *transceiver = self.peerConnection.transceivers.firstObject;

if (transceiver.mediaType == RTCRtpMediaTypeVideo) {

RTCVideoTrack *track = (RTCVideoTrack *)transceiver.receiver.track;

self.remoteVideoTrack = track;

}

}

}

- (void)configureAudioSession {

[self.rtcAudioSession lockForConfiguration];

@try {

NSError *error;

[self.rtcAudioSession setCategory:AVAudioSessionCategoryPlayAndRecord withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker error:&error];

NSError *modeError;

[self.rtcAudioSession setMode:AVAudioSessionModeVoiceChat error:&modeError];

NSLog(@"configureAudioSession error:%@, modeError:%@", error, modeError);

} @catch (NSException *exception) {

NSLog(@"configureAudioSession exception:%@", exception);

}

[self.rtcAudioSession unlockForConfiguration];

}

- (RTCAudioTrack *)createAudioTrack {

/// enable google 3A algorithm.

NSDictionary *mandatory = @{

@"googEchoCancellation": kRTCMediaConstraintsValueTrue,

@"googAutoGainControl": kRTCMediaConstraintsValueTrue,

@"googNoiseSuppression": kRTCMediaConstraintsValueTrue,

};

RTCMediaConstraints *audioConstrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:mandatory optionalConstraints:self.optionalConstraints];

RTCAudioSource *audioSource = [self.factory audioSourceWithConstraints:audioConstrains];

RTCAudioTrack *audioTrack = [self.factory audioTrackWithSource:audioSource trackId:@"audio0"];

return audioTrack;

}

- (RTCVideoTrack *)createVideoTrack {

RTCVideoSource *videoSource = [self.factory videoSource];

// In testing, sizes larger than 1920x1080 could not be played through SRS.

[videoSource adaptOutputFormatToWidth:1920 height:1080 fps:20];

// Use the file capturer on the simulator.

if (TARGET_IPHONE_SIMULATOR) {

self.videoCapturer = [[RTCFileVideoCapturer alloc] initWithDelegate:videoSource];

} else{

self.videoCapturer = [[RTCCameraVideoCapturer alloc] initWithDelegate:videoSource];

}

RTCVideoTrack *videoTrack = [self.factory videoTrackWithSource:videoSource trackId:@"video0"];

return videoTrack;

}

- (void)offer:(void (^)(RTCSessionDescription *sdp))completion {

if (self.isPublish) {

self.mediaConstrains = self.publishMediaConstrains;

} else {

self.mediaConstrains = self.playMediaConstrains;

}

RTCMediaConstraints *constrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.mediaConstrains optionalConstraints:self.optionalConstraints];

NSLog(@"peerConnection:%@",self.peerConnection);

__weak typeof(self) weakSelf = self;

[weakSelf.peerConnection offerForConstraints:constrains completionHandler:^(RTCSessionDescription * _Nullable sdp, NSError * _Nullable error) {

if (error) {

NSLog(@"offer offerForConstraints error:%@", error);

}

if (sdp) {

[weakSelf.peerConnection setLocalDescription:sdp completionHandler:^(NSError * _Nullable error) {

if (error) {

NSLog(@"offer setLocalDescription error:%@", error);

}

if (completion) {

completion(sdp);

}

}];

}

}];

}

- (void)answer:(void (^)(RTCSessionDescription *sdp))completion {

RTCMediaConstraints *constrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.mediaConstrains optionalConstraints:self.optionalConstraints];

__weak typeof(self) weakSelf = self;

[weakSelf.peerConnection answerForConstraints:constrains completionHandler:^(RTCSessionDescription * _Nullable sdp, NSError * _Nullable error) {

if (error) {

NSLog(@"answer answerForConstraints error:%@", error);

}

if (sdp) {

[weakSelf.peerConnection setLocalDescription:sdp completionHandler:^(NSError * _Nullable error) {

if (error) {

NSLog(@"answer setLocalDescription error:%@", error);

}

if (completion) {

completion(sdp);

}

}];

}

}];

}

- (void)setRemoteSdp:(RTCSessionDescription *)remoteSdp completion:(void (^)(NSError * _Nullable error))completion {

[self.peerConnection setRemoteDescription:remoteSdp completionHandler:completion];

}

- (void)setRemoteCandidate:(RTCIceCandidate *)remoteCandidate {

[self.peerConnection addIceCandidate:remoteCandidate];

}

- (void)setMaxBitrate:(int)maxBitrate {

NSMutableArray *videoSenders = [NSMutableArray arrayWithCapacity:0];

for (RTCRtpSender *sender in self.peerConnection.senders) {

if (sender.track && [kRTCMediaStreamTrackKindVideo isEqualToString:sender.track.kind]) {

[videoSenders addObject:sender];

}

}

if (videoSenders.count > 0) {

RTCRtpSender *firstSender = [videoSenders firstObject];

RTCRtpParameters *parameters = firstSender.parameters;

NSNumber *maxBitrateBps = [NSNumber numberWithInt:maxBitrate];

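parameters.encodings.firstObject.maxBitrateBps = maxBitrateBps;

// parameters is a copy; assign it back so the sender applies the new value.

firstSender.parameters = parameters;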

}

}

- (void)setMaxFramerate:(int)maxFramerate {

NSMutableArray *videoSenders = [NSMutableArray arrayWithCapacity:0];

for (RTCRtpSender *sender in self.peerConnection.senders) {

if (sender.track && [kRTCMediaStreamTrackKindVideo isEqualToString:sender.track.kind]) {

[videoSenders addObject:sender];

}

}

if (videoSenders.count > 0) {

RTCRtpSender *firstSender = [videoSenders firstObject];

RTCRtpParameters *parameters = firstSender.parameters;

NSNumber *maxFramerateNum = [NSNumber numberWithInt:maxFramerate];

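// This GoogleWebRTC version may not expose maxFramerate yet; update to a newer release if this line does not compile.

parameters.encodings.firstObject.maxFramerate = maxFramerateNum;

// parameters is a copy; assign it back so the sender applies the new value.

firstSender.parameters = parameters;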

}

}

- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer {

if (!self.isPublish) {

return;

}

if (!renderer) {

return;

}

if (!self.videoCapturer) {

return;

}

RTCVideoCapturer *capturer = self.videoCapturer;

if ([capturer isKindOfClass:[RTCCameraVideoCapturer class]]) {

if (!([RTCCameraVideoCapturer captureDevices].count > 0)) {

return;

}

AVCaptureDevice *frontCamera = RTCCameraVideoCapturer.captureDevices.firstObject;

// if (frontCamera.position != AVCaptureDevicePositionFront) {

// return;

// }

RTCCameraVideoCapturer *cameraVideoCapturer = (RTCCameraVideoCapturer *)capturer;

AVCaptureDeviceFormat *formatNilable;

NSArray *supportDeviceFormats = [RTCCameraVideoCapturer supportedFormatsForDevice:frontCamera];

NSLog(@"supportDeviceFormats:%@",supportDeviceFormats);

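// Index 4 is device-specific; fall back to the last format when fewer are reported.

formatNilable = supportDeviceFormats.count > 4 ? supportDeviceFormats[4] : supportDeviceFormats.lastObject;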

// if (supportDeviceFormats && supportDeviceFormats.count > 0) {

// NSMutableArray *formats = [NSMutableArray arrayWithCapacity:0];

// for (AVCaptureDeviceFormat *format in supportDeviceFormats) {

// CMVideoDimensions videoVideoDimensions = CMVideoFormatDescriptionGetDimensions(format.formatDescription);

// float width = videoVideoDimensions.width;

// float height = videoVideoDimensions.height;

// // only use 16:9 format.

// if ((width / height) >= (16.0/9.0)) {

// [formats addObject:format];

// }

// }

//

// if (formats.count > 0) {

// NSArray *sortedFormats = [formats sortedArrayUsingComparator:^NSComparisonResult(AVCaptureDeviceFormat *obj1, AVCaptureDeviceFormat *obj2) {

// CMVideoDimensions f1VD = CMVideoFormatDescriptionGetDimensions(obj1.formatDescription);

// CMVideoDimensions f2VD = CMVideoFormatDescriptionGetDimensions(obj2.formatDescription);

// float width1 = f1VD.width;

// float width2 = f2VD.width;

// float height2 = f2VD.height;

// // only use 16:9 format.

// if ((width2 / height2) >= (1.7)) {

// return NSOrderedAscending;

// } else {

// return NSOrderedDescending;

// }

// }];

//

// if (sortedFormats && sortedFormats.count > 0) {

// formatNilable = sortedFormats.lastObject;

// }

// }

// }

if (!formatNilable) {

return;

}

NSArray *formatArr = [RTCCameraVideoCapturer supportedFormatsForDevice:frontCamera];

for (AVCaptureDeviceFormat *format in formatArr) {

NSLog(@"AVCaptureDeviceFormat format:%@", format);

}

[cameraVideoCapturer startCaptureWithDevice:frontCamera format:formatNilable fps:20 completionHandler:^(NSError *error) {

NSLog(@"startCaptureWithDevice error:%@", error);

}];

}

if ([capturer isKindOfClass:[RTCFileVideoCapturer class]]) {

RTCFileVideoCapturer *fileVideoCapturer = (RTCFileVideoCapturer *)capturer;

[fileVideoCapturer startCapturingFromFileNamed:@"beautyPicture.mp4" onError:^(NSError * _Nonnull error) {

NSLog(@"startCaptureLocalVideo startCapturingFromFileNamed error:%@", error);

}];

}

[self.localVideoTrack addRenderer:renderer];

}

- (void)renderRemoteVideo:(id<RTCVideoRenderer>)renderer {

// Remote video is only rendered in play mode (see didAddStream below).

if (self.isPublish) {

return;

}

self.remoteRenderView = renderer;

}

- (RTCDataChannel *)createDataChannel {

RTCDataChannelConfiguration *config = [[RTCDataChannelConfiguration alloc] init];

RTCDataChannel *dataChannel = [self.peerConnection dataChannelForLabel:@"WebRTCData" configuration:config];

if (!dataChannel) {

return nil;

}

dataChannel.delegate = self;

self.localDataChannel = dataChannel;

return dataChannel;

}

- (void)sendData:(NSData *)data {

RTCDataBuffer *buffer = [[RTCDataBuffer alloc] initWithData:data isBinary:YES];

[self.remoteDataChannel sendData:buffer];

}

- (void)changeSDP2Server:(RTCSessionDescription *)sdp

urlStr:(NSString *)urlStr

streamUrl:(NSString *)streamUrl

closure:(void (^)(BOOL isServerRetSuc))closure {

__weak typeof(self) weakSelf = self;

[self.httpClient changeSDP2Server:sdp urlStr:urlStr streamUrl:streamUrl closure:^(NSDictionary *result) {

if (result && [result isKindOfClass:[NSDictionary class]]) {

NSString *sdp = [result objectForKey:@"sdp"];

if (sdp && [sdp isKindOfClass:[NSString class]] && sdp.length > 0) {

RTCSessionDescription *remoteSDP = [[RTCSessionDescription alloc] initWithType:RTCSdpTypeAnswer sdp:sdp];

[weakSelf setRemoteSdp:remoteSDP completion:^(NSError * _Nullable error) {

NSLog(@"changeSDP2Server setRemoteDescription error:%@", error);

}];

}

}

}];

}

#pragma mark - Hide or show Video

- (void)hidenVideo {

[self setVideoEnabled:NO];

}

- (void)showVideo {

[self setVideoEnabled:YES];

}

- (void)setVideoEnabled:(BOOL)isEnabled {

[self setTrackEnabled:[RTCVideoTrack class] isEnabled:isEnabled];

}

- (void)setTrackEnabled:(Class)track isEnabled:(BOOL)isEnabled {

for (RTCRtpTransceiver *transceiver in self.peerConnection.transceivers) {

// Compare the class of the sender's track, not the transceiver itself.

if ([transceiver.sender.track isKindOfClass:track]) {

transceiver.sender.track.isEnabled = isEnabled;

}

}

}

#pragma mark - Mute or unmute Audio

- (void)muteAudio {

[self setAudioEnabled:NO];

}

- (void)unmuteAudio {

[self setAudioEnabled:YES];

}

- (void)speakOff {

__weak typeof(self) weakSelf = self;

dispatch_async(self.audioQueue, ^{

[weakSelf.rtcAudioSession lockForConfiguration];

@try {

NSError *error;

[weakSelf.rtcAudioSession setCategory:AVAudioSessionCategoryPlayAndRecord withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker error:&error];

NSError *ooapError;

[weakSelf.rtcAudioSession overrideOutputAudioPort:AVAudioSessionPortOverrideNone error:&ooapError];

NSLog(@"speakOff error:%@, ooapError:%@", error, ooapError);

} @catch (NSException *exception) {

NSLog(@"speakOff exception:%@", exception);

}

[weakSelf.rtcAudioSession unlockForConfiguration];

});

}

- (void)speakOn {

__weak typeof(self) weakSelf = self;

dispatch_async(self.audioQueue, ^{

[weakSelf.rtcAudioSession lockForConfiguration];

@try {

NSError *error;

[weakSelf.rtcAudioSession setCategory:AVAudioSessionCategoryPlayAndRecord withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker error:&error];

NSError *ooapError;

[weakSelf.rtcAudioSession overrideOutputAudioPort:AVAudioSessionPortOverrideSpeaker error:&ooapError];

NSError *activeError;

[weakSelf.rtcAudioSession setActive:YES error:&activeError];

NSLog(@"speakOn error:%@, ooapError:%@, activeError:%@", error, ooapError, activeError);

} @catch (NSException *exception) {

NSLog(@"speakOn exception:%@", exception);

}

[weakSelf.rtcAudioSession unlockForConfiguration];

});

}

- (void)setAudioEnabled:(BOOL)isEnabled {

[self setTrackEnabled:[RTCAudioTrack class] isEnabled:isEnabled];

}

#pragma mark - RTCPeerConnectionDelegate

/** Called when the SignalingState changed. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didChangeSignalingState:(RTCSignalingState)stateChanged {

NSLog(@"peerConnection didChangeSignalingState:%ld", (long)stateChanged);

}

/** Called when media is received on a new stream from remote peer. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection didAddStream:(RTCMediaStream *)stream {

NSLog(@"peerConnection didAddStream");

if (self.isPublish) {

return;

}

NSArray *videoTracks = stream.videoTracks;

if (videoTracks && videoTracks.count > 0) {

RTCVideoTrack *track = videoTracks.firstObject;

self.remoteVideoTrack = track;

}

if (self.remoteVideoTrack && self.remoteRenderView) {

id<RTCVideoRenderer> remoteRenderView = self.remoteRenderView;

RTCVideoTrack *remoteVideoTrack = self.remoteVideoTrack;

[remoteVideoTrack addRenderer:remoteRenderView];

}

/*

RTCAudioTrack *audioTrack = stream.audioTracks.firstObject;

if (audioTrack) {

NSLog(@"audio track found");

audioTrack.source.volume = 8;

}

*/

}

/** Called when a remote peer closes a stream.

* This is not called when RTCSdpSemanticsUnifiedPlan is specified.

*/

- (void)peerConnection:(RTCPeerConnection *)peerConnection didRemoveStream:(RTCMediaStream *)stream {

NSLog(@"peerConnection didRemoveStream");

}

/** Called when negotiation is needed, for example ICE has restarted. */

- (void)peerConnectionShouldNegotiate:(RTCPeerConnection *)peerConnection {

NSLog(@"peerConnection peerConnectionShouldNegotiate");

}

/** Called any time the IceConnectionState changes. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didChangeIceConnectionState:(RTCIceConnectionState)newState {

NSLog(@"peerConnection didChangeIceConnectionState:%ld", (long)newState);

if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didChangeConnectionState:)]) {

[self.delegate webRTCClient:self didChangeConnectionState:newState];

}

}

/** Called any time the IceGatheringState changes. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didChangeIceGatheringState:(RTCIceGatheringState)newState {

NSLog(@"peerConnection didChangeIceGatheringState:%ld", (long)newState);

}

/** New ice candidate has been found. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didGenerateIceCandidate:(RTCIceCandidate *)candidate {

NSLog(@"peerConnection didGenerateIceCandidate:%@", candidate);

if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didDiscoverLocalCandidate:)]) {

[self.delegate webRTCClient:self didDiscoverLocalCandidate:candidate];

}

}

/** Called when a group of local Ice candidates have been removed. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didRemoveIceCandidates:(NSArray<RTCIceCandidate *> *)candidates {

NSLog(@"peerConnection didRemoveIceCandidates:%@", candidates);

}

/** New data channel has been opened. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didOpenDataChannel:(RTCDataChannel *)dataChannel {

NSLog(@"peerConnection didOpenDataChannel:%@", dataChannel);

self.remoteDataChannel = dataChannel;

}

/** Called when signaling indicates a transceiver will be receiving media from

* the remote endpoint.

* This is only called with RTCSdpSemanticsUnifiedPlan specified.

*/

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didStartReceivingOnTransceiver:(RTCRtpTransceiver *)transceiver {

NSLog(@"peerConnection didStartReceivingOnTransceiver:%@", transceiver);

}

/** Called when a receiver and its track are created. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didAddReceiver:(RTCRtpReceiver *)rtpReceiver

streams:(NSArray<RTCMediaStream *> *)mediaStreams {

NSLog(@"peerConnection didAddReceiver");

}

/** Called when the receiver and its track are removed. */

- (void)peerConnection:(RTCPeerConnection *)peerConnection

didRemoveReceiver:(RTCRtpReceiver *)rtpReceiver {

NSLog(@"peerConnection didRemoveReceiver");

}

#pragma mark - RTCDataChannelDelegate

/** The data channel state changed. */

- (void)dataChannelDidChangeState:(RTCDataChannel *)dataChannel {

NSLog(@"dataChannelDidChangeState:%@", dataChannel);

}

/** The data channel successfully received a data buffer. */

- (void)dataChannel:(RTCDataChannel *)dataChannel

didReceiveMessageWithBuffer:(RTCDataBuffer *)buffer {

if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didReceiveData:)]) {

[self.delegate webRTCClient:self didReceiveData:buffer.data];

}

}

#pragma mark - Lazy

- (RTCPeerConnectionFactory *)factory {

if (!_factory) {

RTCInitializeSSL();

RTCDefaultVideoEncoderFactory *videoEncoderFactory = [[RTCDefaultVideoEncoderFactory alloc] init];

RTCDefaultVideoDecoderFactory *videoDecoderFactory = [[RTCDefaultVideoDecoderFactory alloc] init];

for (RTCVideoCodecInfo *codec in videoEncoderFactory.supportedCodecs) {

if (codec.parameters) {

NSString *profile_level_id = codec.parameters[@"profile-level-id"];

if (profile_level_id && [profile_level_id isEqualToString:@"42e01f"]) {

videoEncoderFactory.preferredCodec = codec;

break;

}

}

}

_factory = [[RTCPeerConnectionFactory alloc] initWithEncoderFactory:videoEncoderFactory decoderFactory:videoDecoderFactory];

}

return _factory;

}

- (dispatch_queue_t)audioQueue {

if (!_audioQueue) {

_audioQueue = dispatch_queue_create("cn.ifour.webrtc", NULL);

}

return _audioQueue;

}

- (RTCAudioSession *)rtcAudioSession {

if (!_rtcAudioSession) {

_rtcAudioSession = [RTCAudioSession sharedInstance];

}

return _rtcAudioSession;

}

- (NSDictionary *)mediaConstrains {

if (!_mediaConstrains) {

_mediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:

kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveAudio,

kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveVideo,

kRTCMediaConstraintsValueTrue, @"IceRestart",

nil];

}

return _mediaConstrains;

}

- (NSDictionary *)publishMediaConstrains {

if (!_publishMediaConstrains) {

_publishMediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:

kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveAudio,

kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveVideo,

kRTCMediaConstraintsValueTrue, @"IceRestart",

nil];

}

return _publishMediaConstrains;

}

- (NSDictionary *)playMediaConstrains {

if (!_playMediaConstrains) {

_playMediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:

kRTCMediaConstraintsValueTrue, kRTCMediaConstraintsOfferToReceiveAudio,

kRTCMediaConstraintsValueTrue, kRTCMediaConstraintsOfferToReceiveVideo,

kRTCMediaConstraintsValueTrue, @"IceRestart",

nil];

}

return _playMediaConstrains;

}

- (NSDictionary *)optionalConstraints {

if (!_optionalConstraints) {

_optionalConstraints = [[NSDictionary alloc] initWithObjectsAndKeys:

kRTCMediaConstraintsValueTrue, @"DtlsSrtpKeyAgreement",

nil];

}

return _optionalConstraints;

}

@end

3. Displaying the local video

Use RTCEAGLVideoView to display the local camera video.

self.localRenderer = [[RTCEAGLVideoView alloc] initWithFrame:CGRectZero];

// self.localRenderer.videoContentMode = UIViewContentModeScaleAspectFill;

[self addSubview:self.localRenderer];

[self.webRTCClient startCaptureLocalVideo:self.localRenderer];

The code is as follows.

PublishView.h

#import <UIKit/UIKit.h>

#import "WebRTCClient.h"

@interface PublishView : UIView

- (instancetype)initWithFrame:(CGRect)frame webRTCClient:(WebRTCClient *)webRTCClient;

@end

PublishView.m

#import "PublishView.h"

@interface PublishView ()

@property (nonatomic, strong) WebRTCClient *webRTCClient;

@property (nonatomic, strong) RTCEAGLVideoView *localRenderer;

@end

@implementation PublishView

- (instancetype)initWithFrame:(CGRect)frame webRTCClient:(WebRTCClient *)webRTCClient {

self = [super initWithFrame:frame];

if (self) {

self.webRTCClient = webRTCClient;

self.localRenderer = [[RTCEAGLVideoView alloc] initWithFrame:CGRectZero];

// self.localRenderer.videoContentMode = UIViewContentModeScaleAspectFill;

[self addSubview:self.localRenderer];

[self.webRTCClient startCaptureLocalVideo:self.localRenderer];

}

return self;

}

- (void)layoutSubviews {

[super layoutSubviews];

self.localRenderer.frame = self.bounds;

NSLog(@"self.localRenderer frame:%@", NSStringFromCGRect(self.localRenderer.frame));

}

@end

4. The ossrs RTC publish service

Here I call rtc/v1/publish/ to obtain the remote SDP from ossrs. The request URL is: https://192.168.10.100:1990/rtc/v1/publish/
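To make the exchange concrete, here is a minimal sketch of the request body and of reading the reply, using Foundation only. The keys (`api`, `tid`, `streamurl`, `sdp`, `clientip`) are the ones HttpClient sends in this article. The helper names `BuildPublishBody` and `AnswerSdpFromResponse` are hypothetical, and the reply shape (a JSON object with `code` equal to 0 and an `sdp` string on success) is an assumption that should be checked against your ossrs version.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper: builds the JSON body posted to rtc/v1/publish/.
// tid and clientIp are only added when they are non-empty.
static NSData *BuildPublishBody(NSString *api, NSString *streamUrl,
                                NSString *offerSdp, NSString *tid,
                                NSString *clientIp, NSError **error) {
    NSMutableDictionary *body = [NSMutableDictionary dictionary];
    body[@"api"] = api;
    body[@"streamurl"] = streamUrl;
    body[@"sdp"] = offerSdp;
    if (tid.length > 0) {
        body[@"tid"] = tid;
    }
    if (clientIp.length > 0) {
        body[@"clientip"] = clientIp;
    }
    return [NSJSONSerialization dataWithJSONObject:body options:0 error:error];
}

// Hypothetical helper: extracts the answer SDP from the server reply.
// Assumption: success is signalled by code == 0 plus a non-empty "sdp" string.
static NSString *AnswerSdpFromResponse(NSData *data) {
    if (data.length == 0) {
        return nil;
    }
    id json = [NSJSONSerialization JSONObjectWithData:data options:0 error:NULL];
    if (![json isKindOfClass:[NSDictionary class]]) {
        return nil;
    }
    NSDictionary *dict = (NSDictionary *)json;
    id code = dict[@"code"];
    if (![code isKindOfClass:[NSNumber class]] || [(NSNumber *)code integerValue] != 0) {
        return nil;
    }
    id sdp = dict[@"sdp"];
    if (![sdp isKindOfClass:[NSString class]] || [(NSString *)sdp length] == 0) {
        return nil;
    }
    return (NSString *)sdp;
}
```

The string returned by `AnswerSdpFromResponse` would be the value to wrap in an answer-type RTCSessionDescription; returning nil for anything unexpected keeps a malformed reply from reaching the peer connection.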

The HTTP request is made with NSURLSessionDataTask. The request code is as follows.

HttpClient.h

#import <Foundation/Foundation.h>

#import <WebRTC/WebRTC.h>

@interface HttpClient : NSObject<NSURLSessionDelegate>

- (void)changeSDP2Server:(RTCSessionDescription *)sdp

urlStr:(NSString *)urlStr

streamUrl:(NSString *)streamUrl

closure:(void (^)(NSDictionary *result))closure;

@end

HttpClient.m

#import "HttpClient.h"

#import "IPUtil.h"

@interface HttpClient ()

@property (nonatomic, strong) NSURLSession *session;

@end

@implementation HttpClient

- (instancetype)init

{

self = [super init];

if (self) {

self.session = [NSURLSession sessionWithConfiguration:[NSURLSessionConfiguration defaultSessionConfiguration] delegate:self delegateQueue:[NSOperationQueue mainQueue]];

}

return self;

}

- (void)changeSDP2Server:(RTCSessionDescription *)sdp

urlStr:(NSString *)urlStr

streamUrl:(NSString *)streamUrl

closure:(void (^)(NSDictionary *result))closure {

// Build the request URL

NSURL *urlString = [NSURL URLWithString:urlStr];

// Create a mutable request object

NSMutableURLRequest* mutableRequest = [[NSMutableURLRequest alloc] initWithURL:urlString];

// Set the HTTP method

[mutableRequest setHTTPMethod:@"POST"];

// Create a dictionary holding the data to upload

NSMutableDictionary *dict = [[NSMutableDictionary alloc] init];

[dict setValue:urlStr forKey:@"api"];

[dict setValue:[self createTid] forKey:@"tid"];

[dict setValue:streamUrl forKey:@"streamurl"];

[dict setValue:sdp.sdp forKey:@"sdp"];

[dict setValue:[IPUtil localWiFiIPAddress] forKey:@"clientip"];

// Serialize the dictionary to NSData

NSData *dictPhoneData = [NSJSONSerialization dataWithJSONObject:dict options:0 error:nil];

// Set the request body

[mutableRequest setHTTPBody:dictPhoneData];

// Set the request headers

[mutableRequest addValue:@"application/json" forHTTPHeaderField:@"Content-Type"];

[mutableRequest addValue:@"application/json" forHTTPHeaderField:@"Accept"];

// Create the task

NSURLSessionDataTask *dataTask = [self.session dataTaskWithRequest:mutableRequest completionHandler:^(NSData * _Nullable data, NSURLResponse * _Nullable response, NSError * _Nullable error) {

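if (error == nil && data != nil) {

NSLog(@"Request succeeded: %@", data);

// NSUTF8StringEncoding is the NSStringEncoding constant; kCFStringEncodingUTF8 is a CFStringEncoding value and does not belong here

NSString *dataString = [[NSString alloc] initWithData:data encoding:NSUTF8StringEncoding];

NSLog(@"Request succeeded, dataString:%@", dataString);

NSDictionary *result = [NSJSONSerialization JSONObjectWithData:data options:NSJSONReadingAllowFragments error:nil];

NSLog(@"NSURLSessionDataTask result:%@", result);

if (closure) {

closure(result);

}

} else {

NSLog(@"Network request failed: %@", error);

// Report the failure with a nil result so the caller is not left waiting

if (closure) {

closure(nil);

}

}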

}];

// Start the task

[dataTask resume];

}

- (NSString *)createTid {

// Use 7 hex characters of a random value as the transaction id.

// The original formatted "timestamp*random" and kept its first 7 characters, which are always the leading digits of the timestamp and therefore barely change between calls.

NSString *str = [NSString stringWithFormat:@"%08x", arc4random()];

return [str substringToIndex:7];

}

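#pragma mark - NSURLSessionDelegate

// Note: this accepts the server certificate without evaluating it, which suits a self-signed development server only.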

-(void)URLSession:(NSURLSession *)session didReceiveChallenge:(NSURLAuthenticationChallenge *)challenge completionHandler:(void (^)(NSURLSessionAuthChallengeDisposition, NSURLCredential * _Nullable))completionHandler {

NSURLSessionAuthChallengeDisposition disposition = NSURLSessionAuthChallengePerformDefaultHandling;

__block NSURLCredential *credential = nil;

if ([challenge.protectionSpace.authenticationMethod isEqualToString:NSURLAuthenticationMethodServerTrust]) {

credential = [NSURLCredential credentialForTrust:challenge.protectionSpace.serverTrust];

if (credential) {

disposition = NSURLSessionAuthChallengeUseCredential;

} else {

disposition = NSURLSessionAuthChallengePerformDefaultHandling;

}

} else {

disposition = NSURLSessionAuthChallengePerformDefaultHandling;

}

if (completionHandler) {

completionHandler(disposition, credential);

}

}

@end

The request uses a helper class that looks up the local IP address. Its code is as follows.

IPUtil.h

#import <Foundation/Foundation.h>

@interface IPUtil : NSObject

+ (NSString *)localWiFiIPAddress;

@end

IPUtil.m

#import "IPUtil.h"

#include <arpa/inet.h>

#include <netdb.h>

#include <net/if.h>

#include <ifaddrs.h>

#import <dlfcn.h>

#import <SystemConfiguration/SystemConfiguration.h>

@implementation IPUtil

+ (NSString *)localWiFiIPAddress

{

NSString *address = nil;

struct ifaddrs *addrs = NULL;

if (getifaddrs(&addrs) != 0) {

return nil;

}

for (const struct ifaddrs *cursor = addrs; cursor != NULL; cursor = cursor->ifa_next) {

// ifa_addr may be NULL; also skip non-IPv4 entries and the loopback address

if (cursor->ifa_addr == NULL || cursor->ifa_addr->sa_family != AF_INET || (cursor->ifa_flags & IFF_LOOPBACK) != 0) {

continue;

}

NSString *name = [NSString stringWithUTF8String:cursor->ifa_name];

if ([name isEqualToString:@"en0"]) { // Wi-Fi adapter

address = [NSString stringWithUTF8String:inet_ntoa(((struct sockaddr_in *)cursor->ifa_addr)->sin_addr)];

break;

}

}

// Always release the list; the original returned from inside the loop and leaked it

freeifaddrs(addrs);

return address;

}

@end

5. Calling the ossrs RTC publish service

To publish through ossrs, create the offer locally, apply it with setLocalDescription, and then call rtc/v1/publish/.

The code is as follows.

- (void)publishBtnClick {

__weak typeof(self) weakSelf = self;

[self.webRTCClient offer:^(RTCSessionDescription *sdp) {

[weakSelf.webRTCClient changeSDP2Server:sdp urlStr:@"https://192.168.10.100:1990/rtc/v1/publish/" streamUrl:@"webrtc://192.168.10.100:1990/live/livestream" closure:^(BOOL isServerRetSuc) {

NSLog(@"isServerRetSuc:%@",(isServerRetSuc?@"YES":@"NO"));

}];

}];

}

The view controller below builds the UI and triggers publishing.

PublishViewController.h

#import <UIKit/UIKit.h>

#import "PublishView.h"

@interface PublishViewController : UIViewController

@end

PublishViewController.m

#import "PublishViewController.h"

@interface PublishViewController ()<WebRTCClientDelegate>

@property (nonatomic, strong) WebRTCClient *webRTCClient;

@property (nonatomic, strong) PublishView *publishView;

@property (nonatomic, strong) UIButton *publishBtn;

@end

@implementation PublishViewController

- (void)viewDidLoad {

[super viewDidLoad];

// Do any additional setup after loading the view.

self.view.backgroundColor = [UIColor whiteColor];

self.publishView = [[PublishView alloc] initWithFrame:CGRectZero webRTCClient:self.webRTCClient];

[self.view addSubview:self.publishView];

self.publishView.backgroundColor = [UIColor lightGrayColor];

self.publishView.frame = self.view.bounds;

CGFloat screenWidth = CGRectGetWidth(self.view.bounds);

CGFloat screenHeight = CGRectGetHeight(self.view.bounds);

self.publishBtn = [UIButton buttonWithType:UIButtonTypeCustom];

self.publishBtn.frame = CGRectMake(50, screenHeight - 160, screenWidth - 2*50, 46);

self.publishBtn.layer.cornerRadius = 4;

self.publishBtn.backgroundColor = [UIColor grayColor];

[self.publishBtn setTitle:@"publish" forState:UIControlStateNormal];

[self.publishBtn addTarget:self action:@selector(publishBtnClick) forControlEvents:UIControlEventTouchUpInside];

[self.view addSubview:self.publishBtn];

self.webRTCClient.delegate = self;

}

- (void)publishBtnClick {

__weak typeof(self) weakSelf = self;

[self.webRTCClient offer:^(RTCSessionDescription *sdp) {

[weakSelf.webRTCClient changeSDP2Server:sdp urlStr:@"https://192.168.10.100:1990/rtc/v1/publish/" streamUrl:@"webrtc://192.168.10.100:1990/live/livestream" closure:^(BOOL isServerRetSuc) {

NSLog(@"isServerRetSuc:%@",(isServerRetSuc?@"YES":@"NO"));

}];

}];

}

#pragma mark - WebRTCClientDelegate

- (void)webRTCClient:(WebRTCClient *)client didDiscoverLocalCandidate:(RTCIceCandidate *)candidate {

NSLog(@"webRTCClient didDiscoverLocalCandidate");

}

- (void)webRTCClient:(WebRTCClient *)client didChangeConnectionState:(RTCIceConnectionState)state {

NSLog(@"webRTCClient didChangeConnectionState");

/**

RTCIceConnectionStateNew,

RTCIceConnectionStateChecking,

RTCIceConnectionStateConnected,

RTCIceConnectionStateCompleted,

RTCIceConnectionStateFailed,

RTCIceConnectionStateDisconnected,

RTCIceConnectionStateClosed,

RTCIceConnectionStateCount,

*/

UIColor *textColor = [UIColor blackColor];

BOOL openSpeak = NO;

switch (state) {

case RTCIceConnectionStateCompleted:

case RTCIceConnectionStateConnected:

textColor = [UIColor greenColor];

openSpeak = YES;

break;

case RTCIceConnectionStateDisconnected:

textColor = [UIColor orangeColor];

break;

case RTCIceConnectionStateFailed:

case RTCIceConnectionStateClosed:

textColor = [UIColor redColor];

break;

case RTCIceConnectionStateNew:

case RTCIceConnectionStateChecking:

case RTCIceConnectionStateCount:

textColor = [UIColor blackColor];

break;

default:

break;

}

dispatch_async(dispatch_get_main_queue(), ^{

NSString *text = [NSString stringWithFormat:@"%ld", (long)state];

[self.publishBtn setTitle:text forState:UIControlStateNormal];

[self.publishBtn setTitleColor:textColor forState:UIControlStateNormal];

if (openSpeak) {

[self.webRTCClient speakOn];

}

});

}

- (void)webRTCClient:(WebRTCClient *)client didReceiveData:(NSData *)data {

NSLog(@"webRTCClient didReceiveData");

}

#pragma mark - Lazy

- (WebRTCClient *)webRTCClient {

if (!_webRTCClient) {

_webRTCClient = [[WebRTCClient alloc] initWithPublish:YES];

}

return _webRTCClient;

}

@end

Tapping the button starts RTC publishing. The result is shown below.

6. Publishing a video file with WebRTC

WebRTC also provides RTCFileVideoCapturer for publishing from a video file:

if ([capturer isKindOfClass:[RTCFileVideoCapturer class]]) {

RTCFileVideoCapturer *fileVideoCapturer = (RTCFileVideoCapturer *)capturer;

[fileVideoCapturer startCapturingFromFileNamed:@"beautyPicture.mp4" onError:^(NSError * _Nonnull error) {

NSLog(@"startCaptureLocalVideo startCapturingFromFileNamed error:%@", error);

}];

}
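A missing or misnamed file is an easy mistake here, so it can help to check the name before starting the capture. The sketch below assumes the capturer resolves a name of the form "name.extension" against the app's main bundle; that behaviour is an assumption to verify against your GoogleWebRTC version. `BundlePathForCaptureFile` is a hypothetical helper, not part of WebRTC.

```objc
#import <Foundation/Foundation.h>

// Hypothetical helper (not part of WebRTC): returns the path of a capture
// file inside the given bundle, or nil when the name cannot be resolved.
static NSString *BundlePathForCaptureFile(NSString *fileName, NSBundle *bundle) {
    NSArray<NSString *> *parts = [fileName componentsSeparatedByString:@"."];
    // Assumption: the name must be exactly "name.extension" (a single dot).
    if (parts.count != 2 || parts[0].length == 0 || parts[1].length == 0) {
        return nil;
    }
    // Returns nil when the resource is not present in the bundle.
    return [bundle pathForResource:parts[0] ofType:parts[1]];
}
```

For example, calling `BundlePathForCaptureFile(@"beautyPicture.mp4", [NSBundle mainBundle])` before `startCapturingFromFileNamed:onError:` and logging a nil result makes it clear when the video was not added to the app target.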

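The published local video looks like this.

This completes the iOS client that calls the ossrs video call service for WebRTC audio and video calls. There is a lot of material here, so some descriptions may be inaccurate; please bear with me.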

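7. Summary

This article implemented WebRTC audio and video calling from an iOS client against the ossrs video call service. Article URL: https://blog.csdn.net/gloryFlow/article/details/132262724

Study notes; keep improving every day.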