Official tutorial:

Language Modeling with nn.Transformer and torchtext — PyTorch Tutorials 2.0.1+cu117 documentation

LANGUAGE MODELING WITH NN.TRANSFORMER AND TORCHTEXT

This is a tutorial on training a model that uses nn.Transformer to predict the next word in a sequence.

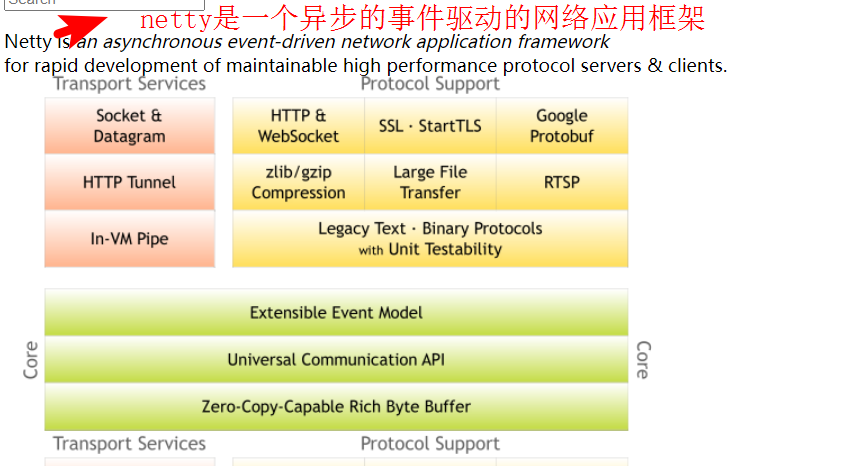

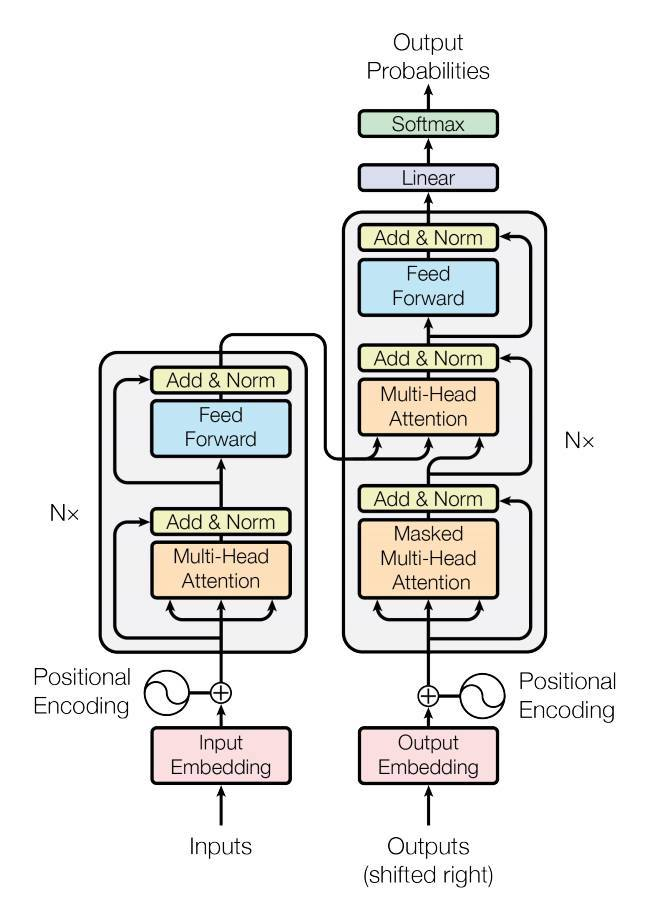

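The PyTorch 1.2 release includes a standard transformer module based on the paper Attention is All You Need. Compared to recurrent neural networks (RNNs), the transformer model has proven to be superior in quality for many sequence-to-sequence tasks while being more parallelizable.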

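The nn.Transformer module relies entirely on an attention mechanism (implemented as nn.MultiheadAttention) to draw global dependencies between input and output. The nn.Transformer module is highly modularized, so a single component (e.g., nn.TransformerEncoder) can easily be adapted or composed.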
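To make "drawing global dependencies with attention" concrete, here is a minimal single-head scaled dot-product attention in plain Python, computing softmax(QK^T / sqrt(d_k)) V. It is an illustrative sketch, not the implementation inside nn.MultiheadAttention: it omits the learned input and output projections, multiple heads, dropout and masking, and the helper names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    # Subtract the max for numerical stability; exp(-inf) evaluates to 0.0
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Single-head attention: softmax(q @ k^T / sqrt(d_k)) @ v (hypothetical helper)."""
    d_k = len(k[0])
    out = []
    for q_row in q:
        # Similarity of this query with every key, scaled by sqrt(d_k)
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)
        # The output row is the attention-weighted sum of the value rows
        out.append([sum(w * v_row[j] for w, v_row in zip(weights, v))
                    for j in range(len(v[0]))])
    return out


# Every query is compared with every key, however far apart they are in the sequence
q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
print(scaled_dot_product_attention(q, k, v))
```

Because the attention weights of each query sum to 1, every output row is a convex combination of the value rows.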

Define the model

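In this tutorial, we train an nn.TransformerEncoder model on a language modeling task. Please note that this tutorial does not cover the training of nn.TransformerDecoder, depicted in the right half of the diagram above. The language modeling task is to assign a probability to the likelihood of a given word (or a sequence of words) following a sequence of words. A sequence of tokens is first passed to the embedding layer, followed by a positional encoding layer to account for the order of the words (see the PositionalEncoding module below for details). The nn.TransformerEncoder consists of multiple layers of nn.TransformerEncoderLayer. Along with the input sequence, a square attention mask is required because, for language modeling, the self-attention layers in the nn.TransformerEncoder may only attend to earlier positions in the sequence: any tokens in future positions must be masked. To produce a probability distribution over output words, the output of the nn.TransformerEncoder model is passed through a linear layer that outputs unnormalized logits. The log-softmax function isn't applied here because CrossEntropyLoss, used later, expects its inputs to be unnormalized logits.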
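The square mask described above can be sketched in plain Python: position i may attend to positions 0..i, so entries above the diagonal are -inf and all others are 0.0, the additive float-mask convention that nn.TransformerEncoder accepts (nn.Transformer.generate_square_subsequent_mask builds the same matrix as a tensor). Adding the mask to the attention scores before the softmax drives the weights of future positions to exactly zero. The helper names below are hypothetical.

```python
import math
from typing import List


def square_subsequent_mask(size: int) -> List[List[float]]:
    """Additive causal mask: 0.0 where attention is allowed, -inf above the diagonal."""
    return [[0.0 if j <= i else float('-inf') for j in range(size)] for i in range(size)]


def masked_softmax(scores: List[float], mask_row: List[float]) -> List[float]:
    # Add the mask before normalising; exp(-inf) == 0.0, so masked positions get no weight
    shifted = [s + m for s, m in zip(scores, mask_row)]
    peak = max(shifted)
    exps = [math.exp(x - peak) for x in shifted]
    total = sum(exps)
    return [e / total for e in exps]


mask = square_subsequent_mask(4)
for row in mask:
    print(row)
# Position 1 with equal raw scores for all 4 positions: only positions 0 and 1 get weight
print(masked_softmax([1.0, 1.0, 1.0, 1.0], mask[1]))  # [0.5, 0.5, 0.0, 0.0]
```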

import math
import os
from tempfile import TemporaryDirectory
from typing import Optional, Tuple

import torch
from torch import nn, Tensor
from torch.nn import TransformerEncoder, TransformerEncoderLayer
from torch.utils.data import dataset


class TransformerModel(nn.Module):

    def __init__(self, ntoken: int, d_model: int, nhead: int, d_hid: int,
                 nlayers: int, dropout: float = 0.5):
        super().__init__()
        self.model_type = 'Transformer'
        self.pos_encoder = PositionalEncoding(d_model, dropout)
        encoder_layers = TransformerEncoderLayer(d_model, nhead, d_hid, dropout)
        self.transformer_encoder = TransformerEncoder(encoder_layers, nlayers)
        self.embedding = nn.Embedding(ntoken, d_model)
        self.d_model = d_model
        self.linear = nn.Linear(d_model, ntoken)

        self.init_weights()

    def init_weights(self) -> None:
        initrange = 0.1
        self.embedding.weight.data.uniform_(-initrange, initrange)
        self.linear.bias.data.zero_()
        self.linear.weight.data.uniform_(-initrange, initrange)

    def forward(self, src: Tensor, src_mask: Optional[Tensor] = None) -> Tensor:
        """
        Arguments:
            src: Tensor, shape ``[seq_len, batch_size]``
            src_mask: Tensor, shape ``[seq_len, seq_len]``; if ``None``, a causal
                mask is built so each position attends only to itself and earlier positions

        Returns:
            output Tensor of shape ``[seq_len, batch_size, ntoken]``
        """
        src = self.embedding(src) * math.sqrt(self.d_model)
        src = self.pos_encoder(src)
        if src_mask is None:
            # Causal mask: -inf above the diagonal (future positions), 0.0 elsewhere.
            # Without it the encoder could look at the very token it must predict.
            seq_len = src.size(0)
            src_mask = torch.triu(
                torch.full((seq_len, seq_len), float('-inf'), device=src.device),
                diagonal=1)
        output = self.transformer_encoder(src, src_mask)
        output = self.linear(output)
        return output

The PositionalEncoding module injects some information about the relative or absolute position of the tokens in the sequence. The positional encodings have the same dimension as the embeddings so that the two can be summed. Here, we use sine and cosine functions of different frequencies.
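Written out, the encoding is PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)). PositionalEncoding computes the factor 10000^(-2i/d_model) as exp(2i * -ln(10000) / d_model), which is the same number. The plain-Python sketch below (a hypothetical helper, no torch) evaluates one row of that table so the formula can be checked by hand; like the class, it assumes d_model is even.

```python
import math
from typing import List


def positional_encoding_row(pos: int, d_model: int) -> List[float]:
    """Sinusoidal encoding of a single position; d_model is assumed to be even."""
    row = [0.0] * d_model
    for two_i in range(0, d_model, 2):
        # Same value as div_term in PositionalEncoding: exp(2i * -ln(10000) / d_model)
        div_term = math.exp(two_i * (-math.log(10000.0) / d_model))
        row[two_i] = math.sin(pos * div_term)      # even dimensions use sine
        row[two_i + 1] = math.cos(pos * div_term)  # odd dimensions use cosine
    return row


print(positional_encoding_row(0, 4))  # [0.0, 1.0, 0.0, 1.0]
print(positional_encoding_row(1, 4))
```

Low dimensions oscillate quickly with position and high dimensions slowly, which is what lets nearby and distant positions be told apart.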

class PositionalEncoding(nn.Module):

    def __init__(self, d_model: int, dropout: float = 0.1, max_len: int = 5000):
        super().__init__()
        self.dropout = nn.Dropout(p=dropout)

        position = torch.arange(max_len).unsqueeze(1)
        div_term = torch.exp(torch.arange(0, d_model, 2) * (-math.log(10000.0) / d_model))
        pe = torch.zeros(max_len, 1, d_model)
        pe[:, 0, 0::2] = torch.sin(position * div_term)
        pe[:, 0, 1::2] = torch.cos(position * div_term)
        # A buffer is saved in state_dict and moved by .to(device), but is not trained
        self.register_buffer('pe', pe)

    def forward(self, x: Tensor) -> Tensor:
        """
        Arguments:
            x: Tensor, shape ``[seq_len, batch_size, embedding_dim]``
        """
        x = x + self.pe[:x.size(0)]
        return self.dropout(x)

Load and batch data

This tutorial uses torchtext to generate the Wikitext-2 dataset. To access torchtext datasets, please install torchdata following the instructions at GitHub - pytorch/data: A PyTorch repo for data loading and utilities to be shared by the PyTorch domain libraries.

%%bash

pip install portalocker

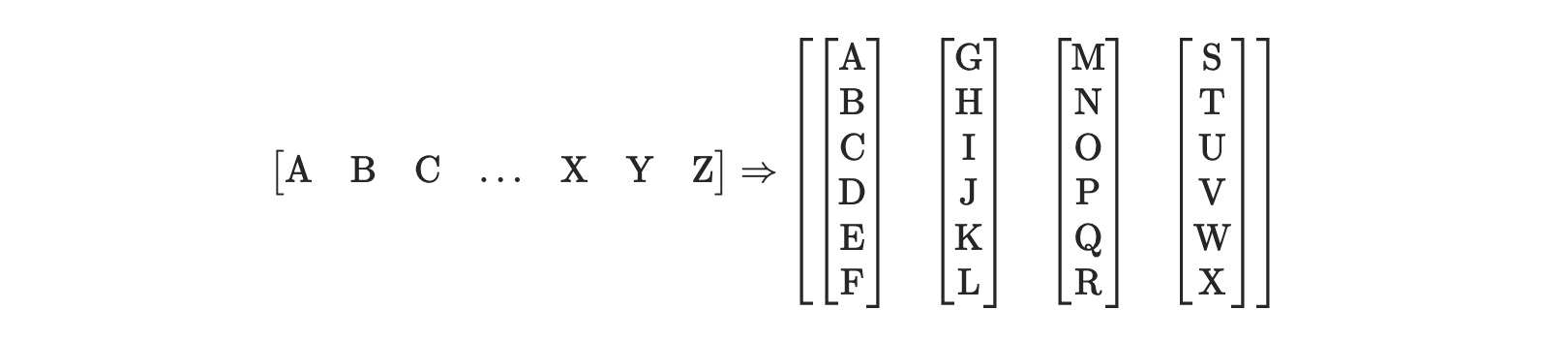

pip install torchdata词汇对象是基于训练数据集构建的,用于将令牌数值化为张量。Wikitext-2将稀疏令牌表示为unk。给定顺序数据的一维向量,batchify() 方法将数据排列到batch_size列中。如果数据没有均匀地分成batch_size列,那么数据将被裁剪。例如,以字母表为数据(总长度为26),batch_size=4,我们将字母表分成长度为6的序列,得到4个这样的序列。

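Batching enables more parallelizable processing. However, batching means that the model treats each column independently; in the example above, the dependence of G on F cannot be learned.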
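The alphabet example can be reproduced with a plain-Python stand-in for batchify() (batchify_list is a hypothetical helper, no torch): trim the data to a multiple of bsz, cut it into bsz contiguous sequences, then transpose so that each sequence becomes a column, mirroring data.view(bsz, seq_len).t() on a list.

```python
import string
from typing import List


def batchify_list(data: List[str], bsz: int) -> List[List[str]]:
    """Plain-list version of batchify(): returns seq_len rows of bsz items each."""
    seq_len = len(data) // bsz
    data = data[:seq_len * bsz]  # drop the elements that don't fit evenly
    # Like view(bsz, seq_len): bsz contiguous sequences of length seq_len
    sequences = [data[b * seq_len:(b + 1) * seq_len] for b in range(bsz)]
    # Like .t(): row r holds the r-th element of every sequence
    return [list(col) for col in zip(*sequences)]


rows = batchify_list(list(string.ascii_uppercase), 4)
for row in rows:
    print(' '.join(row))
# A G M S
# B H N T
# C I O U
# D J P V
# E K Q W
# F L R X
```

F is the last item of column 0 and G the first item of column 1, so no training example ever contains the pair F, G.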

from torchtext.datasets import WikiText2
from torchtext.data.utils import get_tokenizer
from torchtext.vocab import build_vocab_from_iterator

train_iter = WikiText2(split='train')
tokenizer = get_tokenizer('basic_english')
vocab = build_vocab_from_iterator(map(tokenizer, train_iter), specials=['<unk>'])
vocab.set_default_index(vocab['<unk>'])

def data_process(raw_text_iter: dataset.IterableDataset) -> Tensor:
    """Converts raw text into a flat Tensor."""
    data = [torch.tensor(vocab(tokenizer(item)), dtype=torch.long) for item in raw_text_iter]
    return torch.cat(tuple(filter(lambda t: t.numel() > 0, data)))

# ``train_iter`` was "consumed" by the process of building the vocab,
# so we have to create it again
train_iter, val_iter, test_iter = WikiText2()
train_data = data_process(train_iter)
val_data = data_process(val_iter)
test_data = data_process(test_iter)

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

def batchify(data: Tensor, bsz: int) -> Tensor:
    """Divides the data into ``bsz`` separate sequences, removing extra elements
    that wouldn't cleanly fit.

    Arguments:
        data: Tensor, shape ``[N]``
        bsz: int, batch size

    Returns:
        Tensor of shape ``[N // bsz, bsz]``
    """
    seq_len = data.size(0) // bsz
    data = data[:seq_len * bsz]
    data = data.view(bsz, seq_len).t().contiguous()
    return data.to(device)

batch_size = 20
eval_batch_size = 10
train_data = batchify(train_data, batch_size)  # shape ``[seq_len, batch_size]``
val_data = batchify(val_data, eval_batch_size)
test_data = batchify(test_data, eval_batch_size)

Functions to generate input and target sequences

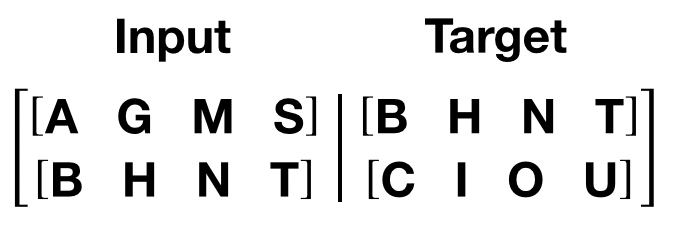

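get_batch() generates a pair of input and target sequences for the transformer model. It subdivides the source data into chunks of length bptt. For the language modeling task, the model needs the following words as the target. For example, with a bptt value of 2, for i = 0 we get two tensors: data, holding rows 0 and 1 of the source, and target, holding rows 1 and 2 flattened into a single dimension.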

Note that the chunks run along dimension 0, consistent with the S dimension in the Transformer model. The batch dimension N runs along dimension 1.
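Continuing the alphabet example from the batchify() discussion, the sketch below applies the same slicing as get_batch() to a plain list of rows with bptt = 2 (get_batch_list and the hard-coded table are hypothetical stand-ins for the tensor code). The target is the same columns shifted one step ahead, flattened row by row as reshape(-1) does for a [seq_len, batch_size] tensor.

```python
from typing import List, Tuple

# The alphabet batchified into 4 columns (A-F, G-L, M-R, S-X), one row per time step
source = [list(r) for r in ["AGMS", "BHNT", "CIOU", "DJPV", "EKQW", "FLRX"]]


def get_batch_list(source: List[List[str]], i: int,
                   bptt: int) -> Tuple[List[List[str]], List[str]]:
    seq_len = min(bptt, len(source) - 1 - i)  # the last chunk may be shorter
    data = source[i:i + seq_len]
    # Targets: the rows one step ahead, flattened in row-major order
    target = [tok for row in source[i + 1:i + 1 + seq_len] for tok in row]
    return data, target


data, target = get_batch_list(source, 0, bptt=2)
print(data)    # [['A', 'G', 'M', 'S'], ['B', 'H', 'N', 'T']]
print(target)  # ['B', 'H', 'N', 'T', 'C', 'I', 'O', 'U']
```

Each input token in data is paired with the token directly below it in the same column, which is why the chunking runs along dimension 0.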

bptt = 35

def get_batch(source: Tensor, i: int) -> Tuple[Tensor, Tensor]:
    """
    Args:
        source: Tensor, shape ``[full_seq_len, batch_size]``
        i: int

    Returns:
        tuple (data, target), where data has shape ``[seq_len, batch_size]`` and
        target has shape ``[seq_len * batch_size]``
    """
    seq_len = min(bptt, len(source) - 1 - i)
    data = source[i:i+seq_len]
    target = source[i+1:i+1+seq_len].reshape(-1)
    return data, target

Initiate an instance

The model hyperparameters are defined below. The vocabulary size is equal to the length of the vocab object.
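As a rough guide to how these hyperparameters translate into model size, the sketch below counts trainable parameters by hand. It assumes the default nn.TransformerEncoderLayer layout: a multi-head attention block with a packed Q/K/V input projection and an output projection, two feed-forward Linear layers and two LayerNorms, all with biases. The helpers are hypothetical; for the instantiated model, sum(p.numel() for p in model.parameters()) gives the exact figure. The number of heads does not appear, because the heads split d_model rather than adding weights.

```python
def encoder_layer_params(d_model: int, d_hid: int) -> int:
    attn = 3 * d_model * d_model + 3 * d_model  # packed Q/K/V projection + bias
    attn += d_model * d_model + d_model         # attention output projection + bias
    ffn = d_model * d_hid + d_hid               # linear1: d_model -> d_hid
    ffn += d_hid * d_model + d_model            # linear2: d_hid -> d_model
    norms = 2 * (2 * d_model)                   # two LayerNorms, weight + bias each
    return attn + ffn + norms


def count_params(ntokens: int, d_model: int, d_hid: int, nlayers: int) -> int:
    embedding = ntokens * d_model               # nn.Embedding(ntokens, d_model)
    decoder = d_model * ntokens + ntokens       # nn.Linear(d_model, ntokens)
    # PositionalEncoding only registers a buffer, so it adds no trainable parameters
    return embedding + nlayers * encoder_layer_params(d_model, d_hid) + decoder


print(encoder_layer_params(200, 200))  # 242000
```

With emsize = d_hid = 200 and nlayers = 2, the encoder stack is small next to the embedding and output layers, which both grow linearly with the vocabulary size.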

ntokens = len(vocab)  # size of vocabulary
emsize = 200  # embedding dimension
d_hid = 200  # dimension of the feedforward network model in ``nn.TransformerEncoder``
nlayers = 2  # number of ``nn.TransformerEncoderLayer`` in ``nn.TransformerEncoder``
nhead = 2  # number of heads in ``nn.MultiheadAttention``
dropout = 0.2  # dropout probability
model = TransformerModel(ntokens, emsize, nhead, d_hid, nlayers, dropout).to(device)

Run the model

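We use CrossEntropyLoss with the SGD (stochastic gradient descent) optimizer. The learning rate is initially set to 5.0 and follows a StepLR schedule that multiplies it by 0.95 after every epoch. During training, we use nn.utils.clip_grad_norm_ to prevent gradients from exploding.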
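Both mechanisms are easy to compute by hand. The plain-Python sketch below (hypothetical helpers, no torch) reproduces the StepLR schedule with step_size=1 and gamma=0.95, whose first three values 5.0, 4.75 and 4.5125 appear rounded as 5.00, 4.75 and 4.51 in the training log, and the rescaling that clip_grad_norm_ applies: when the global L2 norm of all gradients exceeds max_norm, every gradient is multiplied by max_norm / (total_norm + eps). The small eps of 1e-6 is meant to mirror PyTorch's numerical safeguard; treat the exact value as an assumption.

```python
import math
from typing import List


def step_lr(lr0: float, gamma: float, step_size: int, epoch: int) -> float:
    """Learning rate after ``epoch`` scheduler steps under StepLR."""
    return lr0 * gamma ** (epoch // step_size)


def clip_grad_norm(grads: List[List[float]], max_norm: float) -> List[List[float]]:
    # Global L2 norm over every entry of every parameter's gradient
    total_norm = math.sqrt(sum(g * g for grad in grads for g in grad))
    # The scale factor is capped at 1.0, so small gradients are left unchanged
    coef = min(1.0, max_norm / (total_norm + 1e-6))
    return [[g * coef for g in grad] for grad in grads]


print([step_lr(5.0, 0.95, 1, e) for e in range(3)])  # about 5.0, 4.75, 4.5125
print(clip_grad_norm([[3.0], [4.0]], max_norm=0.5))  # norm 5.0 rescaled to about 0.5
```

Clipping by the global norm rescales all gradients by the same factor, so the update direction is preserved and only its length shrinks.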

import time

criterion = nn.CrossEntropyLoss()
lr = 5.0  # learning rate
optimizer = torch.optim.SGD(model.parameters(), lr=lr)
# Multiply the learning rate by 0.95 each time scheduler.step() is called (once per epoch)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=1, gamma=0.95)

def train(model: nn.Module) -> None:
    model.train()  # turn on train mode
    total_loss = 0.
    log_interval = 200
    start_time = time.time()

    num_batches = len(train_data) // bptt
    for batch, i in enumerate(range(0, train_data.size(0) - 1, bptt)):
        data, targets = get_batch(train_data, i)
        output = model(data)
        output_flat = output.view(-1, ntokens)
        loss = criterion(output_flat, targets)

        optimizer.zero_grad()
        loss.backward()
        torch.nn.utils.clip_grad_norm_(model.parameters(), 0.5)
        optimizer.step()

        total_loss += loss.item()
        if batch % log_interval == 0 and batch > 0:
            lr = scheduler.get_last_lr()[0]
            ms_per_batch = (time.time() - start_time) * 1000 / log_interval
            cur_loss = total_loss / log_interval
            ppl = math.exp(cur_loss)
            # ``epoch`` is the global loop variable of the epoch loop below
            print(f'| epoch {epoch:3d} | {batch:5d}/{num_batches:5d} batches | '
                  f'lr {lr:02.2f} | ms/batch {ms_per_batch:5.2f} | '
                  f'loss {cur_loss:5.2f} | ppl {ppl:8.2f}')
            total_loss = 0
            start_time = time.time()

def evaluate(model: nn.Module, eval_data: Tensor) -> float:
    model.eval()  # turn on evaluation mode
    total_loss = 0.
    with torch.no_grad():
        for i in range(0, eval_data.size(0) - 1, bptt):
            data, targets = get_batch(eval_data, i)
            seq_len = data.size(0)
            output = model(data)
            output_flat = output.view(-1, ntokens)
            # Weight each chunk's mean loss by its length to get a per-position average
            total_loss += seq_len * criterion(output_flat, targets).item()
    return total_loss / (len(eval_data) - 1)

Loop over epochs. Save the model if the validation loss is the best we've seen so far. Adjust the learning rate after each epoch.
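In evaluate() above, each chunk's mean loss is multiplied by the chunk length and the sum is divided by len(eval_data) - 1, because the chunk lengths add up to exactly that number. The result is a per-position average even when the last chunk is shorter than bptt, and exp() of it is the reported perplexity. The plain-Python sketch below shows the arithmetic; the helpers and the per-chunk loss values are hypothetical, not results from this model.

```python
import math
from typing import List


def chunk_lengths(n_rows: int, bptt: int) -> List[int]:
    # Same chunking as the evaluate() loop: i = 0, bptt, 2*bptt, ... with a shorter tail
    return [min(bptt, n_rows - 1 - i) for i in range(0, n_rows - 1, bptt)]


def weighted_mean_loss(chunk_means: List[float], lengths: List[int]) -> float:
    return sum(m * n for m, n in zip(chunk_means, lengths)) / sum(lengths)


lengths = chunk_lengths(80, 35)
print(lengths, sum(lengths))  # [35, 35, 9] 79, i.e. n_rows - 1

# Hypothetical per-chunk mean losses; the short last chunk counts for only 9 positions
loss = weighted_mean_loss([2.0, 2.0, 4.0], lengths)
print(loss, math.exp(loss))  # perplexity is exp(average cross-entropy)
```

A plain mean of the three chunk losses would give the 9-position tail the same weight as each 35-position chunk and overstate the loss.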

best_val_loss = float('inf')
epochs = 3

with TemporaryDirectory() as tempdir:
    best_model_params_path = os.path.join(tempdir, "best_model_params.pt")

    for epoch in range(1, epochs + 1):
        epoch_start_time = time.time()
        train(model)
        val_loss = evaluate(model, val_data)
        val_ppl = math.exp(val_loss)
        elapsed = time.time() - epoch_start_time
        print('-' * 89)
        print(f'| end of epoch {epoch:3d} | time: {elapsed:5.2f}s | '
              f'valid loss {val_loss:5.2f} | valid ppl {val_ppl:8.2f}')
        print('-' * 89)

        if val_loss < best_val_loss:
            best_val_loss = val_loss
            torch.save(model.state_dict(), best_model_params_path)

        scheduler.step()
    model.load_state_dict(torch.load(best_model_params_path))  # load best model states

Output

The log below was recorded with a version of this code that applied no attention mask, so each position could attend to the very token it was asked to predict. The unusually low losses and perplexities are a symptom of that leakage rather than a realistic result for a two-layer model on WikiText-2.

| epoch 1 | 200/ 2928 batches | lr 5.00 | ms/batch 31.00 | loss 8.15 | ppl 3449.06

| epoch 1 | 400/ 2928 batches | lr 5.00 | ms/batch 28.73 | loss 6.25 | ppl 517.05

| epoch 1 | 600/ 2928 batches | lr 5.00 | ms/batch 28.56 | loss 5.61 | ppl 274.25

| epoch 1 | 800/ 2928 batches | lr 5.00 | ms/batch 28.42 | loss 5.31 | ppl 202.30

| epoch 1 | 1000/ 2928 batches | lr 5.00 | ms/batch 28.33 | loss 4.95 | ppl 140.81

| epoch 1 | 1200/ 2928 batches | lr 5.00 | ms/batch 28.28 | loss 4.55 | ppl 94.20

| epoch 1 | 1400/ 2928 batches | lr 5.00 | ms/batch 28.36 | loss 4.21 | ppl 67.25

| epoch 1 | 1600/ 2928 batches | lr 5.00 | ms/batch 28.45 | loss 3.99 | ppl 54.28

| epoch 1 | 1800/ 2928 batches | lr 5.00 | ms/batch 28.65 | loss 3.74 | ppl 41.89

| epoch 1 | 2000/ 2928 batches | lr 5.00 | ms/batch 28.56 | loss 3.66 | ppl 38.71

| epoch 1 | 2200/ 2928 batches | lr 5.00 | ms/batch 28.67 | loss 3.48 | ppl 32.44

| epoch 1 | 2400/ 2928 batches | lr 5.00 | ms/batch 28.74 | loss 3.49 | ppl 32.78

| epoch 1 | 2600/ 2928 batches | lr 5.00 | ms/batch 28.60 | loss 3.38 | ppl 29.50

| epoch 1 | 2800/ 2928 batches | lr 5.00 | ms/batch 28.46 | loss 3.29 | ppl 26.94

-----------------------------------------------------------------------------------------

| end of epoch 1 | time: 86.92s | valid loss 2.06 | valid ppl 7.88

-----------------------------------------------------------------------------------------

| epoch 2 | 200/ 2928 batches | lr 4.75 | ms/batch 28.88 | loss 3.10 | ppl 22.18

| epoch 2 | 400/ 2928 batches | lr 4.75 | ms/batch 28.50 | loss 3.02 | ppl 20.55

| epoch 2 | 600/ 2928 batches | lr 4.75 | ms/batch 28.65 | loss 2.86 | ppl 17.50

| epoch 2 | 800/ 2928 batches | lr 4.75 | ms/batch 28.68 | loss 2.85 | ppl 17.28

| epoch 2 | 1000/ 2928 batches | lr 4.75 | ms/batch 28.59 | loss 2.67 | ppl 14.43

| epoch 2 | 1200/ 2928 batches | lr 4.75 | ms/batch 28.55 | loss 2.68 | ppl 14.57

| epoch 2 | 1400/ 2928 batches | lr 4.75 | ms/batch 28.51 | loss 2.72 | ppl 15.13

| epoch 2 | 1600/ 2928 batches | lr 4.75 | ms/batch 28.44 | loss 2.69 | ppl 14.71

| epoch 2 | 1800/ 2928 batches | lr 4.75 | ms/batch 28.46 | loss 2.60 | ppl 13.51

| epoch 2 | 2000/ 2928 batches | lr 4.75 | ms/batch 28.51 | loss 2.61 | ppl 13.60

| epoch 2 | 2200/ 2928 batches | lr 4.75 | ms/batch 28.64 | loss 2.57 | ppl 13.04

| epoch 2 | 2400/ 2928 batches | lr 4.75 | ms/batch 28.67 | loss 2.57 | ppl 13.08

| epoch 2 | 2600/ 2928 batches | lr 4.75 | ms/batch 28.56 | loss 2.57 | ppl 13.05

| epoch 2 | 2800/ 2928 batches | lr 4.75 | ms/batch 28.61 | loss 2.55 | ppl 12.81

-----------------------------------------------------------------------------------------

| end of epoch 2 | time: 86.63s | valid loss 1.83 | valid ppl 6.24

-----------------------------------------------------------------------------------------

| epoch 3 | 200/ 2928 batches | lr 4.51 | ms/batch 28.71 | loss 2.43 | ppl 11.35

| epoch 3 | 400/ 2928 batches | lr 4.51 | ms/batch 28.82 | loss 2.37 | ppl 10.65

| epoch 3 | 600/ 2928 batches | lr 4.51 | ms/batch 28.67 | loss 2.27 | ppl 9.64

| epoch 3 | 800/ 2928 batches | lr 4.51 | ms/batch 28.74 | loss 2.29 | ppl 9.83

| epoch 3 | 1000/ 2928 batches | lr 4.51 | ms/batch 28.55 | loss 2.22 | ppl 9.22

| epoch 3 | 1200/ 2928 batches | lr 4.51 | ms/batch 28.73 | loss 2.25 | ppl 9.48

| epoch 3 | 1400/ 2928 batches | lr 4.51 | ms/batch 28.57 | loss 2.29 | ppl 9.89

| epoch 3 | 1600/ 2928 batches | lr 4.51 | ms/batch 28.73 | loss 2.36 | ppl 10.62

| epoch 3 | 1800/ 2928 batches | lr 4.51 | ms/batch 28.52 | loss 2.20 | ppl 9.07

| epoch 3 | 2000/ 2928 batches | lr 4.51 | ms/batch 28.61 | loss 2.26 | ppl 9.57

| epoch 3 | 2200/ 2928 batches | lr 4.51 | ms/batch 28.53 | loss 2.20 | ppl 9.03

| epoch 3 | 2400/ 2928 batches | lr 4.51 | ms/batch 28.45 | loss 2.23 | ppl 9.26

| epoch 3 | 2600/ 2928 batches | lr 4.51 | ms/batch 28.56 | loss 2.21 | ppl 9.13

| epoch 3 | 2800/ 2928 batches | lr 4.51 | ms/batch 28.54 | loss 2.31 | ppl 10.03

-----------------------------------------------------------------------------------------

| end of epoch 3 | time: 86.63s | valid loss 1.28 | valid ppl 3.60

-----------------------------------------------------------------------------------------

Evaluate the best model on the test dataset

test_loss = evaluate(model, test_data)
test_ppl = math.exp(test_loss)
print('=' * 89)
print(f'| End of training | test loss {test_loss:5.2f} | '
      f'test ppl {test_ppl:8.2f}')
print('=' * 89)

Output

=========================================================================================

| End of training | test loss 1.26 | test ppl 3.54

=========================================================================================