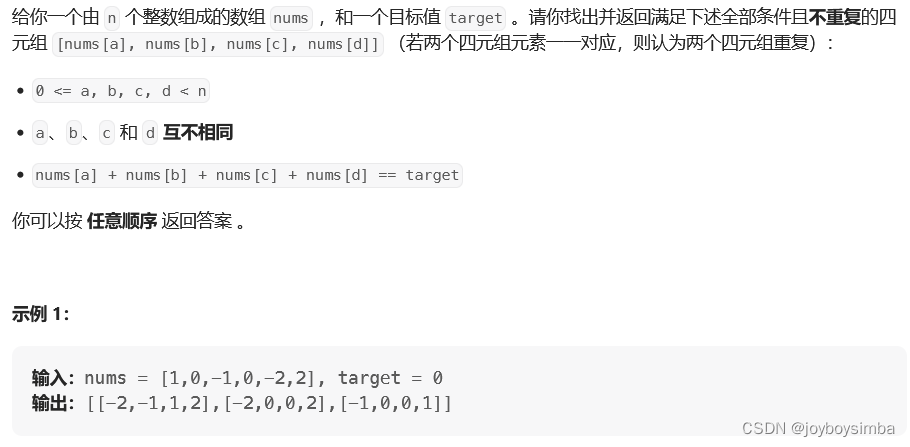

Contents

- I. Deploy CoreDNS

- II. Configure High Availability

- III. Configure Load Balancing

- IV. Deploy Dashboard

I. Deploy CoreDNS

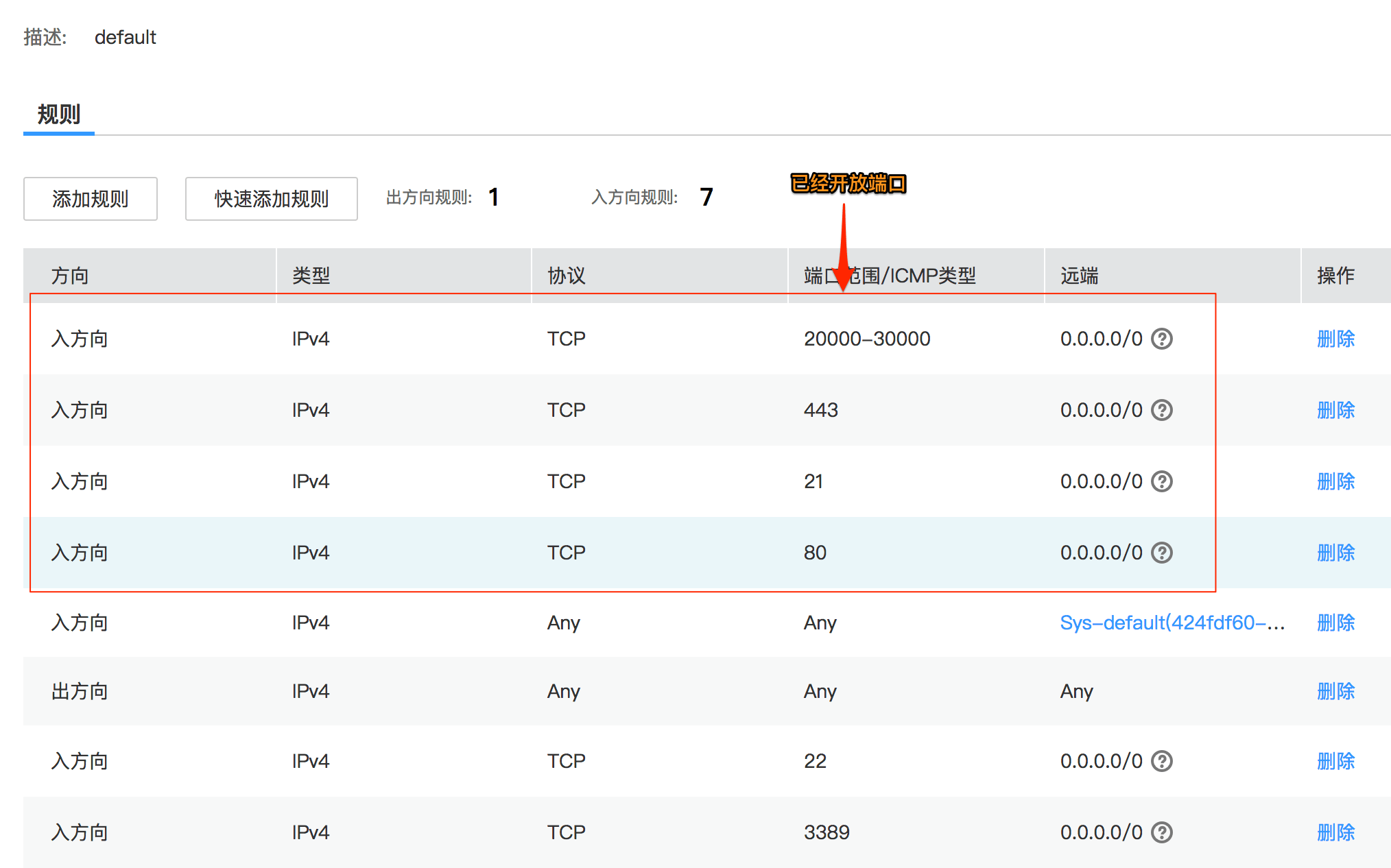

Run on all node machines

# Upload coredns.tar to the /opt directory

cd /opt

docker load -i coredns.tar

Run on master01

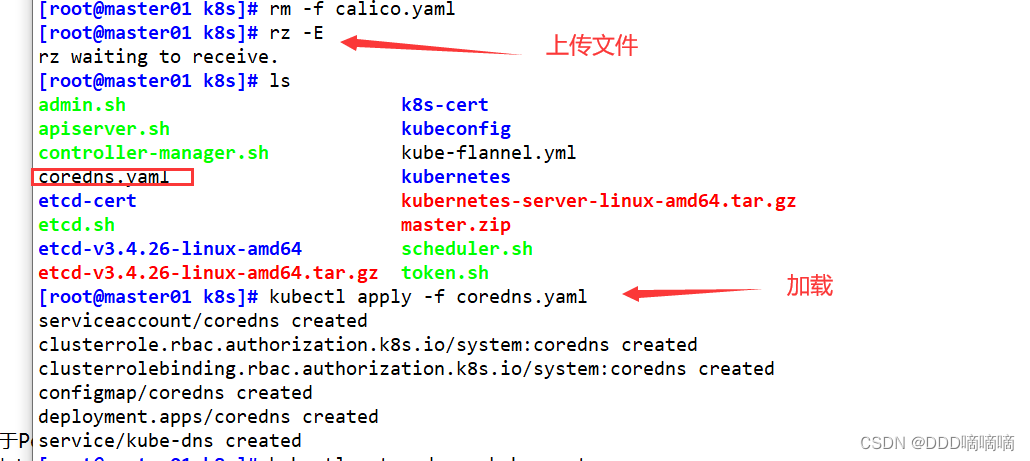

# Upload coredns.yaml to the /opt/k8s directory, then deploy CoreDNS

cd /opt/k8s

kubectl apply -f coredns.yaml

kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-5ffbfd976d-j6shb 1/1 Running 0 32s

# Bind the cluster-admin role for kubelet access, granting administrator privileges

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

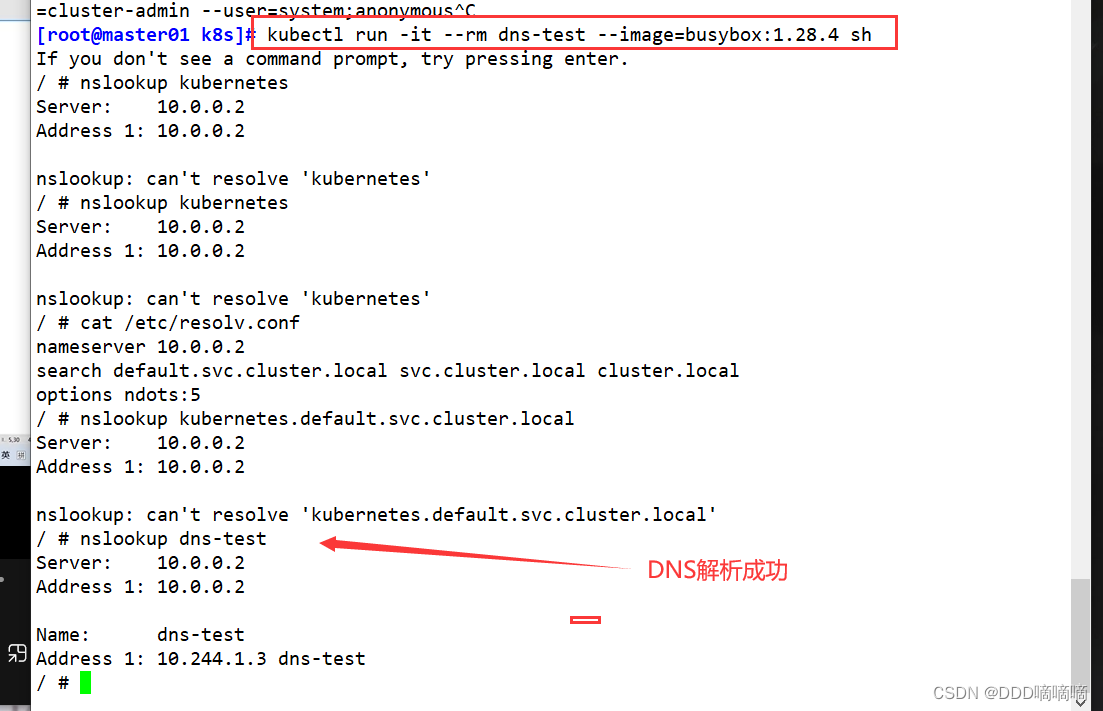

# DNS resolution test

kubectl run -it --rm dns-test --image=busybox:1.28.4 sh

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes

Server: 10.0.0.2

Address 1: 10.0.0.2 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.0.0.1 kubernetes.default.svc.cluster.local
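The interactive check above can also be scripted. The following is a minimal sketch that assumes the busybox-style `nslookup` output shown in this section; `resolved_ip` is a hypothetical helper name, not part of kubectl or busybox.

```shell
# resolved_ip: read busybox-style nslookup output on stdin and print the
# first address listed after the "Name:" line (the resolved service IP).
# The helper name is illustrative only.
resolved_ip() {
  awk '/^Name:/ { found = 1; next }
       found && /^Address/ { print $3; exit }'
}

# Possible non-interactive use (assumes a working cluster and kubectl):
#   kubectl run dns-test --rm -i --restart=Never --image=busybox:1.28.4 \
#     -- nslookup kubernetes | resolved_ip
```

With the output shown above, the helper would print the ClusterIP of the `kubernetes` service, which makes the check easy to compare against an expected value in a script.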

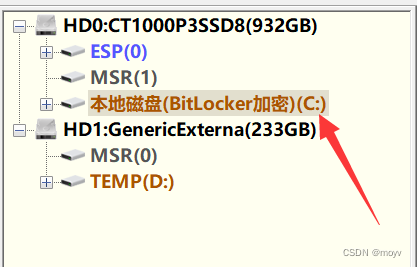

II. Configure High Availability

Initialize the environment

See the linked post for the environment initialization steps

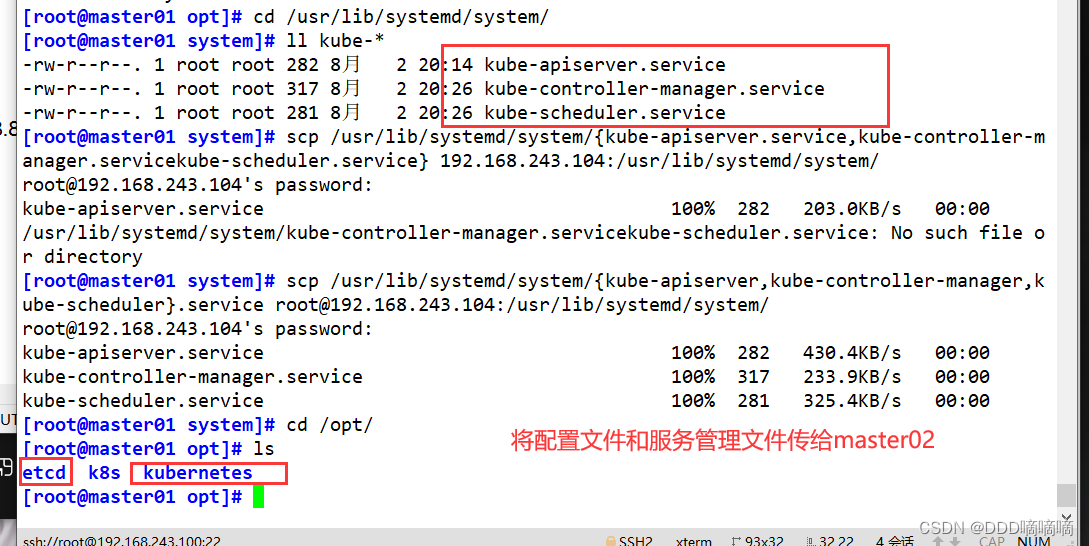

// Copy the certificates, the master component config files, and the systemd unit files from master01 to master02

scp -r /opt/etcd/ root@192.168.80.20:/opt/

scp -r /opt/kubernetes/ root@192.168.80.20:/opt

scp /usr/lib/systemd/system/{kube-apiserver,kube-controller-manager,kube-scheduler}.service root@192.168.80.20:/usr/lib/systemd/system/

scp -r /root/.kube/ root@192.168.80.20:/root

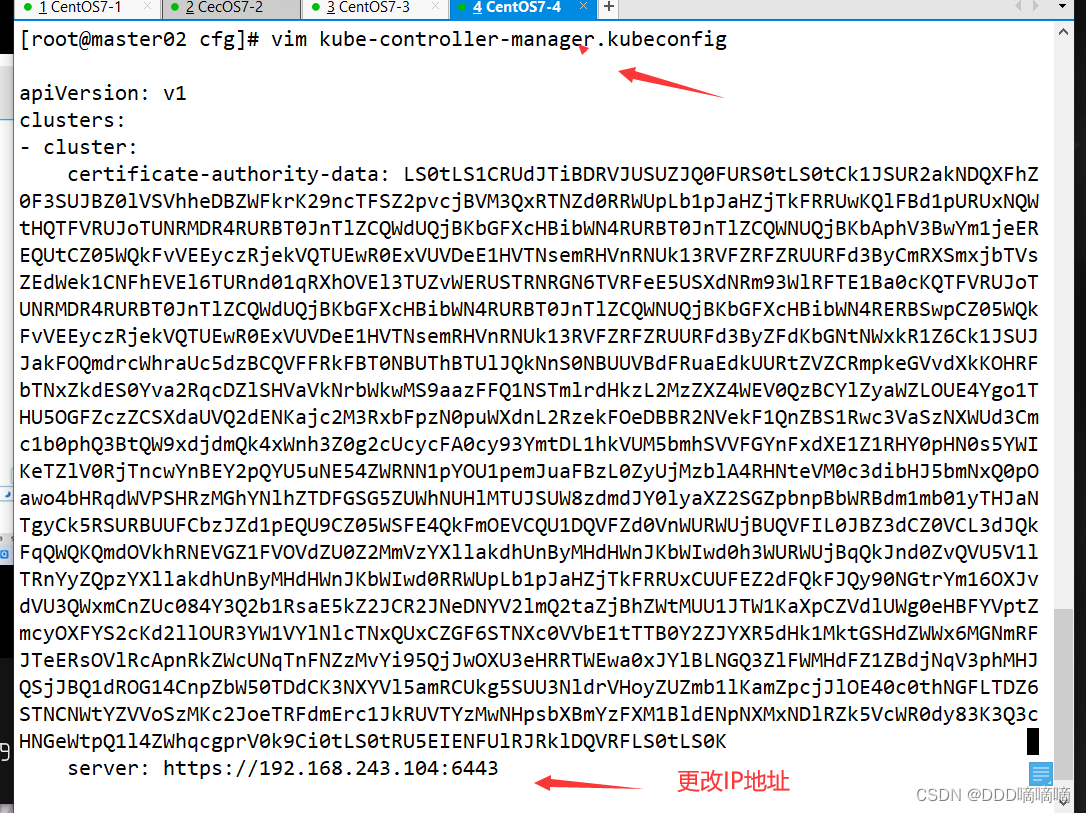

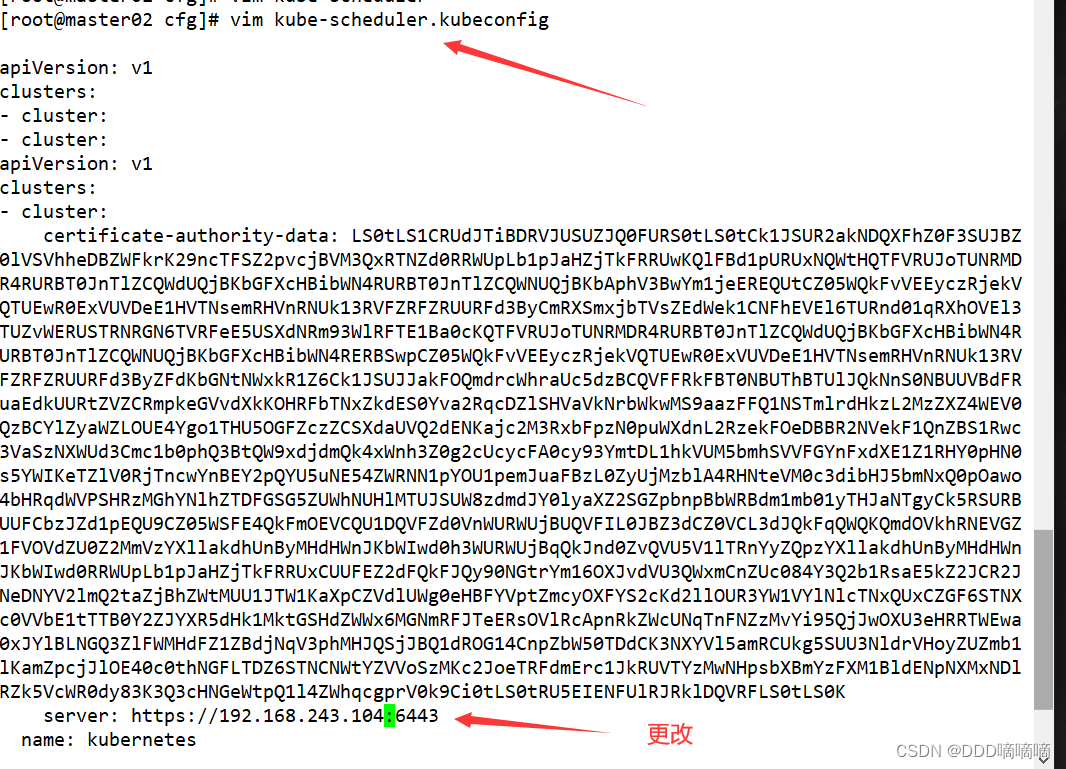

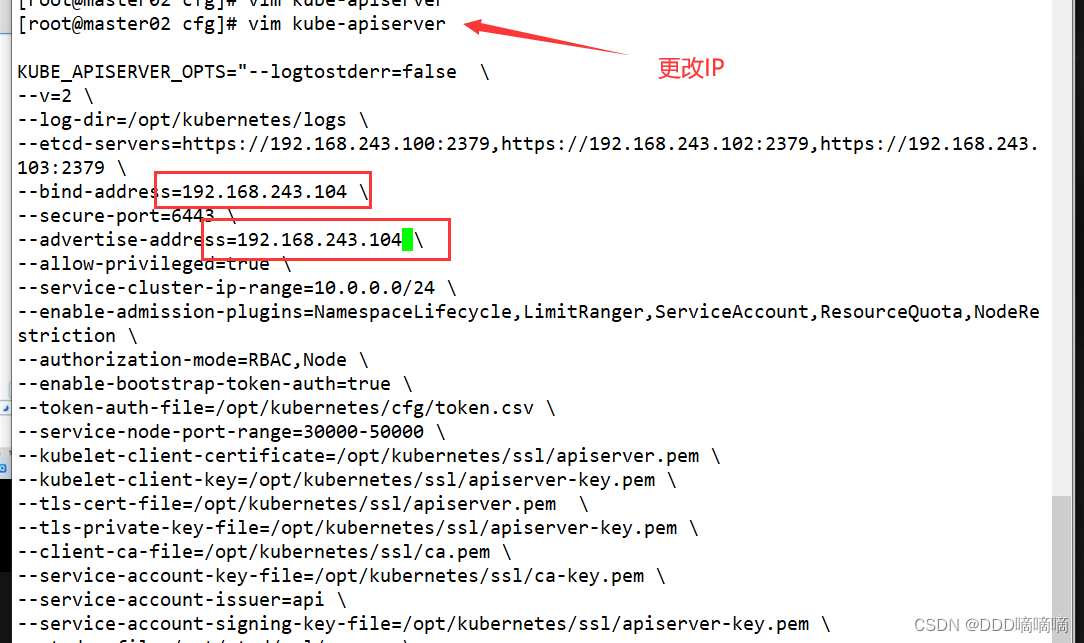

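// Update the IP addresses in the kube-apiserver config file (the trailing comments only mark the lines to change; do not copy them into the file)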

vim /opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.80.10:2379,https://192.168.80.11:2379,https://192.168.80.12:2379 \

--bind-address=192.168.80.20 \ # change to master02's IP

--secure-port=6443 \

--advertise-address=192.168.80.20 \ # change to master02's IP

......
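The same edit can be made without opening an editor. This is a sketch only: `set_apiserver_ip` is a hypothetical helper, and it assumes the file contains `--bind-address=<ip>` and `--advertise-address=<ip>` options as shown above.

```shell
# set_apiserver_ip FILE IP: rewrite the --bind-address and --advertise-address
# values in FILE to IP, leaving every other option (e.g. --etcd-servers) alone.
# Hypothetical helper; it goes through a temp file so it works with both
# GNU and BSD sed, and writes back with cat to keep the file's permissions.
set_apiserver_ip() {
  cfg=$1
  ip=$2
  sed -E 's#(--(bind|advertise)-address=)[0-9.]+#\1'"$ip"'#' "$cfg" > "$cfg.tmp" &&
    cat "$cfg.tmp" > "$cfg" &&
    rm -f "$cfg.tmp"
}

# Example (on master02, with the path used in this post):
#   set_apiserver_ip /opt/kubernetes/cfg/kube-apiserver 192.168.80.20
```

Matching on the option name rather than on the old IP keeps the etcd endpoints untouched even though they share the same address prefix.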

// On master02, start each service and enable it at boot

systemctl start kube-apiserver.service

systemctl enable kube-apiserver.service

systemctl start kube-controller-manager.service

systemctl enable kube-controller-manager.service

systemctl start kube-scheduler.service

systemctl enable kube-scheduler.service

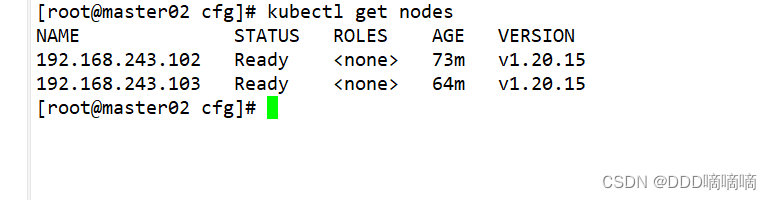

// Check the status of the nodes

ln -s /opt/kubernetes/bin/* /usr/local/bin/

kubectl get nodes

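kubectl get nodes -o wide # -o wide: print additional information; for Pods this includes the name of the Node they run on

// At this point the node status shown on master02 is only what it reads from etcd; the nodes have not actually established connections to master02. A VIP is therefore needed to tie the nodes to both masters.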

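III. Configure Load Balancing

// Set up the load balancer pair in active/standby mode (nginx provides load balancing, keepalived provides failover)

##### Run on lb01 and lb02 #####

// Add a local repo file that points at the official nginx online yum repository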

cat > /etc/yum.repos.d/nginx.repo << 'EOF'

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/centos/7/$basearch/

gpgcheck=0

EOF

yum install nginx -y

// Edit the nginx config file to set up layer-4 reverse-proxy load balancing, pointing at port 6443 on the two k8s master nodes

vim /etc/nginx/nginx.conf

events {

worker_connections 1024;

}

# Add the following stream block

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.80.10:6443;

server 192.168.80.20:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

http {

......
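If more masters are added later, the `upstream` block has to list each of them. As a rough sketch, a small function can print that block from a list of IPs; `render_upstream` is a hypothetical helper and the output would still need to be pasted into the `stream` section by hand.

```shell
# render_upstream PORT IP...: print an nginx "upstream" block with one
# "server IP:PORT;" line per master. Hypothetical helper for illustration.
render_upstream() {
  port=$1
  shift
  echo "upstream k8s-apiserver {"
  for ip in "$@"; do
    echo "    server ${ip}:${port};"
  done
  echo "}"
}

# Example: the two masters used in this post.
render_upstream 6443 192.168.80.10 192.168.80.20
```

Since no balancing method is set in the block, nginx falls back to its default round-robin distribution across the listed servers.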

// Check the config file syntax

nginx -t

// Start nginx and confirm that it is listening on port 6443

systemctl start nginx

systemctl enable nginx

netstat -natp | grep nginx

// Install keepalived

yum install keepalived -y

// Edit the keepalived config file

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

# Notification recipient addresses

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

# Notification sender address

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER # NGINX_MASTER on lb01, NGINX_BACKUP on lb02

}

# Add a script that runs periodically

vrrp_script check_nginx {

script "/etc/nginx/check_nginx.sh" # path of the script that checks whether nginx is alive

}

vrrp_instance VI_1 {

state MASTER # MASTER on lb01, BACKUP on lb02

interface ens33 # network interface name (ens33 here)

virtual_router_id 51 # VRID; must be the same on both nodes

priority 100 # 100 on lb01, 90 on lb02

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.80.100/24 # the VIP

}

track_script {

check_nginx # the script defined in vrrp_script above

}

}
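Only three settings differ between lb01 and lb02 in the config above. The sketch below collects them in one place so the two files are easier to keep in sync; `keepalived_values` is a hypothetical helper and the values are the ones given in the comments above.

```shell
# keepalived_values NODE: print the three settings that differ between the
# two load balancers, using the values from the config comments.
keepalived_values() {
  case $1 in
    lb01) echo "router_id NGINX_MASTER"; echo "state MASTER"; echo "priority 100" ;;
    lb02) echo "router_id NGINX_BACKUP"; echo "state BACKUP"; echo "priority 90" ;;
    *)    echo "unknown node: $1" >&2; return 1 ;;
  esac
}

# Example: show what to change when copying the file to lb02.
keepalived_values lb02
```

Everything else, including `virtual_router_id`, the authentication block, and the VIP, is meant to be identical on both nodes.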

// Create the nginx health-check script

vim /etc/nginx/check_nginx.sh

#!/bin/bash

# egrep -cv "grep|$$" counts the lines that contain neither "grep" nor $$ (the current shell's PID)

count=$(ps -ef | grep nginx | egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

systemctl stop keepalived

fi

chmod +x /etc/nginx/check_nginx.sh
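To see what the counting pipeline in the script does, it can be tried against captured `ps -ef` output. This is an illustrative sketch: `count_nginx` is a hypothetical helper that mirrors the pipeline but takes the PID as an argument instead of using `$$`, and it uses `grep -E` in place of `egrep`.

```shell
# count_nginx PID: read "ps -ef"-style lines on stdin and count those that
# mention nginx but contain neither "grep" nor PID (the checking shell's PID).
# Hypothetical helper mirroring the pipeline in check_nginx.sh.
count_nginx() {
  # grep -c prints 0 and exits non-zero when nothing matches,
  # so the exit status is ignored and only the printed count is used.
  grep nginx | grep -Ecv "grep|$1" || true
}

# Possible use:  ps -ef | count_nginx "$$"
```

The PID filter is needed because the script itself lives under /etc/nginx, so its own `ps` line contains "nginx". Note that the PID is matched as a plain substring, so a line that happens to contain the same digits elsewhere is excluded as well.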

// Start keepalived (nginx must already be running before keepalived is started)

systemctl start keepalived

systemctl enable keepalived

ip a # check that the VIP has been assigned

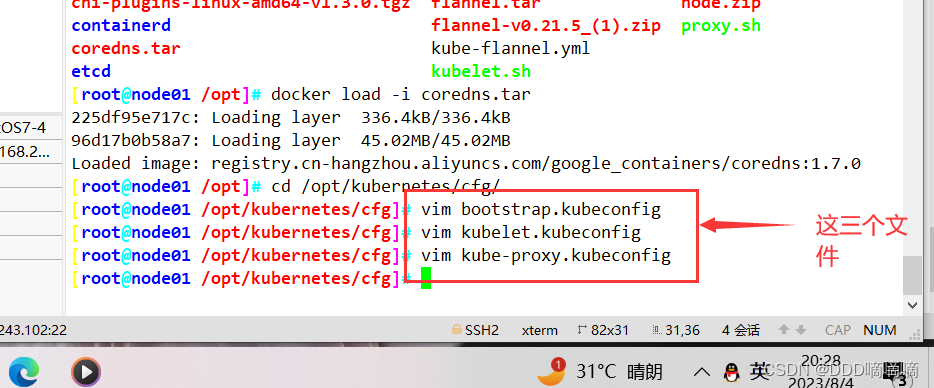

// On the node machines, point bootstrap.kubeconfig, kubelet.kubeconfig, and kube-proxy.kubeconfig at the VIP

cd /opt/kubernetes/cfg/

vim bootstrap.kubeconfig

server: https://192.168.80.100:6443

vim kubelet.kubeconfig

server: https://192.168.80.100:6443

vim kube-proxy.kubeconfig

server: https://192.168.80.100:6443
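The three edits above are the same substitution, so they can be applied in one pass. A minimal sketch, assuming each file has a `server: https://<host>:6443` line like those shown; `point_to_vip` is a hypothetical helper name.

```shell
# point_to_vip VIP FILE...: rewrite the "server:" line of each kubeconfig so
# that it targets https://VIP:6443. Hypothetical helper; it goes through a
# temp file and writes back with cat to keep each file's permissions.
point_to_vip() {
  vip=$1
  shift
  for f in "$@"; do
    sed -E 's#(server: https://)[^:/]+(:6443)#\1'"$vip"'\2#' "$f" > "$f.tmp" &&
      cat "$f.tmp" > "$f" &&
      rm -f "$f.tmp"
  done
}

# Example (run in /opt/kubernetes/cfg/ on each node):
#   point_to_vip 192.168.80.100 bootstrap.kubeconfig kubelet.kubeconfig kube-proxy.kubeconfig
```

Running `grep server: *.kubeconfig` afterwards is a quick way to confirm that all three files now reference the VIP.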

// Restart the kubelet and kube-proxy services

systemctl restart kubelet.service

systemctl restart kube-proxy.service

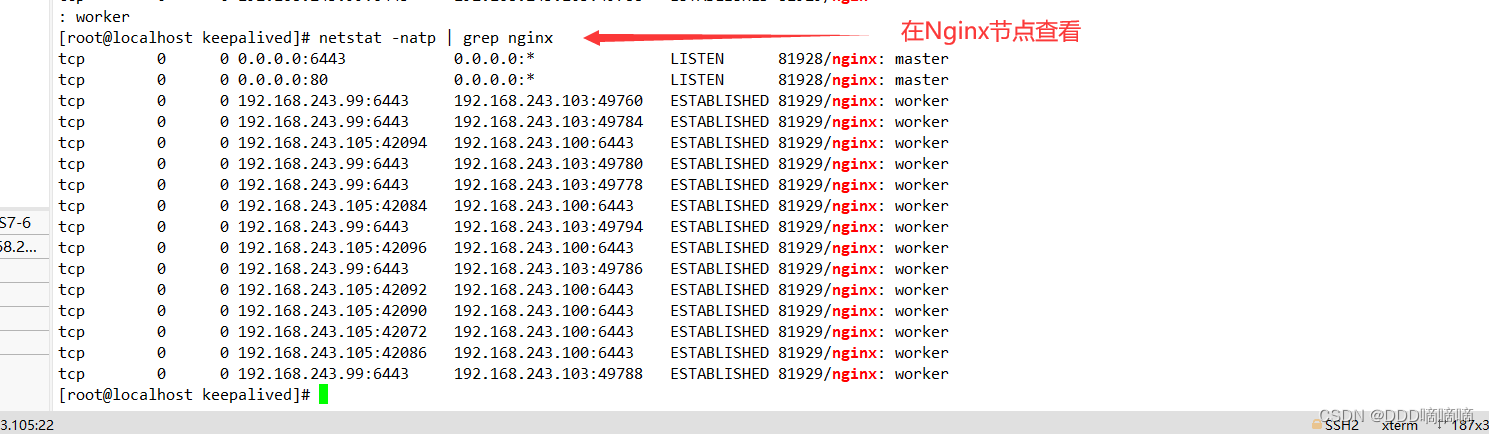

// On lb01, check nginx's connections to the node and master machines

netstat -natp | grep nginx

tcp 0 0 0.0.0.0:6443 0.0.0.0:* LISTEN 44904/nginx: master

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 44904/nginx: master

tcp 0 0 192.168.80.100:6443 192.168.80.12:46954 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.14:45074 192.168.80.10:6443 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.14:53308 192.168.80.20:6443 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.14:53316 192.168.80.20:6443 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.100:6443 192.168.80.11:48784 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.14:45070 192.168.80.10:6443 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.100:6443 192.168.80.11:48794 ESTABLISHED 44905/nginx: worker

tcp 0 0 192.168.80.100:6443 192.168.80.12:46968 ESTABLISHED 44905/nginx: worker
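Reading the listing above by eye works for a handful of connections. As a sketch, the same output can be summarized per master; `upstream_conns` is a hypothetical helper and it assumes the `netstat -natp` column layout shown above.

```shell
# upstream_conns: read "netstat -natp" lines on stdin and print, for each
# remote endpoint on port 6443 in ESTABLISHED state, how many connections
# are open to it. Column 5 is the foreign address, column 6 is the state.
upstream_conns() {
  awk '$6 == "ESTABLISHED" && $5 ~ /:6443$/ { n[$5]++ }
       END { for (k in n) print k, n[k] }' | sort
}

# Possible use on lb01:
#   netstat -natp | grep nginx | upstream_conns
```

Connections whose local side is the VIP on port 6443 come from the nodes and are skipped; only the proxied connections from nginx to the two apiservers are counted, which shows whether both masters are receiving traffic.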

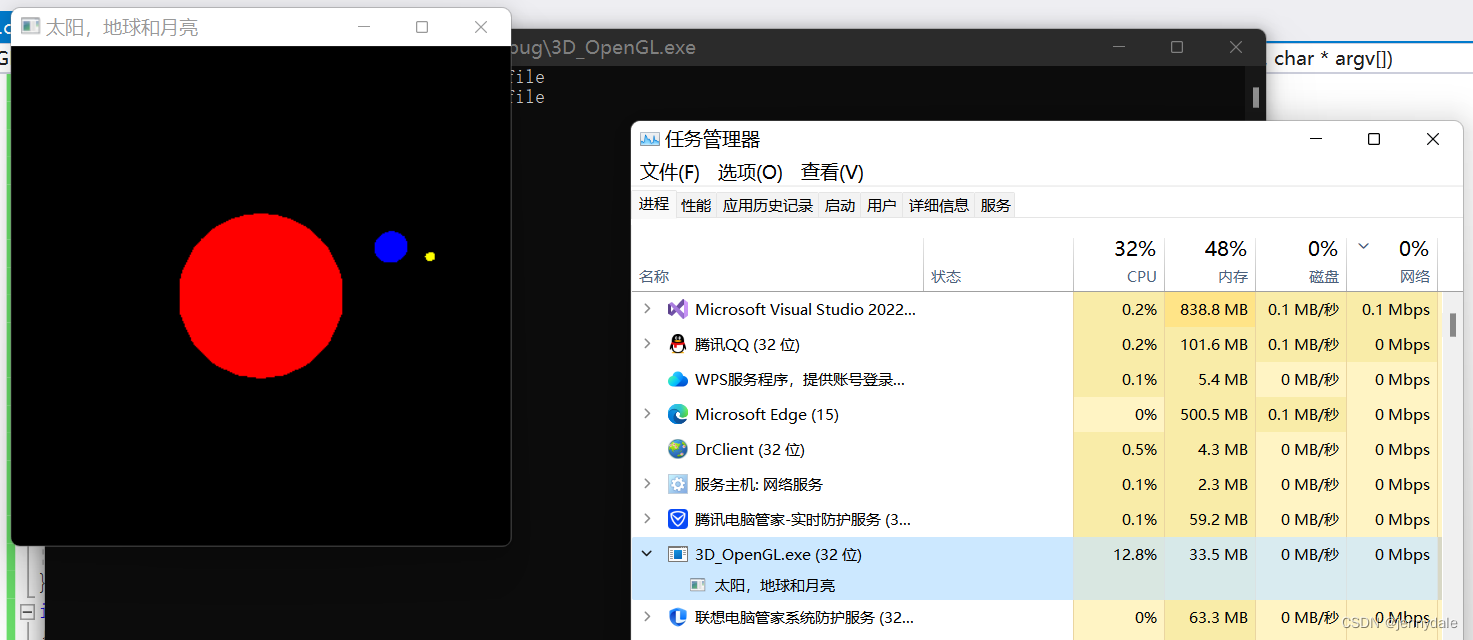

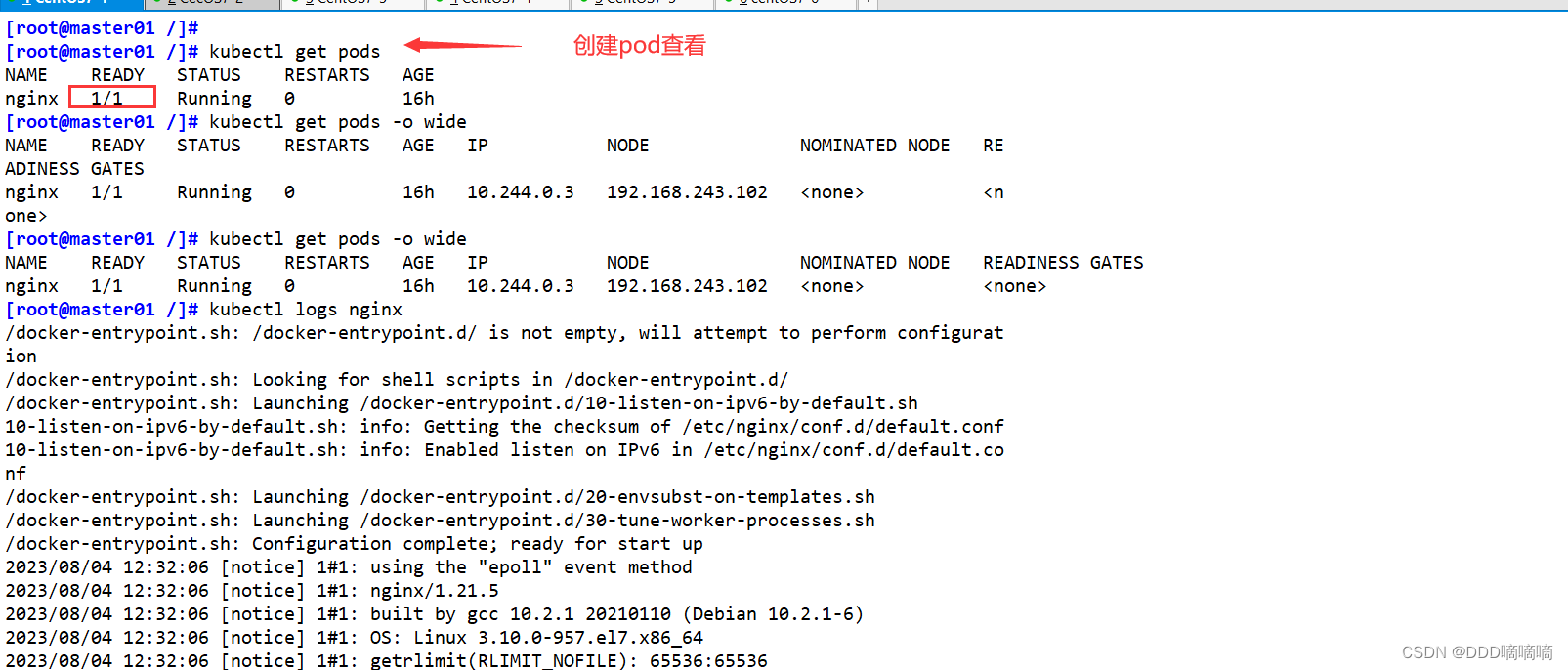

Test on master01

// Test creating a pod

kubectl run nginx --image=nginx

// Check the Pod status

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-dbddb74b8-nf9sk 0/1 ContainerCreating 0 33s # still being created

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-dbddb74b8-nf9sk 1/1 Running 0 80s # created and running

kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

nginx-dbddb74b8-26r9l 1/1 Running 0 10m 172.17.36.2 192.168.80.15 <none>

// READY 1/1 means the Pod has 1 container and that container is ready
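The READY column reads as "containers ready / containers in the Pod". A small sketch of that comparison, useful when polling `kubectl get pods` from a script; `is_ready` is a hypothetical helper name.

```shell
# is_ready READY: succeed when a READY value such as "1/1" shows that all
# containers in the Pod are ready (ready count equals total count).
is_ready() {
  ready=${1%/*}   # part before the slash: containers that are ready
  total=${1#*/}   # part after the slash: containers in the Pod
  [ "$total" -gt 0 ] && [ "$ready" -eq "$total" ]
}

is_ready 1/1 && echo "1/1: ready"
is_ready 0/1 || echo "0/1: not ready"
```

In the two listings above, the Pod goes from 0/1 while in ContainerCreating to 1/1 once it is Running.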

// On a node in the matching subnet, the Pod can be reached directly with a browser or curl

curl 172.17.36.2

// Viewing the nginx logs from master01 at this point fails with a permission error

kubectl logs nginx-dbddb74b8-nf9sk

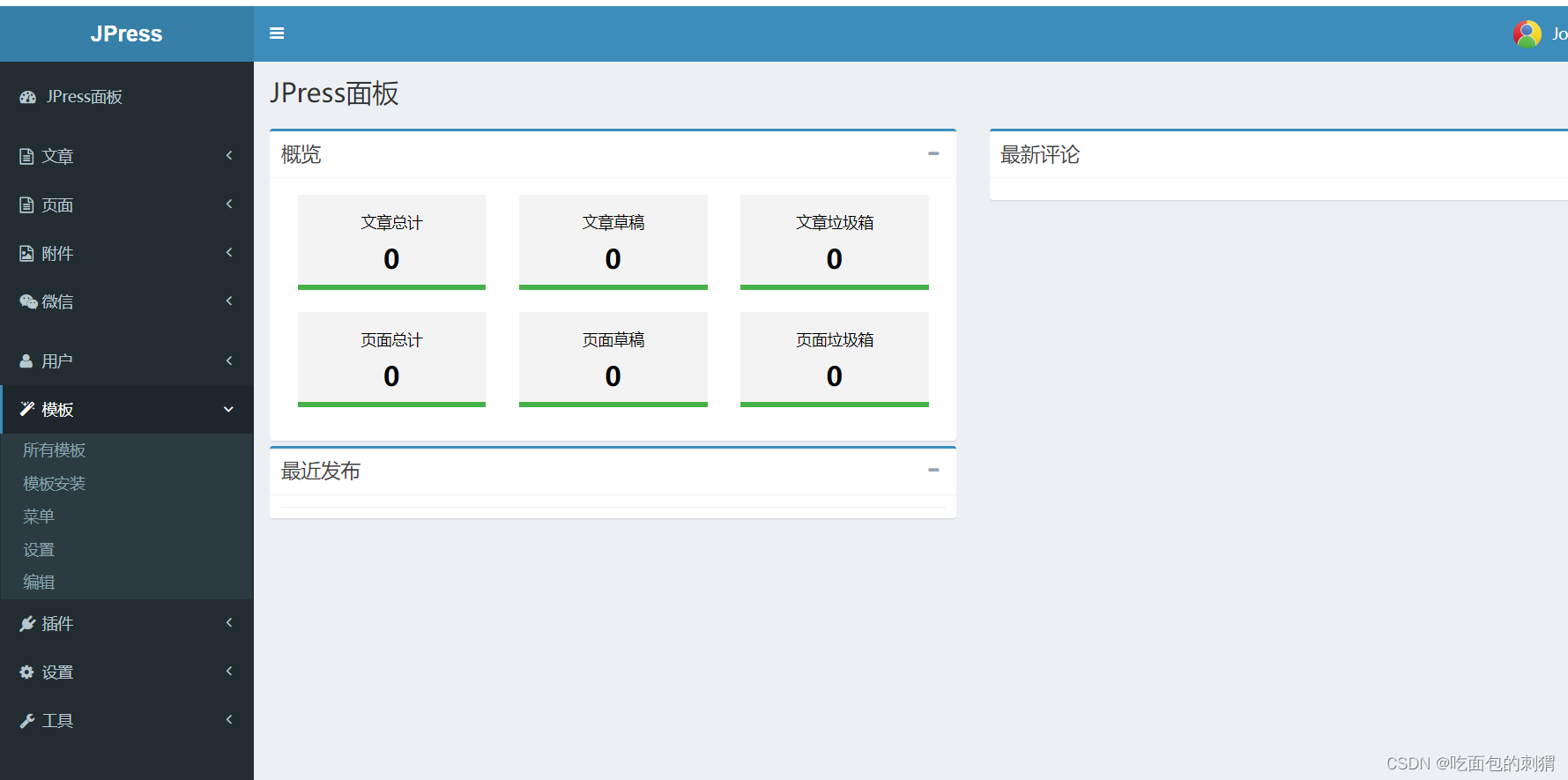

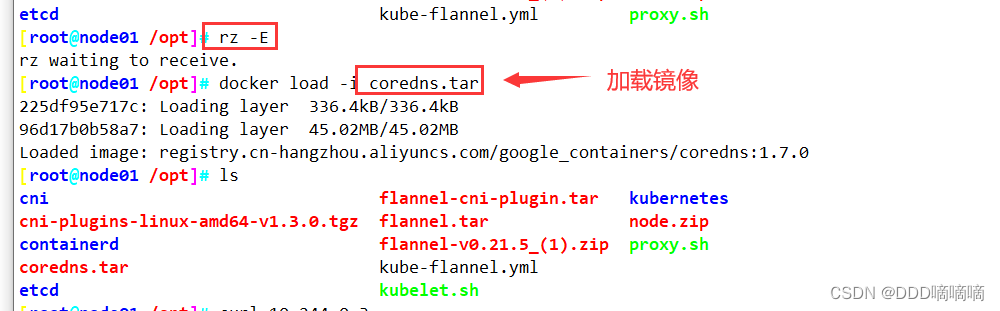

IV. Deploy Dashboard

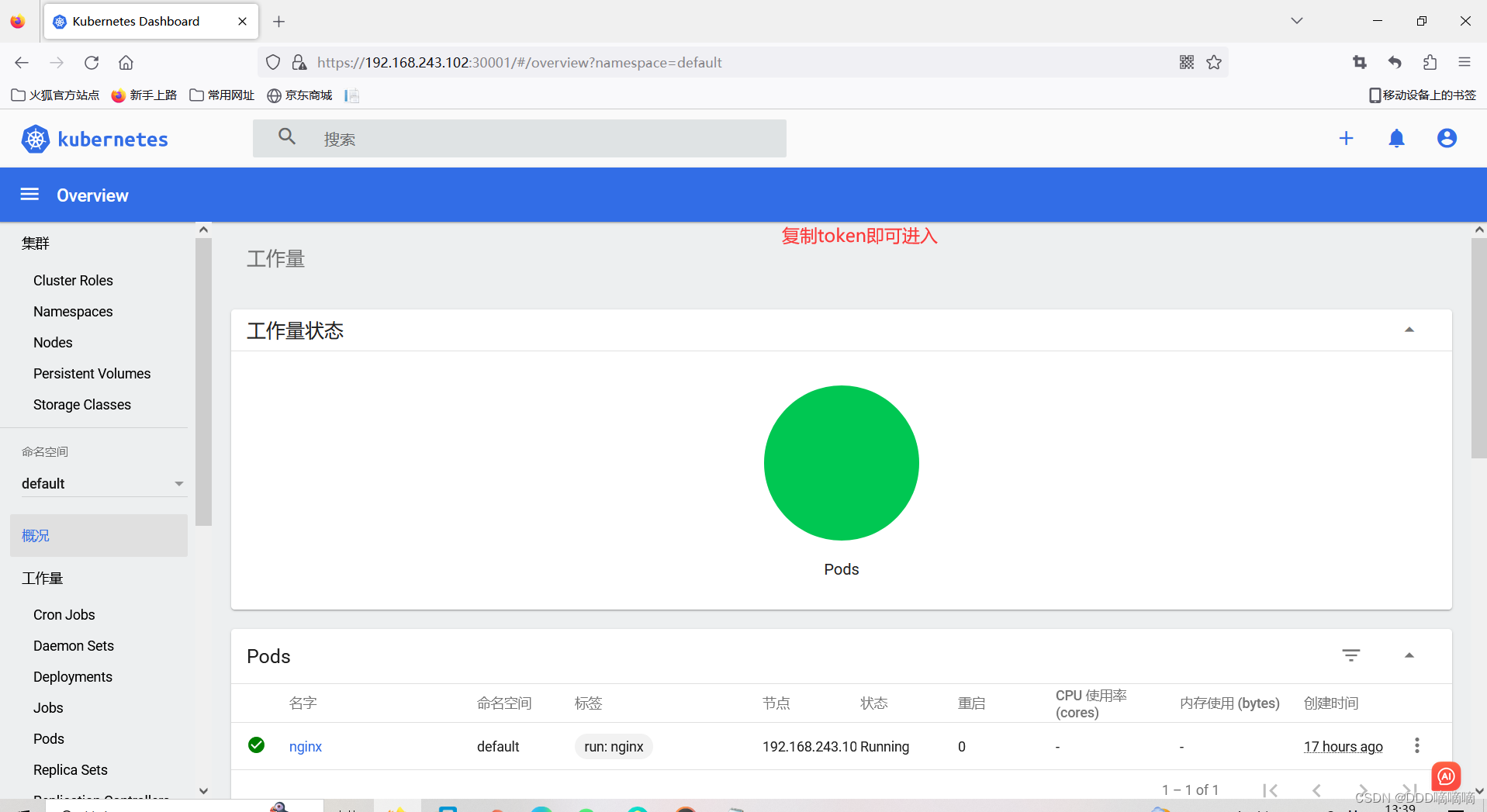

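- Dashboard is a web-based Kubernetes user interface. You can use it to deploy containerized applications to a Kubernetes cluster, troubleshoot them, and manage the cluster itself along with its resources. It gives an overview of the applications running on the cluster and lets you create or modify individual Kubernetes resources (such as Deployments, Jobs, and DaemonSets). For example, you can scale a Deployment, start a rolling update, restart a Pod, or deploy a new application with the deployment wizard. Dashboard also shows the state of the Kubernetes resources in the cluster and any errors that may have occurred.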

Run on master01

# Upload recommended.yaml to the /opt/k8s directory

cd /opt/k8s

vim recommended.yaml

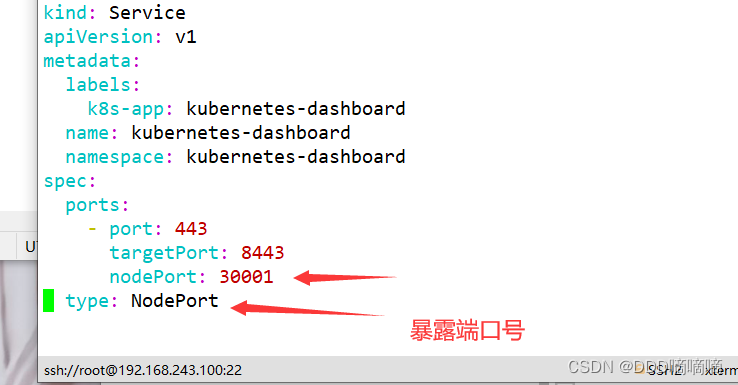

# By default Dashboard is reachable only from inside the cluster; change the Service to type NodePort to expose it externally:

kind: Service

apiVersion: v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kubernetes-dashboard

spec:

ports:

- port: 443

targetPort: 8443

nodePort: 30001 # added

type: NodePort # added

selector:

k8s-app: kubernetes-dashboard
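The `nodePort` value has to fall inside the range the apiserver allows, which is 30000-32767 unless `--service-node-port-range` has been changed. A minimal sketch of that check, assuming the default range; `valid_nodeport` is a hypothetical helper name.

```shell
# valid_nodeport PORT: succeed when PORT lies in 30000-32767, the default
# NodePort range of kube-apiserver (--service-node-port-range).
valid_nodeport() {
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

valid_nodeport 30001 && echo "30001 is usable as a nodePort"
```

A value outside the configured range causes the Service to be rejected when the manifest is applied.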

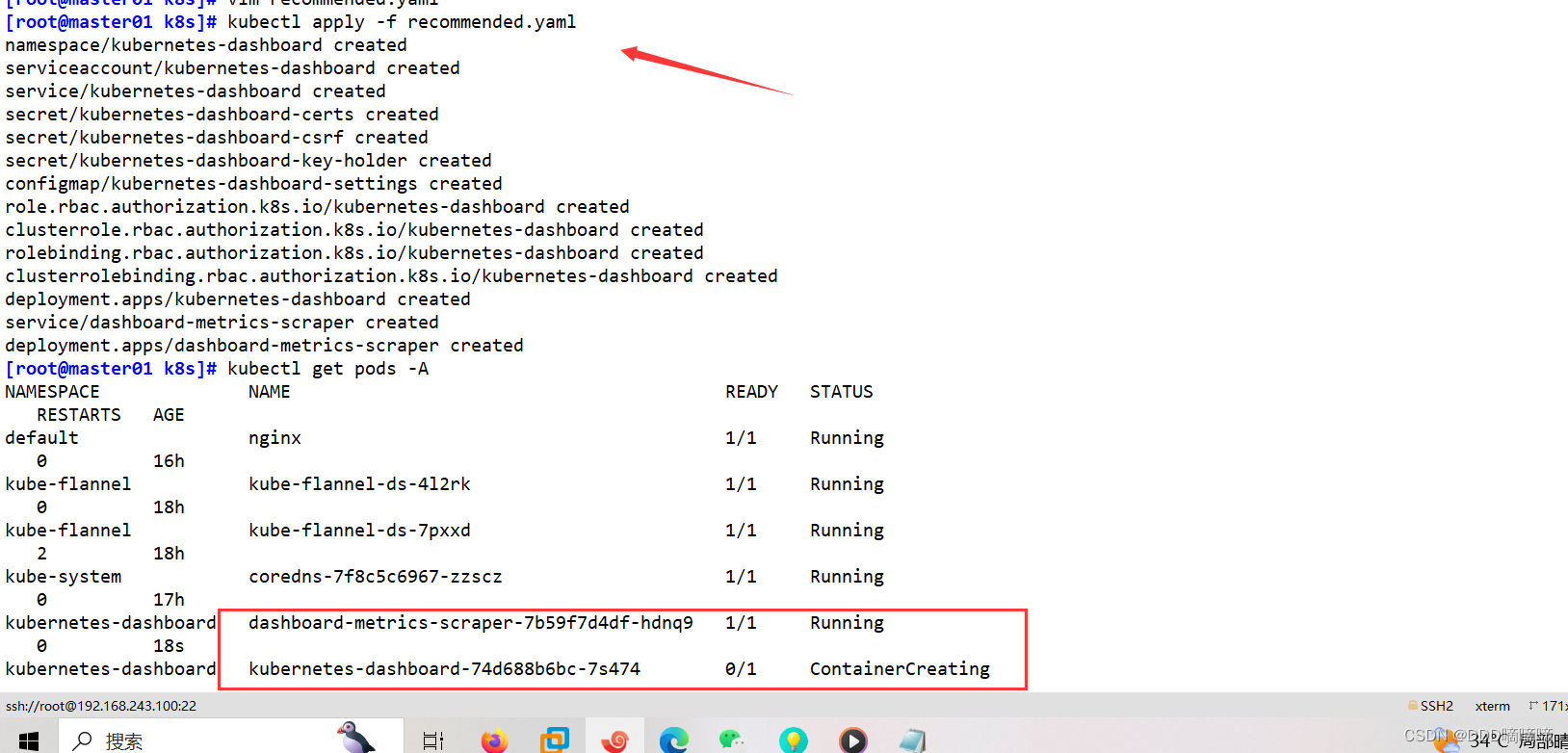

kubectl apply -f recommended.yaml

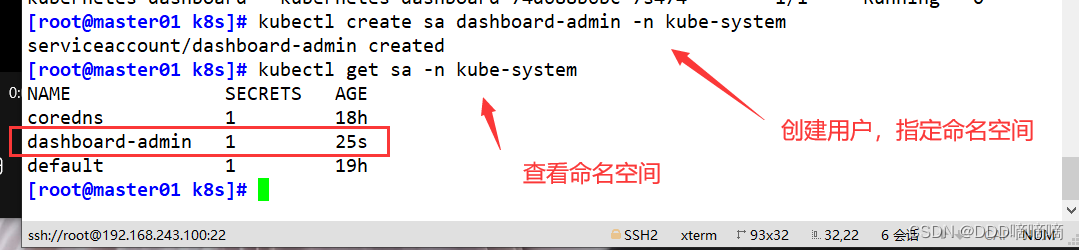

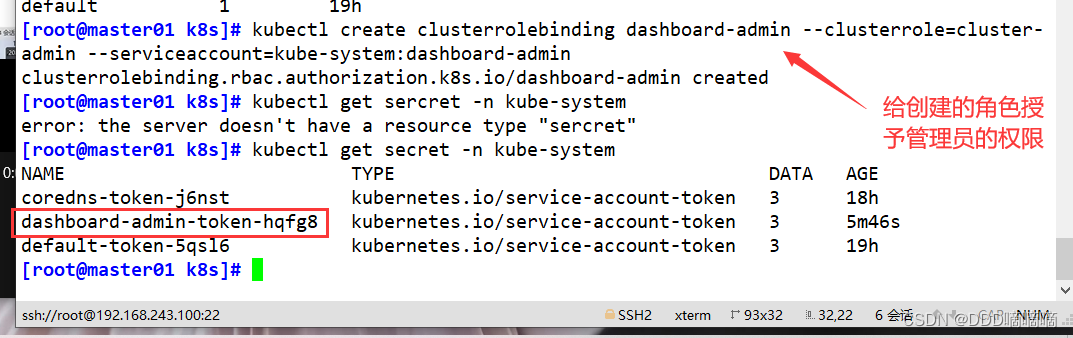

# Create a service account and bind it to the default cluster-admin cluster role

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

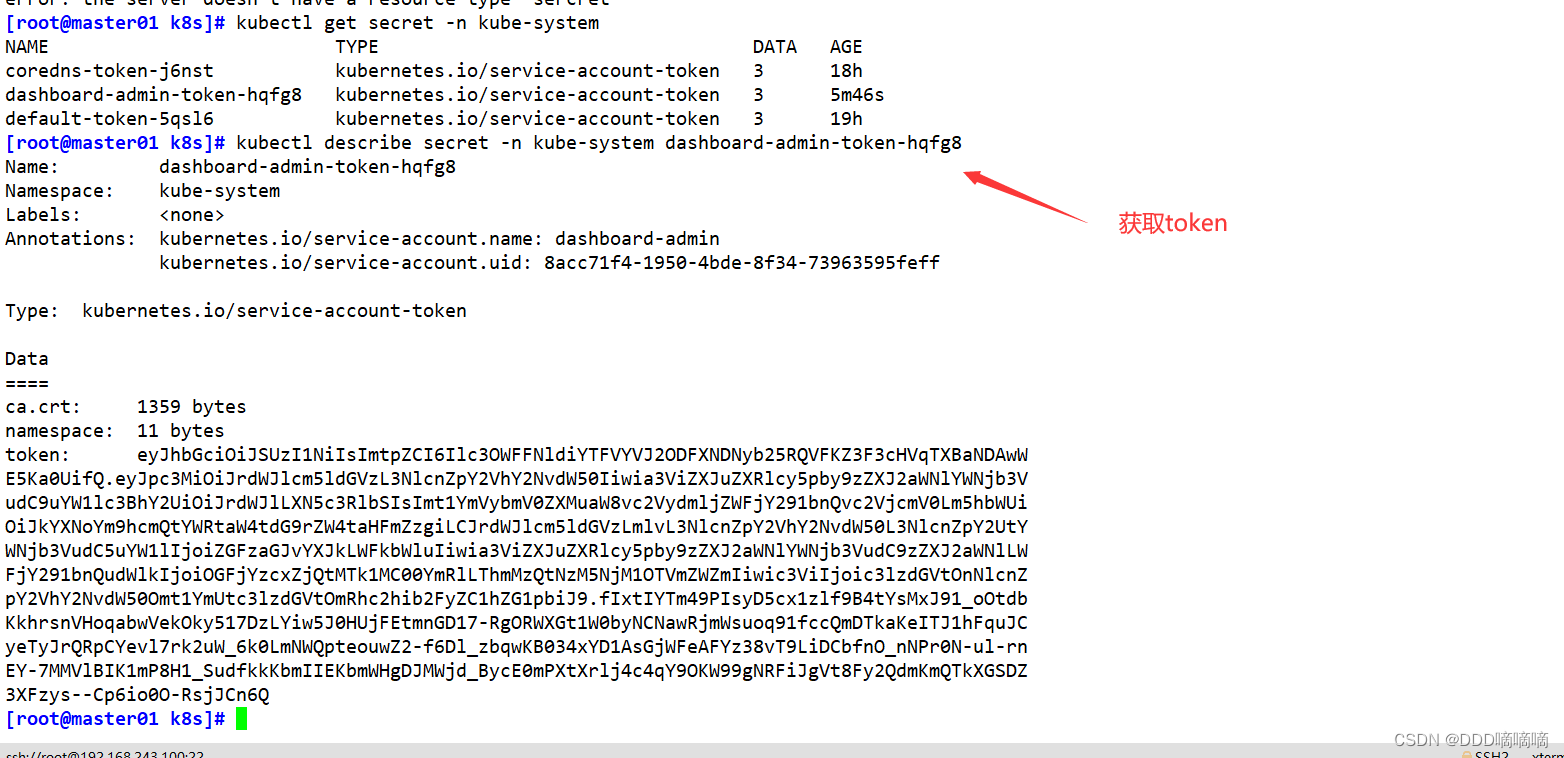

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
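The `describe` output contains several fields besides the token. As a sketch, the token value alone can be pulled out for pasting into the login page; `extract_token` is a hypothetical helper and it assumes the output contains a line of the form `token: <value>`.

```shell
# extract_token: read "kubectl describe secrets" output on stdin and print
# only the value of the "token:" field.
extract_token() {
  awk '$1 == "token:" { print $2; exit }'
}

# Possible use (same command as above, piped into the helper):
#   kubectl describe secrets -n kube-system \
#     "$(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')" \
#     | extract_token
```

Matching on the first field being exactly `token:` avoids picking up the secret's name, which also contains the word "token".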

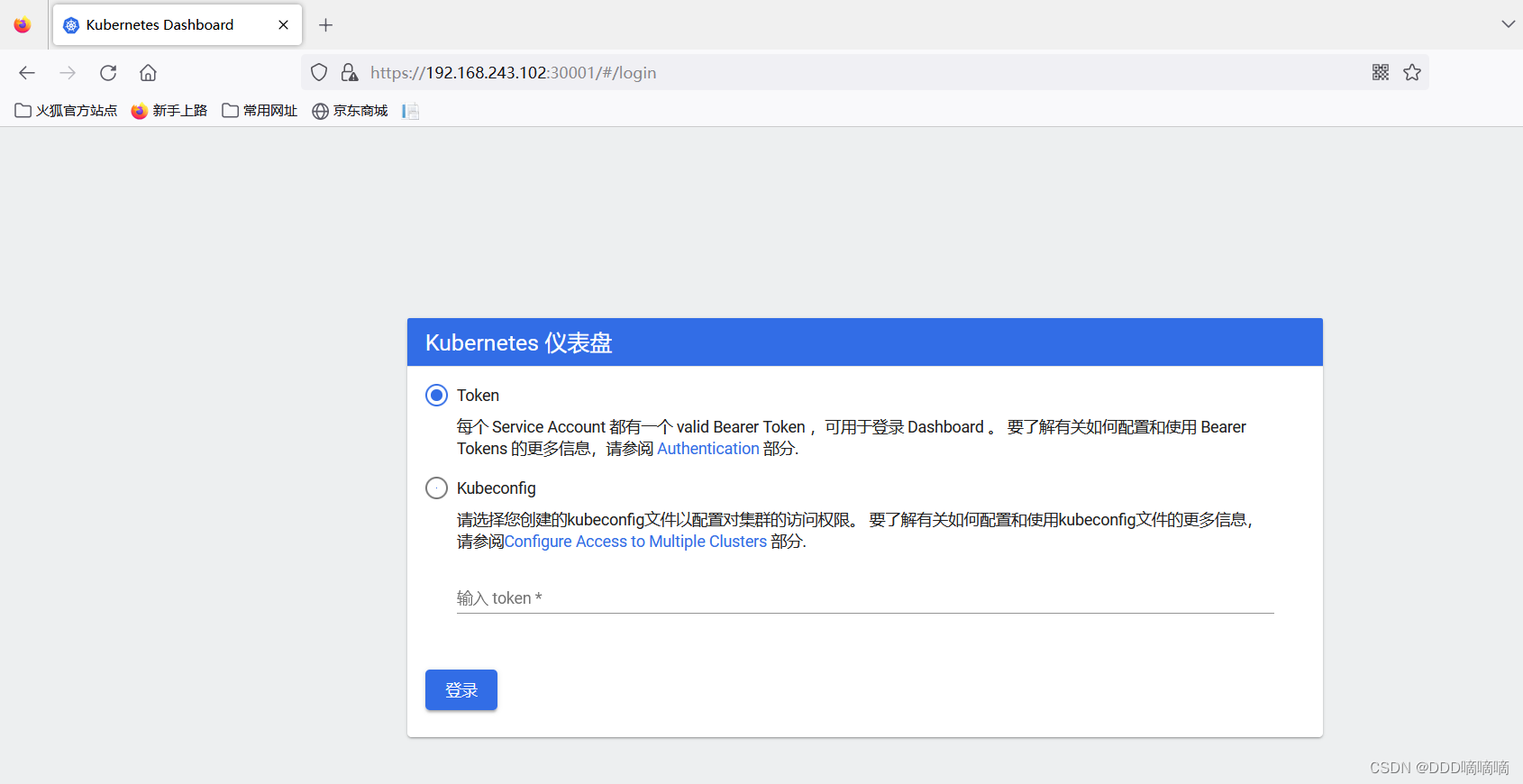

# Log in to Dashboard with the token from the output

https://NodeIP:30001