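Handwritten Digit Recognition on the IOSLab Dataset with a Convolutional Neural Network

Contents

- Handwritten Digit Recognition on the IOSLab Dataset with a Convolutional Neural Network

- I. Preface

- II. Assignment requirements

- III. Dataset sample analysis

- IV. Code implementation

  - 1. Runtime environment

  - 2. Import dependencies

  - 3. Mount the dataset

  - 4. Load and preprocess the data

  - 5. Split the dataset

  - 6. Build the CNN architecture

  - 7. Compile the model

  - 8. Train the model

  - 9. Evaluate the model

  - 10. Final results

- V. Closing remarks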

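I. Preface

This post walks through handwritten digit image recognition on the IOSLab dataset, which our Algebra and Logic teacher assigned as the final project. I implemented it with a convolutional neural network (CNN).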

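II. Assignment requirements

Use the teacher's own IOSLab handwritten digit dataset to recognize both the digit and the writer's gender. Any one of the following methods is allowed: neural networks, SVM, linear regression, logistic regression, Bayesian decision theory, decision trees, ensemble learning, or probabilistic graphical models.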

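III. Dataset sample analysis

Below are sample images from the dataset, which the teacher had already preprocessed:

The dataset contains 8000 images. They resemble the official MNIST handwritten digit dataset, so they can be recognized in a similar way. Looking at the images, each one has a label shown beneath it. The first image, for example, is labeled 0M_0101. Only the first two characters matter to us: 0 is the digit in the image and M means the digit was written by a man, while W means it was written by a woman.

This already suggests an approach: iterate over the dataset, collect each image's label in a list, and keep only the first two characters, which are the ones useful for classification.

There are then two ways to classify. The first treats the two characters as a single label: each digit/gender combination is mapped to an integer in a dictionary, and a prediction is correct when it matches that integer. The second treats the two characters as two separate targets and predicts each one on its own, with the remaining steps being similar. I chose the first option. The explanation may not be entirely clear yet, but the code below should make it concrete.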
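The first option can be sketched in a few lines. This is only an illustration under assumptions: the file names and the `.png` extension are hypothetical, and `parse_label` and `build_label_map` are helper names introduced here, not part of the original code. Sorting the labels is also a choice made for this sketch so that the mapping is deterministic.

```python
def parse_label(filename):
    """Return (digit, gender) from a name such as '0M_0101.png'."""
    return filename[0], filename[1]


def build_label_map(filenames):
    """Map every distinct two-character label to an integer class index."""
    combined = sorted({name[:2] for name in filenames})
    return {label: index for index, label in enumerate(combined)}


# Hypothetical file names that follow the labeling scheme described above.
example_names = ["0M_0101.png", "0W_0102.png", "1M_0101.png"]

example_map = build_label_map(example_names)
digit, gender = parse_label(example_names[0])

print(example_map)
print(digit, gender)
```

With all 10 digits and both genders present, a mapping built this way has 20 entries, which is the number of output classes the network needs.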

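IV. Code implementation

1. Runtime environment

I ran the code on Google Colaboratory. So far it has worked well for me, mainly because it is free and the GPU it provides is decent. It is worth a try if you are curious. I will not go into how to use it here, but feel free to ask me if you get stuck.

I used a cloud platform because my own computer was having problems. If your machine is powerful enough you can run everything locally, in which case I recommend TensorFlow 2.4.0.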

2. Import dependencies

import os

import keras

import numpy as np

from PIL import Image

import matplotlib.pyplot as plt

3. Mount the dataset

Skip this step if you are not using Google Colaboratory.

from google.colab import drive

drive.mount('/content/gdrive')

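4. Load and preprocess the data

# Iterate over the data directory and read every image

labels = []

images = []

data_dir = "/content/gdrive/MyDrive/Colab Notebooks/data/"

files = os.listdir(data_dir)

for file in files:

    image = np.array(Image.open(os.path.join(data_dir, file)))

    # The first two characters of the file name are the digit and the gender

    label = file[:2]

    images.append(image)

    labels.append(label)

Replace data_dir with the path to your own copy of the dataset.

Next, take a look at the first sample image: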

# matplotlib.pyplot is already imported as plt, so pylab is not needed

plt.imshow(images[0])

plt.show()

print(labels[0])

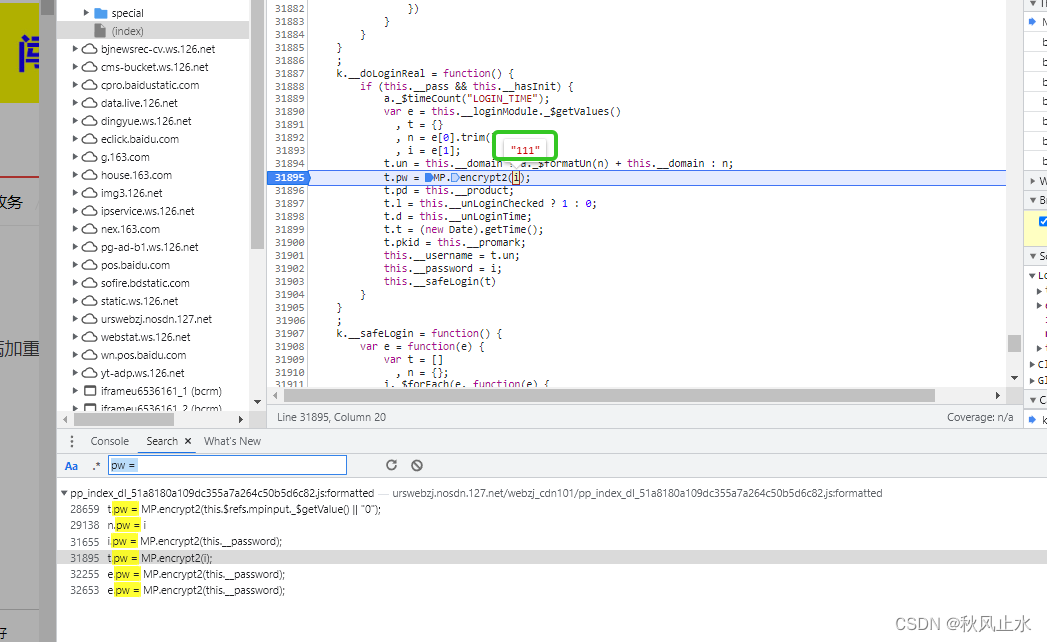

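Following the analysis above, map each label to an integer:

# Map each distinct label to an integer class index

label_map = {}

for label in labels:

    if label not in label_map:

        label_map[label] = len(label_map)

print(label_map)

The resulting mapping (the indices follow the order in which os.listdir returns the files, so they may differ on another machine):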

{'0W': 0, '1W': 1, '6W': 2, '8W': 3, '7W': 4, '4W': 5, '9W': 6, '2W': 7, '3W': 8, '5W': 9, '6M': 10, '7M': 11, '9M': 12, '3M': 13, '4M': 14, '5M': 15, '8M': 16, '2M': 17, '0M': 18, '1M': 19}

Normalize the data: scale the pixel values to [0, 1], invert them, and append a channel axis:

X = np.expand_dims((1 - (np.array(images) / 255.0)), -1)

Y = np.array([label_map[y] for y in labels])
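To see what the normalization expression does, here is a minimal sketch on a tiny made-up 2x2 "image". The pixel values are invented for illustration. It assumes, as the expression itself implies, that every image is a single-channel array with values from 0 to 255.

```python
import numpy as np

# One hypothetical 2x2 grayscale image: 0 is black, 255 is white.
batch = np.array([[[0, 255],
                   [128, 64]]], dtype=np.uint8)   # shape (1, 2, 2)

# Same expression as in the post: scale to [0, 1], invert, add a channel axis.
X_small = np.expand_dims(1 - batch / 255.0, -1)

print(X_small.shape)
print(X_small[0, :, :, 0])
```

After the inversion a white pixel (255) becomes 0 and a black pixel (0) becomes 1, and the trailing axis gives the `(height, width, 1)` layout that `Conv2D` expects. Note that `np.array(images)` only produces a regular 4-D batch if all images share the same size.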

5. Split the dataset

Here we split the data into training, validation, and test sets:

from sklearn.model_selection import train_test_split

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, random_state=42, test_size=0.1)

X_train, X_val, Y_train, Y_val = train_test_split(X_train, Y_train, random_state=42, test_size=0.1)

X_train.shape, Y_train.shape, X_val.shape, Y_val.shape

((6480, 100, 100, 1), (6480,), (720, 100, 100, 1), (720,))
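The shapes above follow from applying a 10% split twice. The sketch below redoes that arithmetic. It assumes the held-out share is rounded up to a whole number of samples, and `split_sizes` is a helper name made up for this example. The batch size of 128 is the one used for training later in the post.

```python
import math


def split_sizes(n_samples, test_size):
    """Return (n_kept, n_held_out) for a fractional split."""
    n_held_out = math.ceil(n_samples * test_size)
    return n_samples - n_held_out, n_held_out


n_rest, n_test = split_sizes(8000, 0.1)    # first split: test set
n_train, n_val = split_sizes(n_rest, 0.1)  # second split: validation set

# Number of batches per training epoch with a batch size of 128.
steps_per_epoch = math.ceil(n_train / 128)

print(n_train, n_val, n_test, steps_per_epoch)
```

So 8000 images end up as 6480 for training, 720 for validation, and 800 for testing.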

6. Build the CNN architecture

I modeled this network on the conventional CNN used for handwritten digit recognition. It is not the best possible architecture, so feel free to design one that suits the task better.

model = keras.Sequential([

    # Input: 100x100 images with a single channel

    keras.layers.Input(shape=[100, 100, 1]),

    # Convolution layer 1: 32 filters of size 3x3, ReLU activation

    keras.layers.Conv2D(filters=32, kernel_size=(3, 3), padding='same', activation='relu'),

    # Pooling layer 1: 2x2 max pooling

    keras.layers.MaxPooling2D((2, 2)),

    # Convolution layer 2: 64 filters of size 3x3, ReLU activation

    keras.layers.Conv2D(filters=64, kernel_size=(3, 3), padding='same', activation='relu'),

    # Pooling layer 2: 2x2 max pooling

    keras.layers.MaxPooling2D((2, 2)),

    keras.layers.Dropout(0.5),  # Dropout layer to reduce overfitting

    keras.layers.Flatten(),  # Flatten layer between the convolutional and dense layers

    keras.layers.Dense(128, activation='relu'),  # Dense layer with 128 units and ReLU activation for further feature extraction

    keras.layers.Dropout(0.5),

    keras.layers.Dense(len(label_map), activation='softmax')  # Output layer: one unit per entry in label_map

])

# Print the network structure

model.summary()

The printed summary is:

Model: "sequential_10"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv2d_20 (Conv2D) (None, 100, 100, 32) 320

max_pooling2d_20 (MaxPooling2D) (None, 50, 50, 32) 0

conv2d_21 (Conv2D) (None, 50, 50, 64) 18496

max_pooling2d_21 (MaxPooling2D) (None, 25, 25, 64) 0

dropout_20 (Dropout) (None, 25, 25, 64) 0

flatten_10 (Flatten) (None, 40000) 0

dense_20 (Dense) (None, 128) 5120128

dropout_21 (Dropout) (None, 128) 0

dense_21 (Dense) (None, 20) 2580

=================================================================

Total params: 5,141,524

Trainable params: 5,141,524

Non-trainable params: 0

_________________________________________________________________
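The parameter counts in the summary can be checked by hand. The sketch below recomputes them from the layer shapes, assuming the usual counting rule of one weight per input connection plus one bias per filter or unit. The helper names are made up for this example.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Weights of every kernel plus one bias per filter."""
    return (kernel_h * kernel_w * in_channels + 1) * filters


def dense_params(inputs, units):
    """One weight per input plus one bias, for every unit."""
    return (inputs + 1) * units


conv1 = conv2d_params(3, 3, 1, 32)     # first Conv2D layer
conv2 = conv2d_params(3, 3, 32, 64)    # second Conv2D layer
flat = 25 * 25 * 64                    # Flatten output after two 2x2 poolings
dense1 = dense_params(flat, 128)       # hidden Dense layer
output = dense_params(128, 20)         # 20-class softmax layer
total = conv1 + conv2 + dense1 + output

print(conv1, conv2, flat, dense1, output, total)
```

Almost all of the parameters sit in the first dense layer, because it is connected to all 40000 flattened features.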

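7. Compile the model

We use the Adam optimizer and the sparse categorical cross-entropy loss, which takes the integer class indices in Y directly.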

batch_size = 128

epochs = 50

model.compile(optimizer="adam",

              loss='sparse_categorical_crossentropy',

              metrics=['accuracy'])
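For a single sample, sparse categorical cross-entropy is the negative log of the probability the model assigns to the true class. The sketch below is a plain-Python illustration of that formula, not the Keras implementation, and the probability vectors are invented for the example.

```python
import math


def sparse_categorical_crossentropy(probs, label):
    """Loss for one sample: -log of the probability of the true class."""
    return -math.log(probs[label])


n_classes = 20

# A model that has learned nothing yet spreads probability evenly.
uniform = [1 / n_classes] * n_classes
loss_uniform = sparse_categorical_crossentropy(uniform, 3)

# A model that puts 90% of the probability on the true class.
confident = [0.9] + [0.1 / (n_classes - 1)] * (n_classes - 1)
loss_confident = sparse_categorical_crossentropy(confident, 0)

print(round(loss_uniform, 4), round(loss_confident, 4))
```

With 20 classes, a uniform guess gives a loss of ln 20, roughly 3.0, which is a useful reference point when reading the loss reported in the first training epochs.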

8. Train the model

history = model.fit(

    X_train,

    Y_train,

    batch_size=batch_size,

    epochs=epochs,

    validation_data=(X_val, Y_val)

)

The training output is:

Epoch 1/50

51/51 [==============================] - 3s 49ms/step - loss: 2.6542 - accuracy: 0.1765 - val_loss: 2.0517 - val_accuracy: 0.3986

Epoch 2/50

51/51 [==============================] - 2s 44ms/step - loss: 1.8994 - accuracy: 0.3849 - val_loss: 1.4461 - val_accuracy: 0.5250

Epoch 3/50

51/51 [==============================] - 2s 44ms/step - loss: 1.4393 - accuracy: 0.5106 - val_loss: 1.2178 - val_accuracy: 0.5681

Epoch 4/50

51/51 [==============================] - 2s 44ms/step - loss: 1.1575 - accuracy: 0.5931 - val_loss: 1.0610 - val_accuracy: 0.6403

Epoch 5/50

51/51 [==============================] - 2s 44ms/step - loss: 0.9996 - accuracy: 0.6392 - val_loss: 0.9510 - val_accuracy: 0.6583

Epoch 6/50

51/51 [==============================] - 2s 44ms/step - loss: 0.8632 - accuracy: 0.6806 - val_loss: 0.9430 - val_accuracy: 0.6736

Epoch 7/50

51/51 [==============================] - 2s 45ms/step - loss: 0.7646 - accuracy: 0.7083 - val_loss: 0.9221 - val_accuracy: 0.6625

Epoch 8/50

51/51 [==============================] - 2s 45ms/step - loss: 0.6832 - accuracy: 0.7387 - val_loss: 0.8978 - val_accuracy: 0.6736

Epoch 9/50

51/51 [==============================] - 2s 45ms/step - loss: 0.6341 - accuracy: 0.7627 - val_loss: 0.9280 - val_accuracy: 0.6597

Epoch 10/50

51/51 [==============================] - 2s 44ms/step - loss: 0.5643 - accuracy: 0.7823 - val_loss: 0.9092 - val_accuracy: 0.6764

Epoch 11/50

51/51 [==============================] - 2s 44ms/step - loss: 0.5276 - accuracy: 0.7881 - val_loss: 0.9558 - val_accuracy: 0.6694

Epoch 12/50

51/51 [==============================] - 2s 45ms/step - loss: 0.4750 - accuracy: 0.8174 - val_loss: 0.9492 - val_accuracy: 0.6653

Epoch 13/50

51/51 [==============================] - 2s 45ms/step - loss: 0.4715 - accuracy: 0.8228 - val_loss: 0.9498 - val_accuracy: 0.6556

Epoch 14/50

51/51 [==============================] - 2s 44ms/step - loss: 0.4281 - accuracy: 0.8312 - val_loss: 0.9901 - val_accuracy: 0.6361

Epoch 15/50

51/51 [==============================] - 2s 45ms/step - loss: 0.4026 - accuracy: 0.8441 - val_loss: 0.9836 - val_accuracy: 0.6569

Epoch 16/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3842 - accuracy: 0.8566 - val_loss: 0.9848 - val_accuracy: 0.6903

Epoch 17/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3780 - accuracy: 0.8556 - val_loss: 1.0002 - val_accuracy: 0.6708

Epoch 18/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3523 - accuracy: 0.8654 - val_loss: 1.0268 - val_accuracy: 0.6694

Epoch 19/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3183 - accuracy: 0.8765 - val_loss: 1.0117 - val_accuracy: 0.6833

Epoch 20/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3131 - accuracy: 0.8836 - val_loss: 1.0741 - val_accuracy: 0.6611

Epoch 21/50

51/51 [==============================] - 2s 44ms/step - loss: 0.3117 - accuracy: 0.8818 - val_loss: 1.0466 - val_accuracy: 0.6653

Epoch 22/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2964 - accuracy: 0.8873 - val_loss: 1.0898 - val_accuracy: 0.6583

Epoch 23/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2937 - accuracy: 0.8849 - val_loss: 1.0655 - val_accuracy: 0.6583

Epoch 24/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2833 - accuracy: 0.8894 - val_loss: 1.0780 - val_accuracy: 0.6556

Epoch 25/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2485 - accuracy: 0.9039 - val_loss: 1.1686 - val_accuracy: 0.6639

Epoch 26/50

51/51 [==============================] - 2s 45ms/step - loss: 0.2545 - accuracy: 0.9014 - val_loss: 1.0805 - val_accuracy: 0.6847

Epoch 27/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2429 - accuracy: 0.9065 - val_loss: 1.0698 - val_accuracy: 0.6764

Epoch 28/50

51/51 [==============================] - 2s 45ms/step - loss: 0.2274 - accuracy: 0.9153 - val_loss: 1.2039 - val_accuracy: 0.6639

Epoch 29/50

51/51 [==============================] - 2s 45ms/step - loss: 0.2421 - accuracy: 0.9103 - val_loss: 1.1720 - val_accuracy: 0.6500

Epoch 30/50

51/51 [==============================] - 2s 45ms/step - loss: 0.2323 - accuracy: 0.9168 - val_loss: 1.1635 - val_accuracy: 0.6806

Epoch 31/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2265 - accuracy: 0.9173 - val_loss: 1.1737 - val_accuracy: 0.6625

Epoch 32/50

51/51 [==============================] - 2s 45ms/step - loss: 0.2137 - accuracy: 0.9159 - val_loss: 1.1867 - val_accuracy: 0.6611

Epoch 33/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2145 - accuracy: 0.9213 - val_loss: 1.2354 - val_accuracy: 0.6778

Epoch 34/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2050 - accuracy: 0.9238 - val_loss: 1.1631 - val_accuracy: 0.6722

Epoch 35/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2018 - accuracy: 0.9279 - val_loss: 1.1623 - val_accuracy: 0.6778

Epoch 36/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2071 - accuracy: 0.9248 - val_loss: 1.1234 - val_accuracy: 0.6917

Epoch 37/50

51/51 [==============================] - 2s 44ms/step - loss: 0.2050 - accuracy: 0.9228 - val_loss: 1.1518 - val_accuracy: 0.6944

Epoch 38/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1836 - accuracy: 0.9323 - val_loss: 1.2082 - val_accuracy: 0.6708

Epoch 39/50

51/51 [==============================] - 2s 43ms/step - loss: 0.1817 - accuracy: 0.9338 - val_loss: 1.2318 - val_accuracy: 0.6875

Epoch 40/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1837 - accuracy: 0.9313 - val_loss: 1.2278 - val_accuracy: 0.6736

Epoch 41/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1776 - accuracy: 0.9353 - val_loss: 1.2489 - val_accuracy: 0.6792

Epoch 42/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1875 - accuracy: 0.9306 - val_loss: 1.1912 - val_accuracy: 0.6875

Epoch 43/50

51/51 [==============================] - 2s 43ms/step - loss: 0.1739 - accuracy: 0.9355 - val_loss: 1.3096 - val_accuracy: 0.6611

Epoch 44/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1665 - accuracy: 0.9397 - val_loss: 1.3223 - val_accuracy: 0.6625

Epoch 45/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1623 - accuracy: 0.9378 - val_loss: 1.2948 - val_accuracy: 0.6667

Epoch 46/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1680 - accuracy: 0.9361 - val_loss: 1.2993 - val_accuracy: 0.6611

Epoch 47/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1820 - accuracy: 0.9347 - val_loss: 1.2694 - val_accuracy: 0.6597

Epoch 48/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1704 - accuracy: 0.9352 - val_loss: 1.2661 - val_accuracy: 0.6556

Epoch 49/50

51/51 [==============================] - 2s 43ms/step - loss: 0.1633 - accuracy: 0.9389 - val_loss: 1.3099 - val_accuracy: 0.6653

Epoch 50/50

51/51 [==============================] - 2s 44ms/step - loss: 0.1439 - accuracy: 0.9477 - val_loss: 1.2711 - val_accuracy: 0.6583

Y_pred = model.predict(X_test)

25/25 [==============================] - 0s 6ms/step

9. Evaluate the model

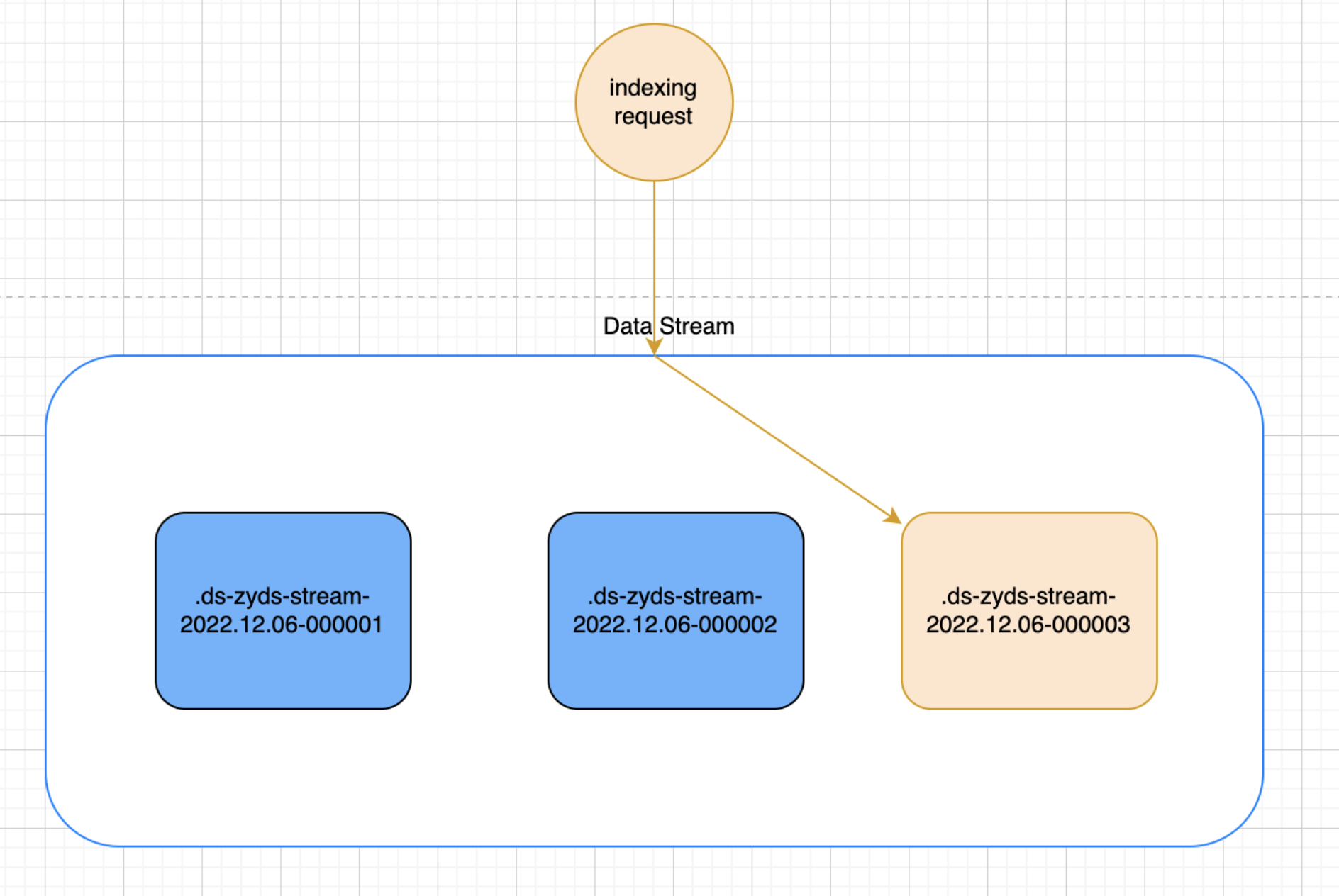

To evaluate the model we use two of the most common tools: the confusion matrix and the accuracy/loss plot.

Confusion matrix:

from sklearn.metrics import ConfusionMatrixDisplay

cmd = ConfusionMatrixDisplay.from_predictions(Y_test, np.argmax(Y_pred, axis=-1), display_labels=label_map.keys())

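In a confusion matrix the diagonal is the dividing line. Taking the figure above as an example, cells on the diagonal count correct predictions, while cells off the diagonal count samples that were predicted as some other class. In our figure the largest counts sit on the diagonal and the off-diagonal counts are comparatively small, so overall the model performs reasonably well.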
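How the diagonal relates to accuracy can be shown with a small hand-built example. This is a sketch with three made-up classes and invented predictions, following the same convention as scikit-learn (rows are true classes, columns are predicted classes). The function names are introduced only for this illustration.

```python
def confusion_matrix(y_true, y_pred, n_classes):
    """Count samples per (true class, predicted class) pair."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix


def accuracy_from_matrix(matrix):
    """Share of samples that fall on the diagonal."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


y_true_demo = [0, 0, 1, 1, 2, 2]
y_pred_demo = [0, 1, 1, 1, 2, 0]

cm_demo = confusion_matrix(y_true_demo, y_pred_demo, 3)
acc_demo = accuracy_from_matrix(cm_demo)

for row in cm_demo:
    print(row)
print(acc_demo)
```

Each off-diagonal cell also says which class a sample was mistaken for, which a single accuracy number cannot show.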

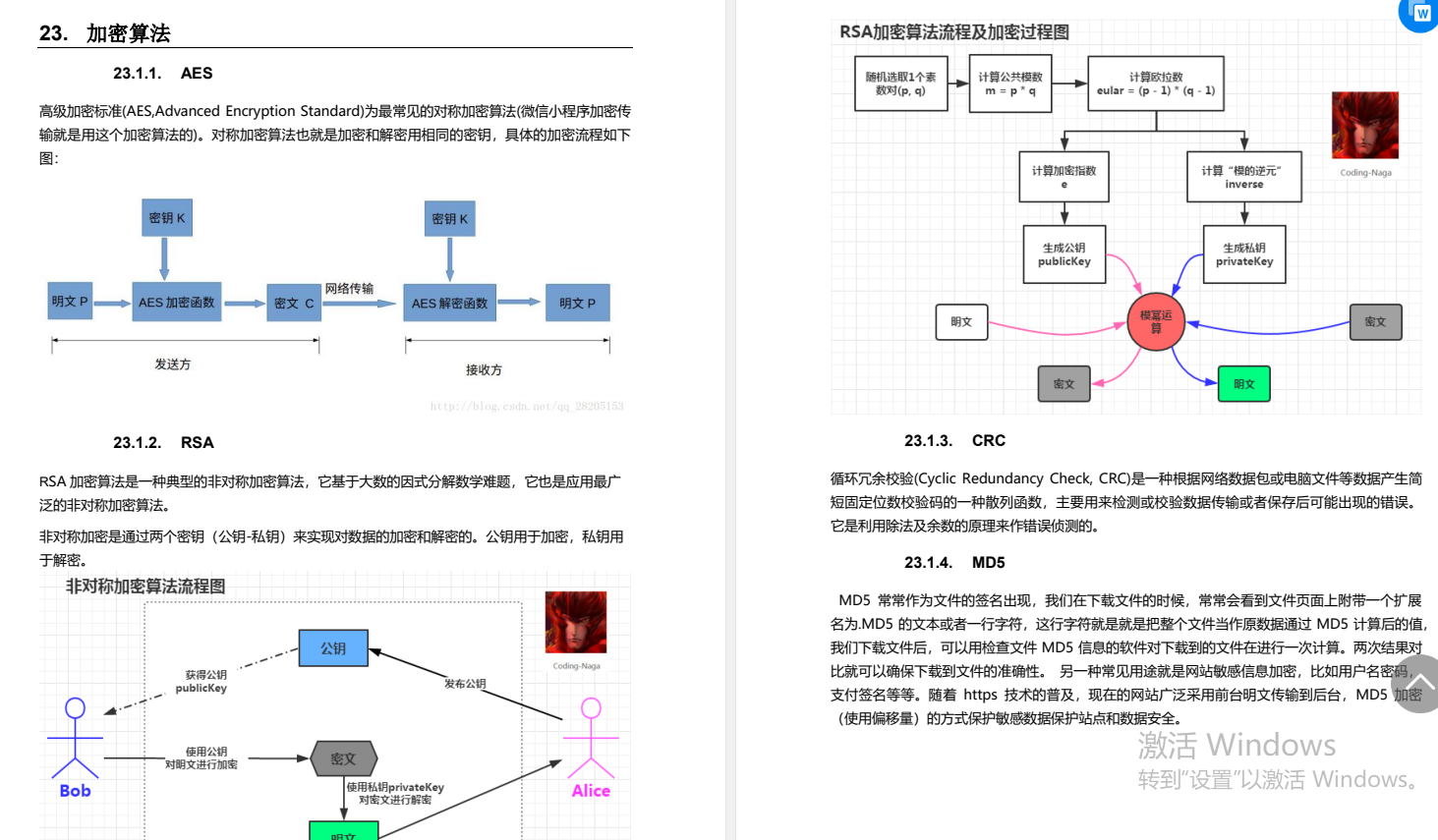

Accuracy/loss plot:

acc = history.history['accuracy']

val_acc = history.history['val_accuracy']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')

plt.plot(epochs_range, val_acc, label='Validation Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, loss, label='Training Loss')

plt.plot(epochs_range, val_loss, label='Validation Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

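The curves show training accuracy climbing steadily to about 0.95, while validation accuracy levels off between roughly 0.65 and 0.69 from around epoch 6. Validation loss reaches its minimum of 0.8978 at epoch 8 and then rises to about 1.27 by epoch 50, while training loss keeps falling. The widening gap between the training and validation curves is a sign of overfitting rather than of a good fit, so the model would likely benefit from stopping earlier or from stronger regularization.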
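The training log can also be read numerically. The sketch below applies a simple patience rule to the validation losses of the first 15 epochs copied from the log above. It is a plain-Python illustration of the idea behind early stopping, and `early_stop_epoch` and the patience of 5 are choices made for this example. In Keras the same idea is available as the `keras.callbacks.EarlyStopping` callback.

```python
def early_stop_epoch(val_losses, patience):
    """Return (best_epoch, stop_epoch), both counted from 1."""
    best_loss = float("inf")
    best_epoch = 0
    wait = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses)


# val_loss for epochs 1 to 15, taken from the training output above.
val_losses = [2.0517, 1.4461, 1.2178, 1.0610, 0.9510,
              0.9430, 0.9221, 0.8978, 0.9280, 0.9092,
              0.9558, 0.9492, 0.9498, 0.9901, 0.9836]

best_epoch, stop_epoch = early_stop_epoch(val_losses, patience=5)
print(best_epoch, stop_epoch)
```

Under this rule training would have stopped well before epoch 50, with the best validation loss already reached at epoch 8.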

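10. Final results

Remember the label mapping from earlier? We now convert the predictions back to the original labels and compare them with the true labels to get the overall accuracy, as well as the accuracy for the digit alone and for the gender alone.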

invert_lable_map = {v: k for k, v in label_map.items()}

num_acc = 0.

sex_acc = 0.

both_acc = 0.

for yp, yt in zip(np.argmax(Y_pred, axis=-1), Y_test):

    # Convert the class indices back to the original labels

    yp = invert_lable_map.get(yp)

    yt = invert_lable_map.get(yt)

    if yp == yt:  # Digit and gender both match

        both_acc += 1

        num_acc += 1

        sex_acc += 1

    elif yp[0] == yt[0]:  # Only the digit matches

        num_acc += 1

    elif yp[1] == yt[1]:  # Only the gender matches

        sex_acc += 1

print("Both ACC:", both_acc / len(Y_test))

print("Number ACC:", num_acc / len(Y_test))

print("Gender ACC:", sex_acc / len(Y_test))

Both ACC: 0.68

Number ACC: 0.905

Gender ACC: 0.74

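The digit alone is recognized with an accuracy of 0.905, which is fairly high. The gender alone reaches 0.74, and the accuracy for getting both right is 0.68, so gender is clearly the harder part and it holds the combined score down. There is room for improvement through further hyperparameter tuning. I also trained only a single model on the combined labels. You could instead train two separate models, one for the digit and one for the gender, which may well give higher accuracy than the single model.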
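If you want to try the two-model variant, each model needs its own target vector. The sketch below derives both from the two-character labels. It is only an assumption about how one might set this up: `GENDER_INDEX` and `split_targets` are names introduced here, and the example labels are made up.

```python
# Hypothetical encoding for the gender character of each label.
GENDER_INDEX = {"M": 0, "W": 1}


def split_targets(labels):
    """Turn labels such as '0M' into separate digit and gender targets."""
    digits = [int(label[0]) for label in labels]
    genders = [GENDER_INDEX[label[1]] for label in labels]
    return digits, genders


example_labels = ["0M", "7W", "3M"]
digit_targets, gender_targets = split_targets(example_labels)

print(digit_targets)
print(gender_targets)
```

The digit model would then end in a 10-unit softmax layer and the gender model in a 2-unit one, instead of the single 20-unit output used in this post.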

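V. Closing remarks

Because of the pandemic our school started the holiday early. I was bored on the high-speed train home today, so instead of waiting until I got home I wrote the whole post on the train. It was written in a hurry and some parts may not be explained as well as they should be, so please bear with me. You are also welcome to get in touch so we can discuss and learn together.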