目录

1. 基本操作

2. 卷积操作

2.1 torch.nn.functional — conv2d

2.2 torch.nn.Conv2d

3. 池化层

4. 非线性激活

4.1 使用ReLU非线性激活

4.2 使用Sigmoid非线性激活

5. 线性激活

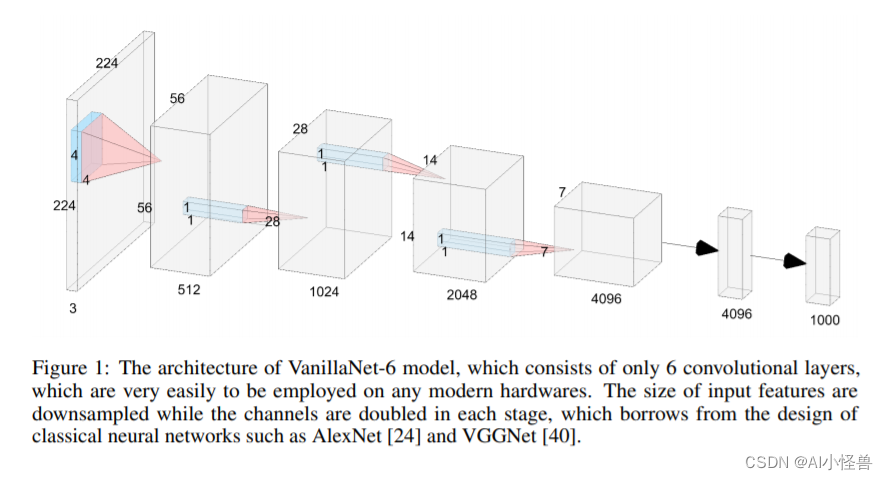

6. PyTorch的一些图像模型

1. Basic operations

import torch
from torch import nn


class MyModel(nn.Module):
    def __init__(self):
        super(MyModel, self).__init__()

    def forward(self, input):
        # The simplest possible forward pass: add 1 to the input
        output = input + 1
        return output


my_module1 = MyModel()
x = torch.tensor(1.0)
output = my_module1(x)  # calling the module runs forward()
print(output)  # tensor(2.)
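Calling the instance as my_module1(x) works because nn.Module defines __call__, which in turn runs forward (along with any registered hooks). A minimal sketch of that relationship, where the class name AddOne is a hypothetical stand-in for MyModel:

```python
import torch
from torch import nn


class AddOne(nn.Module):  # hypothetical name, mirrors MyModel above
    def forward(self, x):
        return x + 1


module = AddOne()
x = torch.tensor(1.0)

via_call = module(x)             # usual way: goes through nn.Module.__call__
via_forward = module.forward(x)  # same value here, but bypasses hooks

print(via_call, via_forward)
```

Calling the module rather than forward directly is the usual convention, since only the former triggers hooks.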

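2. Convolution

2.1 torch.nn.functional -- conv2d

Image source: Bilibili uploader 我是土堆

Note that conv2d performs two-dimensional convolution, while conv1d performs one-dimensional convolution.

nn_conv.py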

import torch
import torch.nn.functional as F

# Input image
input = torch.tensor([
    [1, 2, 0, 3, 1],
    [0, 1, 2, 3, 1],
    [1, 2, 1, 0, 0],
    [5, 2, 3, 1, 1],
    [2, 1, 0, 1, 1]
])

# Convolution kernel
kernel = torch.tensor([
    [1, 2, 1],
    [0, 1, 0],
    [2, 1, 0]
])

# F.conv2d expects the input as (batch, channels, height, width)
# and the weight as (out_channels, in_channels, kernel_height, kernel_width)
input = torch.reshape(input, (1, 1, 5, 5))
kernel = torch.reshape(kernel, (1, 1, 3, 3))
print(input.shape, kernel.shape)

output = F.conv2d(input, kernel, stride=1)
print(output)

Output:

torch.Size([1, 1, 5, 5]) torch.Size([1, 1, 3, 3])
tensor([[[[10, 12, 12],
          [18, 16, 16],
          [13,  9,  3]]]])
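To see where these numbers come from, the same result can be reproduced by sliding the kernel over the image by hand: at each position, multiply the 3×3 window element-wise with the kernel and sum. The sketch below assumes stride 1 and no padding, and uses float tensors for convenience; note that F.conv2d does not flip the kernel (it is technically cross-correlation).

```python
import torch
import torch.nn.functional as F

image = torch.tensor([
    [1, 2, 0, 3, 1],
    [0, 1, 2, 3, 1],
    [1, 2, 1, 0, 0],
    [5, 2, 3, 1, 1],
    [2, 1, 0, 1, 1],
], dtype=torch.float32)

kernel = torch.tensor([
    [1, 2, 1],
    [0, 1, 0],
    [2, 1, 0],
], dtype=torch.float32)

k = kernel.shape[0]
out_size = image.shape[0] - k + 1  # 5 - 3 + 1 = 3 for stride 1, no padding

manual = torch.zeros(out_size, out_size)
for i in range(out_size):
    for j in range(out_size):
        window = image[i:i + k, j:j + k]        # 3x3 patch under the kernel
        manual[i, j] = (window * kernel).sum()  # element-wise product, then sum

reference = F.conv2d(image.reshape(1, 1, 5, 5), kernel.reshape(1, 1, 3, 3))

print(manual)
print(torch.allclose(manual, reference[0, 0]))
```

For the top-left position this is 1·1 + 2·2 + 0·1 + 0·0 + 1·1 + 2·0 + 1·2 + 2·1 + 1·0 = 10, matching the first entry of the output.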

With a stride of 2:

output2 = F.conv2d(input, kernel, stride=2)
print(output2)

Output:

tensor([[[[10, 12],
          [13,  3]]]])

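With padding=1, a one-pixel border of zeros is added on every side of the input, which amounts to two extra rows and two extra columns of zeros (the 5×5 input becomes 7×7):

output3 = F.conv2d(input, kernel, stride=1, padding=1)
print(output3)

Output:

tensor([[[[ 1,  3,  4, 10,  8],
          [ 5, 10, 12, 12,  6],
          [ 7, 18, 16, 16,  8],
          [11, 13,  9,  3,  4],
          [14, 13,  9,  7,  4]]]])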
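The three output sizes seen so far (3×3, 2×2 and 5×5) follow from the usual output-size formula, floor((size + 2·padding − kernel_size) / stride) + 1. The helper conv_out_size below is a hypothetical name introduced only for illustration, and it assumes dilation is 1; it is checked against the shapes F.conv2d actually produces.

```python
import torch
import torch.nn.functional as F


def conv_out_size(size, kernel_size, stride=1, padding=0):
    """Output length along one dimension (dilation assumed to be 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


x = torch.zeros(1, 1, 5, 5)  # only the shape matters here
w = torch.zeros(1, 1, 3, 3)

results = []
for stride, padding in [(1, 0), (2, 0), (1, 1)]:
    predicted = conv_out_size(5, 3, stride, padding)
    actual = F.conv2d(x, w, stride=stride, padding=padding).shape[-1]
    results.append((stride, padding, predicted, actual))
    print(f"stride={stride} padding={padding} predicted={predicted} actual={actual}")
```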

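2.2 torch.nn.Conv2d

Parameters:

in_channels -- number of channels in the input

out_channels -- number of channels in the output, which equals the number of convolution kernels; for example, with in_channels=1 and two kernels, out_channels=2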
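One way to check how in_channels and out_channels relate to the kernels is to inspect the layer's weight tensor, whose shape is (out_channels, in_channels, kernel_height, kernel_width). A small sketch using a 3-in, 6-out layer with a 3×3 kernel:

```python
from torch import nn

conv = nn.Conv2d(in_channels=3, out_channels=6, kernel_size=3)

# 6 kernels, each spanning all 3 input channels with a 3x3 window
print(conv.weight.shape)  # torch.Size([6, 3, 3, 3])
# one bias value per output channel
print(conv.bias.shape)    # torch.Size([6])
```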

nn_conv2d.py

import torch
import torchvision
from torch import nn
from torch.nn import Conv2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10('./dataset', train=False, transform=torchvision.transforms.ToTensor(),
                                       download=True)
dataloader = DataLoader(dataset, batch_size=64)


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        # Our images have three channels (RGB)
        # There are six 3×3 kernels, one per output channel, and each kernel spans 3 input channels
        self.conv1 = Conv2d(in_channels=3, out_channels=6, kernel_size=3, stride=1, padding=0)

    def forward(self, x):
        x = self.conv1(x)
        return x


myModule1 = MyModule()
writer = SummaryWriter('./logs')

step = 0
for data in dataloader:
    imgs, targets = data
    output = myModule1(imgs)
    print(imgs.shape)    # torch.Size([64, 3, 32, 32])
    print(output.shape)  # torch.Size([64, 6, 30, 30])
    # Pass -1 for a dimension you do not know and it is computed automatically;
    # here the batch dimension grows
    output = torch.reshape(output, (-1, 3, 30, 30))
    writer.add_images('input', imgs, step)
    writer.add_images('output', output, step)
    step = step + 1

writer.close()

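Note: the output has to be reshaped because TensorBoard cannot display six-channel images. We therefore split the six channels into two groups of three and move the extra factor into the batch dimension, which doubles the batch size. When the exact size of a dimension is not known, -1 can be passed in its place and it is inferred.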
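The effect of this reshape can be checked on a random tensor of the same shape, without loading CIFAR10. The sketch below is only an illustration of the bookkeeping: each 6-channel sample ends up as two consecutive 3-channel "images" (channels 0-2, then channels 3-5).

```python
import torch

output = torch.randn(64, 6, 30, 30)  # stand-in for the conv output of one batch
reshaped = torch.reshape(output, (-1, 3, 30, 30))

print(reshaped.shape)  # torch.Size([128, 3, 30, 30])

# The first two reshaped images come from sample 0: channels 0-2 and channels 3-5
print(torch.equal(reshaped[0], output[0, :3]))
print(torch.equal(reshaped[1], output[0, 3:]))
```

This is a visualization trick only: the resulting 3-channel images are not meaningful RGB pictures.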

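3. Pooling layers

Image source: Bilibili uploader 我是土堆

Explanation: if stride is not set, it defaults to kernel_size. If ceil_mode is set to True, a window at the edge that covers less than a full 3×3 region is still kept and computed; if ceil_mode is set to False, such a partial window is discarded and not computed.

N -- batch_size, C -- channels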
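The difference between the two ceil_mode settings comes down to rounding the output size up or down. The helper pool_out_size below is a hypothetical function written for illustration; it assumes padding=0 and dilation=1, and is compared against what MaxPool2d returns for a 5×5 input with kernel_size=3.

```python
import math

import torch
from torch.nn import MaxPool2d


def pool_out_size(size, kernel_size, stride=None, ceil_mode=False):
    """Output length along one dimension, assuming padding=0 and dilation=1."""
    stride = kernel_size if stride is None else stride  # default stride is kernel_size
    raw = (size - kernel_size) / stride + 1
    out = math.ceil(raw) if ceil_mode else math.floor(raw)
    # In ceil mode the last window must still start inside the input
    if ceil_mode and (out - 1) * stride >= size:
        out -= 1
    return out


x = torch.zeros(1, 1, 5, 5)  # only the shape matters here
sizes = {}
for ceil_mode in (True, False):
    predicted = pool_out_size(5, 3, ceil_mode=ceil_mode)
    actual = MaxPool2d(kernel_size=3, ceil_mode=ceil_mode)(x).shape[-1]
    sizes[ceil_mode] = (predicted, actual)
    print(f"ceil_mode={ceil_mode}: predicted={predicted}, actual={actual}")
```

With a 5×5 input, (5 − 3) / 3 + 1 ≈ 1.67, so rounding up gives a 2×2 output and rounding down gives 1×1.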

nn_maxpool.py

import torch
from torch import nn
from torch.nn import MaxPool2d

input = torch.tensor([
    [1, 2, 0, 3, 1],
    [0, 1, 2, 3, 1],
    [1, 2, 1, 0, 0],
    [5, 2, 3, 1, 1],
    [2, 1, 0, 1, 1]
], dtype=torch.float32)

# -1: batch size (inferred), 1: number of channels
input = torch.reshape(input, (-1, 1, 5, 5))
# print(input.shape)


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        self.maxpool1 = MaxPool2d(kernel_size=3, ceil_mode=True)

    def forward(self, x):
        output = self.maxpool1(x)
        return output


myModule1 = MyModule()
output = myModule1(input)
print(output)

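Note: the input has to be converted to floating point (hence dtype=torch.float32 in the code).

Output:

tensor([[[[2., 3.],
          [5., 1.]]]])

With ceil_mode=False:

tensor([[[[2.]]]])

The purpose of max pooling: keep the salient features of the input while reducing the amount of data.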
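To get a feel for how much the data shrinks, the sketch below pools a random tensor shaped like one CIFAR10 batch (an assumed stand-in, so no download is needed) with kernel_size=3 and compares element counts before and after.

```python
import torch
from torch.nn import MaxPool2d

batch = torch.rand(64, 3, 32, 32)  # stand-in for one batch of CIFAR10 images
pooled = MaxPool2d(kernel_size=3, ceil_mode=False)(batch)

print(pooled.shape)                   # torch.Size([64, 3, 10, 10])
print(batch.numel(), pooled.numel())  # 196608 19200
```

Each 32×32 channel becomes 10×10, so the batch holds roughly a tenth of the original values; the batch and channel dimensions are unchanged.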

nn_maxpool.py

import torch
import torchvision
from torch import nn
from torch.nn import MaxPool2d
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10('./dataset', train=False, transform=torchvision.transforms.ToTensor(),
                                       download=True)
dataloader = DataLoader(dataset, batch_size=64)


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        self.maxpool1 = MaxPool2d(kernel_size=3, ceil_mode=False)

    def forward(self, x):
        output = self.maxpool1(x)
        return output


myModule1 = MyModule()
writer = SummaryWriter('./logs')

step = 0
for data in dataloader:
    imgs, targets = data
    writer.add_images('input', imgs, step)
    output = myModule1(imgs)
    # Pooling keeps the 3 channels, so the output can be logged without reshaping
    writer.add_images('output', output, step)
    step = step + 1

writer.close()

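4. Nonlinear activations

4.1 ReLU activation

Note the inplace parameter: with inplace=True the input tensor itself is overwritten with the result; with the default inplace=False the input is left unchanged and a new tensor is returned.

Image source: Bilibili uploader 我是土堆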
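The behaviour of the inplace parameter can be seen on a two-element tensor. This is a minimal sketch with illustrative variable names; it compares the input before and after applying ReLU in both modes.

```python
import torch
from torch.nn import ReLU

# inplace=False (the default): the input is untouched, a new tensor is returned
a = torch.tensor([-1.0, 2.0])
b = ReLU(inplace=False)(a)
print(a, b)

# inplace=True: the input tensor itself is overwritten with the result
c = torch.tensor([-1.0, 2.0])
d = ReLU(inplace=True)(c)
print(c, d)
```

In-place mode saves memory but destroys the original values, so the default is the safer choice when the input is still needed afterwards.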

nn_relu.py

import torch
from torch import nn
from torch.nn import ReLU

input = torch.tensor([
    [1, -0.5],
    [-1, 3]
])

# batch_size, channel, H, W
input = torch.reshape(input, (-1, 1, 2, 2))


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        self.relu1 = ReLU()

    def forward(self, x):
        output = self.relu1(x)
        return output


myModule1 = MyModule()
output = myModule1(input)
print(output)

Output:

tensor([[[[1., 0.],
          [0., 3.]]]])

4.2 Sigmoid activation

import torch
import torchvision
from torch import nn
from torch.nn import ReLU, Sigmoid
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10('./dataset', train=False, transform=torchvision.transforms.ToTensor(),
                                       download=True)
dataloader = DataLoader(dataset, batch_size=64)


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        self.relu1 = ReLU()  # defined but not used in forward
        self.sigmoid1 = Sigmoid()

    def forward(self, x):
        output = self.sigmoid1(x)
        return output


myModule1 = MyModule()
writer = SummaryWriter('logs')

step = 0
for data in dataloader:
    imgs, targets = data
    writer.add_images('input', imgs, step)
    output = myModule1(imgs)
    writer.add_images('output', output, step)
    step = step + 1

writer.close()
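Sigmoid computes 1 / (1 + exp(−x)), squashing any real input into the open interval (0, 1). The sketch below checks that definition against torch.sigmoid on a few sample values chosen for illustration.

```python
import torch

x = torch.tensor([-5.0, 0.0, 1.0, 5.0])

y = torch.sigmoid(x)              # the same function nn.Sigmoid applies
manual = 1 / (1 + torch.exp(-x))  # the definition written out

print(y)
print(torch.allclose(y, manual))
```

Because ToTensor scales pixel values to [0, 1], the images logged after Sigmoid only contain values between 0.5 and roughly 0.73, which is why they tend to look washed out in TensorBoard.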

5. Linear layer

nn_linear.py

import torch
import torchvision
from torch import nn
from torch.nn import Linear
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10('./dataset', train=False, transform=torchvision.transforms.ToTensor(),
                                       download=True)
# drop_last=True: the final batch has only 16 images (10000 = 156 × 64 + 16);
# flattened, it would give 49152 values instead of the 196608 that Linear expects
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class MyModule(nn.Module):
    def __init__(self):
        super(MyModule, self).__init__()
        self.linear1 = Linear(196608, 10)  # in_features, out_features

    def forward(self, x):
        output = self.linear1(x)
        return output


myModule1 = MyModule()

for data in dataloader:
    imgs, targets = data
    # print(imgs.shape)  # torch.Size([64, 3, 32, 32])
    # output = torch.reshape(imgs, (1, 1, 1, -1))
    # print(output.shape)  # torch.Size([1, 1, 1, 196608])
    # torch.flatten can be used to flatten everything into a one-dimensional vector
    output = torch.flatten(imgs)
    # print(output.shape)  # torch.Size([196608])
    output = myModule1(output)
    # print(output.shape)  # torch.Size([10])
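Flattening the whole batch into a single vector mixes all 64 images together and yields one 10-value output per batch. A common alternative, shown here only as a sketch and not as the approach used above, is to flatten each image separately with start_dim=1, so the linear layer sees 3 × 32 × 32 = 3072 features per image and returns one row per image. The random tensor stands in for a CIFAR10 batch.

```python
import torch
from torch import nn

imgs = torch.rand(64, 3, 32, 32)  # stand-in for one batch of CIFAR10 images

flat = torch.flatten(imgs, start_dim=1)  # keep the batch dimension
print(flat.shape)                        # torch.Size([64, 3072])

linear = nn.Linear(3 * 32 * 32, 10)      # in_features per image, out_features
scores = linear(flat)
print(scores.shape)                      # torch.Size([64, 10])
```

With this layout the layer also works for a smaller final batch, since in_features no longer depends on the batch size.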

6. Some PyTorch image models