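I first came across YOLOv5 in a video where it detected every pedestrian on a street and drew boxes around them in real time. Later I studied convolutional neural networks (CNNs), and while working on a project requirement I found that a plain CNN was still not much use for locating and cropping objects out of an image. That reminded me of YOLOv5, and after trying it hands-on I could only say: it really is powerful! The original YOLO project page describes the detector as more than 1000 times faster than R-CNN and 100 times faster than Fast R-CNN. YOLO stands for You Only Look Once. The original versions were built on the open-source Darknet framework, whereas YOLOv5 is a PyTorch implementation from Ultralytics. The goal of the whole family is to let computers recognize everything in the world. Follow along and let's walk through this article, "Mask Detection Based on YOLOv5"!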

Contents

1. Introduction to YOLOv5

2. Project Background

3. Detection Results

4. Dataset Processing

5. Results Analysis

6. Summary

7. Model Code (Partial)

1. Introduction to YOLOv5

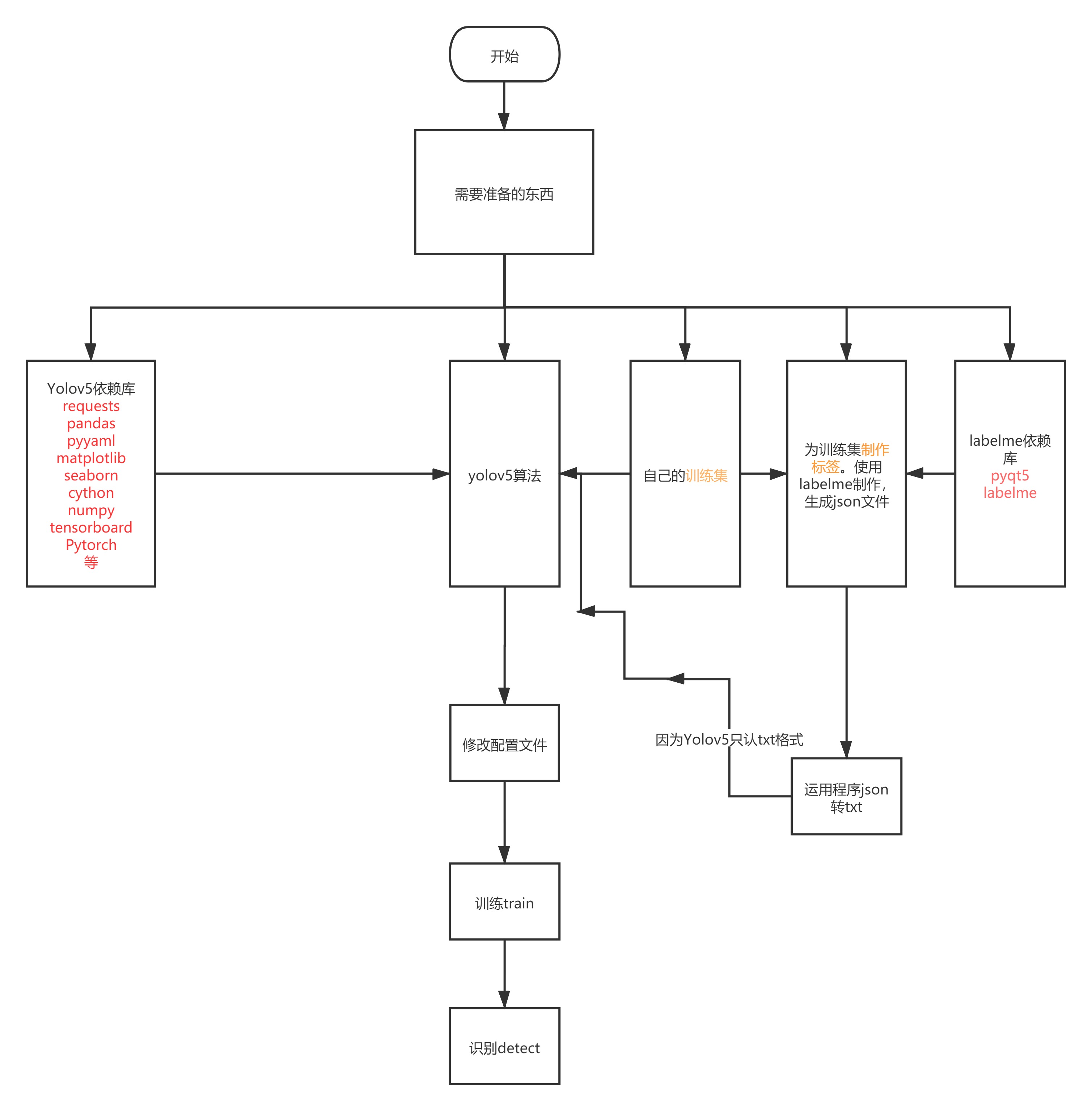

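On June 25, 2020, Ultralytics released the first official version of YOLOv5. Its accuracy is on par with YOLOv4, it was among the most advanced object detectors available at the time, and it was reported to be among the fastest at inference. YOLOv5 comes in four sizes: yolov5s, yolov5m, yolov5l and yolov5x. I won't go through the network structure and the underlying maths here (they are complicated, I only follow them in outline and could not explain them well). Instead, I refer to another blogger's YOLOv5 network structure diagram (YOLOv5 network structure diagram) and a flowchart (YOLOv5 operation flowchart).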
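The four sizes share one architecture and differ only in two scaling factors. The sketch below shows how a block's repeat count and channel width could be scaled for each size. It assumes the `depth_multiple` and `width_multiple` values from the YOLOv5 model YAML files and the rounding rule used when the model is built; `scale_block` and `MODEL_SCALES` are names made up for this illustration.

```python
import math

# (depth_multiple, width_multiple) per model size, as listed in the model YAML files.
MODEL_SCALES = {
    "yolov5s": (0.33, 0.50),
    "yolov5m": (0.67, 0.75),
    "yolov5l": (1.00, 1.00),
    "yolov5x": (1.33, 1.25),
}


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scale_block(repeats, channels, model):
    """Hypothetical helper: scale one block's repeat count and output channels."""
    depth, width = MODEL_SCALES[model]
    scaled_repeats = max(round(repeats * depth), 1) if repeats > 1 else repeats
    scaled_channels = make_divisible(channels * width)
    return scaled_repeats, scaled_channels


for name in MODEL_SCALES:
    # A block declared with 9 repeats and 512 channels in the base config.
    print(name, scale_block(9, 512, name))
```

The smaller the two factors, the shallower and narrower the network, which is why yolov5s is the quickest to train on a CPU.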

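YOLOv5 is an extension of the YOLO series; you can also see it as an improvement built on YOLOv3 and YOLOv4. There is no paper accompanying YOLOv5, but the authors actively publish the source code on GitHub, and by reading it we can quickly understand the network architecture and how it works.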

2. Project Background

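The COVID-19 epidemic is still serious, and wearing a mask in public places has become common sense. The novel coronavirus spreads mainly through respiratory droplets, and a mask effectively blocks that route. Masks are an important line of defence against respiratory infectious diseases and lower the risk of infection. A mask not only stops an infected person from spraying droplets, reducing both their quantity and their speed, it also blocks virus-laden droplet nuclei so that the wearer does not inhale them. Some studies reportedly show that when both parties wear masks and stay more than 1.8 metres apart, the chance of infection is close to zero.

Yet there are always people around us who dislike wearing masks. Whether in public places such as shopping malls, classrooms, streets and underground car parks, or in crowded meeting rooms, they do not want to be "constrained" by a mask. A mask detection model trained with YOLOv5 can find, accurately and in real time, who is wearing a mask and who is not. It can then trigger a targeted reminder or stop the person from entering a public place, which saves manpower and greatly improves efficiency.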

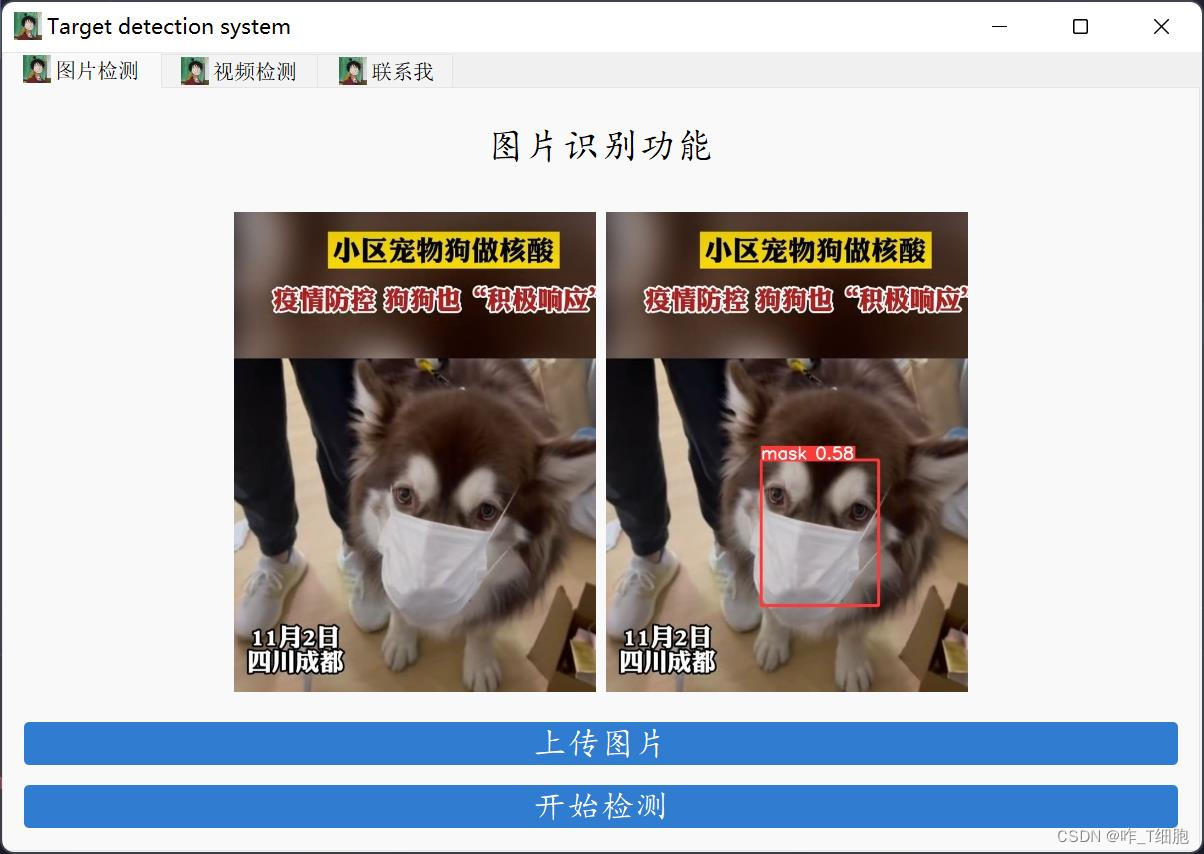

3. Detection Results

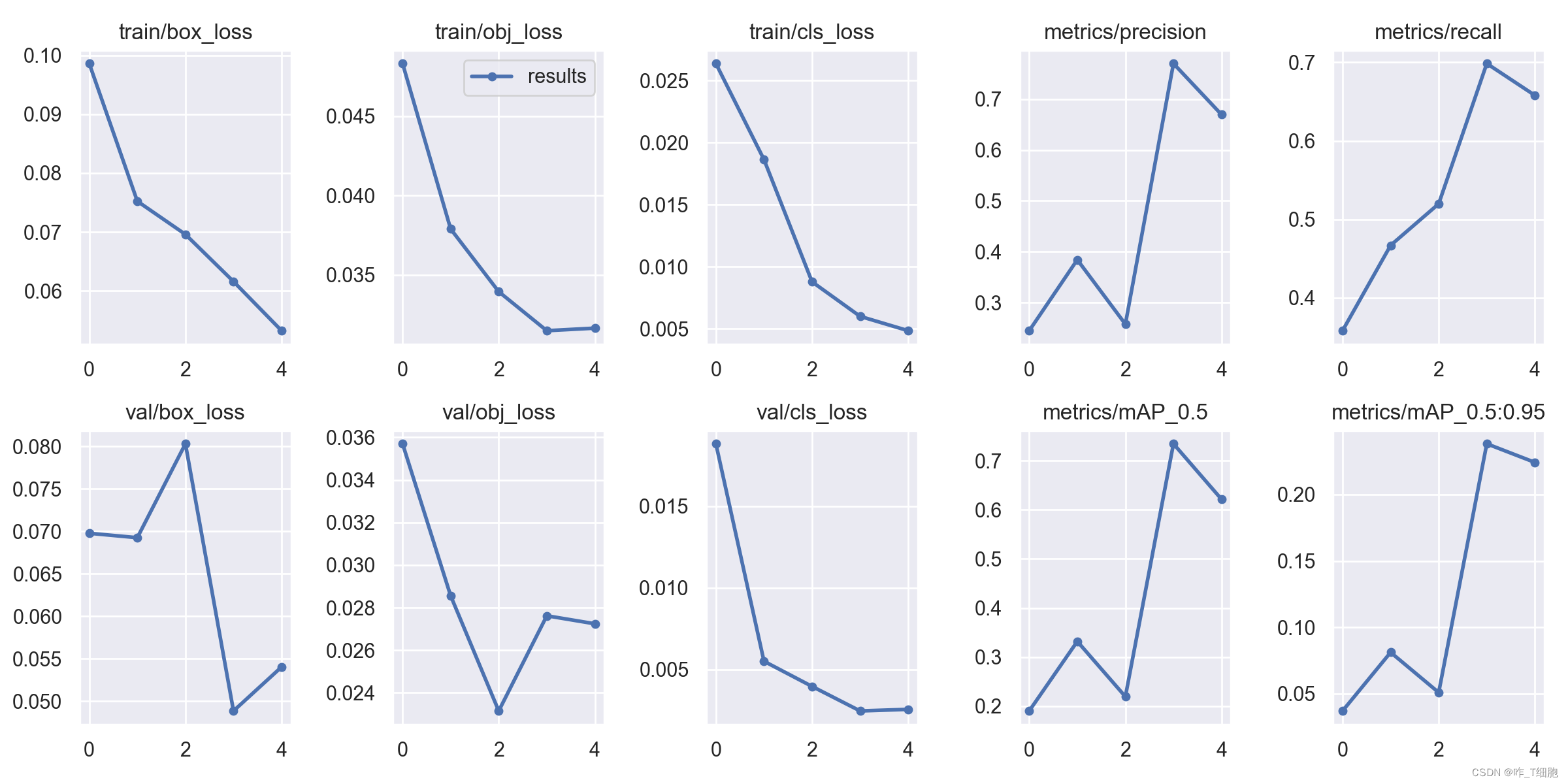

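Because I trained on a CPU, it was very slow. I set the number of epochs to 5, meaning every image was seen 5 times. With 1200 training samples, training took three and a half hours. For real deployment I would switch to a GPU, which should be much faster. Let's look directly at the model's detection results on images and on video:

As the examples above show, after only five epochs the detections already look quite accurate. Once again, YOLOv5 impresses!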
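To put the training run described above in perspective, here is a rough back-of-the-envelope calculation. The image count, epoch count and wall-clock time come from the article. The batch size of 4 is an assumption taken from the default in the training script listed later, and the result ignores time spent on validation and data loading.

```python
import math

train_images = 1200      # from the article
epochs = 5               # from the article
wall_clock_hours = 3.5   # from the article
batch_size = 4           # assumption: the --batch-size default in the listed script

batches_per_epoch = math.ceil(train_images / batch_size)
total_iterations = batches_per_epoch * epochs
seconds_per_iteration = wall_clock_hours * 3600 / total_iterations

print(f"{batches_per_epoch} batches per epoch, {total_iterations} iterations in total")
print(f"about {seconds_per_iteration:.1f} s per iteration on the CPU")
```

Under these assumptions each batch of four images takes several seconds, which is the cost a GPU would mainly reduce.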

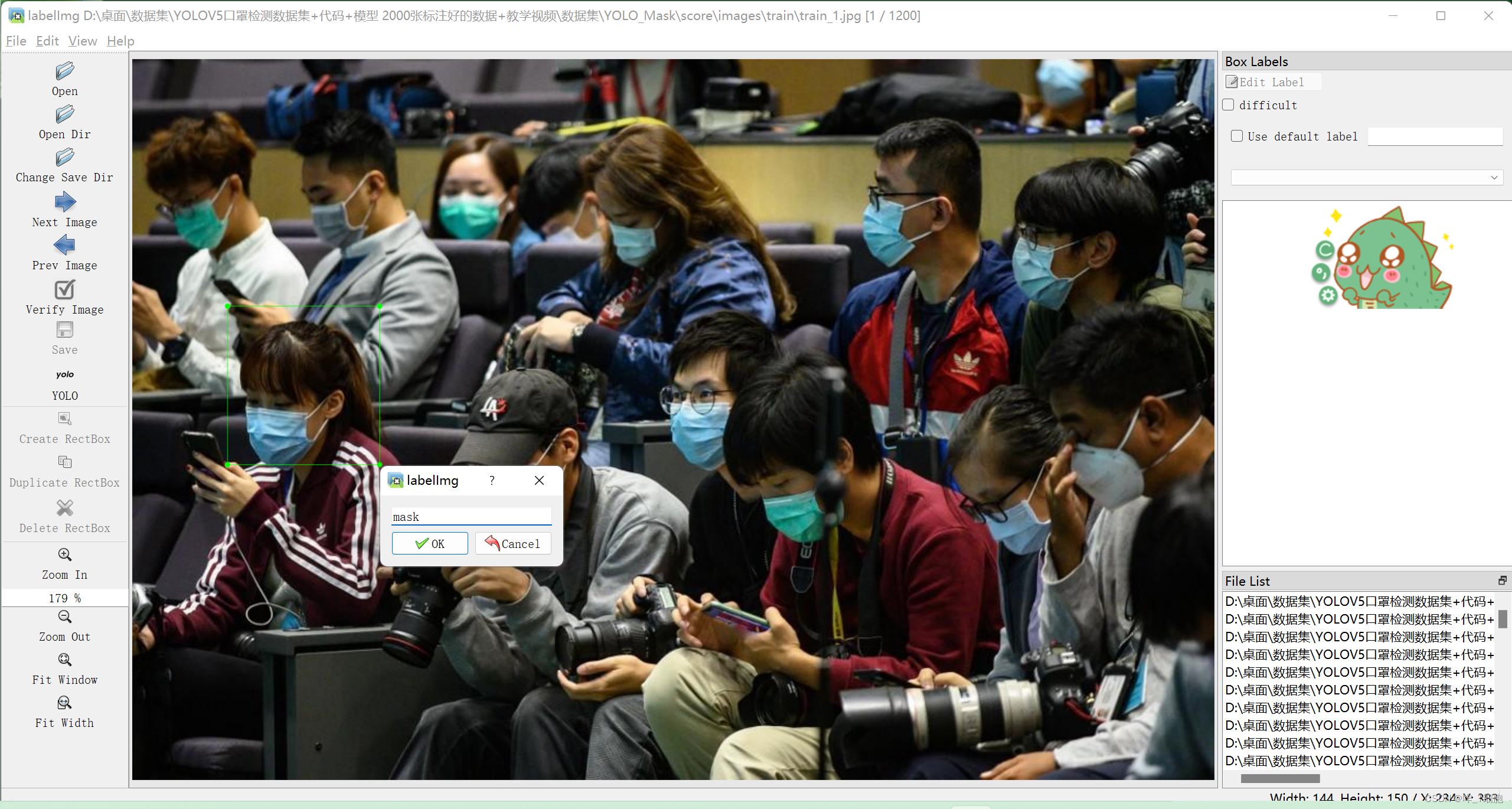

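4. Dataset Processing: Adding Labels

The training set contains 1200 photos of people with and without masks, and the validation set has 400 photos. The corresponding label files are already in their folders. Here I focus on how the labels are annotated. I find this one of the most approachable things about YOLOv5 and also one of its strengths: you can label anything you want!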
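The training script reads the dataset description from a YAML file with the keys `train`, `val`, `nc` and `names`. The article does not show that file, so the sketch below is only a guess at what it might contain: the paths are hypothetical, and the class order (0 = mask, 1 = face) is inferred from the label explanation and the results section. It uses plain string formatting so it runs without extra packages.

```python
# Hypothetical dataset description; paths and class order are assumptions.
dataset = {
    "train": "datasets/mask/images/train",  # hypothetical folder with the 1200 training photos
    "val": "datasets/mask/images/val",      # hypothetical folder with the 400 validation photos
    "nc": 2,                                # number of classes
    "names": ["mask", "face"],              # class 0 = mask, class 1 = face (assumed order)
}


def check(cfg):
    """Mirror the consistency check the training script performs on nc and names."""
    assert len(cfg["names"]) == cfg["nc"], f"{len(cfg['names'])} names found for nc={cfg['nc']}"


def to_yaml(cfg):
    """Render the dictionary as a small YAML document."""
    names = ", ".join(repr(n) for n in cfg["names"])
    lines = [
        f"train: {cfg['train']}",
        f"val: {cfg['val']}",
        f"nc: {cfg['nc']}",
        f"names: [{names}]",
    ]
    return "\n".join(lines) + "\n"


check(dataset)
print(to_yaml(dataset))
```

The printed text could be saved as the file passed to `--data`; the real file used for this project may differ.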

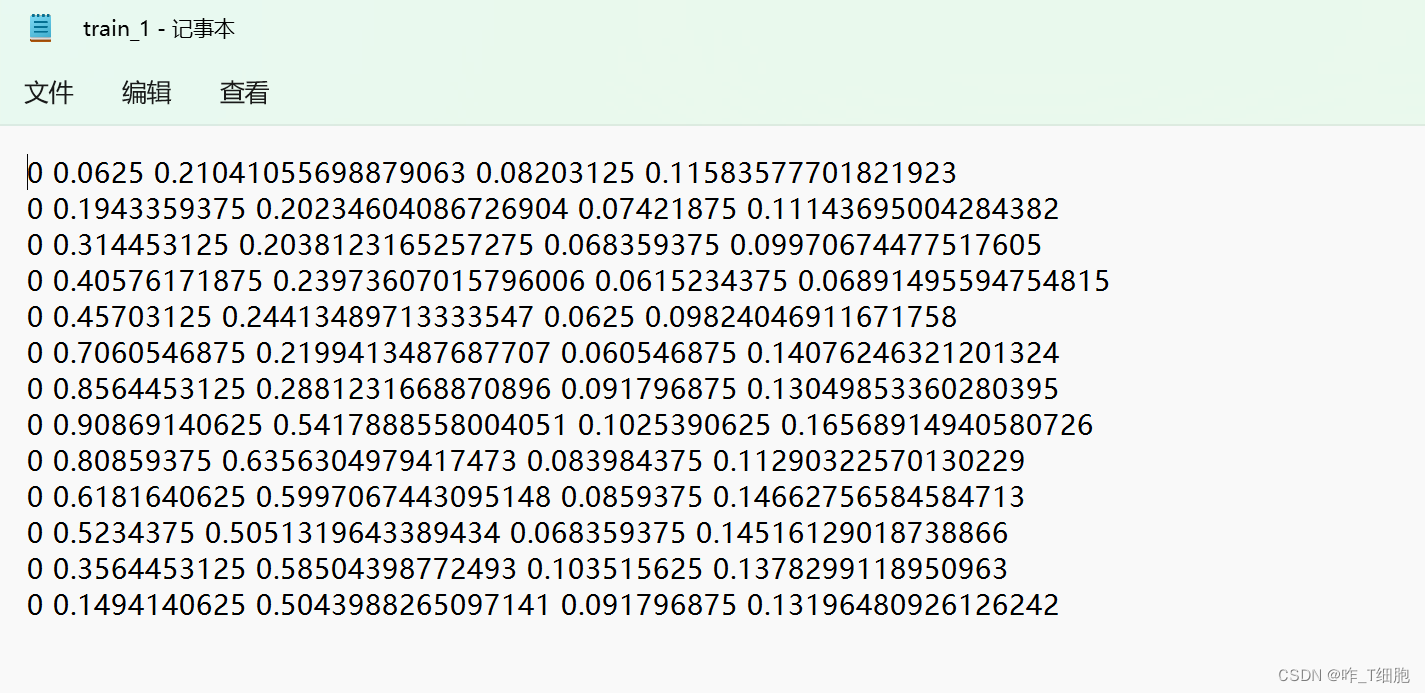

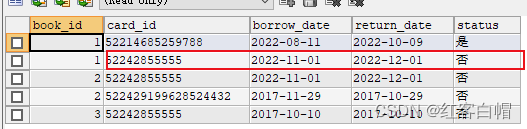

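You can launch labelImg from your virtual environment; this is its interface. On the left, Open Dir opens the dataset folder, Change Save Dir sets where the annotations are saved, and Next Image and Prev Image move to the next and the previous picture. The key step is Create RectBox: draw a rectangle around the object you want and give it a label. Here I boxed the woman in red and labelled her mask, meaning she is wearing one. Then I labelled the second person, and so on until everyone was annotated. With the save format set to YOLO, this produces a txt file for the image:

Let's read it line by line. Each line describes one person in the picture. The first number, 0, is the class index; class 0 here means wearing a mask. The next two numbers are the centre coordinates of the rectangle, and the last two are its width and height. In the YOLO label format all four values are normalized to the range 0 to 1 by the image width and height. During training, each image is passed in together with its label file as one training sample, so that the machine gradually learns to tell whether a person is wearing a mask.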
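The label format described above can be turned back into pixel coordinates with a few lines of code. This is a minimal sketch: the sample line and the image size are made up for illustration, and `parse_yolo_line` is a hypothetical helper, not part of YOLOv5 or labelImg.

```python
from typing import NamedTuple


class Box(NamedTuple):
    class_id: int
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def parse_yolo_line(line, img_w, img_h):
    """Convert 'class x_center y_center width height' (normalized) to pixel corners."""
    cls, xc, yc, w, h = line.split()
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    return Box(int(cls), xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)


sample = "0 0.5 0.5 0.25 0.5"  # made-up label: class 0 (mask), centred box
box = parse_yolo_line(sample, img_w=640, img_h=480)
print(box)
```

Because the values are normalized, the same label file stays valid when the image is resized for training.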

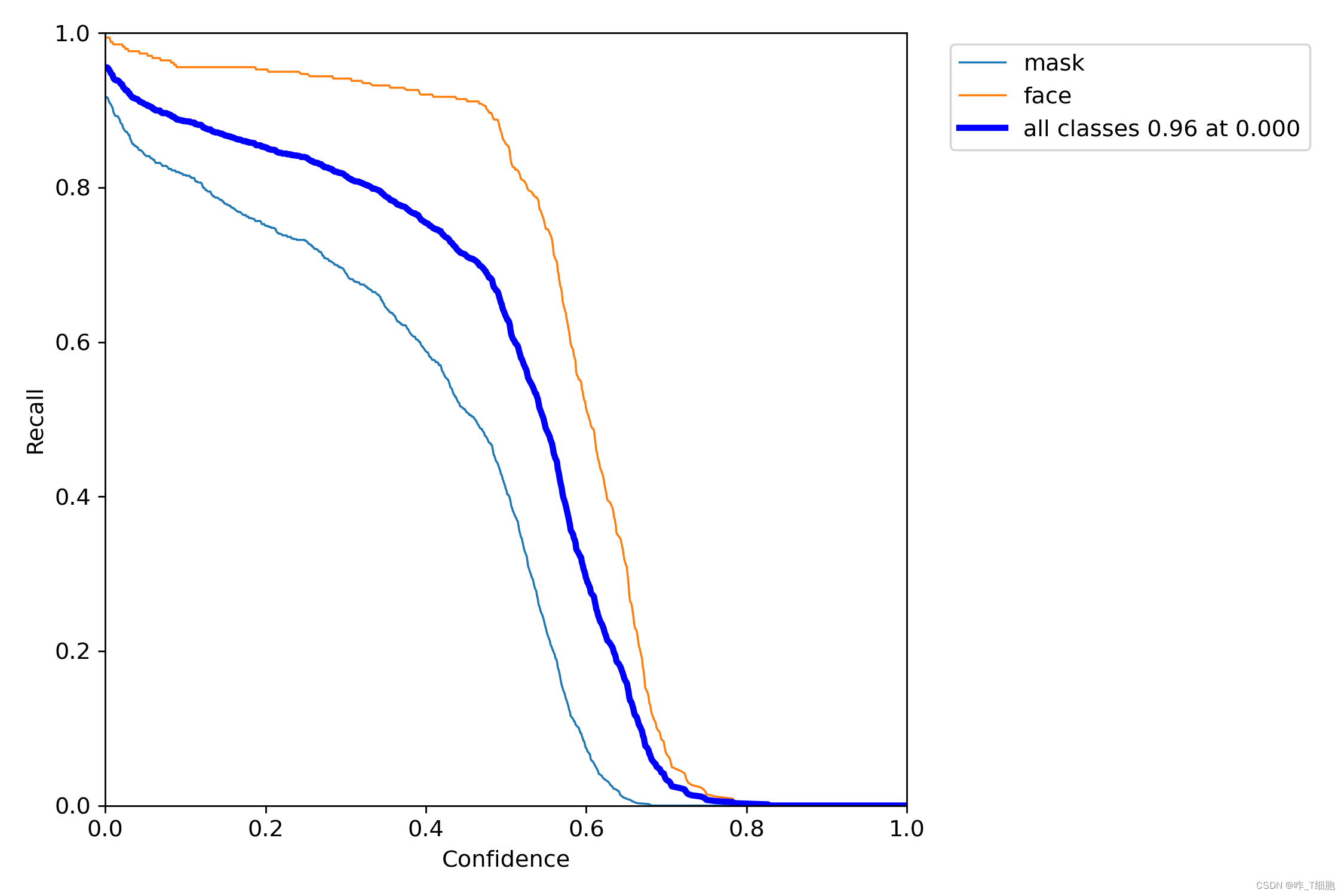

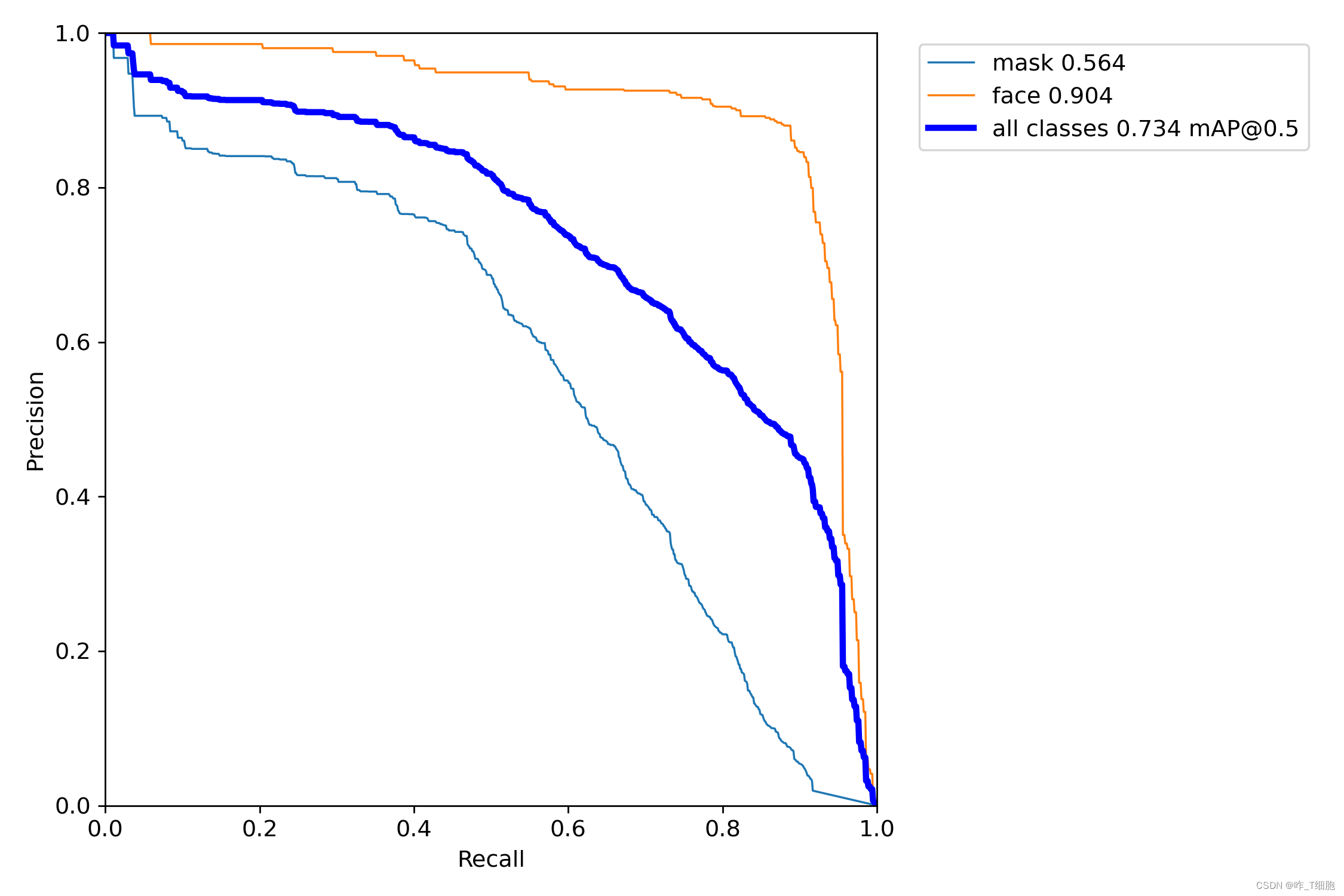

5. Results Analysis

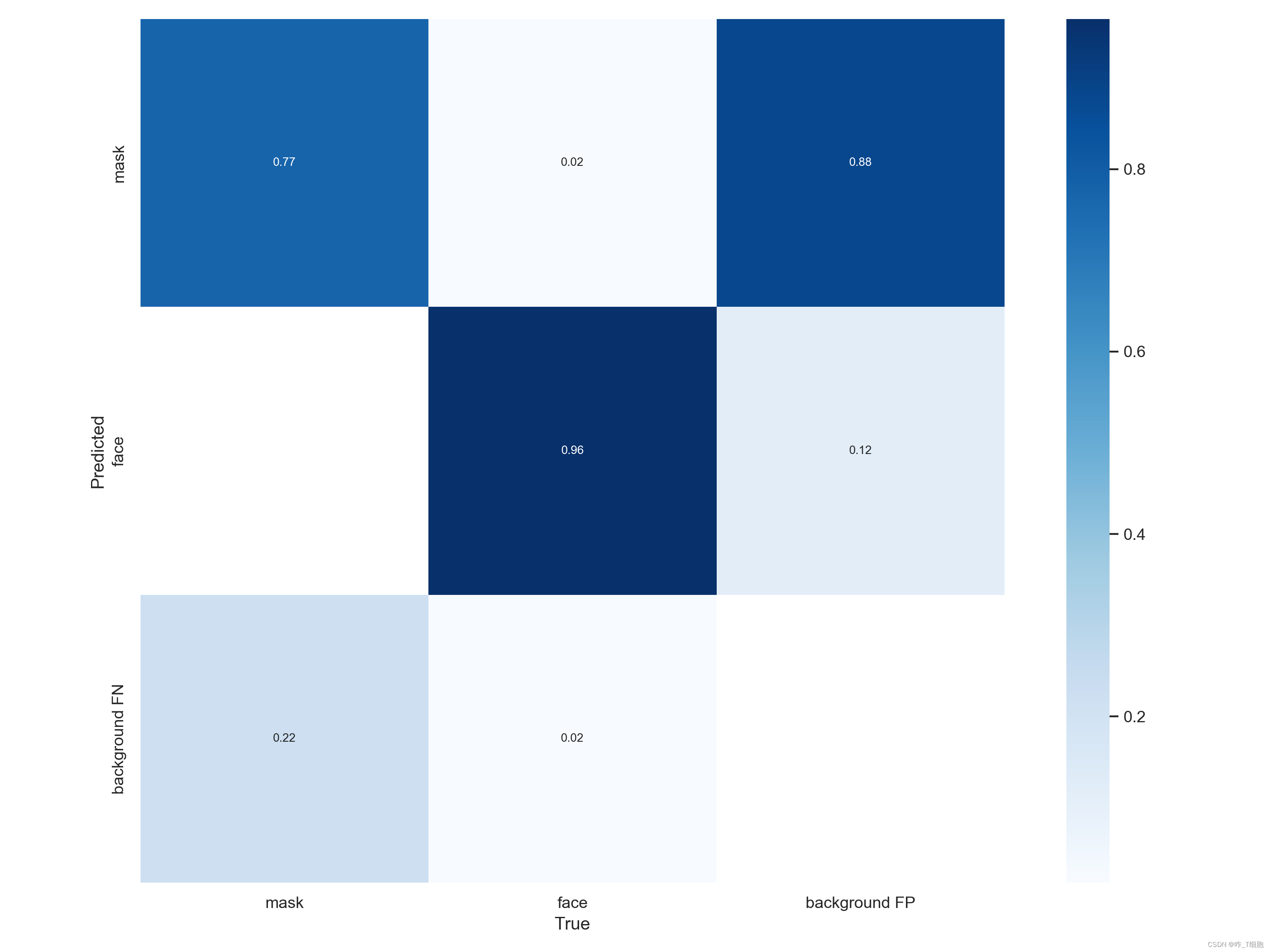

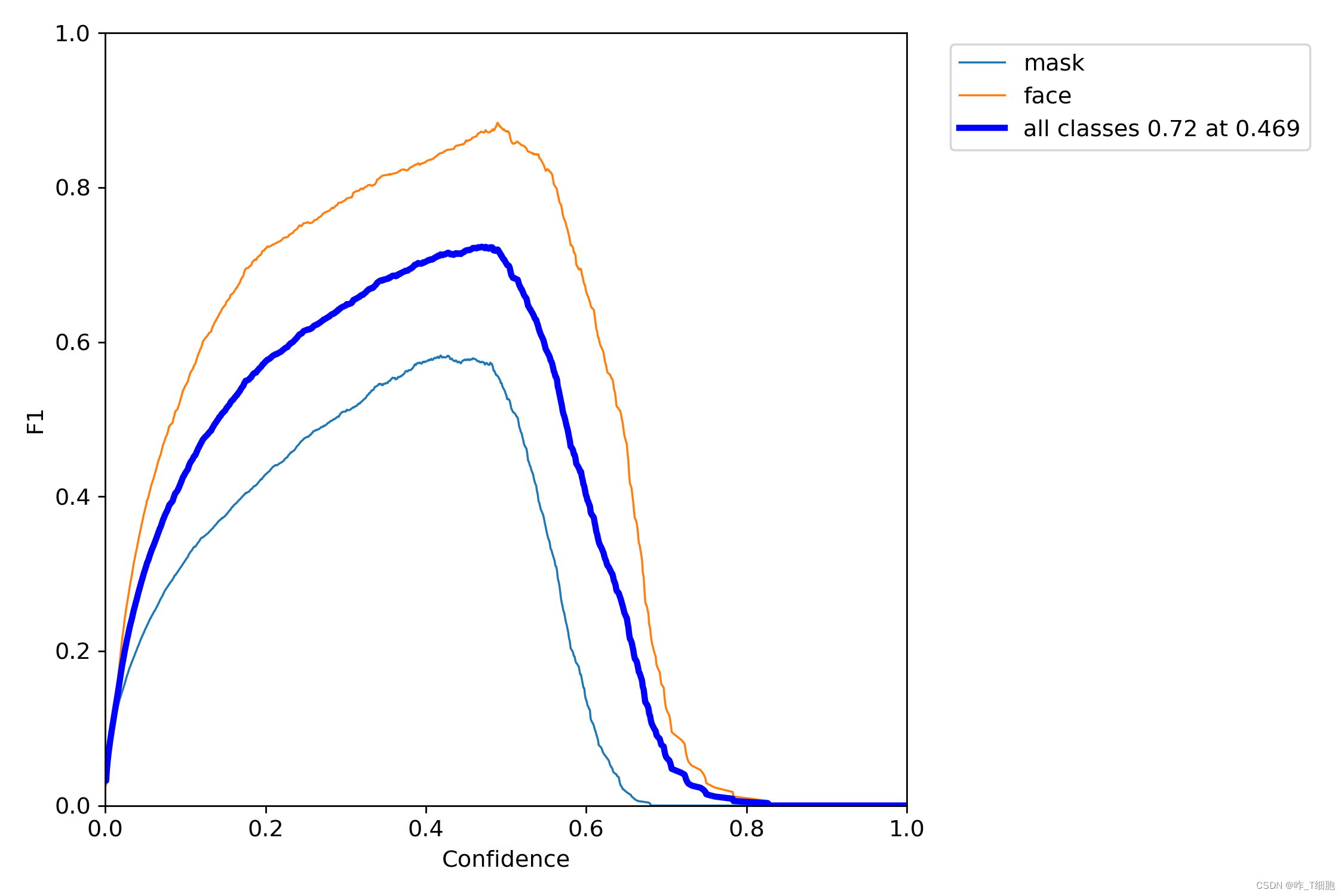

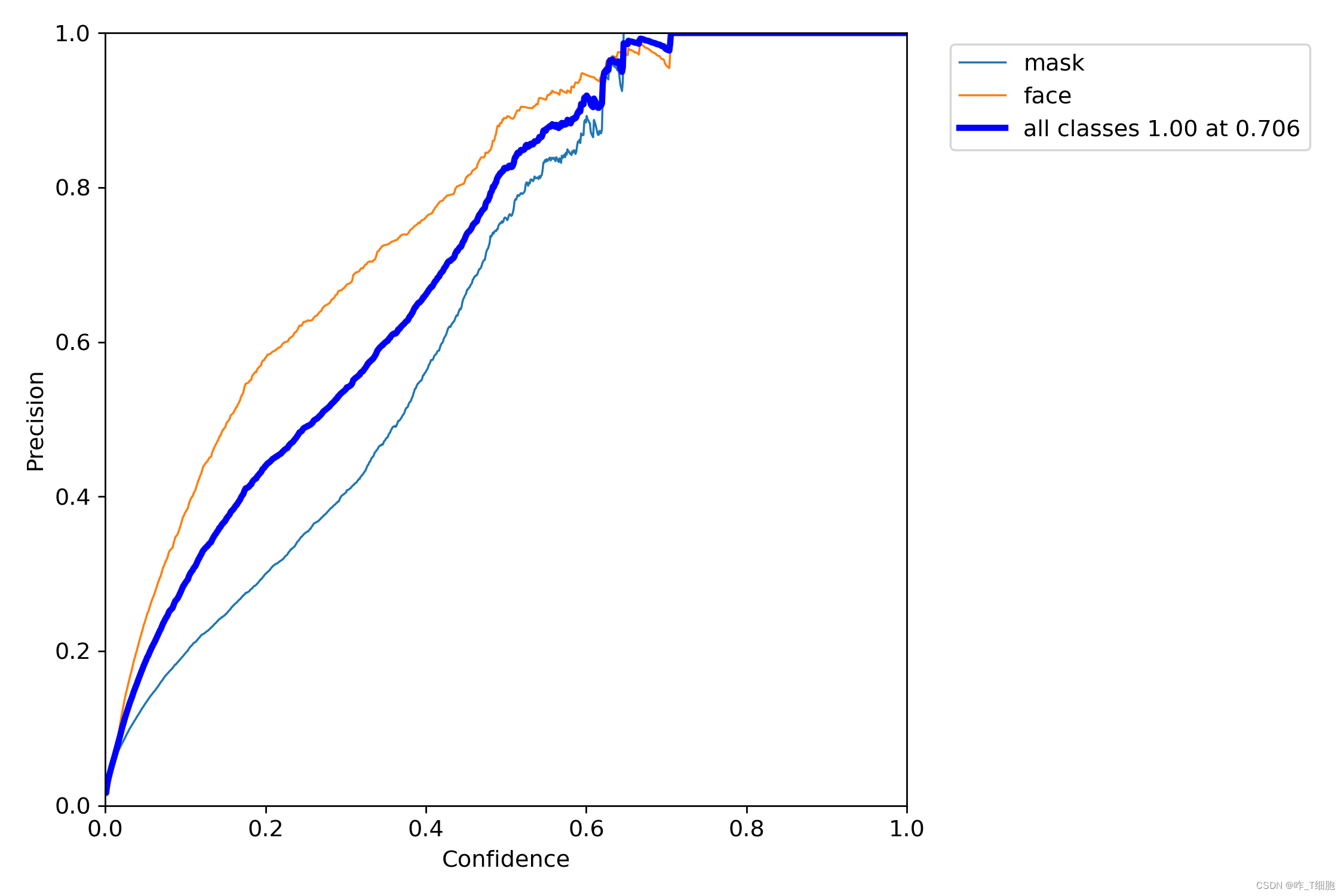

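As the figures show, after only 5 epochs (each image seen 5 times) the score for the mask class reaches 0.564 and the score for the face class reaches 0.904, which is remarkable for so little training!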
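Which metric the figures report is not stated here, but the scores YOLOv5 prints during validation (precision, recall and mAP) all rest on matching predicted boxes to labelled boxes by intersection over union (IoU). The sketch below shows that building block only; the boxes are made-up pixel coordinates and `iou` is an illustrative helper, not the library's implementation.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


truth = (100, 100, 200, 200)      # made-up labelled box
predicted = (150, 100, 250, 200)  # made-up prediction shifted 50 px to the right

score = iou(truth, predicted)
print(f"IoU = {score:.3f}, counted as a match at threshold 0.5: {score >= 0.5}")
```

A prediction that overlaps the label by only a third, as here, would not count as correct under the usual 0.5 threshold, which is one reason a class can look well detected by eye and still score modestly.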

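6. Summary

YOLOv5 really is a powerful tool and far more capable than the plain CNN I had tried, even though it is itself built from many intricately connected convolutional layers. This open-source project showed me once again how powerful machine learning is: you can make a computer recognize whatever you want it to, with high accuracy. For example: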

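- Fire detection. As soon as anything that looks like a flame appears in a forest or a stairwell, the computer can spot it and raise the alarm, which can reduce economic losses and even save lives.

- Security screening. At subway and airport checkpoints today, people watch the scanner images for suspicious objects. A YOLOv5 model trained to recognize dangerous items could save a lot of manpower, and might even be more accurate than people.

- Autonomous driving. YOLOv5 could detect lanes, pedestrians and vehicles to help control the car. Given enough data, this field is well worth exploring.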

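This project taught me that artificial intelligence = human labour + intelligence: the manual work comes first and the intelligence follows. Manually screening and annotating a dataset can take even longer than building the whole machine learning pipeline, so the value of a dataset goes without saying. The image CAPTCHAs we take for granted every day (pick out the traffic lights in the picture) are really using us as free labour to label images, haha. The future is wide open and the world of AI is still exciting, so keep going! If this article interests you, feel free to message me or leave a comment.

If you want to dig deeper, have a look at these articles:

Step by step: training your own object detection model with YOLOv5 (mask detection, video tutorial)

Step by step: training your own object detection model with YOLOv5

How computers learn to recognize objects instantly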

7. Model Code (Partial)

Training the model:

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license
"""
Train a YOLOv5 model on a custom dataset

Usage:
    $ python path/to/train.py --data coco128.yaml --weights yolov5s.pt --img 640
"""

import argparse
import math
import os
import random
import sys
import time
from copy import deepcopy
from datetime import datetime
from pathlib import Path

import numpy as np
import torch
import torch.distributed as dist
import torch.nn as nn
import yaml
from torch.cuda import amp
from torch.nn.parallel import DistributedDataParallel as DDP
from torch.optim import SGD, Adam, lr_scheduler
from tqdm import tqdm

FILE = Path(__file__).resolve()
ROOT = FILE.parents[0]  # YOLOv5 root directory
if str(ROOT) not in sys.path:
    sys.path.append(str(ROOT))  # add ROOT to PATH
ROOT = Path(os.path.relpath(ROOT, Path.cwd()))  # relative

import val  # for end-of-epoch mAP
from models.experimental import attempt_load
from models.yolo import Model
from utils.autoanchor import check_anchors
from utils.autobatch import check_train_batch_size
from utils.callbacks import Callbacks
from utils.datasets import create_dataloader
from utils.downloads import attempt_download
from utils.general import (LOGGER, NCOLS, check_dataset, check_file, check_git_status, check_img_size,
                           check_requirements, check_suffix, check_yaml, colorstr, get_latest_run, increment_path,
                           init_seeds, intersect_dicts, labels_to_class_weights, labels_to_image_weights, methods,
                           one_cycle, print_args, print_mutation, strip_optimizer)
from utils.loggers import Loggers
from utils.loggers.wandb.wandb_utils import check_wandb_resume
from utils.loss import ComputeLoss
from utils.metrics import fitness
from utils.plots import plot_evolve, plot_labels
from utils.torch_utils import EarlyStopping, ModelEMA, de_parallel, select_device, torch_distributed_zero_first

LOCAL_RANK = int(os.getenv('LOCAL_RANK', -1))  # https://pytorch.org/docs/stable/elastic/run.html
RANK = int(os.getenv('RANK', -1))
WORLD_SIZE = int(os.getenv('WORLD_SIZE', 1))

def train(hyp,  # path/to/hyp.yaml or hyp dictionary
          opt,
          device,
          callbacks
          ):
    save_dir, epochs, batch_size, weights, single_cls, evolve, data, cfg, resume, noval, nosave, workers, freeze, = \
        Path(opt.save_dir), opt.epochs, opt.batch_size, opt.weights, opt.single_cls, opt.evolve, opt.data, opt.cfg, \
        opt.resume, opt.noval, opt.nosave, opt.workers, opt.freeze

    # Directories
    w = save_dir / 'weights'  # weights dir
    (w.parent if evolve else w).mkdir(parents=True, exist_ok=True)  # make dir
    last, best = w / 'last.pt', w / 'best.pt'

    # Hyperparameters
    if isinstance(hyp, str):
        with open(hyp, errors='ignore') as f:
            hyp = yaml.safe_load(f)  # load hyps dict
    LOGGER.info(colorstr('hyperparameters: ') + ', '.join(f'{k}={v}' for k, v in hyp.items()))

    # Save run settings
    with open(save_dir / 'hyp.yaml', 'w') as f:
        yaml.safe_dump(hyp, f, sort_keys=False)
    with open(save_dir / 'opt.yaml', 'w') as f:
        yaml.safe_dump(vars(opt), f, sort_keys=False)
    data_dict = None

    # Loggers
    if RANK in [-1, 0]:
        loggers = Loggers(save_dir, weights, opt, hyp, LOGGER)  # loggers instance
        if loggers.wandb:
            data_dict = loggers.wandb.data_dict
            if resume:
                weights, epochs, hyp = opt.weights, opt.epochs, opt.hyp

        # Register actions
        for k in methods(loggers):
            callbacks.register_action(k, callback=getattr(loggers, k))

    # Config
    plots = not evolve  # create plots
    cuda = device.type != 'cpu'
    init_seeds(1 + RANK)
    with torch_distributed_zero_first(LOCAL_RANK):
        data_dict = data_dict or check_dataset(data)  # check if None
    train_path, val_path = data_dict['train'], data_dict['val']
    nc = 1 if single_cls else int(data_dict['nc'])  # number of classes
    names = ['item'] if single_cls and len(data_dict['names']) != 1 else data_dict['names']  # class names
    assert len(names) == nc, f'{len(names)} names found for nc={nc} dataset in {data}'  # check
    is_coco = isinstance(val_path, str) and val_path.endswith('coco/val2017.txt')  # COCO dataset

    # Model
    check_suffix(weights, '.pt')  # check weights
    pretrained = weights.endswith('.pt')
    if pretrained:
        with torch_distributed_zero_first(LOCAL_RANK):
            weights = attempt_download(weights)  # download if not found locally
        ckpt = torch.load(weights, map_location=device)  # load checkpoint
        model = Model(cfg or ckpt['model'].yaml, ch=3, nc=nc, anchors=hyp.get('anchors')).to(device)  # create
        exclude = ['anchor'] if (cfg or hyp.get('anchors')) and not resume else []  # exclude keys
        csd = ckpt['model'].float().state_dict()  # checkpoint state_dict as FP32
        csd = intersect_dicts(csd, model.state_dict(), exclude=exclude)  # intersect
        model.load_state_dict(csd, strict=False)  # load
        LOGGER.info(f'Transferred {len(csd)}/{len(model.state_dict())} items from {weights}')  # report
    else:
        model = Model(cfg, ch=3, nc=nc, anchors=hyp.get('anchors')).to(device)  # create

    # Freeze
    freeze = [f'model.{x}.' for x in range(freeze)]  # layers to freeze
    for k, v in model.named_parameters():
        v.requires_grad = True  # train all layers
        if any(x in k for x in freeze):
            LOGGER.info(f'freezing {k}')
            v.requires_grad = False

    # Image size
    gs = max(int(model.stride.max()), 32)  # grid size (max stride)
    imgsz = check_img_size(opt.imgsz, gs, floor=gs * 2)  # verify imgsz is gs-multiple

    # Batch size
    if RANK == -1 and batch_size == -1:  # single-GPU only, estimate best batch size
        batch_size = check_train_batch_size(model, imgsz)

    # Optimizer
    nbs = 64  # nominal batch size
    accumulate = max(round(nbs / batch_size), 1)  # accumulate loss before optimizing
    hyp['weight_decay'] *= batch_size * accumulate / nbs  # scale weight_decay
    LOGGER.info(f"Scaled weight_decay = {hyp['weight_decay']}")

    g0, g1, g2 = [], [], []  # optimizer parameter groups
    for v in model.modules():
        if hasattr(v, 'bias') and isinstance(v.bias, nn.Parameter):  # bias
            g2.append(v.bias)
        if isinstance(v, nn.BatchNorm2d):  # weight (no decay)
            g0.append(v.weight)
        elif hasattr(v, 'weight') and isinstance(v.weight, nn.Parameter):  # weight (with decay)
            g1.append(v.weight)

    if opt.adam:
        optimizer = Adam(g0, lr=hyp['lr0'], betas=(hyp['momentum'], 0.999))  # adjust beta1 to momentum
    else:
        optimizer = SGD(g0, lr=hyp['lr0'], momentum=hyp['momentum'], nesterov=True)

    optimizer.add_param_group({'params': g1, 'weight_decay': hyp['weight_decay']})  # add g1 with weight_decay
    optimizer.add_param_group({'params': g2})  # add g2 (biases)
    # g0 holds the BatchNorm weights (no decay), g1 the other weights (with decay)
    LOGGER.info(f"{colorstr('optimizer:')} {type(optimizer).__name__} with parameter groups "
                f"{len(g0)} weight (no decay), {len(g1)} weight, {len(g2)} bias")
    del g0, g1, g2

    # Scheduler
    if opt.linear_lr:
        lf = lambda x: (1 - x / (epochs - 1)) * (1.0 - hyp['lrf']) + hyp['lrf']  # linear
    else:
        lf = one_cycle(1, hyp['lrf'], epochs)  # cosine 1->hyp['lrf']
    scheduler = lr_scheduler.LambdaLR(optimizer, lr_lambda=lf)  # plot_lr_scheduler(optimizer, scheduler, epochs)

    # EMA
    ema = ModelEMA(model) if RANK in [-1, 0] else None

    # Resume
    start_epoch, best_fitness = 0, 0.0
    if pretrained:
        # Optimizer
        if ckpt['optimizer'] is not None:
            optimizer.load_state_dict(ckpt['optimizer'])
            best_fitness = ckpt['best_fitness']

        # EMA
        if ema and ckpt.get('ema'):
            ema.ema.load_state_dict(ckpt['ema'].float().state_dict())
            ema.updates = ckpt['updates']

        # Epochs
        start_epoch = ckpt['epoch'] + 1
        if resume:
            assert start_epoch > 0, f'{weights} training to {epochs} epochs is finished, nothing to resume.'
        if epochs < start_epoch:
            LOGGER.info(f"{weights} has been trained for {ckpt['epoch']} epochs. Fine-tuning for {epochs} more epochs.")
            epochs += ckpt['epoch']  # finetune additional epochs

        del ckpt, csd

    # DP mode
    if cuda and RANK == -1 and torch.cuda.device_count() > 1:
        LOGGER.warning('WARNING: DP not recommended, use torch.distributed.run for best DDP Multi-GPU results.\n'
                       'See Multi-GPU Tutorial at https://github.com/ultralytics/yolov5/issues/475 to get started.')
        model = torch.nn.DataParallel(model)

    # SyncBatchNorm
    if opt.sync_bn and cuda and RANK != -1:
        model = torch.nn.SyncBatchNorm.convert_sync_batchnorm(model).to(device)
        LOGGER.info('Using SyncBatchNorm()')

    # Trainloader
    train_loader, dataset = create_dataloader(train_path, imgsz, batch_size // WORLD_SIZE, gs, single_cls,
                                              hyp=hyp, augment=True, cache=opt.cache, rect=opt.rect, rank=LOCAL_RANK,
                                              workers=workers, image_weights=opt.image_weights, quad=opt.quad,
                                              prefix=colorstr('train: '), shuffle=True)
    mlc = int(np.concatenate(dataset.labels, 0)[:, 0].max())  # max label class
    nb = len(train_loader)  # number of batches
    assert mlc < nc, f'Label class {mlc} exceeds nc={nc} in {data}. Possible class labels are 0-{nc - 1}'

    # Process 0
    if RANK in [-1, 0]:
        val_loader = create_dataloader(val_path, imgsz, batch_size // WORLD_SIZE * 2, gs, single_cls,
                                       hyp=hyp, cache=None if noval else opt.cache, rect=True, rank=-1,
                                       workers=workers, pad=0.5,
                                       prefix=colorstr('val: '))[0]

        if not resume:
            labels = np.concatenate(dataset.labels, 0)
            # c = torch.tensor(labels[:, 0])  # classes
            # cf = torch.bincount(c.long(), minlength=nc) + 1.  # frequency
            # model._initialize_biases(cf.to(device))
            if plots:
                plot_labels(labels, names, save_dir)

            # Anchors
            if not opt.noautoanchor:
                check_anchors(dataset, model=model, thr=hyp['anchor_t'], imgsz=imgsz)
            model.half().float()  # pre-reduce anchor precision

        callbacks.run('on_pretrain_routine_end')

    # DDP mode
    if cuda and RANK != -1:
        model = DDP(model, device_ids=[LOCAL_RANK], output_device=LOCAL_RANK)

    # Model attributes
    nl = de_parallel(model).model[-1].nl  # number of detection layers (to scale hyps)
    hyp['box'] *= 3 / nl  # scale to layers
    hyp['cls'] *= nc / 80 * 3 / nl  # scale to classes and layers
    hyp['obj'] *= (imgsz / 640) ** 2 * 3 / nl  # scale to image size and layers
    hyp['label_smoothing'] = opt.label_smoothing
    model.nc = nc  # attach number of classes to model
    model.hyp = hyp  # attach hyperparameters to model
    model.class_weights = labels_to_class_weights(dataset.labels, nc).to(device) * nc  # attach class weights
    model.names = names

    # Start training
    t0 = time.time()
    nw = max(round(hyp['warmup_epochs'] * nb), 1000)  # number of warmup iterations, max(3 epochs, 1k iterations)
    # nw = min(nw, (epochs - start_epoch) / 2 * nb)  # limit warmup to < 1/2 of training
    last_opt_step = -1
    maps = np.zeros(nc)  # mAP per class
    results = (0, 0, 0, 0, 0, 0, 0)  # P, R, mAP@.5, mAP@.5-.95, val_loss(box, obj, cls)
    scheduler.last_epoch = start_epoch - 1  # do not move
    scaler = amp.GradScaler(enabled=cuda)
    stopper = EarlyStopping(patience=opt.patience)
    compute_loss = ComputeLoss(model)  # init loss class
    LOGGER.info(f'Image sizes {imgsz} train, {imgsz} val\n'
                f'Using {train_loader.num_workers * WORLD_SIZE} dataloader workers\n'
                f"Logging results to {colorstr('bold', save_dir)}\n"
                f'Starting training for {epochs} epochs...')

    for epoch in range(start_epoch, epochs):  # epoch ------------------------------------------------------------------
        model.train()

        # Update image weights (optional, single-GPU only)
        if opt.image_weights:
            cw = model.class_weights.cpu().numpy() * (1 - maps) ** 2 / nc  # class weights
            iw = labels_to_image_weights(dataset.labels, nc=nc, class_weights=cw)  # image weights
            dataset.indices = random.choices(range(dataset.n), weights=iw, k=dataset.n)  # rand weighted idx

        # Update mosaic border (optional)
        # b = int(random.uniform(0.25 * imgsz, 0.75 * imgsz + gs) // gs * gs)
        # dataset.mosaic_border = [b - imgsz, -b]  # height, width borders

        mloss = torch.zeros(3, device=device)  # mean losses
        if RANK != -1:
            train_loader.sampler.set_epoch(epoch)
        pbar = enumerate(train_loader)
        LOGGER.info(('\n' + '%10s' * 7) % ('Epoch', 'gpu_mem', 'box', 'obj', 'cls', 'labels', 'img_size'))
        if RANK in [-1, 0]:
            pbar = tqdm(pbar, total=nb, ncols=NCOLS, bar_format='{l_bar}{bar:10}{r_bar}{bar:-10b}')  # progress bar
        optimizer.zero_grad()
        for i, (imgs, targets, paths, _) in pbar:  # batch -------------------------------------------------------------
            ni = i + nb * epoch  # number integrated batches (since train start)
            imgs = imgs.to(device, non_blocking=True).float() / 255  # uint8 to float32, 0-255 to 0.0-1.0

            # Warmup
            if ni <= nw:
                xi = [0, nw]  # x interp
                # compute_loss.gr = np.interp(ni, xi, [0.0, 1.0])  # iou loss ratio (obj_loss = 1.0 or iou)
                accumulate = max(1, np.interp(ni, xi, [1, nbs / batch_size]).round())
                for j, x in enumerate(optimizer.param_groups):
                    # bias lr falls from 0.1 to lr0, all other lrs rise from 0.0 to lr0
                    x['lr'] = np.interp(ni, xi, [hyp['warmup_bias_lr'] if j == 2 else 0.0, x['initial_lr'] * lf(epoch)])
                    if 'momentum' in x:
                        x['momentum'] = np.interp(ni, xi, [hyp['warmup_momentum'], hyp['momentum']])

            # Multi-scale
            if opt.multi_scale:
                # integer bounds: random.randrange rejects float arguments on newer Python versions
                sz = random.randrange(int(imgsz * 0.5), int(imgsz * 1.5) + gs) // gs * gs  # size
                sf = sz / max(imgs.shape[2:])  # scale factor
                if sf != 1:
                    ns = [math.ceil(x * sf / gs) * gs for x in imgs.shape[2:]]  # new shape (stretched to gs-multiple)
                    imgs = nn.functional.interpolate(imgs, size=ns, mode='bilinear', align_corners=False)

            # Forward
            with amp.autocast(enabled=cuda):
                pred = model(imgs)  # forward
                loss, loss_items = compute_loss(pred, targets.to(device))  # loss scaled by batch_size
                if RANK != -1:
                    loss *= WORLD_SIZE  # gradient averaged between devices in DDP mode
                if opt.quad:
                    loss *= 4.

            # Backward
            scaler.scale(loss).backward()

            # Optimize
            if ni - last_opt_step >= accumulate:
                scaler.step(optimizer)  # optimizer.step
                scaler.update()
                optimizer.zero_grad()
                if ema:
                    ema.update(model)
                last_opt_step = ni

            # Log
            if RANK in [-1, 0]:
                mloss = (mloss * i + loss_items) / (i + 1)  # update mean losses
                mem = f'{torch.cuda.memory_reserved() / 1E9 if torch.cuda.is_available() else 0:.3g}G'  # (GB)
                pbar.set_description(('%10s' * 2 + '%10.4g' * 5) % (
                    f'{epoch}/{epochs - 1}', mem, *mloss, targets.shape[0], imgs.shape[-1]))
                callbacks.run('on_train_batch_end', ni, model, imgs, targets, paths, plots, opt.sync_bn)
            # end batch ------------------------------------------------------------------------------------------------

        # Scheduler
        lr = [x['lr'] for x in optimizer.param_groups]  # for loggers
        scheduler.step()

        if RANK in [-1, 0]:
            # mAP
            callbacks.run('on_train_epoch_end', epoch=epoch)
            ema.update_attr(model, include=['yaml', 'nc', 'hyp', 'names', 'stride', 'class_weights'])
            final_epoch = (epoch + 1 == epochs) or stopper.possible_stop
            if not noval or final_epoch:  # Calculate mAP
                results, maps, _ = val.run(data_dict,
                                           batch_size=batch_size // WORLD_SIZE * 2,
                                           imgsz=imgsz,
                                           model=ema.ema,
                                           single_cls=single_cls,
                                           dataloader=val_loader,
                                           save_dir=save_dir,
                                           plots=False,
                                           callbacks=callbacks,
                                           compute_loss=compute_loss)

            # Update best mAP
            fi = fitness(np.array(results).reshape(1, -1))  # weighted combination of [P, R, mAP@.5, mAP@.5-.95]
            if fi > best_fitness:
                best_fitness = fi
            log_vals = list(mloss) + list(results) + lr
            callbacks.run('on_fit_epoch_end', log_vals, epoch, best_fitness, fi)

            # Save model
            if (not nosave) or (final_epoch and not evolve):  # if save
                ckpt = {'epoch': epoch,
                        'best_fitness': best_fitness,
                        'model': deepcopy(de_parallel(model)).half(),
                        'ema': deepcopy(ema.ema).half(),
                        'updates': ema.updates,
                        'optimizer': optimizer.state_dict(),
                        'wandb_id': loggers.wandb.wandb_run.id if loggers.wandb else None,
                        'date': datetime.now().isoformat()}

                # Save last, best and delete
                torch.save(ckpt, last)
                if best_fitness == fi:
                    torch.save(ckpt, best)
                if (epoch > 0) and (opt.save_period > 0) and (epoch % opt.save_period == 0):
                    torch.save(ckpt, w / f'epoch{epoch}.pt')
                del ckpt
                callbacks.run('on_model_save', last, epoch, final_epoch, best_fitness, fi)

            # Stop Single-GPU
            if RANK == -1 and stopper(epoch=epoch, fitness=fi):
                break

            # Stop DDP TODO: known issues https://github.com/ultralytics/yolov5/pull/4576
            # stop = stopper(epoch=epoch, fitness=fi)
            # if RANK == 0:
            #    dist.broadcast_object_list([stop], 0)  # broadcast 'stop' to all ranks

        # Stop DDP
        # with torch_distributed_zero_first(RANK):
        # if stop:
        #    break  # must break all DDP ranks

        # end epoch ----------------------------------------------------------------------------------------------------
    # end training -----------------------------------------------------------------------------------------------------
    if RANK in [-1, 0]:
        LOGGER.info(f'\n{epoch - start_epoch + 1} epochs completed in {(time.time() - t0) / 3600:.3f} hours.')
        for f in last, best:
            if f.exists():
                strip_optimizer(f)  # strip optimizers
                if f is best:
                    LOGGER.info(f'\nValidating {f}...')
                    results, _, _ = val.run(data_dict,
                                            batch_size=batch_size // WORLD_SIZE * 2,
                                            imgsz=imgsz,
                                            model=attempt_load(f, device).half(),
                                            iou_thres=0.65 if is_coco else 0.60,  # best pycocotools results at 0.65
                                            single_cls=single_cls,
                                            dataloader=val_loader,
                                            save_dir=save_dir,
                                            save_json=is_coco,
                                            verbose=True,
                                            plots=True,
                                            callbacks=callbacks,
                                            compute_loss=compute_loss)  # val best model with plots
                    if is_coco:
                        callbacks.run('on_fit_epoch_end', list(mloss) + list(results) + lr, epoch, best_fitness, fi)

        callbacks.run('on_train_end', last, best, plots, epoch, results)
        LOGGER.info(f"Results saved to {colorstr('bold', save_dir)}")

    torch.cuda.empty_cache()
    return results

# Note to self: try out all of these models tomorrow, train them one by one, then test on a public dataset.

def parse_opt(known=False):
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', type=str, default=ROOT / 'pretrained/yolov5s.pt', help='initial weights path')
    parser.add_argument('--cfg', type=str, default=ROOT / 'models/yolov5s.yaml', help='model.yaml path')
    parser.add_argument('--data', type=str, default=ROOT / 'data/data.yaml', help='dataset.yaml path')
    parser.add_argument('--hyp', type=str, default=ROOT / 'data/hyps/hyp.scratch.yaml', help='hyperparameters path')
    parser.add_argument('--epochs', type=int, default=300)
    parser.add_argument('--batch-size', type=int, default=4, help='total batch size for all GPUs, -1 for autobatch')
    parser.add_argument('--imgsz', '--img', '--img-size', type=int, default=640, help='train, val image size (pixels)')
    parser.add_argument('--rect', action='store_true', help='rectangular training')
    parser.add_argument('--resume', nargs='?', const=True, default=False, help='resume most recent training')
    parser.add_argument('--nosave', action='store_true', help='only save final checkpoint')
    parser.add_argument('--noval', action='store_true', help='only validate final epoch')
    parser.add_argument('--noautoanchor', action='store_true', help='disable autoanchor check')
    parser.add_argument('--evolve', type=int, nargs='?', const=300, help='evolve hyperparameters for x generations')
    parser.add_argument('--bucket', type=str, default='', help='gsutil bucket')
    parser.add_argument('--cache', type=str, nargs='?', const='ram', help='--cache images in "ram" (default) or "disk"')
    parser.add_argument('--image-weights', action='store_true', help='use weighted image selection for training')
    parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    # parser.add_argument('--multi-scale', action='store_true', help='vary img-size +/- 50%%')
    # Multi-scale training is switched on by default in this copy of the script
    parser.add_argument('--multi-scale', default=True, help='vary img-size +/- 50%%')
    parser.add_argument('--single-cls', action='store_true', help='train multi-class data as single-class')
    parser.add_argument('--adam', action='store_true', help='use torch.optim.Adam() optimizer')
    parser.add_argument('--sync-bn', action='store_true', help='use SyncBatchNorm, only available in DDP mode')
    parser.add_argument('--workers', type=int, default=0, help='max dataloader workers (per RANK in DDP mode)')
    parser.add_argument('--project', default=ROOT / 'runs/train', help='save to project/name')
    parser.add_argument('--name', default='exp', help='save to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    parser.add_argument('--quad', action='store_true', help='quad dataloader')
    parser.add_argument('--linear-lr', action='store_true', help='linear LR')
    parser.add_argument('--label-smoothing', type=float, default=0.0, help='Label smoothing epsilon')
    parser.add_argument('--patience', type=int, default=100, help='EarlyStopping patience (epochs without improvement)')
    parser.add_argument('--freeze', type=int, default=0, help='Number of layers to freeze. backbone=10, all=24')
    parser.add_argument('--save-period', type=int, default=-1, help='Save checkpoint every x epochs (disabled if < 1)')
    parser.add_argument('--local_rank', type=int, default=-1, help='DDP parameter, do not modify')

    # Weights & Biases arguments
    parser.add_argument('--entity', default=None, help='W&B: Entity')
    parser.add_argument('--upload_dataset', action='store_true', help='W&B: Upload dataset as artifact table')
    parser.add_argument('--bbox_interval', type=int, default=-1, help='W&B: Set bounding-box image logging interval')
    parser.add_argument('--artifact_alias', type=str, default='latest', help='W&B: Version of dataset artifact to use')

    opt = parser.parse_known_args()[0] if known else parser.parse_args()
    return opt

def main(opt, callbacks=Callbacks()):
    # Checks
    if RANK in [-1, 0]:
        print_args(FILE.stem, opt)
        check_git_status()
        check_requirements(exclude=['thop'])

    # Resume
    if opt.resume and not check_wandb_resume(opt) and not opt.evolve:  # resume an interrupted run
        ckpt = opt.resume if isinstance(opt.resume, str) else get_latest_run()  # specified or most recent path
        assert os.path.isfile(ckpt), 'ERROR: --resume checkpoint does not exist'
        with open(Path(ckpt).parent.parent / 'opt.yaml', errors='ignore') as f:
            opt = argparse.Namespace(**yaml.safe_load(f))  # replace
        opt.cfg, opt.weights, opt.resume = '', ckpt, True  # reinstate
        LOGGER.info(f'Resuming training from {ckpt}')
    else:
        opt.data, opt.cfg, opt.hyp, opt.weights, opt.project = \
            check_file(opt.data), check_yaml(opt.cfg), check_yaml(opt.hyp), str(opt.weights), str(opt.project)  # checks
        assert len(opt.cfg) or len(opt.weights), 'either --cfg or --weights must be specified'
        if opt.evolve:
            opt.project = str(ROOT / 'runs/evolve')
            opt.exist_ok, opt.resume = opt.resume, False  # pass resume to exist_ok and disable resume
        opt.save_dir = str(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok))

    # DDP mode
    device = select_device(opt.device, batch_size=opt.batch_size)
    if LOCAL_RANK != -1:
        assert torch.cuda.device_count() > LOCAL_RANK, 'insufficient CUDA devices for DDP command'
        assert opt.batch_size % WORLD_SIZE == 0, '--batch-size must be multiple of CUDA device count'
        assert not opt.image_weights, '--image-weights argument is not compatible with DDP training'
        assert not opt.evolve, '--evolve argument is not compatible with DDP training'
        torch.cuda.set_device(LOCAL_RANK)
        device = torch.device('cuda', LOCAL_RANK)
        dist.init_process_group(backend="nccl" if dist.is_nccl_available() else "gloo")

    # Train
    if not opt.evolve:
        train(opt.hyp, opt, device, callbacks)
        if WORLD_SIZE > 1 and RANK == 0:
            LOGGER.info('Destroying process group... ')
            dist.destroy_process_group()

    # Evolve hyperparameters (optional)
    else:
        # Hyperparameter evolution metadata (mutation scale 0-1, lower_limit, upper_limit)
        meta = {'lr0': (1, 1e-5, 1e-1),  # initial learning rate (SGD=1E-2, Adam=1E-3)
                'lrf': (1, 0.01, 1.0),  # final OneCycleLR learning rate (lr0 * lrf)
                'momentum': (0.3, 0.6, 0.98),  # SGD momentum/Adam beta1
                'weight_decay': (1, 0.0, 0.001),  # optimizer weight decay
                'warmup_epochs': (1, 0.0, 5.0),  # warmup epochs (fractions ok)
                'warmup_momentum': (1, 0.0, 0.95),  # warmup initial momentum
                'warmup_bias_lr': (1, 0.0, 0.2),  # warmup initial bias lr
                'box': (1, 0.02, 0.2),  # box loss gain
                'cls': (1, 0.2, 4.0),  # cls loss gain
                'cls_pw': (1, 0.5, 2.0),  # cls BCELoss positive_weight
                'obj': (1, 0.2, 4.0),  # obj loss gain (scale with pixels)
                'obj_pw': (1, 0.5, 2.0),  # obj BCELoss positive_weight
                'iou_t': (0, 0.1, 0.7),  # IoU training threshold
                'anchor_t': (1, 2.0, 8.0),  # anchor-multiple threshold
                'anchors': (2, 2.0, 10.0),  # anchors per output grid (0 to ignore)
                'fl_gamma': (0, 0.0, 2.0),  # focal loss gamma (efficientDet default gamma=1.5)
                'hsv_h': (1, 0.0, 0.1),  # image HSV-Hue augmentation (fraction)
                'hsv_s': (1, 0.0, 0.9),  # image HSV-Saturation augmentation (fraction)
                'hsv_v': (1, 0.0, 0.9),  # image HSV-Value augmentation (fraction)
                'degrees': (1, 0.0, 45.0),  # image rotation (+/- deg)
                'translate': (1, 0.0, 0.9),  # image translation (+/- fraction)
                'scale': (1, 0.0, 0.9),  # image scale (+/- gain)
                'shear': (1, 0.0, 10.0),  # image shear (+/- deg)
                'perspective': (0, 0.0, 0.001),  # image perspective (+/- fraction), range 0-0.001
                'flipud': (1, 0.0, 1.0),  # image flip up-down (probability)
                'fliplr': (0, 0.0, 1.0),  # image flip left-right (probability)
                'mosaic': (1, 0.0, 1.0),  # image mosaic (probability)
                'mixup': (1, 0.0, 1.0),  # image mixup (probability)
                'copy_paste': (1, 0.0, 1.0)}  # segment copy-paste (probability)

        with open(opt.hyp, errors='ignore') as f:
            hyp = yaml.safe_load(f)  # load hyps dict
            if 'anchors' not in hyp:  # anchors commented in hyp.yaml
                hyp['anchors'] = 3
        opt.noval, opt.nosave, save_dir = True, True, Path(opt.save_dir)  # only val/save final epoch
        # ei = [isinstance(x, (int, float)) for x in hyp.values()]  # evolvable indices
        evolve_yaml, evolve_csv = save_dir / 'hyp_evolve.yaml', save_dir / 'evolve.csv'
        if opt.bucket:
            os.system(f'gsutil cp gs://{opt.bucket}/evolve.csv {save_dir}')  # download evolve.csv if exists

        for _ in range(opt.evolve):  # generations to evolve
            if evolve_csv.exists():  # if evolve.csv exists: select best hyps and mutate
                # Select parent(s)
                parent = 'single'  # parent selection method: 'single' or 'weighted'
                x = np.loadtxt(evolve_csv, ndmin=2, delimiter=',', skiprows=1)
                n = min(5, len(x))  # number of previous results to consider
                x = x[np.argsort(-fitness(x))][:n]  # top n mutations
                w = fitness(x) - fitness(x).min() + 1E-6  # weights (sum > 0)
                if parent == 'single' or len(x) == 1:
                    # x = x[random.randint(0, n - 1)]  # random selection
                    x = x[random.choices(range(n), weights=w)[0]]  # weighted selection
                elif parent == 'weighted':
                    x = (x * w.reshape(n, 1)).sum(0) / w.sum()  # weighted combination

                # Mutate
                mp, s = 0.8, 0.2  # mutation probability, sigma
                npr = np.random
                npr.seed(int(time.time()))
                g = np.array([meta[k][0] for k in hyp.keys()])  # gains 0-1
                ng = len(meta)
                v = np.ones(ng)
                while all(v == 1):  # mutate until a change occurs (prevent duplicates)
                    v = (g * (npr.random(ng) < mp) * npr.randn(ng) * npr.random() * s + 1).clip(0.3, 3.0)
                for i, k in enumerate(hyp.keys()):  # plt.hist(v.ravel(), 300)
                    hyp[k] = float(x[i + 7] * v[i])  # mutate

            # Constrain to limits
            for k, v in meta.items():
                hyp[k] = max(hyp[k], v[1])  # lower limit
                hyp[k] = min(hyp[k], v[2])  # upper limit
                hyp[k] = round(hyp[k], 5)  # significant digits

            # Train mutation
            results = train(hyp.copy(), opt, device, callbacks)

            # Write mutation results
            print_mutation(results, hyp.copy(), save_dir, opt.bucket)

        # Plot results
        plot_evolve(evolve_csv)
        LOGGER.info(f'Hyperparameter evolution finished\n'
                    f"Results saved to {colorstr('bold', save_dir)}\n"
                    f'Use best hyperparameters example: $ python train.py --hyp {evolve_yaml}')

def run(**kwargs):
    # Usage: import train; train.run(data='coco128.yaml', imgsz=320, weights='yolov5m.pt')
    opt = parse_opt(True)
    for k, v in kwargs.items():
        setattr(opt, k, v)
    main(opt)


# Example commands used for the mask dataset:
# python train.py --data mask_data.yaml --cfg mask_yolov5s.yaml --weights pretrained/yolov5s.pt --epochs 100 --batch-size 4 --device cpu
# python train.py --data mask_data.yaml --cfg mask_yolov5l.yaml --weights pretrained/yolov5l.pt --epochs 100 --batch-size 4
# python train.py --data mask_data.yaml --cfg mask_yolov5m.yaml --weights pretrained/yolov5m.pt --epochs 100 --batch-size 4

if __name__ == "__main__":
    opt = parse_opt()
    main(opt)
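Two pieces of arithmetic in the script above are easy to miss: gradient accumulation towards a nominal batch size of 64, and the cosine learning-rate schedule. The sketch below replays both with plain Python. The batch size of 4 and the 5 epochs match the article; the `weight_decay` and `lrf` values are example numbers chosen for illustration, not read from the author's hyperparameter file, and `one_cycle` is re-implemented here on the assumption that it matches the helper imported from `utils.general`.

```python
import math


def one_cycle(y1=0.0, y2=1.0, steps=100):
    """Cosine ramp from y1 (at x = 0) to y2 (at x = steps)."""
    return lambda x: ((1 - math.cos(x * math.pi / steps)) / 2) * (y2 - y1) + y1


# Gradient accumulation: small batches are accumulated until about nbs images are seen.
nbs = 64            # nominal batch size used by the script
batch_size = 4      # the --batch-size default in the listing
accumulate = max(round(nbs / batch_size), 1)

weight_decay = 0.0005  # example value (assumption)
scaled_weight_decay = weight_decay * batch_size * accumulate / nbs

# Cosine schedule: the multiplier applied to the initial learning rate per epoch.
epochs = 5
lrf = 0.1              # example final-rate fraction (assumption)
lf = one_cycle(1, lrf, epochs)
multipliers = [lf(e) for e in range(epochs)]

print(f"optimizer steps every {accumulate} batches")
print(f"scaled weight decay: {scaled_weight_decay}")
print("lr multipliers:", [round(m, 3) for m in multipliers])
```

With a batch size of 4 the optimizer only steps once every 16 batches after warmup, so the effective batch is 64 images and the weight decay is left unchanged.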
