Preface

The Radxa Orion O6 has an NPU (Neural Processing Unit) rated at up to 28.8 TOPS, with acceleration for INT4 / INT8 / INT16 / FP16 / BF16 and TF32 data types. This post deploys FaceParsingBiSeNet on it using the official toolchain.

1. FaceParsingBiSeNet ONNX inference

- First, download the open-source model face_parsing_512x512.onnx from Baidu Netdisk (extraction code: 8gin).

- Then write the ONNX inference script as follows:

    import os
    import time
    import argparse

    import cv2
    import numpy as np
    import onnxruntime
    from PIL import Image

    def letterbox(image, new_shape=(640, 640), color=(114, 114, 114), auto=False, scaleFill=False, scaleup=True):
        """
        Letterbox an image: resize it while keeping the aspect ratio, then pad it to the target size.

        :param image: input image as a numpy array (height, width, channels)
        :param new_shape: target size as (height, width)
        :param color: padding color, default (114, 114, 114)
        :param auto: pad only to the minimum rectangle (remainder modulo 64), default False
        :param scaleFill: stretch without keeping the aspect ratio, default False (not used in this implementation)
        :param scaleup: allow upscaling; if False the image is only ever shrunk, default True
        :return: the processed image, the scale ratio, and the per-side padding (dw, dh)
        """
        shape = image.shape[:2]  # current image height and width
        r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])
        if not scaleup:  # only shrink, never enlarge (for better results)
            r = min(r, 1.0)
        new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))  # (width, height) after resizing
        dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # total padding
        if auto:  # minimum rectangle
            dw, dh = np.mod(dw, 64), np.mod(dh, 64)  # keep only the remainder modulo 64
        dw /= 2  # split the padding between the two sides
        dh /= 2
        if shape[::-1] != new_unpad:  # resize the image
            image = cv2.resize(image, new_unpad, interpolation=cv2.INTER_LINEAR)
        top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
        left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
        image = cv2.copyMakeBorder(image, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add the border
        scale_ratio = r
        pad_size = (dw, dh)
        return image, scale_ratio, pad_size

    def preprocess_image(image, shape, bgr2rgb=True):
        """Preprocess an image: letterbox, optional BGR -> RGB, HWC -> CHW, float32."""
        img, scale_ratio, pad_size = letterbox(image, new_shape=shape)
        if bgr2rgb:
            img = img[:, :, ::-1]
        img = img.transpose(2, 0, 1)  # HWC -> CHW
        img = np.ascontiguousarray(img, dtype=np.float32)
        return img, scale_ratio, pad_size

    def generate_mask(img, seg, outpath, scale=0.4):
        """Visualize the segmentation result by blending class colors over the image."""
        color = [
            [255, 0, 0], [255, 85, 0], [255, 170, 0], [255, 0, 85], [255, 0, 170],
            [0, 255, 0], [85, 255, 0], [170, 255, 0], [0, 255, 85], [0, 255, 170],
            [0, 0, 255], [85, 0, 255], [170, 0, 255], [0, 85, 255], [0, 170, 255],
            [255, 255, 0], [255, 255, 85], [255, 255, 170], [255, 0, 255], [255, 85, 255],
        ]
        img = img.transpose(1, 2, 0)  # CHW -> HWC
        minidx = int(seg.min())
        maxidx = int(seg.max())
        color_img = np.zeros_like(img)
        for i in range(minidx, maxidx + 1):  # + 1 so the highest class index is drawn too
            if i <= 0:  # index 0 is left uncolored
                continue
            color_img[seg == i] = color[i]
        showimg = scale * img + (1 - scale) * color_img
        Image.fromarray(showimg.astype(np.uint8)).save(outpath)

    if __name__ == '__main__':
        # define command-line arguments
        parser = argparse.ArgumentParser()
        parser.add_argument('--image-path', type=str, help='path of the input image (a file)')
        parser.add_argument('--output-path', type=str, help='path for saving the segmentation visualization (a file)')
        parser.add_argument('--model-path', type=str, help='path of the ONNX model')
        args = parser.parse_args()

        # check input arguments
        if not os.path.exists(args.image_path):
            print('Cannot find the input image: {0}'.format(args.image_path))
            exit()
        if not os.path.exists(args.model_path):
            print('Cannot find the ONNX model: {0}'.format(args.model_path))
            exit()

        ref_size = [512, 512]

        # read image
        im = cv2.imread(args.image_path)
        img, scale_ratio, pad_size = preprocess_image(im, ref_size)
        showimg = img.copy()[::-1, ...]  # copy for the overlay, with the channel axis reversed

        mean = np.asarray([0.485, 0.456, 0.406])
        scale = np.asarray([0.229, 0.224, 0.225])
        mean = mean.reshape((3, 1, 1))
        scale = scale.reshape((3, 1, 1))
        # Kept as in the original script: the std values are multiplied in here,
        # whereas torchvision-style normalization would divide by them.
        img = (img / 255 - mean) * scale
        im = img[None].astype(np.float32)
        # save the preprocessed input as calibration data for NPU quantization
        np.save("models/ComputeVision/Semantic_Segmentation/onnx_faceparse/datasets/calibration_data.npy", im)

        # initialize the session and get a prediction
        session = onnxruntime.InferenceSession(args.model_path, None)
        input_name = session.get_inputs()[0].name
        output_name = session.get_outputs()[0].name
        output = session.run([output_name], {input_name: im})  # warm-up run, not timed

        start_time = time.perf_counter()
        for _ in range(5):
            output = session.run([output_name], {input_name: im})
        end_time = time.perf_counter()
        use_time = (end_time - start_time) * 1000  # total time of the 5 runs, in ms
        fps = 1000 / use_time  # derived from the 5-run total, so 5x lower than the per-run rate
        print(f"推理耗时:{use_time:.2f} ms, fps:{fps:.2f}")  # "inference time: ... ms, fps: ..."

        # per-pixel class index: argmax over the class axis
        seg = np.argmax(output[0], axis=1).squeeze()
        generate_mask(showimg, seg, args.output_path)

- Run inference

python models/ComputeVision/Semantic_Segmentation/onnx_faceparse/inference_onnx.py --image-path models/ComputeVision/Semantic_Segmentation/onnx_faceparse/test_data/test_lite_face_parsing.png --output-path output/face_parsering.jpg --model-path asserts/models/bisenet/face_parsing_512x512.onnx

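Printed output (the script reports the total time of its 5 timed runs, so a single inference takes roughly 309 ms):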

推理耗时:1544.36 ms, fps:0.65

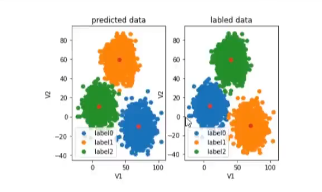

Visualization result
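The timing loop in the script sums five runs before printing. As a minimal sketch of how a per-inference figure can be derived from such a total, the helper below separates warm-up from timed calls and converts the elapsed time to milliseconds per call and FPS. The names `benchmark` and `per_run_stats` are hypothetical and not part of the original script.

```python
import time


def per_run_stats(total_seconds, runs):
    """Convert a total wall-clock time over `runs` calls to (ms per call, FPS)."""
    ms_per_run = total_seconds * 1000.0 / runs
    fps = runs / total_seconds
    return ms_per_run, fps


def benchmark(run_once, runs=5, warmup=1):
    """Time a zero-argument callable and return (ms per call, FPS)."""
    for _ in range(warmup):  # warm-up calls are not timed
        run_once()
    start = time.perf_counter()
    for _ in range(runs):
        run_once()
    elapsed = time.perf_counter() - start
    return per_run_stats(elapsed, runs)


if __name__ == "__main__":
    # The log above reports 1544.36 ms in total for 5 runs.
    ms, fps = per_run_stats(1.54436, 5)
    print(f"{ms:.2f} ms per inference, {fps:.2f} fps")
```

With an ONNX Runtime session, `run_once` could be something like `lambda: session.run([output_name], {input_name: im})`.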

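- Code notes

- `np.save("models/ComputeVision/Semantic_Segmentation/onnx_faceparse/datasets/calibration_data.npy", im)`

This saves the preprocessed input so that it can be used as calibration data for NPU post-training quantization (PTQ).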
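The script saves a single preprocessed image, with shape (1, 3, 512, 512). Calibration usually benefits from more than one sample, so here is a hedged sketch of stacking several inputs into one array. It assumes the toolchain's NumpyDataset treats the first axis as the sample axis, which the source does not confirm. The helper names, the random stand-in images, and the output filename are all hypothetical, and the arithmetic deliberately mirrors the script rather than a standard normalization.

```python
import numpy as np

MEAN = np.asarray([0.485, 0.456, 0.406], dtype=np.float32).reshape(3, 1, 1)
STD = np.asarray([0.229, 0.224, 0.225], dtype=np.float32).reshape(3, 1, 1)


def to_model_input(chw_rgb):
    """Apply the same arithmetic as the script to one CHW image with values in [0, 255]."""
    return ((chw_rgb / 255.0 - MEAN) * STD).astype(np.float32)


def build_calibration_array(images):
    """Stack preprocessed CHW images into one (N, 3, H, W) float32 array."""
    return np.stack([to_model_input(img) for img in images], axis=0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Random stand-ins for letterboxed 512x512 RGB images (hypothetical data).
    fake_images = [rng.uniform(0, 255, size=(3, 512, 512)).astype(np.float32) for _ in range(4)]
    calib = build_calibration_array(fake_images)
    print(calib.shape, calib.dtype)
    np.save("calibration_data.npy", calib)  # hypothetical output path
```

In practice the stand-in images would be replaced by real face images passed through `preprocess_image`.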

2. FaceParsingBiSeNet NPU inference

- Create the cfg configuration file with the following content.

    [Common]
    mode = build

    [Parser]
    model_type = onnx
    model_name = face_parsing_512x512
    detection_postprocess =
    model_domain = image_segmentation
    input_model = /home/5_radxa/ai_model_hub/asserts/models/bisenet/face_parsing_512x512.onnx
    input = input
    input_shape = [1, 3, 512, 512]
    output = out
    output_dir = ./

    [Optimizer]
    output_dir = ./
    calibration_data = ./datasets/calibration_data.npy
    calibration_batch_size = 1
    metric_batch_size = 1
    dataset = NumpyDataset
    quantize_method_for_weight = per_channel_symmetric_restricted_range
    quantize_method_for_activation = per_tensor_asymmetric
    save_statistic_info = True

    [GBuilder]
    outputs = bisenet.cix
    target = X2_1204MP3
    profile = True
    tiling = fps

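Note: `input` and `output` in [Parser] are the names of the model's input and output tensors; you can look them up by opening the ONNX model in Netron. If the names do not match the model, the build fails with an error like this: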

[I] Build with version 6.1.3119

[I] Parsing model....

[I] [Parser]: Begin to parse onnx model face_parsing_512x512...

2025-04-18 11:13:53.104146: W tensorflow/stream_executor/platform/default/dso_loader.cc:64] Could not load dynamic library 'libcudart.so.11.0'; dlerror: libcudart.so.11.0: cannot open shared object file: No such file or directory; LD_LIBRARY_PATH: /root/miniconda3/envs/radxa/lib/python3.8/site-packages/cv2/../../lib64:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib/:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib

2025-04-18 11:13:53.104217: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

2025-04-18 11:13:54.266791: W tensorflow/stream_executor/platform/default/dso_loader.cc:64] Could not load dynamic library 'libcuda.so.1'; dlerror: libcuda.so.1: cannot open shared object file: No such file or directory; LD_LIBRARY_PATH: /root/miniconda3/envs/radxa/lib/python3.8/site-packages/cv2/../../lib64:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib/:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib

2025-04-18 11:13:54.266893: W tensorflow/stream_executor/cuda/cuda_driver.cc:269] failed call to cuInit: UNKNOWN ERROR (303)

2025-04-18 11:13:54.266959: I tensorflow/stream_executor/cuda/cuda_diagnostics.cc:156] kernel driver does not appear to be running on this host (chenjun): /proc/driver/nvidia/version does not exist

[E] [Parser]: Graph does not contain such a node/tensor name:output

[E] [Parser]: Parser Failed!
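Besides Netron, the tensor names can also be read programmatically. The sketch below uses the same `get_inputs()` / `get_outputs()` calls as the ONNX inference script; `describe_io` is a hypothetical helper name, and the model path is taken from the command line.

```python
def describe_io(session):
    """Return the input and output tensor names and shapes of an ONNX Runtime session."""
    inputs = [(t.name, t.shape) for t in session.get_inputs()]
    outputs = [(t.name, t.shape) for t in session.get_outputs()]
    return inputs, outputs


if __name__ == "__main__":
    import sys
    import onnxruntime  # imported here so describe_io can be reused without it

    session = onnxruntime.InferenceSession(sys.argv[1], providers=["CPUExecutionProvider"])
    inputs, outputs = describe_io(session)
    for name, shape in inputs:
        print(f"input : {name} {shape}")
    for name, shape in outputs:
        print(f"output: {name} {shape}")
```

The printed names are the values to put into the `input` and `output` fields of the [Parser] section.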

- Compile the model

Compile the model on an x86 host:

cd models/ComputeVision/Semantic_Segmentation/onnx_faceparse

cixbuild ./cfg/onnx_bisenet.cfg

Error: ImportError: libaipu_simulator_x2.so: cannot open shared object file: No such file or directory

Solution

- Locate the library with `find / -name libaipu_simulator_x2.so`

- Add the directory that contains it to the environment with `export LD_LIBRARY_PATH=/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib:$LD_LIBRARY_PATH`
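`LD_LIBRARY_PATH` takes directories rather than file paths, so the entry to add is the folder that holds the `.so` file. As a small illustrative sketch, the script below walks a directory tree, collects the folders containing the library, and prints the matching `export` line. The function names and the choice of `sys.prefix` as the default search root are assumptions of this example.

```python
import os
from pathlib import Path


def find_library_dirs(root, filename="libaipu_simulator_x2.so"):
    """Return the sorted directories under `root` that contain `filename`."""
    dirs = set()
    for dirpath, _dirnames, filenames in os.walk(root):
        if filename in filenames:
            dirs.add(str(Path(dirpath)))
    return sorted(dirs)


def export_line(directory):
    """Build the shell line that prepends `directory` to LD_LIBRARY_PATH."""
    return f"export LD_LIBRARY_PATH={directory}:$LD_LIBRARY_PATH"


if __name__ == "__main__":
    import sys

    # Search the active Python environment unless a root is given on the command line.
    root = sys.argv[1] if len(sys.argv) > 1 else sys.prefix
    for d in find_library_dirs(root):
        print(export_line(d))
```

Searching only the Python environment is usually much faster than `find /`, though it will miss a library installed elsewhere.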

Output of a successful build:

[I] Build with version 6.1.3119

[I] Parsing model....

[I] [Parser]: Begin to parse onnx model face_parsing_512x512...

2025-04-18 11:20:16.726111: W tensorflow/stream_executor/platform/default/dso_loader.cc:64] Could not load dynamic library 'libcudart.so.11.0'; dlerror: libcudart.so.11.0: cannot open shared object file: No such file or directory; LD_LIBRARY_PATH: /root/miniconda3/envs/radxa/lib/python3.8/site-packages/cv2/../../lib64:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib/:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib

2025-04-18 11:20:16.726199: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

2025-04-18 11:20:17.908202: W tensorflow/stream_executor/platform/default/dso_loader.cc:64] Could not load dynamic library 'libcuda.so.1'; dlerror: libcuda.so.1: cannot open shared object file: No such file or directory; LD_LIBRARY_PATH: /root/miniconda3/envs/radxa/lib/python3.8/site-packages/cv2/../../lib64:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib/:/root/miniconda3/envs/radxa/lib/python3.8/site-packages/AIPUBuilder/simulator-lib

2025-04-18 11:20:17.908267: W tensorflow/stream_executor/cuda/cuda_driver.cc:269] failed call to cuInit: UNKNOWN ERROR (303)

2025-04-18 11:20:17.908283: I tensorflow/stream_executor/cuda/cuda_diagnostics.cc:156] kernel driver does not appear to be running on this host (chenjun): /proc/driver/nvidia/version does not exist

[W] [Parser]: The output name out is not a node but a tensor. However, we will use the node Resize_161 as output node.

2025-04-18 11:20:21.229063: I tensorflow/core/platform/cpu_feature_guard.cc:151] This TensorFlow binary is optimized with oneAPI Deep Neural Network Library (oneDNN) to use the following CPU instructions in performance-critical operations: AVX2 AVX512F FMA

To enable them in other operations, rebuild TensorFlow with the appropriate compiler flags.

[I] [Parser]: The input tensor(s) is/are: input_0

[I] [Parser]: Input input from cfg is shown as tensor input_0 in IR!

[I] [Parser]: Output out from cfg is shown as tensor Resize_161_post_transpose_0 in IR!

[I] [Parser]: 0 error(s), 1 warning(s) generated.

[I] [Parser]: Parser done!

[I] Parse model complete

[I] Simplifying float model.

[I] [IRChecker] Start to check IR: /home/5_radxa/ai_model_hub/models/ComputeVision/Semantic_Segmentation/onnx_faceparse/internal/face_parsing_512x512.txt

[I] [IRChecker] model_name: face_parsing_512x512

[I] [IRChecker] IRChecker: All IR pass (Checker Plugin disabled)

[I] [graph.cpp :1600] loading graph weight: /home/5_radxa/ai_model_hub/models/ComputeVision/Semantic_Segmentation/onnx_faceparse/./internal/face_parsing_512x512.bin size: 0x322454c

[I] Start to simplify the graph...

[I] Using fixed-point full optimization, it may take long long time ....

[I] GSim simplified result:

------------------------------------------------------------------------

OpType.Eltwise: -3

OpType.Mul: +3

OpType.Tile: -3

------------------------------------------------------------------------

... (log output omitted) ...

[I] [builder.cpp:1939] Read and Write:80.21MB

[I] [builder.cpp:1080] Reduce constants memory size: 3.477MB

[I] [builder.cpp:2411] memory statistics for this graph (face_parsing_512x512)

[I] [builder.cpp: 585] Total memory : 0x00d52b98 Bytes ( 13.323MB)

[I] [builder.cpp: 585] Text section: 0x00042200 Bytes ( 0.258MB)

[I] [builder.cpp: 585] RO section: 0x00006d00 Bytes ( 0.027MB)

[I] [builder.cpp: 585] Desc section: 0x0002ea00 Bytes ( 0.182MB)

[I] [builder.cpp: 585] Data section: 0x00c8b360 Bytes ( 12.544MB)

[I] [builder.cpp: 585] BSS section: 0x0000fb38 Bytes ( 0.061MB)

[I] [builder.cpp: 585] Stack : 0x00040400 Bytes ( 0.251MB)

[I] [builder.cpp: 585] Workspace(BSS) : 0x004c0000 Bytes ( 4.750MB)

[I] [builder.cpp:2427]

[I] [tools.cpp :1181] - compile time: 20.726 s

[I] [tools.cpp :1087] With GM optimization, DDR Footprint stastic(estimation):

[I] [tools.cpp :1094] Read and Write:92.67MB

[I] [tools.cpp :1137] - draw graph time: 0.03 s

[I] [tools.cpp :1954] remove global cwd: /tmp/af3c1da8ea81cc1cf85dba1587ff72126ee96222bb098b52633050918b4c7

build success.......

Total errors: 0, warnings: 15

- NPU inference and visualization

Write the NPU inference script to visualize the result and measure the inference time:

    import os
    import sys
    import time
    import argparse

    import cv2
    import numpy as np
    from PIL import Image

    # Absolute path of the ai_model_hub checkout that contains the utils package
    _abs_path = "/home/radxa/1_AI_models/ai_model_hub"
    # Append it to sys.path so that the utils package can be imported
    sys.path.append(_abs_path)

    from utils.tools import get_file_list
    from utils.NOE_Engine import EngineInfer

    def letterbox(image, new_shape=(640, 640), color=(114, 114, 114), auto=False, scaleFill=False, scaleup=True):
        """
        Letterbox an image: resize it while keeping the aspect ratio, then pad it to the target size.

        :param image: input image as a numpy array (height, width, channels)
        :param new_shape: target size as (height, width)
        :param color: padding color, default (114, 114, 114)
        :param auto: pad only to the minimum rectangle (remainder modulo 64), default False
        :param scaleFill: stretch without keeping the aspect ratio, default False (not used in this implementation)
        :param scaleup: allow upscaling; if False the image is only ever shrunk, default True
        :return: the processed image, the scale ratio, and the per-side padding (dw, dh)
        """
        shape = image.shape[:2]  # current image height and width
        r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])
        if not scaleup:  # only shrink, never enlarge (for better results)
            r = min(r, 1.0)
        new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))  # (width, height) after resizing
        dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # total padding
        if auto:  # minimum rectangle
            dw, dh = np.mod(dw, 64), np.mod(dh, 64)  # keep only the remainder modulo 64
        dw /= 2  # split the padding between the two sides
        dh /= 2
        if shape[::-1] != new_unpad:  # resize the image
            image = cv2.resize(image, new_unpad, interpolation=cv2.INTER_LINEAR)
        top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
        left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
        image = cv2.copyMakeBorder(image, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add the border
        scale_ratio = r
        pad_size = (dw, dh)
        return image, scale_ratio, pad_size

    def preprocess_image(image, shape, bgr2rgb=True):
        """Preprocess an image: letterbox, optional BGR -> RGB, HWC -> CHW, float32."""
        img, scale_ratio, pad_size = letterbox(image, new_shape=shape)
        if bgr2rgb:
            img = img[:, :, ::-1]
        img = img.transpose(2, 0, 1)  # HWC -> CHW
        img = np.ascontiguousarray(img, dtype=np.float32)
        return img, scale_ratio, pad_size

    def generate_mask(img, seg, outpath, scale=0.4):
        """Visualize the segmentation result by blending class colors over the image."""
        color = [
            [255, 0, 0], [255, 85, 0], [255, 170, 0], [255, 0, 85], [255, 0, 170],
            [0, 255, 0], [85, 255, 0], [170, 255, 0], [0, 255, 85], [0, 255, 170],
            [0, 0, 255], [85, 0, 255], [170, 0, 255], [0, 85, 255], [0, 170, 255],
            [255, 255, 0], [255, 255, 85], [255, 255, 170], [255, 0, 255], [255, 85, 255],
        ]
        img = img.transpose(1, 2, 0)  # CHW -> HWC
        minidx = int(seg.min())
        maxidx = int(seg.max())
        color_img = np.zeros_like(img)
        for i in range(minidx, maxidx + 1):  # + 1 so the highest class index is drawn too
            if i <= 0:  # index 0 is left uncolored
                continue
            color_img[seg == i] = color[i]
        showimg = scale * img + (1 - scale) * color_img
        Image.fromarray(showimg.astype(np.uint8)).save(outpath)

    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument('--image-path', type=str, help='path of the input image (a file)')
        parser.add_argument('--output-path', type=str, help='path for saving the segmentation visualization (a file)')
        parser.add_argument('--model-path', type=str, help='path of the compiled model (.cix file)')
        args = parser.parse_args()

        model = EngineInfer(args.model_path)
        ref_size = [512, 512]

        # read image
        im = cv2.imread(args.image_path)
        img, scale_ratio, pad_size = preprocess_image(im, ref_size)
        showimg = img.copy()[::-1, ...]  # copy for the overlay, with the channel axis reversed

        mean = np.asarray([0.485, 0.456, 0.406])
        scale = np.asarray([0.229, 0.224, 0.225])
        mean = mean.reshape((3, 1, 1))
        scale = scale.reshape((3, 1, 1))
        # Kept as in the original script: the std values are multiplied in here,
        # whereas torchvision-style normalization would divide by them.
        img = (img / 255 - mean) * scale
        im = img[None].astype(np.float32)

        # inference
        input_data = [im]
        # output = model.forward(input_data)[0]
        N = 5
        start_time = time.perf_counter()
        for _ in range(N):
            output = model.forward(input_data)[0]
        end_time = time.perf_counter()
        use_time = (end_time - start_time) * 1000 / N  # average per call, in ms
        fps = N / (end_time - start_time)
        # end-to-end time, including input quantization and output dequantization
        print(f"包含输入量化,输出反量化,推理耗时:{use_time:.2f} ms, fps:{fps:.2f}")
        fps = model.get_ave_fps()
        use_time2 = 1000 / fps
        # time spent in the NPU computation only
        print(f"NPU 计算部分耗时:{use_time2:.2f} ms, fps: {fps:.2f}")

        # reshape the output to (batch, classes, height, width), then argmax over the class axis
        output = np.reshape(output, (1, 19, 512, 512))
        seg = np.argmax(output, axis=1).squeeze()
        generate_mask(showimg, seg, args.output_path)

        # release model
        model.clean()
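Both scripts receive `scale_ratio` and `pad_size` from `preprocess_image` but never use them, so the saved visualization stays at the padded 512x512 size. If a mask at the original resolution is needed, the letterbox step can be undone along these lines. This is a sketch, not part of the original scripts: `unletterbox_mask` is a hypothetical helper, and it uses a plain NumPy nearest-neighbour resize so that class labels are never blended.

```python
import numpy as np


def unletterbox_mask(seg, orig_shape, scale_ratio, pad_size):
    """Map a letterboxed label map back to the original image size.

    seg:         (H, W) integer label map predicted on the padded input
    orig_shape:  (height, width) of the image before letterboxing
    scale_ratio: resize factor returned by letterbox()
    pad_size:    (dw, dh) per-side padding returned by letterbox()
    """
    h0, w0 = orig_shape
    dw, dh = pad_size
    # Same rounding as letterbox() uses for the top and left borders.
    top, left = int(round(dh - 0.1)), int(round(dw - 0.1))
    new_w, new_h = int(round(w0 * scale_ratio)), int(round(h0 * scale_ratio))
    content = seg[top:top + new_h, left:left + new_w]  # drop the padding
    # Nearest-neighbour resize back to the original size via index lookup.
    rows = np.minimum((np.arange(h0) * new_h / h0).astype(int), new_h - 1)
    cols = np.minimum((np.arange(w0) * new_w / w0).astype(int), new_w - 1)
    return content[rows][:, cols]


if __name__ == "__main__":
    # A 256x512 image letterboxed to 512x512 gets 128 rows of padding above and below.
    seg = np.zeros((512, 512), dtype=np.int64)
    seg[128:384, :] = 7
    restored = unletterbox_mask(seg, (256, 512), 1.0, (0.0, 128.0))
    print(restored.shape)
```

In the scripts, `orig_shape` would be `im.shape[:2]` taken right after `cv2.imread`, before `im` is overwritten.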

- Run inference

source /home/radxa/1_AI_models/ai_model_hub/.venv/bin/activate

python models/ComputeVision/Semantic_Segmentation/onnx_faceparse/inference_npu.py --image-path models/ComputeVision/Semantic_Segmentation/onnx_faceparse/test_data/test_lite_face_parsing.png --output-path output/face_parsering.jpg --model-path models/ComputeVision/Semantic_Segmentation/onnx_faceparse/bisenet.cix

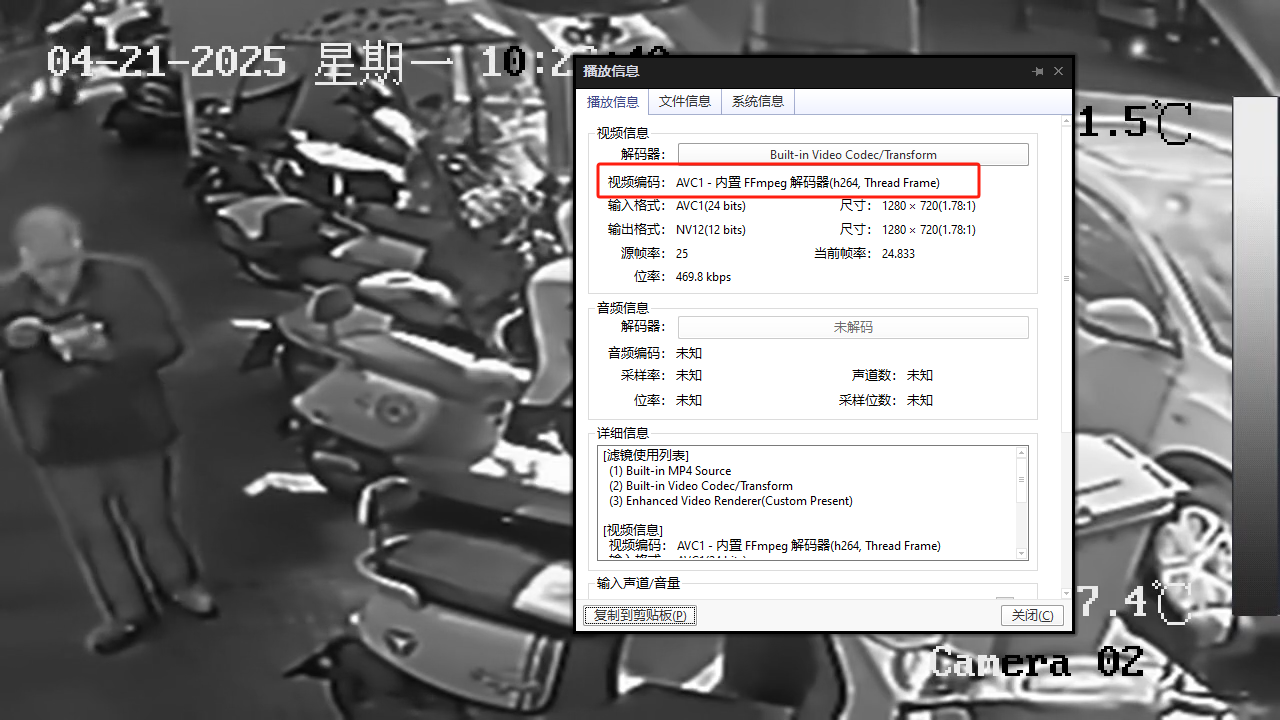

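Inference timing output (the two Chinese lines report the end-to-end time including input quantization and output dequantization, and the NPU compute time alone):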

npu: noe_init_context success

npu: noe_load_graph success

Input tensor count is 1.

Output tensor count is 1.

npu: noe_create_job success

包含输入量化,输出反量化,推理耗时:379.63 ms, fps:2.63

NPU 计算部分耗时:10.70 ms, fps: 93.43

npu: noe_clean_job success

npu: noe_unload_graph success

npu: noe_deinit_context success

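As the log shows, the end-to-end time per call (379.63 ms) is far above the NPU compute time (10.70 ms), so quantizing the input and dequantizing the output are still very expensive.

The visualization result looks correct.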
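To make the quantization and dequantization steps concrete, here is a minimal NumPy sketch of per-tensor asymmetric 8-bit quantization, the scheme named by `quantize_method_for_activation = per_tensor_asymmetric` in the cfg. It only illustrates the arithmetic. How the NOE engine actually implements these steps is not shown in the source, and the unsigned 8-bit range used here is an assumption.

```python
import numpy as np

QMIN, QMAX = 0, 255  # unsigned 8-bit range (an assumption of this sketch)


def quantize_asymmetric(x):
    """Quantize a float array to uint8 with one scale and zero point for the whole tensor."""
    # The range is extended to include 0 so that 0.0 maps exactly onto an integer.
    x_min = min(float(x.min()), 0.0)
    x_max = max(float(x.max()), 0.0)
    scale = (x_max - x_min) / (QMAX - QMIN)
    if scale == 0.0:  # all-zero input: any positive scale works
        scale = 1.0
    zero_point = int(round(QMIN - x_min / scale))
    q = np.clip(np.round(x / scale) + zero_point, QMIN, QMAX).astype(np.uint8)
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Map quantized values back to float32."""
    return ((q.astype(np.float32) - zero_point) * scale).astype(np.float32)


if __name__ == "__main__":
    x = np.linspace(-1.0, 3.0, 1001)
    q, scale, zero_point = quantize_asymmetric(x)
    err = np.abs(dequantize(q, scale, zero_point) - x).max()
    print(f"scale={scale:.6f}, zero_point={zero_point}, max abs error={err:.6f}")
```

Both functions touch every element, so for an output of shape (1, 19, 512, 512) that is roughly 5 million values per frame on the CPU.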

3. Benchmark

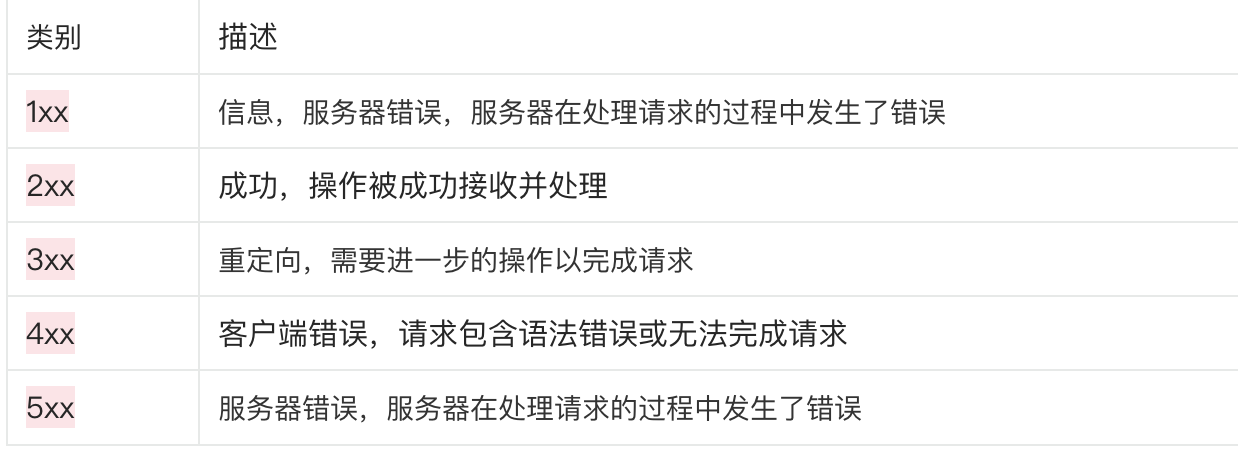

| Hardware | Model | Input resolution | Quantization | Execution engine | Inference time (ms) | FPS |
|---|---|---|---|---|---|---|
| CPU | bisenet | 512x512 | fp32 | onnxruntime | 309.75 | 3.23 |
| NPU | bisenet | 512x512 | A8W8 | Zhouyi NPU | 10.70 | 93.43 |
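The NPU row of the table is the compute-only time. For reference, the short calculation below puts it next to the end-to-end figure from the NPU log (379.63 ms) and expresses both as ratios against the CPU time; `speedup` is a hypothetical helper.

```python
def speedup(baseline_ms, candidate_ms):
    """How many times faster the candidate is than the baseline."""
    return baseline_ms / candidate_ms


cpu_ms = 309.75             # ONNX Runtime on CPU, from the table
npu_compute_ms = 10.70      # NPU compute only, from the table
npu_end_to_end_ms = 379.63  # NPU call including quantization and dequantization, from the log

print(f"NPU compute vs CPU:    {speedup(cpu_ms, npu_compute_ms):.1f}x")
print(f"NPU end-to-end vs CPU: {speedup(cpu_ms, npu_end_to_end_ms):.2f}x")
```

The compute-only ratio comes out at about 29x, while the end-to-end ratio is below 1, meaning the full NPU call is currently slower than the CPU run.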

4. References

- https://github.com/xlite-dev/lite.ai.toolkit?tab=readme-ov-file

- https://github.com/zllrunning/face-parsing.PyTorch

- ["Orion O6" review] RVM portrait segmentation: the full torch ➡️ ncnn CPU/GPU and O6 NPU deployment process
