原文:A Cheat Sheet and Some Recipes For Building Advanced RAG — LlamaIndex - Build Knowledge Assistants over your Enterprise DataLlamaIndex is a simple, flexible framework for building knowledge assistants using LLMs connected to your enterprise data.![]() https://www.llamaindex.ai/blog/a-cheat-sheet-and-some-recipes-for-building-advanced-rag-803a9d94c41b

https://www.llamaindex.ai/blog/a-cheat-sheet-and-some-recipes-for-building-advanced-rag-803a9d94c41b

一、TL;DR

- 给出了典型的基础rag并定义了2条rag是成功的要求

- 基于2条rag的成功要求给出了构建高级rag的相关技术,包括块大小优化、结构化外部知识、信息压缩、结果重排等

- 对上述所有的方法,给出了LlamaIndex的demo代码和相关的其他参考链接

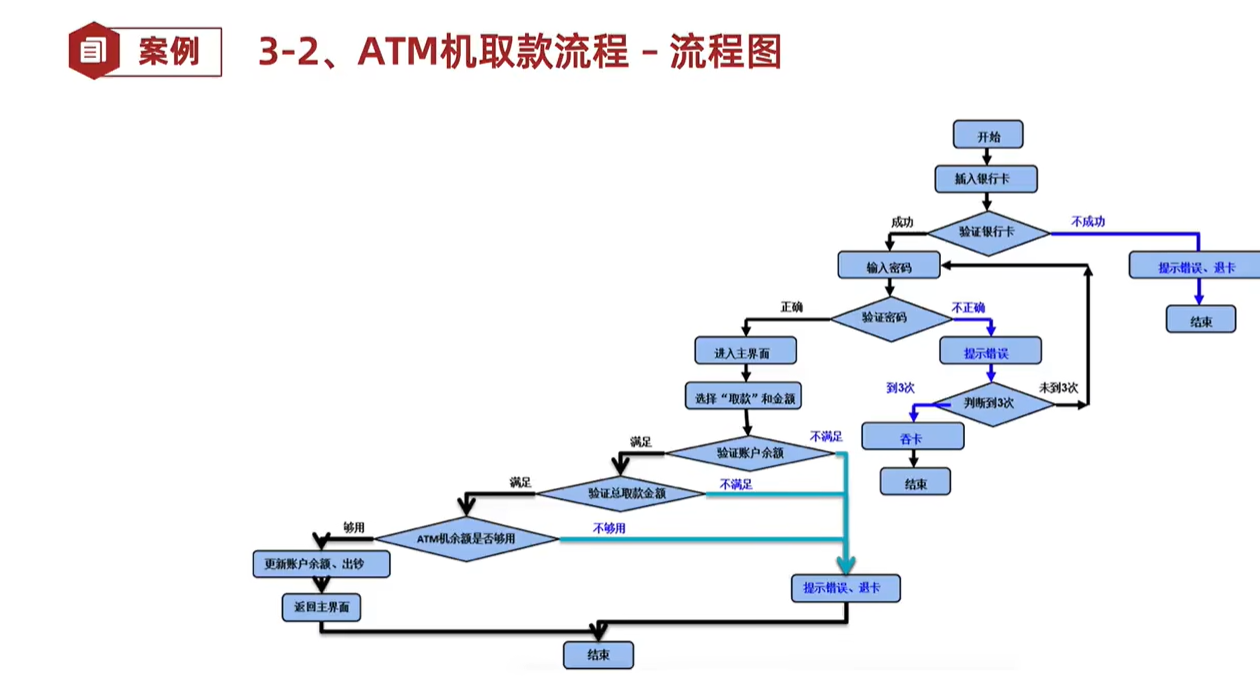

二、基础RAG

如今所定义的主流RAG(检索增强型生成)涉及从外部知识数据库中检索文档,并将这些文档连同用户的查询一起传递给大型语言模型(LLM),以生成回答。换句话说,RAG包含检索组件、外部知识数据库和生成组件。

LlamaIndex基础RAG配方:

from llama_index import SimpleDirectoryReader, VectorStoreIndex

# load data

documents = SimpleDirectoryReader(input_dir="...").load_data()

# build VectorStoreIndex that takes care of chunking documents

# and encoding chunks to embeddings for future retrieval

index = VectorStoreIndex.from_documents(documents=documents)

# The QueryEngine class is equipped with the generator

# and facilitates the retrieval and generation steps

query_engine = index.as_query_engine()

# Use your Default RAG

response = query_engine.query("A user's query")三、RAG的成功要求

为了使一个RAG系统被认为是成功的(即能够为用户提供有用且相关的答案),实际上只有两个高级要求:

- 检索必须能够找到与用户查询最相关的文档(能够找到)。

- 生成必须能够充分利用检索到的文档来充分回答用户查询(充分找到)。

四、高级RAG

构建高级RAG实际上就是应用更复杂的技术和策略(针对检索或生成组件),以确保这些要求最终得以满足。此外,我们可以将一种复杂的技术归类为:要么是独立(或多或少)于另一个要求来解决这两个高级成功要求中的一个,要么是同时解决这两个要求。

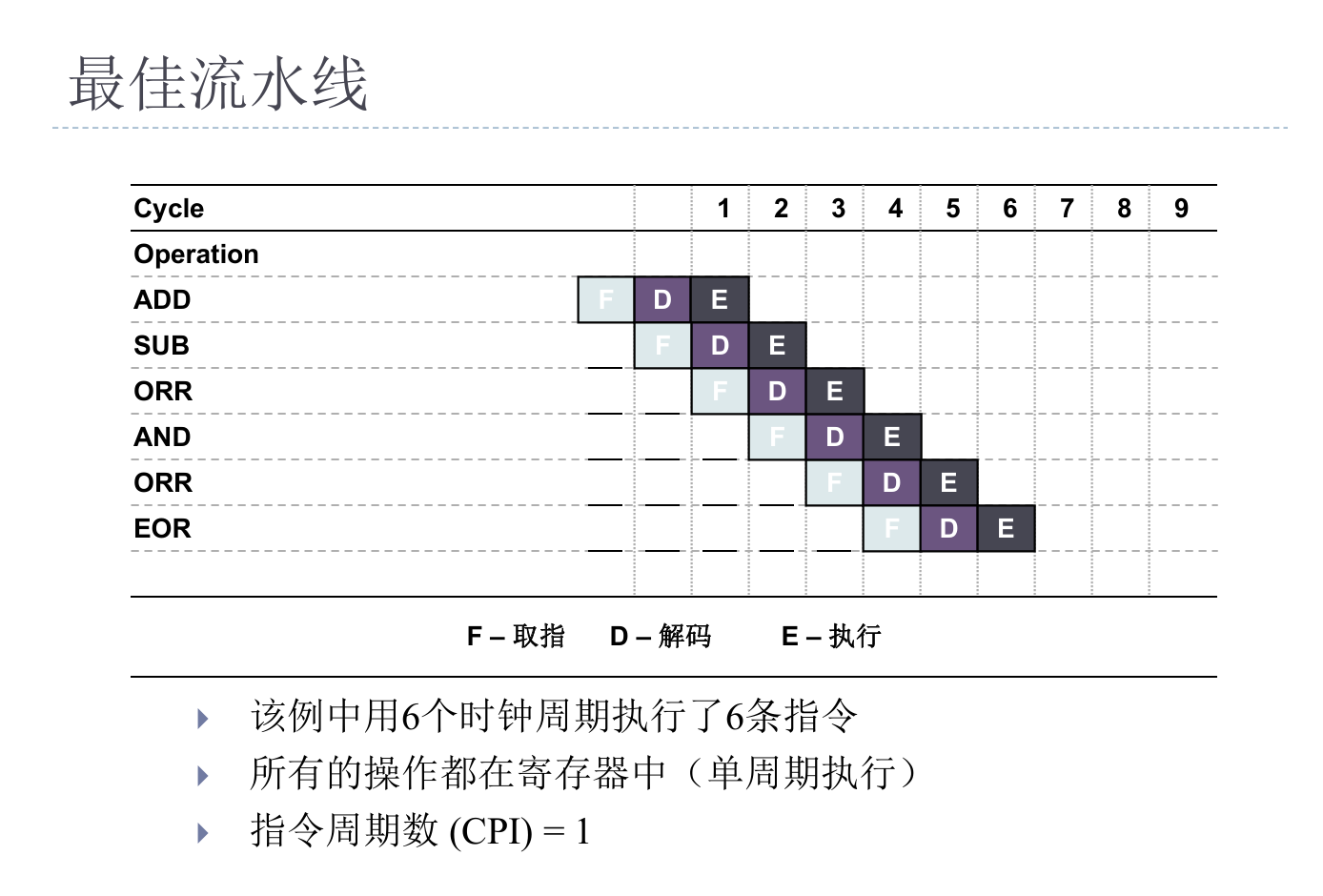

4.1 检索的高级技术必须能够找到与用户查询最相关的文档

下面,我们简要描述一些更复杂的技术,以帮助实现第一个成功要求。

4.1.1 块大小优化

由于LLM(大型语言模型)受到上下文长度的限制,在构建外部知识库时,有必要将文档分割成块。块过大或过小都会给生成组件带来问题,导致回答不准确

LlamaIndex Chunk Size Optimization Recipe:

from llama_index import ServiceContext

from llama_index.param_tuner.base import ParamTuner, RunResult

from llama_index.evaluation import SemanticSimilarityEvaluator, BatchEvalRunner

### Recipe

### Perform hyperparameter tuning as in traditional ML via grid-search

### 1. Define an objective function that ranks different parameter combos

### 2. Build ParamTuner object

### 3. Execute hyperparameter tuning with ParamTuner.tune()

# 1. Define objective function

def objective_function(params_dict):

chunk_size = params_dict["chunk_size"]

docs = params_dict["docs"]

top_k = params_dict["top_k"]

eval_qs = params_dict["eval_qs"]

ref_response_strs = params_dict["ref_response_strs"]

# build RAG pipeline

index = _build_index(chunk_size, docs) # helper function not shown here

query_engine = index.as_query_engine(similarity_top_k=top_k)

# perform inference with RAG pipeline on a provided questions `eval_qs`

pred_response_objs = get_responses(

eval_qs, query_engine, show_progress=True

)

# perform evaluations of predictions by comparing them to reference

# responses `ref_response_strs`

evaluator = SemanticSimilarityEvaluator(...)

eval_batch_runner = BatchEvalRunner(

{"semantic_similarity": evaluator}, workers=2, show_progress=True

)

eval_results = eval_batch_runner.evaluate_responses(

eval_qs, responses=pred_response_objs, reference=ref_response_strs

)

# get semantic similarity metric

mean_score = np.array(

[r.score for r in eval_results["semantic_similarity"]]

).mean()

return RunResult(score=mean_score, params=params_dict)

# 2. Build ParamTuner object

param_dict = {"chunk_size": [256, 512, 1024]} # params/values to search over

fixed_param_dict = { # fixed hyperparams

"top_k": 2,

"docs": docs,

"eval_qs": eval_qs[:10],

"ref_response_strs": ref_response_strs[:10],

}

param_tuner = ParamTuner(

param_fn=objective_function,

param_dict=param_dict,

fixed_param_dict=fixed_param_dict,

show_progress=True,

)

# 3. Execute hyperparameter search

results = param_tuner.tune()

best_result = results.best_run_result

best_chunk_size = results.best_run_result.params["chunk_size"]4.1.2 结构化外部知识

在复杂场景中,可能需要构建比基础向量索引更具结构化的外部知识,以便在处理合理分离的外部知识源时,允许进行递归检索或路由检索:

LlamaIndex Recursive Retrieval Recipe:

from llama_index import SimpleDirectoryReader, VectorStoreIndex

from llama_index.node_parser import SentenceSplitter

from llama_index.schema import IndexNode

### Recipe

### Build a recursive retriever that retrieves using small chunks

### but passes associated larger chunks to the generation stage

# load data

documents = SimpleDirectoryReader(

input_file="some_data_path/llama2.pdf"

).load_data()

# build parent chunks via NodeParser

node_parser = SentenceSplitter(chunk_size=1024)

base_nodes = node_parser.get_nodes_from_documents(documents)

# define smaller child chunks

sub_chunk_sizes = [256, 512]

sub_node_parsers = [

SentenceSplitter(chunk_size=c, chunk_overlap=20) for c in sub_chunk_sizes

]

all_nodes = []

for base_node in base_nodes:

for n in sub_node_parsers:

sub_nodes = n.get_nodes_from_documents([base_node])

sub_inodes = [

IndexNode.from_text_node(sn, base_node.node_id) for sn in sub_nodes

]

all_nodes.extend(sub_inodes)

# also add original node to node

original_node = IndexNode.from_text_node(base_node, base_node.node_id)

all_nodes.append(original_node)

# define a VectorStoreIndex with all of the nodes

vector_index_chunk = VectorStoreIndex(

all_nodes, service_context=service_context

)

vector_retriever_chunk = vector_index_chunk.as_retriever(similarity_top_k=2)

# build RecursiveRetriever

all_nodes_dict = {n.node_id: n for n in all_nodes}

retriever_chunk = RecursiveRetriever(

"vector",

retriever_dict={"vector": vector_retriever_chunk},

node_dict=all_nodes_dict,

verbose=True,

)

# build RetrieverQueryEngine using recursive_retriever

query_engine_chunk = RetrieverQueryEngine.from_args(

retriever_chunk, service_context=service_context

)

# perform inference with advanced RAG (i.e. query engine)

response = query_engine_chunk.query(

"Can you tell me about the key concepts for safety finetuning"

)4.1.3 其他有用的链接

我们有一些指南展示了在复杂情况下确保准确检索的其他高级技术的应用。以下是一些精选链接:

- Building External Knowledge using Knowledge Graphs

- Performing Mixed Retrieval with Auto Retrievers

- Building Fusion Retrievers

- Fine-tuning Embedding Models used in Retrieval

- Transforming Query Embeddings (HyDE)

4.2 生成的高级技术必须能够充分利用检索到的文档

与前一节类似,我们提供了一些此类复杂技术的例子,这些技术可以被描述为确保检索到的文档与生成器的大型语言模型(LLM)很好地对齐。

4.2.1 信息压缩:

大型语言模型(LLM)不仅受到上下文长度的限制,而且如果检索到的文档携带过多的噪声(即无关信息),还可能导致回答质量下降。

LlamaIndex:

from llama_index import SimpleDirectoryReader, VectorStoreIndex

from llama_index.query_engine import RetrieverQueryEngine

from llama_index.postprocessor import LongLLMLinguaPostprocessor

### Recipe

### Define a Postprocessor object, here LongLLMLinguaPostprocessor

### Build QueryEngine that uses this Postprocessor on retrieved docs

# Define Postprocessor

node_postprocessor = LongLLMLinguaPostprocessor(

instruction_str="Given the context, please answer the final question",

target_token=300,

rank_method="longllmlingua",

additional_compress_kwargs={

"condition_compare": True,

"condition_in_question": "after",

"context_budget": "+100",

"reorder_context": "sort", # enable document reorder

},

)

# Define VectorStoreIndex

documents = SimpleDirectoryReader(input_dir="...").load_data()

index = VectorStoreIndex.from_documents(documents)

# Define QueryEngine

retriever = index.as_retriever(similarity_top_k=2)

retriever_query_engine = RetrieverQueryEngine.from_args(

retriever, node_postprocessors=[node_postprocessor]

)

# Used your advanced RAG

response = retriever_query_engine.query("A user query")4.2.2 结果重排:

LLM(大型语言模型)存在所谓的“迷失在中间”现象,即LLM会重点关注提示的两端。鉴于此,在将检索到的文档传递给生成组件之前,重新对它们进行排序是有益的。

LlamaIndex结果重排以提升生成效果的方法:

import os

from llama_index import SimpleDirectoryReader, VectorStoreIndex

from llama_index.postprocessor.cohere_rerank import CohereRerank

from llama_index.postprocessor import LongLLMLinguaPostprocessor

### Recipe

### Define a Postprocessor object, here CohereRerank

### Build QueryEngine that uses this Postprocessor on retrieved docs

# Build CohereRerank post retrieval processor

api_key = os.environ["COHERE_API_KEY"]

cohere_rerank = CohereRerank(api_key=api_key, top_n=2)

# Build QueryEngine (RAG) using the post processor

documents = SimpleDirectoryReader("./data/paul_graham/").load_data()

index = VectorStoreIndex.from_documents(documents=documents)

query_engine = index.as_query_engine(

similarity_top_k=10,

node_postprocessors=[cohere_rerank],

)

# Use your advanced RAG

response = query_engine.query(

"What did Sam Altman do in this essay?"

)4.3 同时满足检索和生成成功要求的高级技术

在本小节中,我们考虑一些复杂的办法,这些办法利用检索和生成的协同作用,以实现更好的检索效果以及更准确地回答用户查询的生成结果。

4.3.1 生成器增强型检索:

这些技术利用LLM(大型语言模型)的固有推理能力,在执行检索之前对用户查询进行优化,以便更准确地表明需要什么内容才能提供有用的回答。

LlamaIndex生成器增强型检索:

from llama_index.llms import OpenAI

from llama_index.query_engine import FLAREInstructQueryEngine

from llama_index import (

VectorStoreIndex,

SimpleDirectoryReader,

ServiceContext,

)

### Recipe

### Build a FLAREInstructQueryEngine which has the generator LLM play

### a more active role in retrieval by prompting it to elicit retrieval

### instructions on what it needs to answer the user query.

# Build FLAREInstructQueryEngine

documents = SimpleDirectoryReader("./data/paul_graham").load_data()

index = VectorStoreIndex.from_documents(documents)

index_query_engine = index.as_query_engine(similarity_top_k=2)

service_context = ServiceContext.from_defaults(llm=OpenAI(model="gpt-4"))

flare_query_engine = FLAREInstructQueryEngine(

query_engine=index_query_engine,

service_context=service_context,

max_iterations=7,

verbose=True,

)

# Use your advanced RAG

response = flare_query_engine.query(

"Can you tell me about the author's trajectory in the startup world?"

)4.3.2 迭代式检索-生成型RAG:

-

在某些复杂情况下,可能需要多步推理才能为用户查询提供有用且相关的答案。

from llama_index.query_engine import RetryQueryEngine

from llama_index.evaluation import RelevancyEvaluator

### Recipe

### Build a RetryQueryEngine which performs retrieval-generation cycles

### until it either achieves a passing evaluation or a max number of

### cycles has been reached

# Build RetryQueryEngine

documents = SimpleDirectoryReader("./data/paul_graham").load_data()

index = VectorStoreIndex.from_documents(documents)

base_query_engine = index.as_query_engine()

query_response_evaluator = RelevancyEvaluator() # evaluator to critique

# retrieval-generation cycles

retry_query_engine = RetryQueryEngine(

base_query_engine, query_response_evaluator

)

# Use your advanced rag

retry_response = retry_query_engine.query("A user query")五、RAG的评估方面

评估RAG系统当然是至关重要的。在他们的综述论文中,高云帆等人在附带的RAG备忘单的右上角部分指出了7个评估方面。LlamaIndex库包含几个评估抽象以及与RAGAs的集成,以帮助构建者通过这些评估方面的视角,了解他们的RAG系统在多大程度上达到了成功要求。下面,我们列出了一些精选的评估笔记本指南。

- Answer Relevancy and Context Relevancy

- Faithfulness

- Retrieval Evaluation

- Batch Evaluations with BatchEvalRunner

你现在能够构建高级RAG了

在阅读了这篇博客文章之后,我们希望你感觉更有能力、更有信心去应用这些复杂的技术来构建高级RAG系统了!

![[Mac]利用hexo-theme-fluid美化个人博客](https://i-blog.csdnimg.cn/direct/ad5b454aa10542aca6d1f50bd9aa35d4.png)