🎯要点

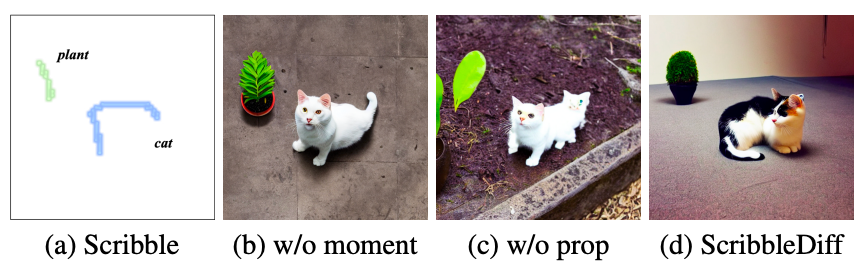

- 使用小规模磁态训练模型,并在二维三维爱德华兹-安德森模型上使用四种算法测试:贪婪算法、模拟退火算法、并行回火算法和本模型。

- 将磁态基态搜索视为马尔可夫决策过程 (MDP),学习最优策略以累积其最大回报。

- 设计图神经网络式编码器表征状态和动作。

- 模型求解非确定性多项式时间问题。

🍁磁态分析

- Python和MATLAB自旋玻璃投资组合神经网络广义方程

🍪语言内容分比

🍇Python马尔可夫决策过程策略选择

我们将要实现的马尔可夫决策过程是一个离散时间随机控制过程。它提供了一个数学框架,用于在结果部分随机、部分受决策者控制的情况下对决策进行建模。马尔可夫决策过程是一种对顺序决策问题进行建模的工具,其中决策者以顺序方式与环境进行交互。

假设问题是:我们有一个由 12 个状态组成的世界,1 个障碍初始状态(状态 5)和 2 个结束状态(状态 10、11)。对于每个状态,我们都有一个奖励,我们希望找到实施最佳奖励累积的策略。对于每个状态,奖励为 -0.04(r=-0.4)。对于状态 10,奖励为 1,对于结束状态 11,奖励为 -1。

1

4

7

10

2

5

8

11

3

6

9

12

\begin{array}{|l|l|l|l|} \hline 1 & 4 & 7 & 10 \\ \hline 2 & 5 & 8 & 11 \\ \hline 3 & 6 & 9 & 12 \\ \hline \end{array}

123456789101112

对于所处的每一个状态,我们都想找到最好的行动,是向北(N)、向南(S)、向东(E)还是向西(W)走。基本上,我们希望以最短的路到达状态 10。首先,让我们创建一个世界类,它将在我们的世界中用于解决问题。这个对象可以帮助我们声明世界、绘制世界并绘制我们发现的移动世界的策略。方法 plot_world 绘制了我们上面看到的图像,将使用值迭代方法代替策略迭代。

使用策略迭代求解马尔可夫决策过程,其中 γ = 0.9 和 r = 0.04。我们使用均匀随机策略初始化了策略迭代算法。并且分别使用 World 类的绘制值和绘制策略函数,将每次迭代步骤后的值函数和策略绘制成网格世界的两个不同图形,我们得到了以下结果:

初次迭代:网格世界值

−

0.287

−

0.169

0.05

1.0

−

0.354

−

0.479

−

1.0

−

0.402

−

0.451

−

0.524

−

0.696

\begin{array}{|l|l|l|l|} \hline-0.287 & -0.169 & 0.05 & 1.0 \\ \hline-0.354 & & -0.479 & -1.0 \\ \hline-0.402 & -0.451 & -0.524 & -0.696 \\ \hline \end{array}

−0.287−0.354−0.402−0.169−0.4510.05−0.479−0.5241.0−1.0−0.696

二次迭代:

0.509 0.649 0.795 1.0 0.398 0.486 − 1.0 0.291 0.207 0.168 − 0.009 \begin{array}{|l|l|l|c|} \hline 0.509 & 0.649 & 0.795 & 1.0 \\ \hline 0.398 & & 0.486 & -1.0 \\ \hline 0.291 & 0.207 & 0.168 & -0.009 \\ \hline \end{array} 0.5090.3980.2910.6490.2070.7950.4860.1681.0−1.0−0.009

三次迭代:

0.509

0.649

0.795

1.0

0.398

0.486

−

1.0

0.291

0.207

0.34

0.126

\begin{array}{|l|l|l|l|} \hline 0.509 & 0.649 & 0.795 & 1.0 \\ \hline 0.398 & & 0.486 & -1.0 \\ \hline 0.291 & 0.207 & 0.34 & 0.126 \\ \hline \end{array}

0.5090.3980.2910.6490.2070.7950.4860.341.0−1.00.126

四次迭代:

0.509

0.649

0.795

1.0

0.398

0.486

−

1.0

0.296

0.253

0.344

0.129

\begin{array}{|l|l|l|l|} \hline 0.509 & 0.649 & 0.795 & 1.0 \\ \hline 0.398 & & 0.486 & -1.0 \\ \hline 0.296 & 0.253 & 0.344 & 0.129 \\ \hline \end{array}

0.5090.3980.2960.6490.2530.7950.4860.3441.0−1.00.129

我们可以看到,经过 4 次迭代后我们得到了解,并且我们可以看到与上一次迭代相比,每次迭代的进展情况。如果使用值迭代,应该得到与策略迭代算法相同的结果。

世界类:

import numpy as np

import matplotlib.pyplot as plt

class World:

def __init__(self):

self.nRows = 3

self.nCols = 4

self.stateObstacles = [5]

self.stateTerminals = [10, 11]

self.nStates = 12

self.nActions = 4

def _plot_world(self):

nStates = self.nStates

nRows = self.nRows

nCols = self.nCols

stateObstacles = self.stateObstacles

stateTerminals = self.stateTerminals

coord = [[0, 0], [nCols, 0], [nCols, nRows], [0, nRows], [0, 0]]

xs, ys = zip(*coord)

plt.plot(xs, ys, "black")

for i in stateObstacles:

(I, J) = np.unravel_index(i, shape=(nRows, nCols), order='F')

coord = [[J, nRows - I],

[J + 1, nRows - I],

[J + 1, nRows - I + 1],

[J, nRows - I + 1],

[J, nRows - I]]

xs, ys = zip(*coord)

plt.fill(xs, ys, "0.5")

plt.plot(xs, ys, "black")

for ind, i in enumerate(stateTerminals):

(I, J) = np.unravel_index(i, shape=(nRows, nCols), order='F')

coord = [[J, nRows - I],

[J + 1, nRows - I],

[J + 1, nRows - I + 1],

[J, nRows - I + 1],

[J, nRows - I]]

xs, ys = zip(*coord)

plt.fill(xs, ys, "0.8")

plt.plot(xs, ys, "black")

plt.plot(xs, ys, "black")

X, Y = np.meshgrid(range(nCols + 1), range(nRows + 1))

plt.plot(X, Y, 'k-')

plt.plot(X.transpose(), Y.transpose(), 'k-')

@staticmethod

def _truncate(n, decimals=0):

multiplier = 10 ** decimals

return int(n * multiplier) / multiplier

def plot(self):

nStates = self.nStates

nRows = self.nRows

nCols = self.nCols

self._plot_world()

states = range(1, nStates + 1)

k = 0

for i in range(nCols):

for j in range(nRows, 0, -1):

plt.text(i + 0.5, j - 0.5, str(states[k]), fontsize=26, horizontalalignment='center', verticalalignment='center')

k += 1

plt.title('MDP gridworld', size=16)

plt.axis("equal")

plt.axis("off")

plt.show()

def plot_value(self, valueFunction):

nRows = self.nRows

nCols = self.nCols

stateObstacles = self.stateObstacles

self._plot_world()

k = 0

for i in range(nCols):

for j in range(nRows, 0, -1):

if k + 1 not in stateObstacles:

plt.text(i + 0.5, j - 0.5, str(self._truncate(valueFunction[k], 3)), fontsize=26, horizontalalignment='center', verticalalignment='center')

k += 1

plt.title('MDP gridworld', size=16)

plt.axis("equal")

plt.axis("off")

plt.show()

def plot_policy(self, policy):

nStates = self.nStates

nActions = self.nActions

nRows = self.nRows

nCols = self.nCols

stateObstacles = self.stateObstacles

stateTerminals = self.stateTerminals

policy = policy.reshape(nRows, nCols, order="F").reshape(-1, 1)

X, Y = np.meshgrid(range(nCols + 1), range(nRows + 1))

X1 = X[:-1, :-1]

Y1 = Y[:-1, :-1]

X2 = X1.reshape(-1, 1) + 0.5

Y2 = np.flip(Y1.reshape(-1, 1)) + 0.5

X2 = np.kron(np.ones((1, nActions)), X2)

Y2 = np.kron(np.ones((1, nActions)), Y2)

mat = np.cumsum(np.ones((nStates, nActions)), axis=1).astype("int64")

if policy.shape[1] == 1:

policy = (np.kron(np.ones((1, nActions)), policy) == mat)

index_no_policy = stateObstacles + stateTerminals

index_policy = [item - 1 for item in range(1, nStates + 1) if item not in index_no_policy]

mask = policy.astype("int64") * mat

mask = mask.reshape(nRows, nCols, nCols)

X3 = X2.reshape(nRows, nCols, nActions)

Y3 = Y2.reshape(nRows, nCols, nActions)

alpha = np.pi - np.pi / 2 * mask

self._plot_world()

for ii in index_policy:

ax = plt.gca()

j = int(ii / nRows)

i = (ii + 1 - j * nRows) % nCols - 1

index = np.where(mask[i, j] > 0)[0]

h = ax.quiver(X3[i, j, index], Y3[i, j, index], np.cos(alpha[i, j, index]), np.sin(alpha[i, j, index]), 0.3)

states = range(1, nStates + 1)

k = 0

for i in range(nCols):

for j in range(nRows, 0, -1):

plt.text(i + 0.25, j - 0.25, str(states[k]), fontsize=16, horizontalalignment='right', verticalalignment='bottom')

k += 1

plt.axis("equal")

plt.axis("off")

plt.show()

主程度:

from World import World

import numpy as np

import pandas as pd

import copy

def construct_p(world, p=0.8, step=-0.04):

nstates = world.get_nstates()

nrows = world.get_nrows()

obsacle_index = world.get_stateobstacles()

terminal_index = world.get_stateterminals()

bad_index = obsacle_index + terminal_index

rewards = np.array([step] * 4 + [0] + [step] * 4 + [1, -1] + [step])

actions = ["N", "S", "E", "W"]

transition_models = {}

for action in actions:

transition_model = np.zeros((nstates, nstates))

for i in range(1, nstates + 1):

if i not in bad_index:

if action == "N":

if i + nrows <= nstates and (i + nrows) not in obsacle_index:

transition_model[i - 1][i + nrows - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if 0 < i - nrows <= nstates and (i - nrows) not in obsacle_index:

transition_model[i - 1][i - nrows - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if (i - 1) % nrows > 0 and (i - 1) not in obsacle_index:

transition_model[i - 1][i - 1 - 1] += p

else:

transition_model[i - 1][i - 1] += p

if action == "S":

if i + nrows <= nstates and (i + nrows) not in obsacle_index:

transition_model[i - 1][i + nrows - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if 0 < i - nrows <= nstates and (i - nrows) not in obsacle_index:

transition_model[i - 1][i - nrows - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if 0 < i % nrows and (i + 1) not in obsacle_index and (i + 1) <= nstates:

transition_model[i - 1][i + 1 - 1] += p

else:

transition_model[i - 1][i - 1] += p

if action == "E":

if i + nrows <= nstates and (i + nrows) not in obsacle_index:

transition_model[i - 1][i + nrows - 1] += p

else:

transition_model[i - 1][i - 1] += p

if 0 < i % nrows and (i + 1) not in obsacle_index and (i + 1) <= nstates:

transition_model[i - 1][i + 1 - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if (i - 1) % nrows > 0 and (i - 1) not in obsacle_index:

transition_model[i - 1][i - 1 - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if action == "W":

if 0 < i - nrows <= nstates and (i - nrows) not in obsacle_index:

transition_model[i - 1][i - nrows - 1] += p

else:

transition_model[i - 1][i - 1] += p

if 0 < i % nrows and (i + 1) not in obsacle_index and (i + 1) <= nstates:

transition_model[i - 1][i + 1 - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

if (i - 1) % nrows > 0 and (i - 1) not in obsacle_index:

transition_model[i - 1][i - 1 - 1] += (1 - p) / 2

else:

transition_model[i - 1][i - 1] += (1 - p) / 2

elif i in terminal_index:

transition_model[i - 1][i - 1] = 1

transition_models[action] = pd.DataFrame(transition_model, index=range(1, nstates + 1), columns=range(1, nstates + 1))

return transition_models, rewards

def max_action(transition_models, rewards, gamma, s, V, actions, terminal_ind):

maxs = {key: 0 for key in actions}

max_a = ""

action_map = {k: v for k, v in zip(actions, [1, 3, 2, 4])}

for action in actions:

if s not in terminal_ind:

maxs[action] += rewards[s - 1] + gamma * np.dot(transition_models[action].loc[s, :].values, V)

else:

maxs[action] = rewards[s - 1]

maxi = -10 ** 10

for key in maxs:

if maxs[key] > maxi:

max_a = key

maxi = maxs[key]

return maxi, action_map[max_a]

def value_iteration(world, transition_models, rewards, gamma=1.0, theta=10 ** -4):

nstates = world.get_nstates()

terminal_ind = world.get_stateterminals()

V = np.zeros((nstates, ))

P = np.zeros((nstates, 1))

actions = ["N", "S", "E", "W"]

delta = theta + 1

while delta > theta:

delta = 0

v = copy.deepcopy(V)

for s in range(1, nstates + 1):

V[s - 1], P[s - 1] = max_action(transition_models, rewards, gamma, s, v, actions, terminal_ind)

delta = max(delta, np.abs(v[s - 1] - V[s - 1]))

return V, P

def policy_iter(policy, world, transition_models, rewards, gamma=0.9, theta=10 ** -4):

nstates = world.get_nstates()

terminal_ind = world.get_stateterminals()

V = np.zeros((nstates,))

a = ["N", "S", "E", "W"]

while True:

delta = 0

for s in range(nstates):

v = 0

for action, action_prob in enumerate(policy[s]):

action = a[action]

if s not in terminal_ind:

v += rewards[s - 1] + action_prob * gamma * np.dot(transition_models[action].loc[s, :].values, V)

else:

v = rewards[s - 1]

delta = max(delta, np.abs(v - V[s]))

V[s] = v

print (V[s])

if delta < theta:

break

return np.array(V)